LessWrong 2.0 Reader


Eliezer Yudkowsky (Eliezer_Yudkowsky) · 2006-01-01T08:00:05.370Z · comments (7)

That hasn’t been my experience. I’ve tried solving hard problems, sometimes I succeed and sometimes I fail, but I keep trying.

Whether I feel good about it is almost entirely determined by whether I’m depressed at the time. When depressed, my brain tells me almost any action is not a good idea, and trying to solve hard problems is particularly idiotic and doomed to fail. Maddeningly, being depressed was a hard problem in this sense, so it took me a long time to fix. Now I take steps at the first sign of depression.

owencb on Express interest in an "FHI of the West"

(Caveat: it's been a while since I've visited Constellation, so if things have changed recently I may be out of touch.)

I'm not sure that Constellation should be doing anything differently. I think there's a spectrum of how much your culture is like blue-skies thinking vs highly prioritized on the most important things. I think that FHI was more towards the first end of this spectrum, and Constellation is more towards the latter. I think that there are a lot of good things that come with being further in that direction, but I do think it means you're less likely to produce very novel ideas.

To illustrate via caricatures in a made-up example: say someone turned up in one of the offices and said "OK here's a model I've been developing of how aliens might build AGI". I think the vibe in Constellation would trend towards: people are interested to chat about it for fifteen minutes at lunch (questions a mix of the treating-it-as-a-game and the pointed but-how-will-this-help-us), and then say they've got work they've got to get back to. I think the vibe in FHI would have trended more towards: people treat it as a serious question (assuming there's something interesting to the model), and it generates an impromptu 3-hour conversation at a whiteboard with four people fleshing out details and variations, which ends with someone volunteering to send round a first draft of a paper. I also think Constellation is further in the direction of being bought into some common assumptions than FHI was; e.g. it would seem to me more culturally legit to start a conversation questioning whether AI risk was real at FHI than at Constellation.

I kind of think there's something valuable about the Constellation culture on this one, and I don't want to just replace it with the FHI one. But I think there's something important and valuable about the FHI thing which I'd love to see existing in some more places.

(In the process of writing this comment it occurred to me that Constellation could perhaps decide to have some common spaces which try to be more FHI-like, while trying not to change the rest. Honestly I think this is a little hard without giving that subspace a strong distinct identity. It's possible they should do that; my immediate take now that I've thought to pose the question is that I'm confused about it.)

harfe on What is the best way to talk about probabilities you expect to change with evidence/experiments?

A lot of the probabilities we talk about are probabilities we expect to change with evidence. If we flip a coin, our p(heads) changes after we observe the result of the flipped coin. My p(rain today) changes after I look into the sky and see clouds. In my view, there is nothing special in that regard for your p(doom). Uncertainty is in the mind, not in reality.

However, how you expect your p(doom) to change depending on facts or observation is useful information and it can be useful to convey that information. Some options that come to mind:

1. describe a model: If your p(doom) estimate is the result of a model consisting of other variables, just describing this model is useful information about your state of knowledge, even if that model is only approximate. This seems to come closest to your actual situation.

2. describe your probability distribution of your p(doom) in 1 year (or another time frame): You could say that you think there is a 25% chance that your p(doom) in 1 year is between 10% and 30%. Or give other information about that distribution. Note: your current p(doom) should be the mean of your p(doom) in 1 year.

3. describe your probability distribution of your p(doom) after a hypothetical month of working on a better p(doom) estimate: You could say that if you were to work hard for a month on investigating p(doom), you think there is a 25% chance that your p(doom) after that month is between 10% and 30%. This is similar to 2., but imo a bit more informative. Again, your p(doom) should be the mean of your p(doom) after a hypothetical month of investigation, even if you don't actually do that investigation.
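The remark that your current p(doom) should be the mean of your future p(doom) is the law of total expectation applied to Bayesian updating. A minimal sketch, assuming a made-up experiment with two outcomes; the 6% prior and both likelihoods are hypothetical numbers chosen only for illustration:

```python
from fractions import Fraction


def posterior(prior, p_obs_if_doom, p_obs_if_safe):
    """Bayes' rule: P(doom | observation)."""
    joint_doom = prior * p_obs_if_doom
    joint_safe = (1 - prior) * p_obs_if_safe
    return joint_doom / (joint_doom + joint_safe)


# Hypothetical current estimate and likelihoods (not from the comment).
prior = Fraction(6, 100)
p_bad_if_doom = Fraction(8, 10)  # P(experiment looks bad | doom)
p_bad_if_safe = Fraction(2, 10)  # P(experiment looks bad | no doom)

# Probability of each experimental outcome under the current beliefs.
p_bad = prior * p_bad_if_doom + (1 - prior) * p_bad_if_safe
p_good = 1 - p_bad

# Updated estimate after each possible outcome.
post_bad = posterior(prior, p_bad_if_doom, p_bad_if_safe)
post_good = posterior(prior, 1 - p_bad_if_doom, 1 - p_bad_if_safe)

# Averaging the possible future estimates, weighted by how likely each
# outcome is, recovers the current estimate exactly.
expected_posterior = p_bad * post_bad + p_good * post_good
print(post_bad, post_good, expected_posterior)
```

Exact fractions are used so the equality holds without floating-point noise. The estimate moves up after one outcome and down after the other, but the probability-weighted average of the two equals the prior.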

For example, if there were certain states of the world which I wanted to avoid at all costs (and thus violate the continuity axiom), I could assign zero utility to it and use geometric averaging. I couldn’t do this with arithmetic averaging and any finite utilities.

Well, you can't have some states as "avoid at all costs" and others as "achieve at all costs", because putting them in the same lottery leads to nonsense, no matter what averaging you use. And allowing only one of the two seems arbitrary. So it seems cleanest to disallow both.

If I wanted to program a robot which sometimes preferred lotteries to any definite outcome, I wouldn’t be able to program the robot using arithmetic averaging over goodness values.

But geometric averaging wouldn't let you do that either, or am I missing something?

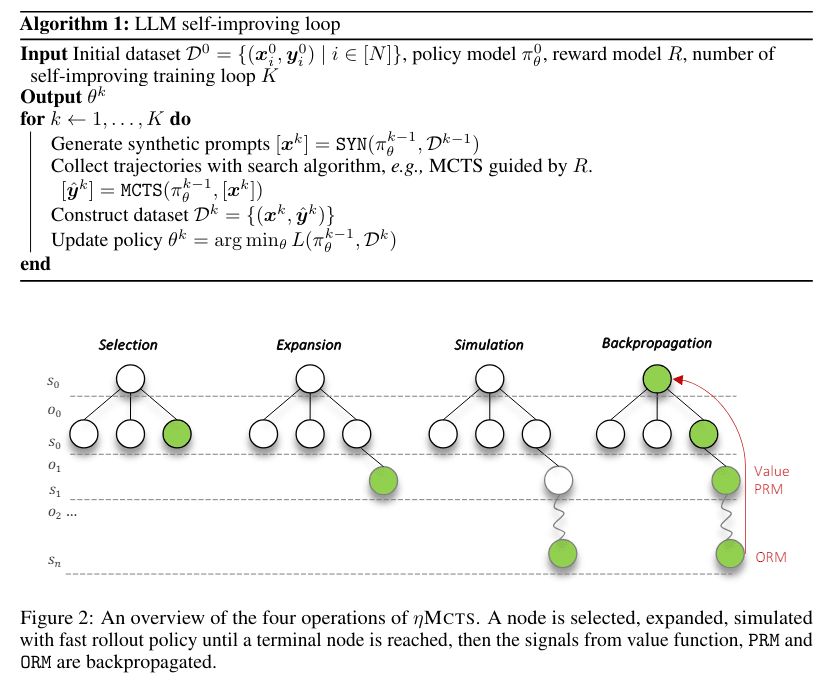

gunnar_zarncke on [Intro to brain-like-AGI safety] 6. Big picture of motivation, decision-making, and RL

Toward Self-Improvement of LLMs via Imagination, Searching, and Criticizing

Abstract:

Despite the impressive capabilities of Large Language Models (LLMs) on various tasks, they still struggle with scenarios that involves complex reasoning and planning. Recent work proposed advanced prompting techniques and the necessity of fine-tuning with high-quality data to augment LLMs’ reasoning abilities. However, these approaches are inherently constrained by data availability and quality. In light of this, self-correction and self-learning emerge as viable solutions, employing strategies that allow LLMs to refine their outputs and learn from self-assessed rewards. Yet, the efficacy of LLMs in self-refining its response, particularly in complex reasoning and planning task, remains dubious. In this paper, we introduce ALPHALLM for the self-improvements of LLMs, which integrates Monte Carlo Tree Search (MCTS) with LLMs to establish a self-improving loop, thereby enhancing the capabilities of LLMs without additional annotations. Drawing inspiration from the success of AlphaGo, ALPHALLM addresses the unique challenges of combining MCTS with LLM for self-improvement, including data scarcity, the vastness search spaces of language tasks, and the subjective nature of feedback in language tasks. ALPHALLM is comprised of prompt synthesis component, an efficient MCTS approach tailored for language tasks, and a trio of critic models for precise feedback. Our experimental results in mathematical reasoning tasks demonstrate that ALPHALLM significantly enhances the performance of LLMs without additional annotations, showing the potential for self-improvement in LLMs

https://arxiv.org/pdf/2404.12253.pdf

This looks suspiciously like using the LLM as a Thought Generator, the MCTS roll-out as the Thought Assessor, and the reward model R as the Steering System. This would be the first LLM model that I have seen that would be amenable to brain-like steering interventions.

gilch on hydrogen tube transport

Hydrogen can only burn in the presence of oxygen. The pipe does not contain any, and combustion isn't possible until after they have had time to mix. It's also not going to explode from the pressure, because it's the same as the atmosphere. The shaped charge is obviously going to explode, that's the point, but it will be more directional. That still doesn't sound safe in an enclosed space. Maybe the vehicle could deploy a gasket seal with airbags or something to reduce the leakage of expensive hydrogen.

metallicdragon on What is the best way to talk about probabilities you expect to change with evidence/experiments?

Is that not just Conditional Probability? Your overall P(doom) is 6%, but your P(doom|something) is 3%, and your P(doom|something_else) is 15%. If you need something more complex you could draw a probability tree.
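The three figures above fit together through the law of total probability. A minimal sketch, assuming the two conditions are mutually exclusive and exhaustive; the branch weights of 75% and 25% are hypothetical, picked because they are the weights that reproduce the 6% overall figure:

```python
import math

# Conditional estimates quoted in the comment.
p_doom_given = {"something": 0.03, "something_else": 0.15}

# Assumed branch probabilities (hypothetical, not stated in the comment).
# They must sum to 1 for the two branches to form a probability tree.
p_branch = {"something": 0.75, "something_else": 0.25}

# Law of total probability: weight each conditional by its branch.
p_doom = sum(p_branch[b] * p_doom_given[b] for b in p_branch)
print(round(p_doom, 4))
```

Under these assumptions, learning which branch holds moves the estimate to 3% or 15%, while the weighted average stays at the overall 6%.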

a-h on Should we maximize the Geometric Expectation of Utility?

(apologies for taking a couple of days to respond, work has been busy)

I think your robot example nicely demonstrates the difference between our intuitions. As cubefox pointed out in another comment, what representation you want to use depends on what you take as basic.

There are certain types of preferences/behaviours which cannot be expressed using arithmetic averaging. These are the ones which violate VNM, and I think violating VNM axioms isn't totally crazy. I think it's worth exploring these VNM-violating preferences and seeing what they look like when more fleshed out. That's what I tried to do in this post.

If I wanted a robot that violated one of the VNM axioms, then I wouldn't be able to describe it by 'nailing down the averaging method to use ordinary arithmetic averaging and assigning goodness values'. For example, if there were certain states of the world which I wanted to avoid at all costs (and thus violate the continuity axiom), I could assign zero utility to it and use geometric averaging. I couldn't do this with arithmetic averaging and any finite utilities [1].

A better example is Scott Garrabrant's argument regarding abandoning the VNM axiom of independence. If I wanted to program a robot which sometimes preferred lotteries to any definite outcome, I wouldn't be able to program the robot using arithmetic averaging over goodness values.

I think that these examples show that there is at least some independence between averaging methods and utility/goodness.
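The "avoid at all costs" example can be checked numerically. A minimal sketch, assuming the geometric expectation of a lottery is the product of utilities raised to their probabilities, with non-negative utilities; the lotteries and utility values are hypothetical:

```python
import math


def arithmetic_expectation(lottery):
    """Sum of p * u over (probability, utility) pairs."""
    return sum(p * u for p, u in lottery)


def geometric_expectation(lottery):
    """Product of u ** p over (probability, utility) pairs; needs u >= 0."""
    return math.prod(u ** p for p, u in lottery)


# Hypothetical lotteries: 'risky' has a 0.1% chance of the zero-utility
# state, 'safe' is a certain but mediocre outcome.
risky = [(0.999, 100.0), (0.001, 0.0)]
safe = [(1.0, 1.0)]

print(arithmetic_expectation(risky), arithmetic_expectation(safe))
print(geometric_expectation(risky), geometric_expectation(safe))
```

With arithmetic averaging the risky lottery wins, because a finite utility at tiny probability barely moves the sum. With geometric averaging, any nonzero chance of the zero-utility state drives the whole product to zero, so the safe option is preferred however small that chance is.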

(ok, I guess you could assign 'negative infinity' utility to those states if you wanted. But once you're doing stuff like that, it seems to me that geometric averaging is a much more intuitive way to describe these preferences. )

Condensation is not just possible but would happen by default. You described the tubes as steel lined with aluminum in contact with the ground, if not buried. That's going to be consistently cool enough for passive condensation.

Getting water out of a long tube shouldn't be hard with multiple drains, and if there's any incline, you just need them at the bottom. You can just dump it in the ground. Use a plumbing trap to keep the gasses separated. They're at equal pressure, so this should work, and the pressure can also be maintained mostly passively with hydrogen bladders exposed to the atmosphere on the outside, although the burned hydrogen will have to be regenerated before they empty completely, but this can be done anywhere on the pipe. Hydrogen can be easily regenerated by electrolysis of water, which doesn't seem any more expensive than charging the batteries. It might be even cheaper to crack it off of natural gas or to use white hydrogen when available.

Are turbines more expensive than electric motors for similar power? It's true that conventional piston engines are heavy, but batteries are also heavy, especially the cheaper chemistries.

Alternatively, run electricity through the pipe to power the vehicles so they don't have to carry any extra weight for power. It's coated with conductive aluminum already. If half-pipes could be welded with a dielectric material and not cost any more that would work. Or use an internal monorail, but maybe only if you were going to do that already. Or you could suspend a wire. That's got to be pretty cheap compared to the pipe itself.

the-gears-to-ascension on My Detailed Notes & Commentary from Secular Solstice

seems like it goes against the rationalist virtue of changing one's mind to refuse to change a song because everyone likes it the way it is.