How good a proxy for accuracy is precision?

post by Elizabeth (pktechgirl) · 2019-08-31T01:01:57.330Z · LW · GW · 3 commentsThis is a question post.

Contents

Answers 4 Lawrence None 3 comments

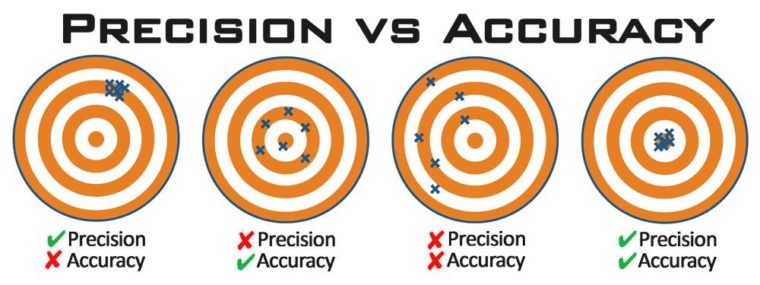

Accuracy = averaging to the right answer.

Precision = having a low standard deviation.

It's easy to get a precise but inaccurate answer- just guess 0 every time. But for situations where people aren't goodharting, are a cluster of answers that are more similar to each other more likely to average to the correct answer than a cluster of answers that aren't? And how does that change based on how the answers were generated (e.g. same individual giving multiple answers over time vs. multiple individuals answering vs. multiple groups answering)? In what ways do accuracy and precision directly trade off against each other?

Answers

I believe your definition of accuracy differs from the ISO definition (which is the usage I learned in undergrad statistics classes, and also the usage most online sources seem to agree with): a measurement is accurate insofar as it is close to the true value. By this definition, the reason the second graph is accurate but not precise is because all the points are close to the true value. I'll be using that definition in the remainder of my post. That being said, Wikipedia does claim your usage is the more common usage of the word.

I don't have a clear sense of how to answer your question empirically, so I'll give a theoretical answer.

Suppose our goal is to predict some value . Let be our predictor for (for example, we could have ask a subject to predict ). A natural way to measure accuracy for prediction tasks is the mean squared error , where a lower mean square error is higher accuracy. The Bias Variance Decomposition of mean squared error gives us:

The first term on the right is the bias of your estimator - how far the expected value of your estimator is from the true value. An unbiased estimator is one that, in expectation, gives you the right value (what you mean by "accuracy" in your post, and what ISO calls "trueness"). The second term is the variance of your estimator - how far your estimator is, in expectation, from the average value of the estimator. Rephrasing a bit, this measures how imprecise your estimator is, on average.

As both the terms on the right are always non-negative, the bias and variance of your estimator both lower bound your mean square error.

However, it turns out that there's often a trade off between having an unbiased estimator and a more precise estimator, known appropriately as the bias-variance trade-off. In fact, there are many classic examples in statistics of estimators that are biased but have lower MSE than any unbiased estimator. (Here's the first one I found during Googling)

↑ comment by Elizabeth (pktechgirl) · 2019-09-01T20:23:00.623Z · LW(p) · GW(p)

Looks like what I'm calling accuracy ISO calls "trueness", and ISO!accuracy is a combination of trueness and precision.

3 comments

Comments sorted by top scores.

comment by Elizabeth (pktechgirl) · 2019-08-31T01:03:14.471Z · LW(p) · GW(p)

I would just like to complain about how many bullseye diagrams I looked at where the "low accuracy" picture averaged to about the bullseye, because the creator had randomly spewed dots and the average location of an image is in fact its center.

Replies from: LawChan↑ comment by LawrenceC (LawChan) · 2019-09-01T17:52:53.663Z · LW(p) · GW(p)

I wonder if that's because they're using the ISO definition of accuracy? A quick google search for these diagrams led me to this reddit thread, where the discussion below reflects the fact that people use different definitions of accuracy.

EDIT: here's a diagram of the form that Elizabeth is complaining about (source: the aforementioned reddit thread):

comment by Ruby · 2019-08-31T04:47:42.014Z · LW(p) · GW(p)

This seems to be a question about the correlation of the two over all processes that generate estimates, which seems very hard to do. Even supposing you had this correlation over processes, I'm guessing once you have a specific process in mind, you get to condition on what to know about it in a way that just screens off the prior.

In a given domain though, maybe there are useful priors one could have given what one knows about the particular process. I'll try to think of examples.