There Is No Control System For COVID

post by Mike Harris (mike-harris-1) · 2021-04-06T17:16:04.171Z · LW · GW · 8 comments

Introduction:

The standard model for explaining COVID transmission has a serious problem with the data. In the United States, despite seemingly large differences in policy and behavior, the difference in infection rates has been relatively small.

In this article I will explain why this convergence is so surprising for the standard model and how a relatively small modification can give much more sensible results. I will also discuss some of the other suggested approaches and why they are not able to adequately solve this problem.

The Problem:

If we look at the infection data as of November 2020, before California and New York had their winter surge, we see widely varying COVID infection rates. Vermont, the least infected state, had less than 3% of its population infected. The most infected state (excluding ones with major outbreaks before lockdown) was North Dakota, with almost 28% of its population infected.

These large differences were attributed to different levels of restrictions and seriousness among the residents in fighting COVID. California was not the least infected state, and Florida not the most infected, but as large states with deviating policy responses and corresponding differences in infection rates they came to epitomize the two sides.

Florida, with its loose policies, had people spend ~20% more time outside the house than California did, and by November 1st 20% of its population had been infected compared to California's 12%.

The implication, then, is that if Florida were to reduce its transmission by the 20% necessary to match California, it would reduce its infection rate to 12%. However, if we fit the daily transmission rate to the observed data in Florida, we see that reducing it by 20% would mean that only 0.75% would have been infected. In order to match California's infection rate, Florida would have needed to reduce its transmission rate by just 3%.

Using similar simulations, we can see that the reduction needed for Florida to match the best state, Vermont, is just 10%, while an increase of 10% would have doubled the number of infections and made Florida by far the most infected state in the country.
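To get a feel for why the standard model is so sensitive, here is a minimal sketch of a toy version of it. This is not the fit used above: the baseline reproduction number of 1.15, the 40 generations, the population size and the seed cases are all hypothetical values chosen only for illustration.

```python
def attack_rate(r_eff, generations=40, population=1_000_000, seed_cases=1_000):
    """Fraction ever infected under a fixed per-generation reproduction number."""
    susceptible = population - seed_cases
    infectious = float(seed_cases)
    for _ in range(generations):
        # Standard-model assumption: every susceptible person is equally at risk.
        new = min(susceptible, infectious * r_eff * susceptible / population)
        susceptible -= new
        infectious = new
    return 1.0 - susceptible / population

baseline = attack_rate(1.15)         # hypothetical baseline, not Florida's fitted value
reduced = attack_rate(1.15 * 0.8)    # 20% less transmission
increased = attack_rate(1.15 * 1.1)  # 10% more transmission
print(f"baseline {baseline:.1%}, -20% {reduced:.1%}, +10% {increased:.1%}")
```

Because the change in transmission compounds every generation, a modest percentage change in the rate moves the cumulative infection count by a much larger factor.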

When you combine these points together you get the following problems for the standard model:

- Despite seemingly wide variations in behavior and lockdown strictness all states had nearly the same amount of transmission.

- The duration of the epidemic is highly unlikely. A little more transmission and most states would have had a single large spike in cases; a little less transmission and the disease would have been virtually eradicated.

There is a secondary problem for the standard model. If we compare the transmission rates between Florida and California we do not see a consistently higher rate in Florida.

Instead it fluctuates. At times Florida even seems to be more "closed" than California, against all intuition and in a way not confirmed by the mobility data. If you look at the Effective RT tab on covidestim.org you will see that this fluctuating pattern is common across all the different states.

The Vulnerability Model:

In order to address these problems I will propose the following modification to the standard model.

- Some people are more "vulnerable" to infection than others.

- Who is "vulnerable" changes over time.

In other words, at any given time most people are "invulnerable" and it is difficult to infect them. However, over the course of a year most people will go through a period of "vulnerability" where they are especially susceptible to infection.

If we then try to infer transmission rates based on this model we get this result instead:

Starting in June, Florida began to diverge from California, and had ~20% more transmission. Over the next few months this gap widened at a fairly steady pace. This fits with the data, and does not have any closing of the gap which needs to be explained.

Furthermore, a 20% transmission reduction does in fact result in ~10% being infected (just like California). A 10% increase in transmission means that ~25% are infected rather than ~40%.

This resolves both of the problems that the standard model faced. We no longer need all states to fit into an exceptionally narrow range, and the lack of exponential spikes or dropoff is naturally explained.

In the following sections I will try to explain why making these changes to the standard model results in these changes in behavior.

A Thought Experiment:

Suppose that you repeatedly ran an experiment where you put one infected person in a room with 100 healthy people for one hour. On average at the end of this experiment two of the healthy people become infected.

You are now asked to predict the results of two follow up experiments.

- How many healthy people would be infected if instead there were two sick people in the room?

- How many healthy people would be infected if they were in the room for two hours rather than one?

Without knowing the mechanism behind how the disease was transmitted, it would be hard to predict the results.

If for instance the mechanism was that the sick person randomly coughed on two people per hour, it would be easy to predict that in either case four people would be infected.

On the other hand, if the sick person coughed on everybody and only two people got sick, there would be two extreme possibilities:

- If you get coughed on you have a 2% chance of getting sick => Twice the coughs lead to twice the infection.

- 2% of people get sick if they are coughed on, everybody else is safe => Twice the coughs have no impact on infection.

The standard model assumes that the first alternative is true. In my model we consider an in-between possibility, where some people are safe if you cough on them, and others have some chance of getting sick. Precisely how many people get sick if there are twice as many sick people (or people are in the room for twice as long) is uncertain, and depends on where we are on the spectrum between the two extremes.
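The spectrum between the two extremes can be sketched in a few lines. The function name and parameters here are illustrative assumptions, not part of the original analysis: a fraction of the room is vulnerable, and the per-exposure infection chance is chosen so that a single exposure always infects 2 of 100 people on average.

```python
def expected_infected(vulnerable_fraction, exposures, baseline_rate=0.02, healthy=100):
    """Expected infections when everybody in the room is coughed on `exposures` times."""
    # Per-exposure chance for a vulnerable person, calibrated so that one
    # exposure infects `baseline_rate` of the whole room on average.
    p = baseline_rate / vulnerable_fraction
    # A vulnerable person escapes only if every exposure fails to infect them.
    return healthy * vulnerable_fraction * (1 - (1 - p) ** exposures)

for v in (1.0, 0.5, 0.1, 0.02):
    print(f"vulnerable {v:.0%}: one exposure {expected_infected(v, 1):.2f}, "
          f"two exposures {expected_infected(v, 2):.2f}")
```

With everybody vulnerable, doubling the exposure nearly doubles the infections (about 3.96 instead of 2). With only 2% vulnerable, doubling the exposure changes nothing. Values in between give intermediate answers.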

Formal Model:

If we want to formalize this model, we could say that there are three parameters of interest:

- How many people are vulnerable.

- How many people would be infected if everybody was vulnerable.

- How stable is vulnerability. If we repeated the experiment a week later, how many of the same people would be vulnerable.

At the start of an outbreak, when nobody is immune, we can measure the product of the first two parameters. This is called R0 in the standard model. Over time, even if R0 stays constant, different values for the three parameters will lead to different epidemic curves.
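In symbols (the names $E$ and $v$ are introduced here only for illustration), let $E$ be the number of people an infected person would infect if everybody were vulnerable, and let $v$ be the fraction of the population that is vulnerable. At the start of an outbreak, when nobody is immune:

$$R_0 = E \cdot v$$

So, for instance, $E = 10$ with $v = 0.2$ gives the same $R_0 = 2$ as $E = 2$ with $v = 1$, even though the two cases can behave very differently later on.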

Effects of Modeling Vulnerability:

We can consider three different scenarios:

| Experiment | Exposed | Vulnerable | Stability | R0 |
|---|---|---|---|---|
| Standard Model | 2 | 100% | 100% | 2 |
| Stable Vulnerability | 10 | 20% | 100% | 2 |
| Semi-Stable Vulnerability | 10 | 20% | 90% | 2 |

In all of the scenarios we will use the same starting conditions:

- There is a population of 1,000,000 people

- On the first day, there is 1 person infected.

- On average a person will infect two other vulnerable people at random (R0=2)

- Once a person is infected they are permanently immune from further infections.

Standard Model:

In the standard model, everybody is vulnerable and we get the familiar exponential curve. Once the population reaches 50% immunity, cases peak and then start to decline rapidly.

Stable Vulnerability:

In this model we simply assume that only one out of five people can be infected. Again we get the same curve as in the standard model, with one key difference. Instead of needing 50% of the population to become immune, we only need 50% of those who are vulnerable (or 10% of the population) to become immune.
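The threshold arithmetic can be written out, assuming the usual herd immunity formula $1 - 1/R_0$ applies within the vulnerable group (the symbol $v$ for the vulnerable fraction is introduced here for illustration):

$$h_{\text{vulnerable}} = 1 - \frac{1}{R_0} = 1 - \frac{1}{2} = 0.5, \qquad h_{\text{population}} = v \cdot h_{\text{vulnerable}} = 0.2 \times 0.5 = 0.1$$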

Semi-Stable Vulnerability:

If we consider a situation where the vulnerable population is not stable over time something interesting happens.

The initial spike is very similar to the stable case. Once 50% of the vulnerable are infected, the spike dies down. Unlike the stable case however, there is a second, smaller spike which occurs later on and an even smaller third one.

Every week some of the vulnerable population is replaced at random from the general population. Since these newly vulnerable people were not affected by the first spike, they have much less immunity. As fewer new people get infected the immune percentage of the vulnerable population degrades to match the general population.

Eventually, when that percentage drops below 50%, the population loses "herd immunity" and cases start to rise again. Until the overall population reaches equilibrium with the vulnerable population, the cycle will continue.

If we compare the weekly growth rates between the standard model and the vulnerability model, the difference becomes apparent. In the standard model there is no natural tendency towards stability. Once cases start to fall, they will continue to fall unless something changes. On the other hand the Semi-Stable model has a strong tendency to stabilize at a constant level.
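The three scenarios can be sketched as a small deterministic simulation. This is a minimal reconstruction under stated assumptions, not the code behind the graphs in this post: one step is treated as both one week and one generation of infection, infected people are counted as immune from the following step, and "stability" is implemented by re-drawing the vulnerability status of a share `1 - stability` of people each step. The function and variable names are hypothetical.

```python
import math

def simulate(exposed, vulnerable, stability, population=1_000_000, steps=150):
    """Return (new infections per step, final infected fraction of the population)."""
    vs = vulnerable * population - 1.0    # vulnerable and susceptible
    vi = 1.0                              # vulnerable and immune (the first case)
    ns = (1.0 - vulnerable) * population  # invulnerable and susceptible
    ni = 0.0                              # invulnerable and immune
    infectious = 1.0
    new_cases = []
    for _ in range(steps):
        # Each infectious person exposes `exposed` random people; only exposed
        # people who are both vulnerable and susceptible become infected.
        p_exposed = 1.0 - math.exp(-exposed * infectious / population)
        new = vs * p_exposed
        vs -= new
        vi += new
        infectious = new
        new_cases.append(new)
        # Reshuffle: a share (1 - stability) of people have their vulnerability
        # status re-drawn, keeping the overall vulnerable fraction fixed.
        churn = 1.0 - stability
        susceptible, immune = vs + ns, vi + ni
        vs = stability * vs + churn * vulnerable * susceptible
        ns = susceptible - vs
        vi = stability * vi + churn * vulnerable * immune
        ni = immune - vi
    return new_cases, (vi + ni) / population

scenarios = {
    "standard": (2, 1.0, 1.0),
    "stable": (10, 0.2, 1.0),
    "semi_stable": (10, 0.2, 0.9),
}
final = {name: simulate(*params)[1] for name, params in scenarios.items()}
for name, fraction in final.items():
    print(f"{name}: {fraction:.1%} ever infected")
```

Plotting the `new_cases` list for each scenario gives the weekly curves. In this sketch the stable scenario burns out after a single wave confined to the vulnerable group, while the semi-stable scenario keeps drawing fresh susceptible people into the vulnerable pool.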

Alternatives:

As mentioned earlier there are three alternative explanations for this surprising behavior. In this section I will explain why I think these explanations are not nearly as compelling.

Faked Data:

In the earlier graphs I used data from COVID Estim, which tries to account for test availability and other such confounding factors. However, it would not be accurate if the case data were being intentionally manipulated. According to this theory, Florida's (and other states') cases do fit the standard model. If we could see the real data, we would see the expected spike.

This theory has two major flaws. Our estimates are based on a number of different data sources and directions (hospitalizations, confirmed cases, confirmed deaths, excess deaths). All of these different metrics point to very similar infection rates. Any manipulation should have left telltale signs in at least some of these numbers.

However there is a much more serious flaw in the theory. Suppose that Florida had been manipulating its data and in fact the summer spike was as severe as predicted.

To keep up the deception, these same officials would have had to manipulate the numbers in the other direction! A spike of that magnitude in the summer should have caused the fall and winter spike to completely disappear.

Behavior:

The behavioral explanation relies on people adapting their behavior in response to the observed course of the epidemic. When case numbers rise too high, people stay home and when case numbers drop people go out and party. Like a thermostat, this serves to keep case numbers at a constant level.

This explanation is adopted in various forms, by those who are strongly pro-lockdown, those who are strongly anti-lockdown, and those who are somewhere in the middle. However I believe that the behavioral story is implausible.

Firstly, feedback mechanisms like this work best when they are:

- Linear: Small behavioral changes cause small differences in outcome.

- Precise: It is possible to make fine tuned adjustments in behavior to achieve the desired outcome.

- Immediate Feedback: After making a change, it is possible to quickly see the outcome.

With COVID-19, none of these factors are present, which makes the proposed precision of the feedback mechanism implausible.

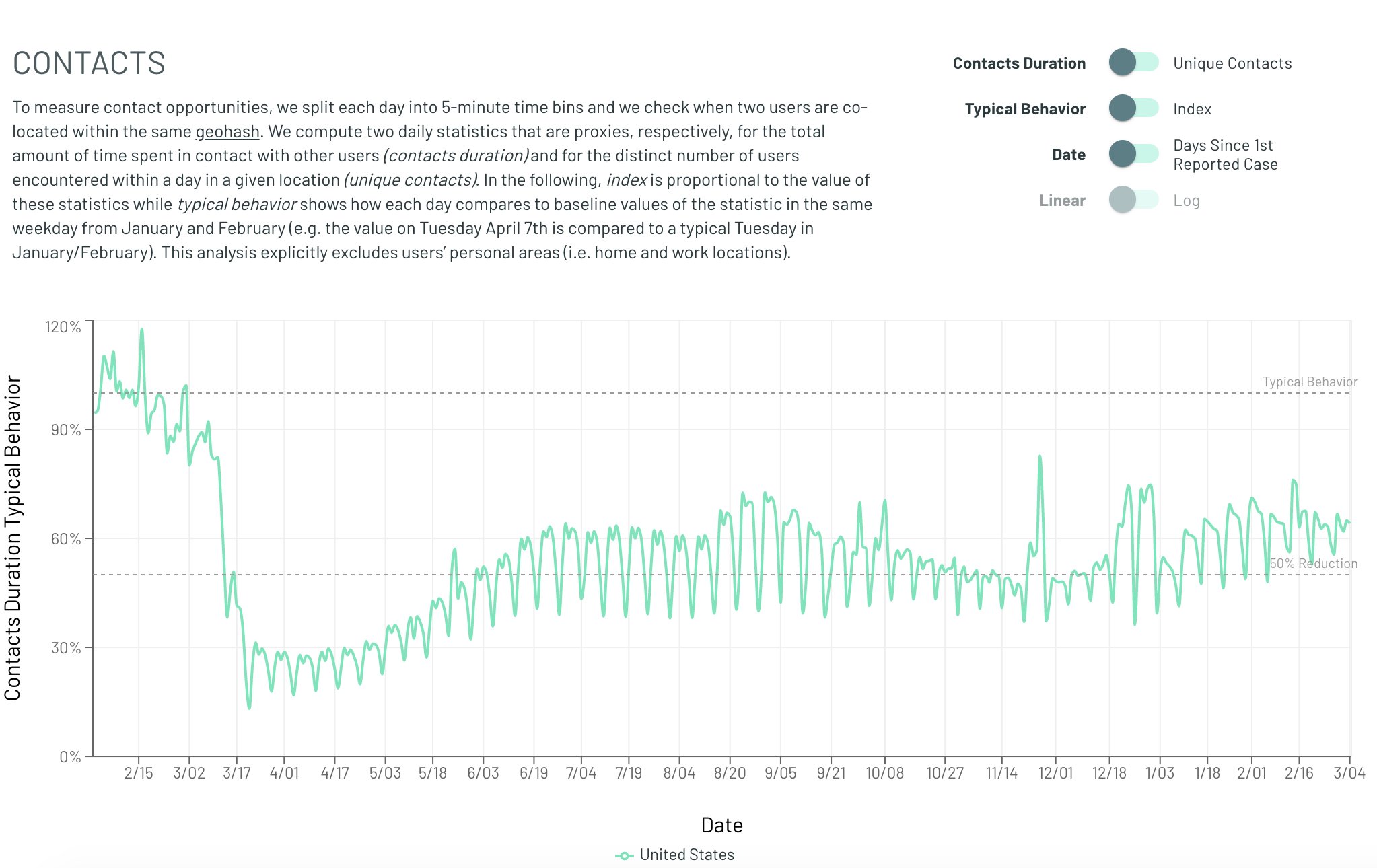

Finally, whatever the feedback mechanism is, it is not readily observable in mobility data. For instance, here is a graph of COVID "close contacts" over time.

Nothing close to the required magnitude of change shows up in that data. Obviously this dataset is not perfect, and perhaps there are other changes in behavior which are being missed. But other datasets (time away from home, Google mobility trends) also don't show any obvious trend. If dramatic behavioral changes are driving the change in spread, why can't we see them?

Heterogeneity:

The other main explanation for the apparent flatness is that transmission is heterogeneous. Early on the most social and susceptible groups get completely infected, and then as they reach herd immunity only those groups which are close to stability remain transmitting. This explanation can mathematically explain the earlier than expected peaks, and some of the flatness. However, these heterogeneous models make two predictions which have not fared very well.

Heterogeneous models predict a much lower herd immunity threshold. After the most vulnerable groups have been infected, the rest of the population should have a much harder time becoming infected. But in places like Florida and Texas the winter wave was much more severe than the summer one, when theoretically they should have been far more resilient.

More important, though, is the problem of where this hyper-social heterogeneous spread happened. Where is the observable group which had super high spread early on, but now appears to be completely immune? Aren't insular communities which are hosting 10,000 person weddings prime candidates for this burnout? Why does nobody seem to have the 80-90% infection rate predicted by this heterogeneity?

Similarly to the behavioral analysis, proponents of heterogeneous spread are left pointing to nebulous, informal social groups. But still, at some point we should be able to point to a town or a group which has very obvious heterogeneous spread.

The vulnerability model does not have this problem. If we compare Florida to a hypothetical super high transmission state with twice the transmission levels, we see that even though the super high transmission state does reach a much higher peak, it never reaches "herd immunity" where cases drop to near nothingness. That means that it is entirely plausible for some groups to be much more transmitting than others, while still not ever seeing the near complete immunity that the standard model would predict.

California in the Winter:

You may have noticed that in our earlier graphs, California's big spike in the winter was cut off. In the standard model this spike is explainable but mysterious. For whatever reason, starting in October the level of exposure to COVID jumped to a level much higher than ever seen before.

Our basic vulnerability model does far worse. As more and more people should be immune, it breaks down when confronted with California's spike.

If we update our vulnerability model so that starting in October more of the population becomes vulnerable (going from 20% -> 33% over time) we see something more feasible.

In neither model is there a particularly compelling explanation for what happened. Something clearly changed to cause the spike, and it is still not clear what.

Variants:

Starting in the fall of 2020 a new variant of COVID started circulating in the UK. Researchers estimated that it was ~50% more infectious than the baseline variety, and as expected it came to represent a greater and greater proportion of the observed infections.

As of March 2021, an estimated 50% of all new infections in Florida are due to this variant. However instead of leading to a skyrocketing case rate, this climb has occurred as case numbers have continued to decline.

In the standard model this should absolutely lead to an explosion of infection levels. Somehow, behavioral changes have precisely offset the effect of this much more contagious variant.

In the vulnerability model we can see that although the introduction of a more infectious variant is associated with increased case levels, it is a much gentler increase, which could more easily be masked by minor external factors (e.g. seasonality).

Superspreading Events:

One fact that this theory has difficulty explaining is how, if only a small fraction of people are vulnerable at any point, we see cases where a wave of infections spreads rapidly throughout a small group. There are two main plausible explanations for how these sorts of superspreader events can occur.

Vulnerable Environments:

In our model most of the population is not susceptible to infection at any one time. However there may be groups of people where that is not the case. Environments like nursing homes have many immunocompromised people, who may be much more likely to become infected. Similarly, there may be cases where the environment itself, rather than the people in it, leads to the higher vulnerability. Prisons or other group living environments may have lower sanitation standards which could explain why more of the people are vulnerable.

Different Transmission Types:

The other possibility is that within close quarters, transmission is more direct and a much greater proportion of the population is vulnerable. However, the bulk of the transmission occurs in more casual settings where only a small fraction of people are vulnerable.

Future Work:

The proposed model is not intended to be a fully realistic description of the world. Instead, it is intended to provide a basic framework on which more complex models can be built. Here are some examples of possible future extensions which could make this model more realistic:

- Heterogeneity: We assume that the population mixing is random and uniform. What happens when different populations with different vulnerability rates and different transmission characteristics interact?

- Vulnerability: We assume that vulnerability is a binary variable, and if you are not vulnerable there is absolutely no chance of being infected. What happens if instead each person has a different susceptibility level which changes over time?

- Household Infections: We have some data on how COVID is transmitted within a household (about 30-40% of household members catch it). Could we use that data to determine the vulnerability? Could we look for deviations in that number over time to determine seasonality?

8 comments


comment by jimrandomh · 2021-04-06T22:15:08.707Z · LW(p) · GW(p)

I think vitamin D deficiency might be the hidden factor that determines vulnerability. Reasons for thinking this:

- Vitamin D is an immune modulator, so there's a clear mechanism.

- Vitamin D deficiency is about the right fraction of the population, 29-41% depending where you set the threshold, which approximately matches the household secondary attack rate and the infection rate at superspreading events.

- It varies over time with random variation in diet and indoor/outdoor activity patterns.

- It correlates with season, latitude, race and BMI.

I think there's a reasonably high chance that, if any town had handed out 5kIU vitamin D supplements to everyone, that town would have had almost no cases.

↑ comment by MichaelLowe · 2021-04-06T22:51:50.657Z · LW(p) · GW(p)

This is an interesting hypothesis, but I find it implausible that there is large temporal variation in vitamin D levels. Seasonal variation, which might even be the biggest factor, affects everybody the same, and it just does not match my experience that the majority of the population changes their diet in such random ways that they could become vitamin D deficient by chance. The same goes for indoor/outdoor activities: most people's lives are not so variable that they spend each day outside one month but not the next. Besides, vitamin D deficiency is correlated very strongly with various comorbidities, which definitely do not randomly fluctuate.

I would also bet that the secondary household attack rate is similar across different age groups (except children) while it is known that Vitamin D deficiency is much likelier in older people.

↑ comment by smountjoy · 2021-04-07T06:45:04.474Z · LW(p) · GW(p)

Supporting your point, the ACX post on COVID and Vitamin D cites this study finding that Vitamin D levels are relatively stable.

Relevant from the abstract: "The 25(OH)D levels were correlated between visits 2 and 3 (3 y interval) among whites (r = 0.73) and blacks (r = 0.66)."

comment by dbmcclain · 2021-04-09T04:38:10.163Z · LW(p) · GW(p)

Very well presented. However, there is another aspect that most people seem to be missing. I come from an area of physics and engineering where I have dealt with control systems in the face of severe lag. Long time delay between changing a control parameter and seeing its effect.

We have a situation in the current pandemic where there seems to be a roughly 2 week period from the time a person becomes infected (and contagious) to when they finally present observable symptoms. Everyone in the country is pushing for reopening the bars and restaurants, and they are understandably impatient. But when the pandemic first appears to be tapering off is precisely the wrong time to reopen everything. You need to give the incubation period a chance to pass. Yet, repeatedly, people follow their impulses and governors respond to business pressure.

When a system has severe phase lag, as in this case, humans are notably incapable of dealing properly with it. We tend to see oscillatory behavior develop in the magnitude of system reaction - infections in this case. That is a signature of severe phase lag, and the simplistic control response based on immediately perceived threat levels. What we need is some feed-forward, or derivative (based on rates of change) injected into the control system to help stabilize the response.

(A bit of integral should be called for too... don't stop with control until the integrated or accumulated infections have declined to acceptable levels. You need all three terms - integral, proportional, and derivative, in this case. And all we have seen is a control response based on proportional impressions. This is notoriously prone to oscillation, just as we have seen.)

comment by MichaelLowe · 2021-04-06T23:29:20.230Z · LW(p) · GW(p)

So if the model is true, one potential source of temporal variation might be waning immunity acquired after being exposed but not infected. Will link studies later, but many non-infected people show some T-cell responses against SARS-CoV-2. In this scenario, e.g. a doctor gets coughed on, gets lucky, and develops some sort of temporary immunity that protects them for the next few months. After some time though this protection wanes and their risk increases again (this would probably not be a binary but continuous process).

I know too little about immunology, but afaik T-cell immunity wanes very slowly, so it does not quite fit the mark. Maybe there are other forms of immunity/antibodies that would explain this better.

↑ comment by MichaelLowe · 2021-04-09T19:57:22.578Z · LW(p) · GW(p)

It does seem that close contacts of infected people acquire T-cell immunity even without infection, but at least 90 days after exposure there does not seem to be a decreasing trend: https://www.nature.com/articles/s41467-021-22036-z

comment by MichaelLowe · 2021-04-06T22:54:19.107Z · LW(p) · GW(p)

Very interesting model, thanks for writing this up!

I will have to think about it in more depth. How do settings fit into this scenario where we know that basically everyone (50-75%) gets infected in an arguably short time frame: meat plants, close living quarters or prisons?

↑ comment by Mike Harris (mike-harris-1) · 2021-04-06T23:03:57.718Z · LW(p) · GW(p)

I agree that this is a relative weakness of the model. I think part of it is that the division into vulnerable/invulnerable is a simplification. If for instance you injected somebody with COVID then everybody would be "vulnerable". So in some environments conditions are ideal for spread which makes many relatively immune people become infected.