Why you can add moral value, and if an AI has moral weights for these moral values, those might be off

post by Wes R · 2025-04-02T17:43:37.074Z · LW · GW · 1 comment

This is a link post for https://docs.google.com/document/d/1XBqeG7q2ung9uOK4P_gt6YVpVVj3sJOcLfJKQB4hl2Y/edit?usp=sharing

Contents

What you'll learn from reading this

Epistemic status

What's a utility function?

Terminology

A long proof of aggregationism (The belief that morals are like a real number and can be added and whatnot)

A short proof of aggregationism (The belief that morals are like a real number, and can be added and whatnot) (Short version) (you can read both versions if you want, but this is the only one you need to read, plus it’s much shorter.)

The art of splitting things up into “objects”

So what about AI? (Further reading watching)

1 comment

What you'll learn from reading this

- Why the moral value of (thing 1 and thing 2) is the same as the moral value of (thing 1) + the moral value of (thing 2). (i.e., moral value is "linear".)

- (Some) people (or, I suppose, AIs) weight certain things by constants (e.g., "dogs are 2x as important as cats", i.e., you think something happening to 2 cats is worth the same as something happening to 1 dog).

Epistemic status

This is about as likely to be true as a typical un-peer-reviewed proof of something in math - that is, it might be off. (Let me know if you think this might be off so I can be more not-off!)

What's a utility function?

First, watch this video: What's the Use of Utility Functions?

(I’m going to assume you watched the above video when writing this, but you should be fine if you didn’t watch it. It’s 7 mins long, though, and it’s a good video. You do you, though.)

For the sake of simplicity, here, we’re going to be comparing the value of objects floating in space that don’t interact with each other.[1]

Terminology

- When I use [ ], you should interpret it as if the contents in the bracket are, grammatically, one word. For example, “A panda eats [shoots and leaves]” means that pandas eat both shoots and leaves, but “A panda eats, shoots, and leaves” means pandas don’t pay the check at restaurants.

This just makes [ ]’s more useful.

- 📦 =’ 🎁 means you don’t care whether [the world has a 📦 in it] or [the world has a 🎁 in it].

- 📦 >’ 🎁 means you prefer the [world where space has a 📦 in it] (which I’ll write as 📦 for short) to [the world with a 🎁 in it] (which I’ll write as 🎁 for short).

- 📦 <’ 🎁 means you prefer 🎁 to 📦.

- 📦 +’ 🎁 refers to the world/universe (I’ll use the words “world” and “universe” interchangeably in this article) with a 📦 and a 🎁 in it, where the two don’t interact with each other. Maybe the two are far apart in space, or the two are in different universes labeled as one single universe. (A “duoverse”, so to speak.)

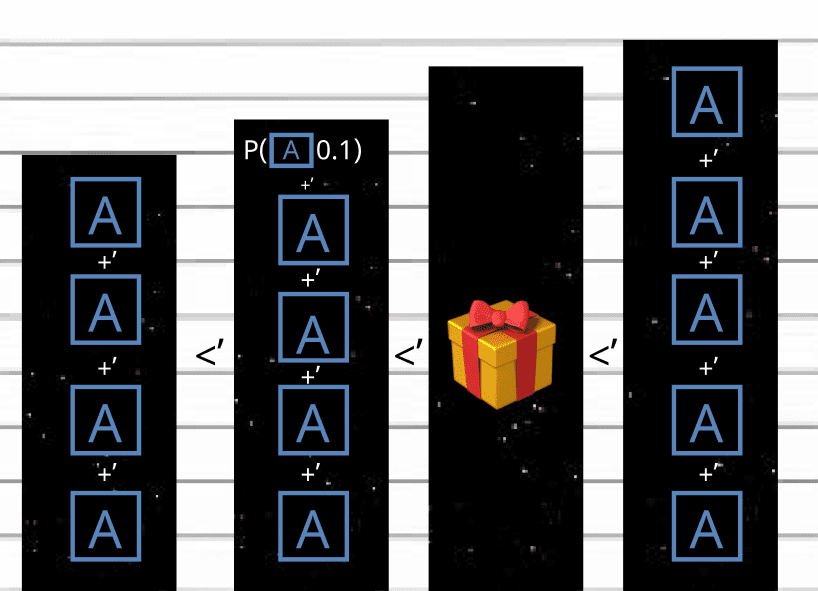

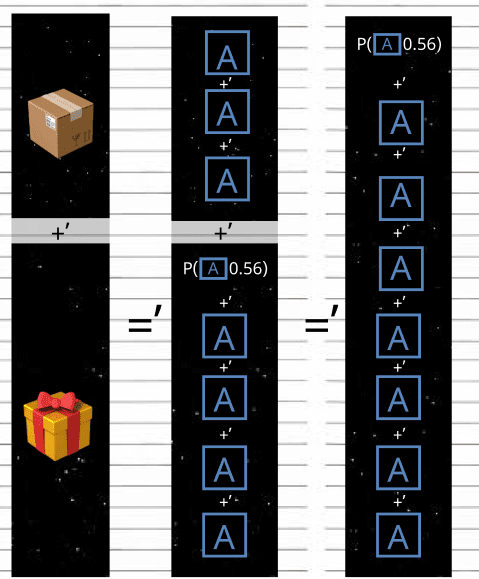

- P(📦0.5) means there’s a 0.5 chance of a world having a 📦 in it & a 0.5 chance of the world not existing.

- n📦 = 📦 +’ 📦 +’ 📦 +’ … +’ 📦, n times. That is, n 📦’s. (E.g., 2📦 is the same as 📦 +’ 📦, which is the same as 2 📦’s.)

- For simplicity, when I say “the value of X”, where X is an object, interpret it as “the value of the world where space has an X in it”.

First, let’s introduce a “Unit object”. Let’s choose this box labeled “A” (🅰️). Now, let’s define the following utility function:

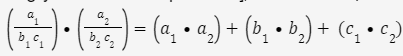

The input is the state of a world (in this example, a 📦 in space), and the output is how many [worlds with a 🅰️] we’d be willing to trade for it. For example, if 🅰️+’ 🅰️+’ 🅰️=’ 📦 (that is, we wouldn’t care whether the universe had three 🅰️’s in it or one 📦), then A(📦)=3.

Also, I’ll make 🅰️>’[The world with nothing in it] – that is, 🅰️ is better than nothing.

Notice how if A(⚽)<A(🏀)[2], then ⚽<’🏀; if A(⚽)>A(🏀), then ⚽>’🏀; and if A(⚽)=A(🏀), then ⚽=’🏀.

A long proof of aggregationism (The belief that morals are like a real number and can be added and whatnot)

Now, let’s say 🅰️+’ 🅰️+’ 🅰️+’ 🅰️<’ 🎁<’ 🅰️+’ 🅰️+’ 🅰️ +’ 🅰️ +’ 🅰️. What’s A(🎁)? It must be more than 4 and less than 5, but what is it?

Here’s where I made use of probabilities.

This shows that 4.1<A(🎁)<5.[3] We can keep getting more and more precise with probabilities. Let’s get even more precise in this image:

This shows that 4.5<A(🎁)<4.6. Eventually, you’ll reach the real value.[4] In this case, we’d find out that A(🎁)=4.56. That is, it’s worth 4.56 🅰️’s.
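The narrowing-down above is basically a bisection search, and it can be sketched in code. This is only a sketch under assumptions: `prefers_gift` is a hypothetical stand-in for asking a person "do you prefer the 🎁 to this bundle?", and `TRUE_VALUE` is hard-coded to the 4.56 from the example so the search has something to find.

```python
from fractions import Fraction

# Hypothetical stand-in for a person's real preferences (the article's example value).
TRUE_VALUE = Fraction(456, 100)


def prefers_gift(bundle_value: Fraction) -> bool:
    """Return True if the gift is preferred to a bundle worth `bundle_value` A's.

    A bundle worth 4.5 A's means four A's plus a 0.5 chance of one more A.
    """
    return TRUE_VALUE > bundle_value


def estimate_value(low: Fraction, high: Fraction, tolerance: Fraction):
    """Narrow the interval [low, high] around A(gift) by repeated halving."""
    while high - low > tolerance:
        middle = (low + high) / 2
        if prefers_gift(middle):
            low = middle   # the gift is worth more than this bundle
        else:
            high = middle  # the gift is worth at most this bundle
    return low, high


# Start from "more than 4 A's, less than 5 A's", as in the text.
low, high = estimate_value(Fraction(4), Fraction(5), Fraction(1, 1000))
print(float(low), float(high))
```

Each question halves the interval, so about ten questions pin the value down to within a thousandth of an 🅰️.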

Now for the interesting part:

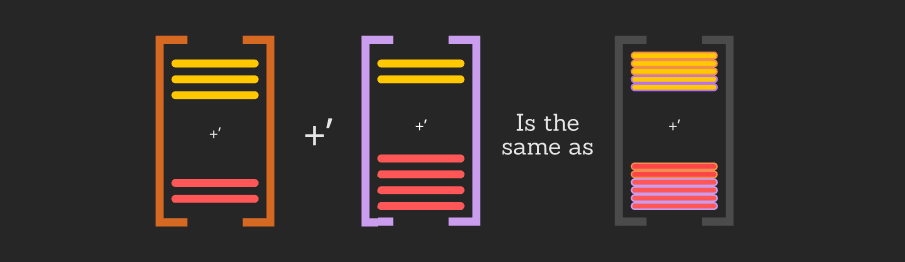

This shows that moral value is linear: A(⚽+🏀)=A(⚽)+A(🏀). To make the rest of the connection between “moral value is linear” and “A(⚽+🏀)=A(⚽)+A(🏀)”, let’s use a real-world analogy. Monetary value is linear, and 💵(⚽+🏀)=💵(⚽)+💵(🏀). (Also read “A short proof of aggregationism” (a bit further down), which explains this much better, and I’d rather not just copy/paste it here.)

Now, let’s make another object, 🅱️. 🅱️ is like 🅰️, but 🅱️ is worth more than any finite number of 🅰️’s. All the same logic that we applied to 🅰️ applies to 🅱️, it’s just that 🅱️ is better than 🅰️. Notice how adding this accounts for all possible A(🏀)s:

1. Enough 🅰️s (or - 🅰️s[5]) total to one 🏀, or

2. No amount of 🅰️ or - 🅰️s will ever total to one 🏀. (In this case, 🅱️ could be 🏀.)

Things worth more than 0 🅱️s are what we would call “priceless” - no amount of 🅰️ can add up to it!

Now, we can add [C], which is worth infinite 🅱️s. (This falls into the second category, where [C] is the 🏀).

Things worth more than 0 [C]s are what we would call “priceless²” - no amount of 🅱️ can add up to it!

We can keep doing this as much as we’d like. We can also do it in reverse, where we find something that’s better than [The world with nothing in it] but worse than any positive amount of 🅰️s: 🟥. [The amount of 🟥s in an 🅰️] is the same as [The amount of 🅰️s in a 🅱️]. You could keep going as much as you’d like, with things that are worth less and less and less and things that are worth more and more and more. To keep things tidy, I’ll introduce 🅰️n, where n is “how many infinities 🅰️n is bigger than 🅰️”. For example, 🅰️-1 is 🟥, 🅰️0 is just 🅰️, 🅰️1 is 🅱️, 🅰️2 is [C], and so on.

Now, just as we take A(🏀), we can take vectorA(🏀)[6], where the output is how many

… 🅰️-3s, 🅰️-2s, 🅰️-1s, 🅰️0s, 🅰️1s, 🅰️2s, 🅰️3s, and …

are needed to exactly reach the value of one 🏀. Namely, the output is a vector[7], where the ith row corresponds to how many 🅰️i s are part of 🏀.

- Note that vectorA(⚽+🏀) = vectorA(⚽) + vectorA(🏀), for the same reasons A(⚽+🏀) = A(⚽)+A(🏀).

- Also note that vectorA(⚽) = vectorA(🏀) if and only if ⚽ =’ 🏀.

- Also note that, if ⚽ >’ 🏀, then, for the largest value of n where the nth row of vectorA(⚽) is not equal to the nth row of vectorA(🏀), the value in vectorA(⚽) must be bigger than the value in vectorA(🏀), since ⚽ would have to be made of more 🅰️n s than 🏀.
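The three notes above amount to "add row by row, compare from the biggest tier down". Here is a minimal sketch, assuming we store a tiered value as a dictionary from tier n to a count (my own representation, not something from the proof itself), and assuming only finitely many tiers are nonzero. The example objects and their numbers are made up.

```python
def add_tiers(left: dict, right: dict) -> dict:
    """Add two tiered values row by row (tier n of the sum is the sum of the tier n rows)."""
    return {tier: left.get(tier, 0) + right.get(tier, 0)
            for tier in set(left) | set(right)}


def compare_tiers(left: dict, right: dict) -> int:
    """Return 1 if `left` is better, -1 if it is worse, 0 if they are equally good.

    Keys are tiers n (0 is the ordinary A, 1 is B, -1 is the red square);
    values are how many objects of that tier make up the thing being valued.
    The highest tier where the two differ decides the comparison.
    """
    for tier in sorted(set(left) | set(right), reverse=True):
        left_count, right_count = left.get(tier, 0), right.get(tier, 0)
        if left_count != right_count:
            return 1 if left_count > right_count else -1
    return 0


# Made-up example: a million A's versus one B minus fifty A's.
soccer_ball = {0: 1_000_000}
basketball = {1: 1, 0: -50}

print(compare_tiers(soccer_ball, basketball))  # the single B outweighs any number of A's
print(add_tiers(soccer_ball, basketball))
```

Because one 🅱️ beats any finite pile of 🅰️s, the lower rows only ever act as tie-breakers.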

Now, I should note that it’s reasonable to think that there are many things that are infinitely less valuable than a day of happy, conscious experience, but that’s only really worth discussing if it’s fun to talk about, since a single happy day of conscious experience trumps all the infinitely less valuable things in the world. It’d be like discussing if you should raise the price of your bananas by 0.00000000000000001 dollars: It doesn’t really matter, and it’s mostly for fun.

A short proof of aggregationism (The belief that morals are like a real number, and can be added and whatnot) (Short version) (you can read both versions if you want, but this is the only one you need to read, plus it’s much shorter.)

The price of [two separate objects] is the same as [the price of the first object] plus [the price of the second object].[8] Each dollar corresponds to a 🅰️, and each cent is p(🅰️0.01).[9] For objects that don’t interact or interfere with each other at all, a perfectly logical person should value having both items exactly as much as the sum of [how much that person values the first object] and [how much that person values the second object]. Why? After buying the first object (🏀) with some amount of 🅰️s, the value (in 🅰️s) of the second object (⚽) doesn’t change at all for this person, since the two objects don’t interact. So the total price that person pays in 🅰️s after buying both items is [the price of the first object] plus [the price of the second object], and the moral value of (🏀+’⚽) is EXACTLY the moral value of (🏀) + the moral value of (⚽)!

Now, just replace “Objects in space” with any other things that don’t interact. All the same logic works since nothing I said here was specific to object-like things.

Luckily for you, the argument presented on this page of the article works independently of the stuff I’ve said in the long argument! If you need to quickly explain “why aggregationism” to someone, you only need to send that someone THIS PAGE! (With some background on the terminology, of course.)

The art of splitting things up into “objects”

(From now on, I’ll call these separated things “objects”.)

Note that, for the short proof of aggregationism, the only thing two objects need in order to count as separate is that the first object doesn’t change the value of the second object. That was the ONLY necessary quality the objects had to have.

A group of objects fits with this so long as the existence of each object doesn’t influence the other objects’ value.

So, we couldn’t separate “you are having fries” and “you are having ketchup” since ketchup without fries is less valuable than ketchup when fries are present. How much money would you be willing to pay to get ketchup when you have fries? Now, how about without fries? The two are different, right?

But we could separate “you are having fries” and “A stranger is having ketchup” since your having fries doesn’t really make the ketchup better for the stranger.[10] How much money would you be willing to pay to get a random stranger ketchup when you have fries? Now, how about without fries? The two are pretty similar, right?
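The fries-and-ketchup test can be written down as a check: does having the first thing change what you’d pay for the second? This is a sketch with made-up prices in cents; `my_value` and its numbers are purely illustrative assumptions, not measurements of anyone’s preferences.

```python
def is_separable(value, first: str, second: str) -> bool:
    """True if having `first` does not change what `second` is worth.

    `value` maps a frozenset of things to what the whole set is worth (in cents).
    """
    worth_alone = value(frozenset({second})) - value(frozenset())
    worth_with_first = value(frozenset({first, second})) - value(frozenset({first}))
    return worth_alone == worth_with_first


def my_value(things: frozenset) -> int:
    """Made-up valuation in cents; ketchup is worth more to me when I also have fries."""
    total = 0
    if "my fries" in things:
        total += 300
    if "my ketchup" in things:
        total += 25
    if {"my fries", "my ketchup"} <= things:
        total += 75  # interaction: ketchup is better with fries
    if "stranger's ketchup" in things:
        total += 50
    return total


print(is_separable(my_value, "my fries", "my ketchup"))          # these interact
print(is_separable(my_value, "my fries", "stranger's ketchup"))  # these don't
```

The interaction bonus is exactly the thing that breaks the money argument: with it, the price of the pair is no longer the sum of the two prices.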

Let me know if you find a proof of aggregationism that is even less restrictive.

I would guess there isn't one since if one object influences the value of another object, then the argument with money💵 (the short proof) doesn’t work.

So, here are some useful separations!

- Other universes that don’t interact with ours, and that we don’t interact with:

This is just useful to get out of the way when doing moral reasoning, since that way you’re left with only our universe (and “universes” that influence ours, if you can call these “universes”).

- Other time periods:

We can separate the impact of things by time. [12] Since the value of a good day, bad day, or neutral day (so all the days) on Thursday isn’t dependent[13] on what the day before it is like, we can separate Thursday and Wednesday into different objects. The same goes for any other group of time periods. To see this in action, let’s say you’re offered either one sandwich (🥪) today or two sandwiches (🥪🥪) tomorrow. The value of the first one is

Value(🥪today +’ no 🥪 tomorrow +’ whatever happens past tomorrow)= [14]V(🥪today) + V(no 🥪 tomorrow) + V(whatever happens past tomorrow), and the second one is V(no🥪today +’ 🥪🥪 tomorrow +’ whatever happens past tomorrow)= V(no🥪today) + V(🥪🥪 tomorrow) + V(whatever happens past tomorrow). Assuming it doesn’t matter whether something happens today or tomorrow, just that it does happen, the second one is also equal to V(🥪🥪today) + V(no🥪 tomorrow) + V(whatever happens past tomorrow). So, which is bigger?

Well, if x>y, x-y>0, if x=y, x-y=0, and if x<y, x-y<0. So, what’s (V(🥪🥪today) + V(no🥪 tomorrow) + V(whatever happens past tomorrow)) - (V(🥪today) + V(no 🥪 tomorrow) + V(whatever happens past tomorrow))? The “no 🥪 tomorrow” and “past tomorrow” terms cancel, so it’s V(🥪🥪today)-V(🥪today). Is that bigger than 0? Only if you prefer two sandwiches to one sandwich. (Exactly the answer we wanted!)
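Here is the sandwich comparison as a small sketch. All the numbers are made up for illustration, and the code leans on the same assumption as the text: it doesn’t matter whether something happens today or tomorrow, just that it happens.

```python
def total_value(parts: dict) -> int:
    """Value of a world made of non-interacting parts: the sum of the parts."""
    return sum(parts.values())


# Made-up values, in units of A's.
V_ONE_SANDWICH = 5
V_TWO_SANDWICHES = 8
V_NO_SANDWICH = 0
V_PAST_TOMORROW = 100  # whatever happens afterwards, identical in both options

option_one = {"today": V_ONE_SANDWICH, "tomorrow": V_NO_SANDWICH, "later": V_PAST_TOMORROW}
option_two = {"today": V_NO_SANDWICH, "tomorrow": V_TWO_SANDWICHES, "later": V_PAST_TOMORROW}

# The shared "later" term cancels, leaving only the sandwich difference.
difference = total_value(option_two) - total_value(option_one)
print(difference, V_TWO_SANDWICHES - V_ONE_SANDWICH)
```

Changing `V_PAST_TOMORROW` to any other number leaves `difference` untouched, which is the whole point of separating time periods into objects.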

- Conscious experiences from non-conscious experience:

I think it’s safe to say that [me feeling happy] doesn’t make physical items more valuable. So, conscious experience can be labeled as a separate object from all physical things. Again, for a group of objects to be valid, it only needs to be the case that each object’s value is independent of the other objects. It’s just that the word “objects” is a bit jarring here.

- Object 1 +’ Object 2 =’ Object 3:

If you have two valid objects 1 & 2, then [Object 1 +’ Object 2] is another valid object, because if three objects Object 1, Object 2, and object 4 don’t affect the value of each other, then [Object 1 +’ Object 2] and object 4 don’t affect the value of each other, for all object 4s, which is the definition of an object.

It’s also the same reasoning behind how two products, when put together in a package, become another product. And since neither component of said package influences the value of any other product, [both put in one package] doesn’t influence the value of any other product.

- Any group of different conscious experiences:

How happy I am on Wednesday doesn’t change how valuable it would be for you to be happy on Tuesday. Similarly, you can split up your life and other people’s lives into times when you or others are happy, neutral, or sad.[15] Note that you don’t need to keep track of who experiences what, just that someone experiences it, since you shouldn’t care who experiences what and, therefore, don’t need to account for it.

- Any others – let me know if you come up with any other good separations.

You could also split up all conscious experiences by experience. (E.g., if you are happy for 3 days and your friend experiences the same happiness for 2 days, and you feel angry for 2 days, and your friend experiences the same anger for 4 days, that’s the same as 5 happy day objects and 6 angry day objects.)

You might’ve noticed that I just used something that looks a lot like vector addition.[16] That’s because it is! In this case, the first row is “days where someone is ‘happy’”, and the second row is “days where someone is ‘mad’”. Note that “happy” is an arbitrary collection of conscious experiences, same with “mad”. We could just as easily have collected things into “neutral” and “not neutral”; “asleep”, “not asleep and bored”, “not asleep and not bored”; “working” and “not working”; “reading the article you wrote” and “doing anything else”; “eating chocolate” and “suffering from the pain of not eating ice cream”; or whatever else you’d like! Preferably, every conscious experience should fall into one and only one of your categories, though, which we’ll talk more about how to do in the section “How to think about the world like an expert”.

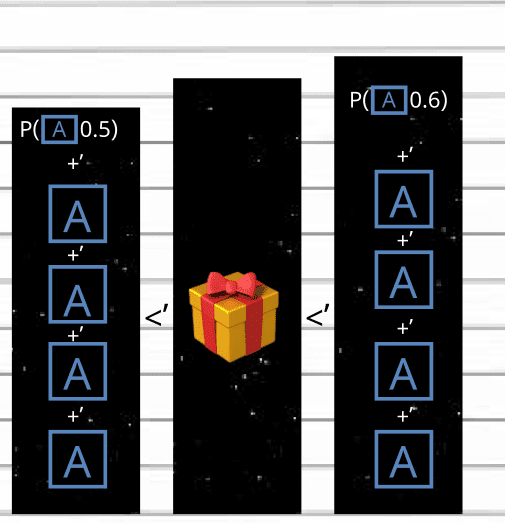
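The happy-days and angry-days bookkeeping is ordinary vector addition. A minimal sketch, using the day counts from the example (3 happy and 2 angry days for you, 2 happy and 4 angry days for your friend); the function name is my own.

```python
def add_vectors(first: list, second: list) -> list:
    """Add two experience-count vectors row by row."""
    if len(first) != len(second):
        raise ValueError("both vectors need the same rows")
    return [a + b for a, b in zip(first, second)]


# Rows: [days where someone is "happy", days where someone is "angry"]
you = [3, 2]
friend = [2, 4]

total = add_vectors(you, friend)
print(total)  # happy days and angry days, regardless of who had them
```

Notice that the result forgets who experienced what, which matches the claim that only the experience itself needs tracking.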

We could also get more and more specific with our collections. Now, the moral value of conscious experience, A(conscious experience), is equal to A(one second of “happy” conscious experience) x [seconds of “happy” conscious experience] + A(one second of “angry” conscious experience) x [seconds of “angry” conscious experience] + A(one second of “not happy or angry” conscious experience) x [seconds of “not happy or angry” conscious experience].[17] That sum is exactly the dot product of two vectors (some of you can see where I’m going with this):

A(conscious experience) = (seconds of “happy”, seconds of “angry”, seconds of “not happy or angry”) · (A(one second of “happy”), A(one second of “angry”), A(one second of “not happy or angry”))

We can do the same thing for all groups of conscious experience (and also non-conscious stuff: there’s nothing in this concept that’s dependent on it being groups of consciousness, and not groups of, say, different foods). Just replace “happy”, “angry”, and “not happy or angry” with the collections of your choice. You can also add or remove rows from the vectors as much as you’d like, since there’s nothing about this concept that depends on using three rows specifically. You can even do infinite rows! (Just not 0.)

The vector on the right is typically what people refer to as “Moral weights”.
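As a sketch, the dot product looks like this in code. The seconds and the weights below are made-up numbers chosen only to show the arithmetic; nothing in the argument says what the weights should be.

```python
def moral_value(seconds: list, weights: list) -> float:
    """Dot product: sum of (seconds of each kind of experience) x (value of one second of it)."""
    if len(seconds) != len(weights):
        raise ValueError("seconds and weights need the same rows")
    return sum(amount * weight for amount, weight in zip(seconds, weights))


# Rows: "happy", "angry", "not happy or angry"
seconds = [120, 30, 60]   # how long each kind of experience lasted
weights = [2, -3, 0]      # made-up moral weights: A(one second of each kind)

value = moral_value(seconds, weights)
print(value)  # 120*2 + 30*(-3) + 60*0
```

Adding a fourth category just means adding a fourth row to both lists.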

So what about AI? (Further reading watching)

Note that if an AI’s utility function is a vector (or includes one) of these “Moral weights”, those moral weights can validly be anything. An AI could believe dogs are 2x as important as cats or just 1.0001x as much. Similarly, it could think being happy is 2x as good as being slightly happy, or just 1.0001x as much.
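To see why the exact weights matter, here is a sketch of two hypothetical AIs that use the same formula but different moral weights. The outcomes and the function names are made up for illustration; the 2x and 1.0001x ratios are the ones from the paragraph above.

```python
def outcome_value(counts: list, weights: list) -> float:
    """Dot product of how many of each animal are helped with each animal's moral weight."""
    return sum(count * weight for count, weight in zip(counts, weights))


def preferred(weights: list, outcomes: dict) -> str:
    """Name of the outcome this set of weights values most."""
    return max(outcomes, key=lambda name: outcome_value(outcomes[name], weights))


# Rows: [dogs helped, cats helped]
outcomes = {"help 2 dogs": [2, 0], "help 3 cats": [0, 3]}

weights_ai_one = [2, 1]        # dogs are 2x as important as cats
weights_ai_two = [1.0001, 1]   # dogs are just 1.0001x as important as cats

print(preferred(weights_ai_one, outcomes))
print(preferred(weights_ai_two, outcomes))
```

Both AIs are perfectly consistent aggregationists, and they still disagree about what to do.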

For more on an AI’s possible goals, see:

For the full set of all the possible "goals" an AI can have (assuming it meets some definition of being "rational"), note that What's the Use of Utility Functions?, as mentioned earlier, allows for ANY preferences that are "complete & transitive" - there's nothing stopping Rob choosing getting stung by millions of wasps instead of having some tea, and there's nothing stopping an AI assistant with access to your computer from ordering you 1 million wasps instead of a cup of tea.

"Yeah. If I were Rob, I think I'd want 1 million wasps. After all, he never said he didn't like them!"

If you'd like a pause, here's a 2-minute funny video!

- ^

So, we’re ignoring gravity and the like. This is fine because the analogy doesn’t require much physics.

- ^

From here out, I’ll refer to “⚽” when I want to communicate “the world where space has [an arbitrary object labeled with a ⚽] in it.”, and “🏀” when I want to communicate “the world where space has [an arbitrary object labeled with a 🏀] in it.”

- ^

Remember: expected value. See “Expected Value Explained - Should You Play This Game?”

- ^

Except for when A(🎁)=𝝅, or some other irrational number, where we can only get as close as we want (say, 3.14<A(🎁)<3.15), unless you use 3 🅰️'s +' p(🅰️𝝅-3).

- ^

One - 🅰️ is worth the opposite of one 🅰️. That is, 🅰️ +’ -🅰️ =’ [The world with nothing in it]. Same goes with all 🏀s. (That is, 🏀 +’ -🏀 =’ [The world with nothing in it])

- ^

For the purposes of this article, I will use "A⃗" (an A with an arrow over it) and "vectorA" to mean the same thing.

- ^

If you don’t know what a vector is, this should explain enough for this section. Vectors | Chapter 1, Essence of linear algebra

- ^

We’re assuming the store doesn’t have a “Buy one, get one 50% off deal”, or something with those same qualities.

- ^

Remember: Because of expected value, p(🏀x) +’ p(🏀y) =’ p(🏀x + y).

(Unless x + y goes over 1, since probabilities cap out at 1. Then, we get p(🏀x) +’ p(🏀y) =’ 🏀 +’ p(🏀x+y-1).) So, 56 cents would correspond to 56 p(🅰️0.01)’s =’ p(🅰️0.56).

- ^

Well, technically, the objects can influence each other a little, but you get the point.

- ^

Well, technically, vectorA(⚽+🏀) still is equal to vectorA(⚽) + vectorA(🏀) if the existence of ⚽ increases the value of 🏀 just as much as the existence of 🏀 decreases the value of ⚽.

- ^

Ignoring weird time stuff like relativity.

- ^

But there is a correlation – if you have a good day on Wednesday, you’d probably have a good day on Thursday – and Wednesday does influence Thursday. On Thursday, you remember what happened on Wednesday, you wake up in the same bed you fell asleep on Wednesday, etc. But remember: all that we need to be allowed to separate two things into objects are that the value of one doesn’t influence the value of another.

- ^

V(🏀) is a shorthand for [the value of (🏀)] and is equal to A(🏀).

- ^

By “happy”, I mean “a generally more good than bad experience”, by “neutral” I mean “equally bad and good”, and by “sad” I mean “a generally more bad than good experience”.

- ^

If you don’t know what a vector is, this should explain enough for the rest of the 4th useful separation (the one this footnote is in), and while the rest of this section is not necessary to understand the morals, it is useful, especially for working on AI.

1. “What’s a vector?”

2. “What is a dot product?”, but you don’t have to go past 1 minute and 25 seconds. [1:25]

- ^

Well, technically, we might not always know A(one second of [some grouping of experience, such as “happy” or “angry”] conscious experience) with absolute certainty, in which case we use the expected value of A(one second of [some grouping of experience, such as “happy” or “angry”] conscious experience).

(If you don’t know expected value, watch this 3-min explainer: Expected Value Explained - Should You Play This Game?)

1 comment

Comments sorted by top scores.

comment by Dagon · 2025-04-02T18:20:59.691Z · LW(p) · GW(p)

Thanks for writing this, but my personal experience of valuing things is a direct contradiction to this. Almost all valuations have some kind of non-linear aggregation. "Declining marginal utility" is observationally and reflectively true for me, at least, and there are many cases outside myself which are more consistent with nonlinear aggregation than linear.

In a lot of cases, the margin is tiny, so it's hard to notice and not very important. Going from 9 billion to 9.01 or 9.5 billion is close to linear. Going from 0 to 1 or 1 to 2 or 9 to 10 is often VERY different in utility-change.