Centrists are (probably) less biased

post by Kevin Dorst · 2024-04-07T06:40:29.682Z · 2 comments

This is a link post for https://kevindorst.substack.com/p/centrists-are-probably-less-biased

Contents

- The empirical debate

- Who’s (probably) right?

- The results

- What to make of this?

TLDR: Which side is more responsive to political evidence? Some empirical studies suggest the left; others suggest the center. The debate is ongoing, but some very general dynamics imply that it’s probably the center.

I am not a centrist. I am also biased. (Rationally so, I think.)

Is that a coincidence? Which side of the political spectrum tends to be less biased? That’s a fraught conceptual and normative question. But it’s also, in part, an empirical one. Here’s what we know.

The debate centers on cognitive rigidity—the opposite of cognitive flexibility, understood as the ability to properly adapt to changing environments and questions by switching perspectives and modes of thinking. Rigidity is one aspect of bias: rigid people are less sensitive to relevant evidence.

There are two (not mutually exclusive) hypotheses with empirical support:

- Rigidity-of-the-Right: conservatives are, on average, more cognitively rigid than liberals.

- Rigidity-of-the-Extremes: people on the ideological extremes are, on average, more cognitively rigid than those near the center.

What’s the evidence?

The empirical debate

The evidence for rigidity-of-the-right is based on self-reports.

Of course, no one says “I suck at being flexible” on a survey. Rather: psychologists generate survey questions, find clusters whose answers are correlated (indicating that they measure something), and then label them with what they seem to be measuring.

For example, the “need for cognitive closure” scale has people rate themselves on statements like:

- “I don’t like situations that are uncertain.”

- “I dislike questions which could be answered in many ways.”

- “In most social conflicts, I can easily see which side is right and which is wrong.”

Correlations between political beliefs and answers to such questions provide the main evidence for rigidity-of-the-right. Political conservatism is positively correlated with measures of need for closure (r = 0.26) and dogmatism (r = 0.34), and is negatively correlated with measures of openness to experience (r = -0.32) and tolerance for uncertainty (r = -0.27).

The problem? Self-reports are socially confounded.

That is: people’s self-conception of what they “should” answer—which answer lives up to their (community’s) values—affects what they say. If you’ve ever gone on a first date, you’ll know that the line between description and aspiration can be a fuzzy one. (“I also love to run, meditate, and write for an hour before the sun rises!”)

Likewise with psychology surveys. People within the orbit of science, academia, and journalism—who are more likely to lean left—tend to agree that open-mindedness, humility, and curiosity are virtues.

But talking the talk isn’t walking the walk—plenty of self-described “radicals” are, in practice, extremely conservative. Indeed, there’s evidence that people’s self-reports of “intellectual humility” fail to correlate with objective measures.

Upshot: we should be skeptical of the evidence for rigidity-of-the-right.

What about rigidity-of-the-extremes? It’s supported by objective measures of cognitive flexibility.

For example: “belief bias” measures how often people misclassify valid arguments as invalid (or vice versa), as a function of whether the conclusion aligns with their beliefs. Many studies—including a recent meta-analysis—suggest that partisans on both sides display an equal amount of belief bias.[1]

More surprisingly: apparently-unrelated measures of cognitive flexibility turn out to be correlated with ideological extremity (and not conservatism). Zmigrod et al. (2020) use three well-studied measures to test this:

- The remote association task (RAT) asks whether people can find the links between sets of words, like ‘cottage’, ‘swiss’, and ‘cake’. (Link: ‘cheese’.)

- The alternative uses test (AUT) asks how many uses you can think of for ordinary objects—say, a brick. Most people think of ‘building material’ and ‘doorstop’; but few think of ‘self-defense weapon’ or ‘nutcracker’.

- The Wisconsin card sorting task (WCST) asks people to sort cards by a rule for a while—and then suddenly changes the rule, measuring how quickly they adjust.

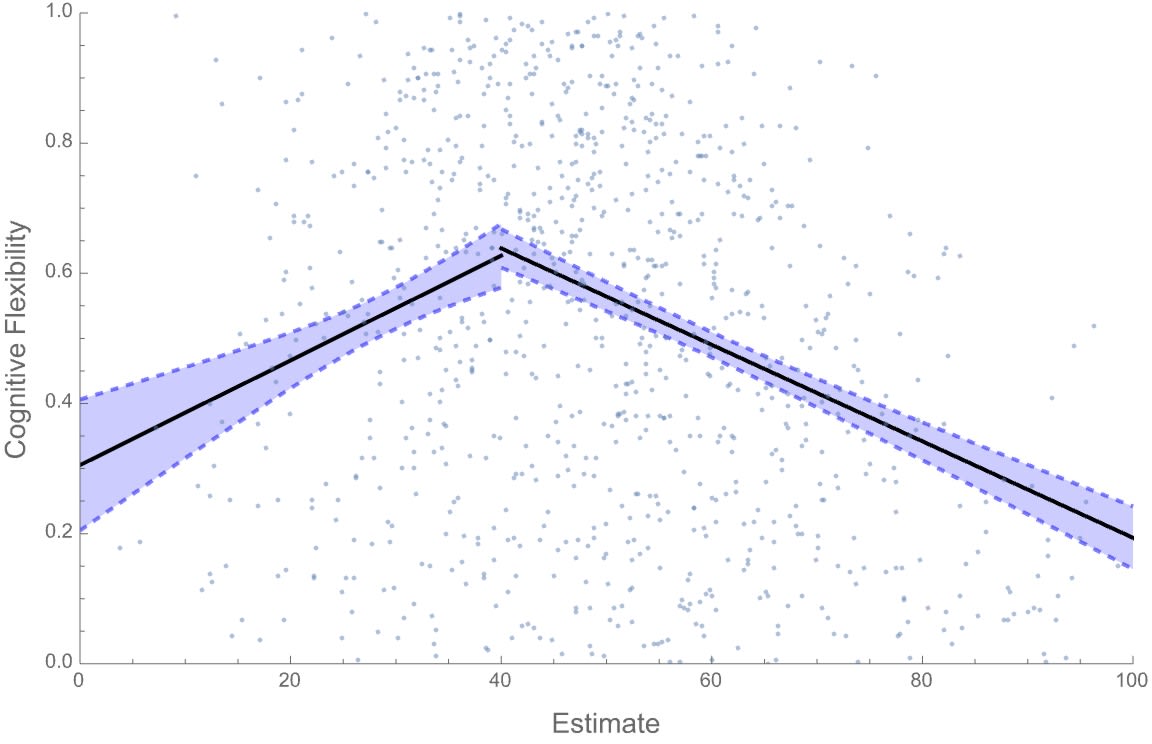

Zmigrod et al. find that those who are more politically extreme (x-axis) are less flexible on each of these measures (y-axes):

Who’s (probably) right?

These empirical waters go deep, and I’m in no position to pronounce a winner. Instead, I’d like to point out that there’s a very general reason to expect rigidity-of-the-extremes—we should have a high prior in it, as we wait for more evidence.

Why? Because those who are more sensitive to relevant evidence—more “cognitively flexible”—will be more reliably pulled toward the truth. This fact will generally induce a correlation between extremity and rigidity.

Here’s a simple model.

We have a population of Bayesians who vary in two ways:

- They have different prior estimates about a given political quantity, µ.

- They have different flexibilities fᵢ: different probabilities of conditioning on (versus ignoring) any relevant piece of evidence about µ that they see.

Start with (1), their priors. The quantity, µ, could be anything. But let’s make it a measure of how often conservatives vs. liberals get things right. For example, µ could be the average proportion of the time that—when liberals and conservatives disagree—conservatives are correct in their economic predictions. (So µ = 100% says that conservatives are always right, and µ = 0% says that liberals are always right. Nothing in the simulations depends on this choice of quantity, or on its being constrained between 0% and 100%.)

To keep things simple, suppose our Bayesians know that µ is the mean of a (roughly) normal distribution with known variance. They have differing prior estimates about µ, and they’re going to receive a series of bits of evidence. (“Draws from the distribution”—in this case, instances of economists’ track records.)
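When the noise variance of each draw is known and an agent's belief about µ is itself normal, conditioning on one draw has a closed form: the standard normal-normal conjugate update. The sketch below assumes each agent's prior is Normal(m, s²); the function and parameter names are illustrative, not taken from the post.

```python
from __future__ import annotations


def normal_update(prior_mean: float, prior_var: float,
                  x: float, noise_var: float) -> tuple[float, float]:
    """Condition a Normal(prior_mean, prior_var) belief about mu on one draw
    x ~ Normal(mu, noise_var) with known noise_var; return posterior (mean, var)."""
    prior_precision = 1.0 / prior_var
    obs_precision = 1.0 / noise_var
    post_precision = prior_precision + obs_precision  # precisions add
    # The posterior mean is a precision-weighted average of prior mean and draw.
    post_mean = (prior_precision * prior_mean + obs_precision * x) / post_precision
    return post_mean, 1.0 / post_precision


if __name__ == "__main__":
    # Prior: mu is about 60 (variance 25). One noisy draw of 40 (noise variance 400).
    print(normal_update(60.0, 25.0, 40.0, 400.0))
```

With these made-up numbers the estimate moves from 60 to about 58.8 and the variance shrinks from 25 to about 23.5: a single weak draw barely moves a confident prior, so how many draws an agent actually conditions on matters a great deal.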

Turn to (2), their cognitive flexibilities. Whenever they update, they do so rationally (by conditioning)—but they vary in how likely they are to update on any given piece of evidence.

Precisely: each agent i has a cognitive flexibility fᵢ—between 0 and 1—which says how likely they are to update. If fᵢ = 0.6, then whenever a piece of evidence comes in, agent i is 60% likely to condition on it, and 40% likely to ignore it.
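The flexibility mechanism is easy to state in code. This sketch (the `Agent` class and `see` method are hypothetical names of our own) wraps the conjugate update in a coin flip: with probability fᵢ the agent conditions on the draw, otherwise its belief is left untouched. Passing in a seeded `random.Random` keeps runs reproducible.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class Agent:
    mean: float         # current estimate of mu
    var: float          # current uncertainty (variance) about mu
    flexibility: float  # f_i in [0, 1]: chance of conditioning on each piece of evidence

    def see(self, x: float, noise_var: float, rng: random.Random) -> bool:
        """With probability f_i, condition on x (conjugate normal update);
        otherwise ignore it. Returns True if the agent updated."""
        if rng.random() >= self.flexibility:
            return False  # evidence ignored; belief unchanged
        post_precision = 1.0 / self.var + 1.0 / noise_var
        self.mean = (self.mean / self.var + x / noise_var) / post_precision
        self.var = 1.0 / post_precision
        return True


if __name__ == "__main__":
    rng = random.Random(0)
    agent = Agent(mean=70.0, var=25.0, flexibility=0.6)
    # 100 draws centered on 40; the update count is Binomial(100, 0.6), so about 60 on average.
    updates = sum(agent.see(rng.gauss(40.0, 20.0), 400.0, rng) for _ in range(100))
    print(updates, round(agent.mean, 1))
```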

This model is simplistic, but it gets at the dynamics: cognitive flexibility is a measure of how responsive you are to relevant information. There are many other ways we could implement this—for example, using the model of “ideological Bayesian updating” from the previous post, or by modulating the degree to which various agents are moved by evidence. The results would be similar.
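One way to implement the “modulating the degree to which various agents are moved by evidence” variant (our assumption, not necessarily what the author has in mind) is to temper the likelihood: raise it to the power f, which for a normal likelihood simply scales its precision by f. Then f = 1 is a full update, f = 0 is no update, and values in between move the agent partway, deterministically rather than by a coin flip.

```python
from __future__ import annotations


def tempered_update(prior_mean: float, prior_var: float, x: float,
                    noise_var: float, f: float) -> tuple[float, float]:
    """Illustrative alternative to coin-flip flexibility: condition on a
    likelihood raised to the power f, i.e. with precision f / noise_var."""
    obs_precision = f / noise_var  # f = 0 -> the draw carries no weight
    post_precision = 1.0 / prior_var + obs_precision
    post_mean = (prior_mean / prior_var + obs_precision * x) / post_precision
    return post_mean, 1.0 / post_precision


if __name__ == "__main__":
    for f in (0.0, 0.5, 1.0):
        print(f, tempered_update(60.0, 25.0, 40.0, 400.0, f))
```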

The results

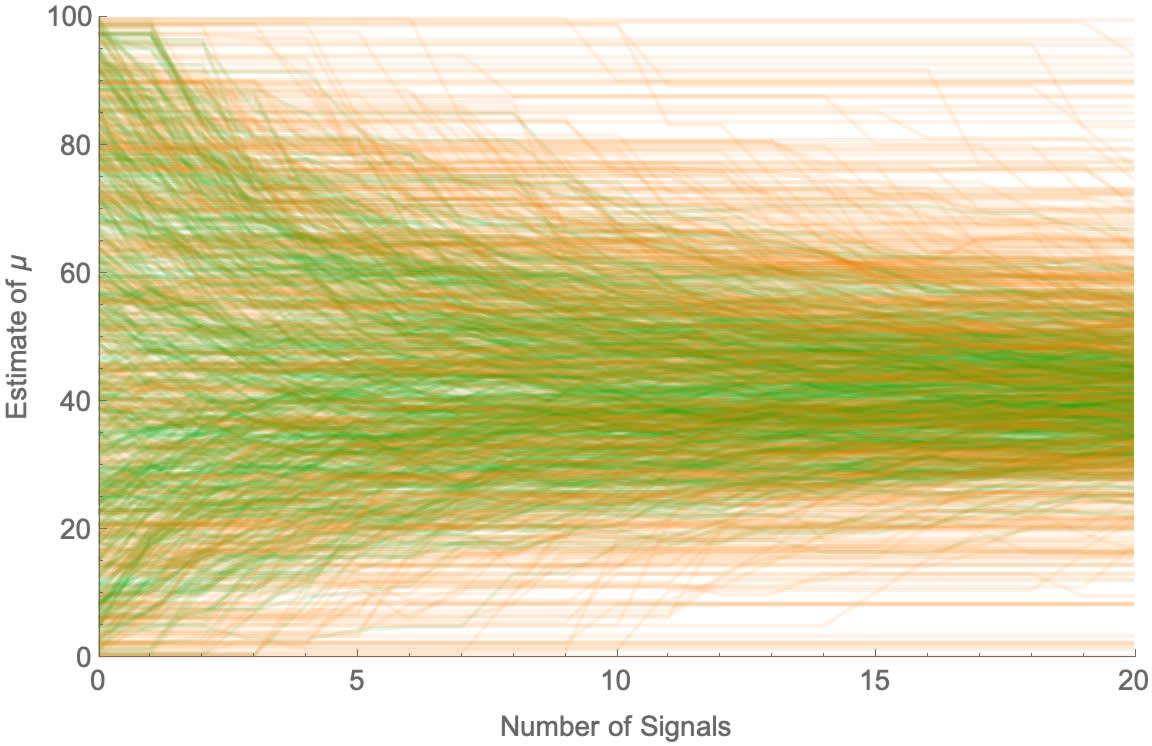

Color an agent green if they’re flexibility is above 0.5 and orange if it’s below 0.5. Randomizing their priors and setting the true value to µ=40%, here’s how their estimates evolve:

More-flexible (green) agents are more-pulled toward the true value, while less-flexible (orange) ones stay near their priors. As a result, those far off on either side of the true value tend to be the ones who are less flexible.
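A minimal reproduction of this kind of run might look like the following. The post does not give its exact parameters, so everything here is an assumption: 1,000 agents, priors uniform on 0 to 100, prior variance 25, noise variance 400 (each draw is weak relative to a prior), 100 rounds of evidence shared by everyone, and fᵢ drawn uniformly from 0 to 1. For each color it reports how far agents end from µ and how far they moved from their priors.

```python
from __future__ import annotations

import random
from statistics import mean


def simulate(n_agents: int = 1000, n_rounds: int = 100, mu: float = 40.0,
             prior_low: float = 0.0, prior_high: float = 100.0,
             prior_var: float = 25.0, noise_var: float = 400.0,
             seed: int = 0) -> tuple[list[float], list[float], list[float]]:
    """Return (priors, final estimates, flexibilities) for a population of
    coin-flip-flexible Bayesians who all see the same stream of draws."""
    rng = random.Random(seed)
    priors = [rng.uniform(prior_low, prior_high) for _ in range(n_agents)]
    flex = [rng.random() for _ in range(n_agents)]  # f_i ~ Uniform(0, 1)
    means, variances = priors[:], [prior_var] * n_agents
    for _ in range(n_rounds):
        x = rng.gauss(mu, noise_var ** 0.5)  # one shared piece of evidence
        for i in range(n_agents):
            if rng.random() < flex[i]:  # condition with probability f_i
                precision = 1.0 / variances[i] + 1.0 / noise_var
                means[i] = (means[i] / variances[i] + x / noise_var) / precision
                variances[i] = 1.0 / precision
    return priors, means, flex


if __name__ == "__main__":
    mu = 40.0
    priors, finals, flex = simulate(mu=mu)
    green = [i for i, f in enumerate(flex) if f > 0.5]
    orange = [i for i, f in enumerate(flex) if f < 0.5]
    for name, group in (("green", green), ("orange", orange)):
        err = mean(abs(finals[i] - mu) for i in group)
        moved = mean(abs(finals[i] - priors[i]) for i in group)
        print(f"{name}: mean |estimate - mu| = {err:.1f}, mean distance moved = {moved:.1f}")
```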

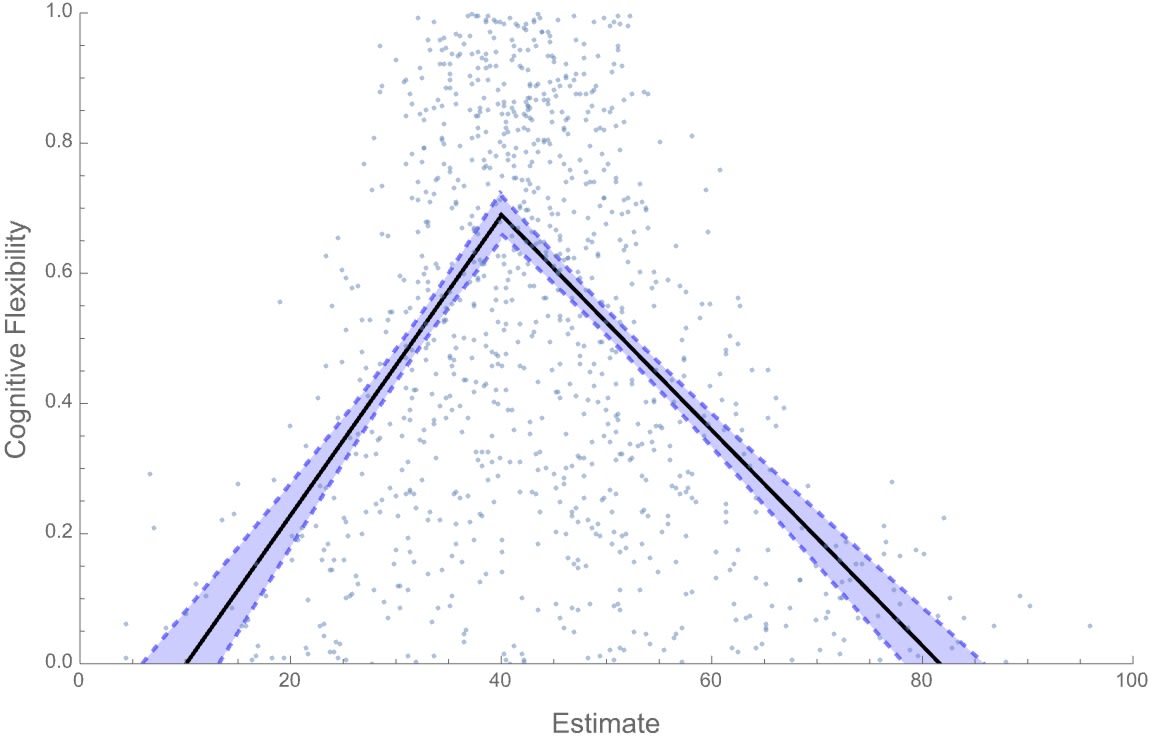

We can see this more precisely. On the x-axis, put each agent’s final estimate of µ, and on the y-axis put their flexibility fi. Then run two regression lines for those above and below the true value (µ=40%). That generates a familiar plot:

We find rigidity-of-the-extremes.
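The regression step can be sketched the same way: rerun the assumed simulation, split agents by whether their final estimate lies below or above µ, and fit an ordinary least-squares slope of fᵢ on final estimate within each group. Rigidity-of-the-extremes corresponds to a positive slope below the truth and a negative slope above it. The parameters are again our own guesses, not the author's.

```python
from __future__ import annotations

import random
from statistics import mean


def simulate(n_agents: int = 1000, n_rounds: int = 100, mu: float = 40.0,
             prior_low: float = 0.0, prior_high: float = 100.0,
             prior_var: float = 25.0, noise_var: float = 400.0,
             seed: int = 0) -> tuple[list[float], list[float]]:
    """Return (final estimates, flexibilities) for coin-flip-flexible Bayesians."""
    rng = random.Random(seed)
    means = [rng.uniform(prior_low, prior_high) for _ in range(n_agents)]
    flex = [rng.random() for _ in range(n_agents)]
    variances = [prior_var] * n_agents
    for _ in range(n_rounds):
        x = rng.gauss(mu, noise_var ** 0.5)  # shared evidence
        for i in range(n_agents):
            if rng.random() < flex[i]:  # condition with probability f_i
                precision = 1.0 / variances[i] + 1.0 / noise_var
                means[i] = (means[i] / variances[i] + x / noise_var) / precision
                variances[i] = 1.0 / precision
    return means, flex


def ols_slope(xs: list[float], ys: list[float]) -> float:
    """Least-squares slope of ys on xs (needs at least two distinct xs)."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def side_slopes(finals: list[float], flex: list[float],
                mu: float = 40.0) -> tuple[float, float]:
    """Slope of f_i on final estimate, separately below and above mu."""
    below = [(e, f) for e, f in zip(finals, flex) if e < mu]
    above = [(e, f) for e, f in zip(finals, flex) if e > mu]
    return (ols_slope([e for e, _ in below], [f for _, f in below]),
            ols_slope([e for e, _ in above], [f for _, f in above]))


if __name__ == "__main__":
    below, above = side_slopes(*simulate())
    print(f"slope of f_i on estimate: below mu {below:+.4f}, above mu {above:+.4f}")
```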

Of course, this result isn’t inevitable. In these simulations, it requires people’s prior estimates to begin on both sides of the true value—if everyone started out overestimating µ, then rigidity would correlate with being on that original side.

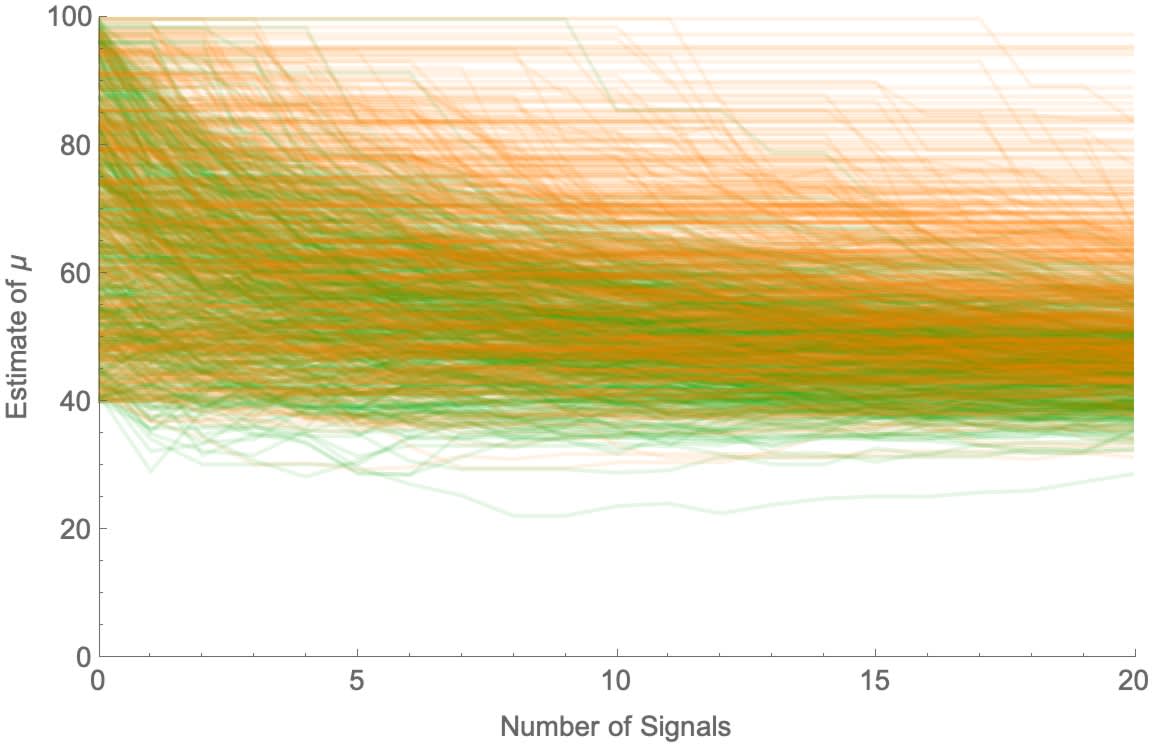

For example, here’s what happens when µ=40 and everyone begins with estimates between 40 and 100:

We find a rigidity-of-the-right effect:
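The one-sided case only changes the prior range. In this sketch (same assumed parameters as before, priors now uniform on 40 to 100) the Pearson correlation between final estimate and fᵢ is computed across all agents; a negative value means the less flexible agents sit further toward the high end, which is the rigidity-of-the-right pattern. The correlation is written out by hand rather than using `statistics.correlation`, which needs Python 3.10 or later.

```python
from __future__ import annotations

import random
from statistics import mean


def simulate(n_agents: int = 1000, n_rounds: int = 100, mu: float = 40.0,
             prior_low: float = 40.0, prior_high: float = 100.0,
             prior_var: float = 25.0, noise_var: float = 400.0,
             seed: int = 0) -> tuple[list[float], list[float]]:
    """Return (final estimates, flexibilities); priors default to one side of mu."""
    rng = random.Random(seed)
    means = [rng.uniform(prior_low, prior_high) for _ in range(n_agents)]
    flex = [rng.random() for _ in range(n_agents)]
    variances = [prior_var] * n_agents
    for _ in range(n_rounds):
        x = rng.gauss(mu, noise_var ** 0.5)  # shared evidence
        for i in range(n_agents):
            if rng.random() < flex[i]:  # condition with probability f_i
                precision = 1.0 / variances[i] + 1.0 / noise_var
                means[i] = (means[i] / variances[i] + x / noise_var) / precision
                variances[i] = 1.0 / precision
    return means, flex


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of xs and ys."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


if __name__ == "__main__":
    finals, flex = simulate()
    share_above = sum(e > 40.0 for e in finals) / len(finals)
    print(f"share still above mu: {share_above:.2f}")
    print(f"corr(final estimate, f_i): {pearson(finals, flex):+.2f}")
```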

But rigidity-of-the-right is fragile—most reasonable setups lead to rigidity-of-the-extremes.

After all, people’s opinions are also influenced by non-evidential factors. These could be ideological biases or motivated reasoning that pull them to one side or the other. That, obviously, would put those more-susceptible to such biases on the extremes.

Less obviously, the non-evidential factors could also be random noise. It’s widely agreed that people’s opinions suffer from such noise. And if everyone suffers from noise—but some agents are more cognitively flexible—then they’ll be the ones more reliably pulled toward the true value.

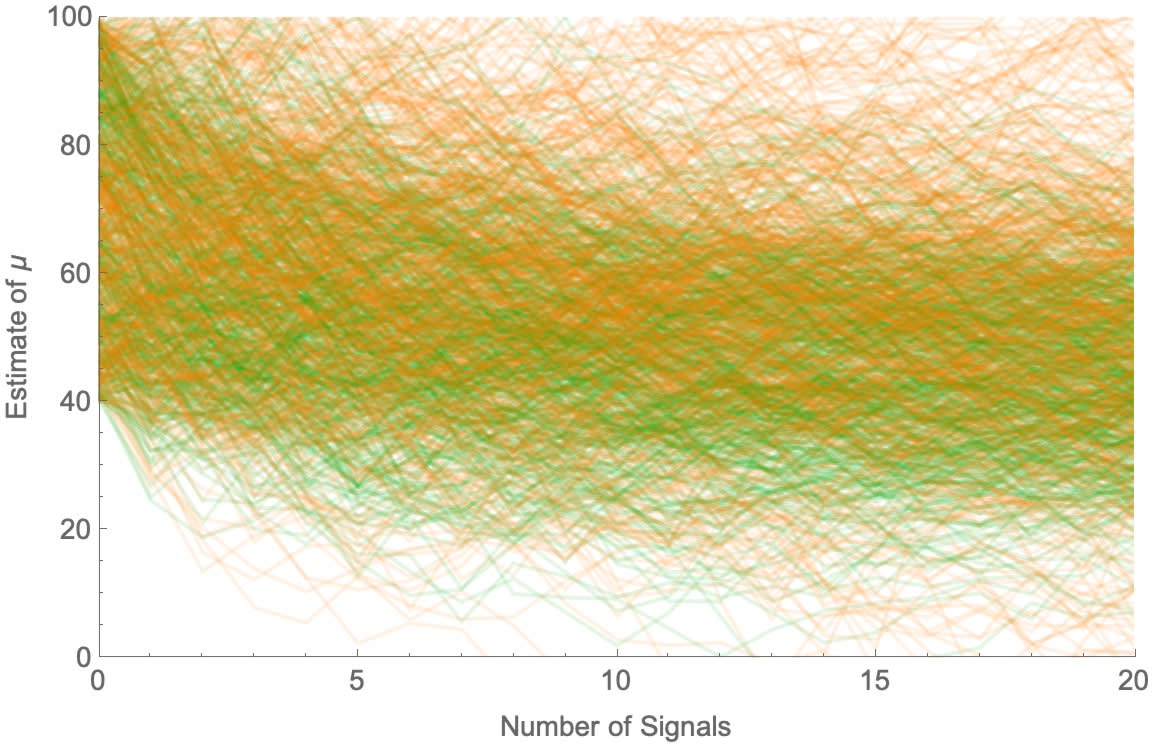

For example, suppose again that µ=40 and everyone starts out on one side, with prior estimates ranging from 40–100. They again vary in how flexible they are (as above), but now their opinions are also subject to random noise (proportional to their degree of uncertainty). The result:

Again, less-flexible (orange) agents spread out more. Despite everyone starting out on one side of the truth—the best-case-scenario for rigidity-of-the-right—we again find rigidity-of-the-extremes:

Noise—or other non-evidential influences on beliefs—makes rigidity-of-the-extremes hard to avoid.
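Adding noise takes one more line in the simulation loop. The post only says the noise is proportional to uncertainty, so this sketch assumes that every round each agent's estimate is jittered by a normal draw whose standard deviation is `noise_scale` times the agent's current posterior standard deviation (`noise_scale = 0.5` is an arbitrary choice). Rigid agents keep their wide priors, so they keep receiving larger shocks. The summary reports the spread of final estimates for each color and the below/above-µ regression slopes, so you can check whether the extremes pattern appears under these made-up settings rather than taking it as a guaranteed output.

```python
from __future__ import annotations

import random
from statistics import mean, pstdev


def simulate_noisy(n_agents: int = 1000, n_rounds: int = 100, mu: float = 40.0,
                   prior_low: float = 40.0, prior_high: float = 100.0,
                   prior_var: float = 25.0, noise_var: float = 400.0,
                   noise_scale: float = 0.5, seed: int = 0) -> tuple[list[float], list[float]]:
    """One-sided priors plus belief noise whose SD is noise_scale * posterior SD."""
    rng = random.Random(seed)
    means = [rng.uniform(prior_low, prior_high) for _ in range(n_agents)]
    flex = [rng.random() for _ in range(n_agents)]
    variances = [prior_var] * n_agents
    for _ in range(n_rounds):
        x = rng.gauss(mu, noise_var ** 0.5)  # shared evidence
        for i in range(n_agents):
            if rng.random() < flex[i]:  # condition with probability f_i
                precision = 1.0 / variances[i] + 1.0 / noise_var
                means[i] = (means[i] / variances[i] + x / noise_var) / precision
                variances[i] = 1.0 / precision
            # Non-evidential noise, larger for agents who are still uncertain.
            means[i] += rng.gauss(0.0, noise_scale * variances[i] ** 0.5)
    return means, flex


def ols_slope(xs: list[float], ys: list[float]) -> float:
    """Least-squares slope of ys on xs (needs at least two distinct xs)."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def summarize(finals: list[float], flex: list[float], mu: float = 40.0) -> dict[str, float]:
    green = [e for e, f in zip(finals, flex) if f > 0.5]
    orange = [e for e, f in zip(finals, flex) if f < 0.5]
    below = [(e, f) for e, f in zip(finals, flex) if e < mu]
    above = [(e, f) for e, f in zip(finals, flex) if e > mu]
    return {
        "green_spread": pstdev(green),
        "orange_spread": pstdev(orange),
        "share_below_mu": len(below) / len(finals),
        "slope_below": ols_slope([e for e, _ in below], [f for _, f in below]),
        "slope_above": ols_slope([e for e, _ in above], [f for _, f in above]),
    }


if __name__ == "__main__":
    for key, value in summarize(*simulate_noisy()).items():
        print(f"{key}: {value:+.4f}")
```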

What to make of this?

This doesn’t settle the question. Perhaps further empirical work will support rigidity-of-the-right (or rigidity-of-the-left!). Perhaps—more plausibly—correlations between political ideology and rigidity will vary with the times and political issues.

But still: there’s a very general dynamic—a sort of selection effect—pushing toward rigidity-of-the-extremes, AKA the cognitive flexibility of centrists. So we should expect some version of that hypothesis to come out true.

You might not be a centrist. You might not like centrists. You might even think that centrists are irrational.

Still: on many ways of understanding the fraught notion of ‘bias’, we should expect that—on average—centrists are less biased. Probably.

2 comments


comment by Seth Herd · 2024-04-07T21:28:42.364Z

In your model of why to assume centrists are less biased: aren't you assuming that the truth tends to be in the center of the spectrum? If we knew where the truth lay, there would be no point in studying which side is more biased or better rationalists. Right?

reply by Kevin Dorst · 2024-04-09T07:47:08.343Z

I think it depends on what we mean by assuming the truth is in the center of the spectrum. In the model at the end, we assume the truth is at the extreme left of the initial distribution—i.e. µ=40, while everyone's estimates are higher than 40. Even then, we end up with a spread where those who end up in the middle (ish—not exactly the middle) are both more accurate and less biased.

What we do need is that wherever the truth is, people will end up being on either side of it. Obviously in some cases that won't hold. But in many cases it will—it's basically inevitable if people's estimates are subject to noise and people's priors aren't in the completely wrong region of logical space.