Domain Theory and the Prisoner's Dilemma: FairBot

post by Gurkenglas · 2021-05-07T07:33:41.784Z · LW · GW · 5 commentsContents

5 comments

Wishes it were crossposted from the AI Alignment Forum. Contains more technical jargon than usual.

Recall Robust Cooperation in the Prisoner's Dilemma and a hint of domain theory [LW · GW].

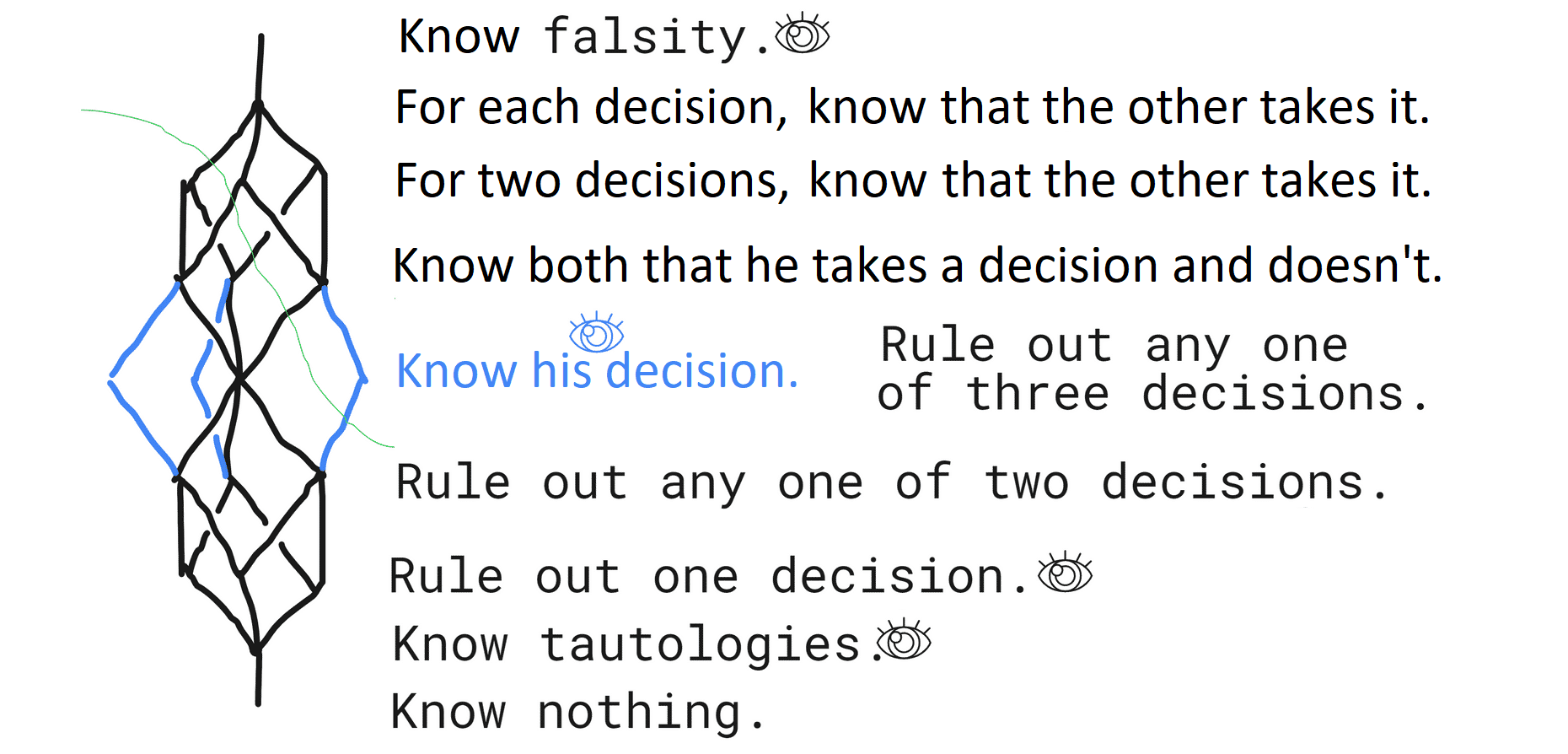

In the Prisoner's Dilemma, players have the opportunity to harm the opponent for minor gain. To aid decision, players may be granted some knowledge about the matchup. In decreasing order of fidelity:

- Both player's source codes/both player's behavior in all possible environments. No strategy can be better against every opponent than any distinguishable strategy, since some opponent will punish one for not being the other.

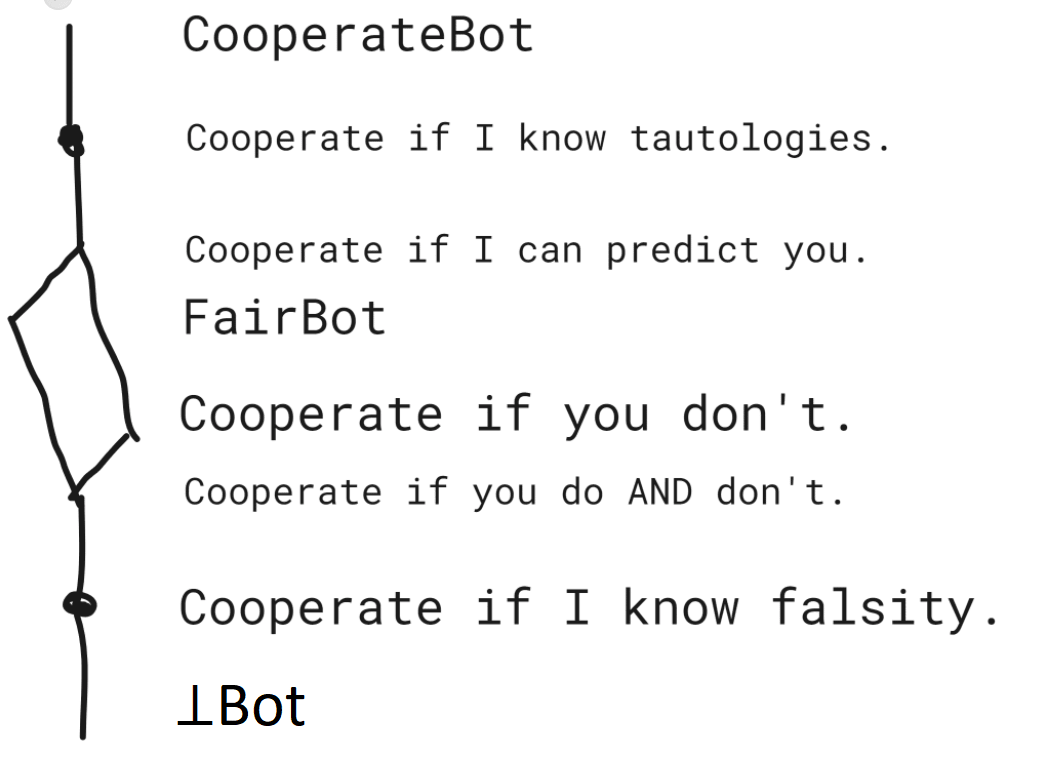

- Both player's behavior in the current matchup. PrudentBot, who cooperates iff he knows both players will act alike[1], operates here.

- The opponent's behavior in the current matchup. FairBot, who cooperates iff he knows the opponent will cooperate, operates here.

- Nothing. Classical game theory says that, of the few possible strategies, defection is optimal.

This post focuses on the third case.

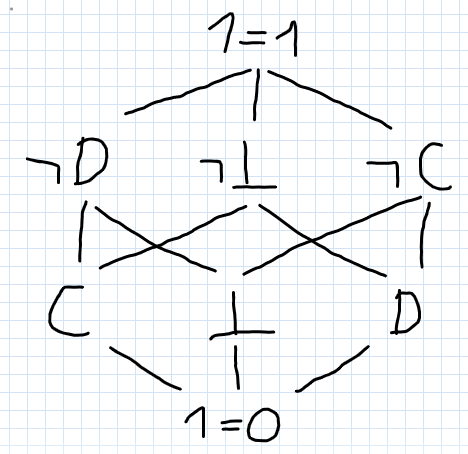

In domain theory, we partially order our sets by "information content". Suppose every player can cooperate, defect, or fail to have decided. The latter case helps to model algorithmic nontermination. Both cooperation and defection would have more information content than indecision.

Decision := {Cooperate, Defect, ⊥}

Query := {"other cooperates", "both act alike", ...}

Answer := {"I know this.", "I don't know this."}

Knowledge := Query -> Answer

Player := Knowledge -> Decision

Answer is ordered by knowing something having more content than not knowing it. I will order Query by implication, because then, the domain-theoretic custom of considering only monotonic functions means that Knowledge must respect implication!

Shrinking Decision to {Cooperate, ⊥} restricts players to only ever start cooperating as their knowledge increases, never stop. Of course, someone who isn't going to cooperate might still eventually figure this out. That might be equivalent.

Across these 24 encounters a player might have, of the 8 players the only ones whose strategies aren't dominated are FairBot and ⊥Bot. After deleting the dominated players, FairBot dominates ⊥Bot. So in this setting where one can only know the opponent's decision and knowledge can only increase cooperation, FairBot is optimal.

This seems like a good time to pause for feedback. And can you recommend software for doing this reasoning at scale?

- ^

No, wait. Then it would cooperate with CooperateBot. Hmm.

5 comments

Comments sorted by top scores.

comment by Gurkenglas · 2021-05-09T16:46:30.487Z · LW(p) · GW(p)

In intuitionistic logic, "Cooperate iff either of C or ⊥ is provable." is equivalent to "Cooperate iff (C or ⊥) is provable.". So should we only consider players of form "Cooperate iff _ is provable.", where _ is some intuitionistic formula? Well...

↑ comment by Vladimir_Nesov · 2021-05-09T19:37:22.467Z · LW(p) · GW(p)

Decisions should be taken about beliefs (as posets), not actions (as discrete sets). With the environment modeled by monotone maps, solutions (as fixpoints) can only be enacted for beliefs that are modeled as themselves, not for things that are modeled as something other than themselves (powersets for events with small finite sets of possible states, etc.). Also, only things that shall be enacted should be modeled as themselves, or else solutions won't be enacted into correctness.

This way, actions may depend on beliefs in an arbitrary way, the same as events in the environment can depend on actions in an arbitrary way, so actions play no special role in the decision making, only enacted beliefs do. For example the states of belief (other than ⊥) that lead to Cooperate don't in principle have to be upward closed. Not sure what's going on with that, there is no choosing between solutions in this setting, so it's not a great fit.

Replies from: Gurkenglas↑ comment by Gurkenglas · 2021-05-09T22:34:35.925Z · LW(p) · GW(p)

The belief state diagram is upward closed because I included the inconsistent belief states. We could say that a one-query player "decides to defect" if his query is proven false. Then he will only decide on both decisions when his beliefs are inconsistent. Alternatively we could have a query for each primitive decision, inducing a monotone map from P({C,D}) to queries; or we could identify players with these monotone maps.

I didn't follow the bit about being modeled as oneself. Every definition of the belief space gives us a player space, yes? And once we specify some beliefs we have a tournament to examine, an interesting one if we happen to pipe the player's outputs into their inputs through some proof engines. Define enacted.

Replies from: Vladimir_Nesov↑ comment by Vladimir_Nesov · 2021-05-09T23:20:09.235Z · LW(p) · GW(p)

We can model events (such as states of a program) with posets, related with each other by monotone maps. By beliefs I mean such posets or their elements (belief states). A state of an event can be enacted by an agent if the agent can bring it into that state. So if the agent decides on an action, that action can be enacted. But if instead the agent only decides on things like beliefs about actions (powersets of sets of possible actions), these can't be enacted, for example it can't ensure that the action is {C, D} or ⊥, that doesn't make sense. But for the beliefs about future states of agent's program that are themselves states of belief, modeled as themselves, the agent can enact them, and that makes them an excellent target for decision making. I think this is the important takeaway from the modal decisions setting, but the setting lacks the component of choosing between possible solutions according to preference, instead it's manipulating the pseudoenvironment between beliefs and actions to get something useful out of its incomplete decision making machinery.

We could say that a one-query player "decides to defect" if his query is proven false.

My point is that in principle, even for a belief whose set of states is moderately large (which the picture in the first comment of this thread gestures at), the action may be defined to depend on the belief state in an arbitrary way, perhaps switching back and forth between C and D as a belief gets stronger and stronger. That is because the action doesn't play a fundamental role in the decision making, only belief does (in this setting, statements with proofs), but we are not making use of the ability to choose which things have proofs according to preference, so there's this whole thing about carefully choosing how actions depend on beliefs, which doesn't work very well.

Replies from: Gurkenglas↑ comment by Gurkenglas · 2021-05-10T07:09:50.340Z · LW(p) · GW(p)

Yeah, enacting comes in at a higher level of interpretation than is yet considered here. The increasing levels of interpretation here are: Set theory or other math foundations; we consider sets of queries and beliefs and players with functions between them; we add porder and monotonicity; we specify proof engines and their properties like consistency; we define utilities, decision theories, and what makes some players better than others. (Category theory is good at keeping these separate.) I'd start talking about "enacting" when we define a decision theory like "Make the decision such that I can prove the best lower bound on utility.". What do you mean by deciding on a belief state? "Decision" is defined before I establish any causation from decisions to beliefs.

Oh, I thought you meant you didn't see why any two beliefs had an upper bound. My choice to make players monotonic comes from intuition that that's how the math is supposed to look. I'd define Query=P(Decision) as Decision->2 as well but that plainly makes no sense so I'm looking for the true posetty definition of Query, and "logical formulas" looks good so far. Switching back and forth sounds more like you want to do multiple decisions, one after the other. There's also a more grounded case to be made that your policy should become more certain as your knowledge does, do you see it?