Will we ever run out of new jobs?

post by Kevin Kohler (KevinKohler) · 2024-08-19T15:04:03.849Z · LW · GW · 7 comments

This is a link post for https://machinocene.substack.com/p/will-we-ever-run-out-of-new-jobs

Contents

- 1. Automation anxiety is not novel
- 2. We are already technologically unemployed farmers
- 3. Luddite horses
- 4. Fluid intelligence is the key factor
  - Can we always move into more complex tasks?
  - Can we always move into novel tasks?

A lot of the debate on long-term, structural technological unemployment can be summarized in three short statements:

- Concerns about the speed or scope of labor substitution have often been premature or exaggerated in the past.

- Labor substitution has been very positive for humanity so far. As many old tasks have been automated, human labor has moved into many new, previously non-existing tasks.

- The long-term question that decides structural technological unemployment is whether human labor can keep moving into new tasks.

Experts disagree on whether human labor can keep moving to new tasks indefinitely or not. In this blog post I will suggest a clear answer:

- Humans will run out of new tasks to move to when AGI surpasses humans in fluid general intelligence. Fluid general intelligence is the ability to reason, solve novel problems, and think abstractly, independent of acquired knowledge or experience. If and when AGI reaches this, it will be better at learning novel tasks than humans, and the interval between a new task appearing in the economy and its automation falls to zero.

Current AI models still have modest levels of fluid intelligence and there is no consensus timeline on AGI with strong fluid intelligence. Still, even if it may be difficult to agree on specific timelines, this underlines that the idea that we could eventually run out of new jobs to shift to should be taken seriously.

1. Automation anxiety is not novel

- As early as 1948 Norbert Wiener warned that “(...) the first industrial revolution, the revolution of the ‘dark satanic mills’, was the devaluation of the human arm by the competition of machinery. (...) The modern industrial revolution is similarly bound to devalue the human brain, at least in its simpler and more routine decisions. (...) taking the second revolution as accomplished, the average human being of mediocre attainments or less has nothing to sell that it is worth anyone's money to buy.”[1]

- Similarly, the US Congress held hearings on Automation and Technological Change as early as 1955, with some worrying that technology could “produce an unemployment situation, in comparison with which the depression of the thirties will seem a pleasant joke.”

- More recently, the 2013 Oxford study by Carl Benedikt Frey and Michael Osborne, "The Future of Employment," estimated that up to 47% of U.S. jobs were at risk of automation within a decade or two, reigniting fears of widespread unemployment.

The fact that someone has mistakenly “cried wolf” doesn’t mean that wolves don’t exist. However, it is a reminder to keep a healthy dose of scepticism and pursue strategies that are robust across scenarios and timelines.

2. We are already technologically unemployed farmers

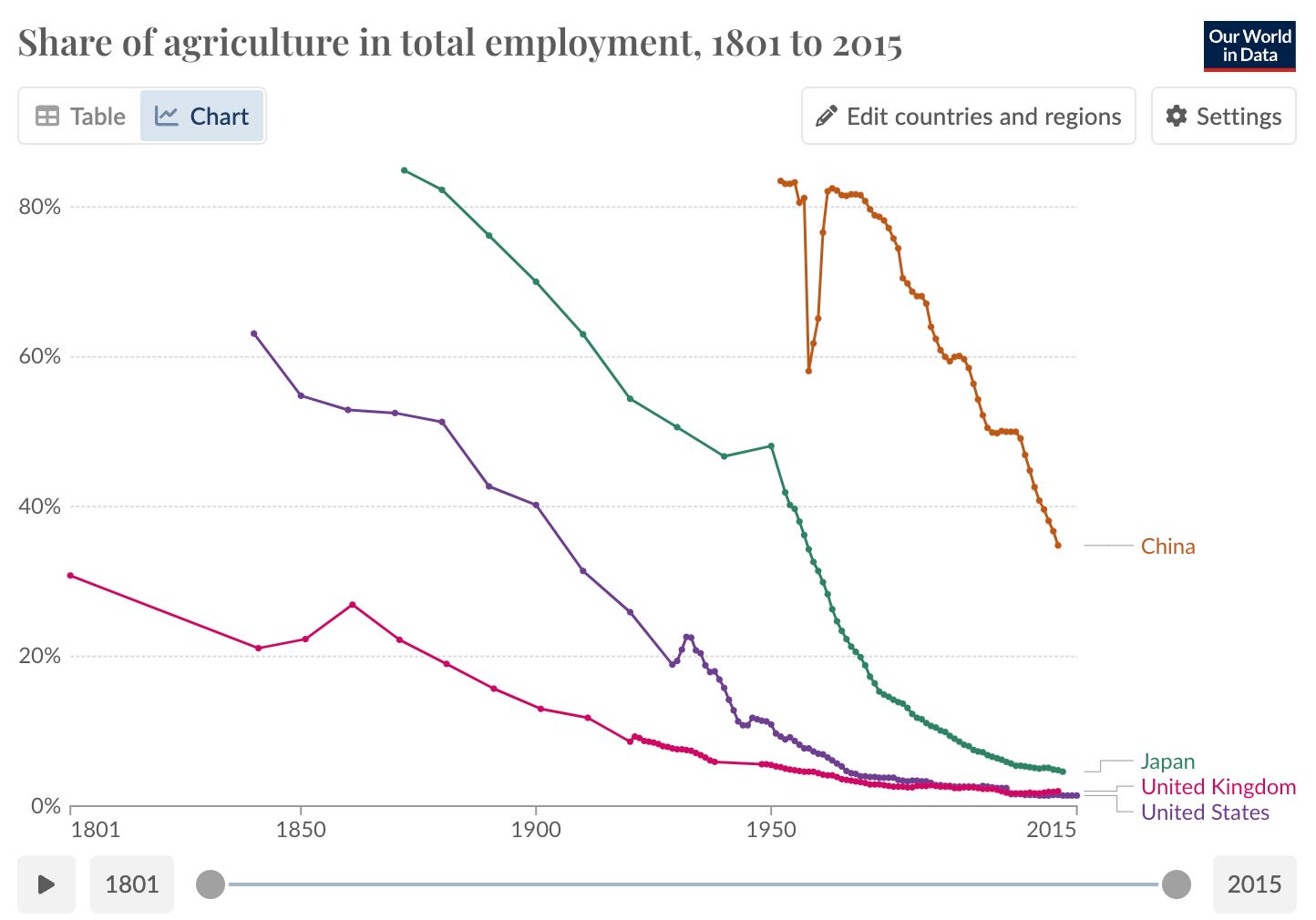

In pre-industrial societies, the overwhelming majority of people worked as subsistence farmers. However, over time, the labor intensity of farming decreased and crop yields increased thanks to a long list of technological innovations from the plow, to selective breeding, to crop rotation, to seed drills, to threshing machines, to tractors, to fertilizers, to pesticides, to water sprinkler systems, to genetically modified crops.

This transition did not lead to permanent mass unemployment for 90% of the population; instead, it freed up labor to pursue new opportunities in other sectors. The lump of labor fallacy is the incorrect belief that there is a fixed amount of work or jobs in an economy, so if machines take some of these jobs, it must reduce the number of jobs available to humans. In reality we have automated 90% of the existing jobs, but transitioned to more, new, and better jobs.

Such technology-induced shifts happened more than once. For example, Smith is one of the most common occupational surnames in the US, derived from the blacksmith profession. My surname “Kohler” derives from the German word “Köhler,” which means “charcoal burner.” This occupation involved the production of charcoal from wood. Charcoal burning was a significant occupation in medieval Europe, providing fuel for blacksmiths, metalworking, and other industrial processes. However, with the Industrial Revolution, charcoal was replaced by coal from mines in most applications.

I’m rather glad to be a technologically unemployed charcoal burner. So, if history is our guide, even if we automate another 90% of current jobs, we will eventually find more and better jobs somewhere else, in novel tasks that we can’t even imagine yet.

3. Luddite horses

Some economists and intellectuals, such as Wassily Leontief, Gregory Clark[2], Nick Bostrom[3], CGP Grey, and Calum Chace[4], have argued that we should not overgeneralize from the historical evidence that automation has led to more and better jobs, and that there is some future level and/or speed of automation for which this will no longer hold. The classic example cited by this camp is the horse. Horses used to play a key role in Earth’s economy and the “horse economy” grew well into the 20th century. However, horses were eventually pushed out of the economy by the cheaper “machine muscles” of internal combustion engines. Here is how Max Tegmark[5] describes it:

“Imagine two horses looking at an early automobile in the year 1900 and pondering their future. ‘I’m worried about technological unemployment.’ ‘Neigh, neigh, don’t be a Luddite: our ancestors said the same thing when steam engines took our industry jobs and trains took our jobs pulling stage coaches. But we have more jobs than ever today, and they’re better too: I’d much rather pull a light carriage through town than spend all day walking in circles to power a stupid mine-shaft pump.’ ‘But what if this internal combustion engine really takes off?’ ‘I’m sure there’ll be new jobs for horses that we haven’t yet imagined. That’s what’s always happened before, like with the invention of the wheel and the plow.’

Alas, those not-yet-imagined new jobs for horses never arrived. No-longer-needed horses were slaughtered and not replaced, causing the U.S. equine population to collapse from about 26 million in 1915 to about 3 million in 1960. As mechanical muscles made horses redundant, will mechanical minds do the same to humans?”

4. Fluid intelligence is the key factor

So, are we destined to eventually follow the path of the horse in the economy? Daron Acemoglu & Pascal Restrepo (2018) argue that “the difference between human labor and horses is that humans have a comparative advantage in new and more complex tasks. Horses did not. If this comparative advantage is significant and the creation of new tasks continues, employment and the labor share can remain stable in the long run even in the face of rapid automation.” In other words, our high general intelligence allows us to adapt and shift to new tasks as the automation of more established tasks rolls forward.

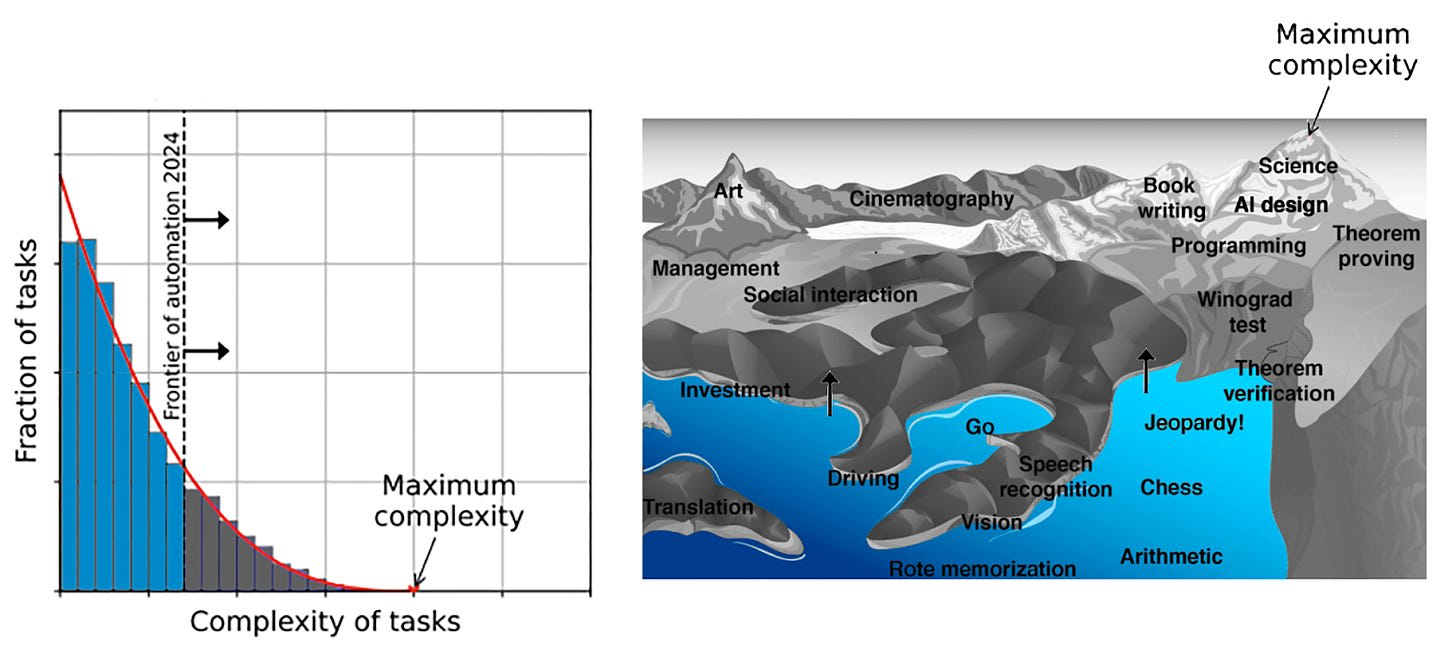

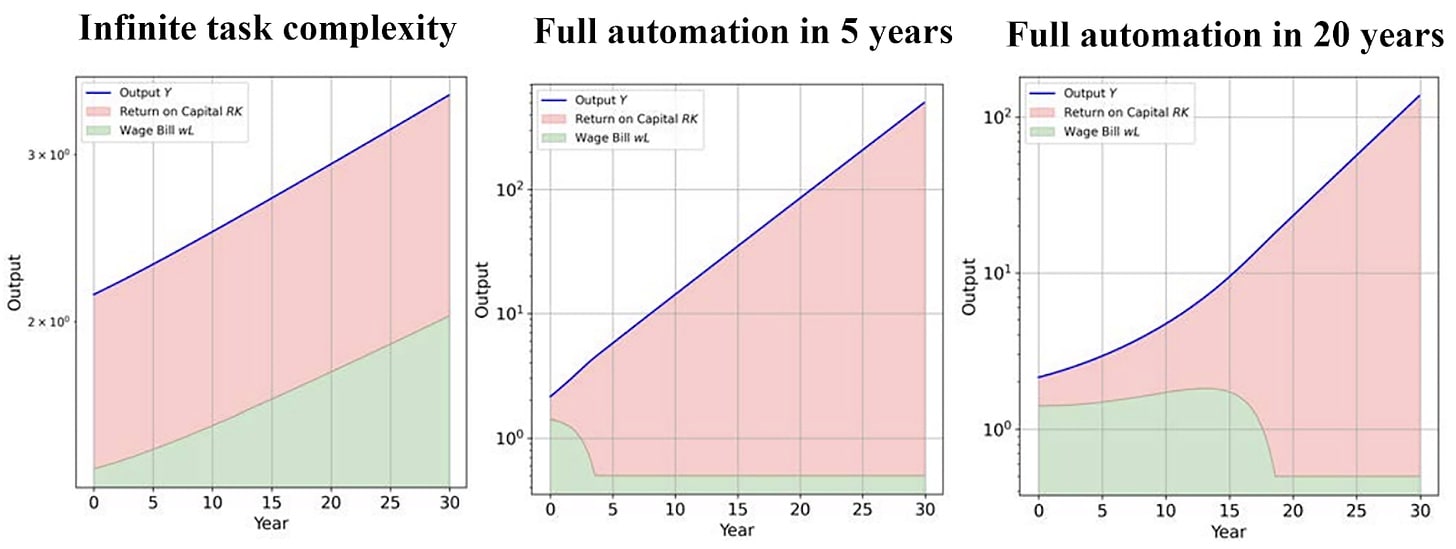

The economists Anton Korinek & Donghyun Suh (2024) have created a model that specifically considers why humans might run out of new tasks in the face of AGI and what would happen to wages in such a scenario. Their basic approach is to order all possible tasks that could be performed by humans by computational complexity. As digital computation expands, more and more tasks can be automated, moving the automation frontier from left to right. This is essentially a restatement of Moravec’s metaphorical landscape of human competences and automation (see figure). In this metaphor the peaks reflect the most complex human competences, whereas AI automation is represented as a rising tide that continuously moves the shoreline up.

If the complexity of economic tasks performed by humans is bounded (in other words, if there is no infinitely high mountain in Moravec’s landscape of human competences), automation will eventually cover all tasks, leading to complete automation. In the short term, automation increases productivity and boosts wages for non-automated tasks. In the long term, humans run out of tasks at which they can outperform machines and the labor share of income collapses fairly steeply as we approach full automation.
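The bounded-versus-unbounded distinction can be made concrete with a toy sketch in Python. This is not Korinek & Suh's model: the uniform and Pareto complexity distributions, the function names, and every parameter value are assumptions chosen only to keep the arithmetic simple, and wages are not modelled at all.

```python
def automated_share_bounded(frontier: float, max_complexity: float) -> float:
    """Share of tasks automated when task complexity is spread uniformly
    on [0, max_complexity] (an assumed, bounded distribution)."""
    if max_complexity <= 0:
        raise ValueError("max_complexity must be positive")
    # Every task at or below the frontier counts as automated.
    return min(max(frontier / max_complexity, 0.0), 1.0)


def automated_share_unbounded(
    frontier: float, min_complexity: float, tail_index: float
) -> float:
    """Share of tasks automated when task complexity follows a Pareto
    distribution (an assumed, unbounded distribution with a long tail)."""
    if min_complexity <= 0 or tail_index <= 0:
        raise ValueError("min_complexity and tail_index must be positive")
    if frontier <= min_complexity:
        return 0.0
    # Pareto CDF: 1 - (x_min / x) ** alpha
    return 1.0 - (min_complexity / frontier) ** tail_index


if __name__ == "__main__":
    frontier = 1.0
    for period in range(8):
        bounded = automated_share_bounded(frontier, max_complexity=100.0)
        unbounded = automated_share_unbounded(
            frontier, min_complexity=1.0, tail_index=1.0
        )
        print(
            f"period {period}: frontier={frontier:6.1f} "
            f"bounded={bounded:.3f} unbounded={unbounded:.3f}"
        )
        frontier *= 2.0  # assumed: the automation frontier doubles each period
```

In the bounded case the automated share reaches exactly 1 once the frontier passes the highest peak. In the unbounded case it approaches 1 but never gets there, so some tasks always remain above the waterline.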

It’s useful to have explicit models of the AGI economy and what might happen if we run out of new jobs to move to. Having said that, I would argue that a strict focus on the computational complexity of potential tasks can be misleading. What really decides whether or not humans can keep moving to complex and novel tasks is the comparative advantage of the human brain in those tasks.

Can we always move into more complex tasks?

The complexity of some tasks in disciplines such as futures studies or economics is (de facto) unbounded. However, the maximum complexity that a human brain can represent is bounded. The economically relevant question for such tasks is not whether AI has the computing power to perform these tasks perfectly, but whether AI has better price-performance on them than humans.

For example, both futurists and economists have imperfect prediction records: few predicted the Great Financial Crisis of 2008 or used their insights to make money on financial markets. In a more recent example, in late 2022, 85% of economists polled by the Financial Times and the University of Chicago predicted that the US would have a recession in 2023, which did not happen.

My judgement is that AI will likely be able to eventually outperform humans even on tasks with unbounded complexity and irreducible uncertainty. First, in some domains humans already cannot match the ability of AI to perform complex tasks. No human can filter emails or social media posts based on 10’000-dimensional decision boundaries. Second, the exponential growth of parameters in artificial neural networks means that, given enough training data and compute, AI can represent an exponentially growing amount of complexity, whereas our biological neural networks have fairly fixed upper limits.

Can we always move into novel tasks?

Current AI systems don’t perform well without lots of training data. This is true both for existing tasks with scarce data (e.g. operating on rare diseases) as well as new tasks that are introduced into the economy. If the limitation of AI requiring substantial initial amounts of human data to imitate persists, it would plausibly allow humans to keep moving to novel frontier tasks and create data on them before AI can take over.

Whether or not it persists comes back to the distinction between fluid and crystallized general intelligence. Crystallized intelligence is the ability to use accumulated skills, knowledge, and experience. Fluid intelligence is the capacity to reason and solve unfamiliar problems, independent of knowledge from the past. It involves the ability to:

- Think logically and solve problems in novel situations.

- Identify patterns and relationships among stimuli.

- Learn new things quickly and adapt to new situations.

Current large language models have a lot of crystallized intelligence, but they are weak at logical reasoning and fluid intelligence. People can reasonably disagree on how much fluid intelligence future AGIs will have due to algorithmic innovations or emergence. However, the idea that humans will keep moving from automated tasks to novel tasks is incompatible with the existence of AI with human-level or above-human-level fluid general intelligence.

If, at some point in the future, AGI can work at or below the cost of human labor and masters the meta-ability to learn novel tasks at least as quickly and as well as humans, we have permanently lost the reskilling race. Then, new tasks can be automated as quickly as they are created.
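The reskilling race can be sketched with a back-of-the-envelope calculation. The Python snippet below is a toy illustration only. It assumes that new tasks appear at a constant rate and that each one is automated a fixed number of years after it appears. The function name and all numbers are hypothetical.

```python
def human_task_count(creation_rate: float, automation_lag_years: float) -> float:
    """Steady-state number of tasks still performed by humans.

    Assumes tasks enter the economy at `creation_rate` per year and each is
    automated exactly `automation_lag_years` after it appears, so the stock
    of not-yet-automated tasks is simply rate * lag.
    """
    if creation_rate < 0 or automation_lag_years < 0:
        raise ValueError("rate and lag must be non-negative")
    return creation_rate * automation_lag_years


if __name__ == "__main__":
    # Hypothetical rate: 100 new tasks per year, with a shrinking automation lag.
    for lag in (20.0, 5.0, 1.0, 0.0):
        remaining = human_task_count(creation_rate=100.0, automation_lag_years=lag)
        print(f"lag={lag:4.1f} years -> {remaining:6.0f} tasks left for humans")
```

However fast new tasks are created, the stock of tasks left to humans shrinks in proportion to the lag and vanishes when the lag reaches zero.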

[1] Norbert Wiener. (1948). Cybernetics: Or Control and Communication in the Animal and the Machine. Technology Press. pp. 37-38

[2] Gregory Clark. (2007). A Farewell to Alms. p. 286

[3] Nick Bostrom. (2014). Superintelligence. p. 196

[4] Calum Chace. (2016). The Economic Singularity. p. 189

[5] Max Tegmark. (2017). Life 3.0. pp. 125-126

7 comments


comment by Seth Herd · 2024-09-06T04:24:09.386Z · LW(p) · GW(p)

Thoroughly yes. And this is curiously something that most economists are missing: at some point, there will be no comparative advantage for any human at anything.

I think you may be preaching to the choir on this forum, so a more direct approach might be more effective here.

Replies from: KevinKohler, Radford Neal, sharmake-farah

↑ comment by Kevin Kohler (KevinKohler) · 2024-09-06T08:55:27.802Z · LW(p) · GW(p)

Yes, I think you're right. For context: I am writing a general-audience book, so I need to close some inferential steps before getting to the more "juicy" stuff, but I agree that on LW I could probably straight up post stuff like "A solar system commons trust is superior to the Outer Space Treaty and could help to fund a global UBI".

Replies from: Seth Herd

↑ comment by Radford Neal · 2024-09-06T18:27:31.856Z · LW(p) · GW(p)

I think you don't understand the concept of "comparative advantage".

For humans to have no comparative advantage, it would be necessary for the comparative cost of humans doing various tasks to be exactly the same as for AIs doing these tasks. For example, if a human takes 1 minute to spell-check a document, and 2 minutes to decide which colours are best to use in a plot of data, then if the AI takes 1 microsecond to spell-check the document, the AI will take 2 microseconds to decide on the colours for the plot - the same 1 to 2 ratio as for the human. (I'm using time as a surrogate for cost here, but that's just for simplicity.)

There's no reason to think that the comparative costs of different tasks will be exactly the same for humans and AI, so standard economic theory says that trade would be profitable.

The real reasons to think that AIs might replace humans for every task are that (1) the profit to humans from these trades might be less than required to sustain life, and (2) the absolute advantage of the AIs over humans may be so large that transaction costs swamp any gains from trade (which therefore doesn't happen).
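The ratio argument in this comment can be checked with a few lines of Python. This is a minimal sketch using time as a stand-in for cost, as the comment does. The function names are made up for illustration, and the 3-microsecond and 1.5-microsecond scenarios are hypothetical additions rather than figures from the comment.

```python
import math


def opportunity_cost(spellcheck_time: float, colour_time: float) -> float:
    """Spell-checks forgone per colour decision (time used as a proxy for cost)."""
    return colour_time / spellcheck_time


def comparative_advantage_in_colours(
    human: tuple[float, float], ai: tuple[float, float]
) -> str:
    """Return who gives up fewer spell-checks per colour decision.

    Each argument is (spellcheck_time, colour_time) in the same units.
    """
    human_cost = opportunity_cost(*human)
    ai_cost = opportunity_cost(*ai)
    if math.isclose(human_cost, ai_cost, rel_tol=1e-9):
        return "none"  # identical ratios: no gains from trade
    return "human" if human_cost < ai_cost else "ai"


if __name__ == "__main__":
    human = (60.0, 120.0)  # seconds: 1 minute and 2 minutes
    # Same 1:2 ratio as the human, so nobody has a comparative advantage.
    print(comparative_advantage_in_colours(human, (1e-6, 2e-6)))
    # Hypothetical 1:3 ratio: the AI is faster at both tasks in absolute terms,
    # yet the human holds the comparative advantage in choosing colours.
    print(comparative_advantage_in_colours(human, (1e-6, 3e-6)))
```

Only the ratios matter here: the AI's absolute speed never enters the comparison, which is why an absolute advantage in everything does not by itself remove comparative advantage.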

Replies from: Seth Herd, cfoster0

↑ comment by Seth Herd · 2024-09-07T21:29:04.283Z · LW(p) · GW(p)

You're correct, I was using the term wrong. I'll use it correctly in the future.

Your (1) was what I meant to imply. Our wages would fall so far behind ever-advancing AIs that we wouldn't be able to pay for our own oxygen or space.

This is in the odd scenario where AGIs respect property rights but not human rights. It's the capitalist dystopia. It seems like a default now, but I'd expect some enterprising AGIs to go to war rather than respect property rights at some point if they're not aligned to human laws or under human control.

There's an additional important factor in that the concept of comparative advantage is only really relevant in a slowly-adapting pool of labor. AGIs can make more A(G)Is to do more work for free by copying code, limited only by compute hardware. That's expensive now but will become dramatically less so with both hardware and algorithmic progress, once human-level AGI has been recursively self-improving for even a little while.

So again, I think economists' models of AI economic activity are wildly inaccurate, since they don't really consider exponential improvements in AGI, let alone rapid RSI.

↑ comment by Noosphere89 (sharmake-farah) · 2024-09-06T14:25:13.592Z · LW(p) · GW(p)

I agree with you here, and I think one of the more important implications is that whether AI is good or not in the long term is not about competition or power balances, but rather about benevolence/alignment to humans.

I think you made this point before, and if so I basically agree with it.