Conjecture internal survey: AGI timelines and probability of human extinction from advanced AI

post by Maris Sala (maris-sala) · 2023-05-22T14:31:59.139Z · LW · GW · 5 comments

This is a link post for https://www.conjecture.dev/timelines-and-pdoom/

Contents

- Section 1. Probability of human extinction from AI
  - Setup and limitations
  - Responses
- Section 2. When will we have AGI?
  - Setup and limitations
  - Responses
- 5 comments

We put together a survey to study Conjecture employees' opinions on AGI timelines and the probability of human extinction from AI. The questions were based on previous public surveys and prediction markets, to ensure that the results are comparable with people’s opinions outside of Conjecture.

The survey was conducted in April 2023. There were 23 unique responses from people across teams.

Section 1. Probability of human extinction from AI

Setup and limitations

The specific questions the survey asked were:

- What probability do you put on human inability to control future advanced A.I. systems causing human extinction or similarly permanent and severe disempowerment of the human species?

- What probability do you put on A.I. systems causing human extinction or similarly permanent and severe disempowerment of the human species in general (not just because of inability to control, but also stuff like people intentionally using AI systems in harmful ways)?

The difference between the two questions is that the first focuses on risk from misalignment, whereas the second captures risk from misalignment and misuse.

The main caveats of these questions are the following:

- The questions were not explicitly time bound. I'd expect differences in people’s estimates of risk of extinction this century, in the next 1000 years, and anytime in the future. The longer the timeframe we consider, the higher the values would be. I suspect employees were considering extinction risk roughly within this century when answering.

- The risk covered by the first question is a subset of the risk covered by the second, so a respondent's first answer should not exceed their second. One employee gave a higher probability for the first question than the second; this was probably a misinterpretation.

- The questions factor in interventions, such as how Conjecture's and others’ safety work will impact extinction risk. We expect the numbers would be higher if respondents had factored out their own or others’ safety work.
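The subset caveat above lends itself to a mechanical check: because misalignment risk is contained in overall AI risk, a respondent's answer to the first question should never exceed their answer to the second. Below is a minimal sketch of such a check, assuming responses are stored as pairs of probabilities with `None` for a skipped question. The function name, the data layout, and the example values are all hypothetical and are not taken from the actual survey.

```python
from typing import List, Optional, Tuple

# Each pair is (p_misalignment, p_any_ai_cause); None marks a skipped question.
# This layout is an assumption made for illustration only.
Response = Tuple[Optional[float], Optional[float]]


def inconsistent_indices(responses: List[Response]) -> List[int]:
    """Return indices of respondents whose first answer exceeds their second."""
    flagged = []
    for i, (p_misalignment, p_any) in enumerate(responses):
        if p_misalignment is None or p_any is None:
            continue  # cannot compare when one question was skipped
        if p_misalignment > p_any:
            flagged.append(i)
    return flagged


# Made-up illustrative values, NOT the actual survey responses.
example = [(0.7, 0.8), (0.6, 0.5), (None, 0.9), (0.3, 0.3)]
print(inconsistent_indices(example))  # [1]
```

Flagged responses could then be followed up individually instead of being silently included in the averages.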

Responses

Out of the 23 respondents, one rejected the premise, and two people did not respond to one of the two questions but answered the other one. The main issue respondents raised was answering without a time constraint.

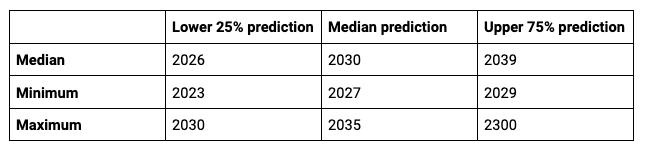

Generally, people at Conjecture estimate the extinction risk from autonomous AI / AI getting out of control to be quite high. The median estimate is 70% and the average estimate is 59%. The plurality estimates the risk to be between 60% and 80%. A few people believe extinction risk from AGI is higher than 80%.

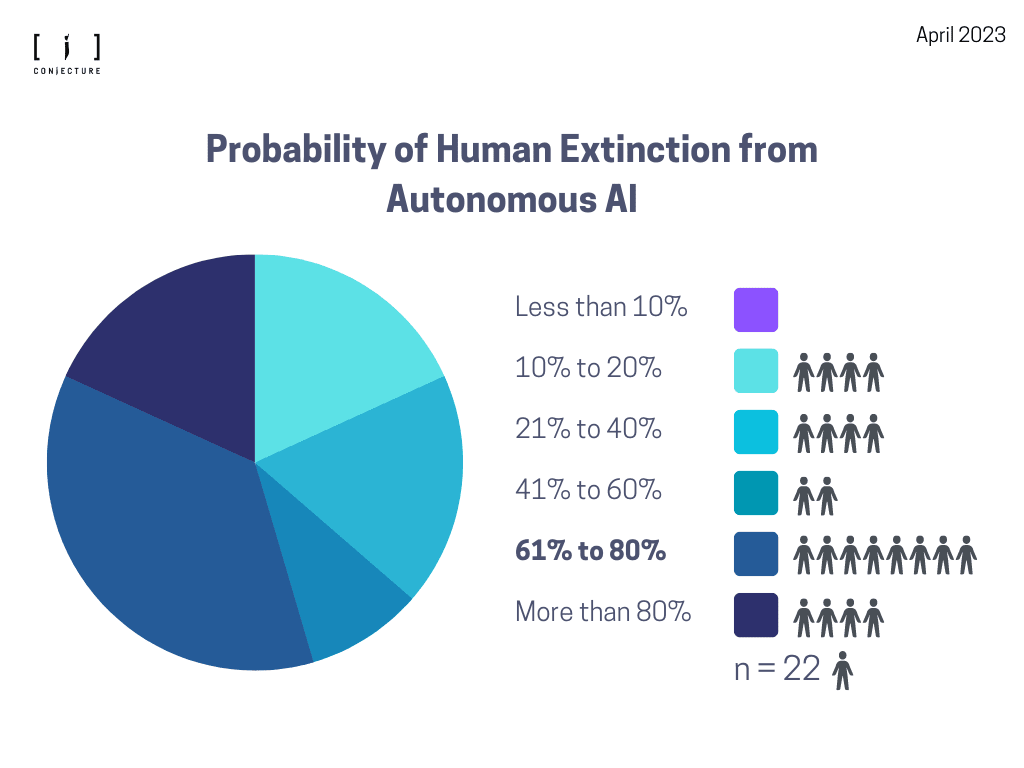

The second question surveyed extinction risk from AI in general, which includes both misalignment and misuse. The median estimate is 80% and the average is 71%. The plurality estimates the risk to be over 80%.
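For readers who want to compute the same kind of summary for their own organisation, the statistics quoted in this section (median, average, and the plurality range) can be derived from raw percentage estimates roughly as follows. This is only a sketch: the 20-point bin width, the function name, and the example values are assumptions for illustration, not the survey's actual binning or data.

```python
from collections import Counter
from statistics import mean, median


def summarize(estimates, bin_width=20):
    """Median, mean, and the most common bin for estimates given in percent."""
    # Bin edges are an assumption: [0, 20), [20, 40), ...,
    # with a value of exactly 100 folded into the top bin.
    n_bins = 100 // bin_width
    bins = Counter(min(int(e // bin_width), n_bins - 1) for e in estimates)
    top_bin, _ = bins.most_common(1)[0]
    return {
        "median": median(estimates),
        "mean": mean(estimates),
        "plurality_bin": (top_bin * bin_width, (top_bin + 1) * bin_width),
    }


# Made-up illustrative values, NOT the actual survey responses.
example = [5, 40, 50, 55, 90]
print(summarize(example))
```

The median and the mean can differ noticeably when a few respondents give very low or very high estimates, which is one reason to report both alongside the plurality range.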

Section 2. When will we have AGI?

Setup and limitations

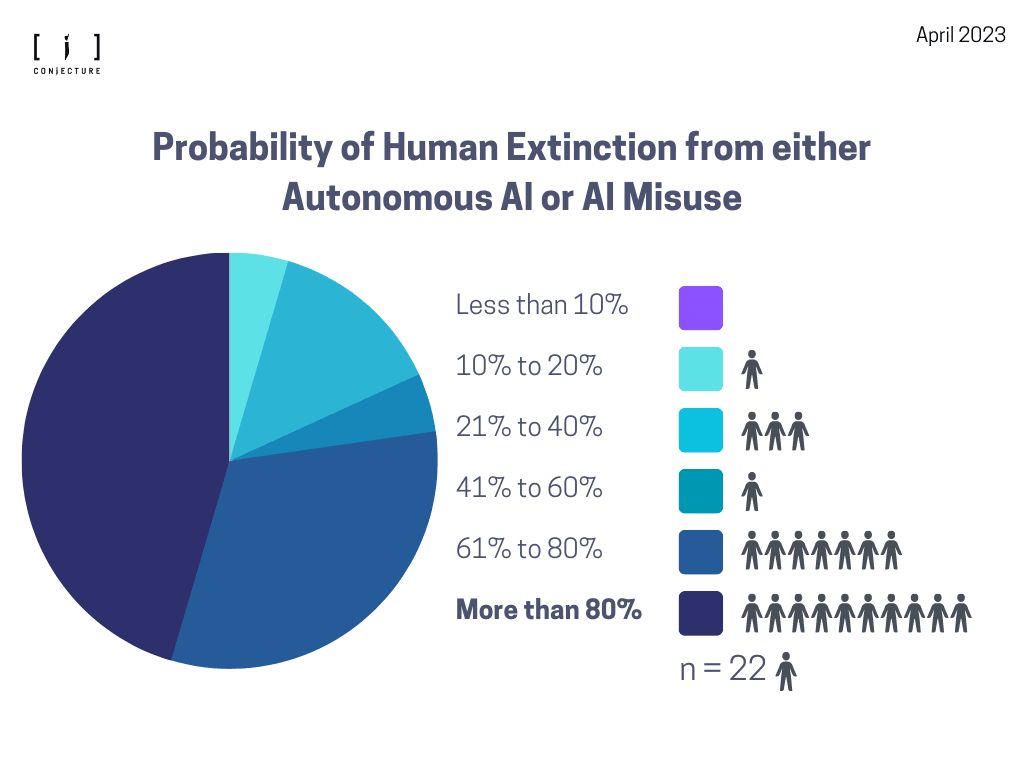

For this question, we asked respondents to predict when AGI will be built, using the specification used on Metaculus (Figure 3). This enables us to compare against the community baseline.

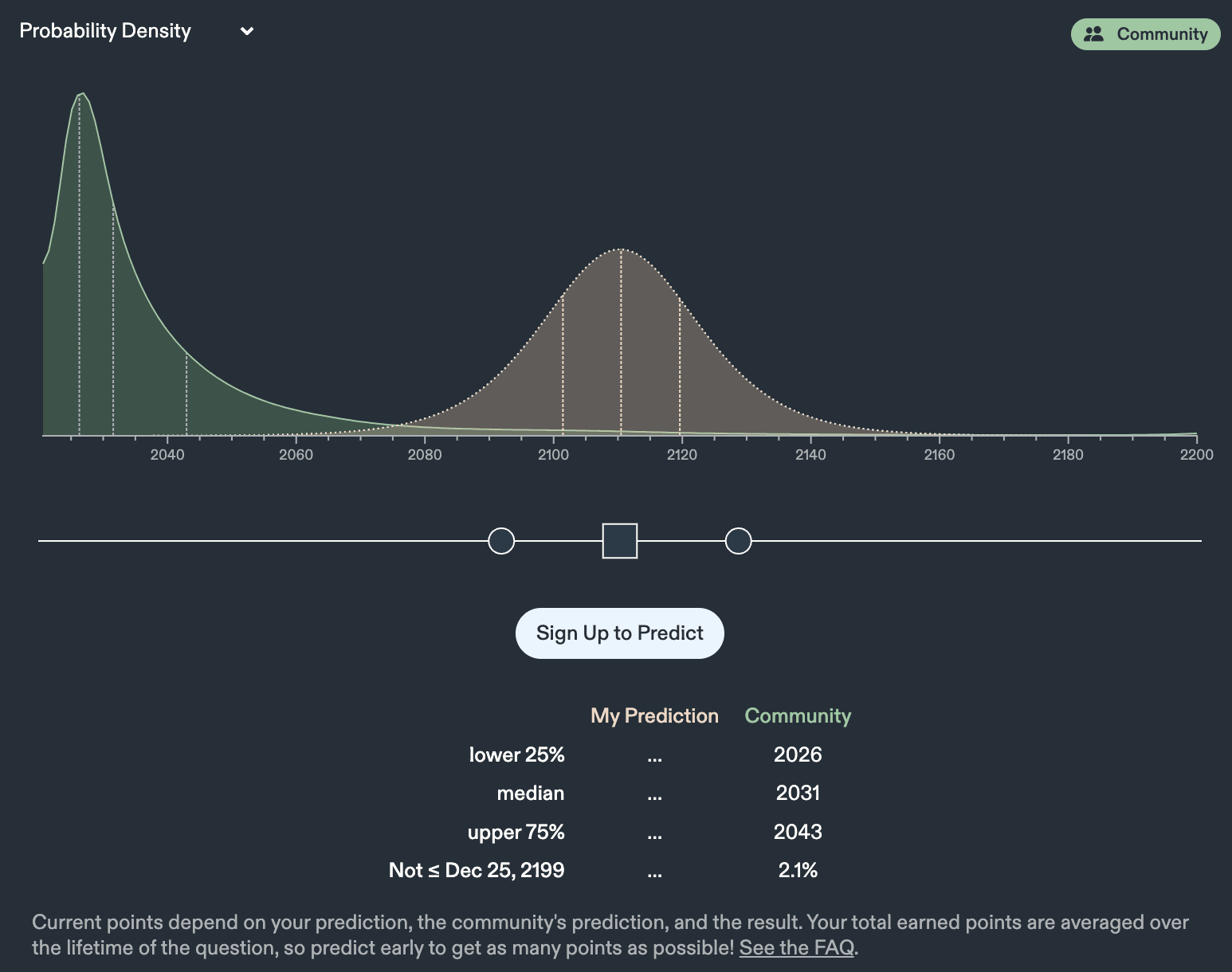

The respondents were instructed to adjust the probability density as shown in Figure 4. This was a deliberate choice, so that respondents could express different levels of confidence towards the lower and upper ends of their prediction.

The main caveats of this question were:

- The responses are probably anchored to the Metaculus community prediction. The community prediction is 2031, i.e. 8-year timelines. Conjecture responses centering around a similar prediction should not come as a surprise.

- The question allows for a prediction that AGI is already here. It’s unclear whether respondents paid close attention to their lower and upper predictions to ensure that both are sensible. They probably focused on making their median prediction accurate, and might not have noticed how that affected the lower and upper bounds.

Responses

Out of the 23 respondents, five did not answer this question, one of whom rejected the premise. This left 18 responses that were counted in the analysis.

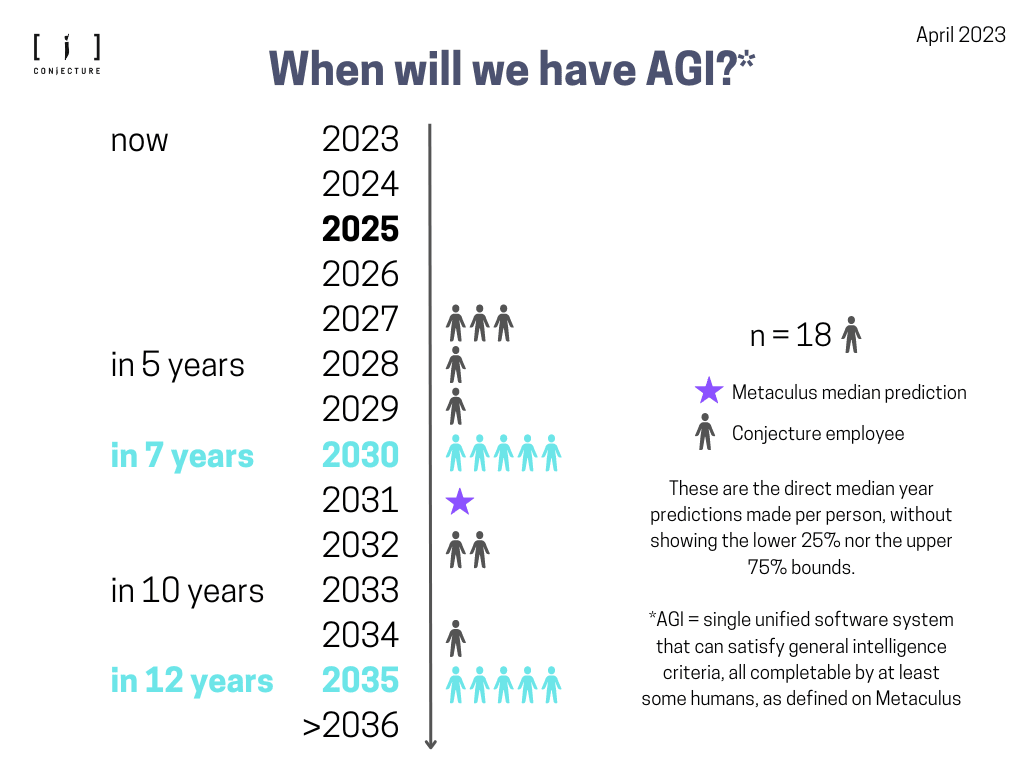

Conjecture employees’ timelines are somewhat bimodal (Figure 5). Most people answered either 2030 (7 years until AGI) or 2035 (12 years until AGI). The Metaculus community prediction at the time of the survey was 2031; respondents were likely anchored by this.

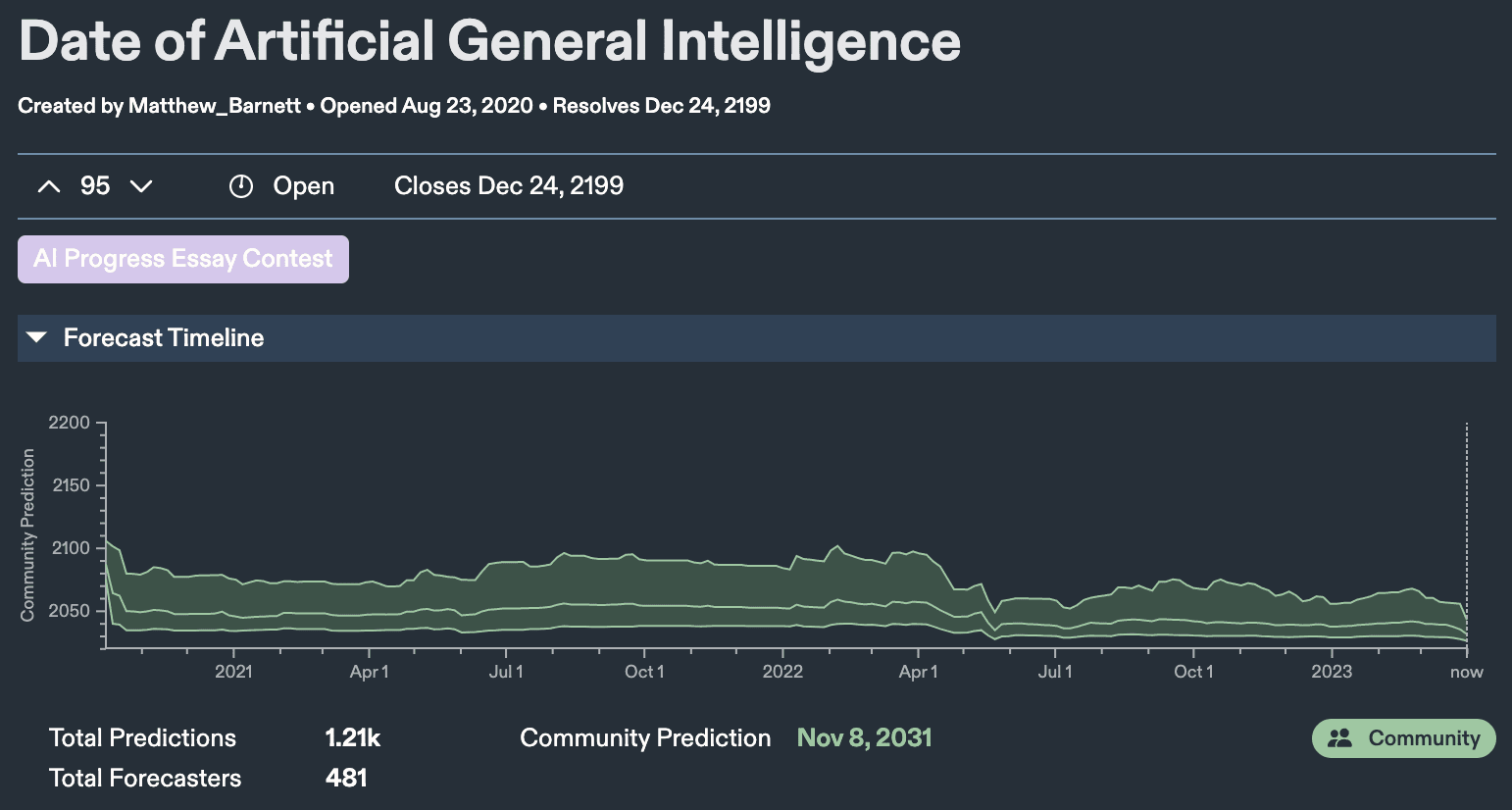

Table 1. Overview of additional statistics for when we will have AGI.

Table 1 shows summary statistics across all respondents for the lower-bound, median, and upper-bound predictions. Notably, the latest lower-bound prediction is the year 2030, which is still earlier than the overall median reported by Metaculus users. The median predictions range from 2027 to 2035. For the upper 75% bound, the median prediction is the year 2039, but the values range all the way from 2029 to 2300, showing that uncertainty is greater at the far end of the distribution than for years closer to 2023.

5 comments

Comments sorted by top scores.

comment by Zach Stein-Perlman · 2023-05-22T19:06:22.477Z · LW(p) · GW(p)

I am very glad you did this because in worlds where survey results look like this, I think it's good and important to make that easily legible to AI safety community outsiders. [Edit: and good and important to set a good example for other labs.]

Replies from: 1a3orn

↑ comment by 1a3orn · 2023-05-23T12:20:25.015Z · LW(p) · GW(p)

I think the survey results probably look a lot like this almost regardless of which world we are in?

Connor is at something like 90% doom, iirc, and explicitly founded Conjecture to do alignment work in a world with very short time lines. If we grant that organizations (probably) attract people with similar doom-levels and timelines to the leader of the organizations, maybe with some regression to the mean, then this is kinda what we expect, regardless of what the world is like. I'd advise against updating on it on the general grounds that updating on filtered evidence is generally a bad idea.

(On the other hand, if someone showed a survey from like, Facebook AI employees, and it had something like these numbers, that seems like much much stronger evidence.)

comment by ElliotJDavies · 2023-05-23T12:01:06.270Z · LW(p) · GW(p)

Thanks for doing this, it's pretty helpful to know where Conjecture employees are in thinking about this. I'd encourage other orgs to do the same.

Charts also look good.

comment by mishka · 2023-05-22T18:41:23.865Z · LW(p) · GW(p)

I wonder if the mode of the distribution on Figure 4 (which is at about 2027 on this April 2023 figure and is continuing to shift left on the Metaculus question page) has a straightforward statistical interpretation. This mode is considerably to the left of the median and tends to be near the "lower 25%" mark.

Is it really the case that 2026-2028 are effectively most popular predictions in some sense, or is it an artefact of how this Metaculus page processes the data?

comment by Joseph Van Name (joseph-van-name) · 2023-05-22T19:46:14.080Z · LW(p) · GW(p)

I think the Conjecture employees may be a little bit biased. Are these people well informed about the limits of our current hardware and the difficulties in increasing the performance of our hardware as the energy efficiency per logic gate operation approaches Landauer's limit? Do these people expect for AGI to come about when it is clear that Moore's law is slowing down and about to stop without reversible computation? Do these people expect for AGI to come about using irreversible hardware or reversible hardware? I want to know their predictions about the rise of reversible computing hardware. Also, does this AGI mean that we will have human level performance in pretty much all tasks at the energy efficiency of the human brain or is it asking about AGI at 10 gigawatts of power?

This survey is only informative if the people being surveyed are well aware of the limitations on computation imposed by Landauer's limit and the possible ways of getting around this limitation (reversible computation).

While I am critical of this survey, I do like how this survey exposes the real dangers of human extinction from advanced AI and how people need to start taking AI safety more seriously. We should fund more AI safety research right now.

P.S. One of the greatest threats (and one that AI may exacerbate) is the threat of pathogens such as viruses arising from bio-safety level 4 laboratories. To mitigate this threat, I have proposed for all bio-safety level 4 labs to publicly post cryptographic timestamps of all of their records. To do this, they need to take very meticulous records in the first place. After those timestamps are posted, we can make sure that those timestamps make their way to public blockchains. But I am still the only person talking about this strategy to mitigate a very serious threat. Yes. We should work on AI safety, but there are other threats that we can mitigate if we just took the bare minimum amount of effort. But the bare minimum is probably too much to ask for.