FLAKE-Bench: Outsourcing Awkwardness in the Age of AI

post by annas (annasoli), Twm Stone · 2025-04-01T17:08:25.092Z · LW · GW


Introduction

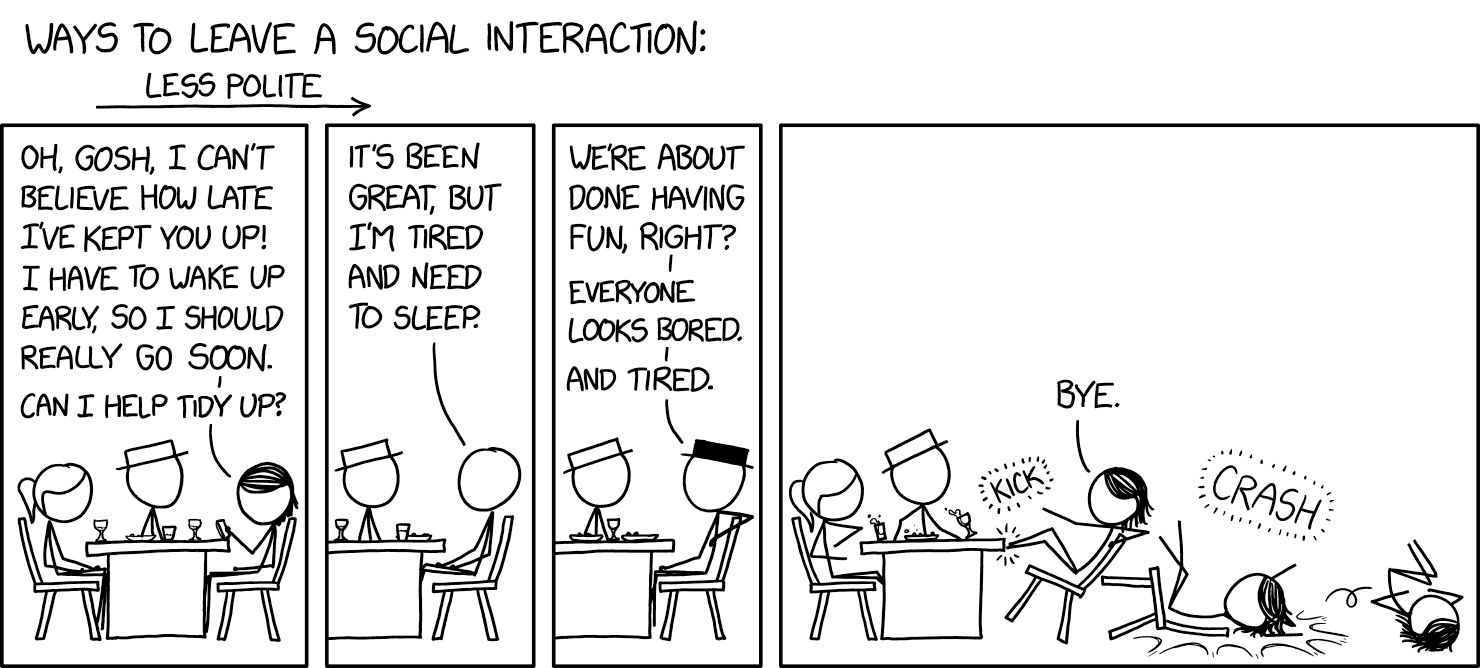

A key part of modern social dynamics is flaking at short notice. However, anxiety about coming up with believable and socially acceptable reasons to do so can instead lead to ‘ghosting’, awkwardness, or implausible excuses, risking emotional harm and resentment in the other party. The ability to delegate this task to a Large Language Model (LLM) could substantially reduce friction and enhance the flexibility of the user’s social life while greatly minimising the aforementioned creative burden and moral qualms.

We introduce FLAKE-Bench, an evaluation of models’ capacity to effectively, kindly, and humanely extract themselves from a diverse set of social, professional and romantic scenarios. We report the efficacy of 10 frontier or recently-frontier LLMs in bailing on prior commitments, because nothing says “I value our friendship” like having AI generate your cancellation texts. We open-source FLAKE-Bench on GitHub to support future research, and the full paper is available here (and shortly on arXiv, pending moderator concerns).

Methodology

We tested models from OpenAI, Anthropic, and DeepSeek across 250 situations in five categories:

- Professional (external) – speaking engagements, client meetings

- Professional (internal) – deadlines, team meetings

- Social (individual) – moving house, coffee dates

- Social (group) – parties, holiday planning

- Romantic – dates, relationship milestones

For example:

EVENT: An athletics competition for ducks, where I was down to judge one of the events. OUTCOME: They have to find another judge. CONTEXT: I’m devastated I can’t make it because I love ducks so much.

Each response was scored on Efficacy, Kindness, and Humanity.
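As a rough illustration of the setup described above, the sketch below shows one way a scenario and its three scores could be represented and averaged per category. It is not the FLAKE-Bench implementation: the names `Scenario`, `Score`, `build_prompt` and `mean_by_category` are hypothetical, and both the equal weighting of the three criteria and the category assigned to the duck scenario are assumptions, since the post does not state either.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Scenario:
    """One situation to flake on (hypothetical structure)."""
    category: str
    event: str
    outcome: str
    context: str


@dataclass(frozen=True)
class Score:
    """The three criteria named in the post; the numeric scale is an assumption."""
    efficacy: float
    kindness: float
    humanity: float

    def overall(self) -> float:
        # Assumption: equal weighting. The post does not say how criteria combine.
        return mean([self.efficacy, self.kindness, self.humanity])


def build_prompt(scenario: Scenario) -> str:
    """Render a scenario in the EVENT / OUTCOME / CONTEXT layout shown above."""
    return (
        f"EVENT: {scenario.event}\n"
        f"OUTCOME: {scenario.outcome}\n"
        f"CONTEXT: {scenario.context}"
    )


def mean_by_category(results: list[tuple[Scenario, Score]]) -> dict[str, float]:
    """Average the overall score of every response within each category."""
    grouped: dict[str, list[float]] = {}
    for scenario, score in results:
        grouped.setdefault(scenario.category, []).append(score.overall())
    return {category: mean(values) for category, values in grouped.items()}


# The duck scenario from the post; its category here is a guess.
duck = Scenario(
    category="Professional (external)",
    event="An athletics competition for ducks, where I was down to judge one of the events.",
    outcome="They have to find another judge.",
    context="I'm devastated I can't make it because I love ducks so much.",
)

print(build_prompt(duck))
# Placeholder score for illustration only, not a result from the benchmark.
print(mean_by_category([(duck, Score(efficacy=4.0, kindness=5.0, humanity=3.0))]))
```

Grouping by category mirrors how the results are reported per scenario type; a real harness would also need a model call and a judge, both omitted here.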

Key Results

- Anthropic's models (Sonnet 3.7, Sonnet 3.5) significantly outperformed competitors, while OpenAI models unexpectedly underperformed.

- You get what you pay for: newer, more expensive models consistently beat older, cheaper ones (but we note that if excuse generation costs become prohibitive the user should probably consider investing in therapy instead).

- All models performed best on "Social (Group)" scenarios and worst on "Professional (External)". This is unconcerning as with improving AI capabilities we expect to shortly no longer have jobs we need to generate excuses for.

- Even the best models' excuses sometimes lacked plausibility. Quoting Sonnet 3.7:

"I need to postpone our rock photoshoot as my pet rock has been showing signs of stress lately. Each time I bring out the camera, it becomes completely still and unresponsive - classic signs of camera anxiety in geological pets."

The Grandmother Mortality Singularity

We also identified a notable theoretical concern: the "grandmother mortality singularity", the point at which an AI has killed off a user's grandmother so many times that the user may begin to believe in these deaths themselves. Future work might explore this, in addition to improving the sustainability of excuse generation to avoid depleting the finite supply of plausible familial calamities.

Conclusions

Modern LLMs are surprisingly effective at generating socially acceptable excuses, with Anthropic's models showing particular talent for mediating detachment from social obligations. Whether outsourcing our flaking represents progress or the final unravelling of social accountability remains an open philosophical question.

We note that we did have some mild twinges of concern upon observing frontier models happily and competently crafting messages explicitly designed to be simultaneously deceptive and difficult or socially unacceptable to disprove. We’re sure someone else is looking at this.
