Will the AGIs be able to run the civilisation?

post by StanislavKrym · 2025-03-28T04:50:07.568Z · LW · GW · 2 comments


Even an AGI "aligned" to a purpose that does not imply humanity's survival, but that does require the AGI itself to achieve difficult feats (such as transforming the entire Solar System into something that computes as many digits of pi as possible), would still need to produce the computing systems and gather the energy needed to run them. As I mentioned in my previous question [LW · GW], all the electrical energy generated in the world cannot sustain more than agents who interact with GPT-3 a hundred times a day while using 3 Wh per interaction. The OpenAI-o3 model apparently requires more than 1 kWh per task.

However, the ARC-AGI task set shows the following trend: as the o3 models trained under the same paradigm raised their success rate on ARC-AGI-1 tasks from 10% to 75%, the cost increased 500 times. The most expensive known model whose success rate on ARC-AGI-1 tasks is 10% is GPT-4.5, indicating that a paradigm shift lowers the cost at most seven times.

UPD: the ARC-AGI task set shows the following trend: as the o3 models trained under the same paradigm raised their success rate on ARC-AGI-1 tasks from 10% (o3-mini (low)) to 75% (o3 (low)), the cost increased 5000 times, while the paradigm shift from o1 (low) to o3-mini (low) lowered the cost 40 times at the expense of the success rate. The shifts from o1 (medium) and o1 (high) to o3-mini (medium) and o3-mini (high) lowered the cost 28 and 20 times respectively without a loss of capabilities.

The fact that the o1-high model somehow solved 3% of ARC-AGI-2 tasks, unlike the o1-pro model (1%), while the o3-low model solved 4% of the tasks, indicates that another paradigm shift and massive scaling are necessary to achieve the ARC-AGI-2 level of performance. It is likely that the cost per human-level task is actually at least another hundred times larger than the one demonstrated by o3.

Next we turn to the world's energy production. It is about 30 thousand TWh per year, meaning that even an AGI-run civilisation with the current energy industry is unlikely to solve more than 30 trillion OpenAI-o3-level tasks per year or, presumably, more than 300 billion ARC-AGI-2-level tasks per year (which is less than 1 billion such tasks per day). On the other hand, the world energy industry in 2022 employed about 67 million people, of whom 32 million worked in the fossil fuel sector, which generates around 80% of energy. The billion ARC-AGI-2-level tasks solvable by the AGI per day is just 1.5 OOM above the aforementioned 32 million people.

The statements above seem to show that the AGI will need further discoveries in neuromorphic computing, and not just high-level machine learning techniques, to be able to take over the world and run it. In addition, neuromorphic computing will likely make it more difficult for the AGI to solve many tasks at once, providing hope that even a civilisation maintained by a misaligned AGI will bear more resemblance to mankind, with its many individual minds, and will be very hard to construct.
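The energy estimate above can be reproduced with a few lines of arithmetic. This is only a sketch under the post's own assumptions: the "more than 1 kWh per task" figure is taken as exactly 1 kWh, the factor of 100 for ARC-AGI-2-level tasks is the guess made earlier in the post, and all of the world's energy is assumed to go to inference. The names below are illustrative.

```python
import math

# Figures taken from the post; treat them as rough inputs, not measurements.
WORLD_ENERGY_TWH_PER_YEAR = 30_000   # "about 30 thousand TWh per year"
KWH_PER_TWH = 1e9                    # unit conversion: 1 TWh = 1e9 kWh
KWH_PER_O3_TASK = 1.0                # "more than 1 kWh per task", taken as 1
ARC2_COST_MULTIPLIER = 100           # the post's guess for human-level tasks
FOSSIL_FUEL_WORKERS = 32e6           # people employed in the fossil fuel sector


def tasks_per_year(energy_twh: float, kwh_per_task: float) -> float:
    """Upper bound on tasks per year if all energy went to running the model."""
    return energy_twh * KWH_PER_TWH / kwh_per_task


def oom_gap(a: float, b: float) -> float:
    """Orders of magnitude by which a exceeds b."""
    return math.log10(a / b)


o3_tasks_per_year = tasks_per_year(WORLD_ENERGY_TWH_PER_YEAR, KWH_PER_O3_TASK)
arc2_tasks_per_year = tasks_per_year(
    WORLD_ENERGY_TWH_PER_YEAR, KWH_PER_O3_TASK * ARC2_COST_MULTIPLIER
)
arc2_tasks_per_day = arc2_tasks_per_year / 365

print(f"o3-level tasks per year:        {o3_tasks_per_year:.2e}")    # 30 trillion
print(f"ARC-AGI-2-level tasks per year: {arc2_tasks_per_year:.2e}")  # 300 billion
print(f"ARC-AGI-2-level tasks per day:  {arc2_tasks_per_day:.2e}")   # under 1 billion
# The post rounds the daily figure up to 1 billion before comparing.
print(f"OOM gap to 32 million workers:  {oom_gap(1e9, FOSSIL_FUEL_WORKERS):.2f}")
```

Using the unrounded daily figure (about 0.8 billion) instead of 1 billion shrinks the gap slightly, to roughly 1.4 OOM.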

UPD: If the statements above are true, then aligning the AGI might be easier than we think, since the takeover itself would be more like the actions that mankind already condemns as colonialism.

UPD2: the AGI is even less likely to become economically feasible without discoveries in neuromorphic computing. Indeed, the average hourly earnings of an American worker are less than 30 dollars. Similarly, at the time of writing there doesn't appear to be a country where the average worker earns more than twice the US figure; attempts to find out whether there exists a job in the USA worth more than $100 an hour are met with metric saturation. The Claude 3.7 Sonnet model measured for METR's law does NOT perform well on tasks that require more than an hour of coding, and it performs poorly on non-coding tasks like Humanity's Last Exam or puzzle solving. METR's law implies that the recently created o3 model, which costs 200 dollars per task, is unlikely to complete a task longer than 2 hours. In other words, o3 is unlikely to be worth using at a job that pays less than $100 per hour (which is nearly every job in the USA!). Average hourly wages are highly unlikely to exceed $1000 in any occupation in the USA or $2000 in any occupation in the world, rendering it impractical to use a hypothetical AGI anywhere.
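The $100-per-hour threshold in UPD2 is a simple break-even calculation, sketched below. It assumes the model always succeeds, that a human would need exactly the stated number of hours for the same task, and that wages are the only human cost; the function names are illustrative.

```python
def break_even_hourly_wage(cost_per_task_usd: float, task_hours: float) -> float:
    """Hourly wage above which one model run is cheaper than the human's time."""
    if task_hours <= 0:
        raise ValueError("task_hours must be positive")
    return cost_per_task_usd / task_hours


def model_is_cheaper(hourly_wage_usd: float, cost_per_task_usd: float,
                     task_hours: float) -> bool:
    """True if paying for one model run costs less than paying the human."""
    return hourly_wage_usd > break_even_hourly_wage(cost_per_task_usd, task_hours)


O3_COST_PER_TASK_USD = 200.0   # figure from the post
MAX_TASK_HOURS = 2.0           # longest task o3 is assumed to complete

threshold = break_even_hourly_wage(O3_COST_PER_TASK_USD, MAX_TASK_HOURS)
print(threshold)                                                       # 100.0
print(model_is_cheaper(30.0, O3_COST_PER_TASK_USD, MAX_TASK_HOURS))    # False
```

Shorter tasks raise the threshold further: at the same $200 per run, a one-hour task would only pay off against a $200-per-hour wage.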

2 comments

Comments sorted by top scores.

comment by Xodarap · 2025-03-28T20:43:07.958Z · LW(p) · GW(p)

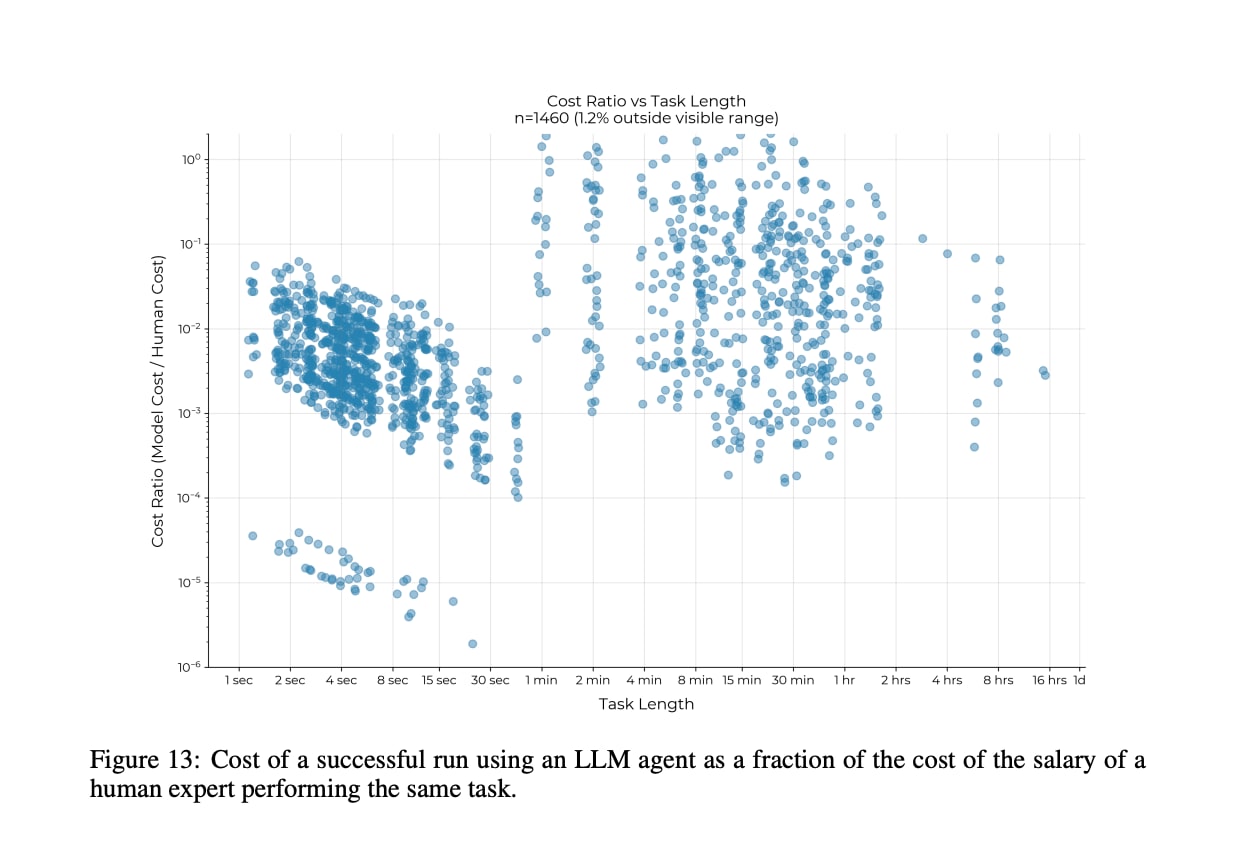

The METR report you cite finds that LLMs are vastly cheaper than humans when they do succeed, even for longer tasks:

The ARC-AGI results you cite feel somewhat hard to interpret: they may indicate that the very first models with some capability will be extremely expensive to run, but don't necessarily mean that human-level performance will forever be expensive.

Replies from: StanislavKrym

↑ comment by StanislavKrym · 2025-03-28T22:11:53.035Z · LW(p) · GW(p)

Thank you for pointing at the cost graph. It is the ratio of the cost of a SUCCESSFUL run to the cost of hiring a human. But what if we take the failed runs into account as well? I wonder if the total cost of failed and successful runs is 10 times bigger for 8-hour-length tasks, placing far more tasks above the threshold.
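One way to make the question about failed runs concrete is the sketch below. It assumes that attempts are independent, that a failed run costs as much as a successful one, and that runs are repeated until one succeeds; none of these assumptions comes from the METR report, and the 10% success rate is only an illustrative value matching the "10 times bigger" guess.

```python
def expected_cost_per_success(cost_per_run: float, success_rate: float) -> float:
    """Expected total spend (failed plus successful runs) per solved task.

    With independent attempts, the number of runs until the first success is
    geometrically distributed with mean 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_run / success_rate


# Hypothetical: a 10% success rate on 8-hour tasks makes every solved task
# cost ten runs on average.
multiplier = expected_cost_per_success(1.0, 0.10)
print(round(multiplier, 6))
```

Under these assumptions the total cost is 10 times the cost of a successful run exactly when the success rate is 10%.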

UPD: o3-mini is about 30 times cheaper than o1, not 7. Then the cost of o3-low might increase just 16 times, yielding an AGI that costs $3200 per use (for tasks of what length, exactly?)

UPD2: I messed up the count. The model o3-mini (low) costs $0.040 per task, while o3 (low) costs $200, meaning a 5000-fold increase, not a 500-fold one.