Does Diverse News Decrease Polarization?

post by jsteinhardt · 2021-09-11T02:30:16.583Z · LW · GW · 1 comment


This is the third in a series of posts studying how Facebook's newsfeed affects political polarization. To recap:

- In the first post, I showed that polarization trends in the U.S. don't coincide with social media adoption, and argued that Facebook is unlikely to be the primary direct driver of polarization (but could have important indirect effects).

- In the second post, I showed that Facebook isn't more likely than other sources to show users pro-attitudinal content; but it is more likely to show them extreme content on both sides of the political spectrum.

(Recall that we call a news article pro-attitudinal if it agrees with a user's political views, and counter-attitudinal if it runs counter to them; and that this is usually determined by looking at what outlet the article appears in.)

In this post, I'll finally look at the causal effect of the newsfeed on users' beliefs and attitudes. In summary:

- Cheap nudges on Facebook can have strong lasting (≥3 months) effects on user feed content, which translate to small changes in political affect but no measurable change in political beliefs.

- Specifically, the nudge exposed users to moderately counter-attitudinal news, and influenced around 10% of a user's newsfeed on average. This led to a 1-point reduction in affective polarization on a 0-100 scale.

- For context, affective polarization has increased by 10 points in the last 20 years.

- Some cruder estimates suggest that quitting Facebook entirely reduces affective polarization by around 2 points, and providing a fully balanced feed would reduce it by 4 points.

To me this paints an optimistic but limited outlook for interventions on Facebook newsfeeds. Fully balancing the feed seems unattainable without strongly ignoring user preferences, and I'd personally guess that 3 points is about the best you can get. That's only 30% of the polarization we'd like to address, but that could already be a big deal! Especially since a cheap intervention can already get you to 10%.

In addition, the fact that newsfeeds don't seem to influence voting behavior removes some ethical concerns. While many have worried about Facebook influencing elections, in the U.S. at least I suspect this worry is overstated, and the numbers above seem to back that up. If the primary effect of changing newsfeeds is simply to make people less angry with each other, without affecting their voting preferences, that seems like a pretty clear good to me.

Caveats and limitations. These conclusions only cover the direct effects of Facebook on users. If Facebook shapes the incentives of traditional media, it could have a larger total effect than estimated above. These incentive effects are harder to measure, although I'm part of a collaboration trying to take a first pass at this.

The conclusions also are specific to Facebook rather than social media in general. For instance, other lines of evidence suggest that Twitter could drive polarization by socializing users to be angrier (Brady et al., 2021) and by amplifying antisocial users (Bor and Petersen, 2021). I am not as well-versed in this literature, but Noah Smith wrote a recent thought-provoking overview of these two results.

Sources. This post continues to follow Levy (2020); in addition to the descriptive statistics in the last post, Levy performed a clever intervention to estimate causal effects, which I'll describe in more detail below. It also draws partly on Allcott et al. (2020) who study the effects of temporarily quitting Facebook altogether. [Disclosure: Levy, as well as two of the authors of Allcott et al., are collaborators.]

Causal Effect of Social Media

We want to understand the effect on users of changing the newsfeed. If we were Facebook, we could do this by randomly changing a subset of user feeds, and then sending follow-up surveys to measure political attitudes. However, we aren't Facebook, so we need to do something more clever. I'll describe Levy's approach to handling this below.

Levy (2020)'s Intervention

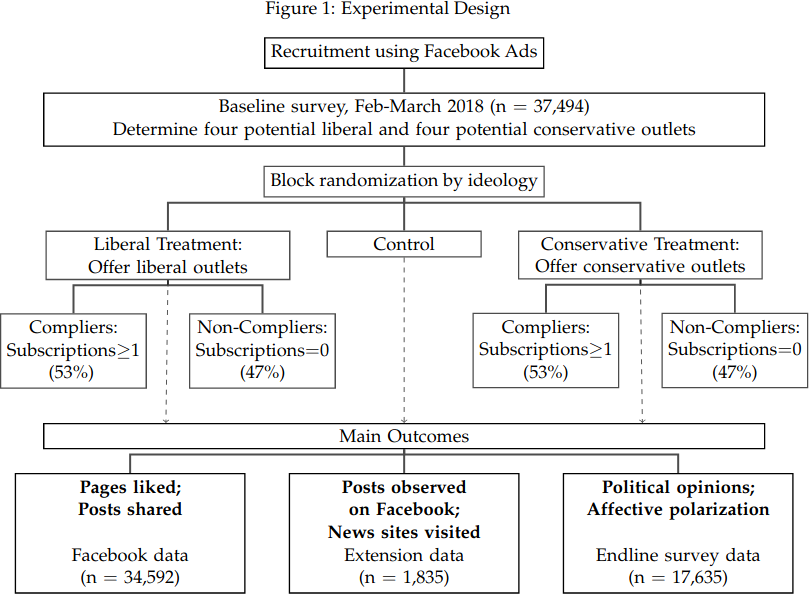

- Levy initially recruited a set of study participants via Facebook ads. (It takes many ads to get one study participant; Levy doesn’t specify the click-through rate, but based on a similar study my guess is it’s around 2%.)

- People who click on the ad are asked to complete a survey, and to give researchers view permissions to their Facebook feed.

- Near the end of the survey it is suggested that they Like the web pages of four media outlets, either all in line with their ideology ("Pro") or all opposing it ("Counter").

- Conservative outlets: Fox, National Review, WSJ, Washington Times

- Liberal outlets: HuffPo, MSNBC, NYT, Slate

- Around 50% of users in both the Pro and Counter arms subscribed to at least one outlet (the “compliers”). Compliers subscribed to 1.4 (counter) or 2.0 (pro) of the 4 outlets on average (see Figure 4 below).

- A follow-up ("endline") survey, 2 months after the intervention, asks various questions about political beliefs (what legislation you would support) and attitudes (how you feel about members of the opposite party). About half of compliers filled this out.

- Users are also asked to install a browser extension that tracks browsing activity. About 5% did so.

How does more diverse news shape opinions and attitudes?

Let's first dive into the meat: Does showing users counter-attitudinal news change their political attitudes and beliefs?

The basic answer is: sort of. It turns out that it doesn't change their beliefs much at all: users aren't going to change how they vote based on Facebook articles. However, it has a small but meaningful effect on attitudes: how users feel about the opposing party. This is important, because this sort of affective polarization is probably the most dangerous to a functioning democracy (see Iyengar et al., 2019), so we want ways to reduce it.

In a bit more detail, Levy finds that for the counter-attitudinal arm, affective polarization decreased by about 1 point on a 0-100 scale; in comparison, it has increased by about 10 points over the past 20 years, so this undoes 10% or "2 years worth" of polarization. That's a small slice of the pie, but pretty impressive for a single intervention!
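The arithmetic behind the "2 years worth" figure can be sketched as follows. This is a minimal illustration that assumes the 10-point rise accrued linearly over the 20 years (my simplifying assumption, not a claim from Levy); the variable names are purely illustrative.

```python
# Back-of-the-envelope: how much of the 20-year rise does a ~1-point drop undo?
effect_points = 1.0   # approximate reduction from the counter-attitudinal nudge
rise_points = 10.0    # approximate rise in affective polarization
rise_years = 20.0     # period over which that rise occurred

share_undone = effect_points / rise_points      # fraction of the rise undone
points_per_year = rise_points / rise_years      # ASSUMPTION: linear trend
years_undone = effect_points / points_per_year  # "years' worth" of polarization

print(f"share undone: {share_undone:.0%}")   # 10%
print(f"years' worth: {years_undone:.1f}")   # 2.0
```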

In even more detail:

- Levy says that "The ITT and TOT effects of the counter-attitudinal treatment decrease the difference between the feeling toward the participant’s party and the opposing party by 0.58 and 0.96 degrees (on a 0-100 scale), respectively. For comparison, in the past 20 years, the feeling thermometer measure increased by 3.83-10.52 degrees." Here ITT refers to the users who clicked on the ad, and TOT refers to the users who further clicked Like on at least one news outlet.

- Looking into the past 20 years comparison, I couldn’t find the source of the 3.83 number, and sources I looked at gave 9-10 degrees change, so I went with that above.

- Measured in standard deviations, ITT/TOT effects are 0.03/0.06 stdevs (this is for an aggregate index of affective polarization that is slightly different than the feelings thermometer above).

- Levy cites a related paper that says: "Allcott et al. (2020) found that disconnecting from Facebook decreases the feeling thermometer measure by 2.09 degrees." This is larger than the 0.96 number, but corresponds to a much stronger intervention. The standard error on Allcott et al.'s estimate is moderately high, so I think it's consistent with either a much larger effect or close to zero effect. However, for an aggregate index of affective polarization, Allcott et al. found a more precisely estimated decrease of about 0.16 standard deviations (95% CI: 0.08 to 0.24), or about 2.5x the effect size in Levy. Altogether, 2 degrees for disconnecting from Facebook feels like a reasonable estimate.
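To see how the numbers in this list relate to each other, here is a rough sketch. It assumes the textbook relationship TOT ≈ ITT / compliance rate (valid when the nudge only affects people who actually Liked an outlet); this is my simplification and not necessarily the estimator Levy uses. The function name is hypothetical.

```python
def implied_compliance(itt: float, tot: float) -> float:
    """Compliance rate implied by TOT = ITT / compliance (a simplifying assumption)."""
    return itt / tot

# Feeling-thermometer effects quoted from Levy (degrees on a 0-100 scale).
itt_degrees, tot_degrees = 0.58, 0.96
compliance = implied_compliance(itt_degrees, tot_degrees)

# Standardized effects on the aggregate affective polarization index.
levy_tot_sd = 0.06   # Levy, effect on compliers (rounded)
allcott_sd = 0.16    # Allcott et al., quitting Facebook
ratio = allcott_sd / levy_tot_sd

print(f"implied compliance: {compliance:.0%}")       # 60%
print(f"Allcott / Levy effect size: {ratio:.1f}x")   # 2.7x
```

The implied compliance of roughly 60% sits near the "around 50%" complier share quoted earlier, though the two need not match exactly, since the endline sample and Levy's actual estimator may differ. Similarly, the ratio computed from the rounded inputs is in the same ballpark as the "about 2.5x" quoted above.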

Levy applies a few back-of-the-envelope calculations to get additional estimates:

- "If the feed had an equal share of pro- and counter-attitudinal news, the difference between the feelings toward one’s party and the opposing party would decrease by 3.94 degrees."

- "An increase of one standard deviation in the share of exposure to counter-attitudinal news decreases affective polarization by 0.13 standard deviations."

- “In a second back-of-the-envelope calculation I estimate how affective polarization would change if individuals were exposed in their Facebook feed to the same share of counter-attitudinal outlets, or the same congruence scale, as they encounter when visiting news sites not through Facebook. I find that the feeling thermometer outcome would decrease by 0.25-0.62 degrees.”

This last point is important, as it implies that the 3.94-degree decrease from equalizing the feed is probably not actually attainable, since it would require showing users content that was significantly more balanced than they would seek out themselves.
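A quick way to compare these back-of-the-envelope estimates is to put them on a common scale. The sketch below uses only the figures quoted above; treating them as directly comparable is my assumption, and the variable names are illustrative.

```python
full_balance = 3.94          # degrees: Levy's estimate for an equal-share feed
tot_effect = 0.96            # degrees: TOT effect of the actual nudge
match_non_fb = (0.25, 0.62)  # degrees: feed made to look like non-Facebook browsing

# How many times larger is the full-balance effect than the nudge's effect?
scale_up = full_balance / tot_effect

# What fraction of the full-balance effect does matching off-Facebook browsing reach?
low, high = (x / full_balance for x in match_non_fb)

print(f"full balance vs. nudge: {scale_up:.1f}x")                      # 4.1x
print(f"matching non-FB browsing: {low:.0%}-{high:.0%} of full balance")  # 6%-16%
```

So matching what users already seek out elsewhere captures only a small fraction of the hypothetical fully balanced effect.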

Overall Story

Pulling together the above numbers and estimates, it seems to me that:

- The intervention decreases polarization among compliers by an amount equivalent to ≈1 point on the 0-100 thermometer scale.

- It’s difficult to imagine any similar intervention having more than 4x the effect on what news people consume (as that would correspond to fully equalizing feeds, or increasing counter-attitudinal exposure by 2 stdevs).

- I’m personally skeptical that you could do much better than 1 point simply by changing the feed:

- Posts from friends are more than 75% as segregated as FB as a whole (recall that Pages are the main driver of ideological segregation on FB).

- While FB affects some non-FB browsing via influencing searches, the majority of non-FB browsing is direct. So it's hard to imagine more than doubling the effect of making FB look like non-FB (which is 0.25-0.62 degrees per above).

- Overall, I think FB interventions, through direct effect, could undo 10% of the US polarization increase from the past 20 years. But there could be further effects by changing incentives of news providers, and by decreasing the extremity of news as well as its slant.

- It's possible that interventions could lead to accumulating change over time, rather than a one-time effect. This could then have a much larger effect than estimated above.

Appendix: Other Effects

Levy also estimates several other causal effects, primarily on how much his nudge is able to change the composition and slant of newsfeeds. While not directly related to the polarization question, I still found them interesting, so I've collected them in this section.

Effect of intervention on media consumption

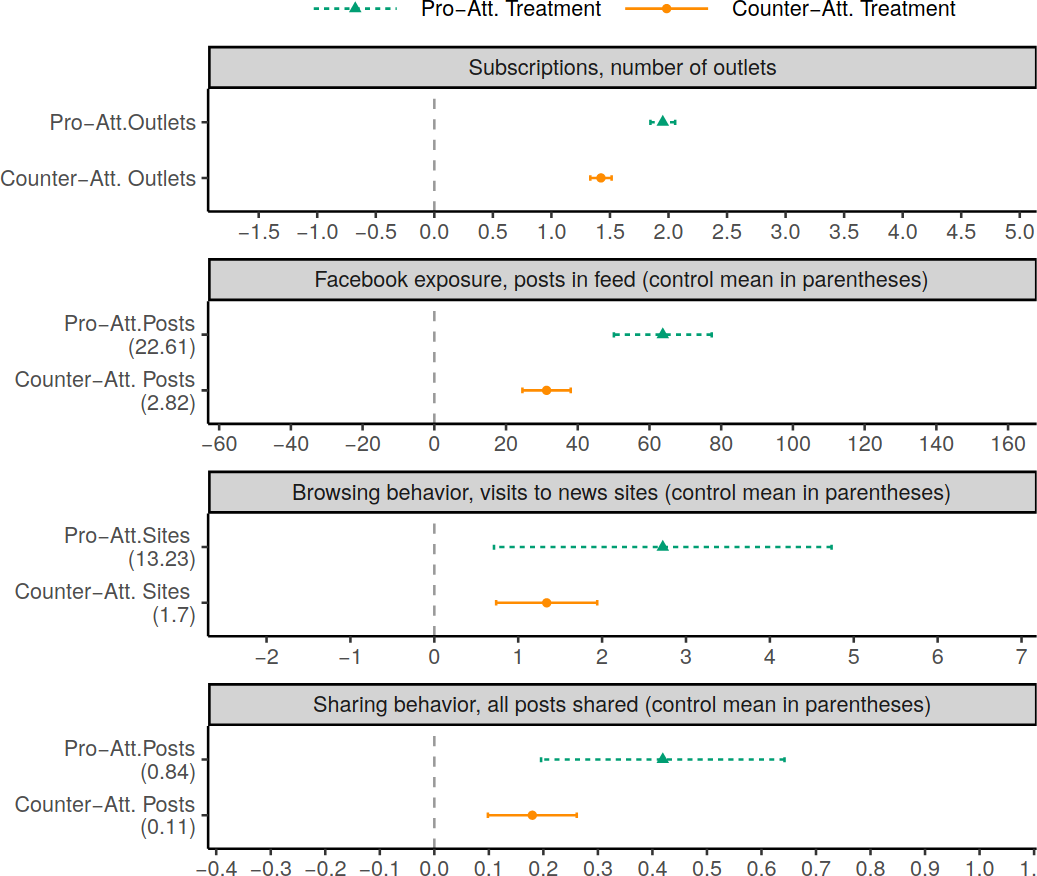

As stated above, Levy nudges users to subscribe to 4 outlets that are either pro-attitudinal (in line with political leaning) or counter-attitudinal. The following figure summarizes the results of this intervention.

Figure 4 of Levy (2020)

Below are some more details on the intervention effects, pulling quotes from the paper.

On the feed. "In the two weeks following the intervention, participants in the pro- and counter-attitudinal treatments were exposed to 64 and 31 additional posts from the potential pro- and counter-attitudinal outlets, respectively. For comparison, control group participants were exposed to 266 posts from leading news outlets, and 2,335 posts in total." Note the implication that only 11% of Facebook posts are news; among those news posts, the intervention adds around 10%-25%.
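The percentages in the note above follow directly from the quoted post counts. Here is a minimal sketch of that calculation; it assumes the control-group counts are the right baseline for both arms, and the variable names are illustrative.

```python
# Post counts over the two weeks following the intervention (from Levy's quote).
control_news_posts = 266     # posts from leading news outlets, control group
control_total_posts = 2335   # all posts, control group
extra_posts = {"pro": 64, "counter": 31}  # additional posts per treatment arm

# Share of the feed that is news.
news_share = control_news_posts / control_total_posts

# Extra posts relative to the baseline amount of news.
added = {arm: n / control_news_posts for arm, n in extra_posts.items()}

print(f"news share of feed: {news_share:.0%}")   # 11%
for arm, frac in added.items():
    print(f"{arm}: +{frac:.0%} news posts")      # pro: +24%, counter: +12%
```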

On post sharing. Levy finds an average of 0.18 shares for counter and 0.41 for pro, over baselines of 0.11 and 0.84 respectively. In addition, users are not just sharing to disagree: there was an increase even among posts with no commentary, 0.06 for counter and 0.28 for pro (baselines 0.05 and 0.46). (See Figure A.6 for details.)

On external visits. "The counter-attitudinal treatment increased total visits to the websites of the counter-attitudinal outlets by 79%, an ITT effect of 1.34 [95%CI≈0.7] visits over a baseline of 1.70 visits in the two weeks following the intervention. The pro-attitudinal treatment increased the number of visits to the websites of pro-attitudinal outlets by 21%, an ITT effect of 2.72 [95%CI≈2] visits over a baseline of 13.23." ITT stands for "intention to treat", which in this case means the average effect once a user clicks on the recruitment ad. Note the large error bars for this measure, probably caused by heterogeneity in external visits and smaller sample size (only the 5% of users who installed the browser extension). My main takeaway is that the counter-attitudinal treatment led users to visit sites they may not have otherwise.
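The 79% and 21% figures are the ITT effects expressed relative to their baselines. A small sketch of that conversion, using only the numbers in the quote (the helper name is hypothetical):

```python
def relative_increase(itt_effect: float, baseline: float) -> float:
    """ITT effect expressed as a fraction of the baseline number of visits."""
    return itt_effect / baseline

counter = relative_increase(1.34, 1.70)   # counter-attitudinal outlets
pro = relative_increase(2.72, 13.23)      # pro-attitudinal outlets

print(f"counter-attitudinal visits: +{counter:.0%}")  # +79%
print(f"pro-attitudinal visits: +{pro:.0%}")          # +21%
```

The pro-attitudinal arm adds more visits in absolute terms but far fewer in relative terms, because users already visited those sites often.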

Also, as mentioned in the previous post, users see fewer posts from the counter-attitudinal outlets than from the pro-attitudinal outlets. Perhaps 40% of this effect comes from the FB algorithm, and the rest from other effects (fewer subscriptions, less browsing time). See Figure 8 or the previous post for details.

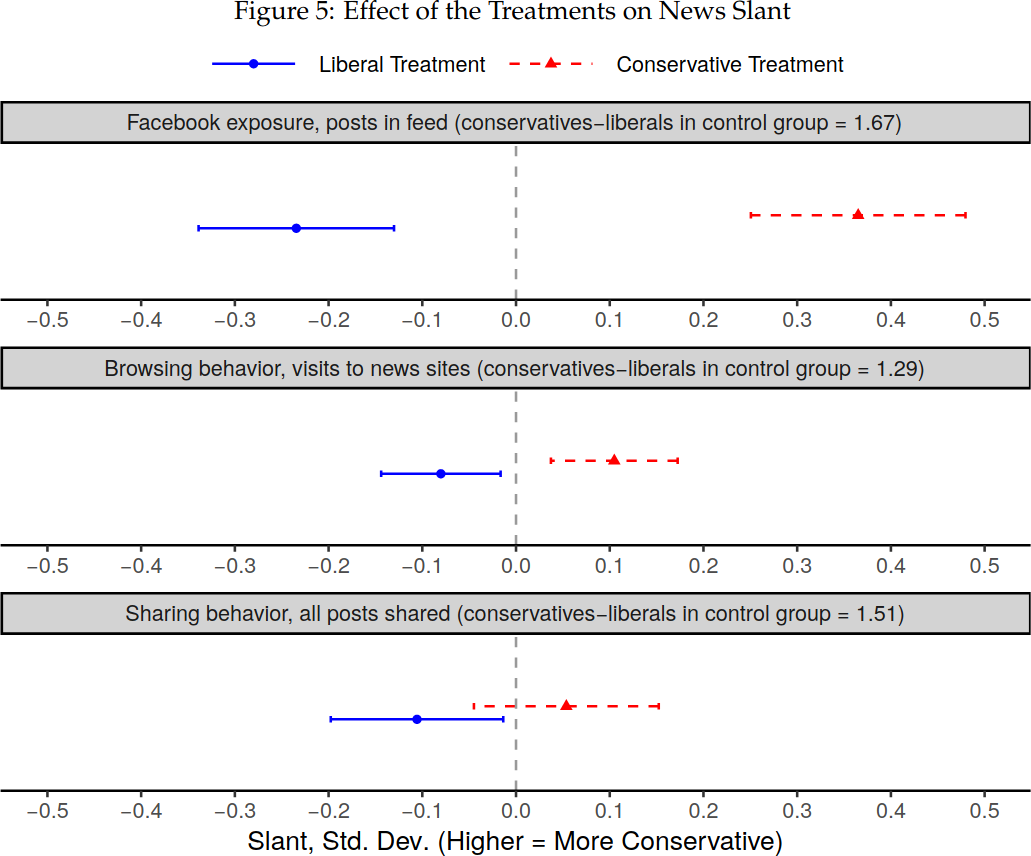

Effects of intervention on news slant

Using the above numbers and some back-of-the-envelope calculations, we can calculate how much this intervention changed the overall slant of newsfeeds. Keep in mind that only 11% of the Facebook feed is news, so it is possible to change the slant significantly without changing the overall feed by that much. As before, I'll mostly quote the result of Levy's calculations, although I did check that they seemed reasonable to me:

- If you switch from nudging users in a liberal direction to nudging them in a conservative direction, you can noticeably shift the slant of their feed: by around 15% of the average difference between liberals and conservatives. Levy: "The combined effects of the liberal and conservative treatments equals 14%-19% (ITT-TOT) of the difference in the slant of news sites visited by conservatives and liberals in the control group." Another estimate (based on Figure 5 below) is that the treatment leads to about a 0.3 standard deviation change in slant.

- We can further contextualize this as follows: "Based on the Comscore panel, the TOT effect of the liberal treatment would have shifted the online news diet of an individual in Pennsylvania, a swing state, to a diet similar to an individual in New York, a blue state, and the TOT effect of the conservative treatment would have led to a news diet similar to an individual in South Carolina, a red state."

Figure 5: Effects of Treatment on News Slant

The change in newsfeed also affects downstream browsing behavior:

- "When the compliers’ news feed became one standard deviation more conservative, the slant of the news sites they visit became 0.31 standard deviations more conservative. The effect on the slant of the subset of news sites visited through Facebook is 0.72 standard deviations." But: "Since these calculations rely on stronger assumptions than the ITT and TOT estimates, they should be interpreted cautiously."

- Levy notes that most of the difference is due to the specific outlets from the study (as opposed to reading new articles more generally). "The mean slant of news consumption is not strongly affected by the treatments when the potential outlets are excluded, implying that the experiment did not have large crowd-in or crowd-out effects."

- Effects decay a bit over time but are relatively persistent 3 months later (probably at least 50% of the effect after 1 week); see Figures 6 and A.8.

Summary. A cheap nudge on Facebook leads to a 0.3 standard deviation change in news slant on the feed, which leads to perhaps a 0.1 standard deviation change in overall news consumption (take this second number with a grain of salt). This effect decays a bit over time, but only by about 50% after 3 months.
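One plausible reading of how the 0.3 and 0.1 figures connect is to multiply the feed shift by the pass-through rates quoted above. This is my own sketch rather than a calculation taken from Levy, and it inherits the "interpret cautiously" caveat attached to those rates.

```python
feed_shift_sd = 0.3          # change in feed slant from the nudge (standard deviations)
pass_through_all = 0.31      # SD change in sites visited per 1 SD change in feed
pass_through_via_fb = 0.72   # same, restricted to sites visited through Facebook

# ASSUMPTION: the pass-through is linear, so the effects simply multiply.
overall_shift_sd = feed_shift_sd * pass_through_all
via_fb_shift_sd = feed_shift_sd * pass_through_via_fb

print(f"overall news consumption: {overall_shift_sd:.2f} SD")  # 0.09
print(f"news reached via Facebook: {via_fb_shift_sd:.2f} SD")  # 0.22
```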

1 comment


comment by CraigMichael · 2021-09-11T05:14:57.945Z · LW(p) · GW(p)

I’m intrigued by the concept of affective polarization.

My views are probably more moderate at this point in my life than they’ve ever been. I’ve said somewhat recently that “I’m an anarchist that’s been mugged by reality.”

My politics and attitudes from 20 years ago are close to what you might find trending on Twitter, but a bit more extreme.

This will sound crazy, but at times I’ve wondered if I’m not in some kind of after-life or “simulation jail” where I have to deal with millions and millions of people in the US making noises very similar to my own from two decades prior and if it’s like… some kind of cosmic revenge or the universe trying to teach me a painful lesson. It feels uncanny at times.

That’s kind of a tangent, but maybe relevant background to say myself and others of similar stripes I knew had a lot of extreme points of view at one time, but we were mostly self-marginalized and were ineffective because of them. I’d guess this was because we were much more of a minority at the time.

It’s different now in that what were once alienating beliefs and behaviors are now an entry ticket for safe harbor: it means you can express your beliefs and find shelter, even if it’s tenuous and always moving like a peloton.

I have to think that this is ultimately what social media provides, the ability to “move” very efficiently in a herd and in a way that’s maximally rewarding, but contingent on being maximally harassing to another herd.

It feels like refuge, but it’s all a fugazi.