Queuing theory: Benefits of operating at 60% capacity

post by ampdot · 2023-12-01T18:48:01.426Z · 4 comments

This is a link post for https://less.works/less/principles/queueing_theory

Related to Slack. Related to Lean manufacturing and Just-in-Time (JIT) manufacturing.

TL;DR A successful task-based system should sometimes be idle, like 40% of worker ants.

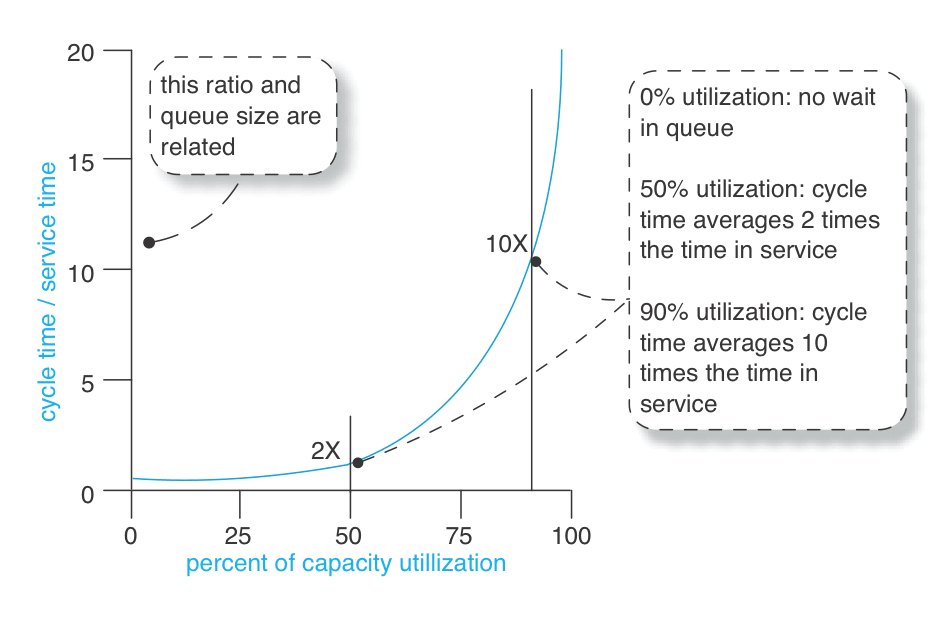

Doing tasks quickly is essential for producing value in many systems. For software teams, delivering a feature gives valuable insight into user needs, which can improve the quality of future features. For supply chains, faster delivery frees up capital for reinvestment. However, the time a task spends in the system (waiting plus being worked on) does not grow linearly with capacity utilization. It rises slowly at low utilization and then climbs steeply, without bound, as utilization approaches 100%, as the diagram illustrates.
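The simplest textbook model can make the shape of this relationship concrete. The sketch below assumes an M/M/1 queue: one producer, randomly (Poisson) arriving tasks, and exponentially distributed task sizes. In that model, the mean time a task spends in the system is the mean service time divided by (1 - ρ), where ρ is utilization. The function name `time_in_system` is hypothetical.

```python
def time_in_system(utilization: float, service_time: float = 1.0) -> float:
    """Mean time a task spends waiting plus being served in an M/M/1 queue.

    Assumes Poisson arrivals, exponential service times, a single producer,
    and 0 <= utilization < 1. Returns service_time / (1 - utilization).
    """
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1) for a stable queue")
    return service_time / (1.0 - utilization)


if __name__ == "__main__":
    # How many mean service times a task takes end to end at each load level.
    for rho in (0.3, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95, 0.99):
        print(f"utilization {rho:4.0%}: {time_in_system(rho):6.1f}x the service time")
```

Under these assumptions, moving from 60% to 80% utilization doubles the expected time in the system (2.5x to 5x the service time). Moving from 90% to 99% multiplies it by ten.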

A heuristic we can derive from queuing theory is that the best balance between efficiency (keeping producers busy) and speed (keeping waits short) typically occurs when the system is around 30-40% idle. For a single-producer system, being X% idle means that producer is idle X% of the time. For a multi-producer system, being X% idle means that, on average, X% of the producers are idle. This heuristic applies best to systems with many discrete, oddly-shaped tasks, that is, tasks whose arrival times and sizes vary widely.
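Kingman's approximation for a single-producer queue with general arrival and service distributions (G/G/1) makes the point about oddly-shaped tasks quantitative. It estimates expected waiting time as ρ/(1 - ρ) × (c_a² + c_s²)/2 × mean task time. Here c_a and c_s are the coefficients of variation (standard deviation divided by mean) of the gaps between arrivals and of the task sizes. This is a minimal sketch assuming a single producer. Kingman's formula is an approximation that is most accurate at high utilization, and the function name `kingman_wait` is hypothetical.

```python
def kingman_wait(utilization: float, cv_arrival: float, cv_service: float,
                 mean_service: float = 1.0) -> float:
    """Kingman's approximation of mean queueing delay in a G/G/1 queue.

    utilization:  fraction of time the single producer is busy (0 <= rho < 1)
    cv_arrival:   coefficient of variation of inter-arrival times
    cv_service:   coefficient of variation of task (service) times
    mean_service: mean task time; the result is in the same units
    """
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    load_factor = utilization / (1.0 - utilization)        # blows up near 100%
    variability_factor = (cv_arrival ** 2 + cv_service ** 2) / 2.0
    return load_factor * variability_factor * mean_service


if __name__ == "__main__":
    # Same 40% idle time (60% utilization), different task-size variability.
    for label, cv_s in (("uniform tasks", 0.0), ("exponential tasks", 1.0),
                        ("oddly-shaped tasks", 2.0)):
        wait = kingman_wait(0.6, cv_arrival=1.0, cv_service=cv_s)
        print(f"{label:>20}: wait ~ {wait:.2f} mean task times")
```

With Poisson arrivals (c_a = 1), this formula coincides with the exact Pollaczek-Khinchine result for an M/G/1 queue. So at the same 40% idle time, tasks with a size coefficient of variation of 2 wait five times as long as uniform tasks (3.75 vs 0.75 mean task times).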

The linked post explains this theory in more detail, and gives examples of where queues appear in the real world. See also the Wikipedia article:

Queueing theory is the mathematical study of waiting lines, or queues.[1] A queueing model is constructed so that queue lengths and waiting time can be predicted.[1] Queueing theory is generally considered a branch of operations research because the results are often used when making business decisions about the resources needed to provide a service.

4 comments

comment by Sune · 2023-12-01T22:26:00.669Z

You don’t really need the producers to be “idle”, you just have to ensure that if something important shows up, they are ready to work on that. Instead of having idle producers, you can just have them work on lower priority tasks. Has this also been modelled in queueing theory?

↑ comment by Dagon · 2023-12-01T23:42:41.844Z

Definitely, along with switching costs (if you drop a low-priority item to work on a high-priority one, there's some delay and some waste involved). In many systems, the switching delay/cost is high enough that it's best to just leave some nodes idle. In others, the low-priority work can be arranged so that dropping or setting it aside is pretty painless.
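Two standard results for an M/M/1 queue with two priority classes can sketch the question of filling idle time with low-priority work. For simplicity, the sketch assumes both classes share the same exponential service rate. Under preemptive-resume priority with free switching, high-priority tasks behave as if the low-priority work did not exist. Under non-preemptive priority, a rough stand-in for "finish what you're doing before switching", a high-priority task may also wait for the task already in progress (Cobham's formula). The function names and loads are hypothetical, and switching costs are ignored.

```python
def fifo_time(rho_total: float, mu: float = 1.0) -> float:
    """Mean time in system for any task in a plain FIFO M/M/1 queue."""
    return 1.0 / (mu * (1.0 - rho_total))


def preemptive_high_time(rho_high: float, mu: float = 1.0) -> float:
    """High-priority mean time in system under preemptive-resume priority.

    Low-priority work is dropped instantly (zero switching cost), so the
    high-priority class sees an M/M/1 queue loaded only by itself.
    """
    return 1.0 / (mu * (1.0 - rho_high))


def nonpreemptive_high_time(rho_high: float, rho_low: float, mu: float = 1.0) -> float:
    """High-priority mean time in system under non-preemptive priority (Cobham).

    A task already in service, of either class, is always finished first.
    Mean residual work seen on arrival is sum(lambda_i * E[S^2]) / 2, which
    for exponential service with rate mu equals rho_total / mu.
    """
    residual = (rho_high + rho_low) / mu
    wait = residual / (1.0 - rho_high)
    return wait + 1.0 / mu


if __name__ == "__main__":
    rho_high, rho_low = 0.3, 0.6  # producers are busy 90% of the time overall
    print(f"FIFO, all work mixed:       {fifo_time(rho_high + rho_low):.2f}")
    print(f"high priority, preemptive:  {preemptive_high_time(rho_high):.2f}")
    print(f"high priority, no preempt:  {nonpreemptive_high_time(rho_high, rho_low):.2f}")
```

With these assumed loads, high-priority tasks take about 1.43 mean service times with instant preemption and about 2.29 without it. In a single FIFO queue at the same 90% total load, they would take about 10. Real switching costs would add to the preemptive figure and slow the low-priority work too. When those costs are large, leaving some capacity idle can be the better option.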

↑ comment by Viliam · 2023-12-03T15:41:49.984Z

You need to make sure that when something important shows up, (1) the system will clearly recognize that this happened, and (2) the producers will actually be able to abandon the lower-priority task quickly.

I have seen companies trying to implement this, but what often actually happens is that the manager responsible for the lower-priority task just keeps assigning work to the employees anyway. The underlying cause is that the manager's incentives are misaligned with the company goals -- his bonus depends on getting the lower-priority task done. (How would you set up his incentives?)

comment by MondSemmel · 2023-12-02T00:53:43.289Z

Related reading: IIRC the topic of queuing theory features prominently in the great book Algorithms to Live By.