An overview of the mental model theory

post by ScottL · 2015-08-17T13:18:24.519Z · LW


There is dispute about what exactly a “mental model” is, and the concepts related to it often aren't clarified well. One feature that is generally accepted is that “the structure of mental models ‘mirrors’ the perceived structure of the external system being modelled” (Doyle & Ford, 1998, p. 17). So, as a starting definition, we can say that mental models are, in general, representations in the mind of real or imaginary situations. A full definition won’t be attempted because there is too much contention about which features mental models do and do not have. The features that are accepted will be described in detail, which will hopefully give you an intuitive understanding of what mental models are probably like.

The mental model theory assumes that people do not innately rely on formal rules of inference, but instead rely on mental models that are based on their understanding of the premises and their general knowledge. A foundational principle of the theory is the principle of truth, which states that “reasoners represent as little information as possible in explicit models and, in particular, that they represent only information about what is true” (Johnson-Laird & Savary, 1996, p. 69). Individuals do this to minimize the load on working memory.

Consider the following example: “There is not a king in the hand, or else there is an ace in the hand”. If we take ‘¬’ to indicate negation, then according to the principle of truth reasoners will construct only two separate models for this example: (¬ king) and (ace). Note that if a proposition is false, then its negation is true. In this example (¬ king) is true.

Looking at the mental models of this example we can say that they, like most others, represent the literals in the premises when they are true in the true possibilities, but not when they are false. This means that they do not include (king) and (¬ ace), which correspond to what is false. To keep track of what is false, reasoners make mental “footnotes”. This can be problematic because these footnotes are hard to remember and people tend to consider only what is represented in their mental models of a situation.
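The contrast between the two mental models and the fully explicit possibilities can be sketched by brute force. This is only an illustration, and it assumes that “or else” is read as an exclusive disjunction (exactly one of the two clauses holds); the function name is hypothetical.

```python
from itertools import product

def fully_explicit_models():
    """Enumerate every (king, ace) assignment that satisfies the premise,
    read here as an exclusive disjunction: (not king) XOR ace.
    The exclusive reading is an assumption of this sketch."""
    models = []
    for king, ace in product([True, False], repeat=2):
        if (not king) != ace:  # exactly one of the two clauses is true
            models.append({"king": king, "ace": ace})
    return models

for model in fully_explicit_models():
    print(model)
```

Under this reading each surviving assignment fixes both cards, whereas the mental models (¬ king) and (ace) each mention only one of them.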

The mental model theory is not without its critics, and no one knows for sure how people reason, but the theory makes predictions that have empirical support, including predictions of certain systematic fallacies. The principal predictions of the mental model theory are (Johnson-Laird, Girotto, & Legrenzi, 2005, pp. 11-12):

- Reasoners normally build models of what is true, not what is false. This is known as the principle of truth and it leads to two main systematic fallacies: the illusion of possibility and the illusion of impossibility.

- Reasoners tend to focus on one of the possible models of multi-model problems, and are thereby led to erroneous conclusions and irrational decisions. This means that they do not consider alternative models and this leads to the focusing effect which is similar to the framing effect in psychology.

- Reasoning is easier from one model than from multiple models. This is known as the disjunctive effect.

A consequence of the principle of truth (people don’t put false possibilities in their model) is the illusion of possibility which is demonstrated in the below problem (Try to solve it):

Before you stands a card-dealing robot. This robot has been programmed to deal one hand of cards. You are going to make a bet with another person on whether the dealt hand will contain an ace or whether it will contain a king. If the dealt hand is just a single queen, it's a draw. Note that the robot is a black box. That is, you don't know anything about how it works, for example the algorithm it uses. You do, however, have two statements about what the possibilities of the dealt hand could be. These two statements are from two different designers of the robot. The problem is that you know that one of the designers lied to you (their statement is always false) and the other designer told the truth. You don't know which one is telling the truth. This means that you know that only one of the following statements about the dealt hand is true.

- The dealt hand will contain either a king or an ace (or both).

- The dealt hand will contain either a queen or an ace (or both).

Based on what you know, should you bet that the dealt hand will contain an Ace or that it will contain a King?

If you think that the ace is the better bet, then you would have made a losing bet, because it is impossible for an ace to be in the dealt hand. Only one statement about the dealt hand is true, and the ace appears in both statements, so an ace in the hand would make both statements true, which violates the requirement. A king, by contrast, remains possible: the first statement can be true while the second is false. Thus, you should have bet that the hand will contain a king.

If you still don't believe that the king is the better bet, then see this post where I go into the explicit details on this problem.
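The argument can also be checked mechanically. The sketch below is an illustration rather than part of the original studies: it enumerates every combination of king, queen and ace, and keeps only the hands in which exactly one of the two designers' statements is true.

```python
from itertools import product

def consistent_hands():
    """Return every (king, queen, ace) hand in which exactly one
    of the two designers' statements is true."""
    hands = []
    for king, queen, ace in product([True, False], repeat=3):
        statement_1 = king or ace    # "a king or an ace (or both)"
        statement_2 = queen or ace   # "a queen or an ace (or both)"
        if statement_1 != statement_2:  # exactly one statement is true
            hands.append((king, queen, ace))
    return hands

hands = consistent_hands()
ace_possible = any(ace for _, _, ace in hands)
king_possible = any(king for king, _, _ in hands)
print(hands, ace_possible, king_possible)
```

No surviving hand contains an ace, while one of them contains a king, which is why the king is the better bet.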

Confidence in solving the problem has been ruled out as a contributing factor to the illusion. People’s confidence in their conclusions did not differ reliably between the control problems and the problems that were expected to induce the illusion of possibility (Goldvarg & Johnson-Laird, 2000, p. 289).

Another example is (Yang & Johnson-Laird, 2000, p. 453):

Only one of the following premises is true about a particular hand of cards:

- There is a king in the hand or there is an ace, or both

- There is a queen in the hand or there is an ace, or both

- There is a jack in the hand or there is a ten, or both

Is it possible that there is an ace in the hand?

Nearly everyone responds “yes”, which is incorrect. An ace would render both the first and the second premise true, but the given rule says that only one of the premises is true. This means that there cannot be an ace in the hand. The reason, in summary, why people respond incorrectly is that they consider the first premise and conclude that an ace is possible. Then they consider the second premise and reach the same conclusion, but they fail to consider the falsity of the other premise, i.e. if premise 1 is true then premise 2 must be false to fulfil the requirement.
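The same check extends to any number of premises. The following sketch is illustrative only: the helper `possible_hands` and the dictionary encoding of a hand are assumptions made here, not something taken from the cited paper.

```python
from itertools import product

CARDS = ("king", "queen", "jack", "ten", "ace")

# Each premise is a function from a hand (dict of card -> bool) to a truth value.
PREMISES = [
    lambda hand: hand["king"] or hand["ace"],
    lambda hand: hand["queen"] or hand["ace"],
    lambda hand: hand["jack"] or hand["ten"],
]

def possible_hands(premises, cards=CARDS):
    """Return every hand in which exactly one premise is true."""
    hands = []
    for values in product([True, False], repeat=len(cards)):
        hand = dict(zip(cards, values))
        if sum(bool(premise(hand)) for premise in premises) == 1:
            hands.append(hand)
    return hands

hands = possible_hands(PREMISES)
ace_possible = any(hand["ace"] for hand in hands)
print(len(hands), ace_possible)
```

Every hand that contains an ace makes at least two premises true, so none of them survives the filter.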

The illusion of impossibility is the opposite of the illusion of possibility. It is demonstrated in the below problem:

Only one of the following premises is true about a particular hand of cards:

- If there is a king in the hand, then there is not an ace

- If there is a queen in the hand, then there is not an ace.

Is it possible that there is a king and an ace in the hand?

People commonly answer “no”, which is incorrect. As you can see in the table of fully explicit models below, rows three and four contain an ace, and row four contains both a king and an ace.

| Mental Models | | | Fully Explicit Models | | |
|---|---|---|---|---|---|
| K | | ¬A | K | | ¬A |
| | Q | ¬A | | Q | ¬A |
| | | | ¬K | Q | A |
| | | | K | ¬Q | A |
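A brute-force check of this problem is sketched below. It assumes that each “if ... then ...” premise is read as a material conditional (false only when the antecedent is true and the consequent is false); that reading, and the helper names, are assumptions of the sketch.

```python
from itertools import product

def implies(antecedent, consequent):
    """Material conditional: false only when antecedent holds and consequent fails."""
    return (not antecedent) or consequent

def consistent_hands():
    """Return every hand in which exactly one of the two conditionals is true."""
    hands = []
    for king, queen, ace in product([True, False], repeat=3):
        premise_1 = implies(king, not ace)   # if king then not ace
        premise_2 = implies(queen, not ace)  # if queen then not ace
        if premise_1 != premise_2:           # exactly one premise is true
            hands.append({"king": king, "queen": queen, "ace": ace})
    return hands

hands = consistent_hands()
king_and_ace_possible = any(hand["king"] and hand["ace"] for hand in hands)
print(hands, king_and_ace_possible)
```

Possibility only needs one surviving hand with a king and an ace, and the enumeration finds one.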

It has been found that illusions of possibility are more compelling than illusions of impossibility. (Goldvarg & Johnson-Laird, 2000, p. 291) The purported reason for this is that “It is easier to infer that a situation is possible as opposed to impossible.” (Johnson-Laird & Bell, 1998, p. 25) This is because possibility only requires that one model of the premises is true, whereas impossibility depends on all the models being false. So, reasoners respond that a situation is possible as soon as they find a model that satisfies it, but to find out if a situation is impossible they will often need to flesh out their models more explicitly which has an effect of reducing the tendency for people to exhibit the illusion of impossibility.

We have already discussed that when people construct mental models they make explicit as little as possible (principle of truth). Now, we will look at their propensity to focus only on the information which is explicit in their models. This is called the focusing effect.

Focusing is the idea that people in general fail to make a thorough search for alternatives when making decisions. When faced with the choice of whether or not to carry out a certain action, they will construct a model of the action and an alternative model, which is often implicit, in which it does not occur, but they will often neglect to search for information about alternative actions. Focusing has been found to be reduced by manipulations which make the alternatives more available. (Legrenzi, Girotto, & Johnson-Laird, 1993)

Focusing leads people to fail to consider possibilities that lie outside of their models. A consequence of this is that they can overlook the correct possibility. If you know nothing about the alternatives to a particular course of action, then you can neither assess their utilities nor compare them with the utility of the action. Hence, one cannot make a rational decision.

The context in which decisions are presented can determine the attributes that individuals will enquire about before making a decision. Consider the choice between two resorts:

- Resort A has good beaches, plenty of sunshine, and is easy to get to.

- Resort B has good beaches, cheap food, and comfortable hotels.

The focusing hypothesis implies that people will only attempt to seek out information in order to flesh out the variables in one of the options, but not the other. For example, because they know about the weather in resort A they will seek out information on the weather in resort B. The hypothesis also predicts that once these attributes have been fleshed out people will generally believe that they can make a decision as to which is the best resort. In summary, the initial specification of the decision acts as a focus for both the information that individuals seek and their ultimate decision and consequently they will tend to overlook other attributes. For example, they may not consider the resort’s hostility to tourists or any other factor not included in the original specification.

The last of the principal predictions of the mental model theory is the disjunction effect, which basically means that people find it easier to reason from one model than from multiple models. A disjunction effect occurs when a person will do an action if a specific event occurs and will do the same action if the specific event does not occur, but will not do the same action if they are uncertain whether the specific event will occur (Shafir & Tversky, 1992). This is a violation of the Sure-Thing Principle in decision theory (sure things should not affect one’s preferences).

The disjunction effect is explained by mental model theory. “If the information available about a particular option is disjunctive in form, then the resulting conflict or load on working memory will make it harder to infer a reason for choosing this option in comparison to an option for which categorical information is available. The harder it is to infer a reason for a choice, the less attractive that choice is likely to be.” (Legrenzi, Girotto, & Johnson-Laird, 1993, p. 64) It has been found that “Problems requiring one mental model elicited more correct responses than problems requiring multiple models, which in turn elicited more correct answers than multiple model problems with no valid answers.” (Schaeken, Johnson-Laird, & d'Ydewalle, 1994, p. 205) An answer is valid if it is not invalidated by another model. If you have two models, (A) and (¬A), then they invalidate each other.

The three problems below will illustrate the difference between one model, multi-model and multi-model with no valid answer problems.

This first problem can be solved by using only one model.

Premises:

- The suspect ran away before the bank manager was stabbed

- The bank manager was stabbed before the clerk rang the alarm.

- The police arrived at the bank while the bank manager was being stabbed

- The reporter arrived at the bank while the clerk rang the alarm.

What is the temporal relation between the police arriving at the bank and the reporter arriving at the bank?

This problem yields the model below, with time running from left to right, which supports the answer that the police arrived before the reporter. The premises do not support any model that refutes this answer and so it is valid. That is, it must be true given that the premises are true.

| Model | | |
|---|---|---|
| Suspect runs away | Bank manager was stabbed | Clerk rang the alarm |
| | The police arrived at the bank | The reporter arrived at the bank |

This second problem requires multiple models, but it still has a valid answer.

Premises:

- The suspect ran away before the bank manager was stabbed

- The clerk rang the alarm before the bank manager was stabbed

- The police arrived at the bank while the clerk rang the alarm

- The reporter arrived at the bank while the bank manager was being stabbed

What is the temporal relation between the police arriving at the bank and the reporter arriving at the bank?

This problem yields the three models below, with time running from left to right. The three models support the answer that the police arrived before the reporter because the premises do not support any model that refutes this answer and so it is valid.

| Model | | |
|---|---|---|
| Suspect runs away | Clerk rang the alarm | Bank manager was stabbed |
| | The police arrived at the bank | The reporter arrived at the bank |

| Model | | |
|---|---|---|
| Clerk rang the alarm | Suspect runs away | Bank manager was stabbed |
| The police arrived at the bank | | The reporter arrived at the bank |

| Model | |
|---|---|
| Suspect runs away / Clerk rang the alarm | Bank manager was stabbed |
| The police arrived at the bank | The reporter arrived at the bank |

This third problem involves the generation of three possible models and there is also no valid answer. This problem is the hardest out of the three and because of this it had the most incorrect answers and took the longest to solve.

Premises:

- The suspect ran away before the bank manager was stabbed

- The clerk rang the alarm before the bank manager was stabbed

- The police arrived at the bank while the clerk rang the alarm

- The reporter arrived at the bank while the suspect was running away

What is the temporal relation between the police arriving at the bank and the reporter arriving at the bank?

This problem yields the three models below, with time running from left to right. No answer is valid here: to be valid, the same temporal relation must hold in all of the models.

| Model | | |
|---|---|---|
| Suspect runs away | Clerk rang the alarm | Bank manager was stabbed |
| The reporter arrived at the bank | The police arrived at the bank | |

| Model | | |
|---|---|---|
| Clerk rang the alarm | Suspect runs away | Bank manager was stabbed |
| The police arrived at the bank | The reporter arrived at the bank | |

| Model | |
|---|---|
| Suspect runs away / Clerk rang the alarm | Bank manager was stabbed |
| The police arrived at the bank / The reporter arrived at the bank | |
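The notion of a valid answer used in these three problems can be sketched in code. The sketch makes simplifying assumptions that are not in the original study: each event is a single time point, “before” means strictly earlier, and “while” means at the same time point. The helper names `before`, `during` and `police_vs_reporter` are hypothetical.

```python
from itertools import product

EVENTS = ("suspect", "stab", "alarm", "police", "reporter")

def before(a, b):
    """Premise: event a happens strictly before event b."""
    return lambda t: t[a] < t[b]

def during(a, b):
    """Premise: event a happens while event b happens (same time point here)."""
    return lambda t: t[a] == t[b]

def police_vs_reporter(premises):
    """Collect the police/reporter relation found in every timeline
    that satisfies all of the premises."""
    relations = set()
    for times in product(range(len(EVENTS)), repeat=len(EVENTS)):
        t = dict(zip(EVENTS, times))
        if all(premise(t) for premise in premises):
            if t["police"] < t["reporter"]:
                relations.add("before")
            elif t["police"] > t["reporter"]:
                relations.add("after")
            else:
                relations.add("same time")
    return relations

problem_1 = [before("suspect", "stab"), before("stab", "alarm"),
             during("police", "stab"), during("reporter", "alarm")]
problem_2 = [before("suspect", "stab"), before("alarm", "stab"),
             during("police", "alarm"), during("reporter", "stab")]
problem_3 = [before("suspect", "stab"), before("alarm", "stab"),
             during("police", "alarm"), during("reporter", "suspect")]

for name, problem in [("1", problem_1), ("2", problem_2), ("3", problem_3)]:
    relations = police_vs_reporter(problem)
    # An answer is valid only if a single relation holds in every timeline.
    verdict = "valid" if len(relations) == 1 else "no valid answer"
    print(name, sorted(relations), verdict)
```

The first two problems leave a single relation (police before reporter) in every consistent timeline, while the third leaves all three relations open, which matches the claim that it has no valid answer.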

A common example of problems that display disjunctive effects is problems involving meta-reasoning, specifically reasoning about what others are reasoning. Consider the following problem:

Three wise men who can only tell the truth are told to stand in a straight line, one in front of the other. A hat is put on each of their heads. They are told that each of these hats was selected from a group of five hats: two black hats and three white hats. The man at the front of the line can't see either of the men behind him or their hats. The man in the middle can see only the man at the front and his hat. The man at the rear can see both other men and their hats.

Each wise man must say what colour his hat is if he knows it. If he doesn’t, he must say: “I don’t know”. The wise men cannot interact with each other in any other way. They answer in order from the rear of the line to the front. The first wise man, at the rear, who could see the two hats in front of him, said, "I don't know". The second wise man, in the middle, heard this and then said, "I don't know". What did the last wise man, at the front, who had heard the two previous answers, then say?

This problem can be solved by considering the deductions and models of each of the wise men. The first wise man can only deduce the colour of his hat if the two wise men in front of him both have black hats. Knowing this, and that the first wise man said that he does not know, the second wise man creates the three models below to represent all the possibilities given the information that he has.

| Model | | |
|---|---|---|
| First wise man | Second wise man | Third wise man |
| ? | White | White |

| Model | | |
|---|---|---|
| First wise man | Second wise man | Third wise man |
| ? | Black | White |

| Model | | |
|---|---|---|
| First wise man | Second wise man | Third wise man |
| ? | White | Black |

The third wise man deduces that if the second wise man had seen a black hat, he would have known the colour of his own hat, i.e. only the third model would have been possible. Since the second wise man said that he did not know the colour of his hat, only the first and second models remain possible. In both of these possibilities the third wise man’s hat is white. Therefore, the third wise man knows that his hat is white.
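The chain of deductions can be sketched as successive filtering of possible worlds. The sketch indexes the men by position (rear, middle, front) and assumes they answer from the rear of the line to the front; the function names are hypothetical.

```python
from itertools import product

REAR, MIDDLE, FRONT = 0, 1, 2

def possible_worlds():
    """All (rear, middle, front) hat assignments that use at most two black hats."""
    return [w for w in product("WB", repeat=3) if w.count("B") <= 2]

def knows_own_hat(world, worlds, me, visible):
    """A man knows his hat colour if every still-possible world that matches
    the hats he can see agrees on the colour of his own hat."""
    candidates = {w[me] for w in worlds
                  if all(w[i] == world[i] for i in visible)}
    return len(candidates) == 1

worlds = possible_worlds()
# The rear man sees the other two hats and says "I don't know".
worlds = [w for w in worlds
          if not knows_own_hat(w, worlds, REAR, (MIDDLE, FRONT))]
# The middle man sees only the front hat and also says "I don't know".
worlds = [w for w in worlds
          if not knows_own_hat(w, worlds, MIDDLE, (FRONT,))]

front_colours = {w[FRONT] for w in worlds}
print(worlds, front_colours)
```

After both “I don't know” answers, every remaining world gives the man at the front a white hat, so he can announce his colour without seeing any hat at all.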

The type of problem above can be generalised to any number (n) as long as there are n white hats and n-1 black hats. People often consider these problems to be hard. This is due to three main factors:

- The problems place a considerable load on working memory because a reasoner has to construct a model of one person's model of another person's model of the situation. This can get much harder with problems involving more participants as each participant would need to hold a model of the models of all previous participants. In the above example, the last wise man needs to know the model of the first wise man and use it to infer the model of the second wise man.

- These types of problems often can only be solved by constructing and retaining disjunctive sets of models. We have already covered how disjunctive models are a source of difficulty because of their load on working memory. In this example, the last wise man’s reasoning depends on the three models that the second wise man would have created due to the first wise man’s response.

- The recursive strategy required to solve this problem is not one that people are likely to use without prior training. This is because they have to reflect upon the problem and discover for themselves the information that is latent in each of the wise man’s answers.

The above should suffice as an overview of the principles behind the mental model theory and the limitations to human reasoning that they predict.

There are a few other assumptions and principles of mental models. (The assumptions have been taken from the Mental Models and Reasoning website.) Three of these have already been covered:

- The principle of truth (mental models represent only what is true)

- Focusing effect (people in general fail to make a thorough search for alternatives when making decisions)

- The greater the number of alternative models needed, the harder the problem is.

The other assumptions include:

- “Each mental model represents a possibility, and its structure and content capture what is common to the different ways in which the possibility might occur.” (Johnson-Laird, 1999, p. 116) For example, the premise “There is not a king in the hand, or else there is an ace in the hand” leads to the creation of two models, one for each possibility: (¬ king) and (ace).

- “Mental models are iconic insofar as that is possible, i.e., the structure of a model corresponds to the structure of what it represents” (Khemlani, Lotstein, & Johnson-Laird, 2014, p. 2). A visual image is iconic, but icons can also represent states of affairs that cannot be visualized, for example negation (Johnson-Laird, 2010). Although mental models are iconic, visual imagery is not the same as building a model. So, visual imagery is not a prerequisite for reasoning. In fact, it can be a burden: “If the content yields visual images that are irrelevant to an inference, as it does with visual relations, reasoning is impeded and reliably takes longer. The vivid details in the mental image interfere with thinking.” (Knauff & Johnson-Laird, 2002, p. 370) There are four sets of relations which lead to mental models (Knauff, Fangmeier, Ruff, & Johnson-Laird, 2003, p. 560):

- Visuospatial relations that are easy to envisage visually and spatially, such as “above” and “below”

- Visual relations that are easy to envisage visually but hard to envisage spatially, such as “cleaner” and “dirtier”

- Spatial relations that are difficult to envisage visually but easy to envisage spatially, such as “further north” and “further south”

- Control relations that are hard to envisage both visually and spatially, such as “better” and “worse”

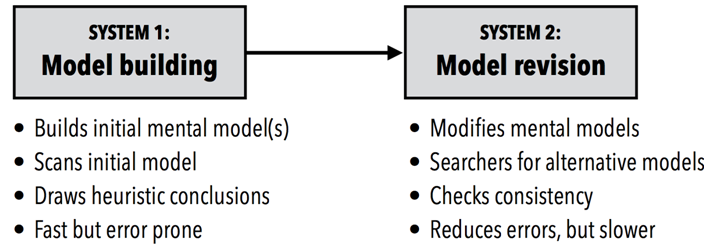

- The mental model theory gives a ‘dual process’ account of reasoning. The deliberative system (system 2) has access to working memory and so it can carry out recursive processes, such as a search for alternative models, and the assignment of numbers to intuitive probabilities. The intuitive system (system 1) cannot, as it does not have access to working memory and can only cope with one model at a time (Johnson-Laird, 2013, pp. 132-133).

- The mental model theory explains induction, deduction and abduction. Deductions are inferences that either maintain or throw away information. Inductions are inferences that increase information, and abduction is a special case of induction that also introduces new ideas to explain something.

- The meanings of terms such as ‘if’ and ‘or’ can be modulated by context and knowledge. There are a couple more principles that apply to conditionals; see Johnson-Laird & Byrne (2002). An important one is the principle of pragmatic modulation, which states that “the context of a conditional depends on general knowledge in long-term memory and knowledge of the specific circumstances of its utterance. This context is normally represented in explicit models. These models can modulate the core interpretation of a conditional, taking precedence over contradictory models. They can add information to models, prevent the construction of otherwise feasible models, and aid the process of constructing fully explicit models.” (Johnson-Laird & Byrne, 2002, p. 659) An example of this principle in action is that people will often use their geographical knowledge (Stockholm is a city in Sweden) to modulate the disjunction: Steve is in Stockholm or he is in Sweden. Unlike most other disjunctions, this one yields a definite conclusion: Steve is in Sweden.

We have previously been dealing with simple mental models. It is important to note that not all mental models are simple; in fact, they can be quite complicated. Below are some general features of mental models.

Potentially enduring

There is debate about whether mental models are located in working memory or long-term memory. A possibility is that humans reason by constructing mental models of situations, events and processes in working memory. These models are iconic, but leave open the question of the nature of the representation in long-term memory (Nersessian, 2002, p. 143). Describing mental models as potentially enduring is meant to capture the idea that while the minutiae or even large parts of a mental model may be altered, deleted or added, the overall mental model or gist can endure in memory in some form over years or decades. So, the mental model is vague, variable and hazy, but can be fleshed out using constructs in long-term memory and system 2 processes. “The mental model is fuzzy. It is incomplete. It is imprecisely stated. Furthermore, within one individual, a mental model changes with time and even during the flow of a single conversation.” (Forrester, 1961, p. 213)

Variable explicitness

Mental models are described as relatively accessible because “the nature of model manipulation can range from implicit to explicit” (Rouse & Morris, 1985, p. 21). Implicit mental models are mental model-like structures that lie outside of conscious awareness, whereas explicit mental models are the ones which we have full awareness of. Explicit models are also often the ones that consist of the full set of possibilities. Implicit models are normally generated with system 1 processes without awareness and then later fleshed out into explicit models using system 2 processes. So, implicit and explicit refer to the method of model manipulation rather than a static characteristic of the model. This definition of a mental model captures the essence of what an implicit mental model is: “Mental models are deeply ingrained assumptions, generalizations, or even pictures or images that influence how we understand the world and how we take action. Very often, we are not consciously aware of our mental models or the effects they have on our behaviour.” (Senge, 1990, p. 11)

Limited

Just as real systems vary in size and complexity, mental models do as well. However, due to underlying cognitive structures of the current human brain and expected use of mental models we can put general upper bounds and lower bounds on what we expect this variation to be. These bounds are driven by factors such as:

- The requirement for mental models to be pragmatic. This requirement leads to mental models being simplifications of the systems that they represent. If they were a 1:1 model, then they would not be useful as you could just simply interact with the system itself. This means that a mental model must, by necessity, be missing some information about the system.

- Bounded rationality. “The number of variables [people] can in fact properly relate to one another is very limited. The intuitive judgment of even a skilled investigator is quite unreliable in anticipating the dynamic behavior of a simple information-feedback system of perhaps five or six variables.” (Forrester, 1994, p. 60) A mental model needs to be small enough to be held in short-term memory, which is generally considered to have a capacity of fewer than 10 "chunks" of information (Shiffrin & Nosofsky, 1994, p. 360). This limit is flexible in the sense that the amount of information that can be organized meaningfully into a chunk can increase with experience and expertise. A reasonable lower bound is two variables and two causal relationships. Anything less than this, e.g. a single causation such as “If X increases, Y increases”, should be called an assumption or belief, depending on confidence level, rather than a model.

- People are generally poor in developing mental models that handle unintuitive concepts. “People generally adopt an event-based, ‘open-loop’ view of causality, ignore feedback processes, fail to appreciate time delays between action and response and in the reporting of information, do not understand stocks and flows, and are insensitive to nonlinearities which may alter the strengths of different feedback loops as a system evolves. ” (Paich & Sterman, 1993, p. 3)

The term limited is not meant to imply that mental models can’t be complex or have significant effects on our reasoning and behaviour.

Mental models can be simple generalizations such as "people are untrustworthy," or they can be complex theories, such as my assumptions about why members of my family interact as they do. But what is most important to grasp is that mental models are active— they shape how we act. If we believe people are untrustworthy, we act differently from the way we would if we believed they were trustworthy.[...] Two people with different mental models can observe the same event and describe it differently, because they've looked at different details.(Senge, 1990, p. 160)

They determine how effectively we interact with the systems they model

It has been purported that faulty mental models were one of the main factors behind the delay in evacuating the inhabitants of the town near Chernobyl after the explosion (Johnson-Laird, 1994, pp. 199-200). The engineers in charge at Chernobyl inferred initially that the explosion had not destroyed the reactor. Such an event was unthinkable from their previous experience, and they had no evidence to suppose that it had occurred. The following problems with how people use their mental models have been found (Norman, 1983, pp. 8-11):

- People have limited abilities to “run” their mental models. They have limited mental operations that they can complete. Therefore, in general the harder something is to use the less it will be used if there are easier alternatives available.

- Mental models are unstable. People forget the details of the system. This happens frequently when those details or the whole system has not been used for some time. Mental models are also dynamic. They are always being updated.

- Mental models do not have firm boundaries: similar devices and operations can get confused with each other.

- Mental models are “unscientific” in the sense that people often maintain superstitions. That is, they continue behaviour patterns that are not needed because these cost little physical effort but save mental effort or provide comfort. The reason for this is that people are often uncertain about the mechanism, but have experience with actions and outcomes. For example, when using a calculator people may press the clear button more than is necessary because in the past failing to clear has resulted in problems.

- Mental models are parsimonious: often people do extra physical operations rather than the mental planning that would allow them to avoid those actions; they are willing to trade off extra physical actions for reduced mental complexity. This is especially true where the extra actions allow for one simplified rule to be applied across multiple systems, thus minimizing the chances for confusion.

Internal

The term mental model is meant to make mental models distinct from conceptual models. Mental models are cognitive phenomena that exist only in the mind. Conceptual models are representations or tools used to describe systems.

When considering mental models four different components need to be understood (Norman, 1983, pp. 7-8) :

- Target system - the system that the person is learning or using

- Conceptual model of the target system - is invented to provide an appropriate representation of the target system. Appropriate in this sense means accurate, consistent and complete. Conceptual models are invented by teachers, designers, scientists and engineers. An example would be a design document which users would use to update their mental models so that they are accurate. Conceptual models are tools devised for the purpose of understanding or teaching systems. Mental models are what people actually have in their heads and what guides them in their interaction with the target system.

- User’s mental model of the target system - mental models are naturally evolving and will often be updated after interactions with the system. That is, as you interact with the system you learn about how it works. The mental model may not be necessarily technically accurate and at the end of the day it often cannot be because a model is only useful because it is a simplification of the system it represents. Mental models are constrained or built using information from the user’s technical background, previous experience with similar systems and the structure of the human information processing system.

- Scientist’s conceptualization of a mental model - is a model of the supposed mental model

It can be difficult to explain what conceptualising another person’s mental model involves and how to do it well. The following symbols will be used:

- t - represents the target system

- C(t) - represents the conceptual model of the target system

- M(t) - represents the user’s mental model of the target system

- C(M(t)) - represents our conceptualization of the user’s mental model, that is, the mental model that we think the user might have.

Three functional factors that apply to M(t) and C(M(t)) are (Norman, 1983, p. 12):

- Belief System: A person’s mental model reflects his or her beliefs about the physical system acquired either through observation, instruction or inference. C(M(t)) should contain the relevant parts of a person’s belief system. This means that C(M(t)) should correspond with how the person believes t to be, which may not necessarily be how it actually is.

- Observability: C(M(t)) should correspond with the parameters and states of t that the person’s M(t) can observe or infer. If a person presumably does not know about some aspect of t, then it should not be in M(t).

- Predictive Power: the purpose of a mental model is to allow the person to understand and to anticipate the behaviour of a physical system. It is for this reason that we can say that mental models have predictive power. People can “run” their models mentally. Therefore, C(M(t)) should also include the relevant information processing and knowledge structures that make it possible for the person to use M(t) to predict and understand t. Prediction is one of the major aspects of one’s mental models and this must be captured in any description of them.

References

- Doyle, J., & Ford, D. (1998). Mental Models Concepts for System Dynamics Research.

- Forrester, J. (1961). Industrial Dynamics. New York: Wiley.

- Forrester, J. (1994). Policies, decisions, and information sources for modeling. In J. Morecroft, & J. Sterman, Modeling for Learning. Portland: Productivity Press.

- Goldvarg, Y., & Johnson-Laird, P. (2000). Illusions in modal reasoning. Memory & Cognition, 282-294.

- Johnson-Laird, P. (2010). Mental models and human reasoning. PNAS.

- Johnson-Laird, P. (1994). Mental models and probabilistic thinking. Cognition, 189-209.

- Johnson-Laird, P. (1999). Deductive Reasoning. Annual Reviews, 109-135.

- Johnson-Laird, P. (2013). Mental models and cognitive change. Cognitive Psychology, 131-138.

- Johnson-Laird, P., & Bell, V. (1998). A Model Theory of Modal Reasoning. Cognitive Science, 25-51.

- Johnson-Laird, P., & Byrne, R. (2002). Conditionals: A Theory of Meaning, Pragmatics, and Inference. Psychological Review, 646-678.

- Johnson-Laird, P., & Savary, F. (1996). Illusory inferences about probabilities. Acta Psychologica, 69-90.

- Johnson-Laird, P., Girotto, V., & Legrenzi, P. (2005, 3 18). Retrieved from http://www.si.umich.edu/ICOS/gentleintro.html (this link no longer works; an alternative is http://musicweb.ucsd.edu/~sdubnov/Mu206/MentalModels.pdf)

- Khemlani, S., Lotstein, M., & Johnson-Laird, P. (2014). A mental model theory of set membership. Washington: US Naval Research Laboratory.

- Knauff, M., & Johnson-Laird, P. (2002). Visual imagery can impede reasoning. Memory & Cognition, 363-371.

- Knauff, M., Fangmeier, T., Ruff, C., & Johnson-Laird, P. (2003). Reasoning, Models, and Images: Behavioral Measures and Cortical Activity. Cognitive Neuroscience, 559–573.

- Legrenzi, P., Girotto, V., & Johnson-Laird, P. (1993). Focussing in reasoning and decision making. Cognition, 37-66.

- Nersessian, N. (2002). The cognitive basis of model-based reasoning in science. In P. Carruthers, S. Stich, & M. Siegal, The Cognitive Basis of Science (pp. 133-153). Cambridge University Press.

- Norman, D. (1983). Mental Models. In D. Gentner, & A. Stevens, Mental Models (pp. 7-14). LEA.

- Paich, M., & Sterman, J. (1993). Market Growth, Collapse and Failures to Learn from Interactive Simulation Games. System Dynamics, 1439-1458.

- Rouse, W., & Morris, N. (1985). On looking into the black box: Prospects and limits in the search for mental models. Norcross.

- Schaeken, W., Johnson-Laird, P., & d'Ydewalle, G. (1994). Mental models and temporal reasoning. Cognition, 205-334.

- Senge, P. (1990). The Fifth Discipline: The Art and Practice of the Learning Organization. New York: Doubleday.

- Shafir, E., & Tversky, A. (1992). Thinking through uncertainty: nonconsequential reasoning and choice. Cognitive Psychology, 449-474.

- Shiffrin, R., & Nosofsky, R. (1994). Seven Plus or Minus Two: A Commentary on Capacity Limitations. Psychological Review, 357-361.

- Yang, Y., & Johnson-Laird, P. (2000). Illusions in quantified reasoning: How to make the impossible seem possible, and vice versa. Memory & Cognition, 452-465.

26 comments

comment by alicey · 2015-08-17T15:39:16.957Z · LW(p) · GW(p)

Reading this was a bit annoying:

Only one statement about a hand of cards is true:

There is a King or Ace or both.

There is a Queen or Ace or both.

Which is more likely, King or Ace?

... The majority of people respond that the Ace is more likely to occur, but this is logically incorrect.

It is just communicating badly (https://xkcd.com/169/). In a common parse, Ace is more likely to occur. It would be more likely to be parsed as you intended if you had said

Only one of the following premises is true about a particular hand of cards:

(like you did on the next question!)

Replies from: Tyrrell_McAllister, tom_cr, ScottL

↑ comment by Tyrrell_McAllister · 2015-08-17T16:42:47.341Z · LW(p) · GW(p)

I'd guess that getting this question "correct" almost requires having been trained to parse the problem in a certain formal way — namely, purely in terms of propositional logic.

Otherwise, a perfectly reasonable parsing of the problem would be equivalent to the following:

Before you stands a card-dealing robot, which has just been programmed to deal a hand of cards. Exactly one of the following statements is true of the robot's hand-dealing algorithm:

- The algorithm chooses from among only those hands that contain either a king or an ace (or both).

- The algorithm chooses from among only those hands that contain either a queen or an ace (or both).

The robot now deals a hand. Which is more probable: the hand contains a King or the hand contains an Ace?

On this reading, Ace is most probable.

Indeed, this "algorithmic" reading seems like the more natural one if you're used to trying to model the world as running according to some algorithm — that is, if, for you, "learning about the world" means "learning more about the algorithm that runs the world".

The propositional-logic reading (the one endorsed by the OP) might be more natural if, for you, "learning about the world" means "learning more about the complicated conjunction-and-disjunction of propositions that precisely carves out the actual world from among the possible worlds."

Replies from: None, ScottL

↑ comment by [deleted] · 2015-08-20T02:54:07.663Z · LW(p) · GW(p)

I'd guess that getting this question "correct" almost requires having been trained to parse the problem in a certain formal way — namely, purely in terms of propositional logic.

Indeed.

The propositional-logic reading (the one endorsed by the OP) might be more natural if, for you, "learning about the world" means "learning more about the complicated conjunction-and-disjunction of propositions that precisely carves out the actual world from among the possible worlds."

In fact, when I got to this part, I actually skipped the rest of the article, thinking, "What sort of halfway competent cognitive scientist actually proposes that we represent the world using first-order symbolic logic? Where's the statistical content?"

Hopefully I'm not being too harsh there, but I think we know enough about learning in the abstract and its relation to actually-existing human cognition to ditch purely formal theories in favor of expecting that any good theory of cognition should be able to show statistical behavior.

Replies from: ScottL

↑ comment by ScottL · 2015-08-20T08:14:40.246Z · LW(p) · GW(p)

The mental model theory was initially created to describe the comprehension of discourse and deductive reasoning, but it can also describe naive probabilistic reasoning. I might write something about this later, but it will probably be a while. I think that I should read this book first if I do. I didn't go into any of the details of this in the above post. There are a few other principles and it gets a bit more complicated. You can see this paper if you are interested.

A mental model is defined as a representation of a possibility that has a structure and content that captures what is common to the different ways in which the possibility might occur. It is a theory about how people naively reason, not necessarily how they have been trained to reason, which will probably be more explicit.

Here is an example:

According to a population screening, 4 out of 10 people have the disease, 3 out of 4 people with the disease have the symptom, and 2 out of the 6 people without the disease have the symptom. A person selected at random has the symptom. What's the probability that this person has the disease?

The model theory allows that probabilities can be represented in models and that the assertion can be represented by either a model of equiprobable possibilities or a model with numerical tags on the possibilities. These tags might be frequencies or probabilities. This means that people might construct the following models.

or an equivalent one if they are thinking in probabilities is
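Whatever form the models take, the arithmetic they need to support can be worked out from the problem statement alone. The sketch below is only an illustration: it takes the ten-person population literally, checks the frequency count against the same calculation done with probabilities, and uses variable names that are not from the literature.

```python
from fractions import Fraction

# Frequencies taken directly from the problem statement (10 people in total).
diseased_with_symptom = 3   # 3 out of the 4 people with the disease
healthy_with_symptom = 2    # 2 out of the 6 people without the disease

# Condition on "has the symptom": only these people remain possible.
people_with_symptom = diseased_with_symptom + healthy_with_symptom
p_from_frequencies = Fraction(diseased_with_symptom, people_with_symptom)

# The same calculation with probabilities (Bayes' rule).
p_disease = Fraction(4, 10)
p_symptom_given_disease = Fraction(3, 4)
p_symptom_given_healthy = Fraction(2, 6)
p_symptom = (p_disease * p_symptom_given_disease
             + (1 - p_disease) * p_symptom_given_healthy)
p_from_probabilities = p_disease * p_symptom_given_disease / p_symptom

print(p_from_frequencies, p_from_probabilities)  # 3/5 3/5
```

Both routes give 3/5; the frequency version only needs two counts, which is one way to see why a model tagged with frequencies might be the easier one to reason with.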

↑ comment by ScottL · 2015-08-18T02:43:40.574Z · LW(p) · GW(p)

I'd guess that getting this question "correct" almost requires having been trained to parse the problem in a certain formal way — namely, purely in terms of propositional logic.

To get the question correct you just need to consider the falsity of the premises. You don't necessarily have to parse the problem in a formal way, although that would help.

On this reading, Ace is most probable.

Ace is not more probable. It is impossible to have an ace in the dealt hand due to the requirement that only one of the premises is true. The basic idea is that one of the premises must be false, which means that an ace is impossible. It is impossible because if an ace is in the dealt hand, then both premises are true, which violates the requirement (Exactly one of these statements is true). I have explained this further in this post
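The falsity argument can be checked by brute force. The following is a minimal sketch, assuming a hand is summarised only by whether it contains a king, a queen and an ace; it collects every combination in which exactly one of the two premises holds.

```python
from itertools import product

consistent_hands = []
for king, queen, ace in product([False, True], repeat=3):
    premise_1 = king or ace    # "There is a King or Ace or both."
    premise_2 = queen or ace   # "There is a Queen or Ace or both."
    # Exactly one premise is true, so the two truth values must differ.
    if premise_1 != premise_2:
        consistent_hands.append({"king": king, "queen": queen, "ace": ace})

for hand in consistent_hands:
    print(hand)

ace_possible = any(hand["ace"] for hand in consistent_hands)
king_possible = any(hand["king"] for hand in consistent_hands)
print(ace_possible, king_possible)  # False True
```

Only two combinations survive: a queen without king or ace, and a king without queen or ace. Under this reading the king is possible and the ace is not.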

Replies from: Kaj_Sotala, Tyrrell_McAllister

↑ comment by Kaj_Sotala · 2015-08-18T04:03:39.802Z · LW(p) · GW(p)

I think the problem here is that you're talking to people who have been trained to think in terms of probabilities and probability trees, and furthermore, asking "what is more likely" automatically primes people to think in terms of a probability tree.

The way I originally thought about this was:

- Suppose premise 1 is true. Then two possible combinations out of three might contain a king, so 2/3 probability for a king, and since I guess we're supposed to assume that premise 1 has a 50% probability, then that means a king has a 2/6 = 1/3 probability overall. By the same logic, ace has a 2/3 probability in this branch, for a 1/3 probability overall.

- Now suppose that premise 2 is true. By the same logic as above, this branch contributes an additional 1/3 to the ace's probability mass. But this branch has no king, so the king acquires no probability mass.

- Thus the chance of an ace is 2/3 and the chance of a king is 1/3.
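The tree above can be written out explicitly. This is a sketch of the arithmetic only, under the two assumptions already stated: each premise gets probability 1/2, and the three combinations that a premise allows are treated as equally likely. The names are illustrative.

```python
from fractions import Fraction

p_branch = Fraction(1, 2)  # assumed probability of each premise being the true one

# Combinations allowed by each premise, as sets of cards present.
branch_1 = [{"king"}, {"ace"}, {"king", "ace"}]    # premise 1 is true
branch_2 = [{"queen"}, {"ace"}, {"queen", "ace"}]  # premise 2 is true

def chance_of(card):
    """Sum, over both branches, the probability mass the card receives."""
    total = Fraction(0)
    for branch in (branch_1, branch_2):
        hits = sum(1 for combo in branch if card in combo)
        total += p_branch * Fraction(hits, len(branch))
    return total

print(chance_of("king"), chance_of("ace"))  # 1/3 2/3
```

Neither branch removes the combinations that would also make the other premise true (any combination containing an ace), which is exactly where this reading departs from the propositional one.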

In other words, I interpreted the "only one of the following premises is true" as "each of these two premises has a 50% probability", to a large extent because the question of likeliness primed me to think in terms of probability trees, not logical possibilities.

Arguably, more careful thought would have suggested that possibly I shouldn't think of this as a probability tree, since you never specified the relative probabilities of the premises, and giving them some relative probability was necessary for building the probability tree. On the other hand, in informal probability puzzles, it's often common to assume that if we're picking one option out of a set of N options, then each option has a probability of 1/N unless otherwise stated. Thus, this wording is ambiguous.

In one sense, me interpreting the problem in these terms could be taken to support the claims of model theory - after all, I was focusing on only one possible model at a time, and failed to properly consider their conjunction. But on the other hand, it's also known that people tend to interpret things in the framework they've been taught to interpret them, and to use the context to guide their choice of the appropriate framework in the case of ambiguous wording. Here the context was the use of the word "likely", guiding the choice towards the probability tree framework. So I would claim that this example alone isn't sufficient to distinguish between whether a person reading it gives the incorrect answer because of the predictions of model theory alone, or because the person misinterpreted the intent of the wording.

Replies from: ScottL

↑ comment by ScottL · 2015-08-18T06:01:03.469Z · LW(p) · GW(p)

I updated the first example to one that is similar to the one above by Tyrrell_McAllister. Can you please let me know if it solves the issues you had with the original example?

Replies from: Kaj_Sotala

↑ comment by Kaj_Sotala · 2015-08-19T14:48:53.238Z · LW(p) · GW(p)

That does look better! Though since I can't look at it with fresh eyes, I can't say how I'd interpret it if I were to see it for the first time now.

↑ comment by Tyrrell_McAllister · 2015-08-18T23:49:49.159Z · LW(p) · GW(p)

Ace is not more probable.

Ace is more probable in the scenario that I described.

Of course, as you say, Ace is impossible in the scenario that you described (under its intended reading). The scenario that I described is a different one, one in which Ace is most probable. Nonetheless, I expect that someone not trained to do otherwise would likely misinterpret your original scenario as equivalent to mine. Thus, their wrong answer would, in that sense, be the right answer to the wrong question.

Replies from: ScottL

↑ comment by ScottL · 2015-08-19T01:49:42.722Z · LW(p) · GW(p)

I'm sorry, I am not really understanding your point. I have read your scenario multiple times and I see that the ace is impossible in it. Can you do me a favour and read this post and then let me know if you still believe that the ace is not impossible?

Of course, as you say, Ace is impossible in the scenario that you described (under its intended reading). The scenario that I described is a different one, one in which Ace is most probable.

I don't see any difference between your scenario and the one I had originally. The ace is impossible in your scenario as well because it is in both statements and you have the requirement that "Exactly one of the following statements is true" which means that the other must be false. If ace was in the hand, then both statements would be true, which cannot be the case as exactly one of the statements can be true, not both.

Also, I rewrote the first example in the post so that it is similar to yours.

Replies from: Tyrrell_McAllister

↑ comment by Tyrrell_McAllister · 2015-08-19T03:16:40.901Z · LW(p) · GW(p)

Last I checked, your edits haven't changed which answer is correct in your scenario. As you've explained, the Ace is impossible given your set-up.

(By the way, I thought that the earliest version of your wording was perfectly adequate, provided that the reader was accustomed to puzzles given in a "propositional" form. Otherwise, I expect, the reader will naturally assume something like the "algorithmic" scenario that I've been describing.)

In my scenario, the information given is not about which propositions are true about the outcome, but rather about which algorithms are controlling the outcome.

To highlight the difference, let me flesh out my story.

Let K be the set of card-hands that contain at least one King, let A be the set of card-hands that contain at least one Ace, and let Q be the set of card-hands that contain at least one Queen.

I'm programming the card-dealing robot. I've prepared two different algorithms, either of which could be used by the robot:

Algorithm 1: Choose a hand uniformly at random from K ∪ A, and then deal that hand.

Algorithm 2: Choose a hand uniformly at random from Q ∪ A, and then deal that hand.

These are two different algorithms. If the robot is programmed with one of them, it cannot be programmed with the other. That is, the algorithms are mutually exclusive. Moreover, I am going to use one or the other of them. These two algorithms exhaust all of the possibilities.

In other words, of the two algorithm-descriptions above, exactly one of them will truthfully describe the robot's actual algorithm.

I flip a coin to determine which algorithm will control the robot. After the coin flip, I program the robot accordingly, supply it with cards, and bring you to the table with the robot.

You know all of the above.

Now the robot deals you a hand, face down. Based on what you know, which is more probable: that the hand contains a King, or that the hand contains an Ace?
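A toy version of this setup can be computed exactly. The sketch assumes a drastically simplified world in which a "hand" is just one of the eight combinations of king, queen and ace being present or absent; that simplification, and every name in the code, is an assumption made for illustration and not part of the scenario above.

```python
from fractions import Fraction
from itertools import product

# Every toy "hand": which of king, queen, ace it contains.
all_hands = [dict(zip(("king", "queen", "ace"), flags))
             for flags in product([False, True], repeat=3)]

# Algorithm 1 draws uniformly from K ∪ A, Algorithm 2 from Q ∪ A.
pool_1 = [h for h in all_hands if h["king"] or h["ace"]]
pool_2 = [h for h in all_hands if h["queen"] or h["ace"]]

def chance_of(card):
    """Probability that the dealt hand contains the card, given a fair coin flip."""
    total = Fraction(0)
    for pool in (pool_1, pool_2):
        hits = sum(1 for h in pool if h[card])
        total += Fraction(1, 2) * Fraction(hits, len(pool))
    return total

print(chance_of("king"), chance_of("ace"))  # 7/12 2/3
```

In this toy world the ace comes out ahead, because both algorithms can deal it, while only some of the hands available to Algorithm 2 happen to contain a king.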

Replies from: ScottL

↑ comment by tom_cr · 2015-10-02T21:08:08.487Z · LW(p) · GW(p)

I think that the communication goals of the OP were not to tell us something about a hand of cards, but rather to demonstrate that certain forms of misunderstanding are common, and that this maybe tells us something about the way our brains work.

The problem quoted unambiguously precludes the possibility of an ace, yet many of us seem to incorrectly assume that the statement is equivalent to something like, 'One of the following describes the criterion used to select a hand of cards.....,' under which, an ace is likely. The interesting question is, why?

In order to see the question as interesting, though, I first have to see the effect as real.

↑ comment by ScottL · 2015-08-18T01:53:20.267Z · LW(p) · GW(p)

Thanks for the advice. It was a mistake. I have updated it to: "Only one of the following premises is true about a particular hand of cards".

Replies from: selylindi

↑ comment by selylindi · 2015-09-01T19:28:44.689Z · LW(p) · GW(p)

FWIW, I still got the question wrong with the new wording because I interpreted it as "One ... is true [and the other is unknown]" whereas the intended interpretation was "One ... is true [and the other is false]".

In one sense this is a communication failure, because people normally mean the first and not the second. On the other hand, the fact that people normally mean the first proves the point - we usually prefer not to reason based on false statements.

comment by Kaj_Sotala · 2015-08-18T03:30:37.903Z · LW(p) · GW(p)

Great article! A concrete demonstration of some of the ways we reason, and how that might lead to various fallacies.

It might have been better to make it into a series of shorter posts - as it was, I initially had the same misreading about the "King or Ace" question as alicey did (even after the rewrite) and stopped to spend considerable time thinking about it and your claim that King was more likely, after which I had enough mental fatigue that I didn't feel like going through the rest of the examples anymore. But I intend to return to this article later to finish reading it.

comment by mavant · 2015-08-23T18:51:41.358Z · LW(p) · GW(p)

The Ace is in both statements and both statements cannot be true as per the requirement.

No.

```haskell
deal :: IO CardHand
deal = do
  x <- randomBoolean
  if x
    then generateHandsContainingEitherOrBothOf (King, Ace)
    else generateHandsContainingEitherOrBothOf (Queen, Ace)
```

Asking a trick question and then insisting on a particular reading does not constitute evidence of a logical fallacy being committed by the answerer.

Replies from: ScottL

↑ comment by ScottL · 2015-08-23T23:09:38.789Z · LW(p) · GW(p)

It's not a trick question. It's pretty much the same as the example used in the literature and then I have a few other examples that are straight from the literature. The literature on mental models is mainly on deductive reasoning. That is why the question is in the format it is. I have rephrased it to try to make it more clear that it is not about which algorithm is correct.

Note that the robot is a black box. That is, you don't know anything about how it works, for example the algorithm it uses. You do, however, have two statements about what the dealt hand could be. These two statements are from two different designers of the robot. The problem is that you know that one of the designers lied to you and the other designer told the truth. This means that you know that only one of the following statements about the dealt hand is true.

Can you please let me know if you think this helps? Also, did you have the same problem with the second problem?

The thing is that the problem requires a particular reading because a different reading makes it a totally different problem. Under your reading the question really is:

The dealt hand will contain cards from only one of the following sets of cards:

- K, A, K and A

- Q, A, Q and A

Obviously, that's a totally different problem. If you have any suggestions on how to improve the question, let me know.

Replies from: mavant, Jiro

↑ comment by mavant · 2015-08-24T01:22:24.839Z · LW(p) · GW(p)

The fact that it's the same phrasing used in the literature is really concerning, because it means the interpretation the literature gives is wrong: Many subjects may in fact be generating a mental model (based on deductive reasoning, no less!) which is entirely compatible with the problem-as-stated and yet which produces a different answer than the one the researchers expected.

One could certainly write '(Ace is present OR King is present) XOR (Queen is present OR Ace is present)' which trivially reduces to '(King is present XOR Queen is present) AND (Ace is not present)', but that gives the game away a bit - as perhaps it should! The fact that phrasing the knowledge formally rather than in ad-hoc English makes the correct answer so much more obvious is a strong indicator that this is a deficiency in the original researchers' grasp of idiomatic English, not in their research subjects' grasp of logic.
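A reduction like this is easy to check mechanically. The sketch below compares the XOR statement against two candidate simplifications over all eight truth assignments of (king, queen, ace); the function names are illustrative.

```python
from itertools import product

def exactly_one_statement(king, queen, ace):
    # '(Ace is present OR King is present) XOR (Queen is present OR Ace is present)'
    return (ace or king) != (queen or ace)

def inclusive_reduction(king, queen, ace):
    # '(King is present OR Queen is present) AND (Ace is not present)'
    return (king or queen) and not ace

def exclusive_reduction(king, queen, ace):
    # '(King is present XOR Queen is present) AND (Ace is not present)'
    return (king != queen) and not ace

rows = list(product([False, True], repeat=3))
inclusive_mismatches = [r for r in rows
                        if exactly_one_statement(*r) != inclusive_reduction(*r)]
exclusive_mismatches = [r for r in rows
                        if exactly_one_statement(*r) != exclusive_reduction(*r)]

print(inclusive_mismatches)  # [(True, True, False)]
print(exclusive_mismatches)  # []
```

Only the exclusive form matches on every row; the inclusive form differs in the single case where a king and a queen are both present and the ace is absent. Either way, the ace is ruled out.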

It's difficult for me to look at the problem with fresh eyes, so I can't be entirely certain whether the added 'black box' note helps. It doesn't look helpful.

What would be really useful would be a physical situation in which the propositional-logic reading of the statements is the only correct interpretation. There is luckily a common silly-logic-puzzle trope which evokes this:

The dealer-robot has two heads, one of which always lies and one of which always tells the truth. You don't know which is which. After dealing the hand, but before showing it to you, the robot dealer takes a peek.

One of the robot's heads has told you that the dealt hand contains either a king or an ace (or both).

The robot's other head has told you that the dealt hand contains either a queen or an ace (or both).

comment by kpreid · 2015-08-23T01:37:32.817Z · LW(p) · GW(p)

I appreciate this article for introducing research I was not previously aware of.

However, as other commenters did, I find myself bothered by the way the examples assume one uses exactly one particular approach to thinking — but in a different aspect. Specifically, I made the effort to work through the example problems myself, and

To solve this second problem you need to use multiple models.

is false. I only need one model, which leaves some facts unspecified. I reasoned as follows:

- What I need to know is the relation between “police” and “reporter”.

- Everything we know about “police” is that it is simultaneous with “alarm”.

- Everything we know about “reporter” is that it is simultaneous with “stabbed”.

- What do we know about the two newly mentioned events? That “alarm” is before “stabbed”.

- Therefore “police” is before “reporter” (or, if we do not check further, the premises could be inconsistent).
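The steps above can be sketched as a small lookup. This is a deliberately minimal illustration, assuming only the three facts listed; the event labels and helper names are shorthand, and the grouping step does not handle chains of simultaneity or chains of "before" facts.

```python
# Facts as stated in the list above (event names are shorthand labels).
simultaneous = [("police", "alarm"), ("reporter", "stabbed")]
before = [("alarm", "stabbed")]

# Map each event to a representative of its group of simultaneous events.
group = {}
for a, b in simultaneous:
    rep = group.get(a, group.get(b, a))
    group[a] = rep
    group[b] = rep

def moment(event):
    """Return the representative moment for an event (itself if ungrouped)."""
    return group.get(event, event)

def relation(x, y):
    """Return 'before', 'after' or 'simultaneous', or None if no stated fact settles it."""
    if moment(x) == moment(y):
        return "simultaneous"
    for earlier, later in before:
        if (moment(earlier), moment(later)) == (moment(x), moment(y)):
            return "before"
        if (moment(earlier), moment(later)) == (moment(y), moment(x)):
            return "after"
    return None

print(relation("police", "reporter"))  # before
```

The lookup only ever touches the facts that mention the two events in question, and it returns None rather than a guess when the stated facts do not connect them.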

This is building up exactly as much model as we need to reach the conclusion.

I will claim that this is a more realistic mode of reasoning — that is, more applicable to real-world problems — than the one you assume, because it does not assume that all of the information available is relevant, or that there even is a well-defined boundary of “all of the information”.

Replies from: ScottL

↑ comment by ScottL · 2015-08-23T03:21:22.307Z · LW(p) · GW(p)

I find myself bothered by the way the examples assume one uses exactly one particular approach to thinking — but in a different aspect.

I am not sure how to fix this. Plus, the examples (except the first) are all from the literature on mental models.

To solve this second problem you need to use multiple models.

is false. I only need one model, which leaves some facts unspecified.

I changed the post. I shouldn't have used the word "solved". I meant that you need to generate all of the models if you are going to ensure that the model with the conclusion is valid or, as you say, not 'inconsistent'. So, you not only have to reach the conclusion. You also need to check if it's valid. That's why you go through all three models. In the last example the police arrived before the reporter in one model and the reporter arrived after the police in another of the models. Therefore, the example is invalid.

Replies from: kpreid

↑ comment by kpreid · 2015-08-25T02:52:03.783Z · LW(p) · GW(p)

Plus, the examples (except the first) are all from the literature on mental models.

Then my criticism is of the literature, not your post.

I meant that you need to generate all of the models if you are going to ensure that the model with the conclusion is valid or as you say not 'inconsistent'. So, you not only have [to] reach the conclusion. You need to also check if it's valid.

Reality is never inconsistent (in that sense). Therefore, I only need to check to guard against errors in my reasoning or in the information I have given; neither of these is necessary.

That's why you go through all three models. In the last example the police arrived before the reporter in one model and the reporter arrived after the police in another of the models. Therefore, the example is invalid.

In the last example, the type of reasoning I described above would find no answer, not multiple ones.

(And, to clarify my terminology, the last example is not an instance of "the premises are inconsistent"; rather, there is insufficient information.)