The Leapfrogging Terminus and the Fuzzy Cut

post by Jim Pivarski (jim-pivarski) · 2025-03-31T04:08:24.023Z · 6 comments


I've been thinking about some maybe-undecidable philosophical questions, and it occurred to me that they fall into some neat categories. These questions are maybe-undecidable because of the absolute claims they want to make, while experimental measurements can never be certain, or the terms of the claim are hard to formulate as a physical experiment. Nevertheless, people have opinions on them because they're foundational to our view of the universe: it's hard to not have opinions on them. Even defaulting to a null hypothesis that could, in principle, be overturned is privileging one view over another.

I'm calling the first category the "Leapfrogging Terminus" because it has to do with absolute beginnings, extents, or building blocks, and they may or may not be the true end-of-the-line. The second category is the "Fuzzy Cut" because we have irreconcilable models of the world on the ends of a linear spectrum, and something must happen in the middle. There are likely other categories, but I'm writing these down because they form a pattern and I like patterns.

The Leapfrogging Terminus

Beginning of time

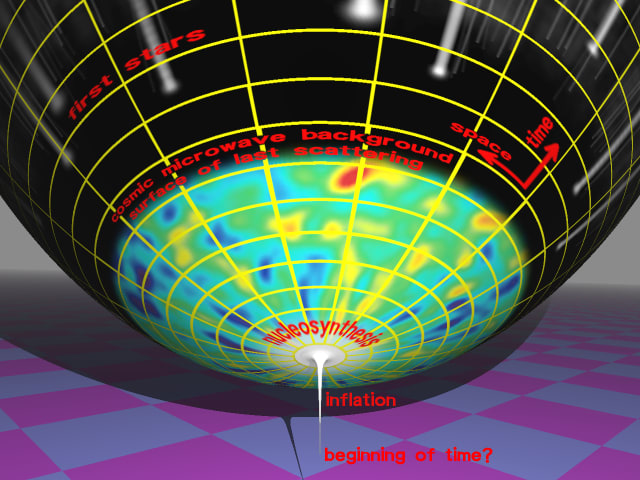

The classic example of a Leapfrogging Terminus is the question of whether time has a beginning. Contrary to a widespread belief, physical cosmology doesn't settle this question. Arguments about the Big Bang and the Steady State Universe were both physical and philosophical. The physical Big Bang theory only holds that the present phase of the universe began 13.7 billion years ago, a phase that consists of the overall scale of space expanding as a function of time, subject to the gravitational pull of its contents: radiation, ordinary matter, dark matter, and dark energy. There's plenty of physical evidence to support tracing this expansion backward, and if you extrapolate far enough, there is a point in time when the energy density was infinite and the scale factor was zero.

But nothing's ever provably zero in experimental science. Even the best limits on the mass of the photon are finite: $m_\gamma < 10^{-18}$ eV, though most physicists simply assume it's zero. To extrapolate the universe's scale factor to zero, you have to assume that the Standard Model is valid at all energy scales, far above the scales that have actually been tested in colliders, and there are reasons to doubt that. Even apart from particle physics, cosmologists have reasons to believe there was an inflationary phase at very early times, in which the scale factor grew exponentially with time, as $a(t) \propto e^{Ht}$, for at least 60 "e-folds," a growth factor of $e^{60}$. An exponential curve, $e^{Ht}$, doesn't cross $a = 0$ anywhere, so the inflationary phase might not have had any beginning at all. The current state of physical cosmology is not just in-principle undecided about whether time had a beginning, the way the masslessness of the photon is in-principle undecidable. It's a wide-open area of active research.
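The difference between the two extrapolations can be checked with a few lines of arithmetic. This is a minimal sketch, assuming two idealized toy models in arbitrary units (the function names and the choice $H = 1$ are illustrative, not a real cosmological fit): a radiation-dominated scale factor $a(t) \propto t^{1/2}$, which reaches zero at a finite time, and an inflationary $a(t) = a_0 e^{Ht}$, which stays positive however far back you go.

```python
import math

# Toy models only: units are arbitrary and H = 1 is an illustrative choice.

def a_radiation(t: float) -> float:
    """Radiation-dominated scale factor, a(t) proportional to t**0.5, for t >= 0."""
    return math.sqrt(t)

def a_inflation(t: float, a0: float = 1.0, hubble: float = 1.0) -> float:
    """Inflationary scale factor, a(t) = a0 * exp(H * t), defined for every t."""
    return a0 * math.exp(hubble * t)

# The power law reaches a = 0 at a finite time, t = 0.
print(a_radiation(0.0))  # 0.0

# The exponential keeps shrinking as t goes further back, but never reaches zero.
# (Stay above t of about -745, where float64 exp underflows to 0.0: a rounding artifact.)
for t in (-10.0, -100.0, -700.0):
    print(t, a_inflation(t))

# Growth over 60 e-folds is a factor of e**60.
print(f"{math.exp(60):.2e}")  # 1.14e+26
```

The power law has a definite "zero point" to extrapolate to; the exponential only has "smaller."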

And yet, people have opinions on whether time had a beginning. Judeo-Christian religions have traditionally considered it important that time had a beginning, for a single cause (God) to have created it, while the Dharmic religions describe very old (more than 13.7 billion years!), infinitely old, or cyclic universes. The physics community mostly favored an eternal universe before Friedmann, Lemaître, Robertson, and Walker introduced what is now the Big Bang theory, and it was a point against Georges Lemaître that he was a priest, "reintroducing religion into science."

But even beyond religious cosmologies and arguments among physicists, a lot of people just can't imagine a world without a beginning, while others can't imagine a time without something before it. Tell me if the following sounds familiar:

"At some point, if you go back far enough, it all had to be started by something at some time. How could it go on and on forever, backwards? That doesn't make any sense."

"Okay, suppose there is a Beginning of Time. What happened a minute earlier?"

This kind of argument makes my skin crawl because I've had it many times in my head, and it doesn't end. Each position leap-frogs over the other in profundity.

From the finite-universe perspective, supposing that an eternity stretches backward in time opens the door to history being filled with infinitely many things, an infinitude that is somehow more palatable in the future (as possibilities to be invented) than in the past (as water under the bridge). Sometimes, Buddhists use this to say that, with reincarnation, every combination of human relationships has already happened, so when you squash a fly, you're squashing someone who was your mother in some previous life. (Mathematically, that depends on how many beings there are: for all of the combinatorics of family relationships to have played out, the number of beings must be finite, or at least a smaller infinity than the infinite age of the universe.)

From the infinite-universe perspective, "What's before that?" feels like one-upmanship, but it's just ignoring or misunderstanding the first person's claim, not refuting it. These two positions "leap-frog each other in profundity" because from either perspective, it can feel like people arguing the other just haven't gotten their heads around the idea. Like, if only they could imagine a world without a beginning/with a beginning, then they would realize that this larger, more inclusive possibility is true.

End of time

As with the finiteness of history, we can do the same for the finiteness of the future.

"One day, it will all end in the Big Crunch/Doomsday. There will be nothing after that because space-time will collapse to a singularity/all will enter hypostatic union with God/Light will finally be separated from Darkness in the Great Conflagration." (The last one's Manichaeism.)

"Oh yeah? What happens a minute later?"

"Well, nothing happens a minute later. That's what I mean by The End. Time comes to an end."

A third interloper: "No, it cycles back to the beginning again! The universe is on repeat."

"It exactly cycles? Or with some differences? Because if it's exactly the same the second time around, how is that different from just calling it The End? And if it's not the same the second time around, why are you calling it a cycle at all? That's just a continuation of time, after the cataclysmic event."

Size of the universe

It's a useless argument, but it's a type of useless argument that fits a pattern. You could have exactly the same round-and-round about the infinite expanse of space. Space is not one-dimensional or coupled with causality like time, so it feels like a different argument, but there are some important similarities. First, physical cosmology doesn't settle it: the size of the universe is at least 78 billion light-years, but the assumption that it's infinite is like the assumption that the photon is massless—the simplest possibility consistent with all measurements. In fact, there are three possibilities:

1. The universe is infinite, and it is homogeneous and isotropic at the largest scales.

2. The universe is finite, and somewhere there's a wall where space stops.

3. The universe is finite because it has a non-trivial, compact topology. If you go far enough in one direction, you'll end up coming around the other side to where you started.

Possibility (1) is the usual assumption. Possibility (2) is ugly: it immediately raises the question of "what's on the other side of the wall?" and that question has more force than the same question applied to a beginning of time because time is one-dimensional and coupled with causality. We're accustomed to being able to walk around spatial walls to inspect the other side, but we've never been able to travel to the past.

Possibility (3) has attracted more interest from mathematicians than cosmologists because it lets them play with different topologies. Space could be a 3-sphere (the surface of the sphere has 3 dimensions; a normal sphere is a "2-sphere") if it is large enough to be consistent with limits on the overall curvature of space, which has been measured to be very close to flat (Euclidean). Space could be a torus, which is exactly flat. Video games in which flying off one edge of the screen brings you to the other edge are examples of a 2-dimensional torus. For a while in 2006‒2008, Poincaré dodecahedral space was a possibility, but the very small, non-zero curvature required by that model has since been ruled out.

Unlike the age of the universe, we might someday discover that the universe has finite size, by seeing the same object or its influence in two nearly opposite directions. An infinite universe can be ruled out, but a finite universe can't be ruled out. It could just be so large that no influence is detectable beyond the cosmological horizon.
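The video-game torus and the "same object in two directions" test fit in one small sketch. It assumes a one-dimensional slice through a flat torus with a hypothetical circumference in arbitrary units (not a measured value): coordinates wrap modulo the circumference, so a full lap returns you to the start, and light from a single object can reach you going either way around the loop.

```python
def wrap(x: float, circumference: float) -> float:
    """Map a coordinate onto [0, circumference): off one edge, back in at the other."""
    return x % circumference

def image_distances(observer: float, obj: float, circumference: float) -> tuple[float, float]:
    """Distances at which one object appears when looking in the +x and -x directions.

    On a closed loop, light can reach the observer by going either way around,
    so the same object shows up twice; for an object not at the observer's own
    position, the two distances add up to the loop size.
    """
    forward = (obj - observer) % circumference
    backward = (observer - obj) % circumference
    return forward, backward

size = 100.0  # hypothetical circumference, arbitrary units

print(wrap(30.0 + size, size))           # 30.0: one full lap returns you to the start
print(image_distances(0.0, 30.0, size))  # (30.0, 70.0)
```

If the circumference is larger than the distance to the cosmological horizon, the second image is never visible, which is why a finite universe can't be ruled out this way.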

All of the same games that can be played with the finiteness of the age of the universe can be played with the finiteness of its size.

"The universe is finite."

"No, it's infinite! You just can't imagine something that can go on and on forever, but open your mind and you'll see that it's true."

"You just can't imagine non-trivial, compact topologies."

Or maybe,

"The universe could be a 3-torus, which doubles back on itself in , , and ." (Shows picture of an inner tube, which is a 2-torus.) "Like the surface of this inner tube."

"Oh yeah? What's inside the tube? Or just outside of it?"

"No, no, the 3-torus is only the surface manifold; there is no inside or outside. The manifold doesn't need to be embedded in a larger space."

"You just can't imagine stepping out of the inner tube."

The most fundamental particle

For a completely different example of the Leapfrogging Terminus, consider the search for the most fundamental particle of matter. You know, the atom. I mean, the nucleus. I mean, the proton. I mean, the quark. (I mean, the superstring/D-brane?) The story of the most basic building blocks has been revised so many times that it tempts onlookers to immediately ask, for every proposed fundamental particle, "What if there's something inside it?" Just as with the beginning of time, the question itself contradicts the notion of "fundamental particle," but it could be taken as a challenge that the particle we're looking at is not fundamental at all, and any scientific claim should be open to such questioning.

But now consider this: what if there is no fundamental particle because inside each one are still-more fundamental particles, and the chain just keeps going down, like an infinite Russian doll? Or maybe, as many 19th-century physicists thought, there's no fundamental particle but a continuum of matter that can be infinitely subdivided.

And more!

Although they're different questions, the finiteness of the age of the universe, of the extent of space, and the fundamentalness of the building blocks of matter are all about the limits of inquiry in some dimension. It's strange to imagine there being a limit—as strange as a brick wall at the end of space—and it's also strange to imagine there isn't, such as the idea that nature gave us an unending sequence of nesting dolls to discover. All of the above are physics examples, but how about the primacy of sense-experience versus the primacy of the objective world? One frog says that brains are just a product of physical processes and has the fMRI scans to prove it, while the other frog says that we only know what an fMRI scan means because we understand physics (provisionally!) with our minds. So which derives from which? Hop, hop, hop...

The Fuzzy Cut

The line between quantum and classical physics

Whereas the Leapfrogging Terminus is a problem at an endpoint of some spectrum, the Fuzzy Cut is a problem in the middle. That is, we have two irreconcilable worldviews, each of which is comfortable at its end of a spectrum, and each wants to be the whole of reality, but there's the other one sitting at the other end of the line to contend with. A good example to start with is the quantum/classical divide.

Quantum physics is consistent with (or "an extension of") classical physics in the sense that the experimental predictions of quantum physics, at low resolution, asymptotically resemble the experimental predictions of classical physics. From the point of view that the world is entirely quantum mechanical, it's easy to see how we could mistakenly believe that it is classical if our experiments don't have enough precision.

However, the two theories are completely different in how they describe particles and how they move. In classical physics, properties of an object can be represented as a real number that has a single value, but in quantum physics, some properties are discrete, yet take on a distribution of values. For example, the angular momentum of a spinning flywheel is a single real number (in units of kg·m²/s). An experimentalist might not be able to determine its exact value, but classical theory says that it has a value, and only one value. However, the angular momentum of an isolated electron, measured along a given axis, can be $+\hbar/2$ or $-\hbar/2$, in which $\hbar$ is the reduced Planck constant, about $1.055 \times 10^{-34}$ kg·m²/s, but it can also be 30% $+\hbar/2$ and 70% $-\hbar/2$ or some other distribution over the two choices (but only those two choices). Continuous quantum properties, such as the position of a particle, can have any continuous distribution, such as a blob that's mostly in your hand but partly in the Andromeda galaxy.

The part of quantum physics that is fundamentally at odds with classical physics is the von Neumann postulate, which says that if you measure a distributed quantum state, such as the angular momentum of an electron, the measurement will result in either $+\hbar/2$ or $-\hbar/2$, and the probability of each possibility depends on the distribution before the measurement. Another way of saying this is that if an electron were initially in a 30%/70% distributed state and the experiment measured $+\hbar/2$, the state after measurement is 100% $+\hbar/2$ and 0% $-\hbar/2$. The von Neumann postulate is sometimes called the "collapse of the wavefunction" because of this change to a much simpler distribution. (A wavefunction is closely related to a distribution, and I'm not making the distinction.)
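The postulate can be sketched as a toy simulation. This is an illustration under stated assumptions, not a full quantum-mechanics library: the spin along one axis is stored as two real amplitudes (√0.3 and √0.7, so the squared magnitudes give the 30%/70% split), a measurement samples one outcome with those probabilities, and the state is then replaced by the observed basis state. In general amplitudes are complex; the names `state`, `probabilities`, and `measure` are hypothetical.

```python
import math
import random

# Amplitudes for the two spin outcomes along one axis, keyed by value in units of hbar.
# Probabilities are the squared magnitudes (the Born rule): 0.3 and 0.7.
state = {+0.5: math.sqrt(0.3), -0.5: math.sqrt(0.7)}

def probabilities(amplitudes: dict[float, float]) -> dict[float, float]:
    """Squared magnitude of each amplitude."""
    return {outcome: abs(amp) ** 2 for outcome, amp in amplitudes.items()}

def measure(amplitudes: dict[float, float], rng: random.Random):
    """Return (outcome, collapsed_state): sample an outcome, then project onto it."""
    probs = probabilities(amplitudes)
    outcomes = list(probs)
    outcome = rng.choices(outcomes, weights=[probs[o] for o in outcomes])[0]
    collapsed = {o: (1.0 if o == outcome else 0.0) for o in amplitudes}
    return outcome, collapsed

rng = random.Random(0)
outcome, after = measure(state, rng)
print(outcome, probabilities(after))  # 100% on the observed value, 0% on the other

# Measuring many fresh copies recovers the 30%/70% frequencies only statistically.
counts = {+0.5: 0, -0.5: 0}
for _ in range(100_000):
    counts[measure(state, rng)[0]] += 1
print({o: n / 100_000 for o, n in counts.items()})
```

Any single run gives a definite value; the distribution only shows up across many identically prepared electrons.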

Classical physics could have been extended to allow for distributed properties. If it were, we would say, "From a distance, a particle looks like a point, but up close we find that it's a mushy blob." Collapsing wavefunctions are incompatible with this picture because they're not just mushy blobs but probability blobs. If a particle is mostly in your hand yet partly in the Andromeda galaxy, that's fine: we might not call it a particle, but continuous matter, some of which is here and some of which is there. But if, by opening your hand, you could make it entirely here and not at all there, or vice versa (you don't get to choose which), that's a fundamentally different way of looking at the world.

The Fuzzy Cut problem is that small things behave quantum mechanically and large things behave classically. We have to come up with a world-view that accounts for these observations and is consistent with itself. There are many different ways to do this, and physical observation is only a partial constraint. The rest of the problem is philosophical. Here are a few, in a non-exhaustive list:

- Quantum mechanics applies to anything smaller than 1 nanometer, or some other line in the sand. When a subatomic property is measured in an experiment, amplifying it to something that will move a needle or be registered by a computer, the process crosses this size-scale threshold and randomly selects a value from its distribution for the large, classical apparatus. (I think it's safe to say that no one really believes this: it's the strawman that people make the Copenhagen interpretation out to be if they're opposed to the Copenhagen interpretation.)

- Wavefunctions collapse when they can be perceived by human (or other intelligent) consciousnesses. Schrödinger's cat may be in a 50% completely alive, 50% completely dead state, but if Wigner's friend is in the box with the cat, he will observe the cat and the cat will be 100% in one or the other state.

- All of the forces of nature are quantum mechanical except for gravity, which is classical. Interaction with gravity, which is much weaker than the other forces but significant for large objects, forces distributions to collapse to single values. (This also addresses the difficulty of formulating a self-consistent quantum gravity by saying gravity isn't quantum!)

- Something else, maybe a law of nature itself, randomly triggers collapses all the time: objective collapse. We only notice it in big systems because the rate per particle is tiny, but it adds up in large aggregates.

- Nature is entirely classical: it only appears to be quantum because there are hidden variables that randomize results. This idea was mainstream for a while, but Bell's inequality (and the experiments showing that nature violates it) restricts the scope of possible hidden variable theories: they must have action at a distance, or the wavefunction must not have a real value independent of observers, or the whole universe must be superdeterministic, none of which are attractive theories.

- Nature is entirely quantum, and wavefunctions only appear to collapse because human observers are entangled along with the electron they're observing. For instance, if an electron is 30% $+\hbar/2$ and 70% $-\hbar/2$ and a human observer sees $+\hbar/2$, that's just part of the human's wavefunction. Another part of the human's wavefunction sees $-\hbar/2$. This is happening all the time for every quantum property that interacts with anything else, so the wavefunction is unimaginably huge and complicated. This is called the many-worlds interpretation because the coupled wavefunction is so all-encompassing that you could call parts of it worlds.

- Nature is entirely quantum, but there's an effect similar to but different from thermodynamics in which interactions with an environment push wavefunctions toward states like 0.001% $+\hbar/2$ and 99.999% $-\hbar/2$. This is called decoherence, and it's relatively mainstream.

- Giving up on the interpretation of wavefunctions as real, objective properties of the universe, quantum Bayesianism considers them to be subjective degrees of belief.

- And many more!
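The "slow rate, large aggregate" logic of the objective-collapse option is just multiplication. Here is a back-of-the-envelope sketch, assuming the per-particle rate of about $10^{-16}$ collapses per second from the original Ghirardi–Rimini–Weber (GRW) proposal and treating collapses as independent Poisson events, so that $N$ particles collapse at a combined rate $N\lambda$. The particle counts are rough illustrative values.

```python
# Assumption: the per-particle collapse rate from the original GRW proposal.
GRW_RATE_PER_PARTICLE = 1e-16  # collapses per particle per second
SECONDS_PER_YEAR = 3.156e7

def mean_time_to_first_collapse(n_particles: float, rate: float = GRW_RATE_PER_PARTICLE) -> float:
    """Mean wait for the first collapse among n independent Poisson processes.

    The combined rate is n * rate, so the mean waiting time is 1 / (n * rate).
    In a macroscopic superposition the particles are entangled, so one hit
    on any of them localizes the whole object.
    """
    return 1.0 / (n_particles * rate)

single = mean_time_to_first_collapse(1)
print(f"1 particle: {single:.1e} s, or about {single / SECONDS_PER_YEAR:.1e} years")

pointer = mean_time_to_first_collapse(1e23)  # roughly the nucleons in a fraction of a gram
print(f"1e23 particles: {pointer:.1e} s")
```

A lone particle would wait hundreds of millions of years on average, while a needle-sized object collapses in about a ten-millionth of a second, which is how such theories hide from small-scale experiments yet keep big things classical.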

Although physical experiments constrain the set of viable interpretations, it's hard to see how any future experiment could reduce them to only one. Some part of this is philosophical choice. For instance, maybe the universe is superdeterministic: it's contrived in such a way that humans, physical parts of the universe, will only choose measurement settings that are correlated with the particles' hidden properties in just the way needed to reproduce the observed violations of Bell's inequality. (Gag!) If we're going to have any mental model of nature, we're going to have to make decisions that are not guided by experiment. This was also true in the days of classical physics, but back then those decisions would have been called "being reasonable."
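What superdeterminism has to explain away can be checked numerically. This minimal sketch uses the CHSH form of Bell's inequality (a standard variant, not named above): any local model that assigns definite ±1 answers to each detector setting gives $|S| \le 2$, while quantum mechanics predicts the singlet-state correlation $E(a, b) = -\cos(a - b)$ for spin measurements at angles $a$ and $b$, which reaches $|S| = 2\sqrt{2}$ at the angles chosen here.

```python
import itertools
import math

def chsh(e_ab, e_ab2, e_a2b, e_a2b2):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return e_ab - e_ab2 + e_a2b + e_a2b2

# Local deterministic models: each detector gives a fixed +1/-1 answer per setting.
# (Local models with randomness are mixtures of these, so they cannot do better.)
local_max = max(
    abs(chsh(A * B, A * B2, A2 * B, A2 * B2))
    for A, A2, B, B2 in itertools.product((+1, -1), repeat=4)
)
print("local bound:", local_max)  # 2

def singlet_correlation(a: float, b: float) -> float:
    """Quantum prediction for spin measurements along angles a and b on a singlet pair."""
    return -math.cos(a - b)

a, a2, b, b2 = 0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4
E = singlet_correlation
s_quantum = chsh(E(a, b), E(a, b2), E(a2, b), E(a2, b2))
print("quantum |S|:", abs(s_quantum), "vs 2*sqrt(2) =", 2 * math.sqrt(2))
```

Experiments side with the quantum value, so a hidden-variable account has to give up locality, observer-independent values, or free choice of the settings.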

The line between conscious and non-conscious matter

My second example of a Fuzzy Cut is consciousness, as in the Hard Problem Of. Before I start into it, I want to make sure there's no confusion: this is my second example of a type of philosophical question—I am not saying that there is a connection between consciousness and quantum mechanics. That is one of the quantum interpretations that was historically proposed (#2, above), but I'm not proposing it here, and I don't think it's true.

Consciousness has its own Fuzzy Cut because two things seem to be evident: we are conscious, in the sense of you being self-aware and not only reading this text but knowing that you're reading this text, and human minds appear to be physical processes. The matter in a human brain, perhaps including the endocrine system, perhaps including gut bacteria for its influence on human emotions, is conscious in a way that rocks and dirt are not. But what makes brains (and hormones and gut bacteria) so special?

As with quantum mechanics, there's a list of solutions that try to address it that includes both "everything is conscious" and "nothing is conscious."

- Everything is conscious, at least a little bit. That's panpsychism, and variants range from animist religions to theories that relate it, somehow, to information entropy.

- Nothing is conscious, not even you reading this page. Proponents argue that "consciousness is an illusion," which is provocative because an "illusion" usually presumes an observer to be deluded, but they follow up with the point that maybe we only think it's a problem because the words we use problematize it, like Wittgenstein's fly bottle.

- There is a line between res cogitans (thinking stuff) and res extensa (material stuff, matter that is extended in space). This is Descartes's dualism.

- Or it's not a property of any matter, but of the configurations that matter can be arranged in. Or it emerges as complex patterns from simple components (like thermodynamics or cellular automata). Or it's a matter of how components function, and could be reproduced by a computer program, which is not material, either.

- Or, consciousness is the only thing that exists, and it imagines the material world.
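The cellular-automaton version of emergence from the list above is easy to demonstrate. A minimal sketch, assuming Conway's Game of Life as the example automaton (the text doesn't name one): every cell obeys the same local birth/survival rule, yet the five-cell "glider" reappears one cell down and one cell to the right every four generations, a persistent moving pattern that no individual cell has.

```python
from collections import Counter

def step(live: set[tuple[int, int]]) -> set[tuple[int, int]]:
    """One generation of Conway's Game of Life on an unbounded grid of (row, col) cells."""
    # Count live neighbors for every cell adjacent to at least one live cell.
    counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth with exactly 3 neighbors; survival with 2 or 3.
    return {cell for cell, n in counts.items() if n == 3 or (n == 2 and cell in live)}

glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}

state = glider
for _ in range(4):
    state = step(state)

shifted = {(r + 1, c + 1) for r, c in glider}
print(state == shifted)  # True: same shape, moved diagonally by one cell
```

Nothing in `step` mentions gliders; the pattern exists only at the level of the configuration.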

My point here is not to argue for any one of these views, just as I didn't for quantum mechanics. (I'm not settled, myself.) My point is that there's a similarity in the type of these problems, since they're trying to reconcile two poles that independently seem sensible, but don't make sense together.

Why is this categorization useful?

It may be that all of the above are "problems" in name only, that I (and others like me) have been bewitched by words and just need to find the way out, not to solve the problems as stated. Nevertheless, some of them still seem like problems to me. What am I to think of a world in which quantum wavefunctions collapse (and violate Bell's inequality)? What am I to think of the fact that I think and am matter, when it doesn't seem like matter can think at all?

The kinds of questions that do not seem problematic to me (anymore) are the Leapfrogging Terminus type. I used to think I had to have an opinion on whether the universe had a beginning or space a finite extent, but it just doesn't seem like a problem anymore because I can see how the wheel turns from "It had to start somewhere" to "What was before that?" Even earlier, I used to think I had to have an opinion on whether life is common in the universe or Earth is alone, until I realized that that's just an empirical question that doesn't have any evidence one way or the other... yet. The proper thing to do with an empirical question is to look for or wait for evidence, so I just need to be patient (or die waiting). Leapfrogging Terminus questions are not empirical, but they also don't demand conclusions. It's nice to think them through, but ultimately realize that a brain that evolved to understand its local environment won't necessarily have a grip on absolutes like the edge of the universe. Not without empirical data, anyway.

While I can feel "done" with the Leapfrogging Terminus questions, Fuzzy Cut questions still bother me. And yet, maybe the right thing to do is to set them aside. Perhaps the two types are similar in this regard.

6 comments


comment by Yair Halberstadt (yair-halberstadt) · 2025-03-31T13:31:22.578Z

Am I correct that decoherence isn't really an interpretation of quantum mechanics, but an empirically verified statistical consequence of the standard model?

↑ comment by Jim Pivarski (jim-pivarski) · 2025-03-31T14:41:52.437Z

Most of the interpretations aren't "pure" in the sense of being completely independent of empirical constraints. Decoherence is one that posits a physical process (unlike many worlds and quantum Bayesianism) that doesn't require new laws of physics (unlike objective collapse theories, including gravity-mediated ones). It requires a collective effect from the environment, which is why I say it's like thermodynamics.

However, it isn't as well-understood as thermodynamics. I've read some papers in which the authors describe a simple, special-case example of an environment and show that a quantum system does get pushed toward basis states like 0.001% spin-up and 99.999% spin-down. In fact, part of the problem is, "What's special about the basis states we see in the lab, like position, momentum, energy, spin up/down, field value, particle number, etc., rather than any other linear combination of them?" I remember reading about simple models in which an "isolated" particle, i.e. one surrounded by low-energy photons spontaneously coming out of the vacuum, is pushed toward its energy basis (think of a hydrogen atom, defined by its energy levels) and a colliding particle involved in one high-energy collision is pushed toward its position basis (a wave that becomes more particle-like on impact).

You're reminding me that I found decoherence more compelling than the rest. But what's missing, unless there's been a breakthrough I don't know about, is generalization. These were very simplified special cases. Also, there's still a philosophical choice to be made because this world-view is taking the collapse seriously as an objective physical process, but not a discrete one that leaves us in exact basis states. It's more like a phase transition that isn't infinitely sharp.

I doubt that anything specific to the Standard Model (high-energy generality, like electroweak symmetry breaking) has anything to do with it, since low-energy electrons also need to decohere, but you probably meant, "standard physics, no new laws," right? Decoherence is that: no new laws (unless there are yet more models of decoherence that I don't know about).

↑ comment by Yair Halberstadt (yair-halberstadt) · 2025-03-31T16:16:11.974Z

Got it. So in a way it's more like a mathematical conjecture than a philosophical theory. We posit a statistical result, we have some toy examples which provide us with some intuition for it, but right now we're not able to prove the general case. We hope to do so in the future, and people are actively working on doing so.

Also isn't many worlds a straightforward interpretation of decoherence? Decoherence says that regions of large complex superpositions stop interfering with each other, and hence such regions will act classically, many worlds just says that the regions you're not in presumably still exist? Or are there some extra hoops there?

↑ comment by TAG · 2025-03-31T17:04:06.439Z

MWI is more than one theory.

There is an approach to MWI based on coherent superpositions, and a version based on decoherence. These are (for all practical purposes) incompatible opposites, but are treated as interchangeable in Yudkowsky's writings. Decoherent branches are large, stable, non-interacting and irreversible... everything that would be intuitively expected of a "world". But there is no empirical evidence for them (in the plural), nor are they obviously supported by the core mathematics of quantum mechanics, the Schrödinger equation. Coherent superpositions are small scale, down to single particles, observer dependent, reversible, and continue to interact (strictly speaking, interfere) after "splitting". The last point is particularly problematic, because if large-scale coherent superpositions existed, that would create naked-eye evidence at macroscopic scale, e.g. ghostly traces of a world where the Nazis won.

We have evidence of small-scale coherent superposition, since a number of observed quantum effects depend on it, and we have evidence of decoherence, since complex superpositions are difficult to maintain. What we don't have evidence of is decoherence into multiple branches. From the theoretical perspective, decoherence is a complex, entropy-like process which occurs when a complex system interacts with its environment. Decoherence isn't simple. But without decoherence, MW doesn't match observation. So there is no theory of MW that is both simple and empirically adequate, contra Yudkowsky and Deutsch.

Decoherence says that regions of large complex superpositions stop interfering with each other

It says that the "off-diagonal" terms vanish, but that would tend to generate a single predominant outcome (except, perhaps, where the environment is highly symmetrical).

↑ comment by Jim Pivarski (jim-pivarski) · 2025-03-31T23:50:00.917Z

I have heard of versions of many-worlds that are supposed to be testable, and you're probably referring to one of them. The one that I'm most familiar with ("classic many-worlds"?) is much more of a pure interpretation, though: in that version, there is no collapse and the apparent collapse is a matter of perspective. A component of the wavefunction that I perceive as me sees the electron in the spin-down state, but in the big superposition, there's another component like me but seeing the spin-up state. I can't communicate with the other me (or "mes," plural) because we're just components of a big vector—we don't interact.

On the other hand, classic decoherence posits that the wavefunction really does collapse, just not to 100% pure states. Although there's technically a superposition of electrons and a superposition of mes, it's heavily dominated by one component. Thus, the two interpretations, classic many-worlds and classic decoherence, are different interpretations.

If the state of theory and/or experiment developed further and these asymptotic projections were shown to exist in generality, that would bolster decoherence but not eliminate many-worlds: you could still imagine a 0.0001% me being coupled with the spin-up electron. It would, however, undermine the motivation. If, instead, these asymptotic projections were shown to not exist, then that would undermine decoherence to the point of refutation (i.e. only die-hards would keep looking for variants of the theory that aren't ruled out). So classic decoherence is more falsifiable than classic many-worlds. That's what I mean by saying that many-worlds is more purely philosophical.

But I was careful to say "classic" everywhere. With this being such an active area of research, I'm sure there are versions of these theories that don't fit the description above. (They're all "more than one theory.") As I said, I've heard of variants of many-worlds that are less purely philosophical, and that must be what you're referring to, @TAG.

↑ comment by TAG · 2025-04-02T16:19:21.587Z

I have heard of versions of many-worlds that are supposed to be testable

There are versions that are falsified, for all practical purposes, because they fail to predict broadly classical observations: sharp-valued real numbers, without pesky complex numbers or superpositions. I mean mainly the original Everett theory of 1957. There have been various attempts to patch the problems (preferred basis, decoherence, anthropics, etc.), so there are various non-falsified theories.

The one that I’m most familiar with (“classic many-worlds”?) is much more of a pure interpretation, though: in that version, there is no collapse and the apparent collapse is a matter of perspective. A component of the wavefunction that I perceive as me sees the electron in the spin-down state, but in the big superposition, there’s another component like me but seeing the spin-up state. I can’t communicate with the other me (or “mes,” plural) because we’re just components of a big vector—we don’t interact.

Merely saying that everything is a component of a big vector doesn't show that observers don't go into superposition with themselves, because the same description applies to anything which is in superposition. It's a very broad claim.

What you call classic MWI is what I call the have-your-cake-and-eat-it version: assuming nothing except that collapse doesn't occur, you conclude that observers make classical observations for no particular reason. You don't even nominate decoherence or a preferred basis as the mechanism that gets rid of the unwanted stuff.

On the other hand, classic decoherence posits that the wavefunction really does collapse, just not to 100% pure states. Although there’s technically a superposition of electrons and a superposition of mes, it’s heavily dominated by one component. Thus, the two interpretations, classic many-worlds and classic decoherence, are different interpretations.

OK. I would call that single-world decoherence. Many-worlders appeal to decoherence as well.

So classic decoherence is more falsifiable than classic many-worlds.

If classic MW means Everett's RSI (relative-state interpretation), it's already false.