The Most Important Century: Sequence Introduction

post by HoldenKarnofsky · 2021-09-03T20:19:27.917Z · LW · GW · 5 comments


[Moderators' note: with permission, I am crossposting Holden Karnofsky's Most Important Century series, originally published on his blog. In my (Ruby's) opinion, this may be the most compelling extant write-up that argues we are living in an exceptionally important time.

Posts in the series will be published every 4th day until we are caught up, then will be posted here as they are published. Links in this roadmap will be updated as posts are published to LessWrong. Eager readers are encouraged to "read ahead" in the original.]

This is a roadmap/"extended table of contents" for a series of posts arguing for a good chance that we're in the most important century of all time.

I think we have good reason to believe that the 21st century could be the most important century ever for humanity. I think the most likely way this would happen would be via the development of advanced AI systems that lead to explosive growth and scientific advancement, getting us more quickly than most people imagine to a deeply unfamiliar future.

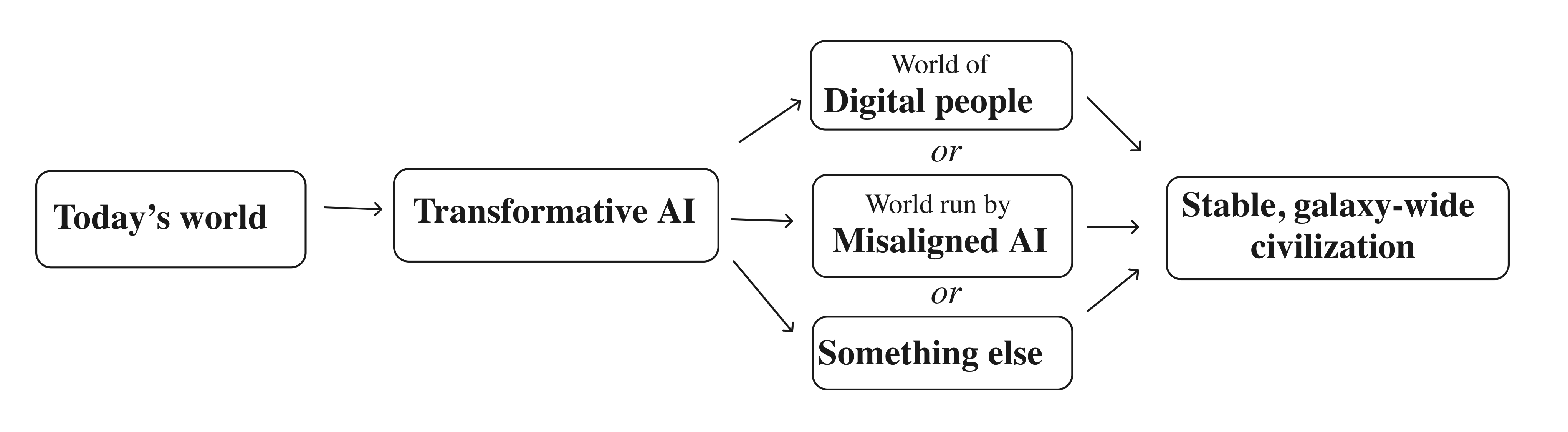

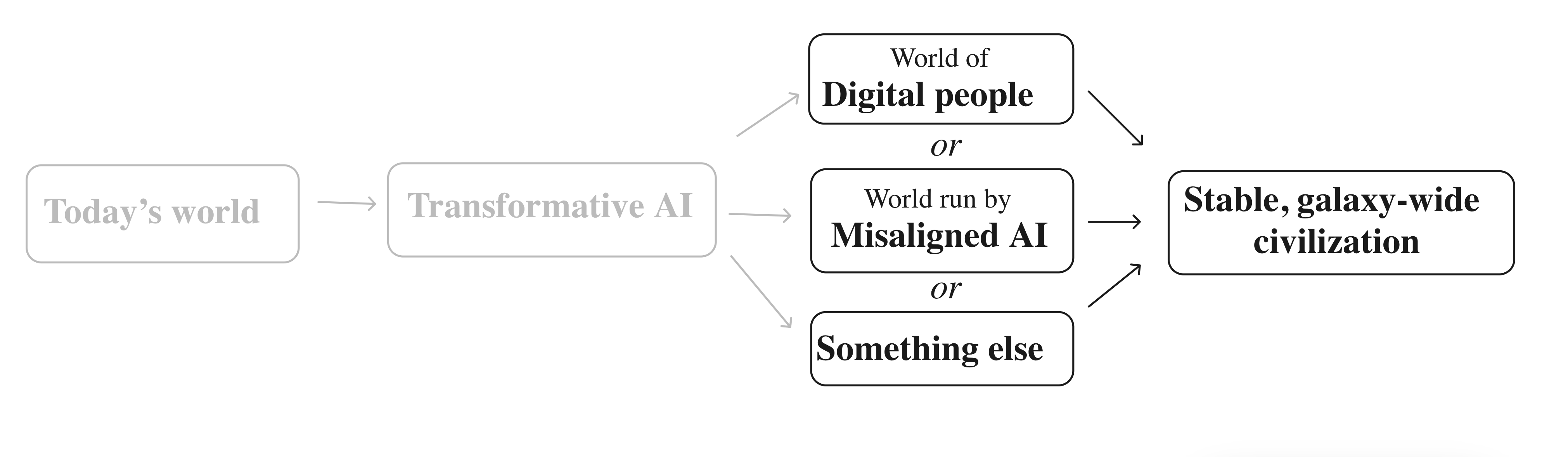

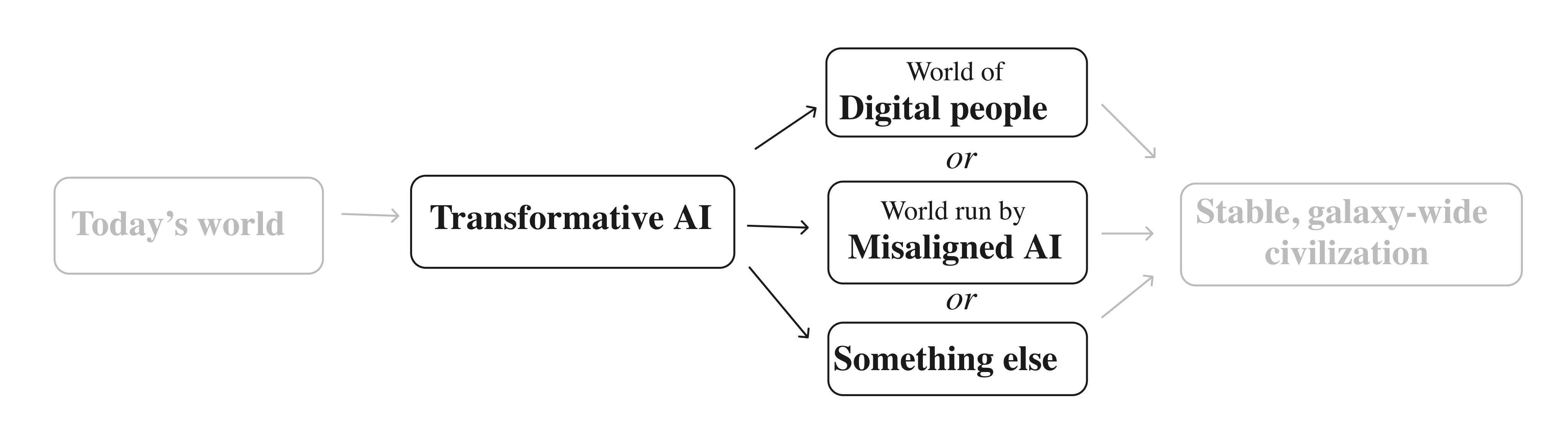

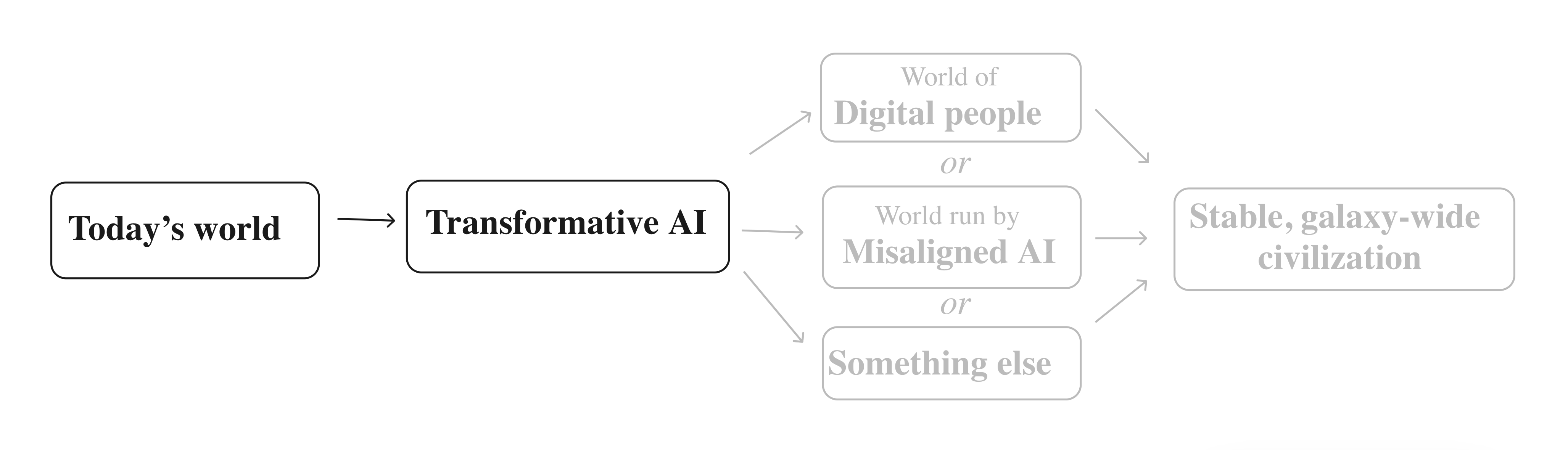

A bit more specifically,[1] I think there is a good chance that:

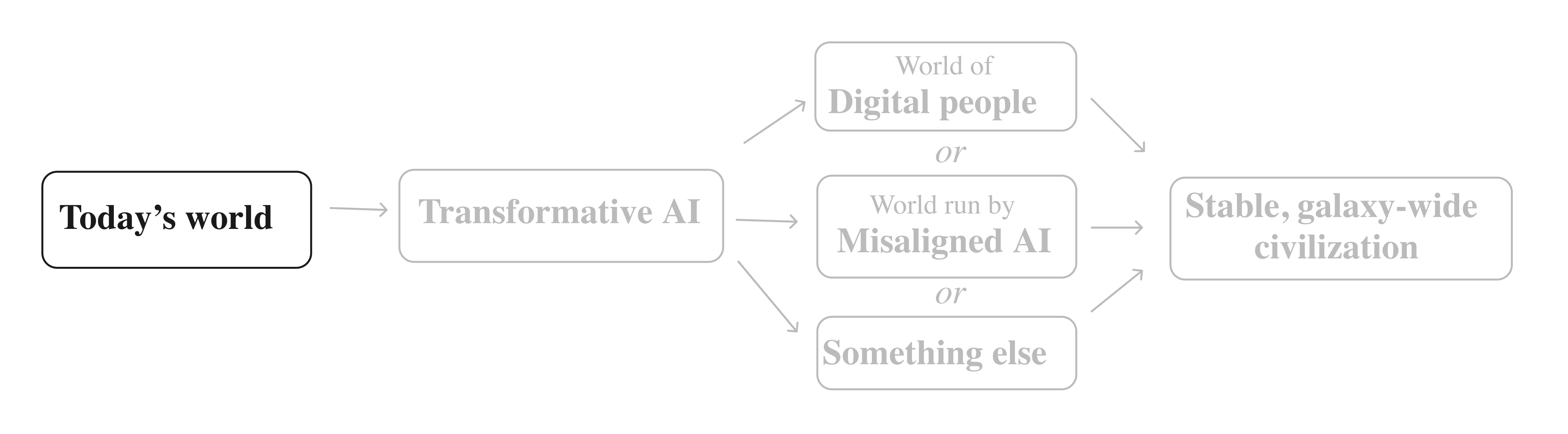

- During the century we're in right now, we will develop technologies that cause us to transition to a state in which humans as we know them are no longer the main force in world events. This is our last chance to shape how that transition happens.

- Whatever the main force in world events is (perhaps digital people, misaligned AI, or something else) will create highly stable civilizations that populate our entire galaxy for billions of years to come. The transition taking place this century could shape all of that.

I think it's very unclear whether this would be a good or bad thing. What matters is that it could go a lot of different ways, and we have a chance to affect that.

I believe the above possibility doesn't get enough attention, discussion, or investment, particularly from people whose goal is to make the world better. By writing about it, I'd like to either help change that, or gain more opportunities to get criticized and change my mind.

This post serves as a summary/roadmap for an 11-post series arguing these points (and the posts themselves are often effectively summaries of longer analyses by others). I will add links as I put out posts in the series.

Roadmap

Our wildly[2] important era

All possible views about humanity's long-term future are wild argues that two simple observations - (a) it appears likely that we will eventually be able to spread throughout the galaxy, and (b) it doesn't seem any other life form has done that yet - are sufficient to make the case that we live in an incredibly important time. I illustrate this with a timeline of the galaxy.

The Duplicator explains the basic mechanism by which "eventually" above could become "soon": the ability to "copy human minds" could lead to a productivity explosion. This is background for the next few pieces.

Digital People Would Be An Even Bigger Deal discusses how achievable-seeming technology - in particular, mind uploading - could lead to unprecedented productivity, control of the environment, and more. The result could be a stable, galaxy-wide civilization that is deeply unfamiliar from today's vantage point.

Our century's potential for acceleration

This Can't Go On looks at economic growth and scientific advancement over the course of human history. Over the last few generations, growth has been pretty steady. But zooming out to a longer time frame, it seems that growth has greatly accelerated recently; is near its historical high point; and is faster than it can be for all that much longer (there aren't enough atoms in the galaxy to sustain this rate of growth for even another 10,000 years).
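
The atoms-in-the-galaxy point is compound-growth arithmetic, and a rough sketch may make it concrete. Two inputs here are assumptions that this roadmap does not state: a steady 2% annual growth rate and an order-of-magnitude figure of 10^70 atoms in the galaxy. The function and constant names are also illustrative. Working in log10 keeps the numbers small enough to read.

```python
import math

# Assumptions for illustration only; neither figure is stated in this roadmap.
ASSUMED_ANNUAL_GROWTH = 0.02          # steady 2% growth per year
ASSUMED_LOG10_ATOMS_IN_GALAXY = 70    # roughly 10^70 atoms


def log10_growth_factor(annual_rate: float, years: float) -> float:
    """log10 of the total growth multiple after compounding for `years`."""
    return years * math.log10(1.0 + annual_rate)


def years_to_reach(annual_rate: float, log10_factor: float) -> float:
    """Years of compounding needed to grow by a factor of 10**log10_factor."""
    return log10_factor / math.log10(1.0 + annual_rate)


orders_after_10k_years = log10_growth_factor(ASSUMED_ANNUAL_GROWTH, 10_000)
years_to_match_atoms = years_to_reach(
    ASSUMED_ANNUAL_GROWTH, ASSUMED_LOG10_ATOMS_IN_GALAXY
)

print(f"Growth after 10,000 years: about 10^{orders_after_10k_years:.0f}")
print(f"Years until the multiple equals the assumed atom count: "
      f"{years_to_match_atoms:,.0f}")
```

Under these assumptions, 10,000 years of compounding multiplies the economy by roughly 10^86, which exceeds the assumed atom count by many orders of magnitude; the multiple passes 10^70 a little after 8,000 years. Different assumed rates shift the date but not the qualitative conclusion.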

The times we live in are unusual and unstable. Rather than planning on more of the same, we should anticipate stagnation (growth and scientific advancement slowing down), explosion (further acceleration) or collapse.

Forecasting Transformative AI, Part 1: What Kind of AI? (not yet posted on LW) introduces the possibility of AI systems that automate scientific and technological advancement, which could cause explosive productivity. I argue that such systems would be "transformative" in the sense of bringing us into a new, qualitatively unfamiliar future.

Forecasting transformative AI this century

Forecasting Transformative AI: What's the Burden of Proof? (not yet posted on LW) argues that we shouldn't have too high a "burden of proof" on believing that transformative AI could be developed this century, partly because our century is already special in many ways that you can see without detailed analysis of AI.

Forecasting Transformative AI: Are we "trending toward" transformative AI? (not yet posted on LW) discusses the basic structure of forecasting transformative AI, the problems with trying to forecast it based on trends in "AI impressiveness," and the state of AI researcher opinion on transformative AI timelines.

Forecasting transformative AI: the "biological anchors" method in a nutshell (not yet published) summarizes the biological anchors framework for forecasting AI. This framework is the main factor in my specific forecasts.

I am forecasting more than a 10% chance transformative AI will be developed within 15 years (by 2036); a ~50% chance it will be developed within 40 years (by 2060); and a ~2/3 chance it will be developed this century (by 2100).

AI Forecasting Expertise (not yet published) addresses the question, "Where does expert opinion stand on all of this?"

- The claims I'm making neither contradict a particular expert consensus, nor are supported by one (though most of the key reports I cite have had external expert review). They are, rather, claims about topics that simply have no "field" of experts devoted to studying them.

- Some people might choose to ignore any claims that aren't actively supported by a robust expert consensus; but I don't think that is what we should be doing here.

Wrapping up

Implications of living in the most important century (not published yet) discusses what we can do to help the most important century go as well as possible.

The Most Important Century in a Nutshell (not published yet) will summarize the series in a few pages.

Acknowledgements

I have few-to-no claims to originality. The vast bulk of the claims, observations and insights in this series came from some combination of:

- Years of discussions with others, particularly in the effective altruism and rationalist communities. It's hard to trace specific ideas to specific people within this context, but I know that a huge amount of my thinking comes at least proximately from Carl Shulman, Dario Amodei and Paul Christiano, and that Nick Bostrom's and Eliezer Yudkowsky's work has been very influential generally. (I also understand that earlier futurists and transhumanists influenced these people and communities, though I haven't engaged directly much with their works.)

- In-depth analyses by the Open Philanthropy Longtermist Worldview Investigations team: Ajeya Cotra and Tom Davidson (especially) as well as Nick Beckstead, Joe Carlsmith, and David Roodman. I've also drawn heavily on reports by Katja Grace and Luke Muehlhauser.

In addition, I owe thanks to:

- Ajeya Cotra, María Gutiérrez Rojas and Ludwig Schubert for help with visualizations.

- A number of people for feedback on earlier drafts:

  - My sister Daliya Karnofsky, my wife Daniela Amodei, and Elie Hassenfeld: special thanks for reading the earliest (least readable) drafts and often giving detailed feedback on multiple iterations.

  - People who served as "beta readers" and gave significant amounts of feedback, particularly on what was and wasn't making sense for them: Alexander Berger, Damon Binder, Lukas Gloor, Derek Hopf, Mike Levine, Eli Nathan, Sella Nevo, Julian Sancton, Simon Shifrin, Tracy Williams. (Plus a number of people already mentioned above.)

5 comments


comment by Evan R. Murphy · 2022-12-15T23:27:40.488Z · LW(p) · GW(p)

Consider this a short review of the entire "The Most Important Century" sequence, not just the Introduction post.

This series was one of the first and most compelling pieces of writing I read when I was first starting to consider AI risk. It basically swayed me from thinking AI was a long way off and would likely have a moderate impact among technologies, to thinking AI will likely be transformative and arrive in the next few decades.

After that I decided to become an AI alignment researcher, in part because of these posts. So the impact of these posts on me personally was quite large.

I found out about this series on Ezra Klein's podcast, when Holden was interviewed there about it, before I was a LessWrong reader. Given the size of Ezra's audience, I'd be surprised if I was the only one these posts made an impact on. So I would guess the influence of this series was pretty substantial, even outside of its impact on me personally.

comment by HoldenKarnofsky · 2021-08-29T15:40:01.276Z · LW(p) · GW(p)

Footnotes Container

This comment is a container for our temporary "footnotes-as-comments" implementation that gives us hover-over-footnotes.

Replies from: HoldenKarnofsky, HoldenKarnofsky

↑ comment by HoldenKarnofsky · 2021-08-29T15:41:44.289Z · LW(p) · GW(p)

2. "Wild" is the best simple term I can think of to communicate the idea that a claim seems like it's "a big deal if true, so much so that it seems a bit hard to believe it's true." Other synonyms could include "out there," "crazy" or "wacky" (though those have some additional connotations I prefer to avoid), and this gif.

↑ comment by HoldenKarnofsky · 2021-08-29T15:40:51.481Z · LW(p) · GW(p)

1. For a more detailed elaboration of what I mean by "most important century," see here (not likely to be of interest to most readers).

comment by Дмитрий Зеленский (dmitrii-zelenskii) · 2021-09-12T13:37:35.715Z · LW(p) · GW(p)

"The Duplicator (not yet posted on LW)" - now posted, n'est-ce pas?