Comments

Some suggestions for testing the limits of abstract or spatial reasoning:

the back of the letter 'E'

the back of the letter e

back of the number 42

the back of the last letter in the alphabet

underside of flat earth

the view of earth from 1,000,000,000 km away

view of earth from a million miles away

a view of bacteria using a billion times magnification

That part also jumped out to me as a leap in logic, but I think there's a case to be made for that scenario, one that gives it some chance of happening (~50%). It would be the first crypto accepted by the US government. That's a great marketing tagline, and even if the US created some random crypto linked to gasoline, tobacco, or marijuana taxes, it would probably be hyped. Also, it seems that almost anything marketed as a cryptocurrency leads to speculation, even though this proposal is actually more like a digital asset or security.

I wonder if a motivation for this post has to do with Georgist-style land value tax. Turning these land leases into securities will create a market with market prices for the land leases, making it easier to assess the value of, and tax, the land.

There's the issue of differing value of different plots of land. Land isn't fungible, but if large enough swaths of land are similar enough to be grouped up, then it could work.

For the Perpetual Futures arbitrage, the return on investment is sensitive to the price difference between the future and the underlying asset. A price difference of 0.3% is needed for 10% returns per month (since 1.1^(1/30) ≈ 1.00318), while a price difference of 0.1% only gets 3% per month. I went on Binance and checked the spot price vs. the futures price for a moment in time; it was about $50 difference for BTC, or 0.085%.
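The arithmetic above can be checked with a short sketch. It assumes, as the 1.1^(1/30) in the text implies, that the premium is captured once per day and compounded over a 30-day month; the function names are mine and purely illustrative, and fees and funding schedules are ignored.

```python
def monthly_return(daily_premium: float, days: int = 30) -> float:
    """Total gain from compounding a per-day premium over `days` days."""
    return (1.0 + daily_premium) ** days - 1.0


def required_daily_premium(target_monthly_return: float, days: int = 30) -> float:
    """Per-day premium needed to reach a target gain over `days` days."""
    return (1.0 + target_monthly_return) ** (1.0 / days) - 1.0


if __name__ == "__main__":
    # 0.3% and 0.1% are the premiums discussed above; 0.085% is the
    # single spot-vs-futures reading taken on Binance.
    for premium in (0.003, 0.001, 0.00085):
        print(f"{premium:.3%} per day -> {monthly_return(premium):.2%} per month")
    print(f"needed for 10% per month: {required_daily_premium(0.10):.3%} per day")
```

A single 0.085% reading is only a snapshot, so the monthly figure it implies should be treated as a rough indication rather than an estimate of the sustained rate.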

So I am convinced that it's profitable to arbitrage crypto futures; I predict the rate of return for the next 12 months at 10% - 40% (50% C.I.) with a median of 20% per year. There's a chance of losing everything, but the expected value only decreases by 2% per 1% chance of losing everything, so as long as you estimate that chance to be small (<5%) and aren't betting everything, the risk is manageable.
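One way to see how a small chance of total loss affects the expected return is a one-period model, sketched below. This is my own simplification and not necessarily the model behind the figures above: with probability `p_total_loss` the whole stake is lost, otherwise the stake earns `return_if_ok`. Under that assumption each percentage point of loss probability costs (1 + return) percentage points of expected return, which is 1.2 points at a 20% return and 2 points at a 100% return.

```python
def expected_return(return_if_ok: float, p_total_loss: float) -> float:
    """Expected one-period return when the stake is either lost entirely
    (probability p_total_loss) or earns return_if_ok (otherwise)."""
    return (1.0 - p_total_loss) * (1.0 + return_if_ok) - 1.0


if __name__ == "__main__":
    # Hypothetical inputs: the 20% median return from the text and a few
    # small probabilities of losing everything.
    for p in (0.0, 0.01, 0.02, 0.05):
        print(f"p(loss)={p:.0%}: expected return {expected_return(0.20, p):.1%}")
```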

It seems doubtful to me that the 50% - 100% returns per year that you've experienced in the last month will be sustained for a year, and I wonder how it compares to other investments in crypto, both in expected return and in risk profile. Staking Ethereum gets 8% - 12% with little uncertainty, with the caveat that your money is locked up, so one pays an opportunity cost by giving up the chance to chase better investments. Investing in a basket of crypto or specific cryptocurrencies gives ??? rate of return; I think it would have much higher risk and higher returns compared to crypto arbitrage, so higher than the 18 to 20% I estimated for the futures arbitrage in the last paragraph.

In the previous sentence I compared a risky asset to a mostly risk-free arbitrage, and it might seem that there's no principled way to compare the two, with the only commonality being that both are in the crypto space. The assumption I make is that traders are willing to pay more for the futures for higher leverage, and that the market is efficient in some way, such that there's a relation between the traders' expectations of the rate of return and the price premium they're willing to pay. So I still find some assumptions of market efficiency useful, even if the concrete statement of the Efficient Market Hypothesis does not hold.

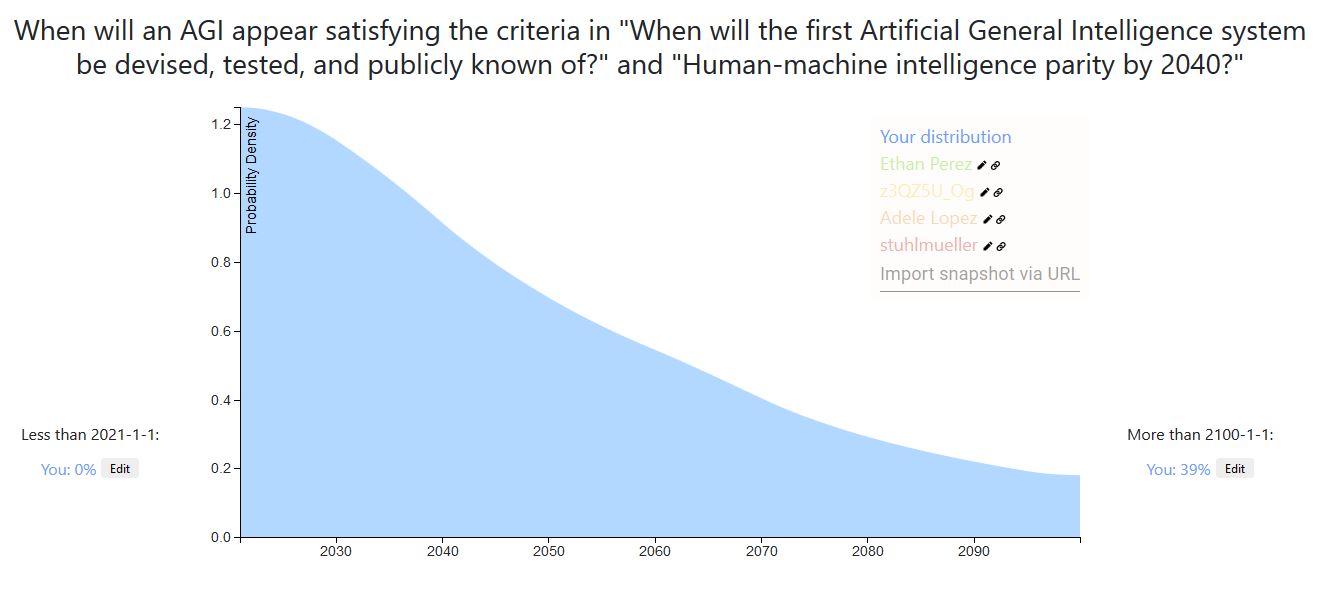

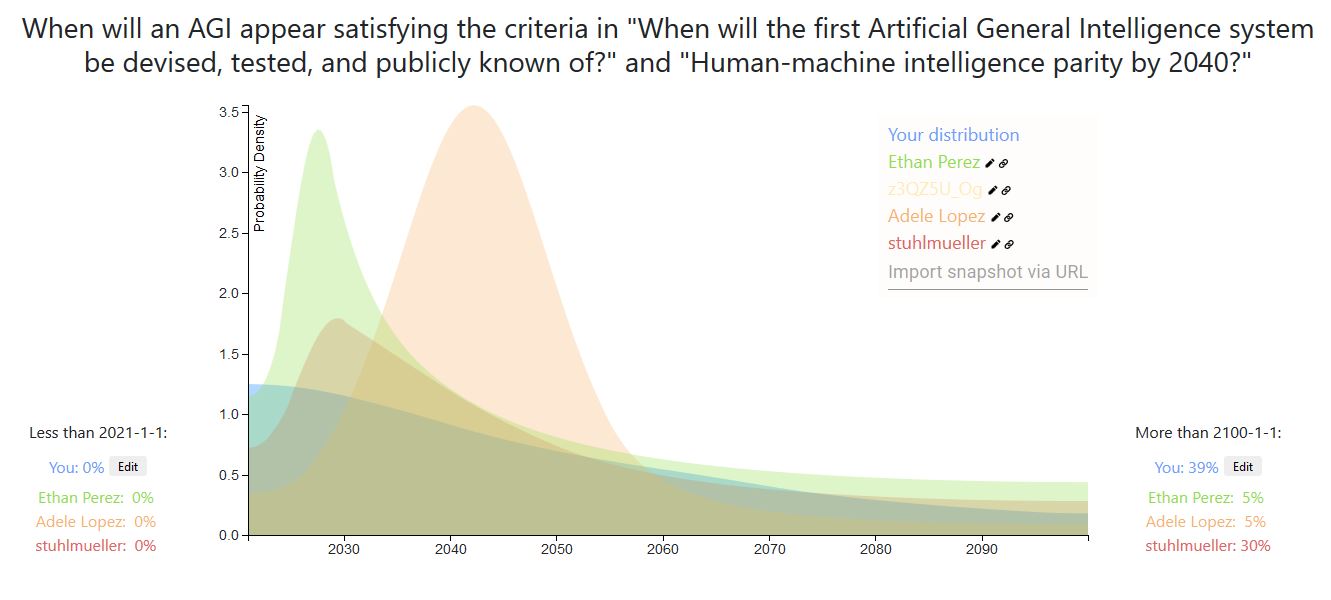

A week ago I recorded a prediction on AI timelines after reading a Vox article on GPT-3. In general my prediction is much more spread out in time than the LessWrong community's. Also, I weigh outside view considerations more heavily than detailed inside view information. For example, a main consideration of my prediction is the heuristic "with 50% probability, things will last twice as long as they already have", with a starting time of 1956, the year of the Dartmouth College summer AI conference.

If AGI will definitely happen eventually, then the heuristic gives us [21.3, 64, 192] years at the [25th, 50th, 75th] percentiles for the time until AGI occurs. AGI may never happen, but the chance of that is small enough that adjusting for it here will not make a big difference (I put ~10% on AGI not happening for 500 years or more, but the heuristic's distribution already matches that quite well).
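The percentiles above can be reproduced with a short sketch. It assumes one common formalization of the heuristic, in which the probability that the remaining time is at most x equals x / (x + elapsed); that formula and the function names are my assumptions, and the 64 elapsed years are taken from the median quoted in the text.

```python
def years_until(percentile: float, elapsed_years: float) -> float:
    """Remaining years at a given percentile, assuming
    P(remaining <= x) = x / (x + elapsed_years)."""
    return elapsed_years * percentile / (1.0 - percentile)


def prob_longer_than(years: float, elapsed_years: float) -> float:
    """Probability that the remaining time exceeds `years` under the same assumption."""
    return elapsed_years / (elapsed_years + years)


if __name__ == "__main__":
    elapsed = 64.0  # years since the 1956 Dartmouth conference, per the text
    for q in (0.25, 0.50, 0.75):
        print(f"{q:.0%} percentile: {years_until(q, elapsed):.1f} more years")
    print(f"P(more than 500 years): {prob_longer_than(500.0, elapsed):.1%}")
```

Under this assumption the tail beyond 500 years comes out a little above 10%, which is why no separate adjustment for "AGI never happens" seems necessary.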

A more inside view consideration is: what happens if the current machine learning paradigm scales to AGI? Given that assumption, a 50% confidence interval might be [2028, 2045] (since the current burst of machine learning research began in 2012-2013), which is more in line with the LessWrong predictions and the Metaculus community prediction. Taking the super outside view consideration and the outside view-ish consideration together, I get the prediction I made a week ago.

I adapted my prediction to the timeline of this post [1], and compared it with some other commenters' predictions [2].

I think the Metaculus crowd median is among the highest-quality predictions out there, especially when someone goes through all the questions where they're confident the median is off and makes comments pointing this out. I used to do this some months back, when there were more short-term questions on Metaculus and more questions where I differed from the community. When you made a bunch of comments of this type a month back on Metaculus, that covered most of the 'holes', in my opinion, and now there are only a few questions where I differ from the median prediction.

Another source of predictions is the IARPA Geoforecasting Challenge, where if you're competing you have access to hundreds of MTurk human predictions through an API. The quality of the predictions is not as great, and there are some questions where the MTurk crowd is way off. But they do have a question on whether Iran will execute or be targeted in a national military attack.

I agree that it's quite possible to beat the best publicly available forecasts. I've been wanting to work together on a small team to do this (where I imagine the same set of people would debate and make the predictions). If anyone's interested in this, I'm datscilly on Metaculus and can be reached at [my name] at gmail.