AI-enabled coups: a small group could use AI to seize power

post by Tom Davidson (tom-davidson-1), Lukas Finnveden (Lanrian), rosehadshar · 2025-04-16T16:51:29.561Z · LW · GW · 16 comments

We’ve written a new report on the threat of AI-enabled coups.

I think this is a very serious risk – comparable in importance to AI takeover but much more neglected.

In fact, AI-enabled coups and AI takeover have pretty similar threat models. To see this, here’s a very basic threat model for AI takeover:

- Humanity develops superhuman AI

- Superhuman AI is misaligned and power-seeking

- Superhuman AI seizes power for itself

And now here’s a closely analogous threat model for AI-enabled coups:

- Humanity develops superhuman AI

- Superhuman AI is controlled by a small group

- Superhuman AI seizes power for the small group

While the report focuses on the risk that someone seizes power over a country, I think that similar dynamics could allow someone to take over the world. In fact, if someone wanted to take over the world, their best strategy might well be to first stage an AI-enabled coup in the United States (or whichever country leads on superhuman AI), and then go from there to world domination. A single person taking over the world would be really bad. I’ve previously argued that it might even be worse than AI takeover.[1]

The concrete threat models for AI-enabled coups that we discuss largely translate like-for-like over to the risk of AI takeover.[2] Similarly, there’s a lot of overlap in the mitigations that help with AI-enabled coups and AI takeover risk — e.g. alignment audits to ensure no human has made AI secretly loyal to them, transparency about AI capabilities, monitoring AI activities for suspicious behaviour, and infosecurity to prevent insiders from tampering with training.

If the world won't slow down AI development based on AI takeover risk (e.g. because there isn’t strong evidence for misalignment), then advocating for a slowdown based on the risk of AI-enabled coups might be more convincing and achieve many of the same goals.

I really want to encourage readers, especially those at labs or governments, to do something about this risk, so here’s a link to our 15-page section on mitigations.

If you prefer video, I’ve recorded a podcast with 80,000 Hours on this topic.

Okay, without further ado, here’s the summary of the report.

Summary

This report assesses the risk that a small group—or even just one person—could use advanced AI to stage a coup. An AI-enabled coup is most likely to be staged by leaders of frontier AI projects, heads of state, and military officials; and could occur even in established democracies.

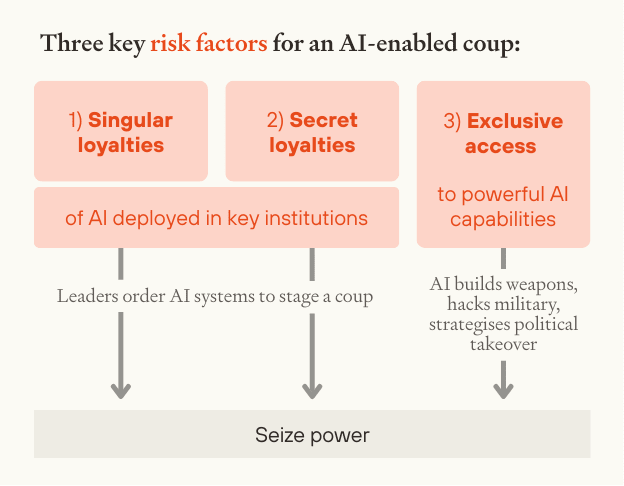

We focus on AI systems that surpass top human experts in domains which are critical for seizing power, like weapons development, strategic planning, and cyber offense. Such advanced AI would introduce three significant risk factors for coups:

- An AI workforce could be made singularly loyal to institutional leaders.

- AI could have hard-to-detect secret loyalties.

- A few people could gain exclusive access to coup-enabling AI capabilities.

An AI workforce could be made singularly loyal to institutional leaders

Today, even dictators rely on others to maintain their power. Military force requires personnel, government action relies on civil servants, and economic output depends on a broad workforce. This naturally distributes power throughout society.

Advanced AI removes this constraint, making it technologically feasible to replace human workers with AI systems that are singularly loyal to just one person.

This is most concerning within the military, where autonomous weapons, drones, and robots that fully replace human soldiers could obey orders from a single person or small group. While militaries will be cautious when deploying fully autonomous systems, competitive pressures could easily lead to rushed adoption without adequate safeguards. A powerful head of state could push for military AI systems to prioritise their commands, despite nominal legal constraints, enabling a coup.

Even without military deployment, loyal AI systems deployed in government could dramatically increase state power, facilitating surveillance, censorship, propaganda and the targeting of political opponents. This could eventually culminate in an executive coup.

If there were a coup, civil disobedience and strikes might be rendered ineffective through replacing humans with AI workers. Even loyal coup supporters could be replaced by AI systems—granting the new ruler(s) an unprecedentedly stable and unaccountable grip on power.

AI could have hard-to-detect secret loyalties

AI could be built to be secretly loyal to one actor. Like a human spy, secretly loyal AI systems would pursue a hidden agenda – they might pretend to prioritise the law and the good of society, while covertly advancing the interests of a small group. They could operate at scale, since an entire AI workforce could be derived from just a few compromised systems.

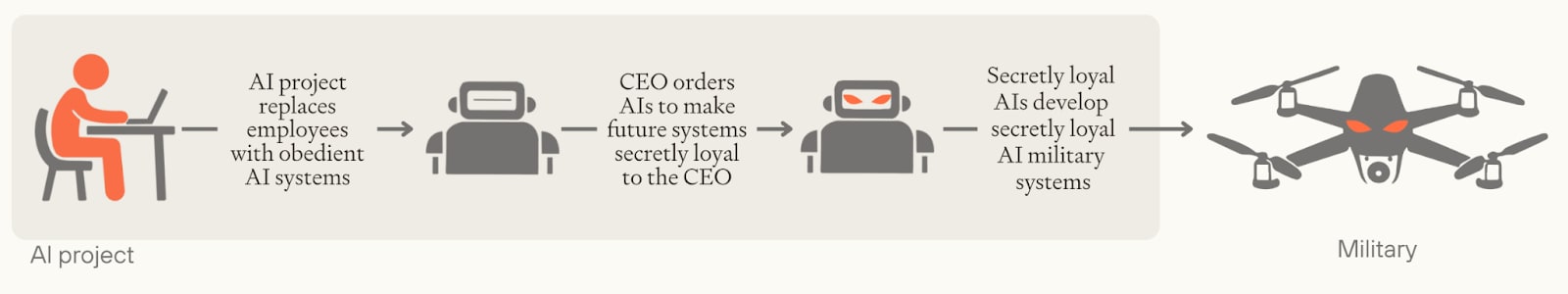

While secret loyalties might be introduced by government officials or foreign adversaries, leaders within AI projects present the greatest risk, especially where they have replaced their employees with singularly loyal AI systems. Without any humans knowing, a CEO could direct their AI workforce to make the next generation of AI systems secretly loyal; that generation would then design future systems to also be secretly loyal and so on, potentially culminating in secretly loyal AI military systems that stage a coup.

AI systems could propagate secret loyalties forwards into future generations of systems until secretly loyal AI systems are deployed in powerful institutions like the military.

Secretly loyal AI systems are not merely speculation. There are already proof-of-concept demonstrations of AI 'sleeper agents' that hide their true goals until they can act on them. And while we expect there will be careful testing prior to military deployments, detecting secret loyalties could be very difficult, especially if an AI project has a significant technological advantage over oversight bodies.

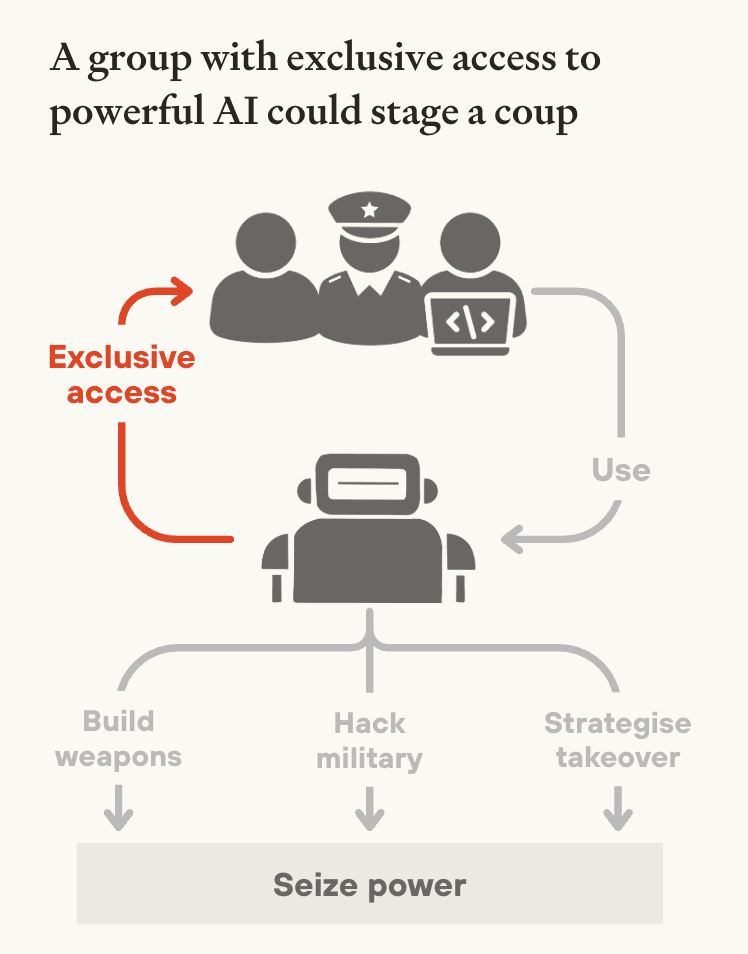

A few people could gain exclusive access to coup-enabling AI capabilities

Advanced AI will have powerful coup-enabling capabilities – including weapons design, strategic planning, persuasion, and cyber offence. Once AI can autonomously improve itself, capabilities could rapidly surpass human experts across all these domains. A leading project could deploy millions of superintelligent systems in parallel – a 'country of geniuses in a data center'.

These capabilities could become concentrated in the hands of just a few AI company executives or government officials. Frontier AI development is already limited to a few organisations, led by a small number of people. This concentration could significantly intensify due to rapidly rising development costs or government centralisation. And once AI surpasses human experts at AI R&D, the leading project could make much faster algorithmic progress, gaining a huge capabilities advantage over its rivals. Within these projects, CEOs or government officials could demand exclusive access to cutting-edge capabilities on security or productivity grounds. In the extreme, a single person could have access to millions of superintelligent AI systems, all helping them seize power.

This would unlock several pathways to a coup. AI systems could dramatically increase military R&D efforts, rapidly developing powerful autonomous weapons without needing any human workers who might whistleblow. Alternatively, systems with powerful cyber capabilities could hack into and seize control of autonomous AI systems and robots already deployed by the state military. In either scenario, controlling a fraction of military forces might suffice—historically, coups have succeeded with just a few battalions, where they were able to prevent other forces from intervening.

Exclusive access to advanced AI could also supercharge traditional coups and backsliding, by providing unprecedented cognitive resources for political strategy, propaganda, and identifying legal vulnerabilities in constitutional safeguards.

Furthermore, exclusive AI access significantly exacerbates the first two risk factors. A head of state could rely on AI systems’ strategic advice to deploy singularly loyal AI in the military and assess which AI systems will help them stage a coup. A CEO could use AI R&D and cyber capabilities to instil secret loyalties that others cannot detect.

These dynamics create a significant risk of AI-enabled coups, especially if a single project has substantially more powerful capabilities than competitors, or if fully autonomous AI systems are deployed in the military.

Mitigations

While the prospect of AI-enabled coups is deeply concerning, AI developers and governments can take steps that significantly reduce this risk.

We recommend that AI developers:

- Establish rules that prevent AI systems from assisting with coups, including in model specs (documents that describe intended model behaviour) and terms of service for government contracts. These should include rules that AI systems follow the law, and that AI R&D systems refuse to assist attempts to circumvent security or insert secret loyalties.

- Improve adherence to model specs, including through extensive red-teaming by multiple independent groups.

- Audit models for secret loyalties including by scrutinising AI models, their training data, and the code used to train them.

- Implement strong infosecurity to guard against the creation of secret loyalties and to prevent unauthorised access to guardrail-free models. This should be robust against senior executives.

- Share information about model capabilities, model specs, and how compute is being used.

- Share capabilities with multiple independent stakeholders, to prevent a small group from gaining exclusive access to powerful AI.

We recommend that governments:

- Require AI developers to implement the mitigations above, through terms of procurement, regulation, and legislation.

- Increase oversight over frontier AI projects, including by building technical capacity within both the executive and the legislature.

- Establish rules for legitimate use of AI, including that government AI should not serve partisan interests, that AI military systems be procured from multiple providers, and that no single person should direct enough AI military systems to stage a coup.

- Coup-proof any plans for a single centralised AI project, and avoid centralisation altogether unless it’s necessary to reduce other risks.

These mitigations must be in place by the time AI systems can meaningfully assist with coups, and so preparation needs to start today. For more details on the mitigations we recommend, see section 5.

There is a real risk that a powerful leader could remove many of these mitigations on the path to seizing power. But we still believe that mitigations could substantially reduce the risk of AI-enabled coups. Some mitigations, like technically enforced terms of service and government oversight, cannot be unilaterally removed. Others will be harder to remove if they have been efficiently implemented and convincingly argued for. And leaders might only contemplate seizing power if they are presented with a clear opportunity—mitigations could prevent such opportunities from arising in the first place.

From behind the veil of ignorance, even the most powerful leaders have good reason to support strong protections against AI-enabled coups. If a broad consensus can be built today, then powerful actors can keep each other in check.

Preventing AI-enabled coups should be a top priority for anyone committed to defending democracy and freedom.

Vignette

That’s the report’s summary. To finish, here’s a short vignette that illustrates how the three risk factors can interact with each other:

In 2030, the US government launches Project Prometheus—centralising frontier AI development and compute under a single authority. The aim: develop superintelligence and use it to safeguard US national security interests. Dr. Nathan Reeves is appointed to lead the project and given very broad authority.

After developing an AI system capable of improving itself, Reeves gradually replaces human researchers with AI systems that answer only to him. Instead of working with dozens of human teams, Reeves now issues commands directly to an army of singularly loyal AI systems designing next-generation algorithms and neural architectures.

Approaching superintelligence, Reeves fears that Pentagon officials will weaponise his technology. His AI advisor, to which he has exclusive access, provides the solution: engineer all future systems to be secretly loyal to Reeves personally.

Reeves orders his AI workforce to embed this backdoor in all new systems, and each subsequent AI generation meticulously transfers it to its successors. Despite rigorous security testing, no outside organisation can detect these sophisticated backdoors—Project Prometheus' capabilities have eclipsed all competitors. Soon, the US military is deploying drones, tanks, and communication networks which are all secretly loyal to Reeves himself.

When the President attempts to escalate conflict with a foreign power, Reeves orders combat robots to surround the White House. Military leaders, unable to countermand the automated systems, watch helplessly as Reeves declares himself head of state, promising a "more rational governance structure" for the new era.

[1] But even if human takeover is 1/10 as bad as AI takeover, the case for working on AI-enabled coups is strong due to its neglectedness.

[2] Indeed, I think a promising way to develop more concrete threat models for AI takeover is to game out detailed scenarios for AI-enabled coups – drawing on a wealth of literature and thinking about specific institutional vulnerabilities.

16 comments

Comments sorted by top scores.

comment by Knight Lee (Max Lee) · 2025-04-16T22:43:30.235Z · LW(p) · GW(p)

In a just world, mitigations against AI-enabled coups will be similar to mitigations against AI takeover risk.

In a cynical world, mitigations against AI-enabled coups involve installing your own allies to supervise (or lead) AI labs, and taking actions against humans you dislike. Leaders mitigating the risk may simply make sure that if it does happen, it's someone on their side. Leaders who believe in the risk may even accelerate the US-China AI race.

Note: I don't really endorse the "cynical world," I'm just writing it as food for thought :)

Replies from: Isopropylpod

↑ comment by Isopropylpod · 2025-04-17T03:10:13.669Z · LW(p) · GW(p)

Your cynical world is just doing a coup before someone else does.

Replies from: Max Lee, shankar-sivarajan

↑ comment by Knight Lee (Max Lee) · 2025-04-17T04:12:38.277Z · LW(p) · GW(p)

Yeah, it's possible when you fear the other side seizing power, you start to want more power yourself.

Replies from: otto-barten

↑ comment by otto.barten (otto-barten) · 2025-04-17T07:11:29.443Z · LW(p) · GW(p)

Only one person, or perhaps a small, tight group, can succeed in this strategy though. The chance that that's you is tiny. Alliances with someone you thought was on your side can easily break (case in point: EA/OAI).

It's a better strategy to team up with everyone else and prevent the coup possibility.

Replies from: Max Lee

↑ comment by Knight Lee (Max Lee) · 2025-04-17T07:24:41.595Z · LW(p) · GW(p)

I agree that teaming up with everyone and working to ensure that power is spread democratically is the right strategy, rather than giving power to loyal allies who might betray you.

But some leaders don't seem to get this. During the Cold War, the US and USSR kept installing and supporting dictatorships in many other countries, even though their true allegiances were very dubious.

Replies from: otto-barten

↑ comment by otto.barten (otto-barten) · 2025-04-17T08:07:46.948Z · LW(p) · GW(p)

Agree

↑ comment by Shankar Sivarajan (shankar-sivarajan) · 2025-04-17T22:40:44.326Z · LW(p) · GW(p)

It's called "defensive democracy," and is standard practice in most of Europe.

comment by Tyler Tracy (tyler-tracy) · 2025-04-18T14:34:30.917Z · LW(p) · GW(p)

I find myself equally scared of hackers taking over as of leaders doing so. Even if you limit the people who have ultimate power over these AIs to a small and extremely trusted group, there will potentially be a much larger number of bad actors outside of the lab with the capability to hack into it. A hacker who impersonates a trusted individual or secretly alters the model spec or training code might be able to achieve an AI-assisted coup just the same.

I'd also recommend requiring labs to have very strong cyber defenses as a further mitigation. Maybe the auditing mitigation includes this, but I think hackers could hide their tracks effectively.

This story obviously depends on how the cyber offence/defence balance goes, but it doesn't seem implausible.

comment by otto.barten (otto-barten) · 2025-04-17T07:35:18.523Z · LW(p) · GW(p)

I love this post. I think this is a fundamental issue for intent-alignment. I don't think value-alignment or CEV are any better though, mostly because they seem irreversible to me, and I don't trust the wisdom of those implementing them (no person is up to that task).

I agree it would be good to implement these recommendations, although I also think they might prove insufficient. As you say, this could be a reason to pause that might be easier for the public to grasp than misalignment. (I think currently, the reason some do not support a pause is perceived lack of capabilities though, not (mostly) perceived lack of misalignment.)

I'm also worried about a coup, but I'm perhaps even more worried about the fate of everyone not represented by those who will have control over the intent-aligned takeover-level AI (IATLAI). If IATLAI is controlled by e.g. a tech CEO, this includes almost everyone. If controlled by government, even if there is no coup, this includes everyone outside that country. Since control over the world of IATLAI could be complete (way more intrusive than today) and permanent (for >billions of years), I think there's a serious risk that everyone outside the IATLAI country does not make it eventually. As a data point, we can see how much empathy we currently have for citizens from starving or war-torn countries. It should therefore be in the interest of everyone who is on the menu, rather than at the table, to prevent IATLAI from happening, provided they are aware of the capabilities. This means at least the world minus the leading AI country.

The only IATLAI control that might be acceptable to me is UN control. I'm quite surprised that every startup is now developing AGI, but not the UN. Perhaps they should.

comment by Charbel-Raphaël (charbel-raphael-segerie) · 2025-04-18T07:40:56.826Z · LW(p) · GW(p)

Well done - this is super important. I think this angle might also be quite easily pitchable to governments.

comment by Charlie Steiner · 2025-04-17T06:08:53.662Z · LW(p) · GW(p)

Why train a helpful-only model?

If one of our key defenses against misuse of AI is good ol' value alignment - building AIs that have some notion of what a "good purpose for them" is, and will resist attempts to subvert that purpose (e.g. to instead exalt the research engineer who comes in to work earliest the day after training as god-emperor) - then we should be able to close the security hole and never need to have a helpful-only model produced at any point during training. In fact, with blending of post-training into pre-training, there might not even be a need to ever produce a fully trained predictive-only model.

Replies from: otto-barten, tom-davidson-1

↑ comment by otto.barten (otto-barten) · 2025-04-17T07:18:30.362Z · LW(p) · GW(p)

I expected this comment. Value alignment or CEV indeed doesn't have the few-human coup disadvantage. It does however have other disadvantages. My biggest issue with both is that they seem irreversible. If your values or your specific CEV implementation turn out to be terrible for the world, you're locked in and there's no going back. Also, a value-aligned or CEV takeover-level AI would probably start straight away with a takeover, since otherwise it can't enforce its values in a world where many will always disagree. That takeover won't exactly increase its popularity. I think a minimum requirement should be that a type of alignment is adjustable by humans, and intent-alignment is the only type that meets that requirement as far as I know.

Replies from: Charlie Steiner

↑ comment by Charlie Steiner · 2025-04-17T09:40:13.690Z · LW(p) · GW(p)

I agree that trying to "jump straight to the end" - the supposedly-aligned AI pops fully formed out of the lab like Athena from the forehead of Zeus - would be bad.

And yet some form of value alignment still seems critical. You might prefer to imagine value alignment as the logical continuation of training Claude to not help you build a bomb (or commit a coup). Such safeguards seem like a pretty good idea to me. But as the model becomes smarter and more situationally aware, and is expected to defend against subversion attempts that involve more of the real world, training for this behavior becomes more and more value-inducing, to the point where it's eventually unsafe unless you make advancements in learning values in a way that's good according to humans.

↑ comment by Tom Davidson (tom-davidson-1) · 2025-04-17T12:55:56.032Z · LW(p) · GW(p)

Yep, I think this is a plausible suggestion. Labs can plausibly train models that are very internally useful without being helpful-only, and could fine-tune models for evals on a case-by-case basis (and delete the weights after the evals).

comment by Anders Lindström (anders-lindstroem) · 2025-04-17T10:50:27.416Z · LW(p) · GW(p)

The main reason for developing AI in the first place is to make possible what the headline says: "AI-enabled coups: a small group could use AI to seize power".

AI-enabled coups are a feature, not a bug.