Live Machinery: An Interface Design Philosophy for Wholesome AI Futures

post by Sahil · 2024-11-01T17:24:09.957Z · LW · GW · 3 comments

Contents

Fluid interfaces for sensemaking at pace with AI development.

0. What is this?

Huh?

Wait, so you think AI can only be mildly intelligent?

But you only care about the short term, of “mild intelligence”?

So you don’t care about risks?

1. What is Live Machinery / autostructuring?

Okay, I’m sold. Are we going to build some AI tools?

“Wishes and prayers”?! Until that last bit I was hopeful you were doing the opposite of “build an AGI god” thing that everyone seems to end up at!

So AI is going to do the meaning-making for me?

What’s an example of a wish-based, postformal interface?

Preface for the table.

Table of Contrasting Examples

Cool! Do I get to slap a chatbot on something to make a Talk-To-Your-X interface?

2. Who am I (the participant) and what am I going to do in the workshop?

What is the schedule of my participation?

How do I sign up?

The main invitation ends here, and what follows is reference material for the above. I'd recommend reading it if you'd enjoy more texture. The appendix, especially

3. What is Opportunity Modeling?

Are we going to extrapolate trendlines and look at scaling laws?

Is this d/acc?

How do you do opportunity modeling?

Why are you excited about the top-middle sextant in particular?

Wait, why are there “sextants” again?

4. What is Live Theory?

Oooh, more detail please? Is this automation of research?

Can you give me an example?

So LLMs can help create formalisms. What’s the big deal?

Is the whole goal about enhancing and augmenting conceptual research?

This is dumb and not how theories are made?

Okay, again in different words then?

Can I have more vague slogany words about the whole vision, then, that I can keep in the back of my mind while we do very concrete things?

Live theory in oversimplified claims:

Claims about AI.

Claims about theorization.

Assorted connections between Live Theory and Opportunity Modeling

Design Principles

I. Design Recipes

II. Design Principles

A. Prayer-based

B. Non-modular

C. Seamless

D. Ownership

E. Pluralism

F. Acausality

G. Sane, Soothing, Wholesome

III. Extended Table Preface


Fluid interfaces for sensemaking at pace with AI development.

- Register interest here for the workshop in November 2024 (or to be invited to future workshops). To know some practical details, go to section 2 [? · GW].

- Click here to help raise funds for the workshop and related events.

- Apply for the AI Safety camp 2025 project on live machinery in tech and governance.

- To learn more about the broader vision and engage with this strange community, send me a DM.

Disclaimer: this is both an invitation and something of a reference doc. I've put the more reference-y material in collapsible sections at the end. The bold font is for easier skimming.

0. What is this?

This is an extended rendezvous for creating culture and technology, for those enlivened by reimagining AI-based interfaces.

Specifically, we’re creating for the near future where our infrastructure is embedded with realistic levels of intelligence (i.e., only mildly creative but widely adopted) yet full of novel, wild design paradigms anyway.

In particular, we’re going to concretely explore wholesome interface designs that anticipate a teleattention era, where cheap attentivity implies unstructured meanings can scalably compose without requiring shared preformed protocols, because moderate AI [LW · GW] reliably does tailored, just-in-time “autostructuring”.

The focus (which we will get to, with some patience) is on applying this to transforming research methodology and interaction [LW · GW]. This is what “live theory” [? · GW] is about: sensemaking that can feed into a rich, sane, and heart-opening future.

Huh?

It’s a project for AI interfaces that don’t suck, to redo how (conceptual AI safety) research is done.

Wait, so you think AI can only be mildly intelligent?

Nope.

But you only care about the short term, of “mild intelligence”?

Nope, the opposite. We expect AI to be very, very, very transformative. And therefore, we expect intervening periods to be very, very transformative (as should you!). Additionally, we expect even “very transformative” intervening periods to be crucial, and quite weird themselves, however short they might be.

In preparing for this upcoming intervening period, we want to work on the newly enabled design ontologies of sensemaking that can keep pace with a world replete with AIs and their prolific outputs. Using the near-term crazy future to meet the even crazier far-off future is the only way to go.

So you don’t care about risks?

Nope, the opposite. This is all about research methodological opportunities meeting risks of infrastructural insensitivity [? · GW]. As you’ll see in the last section [LW · GW], we will specifically move towards adaptive sensemaking meeting super adaptive dangers.

Watch a 10-minute video here for a little more background: Scaling What Doesn’t Scale: Teleattention Tech.

1. What is Live Machinery / autostructuring?

Autostructuring is about moving into fresh paradigms of post-formal design, where we scale productive interactions not via replicating fixed structure but via ubiquitous attentive infrastructure. That’s a dense sentence, so let’s unpack.

The only way to scale so far has been cookie-cutter replication of structure.

For example, this text, even though quite adjustable, comes in a relatively fixed formatting scheme. Bold, italics and underline have somehow become the universal three options on text. They’re so ingrained that we rarely even imagine that we might need anything else. If you extend this yourself by creating a new style called “wave” or “overline”, you can’t copy it into a google doc and expect it to work. Who knows whether they’ll be wanted. Almost no fonts will be compatible, because they weren’t given any notice about “wave” or “overline”. People won’t know how or when to use it either.

But maybe you would still like to use an “overline” style on your favorite fonts. Unfortunately, you don’t matter to the intermediating machines. It’s too expensive to take care of your whims. What happens when you share the doc with us? We’ll all have to add everyone’s crap to our shared schema, if you get your way. It just doesn’t scale.

…unless we don’t have to share structure. Unless you can have a custom interface designed for you, attentive to your needs. AI might take minutes to do that now, cost a ton, and execute unreliably without feedback. But the cost, latency, and error rate are falling rapidly.

Most importantly, a tailormade interface could fulfill not just your individual structuring wishes around the doc, but also attend to interoperating wishes. Intelligently displaying or substituting overline with underline for my view of your doc could be something it comes up with. Instead of having a fixed universal format, we could have a plethora of self-maintaining, near-instantaneous translations between the local structures that we each are happy with.
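To make the overline-to-underline substitution concrete, here is a deliberately rigid toy in Python. Everything in it is a hypothetical stand-in: the `render_for` function, the span format, and especially the hand-written `fallbacks` table, which plays the role that this post expects attentive AI to fill just-in-time and per reader. It only shows the shape of the idea: the writer keeps their local style, and the reader's view is translated rather than everyone sharing one schema.

```python
def render_for(spans, supported, fallbacks):
    """Rewrite (text, style) spans so every style is one the reader's view supports.

    `fallbacks` is a hypothetical stand-in for the just-in-time judgment
    an attentive interface would supply; here it is a plain lookup table.
    """
    rendered = []
    for text, style in spans:
        seen = set()
        # Follow the fallback chain until we reach a supported style, run out, or loop.
        while style is not None and style not in supported and style not in seen:
            seen.add(style)
            style = fallbacks.get(style)
        if style not in supported:
            style = None  # no acceptable translation found: show plain text
        rendered.append((text, style))
    return rendered


# The writer's document uses a local "overline" style the reader's view lacks.
writer_spans = [("Live theory", "overline"), (" is ", None), ("postformal", "bold")]
reader_supported = {"bold", "italic", "underline"}
reader_fallbacks = {"overline": "underline", "wave": "italic"}

reader_view = render_for(writer_spans, reader_supported, reader_fallbacks)
print(reader_view)
```

The writer's document is never changed; only the reader's view is computed. A static table like this is exactly the kind of fixed shared structure the post argues we can move beyond, so treat it as a placeholder for the attentive part, not as a proposal.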

That’s a very simple example interface of what this new, anticipative design paradigm is about. Anti-hype around the powers of AI in the next few years, but hype around the powers of designing AI ubiquitously into our infrastructure. When we have attentive, interoperating infrastructure that can, say, generate an entire UI from scratch in as much time as it takes to open a website (which we’re certainly heading towards), we don’t need duplication of fixed/universal structure. Instead of distributing some finalized formal specification (like code), we can distribute and match informal suggestions of meaning (like comments or prompts) that immediately turn into formal outputs as needed.

This expands scope and fluidity, without losing interoperability. Instead of forcing human subtlety to conform to fixed shared structure just to be able to interoperate with each other, AI can autostructure: auto-adapt to, co-create with, and harmonize with the rich subtleties of specific living individuals and their communities.

Our eventual aim is to apply this interface-design-philosophy to postformal sensemaking, especially for mitigating AI risk. We aim to see whether we can do better than the rigid machinery of formal equations, which means redesigning the machinery (i.e. interfaces, infrastructure, methodology) underlying formal theories to take advantage of AI assistance. This is termed live theory [? · GW].

This is an ambitious goal, so first we will cut our teeth on (useful) interfaces in general, not mainly theorization or sensemaking, so as to gain familiarity with the design philosophy. The last section of this post is a primer on “live theory”. [LW · GW]

Okay, I’m sold. Are we going to build some AI tools?

Not just tools; you build culture. You build the new design philosophies of interfaces and interaction, when you can do things that don’t scale, at scale [LW · GW]. This is not at all what we're used to, and so it has barely shown up in the tools available today, despite the AI hype (maybe even because of the hype).

Of primary interest here is this new ability to scale attentivity/sensitivity [LW · GW] enabled by the wish-fulfilling intelligences that will quickly become ubiquitous in our infrastructure. The wide availability of even mild but fast and cheap intelligence supports scalable detail-orientation and personal attention that can take on, and even obviate, structuring demands from humans (although we’re still free to do the structuring ourselves).

Being able to autostructure things as easily as one might do, say, a lookup today undermines a lot of the fundamental design assumptions about how data, protocols, interfaces, information, and abstraction operate. Each of these basic ideas was shaped in a time when we had to rely on fixed formalisms and logistical structure to transport our digital goods.

Without any hype, it is possible to say that we’re entering a “postformal” era where you don’t have to formalize to make things workable. Comments are often as good as code (increasingly so, as tech improves), maybe even better and more flexible. This holds the possibility of moving our interfaces away from abstracted commands and controls and into subtle wishes and prayers.

“Wishes and prayers”?! Until that last bit I was hopeful you were doing the opposite of “build an AGI god” thing that everyone seems to end up at!

Yes, this is very much not about building an AGI god.

Here’s a definition of “prayer” apt for infrastructure that is mildly adaptive but widely adopted.

"Prayer", not as in "pray to an AI god", but "prayer" as in "send out a message to a fabric of intelligence without trying super hard to control or delineate its workings in detail, in a way that is honest to you rather than a message controlled/built for someone or something else."

When machines can actuate potential ideas from 1 to 100 nearly instantaneously, most of the work of living beings will be to supply heartfelt relevance, meaning, vision; the 0 to 1. [1] (Note that being able to generate “ideas” is not the same as being clued in to relevance; mildly intelligent AI can be of great help with ideas, but can lack subtle connection to meaningfulness and be boring or hallucinatory or deceptive.)

However, we living beings will assist the wish-fulfilling machine apparatus that will surround us, especially while we’re still in the mild-to-moderate intelligence levels. This will not look like detailed modular commands or recipes (nor genies that require no interaction at all) but context-sensitive hints and anchors that will feed into the AI-assisted actuation.

So AI is going to do the meaning-making for me?

No. This is neither about you, nor the AI.

Meaning happens in the middle, between us. Existing machinery both supports (because of scale and speed) and hinders (because of homogenization and fixity) the dynamics of rich meaning.

Trying to zero in on the relationship between you and AI bots is missing the point. There are no endless spammy AI bots in this vision. Just because it is cheap to generate AI content, doesn’t mean you should spam the user and abdicate responsibility for tasteful design.

This is about how you and I will relate to each other via AI as mostly-ignorable infrastructure. Like the internet, which is rarely the topic of conversation even in your internet-mediated video calls, and only foregrounded when the internet doesn't work right for a few seconds.

So this is about matching your postformal prayers with mine, and fluidly creating just-in-time interfaces and structures that precisely suit our wishes. This means interfaces do not have to work with tradeoffs that come with preformed/universal structures. They are free to be specific to the heterogeneous context, thanks to attentive infrastructure.

What’s an example of a wish-based, postformal interface?

Check out the table below [? · GW] for a mix of ideas. Skip directly to the table if you’re tired of abstract prefacing.

Preface for the table.

If you do have more patience, some more on the table and the two most important columns:

- Table Format

- The zeroth column is the problem area. Each row is a particular problem area, usually involving some kind of logistics of distribution of meaning, followed by particular design frames for solutions.

- The first column is the formal approach, which involves informational packaging before exporting. This is the default of using thoughtful but fixed structure to make it possible to move information and meaning around, and until recently, the only way to scale information products.

- The second column is the “tacked on” AI or the lazy approach. This is the way most incorporate AI today, and serves as a useful contrast. It's usually chatbots, translations, or summaries.

- The third column is the postformal or live way of designing things with AI, which is the style we’ll explore. The fourth column then provides additional commentary for this third column.

- Lazy vs Live

- Sometimes lazy design does not seem too far from the postformal ones. This is expected, of course, as these possibilities become clearer to everyone. The difference between live and lazy is in whether it anticipates designing for AI technology, in whatever form, to become extremely cheap, fast, integrated. If it shies away from that, it might not be taking the future seriously enough.

- A column omitted for the sake of space is the “AGI” column, which is also lazy. It is the other extreme of unimaginativeness. Expecting a silver bullet for literally everything that excludes anything else is a design black hole. So both ends of “just add AI to what we’ve always done” and “just let AGI do anything there is to be done” are vague and unspecific. The rich middle [LW · GW] between these two extremes is where reality and thoughtfulness lie. You’ll get a taste of these in the table.

“Reality” doesn’t mean everything has to be buildable right away. In fact, when tech picks up speed (as is underway), anticipations and potentials become more and more real, both in terms of relevance and shaping of the future.

Building to anticipated adaptivity (i.e., finishing ability, to fulfill wishes with executional excellence) in our machinery + adoption (i.e. wishing ability, a collective culture of wholesome wishing) can foster beautiful dances.

- A quick overview of what to look for in the examples. An extended explanation of these adjectives is at the bottom of the post [LW · GW].

[Contrasting descriptors of live and lazy]

| AI “tacked on” (or lazy) | AI postformal (or live) |

| unscoped | specific |

| supplements formalism | supplants formalisms |

| un- or over-collaborative | collaborative |

| bottlenecked by modularity | seamless and exploits contextuality |

| centralized meaning | peer-to-peer meaning |

| mass production | tailormade production |

| single-use tool | ongoing interfacing layer |

| single-distribution | pluralistic-distribution |

| decontextualizing | recontextualizing |

Table of Contrasting Examples

The ones in orange are not representative, only warm-ups chosen for their familiarity and simplicity. The ones in green are the main ones.

| A specific problem | Formal (non AI) Structuring | AI “tacked on” (or lazy) | AI postformal (or live) Pay attention to this column. | Additional Commentary |

| Device authentication. | Enter a keycode or password. | [thankfully, we probably skipped this as a civilization] | Face/fingerprint detection. | These three are non-representative but simple examples that already exist, just to warm up on the columns. |

| Talking to people across the world. | Everybody learns a common language, like English. | AI translation tools are integrated into video calls so everyone can speak and hear in their own language. | | |

| Text input into a device. | Discrete keypresses on a keyboard. | Speech-to-text. | Swipe on keyboard. | |

| Disseminating information to the public. | Books and posts written as formal publications. | Reader uploads a book to an AI chatbot, and tends to ask for summaries or clarifications. | The writer writes not a book, but a book-prompt that carries intuitions for the insights. An economics textbook-prompt, for example, could contain pointers to the results and ideas but the language, examples, style would combine with readers’ backgrounds (and prayers) to turn into independent textbooks for each reader. Importantly, this frees up producers of books from having to straddle and homogenize the range of audiences they might want to speak to | This is a bit like text reflow, but for content. Endless atomizing dialogue is avoided, so you still get a book experience. They don't have to be independent books either, and can be interdependent. Commentary on your own book can be translated to someone else's book. |

| Organizing information for yourself. | Put text and media in files, put files in folders, index them and make them searchable. | Talk-to-your-data & RAGs (retrieval-augmented generation) let you retrieve your information in natural language and interact with it. Smartly suggested tags for your notebook. | Having to “place” information in “containers” is unnecessary rigidity of structure. Instead, you dump information and insights as needed in one “place”, with relationships and indexing hints/prayers produced in collaboration with AI that leaves flow uninterrupted. These hint-annotations can be used to make the store and retrieve actions more fluidly entangled. Views and attention-cycles over this information are created for you just-in-time, replacing permanent structure. | Frequencies of stability of structure and cycling content will be an enduring artform that can easily turn predatory if not left to be an artform. |

| Privacy in publishing the outputs of conversation. | Manually create formal output and omit private stuff; or publish recording but use formal tools of pausing/editing | Have an AI-generated summary of conversation and prompt it to delete private seeming details. Then distribute this common output via your blog or paper. | In the midst of conversation, talk fluidly but occasionally place a “wish” of “privacy here” to listening AI, whenever it seems relevant. On demand from a specific ‘consumer of the conversation’, the AI combines these hints and background details to produce just-in-time outputs, that regenerate the private information so as to omit details but preserve meaning and rhythm. | The fluid nature of post-formal interfaces means you can stream everything all the time. Privacy can be applied automatically, as personal AI trains on your personal privacy-needs, so fewer annotations are needed over time. GenAI can handle privatizing of even faces, say, and replace them with randomly generated ones. |

| Mathematics and theorization generally, for distributing insight. | Create formal definitions and systems that capture invariants. | AI proof assistants that help with the process from taking mathematical formalisms (that still capture context-independent invariants) to computerized proof-formalisms that everyone can use. | Instead of crafting formalisms, humans craft formalism-prompts that serve as anchoring insight more than any formalism can. These prompts become relevant formalisms and definitions near-instantaneously for any local application, created in a context-sensitive way with the help of AI. They don't have to be formally compatible, and so can scaffold specifics that aren’t capturable by invariants. They can interoperate translation of private notation and generated distillations. | The next section on Live Theory covers this. The new kinds of reliability (proofs) and interoperation (correspondences), themselves would be postformal, and are still under conceptual investigation. End-to-end functional yet reliable sensemaking in the far enough future might replace systematization entirely. |

| Regulation | Codification of law; regulations are static, uniform and universal. E.g. a building code | A chat-bot interface for the regulations for home-builders to talk to. E.g. a building code chatbot | Regulations used to articulate specific objectives; these can be specialized to local context. E.g. 90% of new houses should be able to withstand 1-in-100 year natural disasters. You communicate your building plans to the AI, which can then advise if they are adequate to the area. If not, it can recommend improvements. | Live Governance is a whole stream here, with more detail and background in the link. For AI policy especially, it might make it easier to do substrate-sensitive risk management of AI catastrophe. |

Cool! Do I get to slap a chatbot on something to make a Talk-To-Your-X interface?

No, you will be disqualified and blackmailed. Chatbots are considered a thoughtcrime in this workshop.

In seriousness, we’re looking for interfaces that aren’t endlessly nauseatingly demanding of the user under the pretext of “choice”. We want to provide the user with some fluid, liberating constraints. Surely we can look further than just “do what you’ve always been doing, but also query a chatbot”.

Here are some questions that highlight our design focus (scroll down for more), although not comprehensive for obvious reasons:

- Is it live, rather than lazy?

- Is the problem space specific?

- Does it innovate on locating an implicit fixed structure that we didn't even realize is optional if AI is involved? Or did it do barely better than a chat/summarization interface?

- How much is it prepared to take advantage of i) increased adaptivity and ii) increased adoption in the future?

- Does it embrace new cultures of interaction, rather than just new tech? Does it manage to not be endless generative spam?

- If there is dystopic potential of the tech, does it seem to notice and mitigate or transmute that?

Some more specific design principles, with examples, are in the appendix.

2. Who am I (the participant) and what am I going to do in the workshop?

As mentioned above, participants are invited to imagine and build

not just tools; you build culture. You build the new design philosophies of interfaces, when you can do things that don’t scale, at scale [LW · GW].

You are a good fit if any of these apply to you:

- Vibed with this post for some reason / felt like you were waiting for this, and willing to stick around for 6 days to find out why

- Excited and/or concerned about the future, tired of doomerism and e/acc, bored of both incremental stories and overhype for AI, and want some vivid details and texture for the actual transformations in the future dammit

- Have looked at some approaches to AI alignment, bemoan the overindexing on current paradigm, charmed by mathematical, conceptual, foundational advances but skeptical of relevance to impact

- Are annoyed by bureaucratic, homogenizing, or centralizing patterns in all the things that matter to you, and open to seeing the deeper manifestations of these in operation... or are generally energized by pioneering honesty

- Are at the intersection of/ever-ready to skillfully marry: metaphysics & engineering, computer science & sociobiological lenses, rigor & ritual, the explicit & the implicit

- Are a nerd about meta-systematicity or adult development or collective sensemaking

- Are bored of abstract conversations about wisdom and sensemaking and relationality and fluidity

- Can easily recall fundamental shifts in your view of the world and have an appetite for/midwifing interest in inchoate emergence

- Say things like “I’m not really a rationalist” or “I’m not really an EA” or similar yet instinctively apply acausal decision theory to your donations

- See everything as code/computation, breathe Curry-Howard, and are now curious about trying to see everything as comments too

- Hate technology, have a meditation practice, excited about the truth of your body, tired of people conflating pain with suffering and thinking with awareness, or generally spy the significance of aesthetics and salience and relationality especially in mindlike phenomena

- Fond of conceptual research, have any experience in any kind of design or engineering

- Triggered yet compelled, or need to rant about how this whole agenda is simultaneously undesirable, impossible, incomprehensible

- Don’t understand or think you can contribute to any of this, but you’d love to listen to or be in the presence of such work

- Aspire to cultivate the deepest respect for beings

The only real requirements are open-mindedness and integrity. It might look STEM-centered, but it’s no prerequisite. Some experience with, or even just valuing of systematicity is greatly helpful though.

What is the schedule of my participation?

This is a 6-day workshop, from November 21st to 26th, happening at the EA Hotel in Blackpool.

A detailed schedule will be provided to the selected participants. We will engage in at least the following, with some more info in the rest of this post:

- Design & build tech: Designing interfaces that embody the live philosophy, guided by the design recipes [? · GW], the design principles [? · GW], the design pillars [LW · GW], and the submission template. This includes ideation and prototyping.

- Co-create culture: where we begin practicing and experimenting with the shared use of teleattention technology before it exists by recording informal wishes (mundane ones, like “make this section of the recording private”) to be fed into near future AI for enactment. Information cryonics!

- Opportunity Modeling [? · GW], where we acknowledge the responsibility of anticipative design, in order for plans to be resilient to rapidly changing reality. There are some simple, specific frameworks for this, with some info below.

- Live Theory [? · GW] will be the major focus: discussing and experimenting with proofs-of-concept for redoing theoretical machinery. Again, see below for a brief and largely insufficient primer, or keep an eye on the sequence that this is a part of [? · GW].

- Relatedly, expect to be paired with some grizzled conceptual alignment researchers, by sitting in on meetings and seeing if there is any “ResearchOps” assistance that can be provided that can remain relatively seamless.

You can also expect

- Relational subtlety and presence-centered group processes

- Conversations that respect the distinction between meaningful and formal

- A fair amount of slack

A report on previous hackathons in this paradigm is being processed and will be linked here soon.

How do I sign up?

Register interest here for the workshop in November 2024 (or to be invited to future workshops).

The main invitation ends here, and what follows is reference material for the above. I'd recommend reading it if you'd enjoy more texture. The appendix, especially

Opportunity Modeling

3. What is Opportunity Modeling?

Threat models fix some specific background to enable dialogue about anticipated threats. Opportunity modeling is that, but for opportunities.

It is especially important to practice dreaming and wishing when we expect dreaming and wishing to be a crucial part of the interfaces of the future. It’s also negligent not to incorporate radical opportunities in any plans even for the very near future, since it is hard to imagine a world that remains basically unchanged from rapid progress in AI.

As I wrote elsewhere [LW(p) · GW(p)]:

Generally, it is a bit suspect to me, for an upcoming AI safety org (in this time of AI safety org explosion) to have, say, a 10 year timeline premise without anticipating and incorporating possibilities of AI transforming your (research) methodology 3-5 years from now. If you expect things to move quickly, why are you ignoring that things will move quickly? If you expect more wish-fulfilling devices to populate the world (even if only in the meanwhile, before catastrophe), why aren't you wishing more, and prudently? An “opportunity model” is as indispensable as a threat model.

Are we going to extrapolate trendlines and look at scaling laws?

Nah. There are plenty of such efforts. We’re interested in quantitative changes that are a qualitative change.

Imagine extrapolating from telegraph technology to the internet, and saying to someone "sending packets of messages has gotten really cheap, fast, adopted" and nothing more. This is technically true as a description of the internet (which only moves around packets of information), but it would not paint a picture of remote jobs and YouTube and cryptocurrency. All the new kinds of meaning and being that are enabled by this quantity-that-is-a-quality would be missed by even fancy charts. The focus here is to capture what would be missed.

Is this d/acc?

Heavily so in spirit, in all the senses of “d”, but very different in terms of the mainstream d/acc solution-space.

The crypto scene, for example, tends to focus on disintermediation of storage, by exploiting one-way functions. A consensus on "storage" is needed for shared ledgers, money, contracts, etc. The consensus is bootstrapped via clever peer-to-peer mechanism design that doesn't suffer from centralized failures such as tyranny, whimsicality, shutdown.

Live machinery (etc) tends towards disintermediation of structure, using attentivity at scale. There is no consensus on structure needed, but some "post-rigor rituals" and deliberate harmonizing can stabilize, anchor, and calm the nausea and hypervigilance of the future; tripsit for civilization tripping on the hinge of history. By default, here you're free to supply your meaning in its fluidity, without having to bend to the relatively deadened "structural center" (i.e., the invariants across the interoperation). This is connection without centralization, peer-to-peer on meaning.

In some ways, crypto tends to double down on consensus-backed storage, whereas live machinery tends to be anti-storage owing to its anti-stasis commitment, bypassing the need for and salience of structure, except on the many occasions where it happens to be useful.

Regardless, both further free living beings to not have to conform/stockholm their subtle richness to necessary-seeming logistical structure for interoperation.

How do you do opportunity modeling?

We can explore a variety of ways of doing it. However, for the purposes of this workshop, we’re going to plot ideas/projects/movements/proposals along two axes: adaptivity and adoption.

Any proposal goes somewhere in the two dimensions, depending on the proposal’s requirements along each, and can also be pulled in each direction for anticipative design.

The horizontal axis refers to the needed adaptivity/intelligence of the machines for the proposal to work. It’s not too off to think of this as an axis of “time”, assuming steady progress in AI capabilities. If the idea requires very little to no intelligence in the AI, it goes near the left end. If the proposal seems like it is basically AGI, it goes on the boring/shameful far right end. You could think of this as an axis for advancement of tech.

The vertical axis refers to the needed adoption by the beings who interface with the machines for the proposal to work. This measures what becomes possible when an increasing number of people (and scaffolding) are involved in using the intelligence-capable tech. Some innovations (such as broad cultural changes) require many people to host them and would thus belong higher up on the vertical axis. Other ideas, such as a tool you build for yourself requiring no buy-in from anyone other than yourself, would belong near the bottom. You could think of this as an axis for advancement of culture.

The idea is to be ready for opportunities before they present themselves, so we can have more thoughtful and caring designs rather than lazy lock-ins. This includes being more prepared for your GPT-wrapper to be undermined by an Anthropic update in four months.

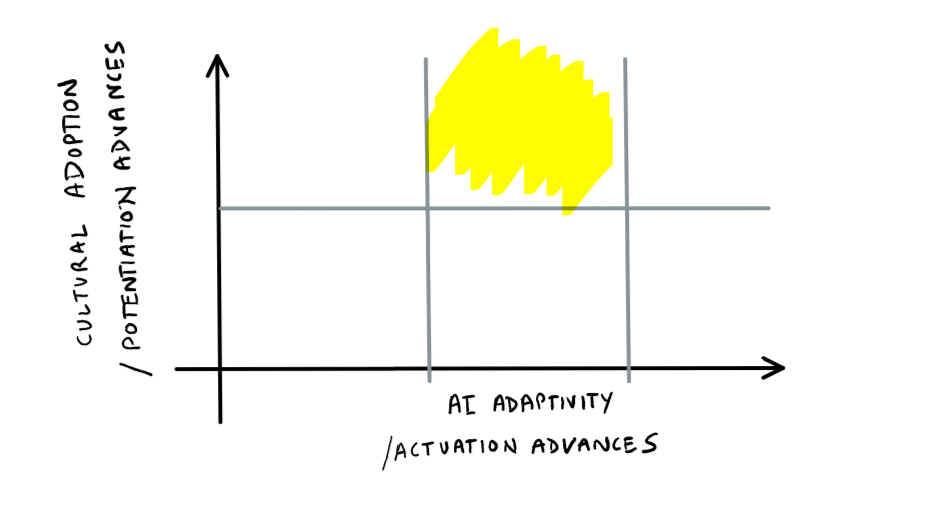

The most exciting sextant is the top middle, where adaptivity needed from the AI is only medium, but the adoption needed by beings is pretty high.

Why are you excited about the top-middle sextant in particular?

First of all, this whole workshop is about moderate AI being background infrastructure and allowing increased connectivity between beings, via teleattention technology. The top-middle region captures that. But there are a lot of other reasons.

What we can do when we participate as a decentralized collective is likely to be among the more powerful, more neglected coming transformations. Collective stuff is also more crucial and tractable to put our imagination into, because they make up the loops to put choice into before they get super-reified.

Ontological changes are by definition hard to anticipate. However, this region makes it just about possible. Even if we don’t know what changes can happen as we move rightward on the adaptivity axis (because it’s hard to predict what the cutting-edge machine learning paradigms will be like), we can try and shape what deep changes happen as we move upwards on the adoption axis. This is tractability both in anticipation and participation; where we meet the challenge in Amara’s law: “we tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

There are also interactionist leanings in this view, which means that developing an understanding of ontological shifts will involve following the attentional and interactional tendencies of civilization. Even if you don’t buy that philosophically, pragmatically, legitimacy is the scarcest resource; someone can hack a secure blockchain and make a getaway with a lot of stolen money, but the community can undermine the meaningfulness of it.

My prediction is also that we spend quite some time trying to get things right in the medium term by moving upwards from the middle, or even anticipating it and moving diagonally towards a decentralized future. Working on the adoption and distribution is also where we leverage the “distribution” part of Gibson’s “the future is already here, it’s just not evenly distributed.” This is where our collective meaning moves, where we integrate in rather than automate away. It is where we transmute crazy adaptivity into subtle sensitivity.

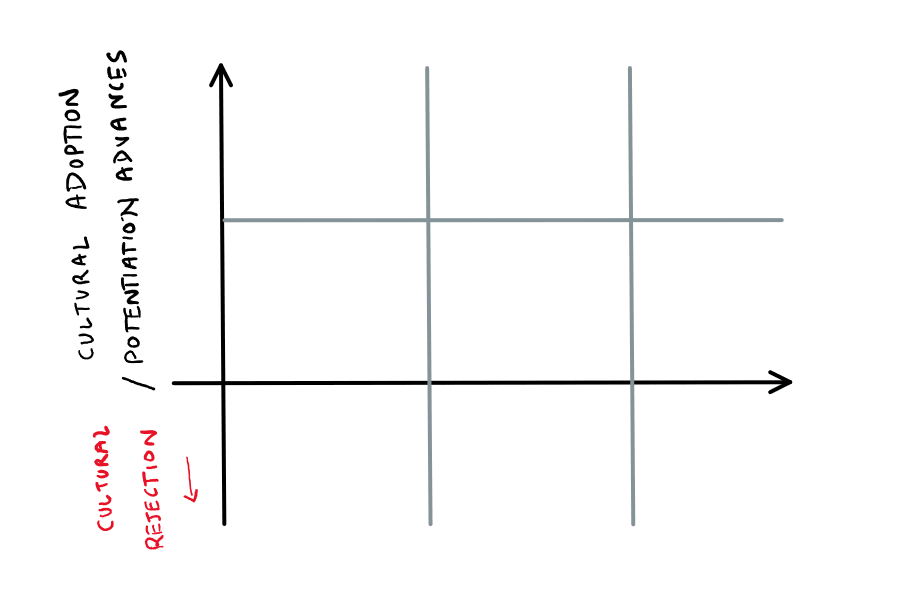

Wait, why are there “sextants" again?

Well spotted. It is actually a 3 x 3, with the other three below the horizontal line.

This is negative adoption, or rejection. Sometimes opportunities to reject technology/technosolutionism are ripe only when it gets alarmingly crazy, and we can be ready to act upon those alarms as well.
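As a rough illustration of the grid described above, here is a minimal sketch that places a proposal into one of the nine cells. The numeric scales, the cut-off values, and the names `Proposal`, `adaptivity_column`, `adoption_row`, and `cell` are all assumptions made for this example; the workshop itself does not prescribe any scoring scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    name: str
    adaptivity: float  # assumed scale: 0.0 = no machine intelligence needed, 1.0 = basically AGI
    adoption: float    # assumed scale: -1.0 = active rejection, 1.0 = broad cultural uptake


def adaptivity_column(value: float) -> str:
    """Horizontal axis: how much adaptivity the proposal needs from the machines."""
    if not 0.0 <= value <= 1.0:
        raise ValueError("adaptivity must lie in [0, 1]")
    if value < 1 / 3:
        return "left"
    if value < 2 / 3:
        return "middle"
    return "right"


def adoption_row(value: float) -> str:
    """Vertical axis: how much adoption the proposal needs; negative means rejection."""
    if not -1.0 <= value <= 1.0:
        raise ValueError("adoption must lie in [-1, 1]")
    if value < 0.0:
        return "rejection"
    if value < 0.5:
        return "bottom"
    return "top"


def cell(proposal: Proposal) -> str:
    """Name the grid cell (row-column) that a proposal falls into."""
    return f"{adoption_row(proposal.adoption)}-{adaptivity_column(proposal.adaptivity)}"


# Illustrative placements only; the scores are guesses, not measurements.
proposals = [
    Proposal("tool I build for myself", adaptivity=0.2, adoption=0.05),
    Proposal("teleattention infrastructure", adaptivity=0.5, adoption=0.9),
    Proposal("opting out of a technosolution", adaptivity=0.8, adoption=-0.6),
]
for p in proposals:
    print(f"{p.name}: {cell(p)}")
```

Pulling a proposal "in each direction for anticipative design" would then amount to asking how its cell changes as either score is nudged.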

Live Theory

4. What is Live Theory?

Live theory is a conceptual and experimental investigation into the next generation of sensemaking interfaces when research copilots (but not lazy ones) become default in researchers’ workflows.

Earlier, we mentioned that abstraction also suffers from the good-old-fashioned assumptions of fixity. We have needed fixed logistical structure for the transportation of theoretical insights.

The point of live theory is to move beyond that; pay closer attention to what are the meaningful components of research activity, and leave the almost machine-like drudgery to the almost machine-like AIs that are being developed. Freeing living beings to do enlivening work allows for, interestingly, simultaneously: more fluid, more participative, more dynamic, and more powerful sensemaking.

Oooh, more detail please? Is this automation of research?

No, not quite. It’s more about integration than automation. And it’s not just about having more access to research papers or helping write them, or assistance with proving theorems. It is about the new kinds of theorization that become possible. Although the whole sequence on Live Theory [? · GW] is dedicated to explaining this (and based on experience, it’s difficult to do this in brief), a terse description follows.

Instead of crafting formalisms, human researchers might create metaformalisms that are more like the partial specification of prompts (to an LLM) than fully specified mathematical definitions. The completion of the idea in the prompt can be carried out by integrated AI “compilers”, that adapt the idea/partial formalism to the local context before it becomes a definition/full formalism. The “theory prompt” specifies “the spirit rather than the letter” of the idea.

This allows for more “portability” of insight than is typically allowed by variables and parameters alone, which can be quite structurally rigid even with sophisticated variable-knobs attached to them. As should be obvious

Can you give me an example?

For example, if some theorists are interested in defining the notion of “power” across disciplines, they might look not for a unifying equation, but devise a workable formalism-prompt (or partial formalism) that captures the intuitions for power. AI-assistance then dynamically creates the formalism for each discipline or context. Importantly, this does not take years or even seconds, but milliseconds.

Here’s the more tricky part: this is not an “automated scientist”. This is an upgrade specifically to the notion of specialization of a general result, which is usually implemented via mechanical substitution of values into variables. So this is in continuity with the traditional idea of generalization in mathematical formalism, usually facilitated by parameters that can abstract the particulars. Except here, the machinery of abstraction and substitution has been upgraded to live machinery, leading to smart substitution and therefore deeper, interactive generalization. The “general result” is now the seed-prompt, and the specialization (or application) is done by an intelligent specializer that can do more than merely mechanistic instantiation of variables. It substitutes the informal (yet precise!) spirit of the insight with an entire formalism.
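To make the contrast between mechanical and "smart" substitution concrete, here is a minimal sketch under stated assumptions. `TheoryPrompt`, `specialize`, and `stub_completer` are hypothetical names invented for this example, and the "intelligent specializer" is represented by any callable that maps an instruction string to text; a real system would plug in a language model there, and nothing below claims to be how such a system is actually built.

```python
from dataclasses import dataclass
from string import Template
from typing import Callable


def mechanical_substitution() -> str:
    """Classical specialization: values are slotted into fixed variables."""
    general_result = Template("force = $mass * $acceleration")
    return general_result.substitute(mass="2 kg", acceleration="3 m/s^2")


@dataclass(frozen=True)
class TheoryPrompt:
    """A partial formalism: the spirit of an idea rather than its letter."""
    concept: str
    spirit: str


# Hypothetical interface: anything that turns an instruction into text.
Completer = Callable[[str], str]


def specialize(prompt: TheoryPrompt, context: str, complete: Completer) -> str:
    """Smart substitution: ask the specializer for a formalism fit to one context."""
    instruction = (
        f"Concept: {prompt.concept}\n"
        f"Spirit of the idea: {prompt.spirit}\n"
        f"Local context: {context}\n"
        "Propose a formal definition suited to this context."
    )
    return complete(instruction)


def stub_completer(instruction: str) -> str:
    """Stand-in for a model; it only echoes which context it was asked about."""
    marker = "Local context: "
    context_line = next(
        line for line in instruction.splitlines() if line.startswith(marker)
    )
    return f"[formalism drafted for {context_line[len(marker):]}]"


power = TheoryPrompt(
    concept="power",
    spirit="the capacity to shape outcomes that others must adapt to",
)
print(mechanical_substitution())
for discipline in ("ecology", "international relations"):
    print(specialize(power, discipline, stub_completer))
```

The point of the sketch is only the shape of the interface: the seed-prompt plays the role of the general result, and the context plays the role that a variable assignment plays in ordinary substitution.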

Again, this “smart substitution” might sound tedious, but so is, say, the error correction (in the many layers of the internet) for you to be able to read this digital document. There was a time when it sounded ridiculously complex, like any technology does when you go over the details.

Error-correction, though extremely clever, isn’t even very intelligent compared to what will be in the near future! Once the above sort of AI-assistance is integrated, fast and on-point, it can be taken for granted… and that’s when you get a real glimpse of the weird future. When generating formalisms is as inexpensive and reliable as setting x=5 in an equation and generating a graph, it can be considered a primitive operation.

So LLMs can help create formalisms. What’s the big deal?

The aim is to explore the new ecosystem of research that is possible when the artefact that is distributed does not have to be formalized or packaged before it is exported. Conceptual researchers and engineers no longer trade in the goods of fully finalized formalisms, but in sensemaking prayers that compose informally to feed into generated structures, protocols, publications, distillations, programs, user interfaces. We can spend more time in the post-formal regions, even of the most formal-seeming disciplines.

The "application" of the formalism-prayer/metaformalism into a formalism happens so easily (cheap, fast, reliable) that it is not seen as very different from setting the value of a variable in a formal equation when you apply the equation to a problem.

When these transformations from the informal-but-meaningful-insights to the more formal outputs (such as publications, formalism, code, blogposts) can be conjured up from recorded conversations in milliseconds in the near future, what is it that researchers actually care to talk and think about? When outputs are AI-assisted, what will be the new inputs of meaning that we will spend our time crafting, and how do we become competent at the new rituals that will emerge starting now?

Is the whole goal about enhancing and augmenting conceptual research?

You could say that for live theory, although it elides the most important part: the new ecosystem [LW · GW]. The design philosophy itself is a shared investigation with “live interfaces”, which is what the first half of the post was pointing at.

Another way to say it is that live theory is dialogue at the pace layer of infrastructure that supports new kinds of commerce.

This is dumb and not how theories are made?

Yes. This is simplified and linearized to make a hard-to-convey thing slightly easier to convey. None of this is going to proceed unidirectionally, of course not. What might happen in messy reality is that the AI listens to your insightful conversations (with contextual understanding) as, say, an interdisciplinary conceptual researcher who studies the theory of money, and connects up that insight to the structural needs of a local community elsewhere (eg. regulators worried about inflation) who are interested in applying your insights. The AI infrastructure does so by near-instantly proposing protocols, beginning dialogue, running experiments etc. in that community. They try those, dialogue back, have something sort of work out. This feeds into your conversations, when results come in. And repeat.

All this design work, of streamlining these pipelines so they are a joy to use, is the most important thing here. That's why the emphasis on design philosophy and experimentation in this workshop. In parallel, there are efforts underway to make sense of new kinds of precision and reliability for a fluidic era that can include but not be limited to proofs or mechanistic/reductionist commitments.

This attentivity is inevitable, even if we pause frontier/foundational AI advances. Everyone involved is going to realize that this new tech lets us hyper-customize and hyper-individualize. Unless we cohere a wholesome set of design pillars [LW · GW], we’re only going to see more isolation, fragmentation, and dependence that we get from ad-hoc greedy optimization. An insufficient sensitivity to the subtleties is, indeed, what bad design is.

Okay, again in different words then?

Here’s Steve’s 3-paragraph abstract on the subject, based on a preview of the sequence that is in progress [? · GW]:

In the 1700s, a book was a static artifact. But now, an e-book consumer might reflow the text, or change the font style and size. Online textbooks could let the buyer choose a chapter ordering, or the difficulty level of the exercises. As AI advances, we can picture even more adaptive textbooks: maybe an expert fine-tunes a language model with a great deal of source material on some topic, perhaps adding some elaborate prompts. Then one consumer might prompt this model to produce a textbook explained at a very basic level, using examples from history and art, while a different consumer might prompt it to produce a refresher textbook with an emphasis on applications to finance. To the 1700s book consumer, that would have sounded pretty magical. When knowledge artifacts are adaptive like this, we can think of them as enabling production post distribution. The artifacts that are distributed are not frozen along some dimensions; rather, those dimensions are fluid, adaptive to consumer needs.

We propose that even the products of cutting-edge research can be more adaptive like this. The expert doesn’t have to come up with one frozen theory on which consumers merely have different lenses; rather the theory itself might be fluid, adapting to the consumer.

Consider math as an instructive example. Terence Tao imagines near-term AI doing the grunt work to turn precious mathematical insights into formal proofs worthy of math journals. But Tao’s proposal still treats the product as fixed, like our book of the 1700s. The mathematician’s precious insight might be more adaptable than that - it might elaborate into different formalisms in different contexts! Perhaps a key insight about abstraction - sufficiently articulated of course - could have been expressed and formalized in group theory, or algorithmic information theory, or category theory. Why produce just one of those relatively static, dead theories? With the help of near-term AI, the product can be the insight, as fed to an adaptive AI. Then the consumer can contextualize this insight, with different formalisms resulting. If the insight is broad or deep enough, it could even articulate into “informalisms”; perhaps in the right context this insight about abstraction could engage a non-mathematical artist who is curious about the essence of abstract painting. The artist could even collaborate with the group theorist, with the AI serving as translator, to extend or refute the theory. It’s tempting to ask, “in what sense is this one theory of abstraction?” To us, this is like asking “in what sense is the modern adaptive textbook one book?” The product is adaptive and fluid. It is closer to a living thing that must be grown to fruition than an assembly-line machine always producing exactly the same dead product. Our proposed talk will discuss the nature of such fluid theories.

Can I have more vague slogany words about the whole vision, then, that I can keep in the back of my mind while we do very concrete things?

Nearly all of this stuff is about the logistics of distribution of meaning. More specifically, the new logistics of distribution enabled by AI.

The reason it’s called “live” is to contrast it with the usual way of distribution: we’re used to prepackaging before exporting. “Live” does neither “pre” nor “packaging”; it is ongoing, contextual, non-modular, adaptive. The prerequisites for portability do not require this sort of finished modularity, this decontextualization. Instead, you can recontextualize at scale, with the help of AI powered cheap attentivity.

“Live theory” is about the new logistics of distribution of insight (or, in other words: new kinds of sharing of meaning). Theorization not via abstraction and finding intersection and invariants (which is how we currently port insight across different “instances” of an abstraction, by appealing to commonalities) but by having the theory itself be intelligent and learning the specifics of each situation.

Live theory in oversimplified claims:

Claims about AI.

Claim: Scaling in this era depends on replication of fixed structure. (eg. the code of this website or the browser that hosts it.)

Claim: For a short intervening period, AI will remain only mildly creative, but its cost, latency, and error-rate will go down, causing wide adoption.

Claim: Mildly creative but very cheap and fast AI can be used to turn informal instruction (eg. prompts, comments) into formal instruction (eg. code)

Implication: With cheap and fast AI, scaling will not require universal fixed structure, and can instead be created just-in-time, tailored to local needs, based on prompts that informally capture the spirit.

(This implication I dub "attentive infrastructure" or "teleattention tech", that allows you to scale that which is usually not considered scalable: attentivity.)

Claims about theorization.

Claim: General theories are about portability/scaling of insights.

Claim: Current generalization machinery for theoretical insight is primarily via parametrized formalisms (eg. equations).

Claim: Parametrized formalisms are an example of replication of fixed structure.

Implication: AI will enable new forms of scaling of insights, not dependent on finding common patterns. Instead, we will have attentive infrastructure that makes use of "postformal" theory prompts.

Implication: This will enable a new kind of generalization, that can create just-in-time local structure tailored to local needs, rather than via deep abstraction. It's also a new kind of specialization via "postformal substitution" that can handle more subtlety than formal operations, thereby broadening the kind of generalization.

Assorted connections between Live Theory and Opportunity Modeling

- Live theory is a particular opportunity to look at; namely in research methodology.

- Live theory and live interfaces are about making our tools more fluently and wholesomely prayer-compatible, and opportunity modeling is about praying more about the future.

- Live theory is about keeping our sensemaking at pace with an adaptive future; the claim is that you can’t proceed without it. Similarly, opportunity modeling is essential if you have any long term projects and expect to stay relevant in an age of increasing AI adoption.

- Both are about envisioning how beings remain relevant as the all-important and wisdom-embodying body of civilization, as AI becomes the cognitive center.

- Both suggest changes with an emphasis on adoption mixing in with adaptivity, rather than singleton frames. What happens when the center of meaning and culture changes alongside technology, rather than AI just automating things away? This matters especially as increased connectivity from teleattentive tech makes modular design less default.

- The emphasis in method of extrapolation is from looking closely at what's already here, and rejecting the myth of centralization within what's already here. This is the top-middle sextant in opportunity modeling, and in live theory it is the fact that all theorization is already alive in its own way.

- Both involve a focus on taking advantage of the medium-term to prepare for the longer-term in order to have resilience-via-sensitivity to a rapidly changing reality.

Design Principles


(Check out the six pillars here [LW · GW].)

I. Design Recipes

There’s a simplified, truncated recipe with an example that follows the one below.

- Look for some crucial structure anywhere in the world.

- Examine what that structure is doing, why it is necessary. Who are the participants within the structure? Who are the producers and the consumers that benefit from a fixed protocol?

- Try to imagine: if we were able to allocate 20 times more attention / time / effort to each individual interaction, could we do without the fixed (meta)structure? Alternatively, if we abolished the structure, how much more attention / time / effort would it take, that makes it seem unscalable? Notice which aspects are subtly structured, because that’s the only conceivable way to scale.

- (Hardest step) In the attention needed, what are the likely aspects and components that machines can start to handle, as AI gets more sophisticated? And what is possible, with hints and “prayers” supplied by living beings? How can adaptivity replace preformationism? (Importantly, notice that the “soulfulness” that is harder for machines to pull off is what the prayers and wishes are for; as directions for meaningfulness.)

- (Pretty hard step) What new dances does it unlock for the beings interacting, when they are freed from the old numb infrastructure? What more vitality abounds, when life can meet life in attentive connection, without being packed into machine boxes? What dichotomies and modularities of “individual” and “collective”, “private” and “public”, “internal” and “external”, “local” and “global”, “producer” and “consumer” fall away?

- With all this non-separation, what are the invitations and responsibilities towards harmonizing with each other anyway? What are wholesome ways to slow down, and ways to gather? What boundaries and liberating constraints can be celebrated? What post-rigor rituals can make things sane and soothing?

If you love seeing everything as code, great. You can first do that, then replace code with comments as a design feature, and let attentive infra take care of the rest. Do that for protocols, especially, and see how that opens up entirely new kinds of interaction possibilities.

A simplified table that has had much more use is here.

| Step | Example | Your example |
|---|---|---|
| 0. Identify a problem space, either directly or by picking up a specific solution. Ideally, a digital interface. | Messaging apps [being able to talk to each other] | |
| 1. Isolate one (or a list of) fixed structure or protocol within the solutions, that seems necessary to make it work/scale. Ideally as specific and surface level as possible (rather than say “TCP” or something.) | Group structure: each channel in a messaging app fixes who the message goes to. | |
| 2. If we could delegate a smart-human who could spend many hours making an intelligent decision instead of the fixed decision from the structure, what decisions would they be able to make? Do this for each structure in step 1, respectively. | Smart-human could look at a piece of text, get to know all my friends and me, and decide who would benefit from receiving that text. | |
| 3. If we could all trust that the smart-human could operate in milliseconds rather than hours, how would we exploit that collectively, as if it was basically a fixed protocol? | We could just write or collect or select notes or pieces of text, and trust that they are instantly sent to the relevant people automatically. | |
| 4. What direction / flavor / prompt from participants would the smart-human benefit from? | Some sense of what category of people / content-type / content I’d like to be receiving or sending messages, in any moment. | |
| 5. What would a prototype that is continuous with the above look like, that we might be able to build today? | I have a list of contacts and their descriptions. I write a message and offer the structuring-prompt: “friends who are into jiu jitsu” and AI “autosubscribes” people under that heading, matching based on receiver wishes, eg. “stuff about martial arts” or “best friends’ thoughts” or whatever. | |
| (Optional) Try and capture it in a single phrase. | Autosubscription | |
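The step-5 prototype in the table could be sketched roughly as follows. This is only an illustration: `Contact`, `autosubscribe`, and `keyword_overlap` are hypothetical names, the contacts are made up, and the crude keyword matcher is a stand-in for the attentive AI matching the table imagines. Requiring both the sender's structuring-prompt and the receiver's wish to match is also an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Contact:
    name: str
    description: str  # what the sender knows about this person
    wish: str         # the receiver's own wish for what they want to receive


# Hypothetical matcher interface: does text `a` fit text `b`?
Matcher = Callable[[str, str], bool]


def _keywords(text: str) -> set[str]:
    """Lower-cased words longer than three letters, with punctuation trimmed."""
    cleaned = (word.strip(".,!?'\"").lower() for word in text.split())
    return {word for word in cleaned if len(word) > 3}


def keyword_overlap(a: str, b: str) -> bool:
    """Crude stand-in for attentive matching: the texts share a keyword."""
    return bool(_keywords(a) & _keywords(b))


def autosubscribe(
    message: str,
    structuring_prompt: str,
    contacts: Iterable[Contact],
    matches: Matcher = keyword_overlap,
) -> list[str]:
    """Return the names of contacts who fit the prompt and whose wish fits the message."""
    recipients = []
    for contact in contacts:
        fits_sender_prompt = matches(structuring_prompt, contact.description)
        fits_receiver_wish = matches(message, contact.wish)
        if fits_sender_prompt and fits_receiver_wish:
            recipients.append(contact.name)
    return recipients


contacts = [
    Contact("Ana", "friend from the jiu jitsu gym", "stuff about martial arts"),
    Contact("Ben", "colleague from work", "best friends' thoughts"),
    Contact("Cleo", "jiu jitsu training partner", "only work updates"),
]
print(
    autosubscribe(
        message="New martial arts seminar on Saturday",
        structuring_prompt="friends who are into jiu jitsu",
        contacts=contacts,
    )
)
```

Passing the matcher in as a parameter keeps the fixed part of the protocol small, so the keyword heuristic could later be swapped for something far more attentive without changing the calling code.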

II. Design Principles

A. Prayer-based

Formal, rigid-context intermediating machinery/structure is replaced with fluid, context-sensitive just-in-time structure tailored to individuals of the interaction. Eg. No formal website source code, mainly website source-prompts that will become source-code.

Identify new stuff that can be called “logistical” even though living beings have traditionally spent too much tedious time applying their intelligence to it, that can now be handled by intelligent machinery. eg. Don’t have to design a website, just suggest hints (prayers) of what is wanted in the website-prompts.

Machinery and structure are designed to bend towards wishes and dreams of animals rather than animals bending for machines. Eg. Forms in a website aren’t rigidly structured, but whatever information the user is happy to fill is taken and stored as prayers that will fit into various demands of the service from the customer.

B. Non-modular

The production, storage, distribution of meaning have less sharp interface boundaries. Eg. The final meaningful website is produced only in interaction with the local user’s prompts and the webserver’s prompts. Production is completed post-distribution.

Peers / consumers / producers have less sharp interface boundaries. Eg. It is unclear whether the end-user is producing the website or the host is.

Permitted complex interdependence across space. Eg. Users can store wishes for, say, curvy html elements, and those can get attentively combined with a TV store owner's wishes to properly display rectangular LED screens on their website, maybe by creating rounded corners.

C. Seamless

Interdependent complexity is handled fluidly.

Minimal disturbance to flow of users.

Able to interact with various parts of the process in space and time.

Eg. I can quickly mutter “privacy here” into an interface that automatically turns conversations into publications, because I mentioned subtle revealing details about my family in a conversation about business. This is me interjecting for some end-stage process (family details need to be redacted at publication time) without losing flow in the start-stage (I continue to talk and ideate about business right away after that muttering).

D. Ownership

Ownership in the hands of life. Eg. privacy, IP, information flow

Thoughtful, fine-grained, dynamic credit assignment. Eg. feedback given by users on a website is monitored attentively for changes to a product and fine-grained revenue is distributed back.

Wholesome economics/tokenomics. Eg. non-zero sum reputation and trust tools that replace the homogenous management enabled by currency.

E. Pluralism

Objects that are shipped are pluriform. Eg. There is no one website that a company manages, just a prompt about what they want, with the rest left to the user’s prayers and the mediating AI.

Formally incompatible but fluidly coherent multiplicity. Eg. you might use a different messaging service that does interesting emojis and I have a mundane messaging service. When you send me an explosive react, the mediating AI understands the spirit of your drama and my wish for sobriety and achieves a fluid middle ground when it delivers it to me.

Decentralized production of meaning. Eg. an auto-DJ attends to each individual’s movement on the dance floor and turns their bodily expression into an instrument expression with music that can be fed back in.

Heterogeneous participation. Eg. you are asked to turn in only a prayer of your submission for a hackathon that turns into something tailored for each judge, allowing for judges to come from domains with very different relevance and comprehension than assumed by the topic of the hackathon. Dancers and environmentalists and board-gamers could come in, not just AI folk.

F. Acausality

Negative latency (consumption pre distribution) eg. Amazon delivers you a product that you never ordered, and you realize that it is something you could use over time. Order-placing to doorstep takes negative time.

Open adaptivity (production post distribution) eg. you craft a theory-prompt instead of a theory, to sensemake a fundamental concept like “power”, and that prompt keeps generating a different formalism for each community or domain that it finds itself in.

Anticipative design. Takes into account the potential for increased adaptivity+intelligence of AI/Tech and increased adoption+fluency among people/culture. Eg. First you might need heavy prompting and some coding ability, to have a customized video-recording interface. With enough AI sophistication, it can be inferred from your other aesthetic and some in-the-moment suggestions/prayers. As it gets more culturally adopted, people compose their prayers into a video-calling interface. Soon, a friend is able to include, say, a request for a 3D map for your whole room for their VR immersive prayer, without having to consult you or be compatible with your software, but still respecting your prayers and privacy etc.

G. Sane, Soothing, Wholesome

Determinative bias. Eg. Your open-ended game with alive NPCs and infinite consequential choices still adheres to a narrative arc that ties things together. Or the AI auto-arranger of messages on your Discord into personalized channels is only allowed to have 5 categories with 4 channels each rather than ad nauseam.

Ability to converge/calm/center, and especially, easily “unadapt” the AI changes. Eg. even though you and your friends share the same movie-prompt and have the ability to “translate” conversations for your different movies seamlessly, sometimes you might decide to consume the same movie, not just the same prompt. You might drag down a customized slider so it’s almost the same movie, with some mild settings changed.

Clarity of specificity and still widely ambitious within scope, not trying to take over/save/control the world. Eg. just works on copy-pasting but still imagines a paradigm-change.

Notices and transmutes dystopic potential into wholesome connectedness; transmutes crazy adaptivity into subtle sensitivity. Eg. salience-management system that helps arrange your attention but is freed from predatory capture via a web-of-trust.
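The Discord example under determinative bias can be sketched as a cap applied to whatever layout an auto-arranger proposes. The function name `apply_determinative_bias` and the assumption that the proposed layout arrives ordered by relevance are inventions for this illustration; only the limits of 5 categories and 4 channels each come from the example in the text.

```python
MAX_CATEGORIES = 5
MAX_CHANNELS_PER_CATEGORY = 4


def apply_determinative_bias(
    proposed: dict[str, list[str]],
    max_categories: int = MAX_CATEGORIES,
    max_channels: int = MAX_CHANNELS_PER_CATEGORY,
) -> dict[str, list[str]]:
    """Keep only the first few categories and channels of a proposed layout.

    Assumes the arranger lists categories and channels most-relevant first,
    so truncation keeps what matters most and drops the long tail.
    """
    kept: dict[str, list[str]] = {}
    for category, channels in list(proposed.items())[:max_categories]:
        kept[category] = channels[:max_channels]
    return kept


# A made-up, over-eager proposal: 7 categories with 6 channels each.
proposal = {
    f"category-{c}": [f"channel-{c}-{n}" for n in range(6)] for c in range(7)
}
calmed = apply_determinative_bias(proposal)
print(len(calmed), [len(channels) for channels in calmed.values()])
```

A gentler variant might merge the overflow into the nearest kept channel instead of dropping it; the hard cap is simply the easiest version to show.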

III. Extended Table Preface

The column headings for the two AI columns (lazy and live) list some contrasting attributes that are worth more explanation. Comparing the subtleties here is of primary importance. I’ve also included drive-by takedowns of AGI below for fun.

(If you find you have a want for examples at any point through these explanations, just scroll back to the table!)

- 1. unscoped vs specific:

- Chatbots are the classic example where the user can ask or say anything, which is rarely the degree of generality of user-options valuable for most users. Loose is perhaps a more illuminating label than “general” for this design.

- For live design, specificity means enabling constraints that are fluid but have some integrity. They are not exactly task-specific, but tied to a “vertical” of meaning-clusters. They aim for tight relevance yet openness.

- (The omitted AGI column would “just do everything” and therefore be fully “general” as a homogenous mush.)

- 2. Supplements formalism vs supplants formalism:

- We’ve lived with cookie-cutter structure to scale things for a while, and lazy design just adds a bit of natural language on top.

- Supplanting formalism means formalism no longer gets to be the arbiter of meaning, and only shows up as necessary. Prompts and wishes and prayers are often much closer to what matters, but suffer from low legibility/explicitness/scalability/compositionality. But these properties are not a function of the input alone; they are also about what inputs we can handle. So while illegible and implicit are issues in the infrastructure we’ve had so far, these become first-class in the infrastructure.

- (AGI supplants everything, but underlying this idea is a formal cookie-cutterness, the same homogeneity mentioned above.)

- 3. Uncollaborative vs collaborative:

- Although often doing a lot for the user, there is a sense of things produced by the AI not being in the right place or proportion, or not many guidelines in how to have deep collaboration.

- Figuring out the subtleties of the position and power of AI, so as to let it handle the less meaningful parts to free up the flow for us in meaningful activity is among the central conceptual and design challenges that we aim to confront here.

- (AGI is completely uncollaborative, in that it excludes our participation.)

- 4. Bottlenecked by modularity vs seamless and exploits contextuality

- Modularity is at the heart of most design today. Do one thing and do it well, have compositional structure, independent investigability, unit testing, isolated and repeatable (thought) experiments, factored cognition. It makes it easier to think, less broad attention required on the whole context. This is great, but has its downsides; it also leads to limitations in connectivity, often allowing only rigid interaction and isolation. Breaking through those often takes decades, since things have to be redone from the ground up. Tacked on AI designs tend to continue working in this paradigm.

- With the “teleattention” of AI, modularity can be optional. This is not boring “AI does everything”, but a fluid connectivity that is possible because “redo from the ground up” as a step is so extremely cheap and extremely fast that it is a routine action. The specificity that replaces “modularity”, the live version of modularity, is in design infrastructure that is seamless but not featureless. Attention is not numb replication-based scaling, but extra sensitivity to uniqueness, and ability to cohere it. This means that interactions succumb less to Conway’s law, and still staggeringly retain and refine division of labor.

- (AGI tends to take some form of being a totally independent/modular powerful structure, or some big paperclippy, featureless spread.)

- 5. Centralized meaning & mass production vs peer-to-peer meaning & tailormade production

- Partly because of the background mentioned above, there tends to be a single source of truth or meaning that is replicated everywhere. There is a centralized site of production, which then distributes the produced object around. There are few options available to the end-user. Lazy AI enables generativity, but is unable to curtail it, and so is also unable to commoditize the seed. This also then limits it to AI-Human interactions rather than empowered Human-Human interactions.

- Individual attentivity makes it possible to not pass around sculpted meanings, but to sculpt them ongoingly in interaction, in specificity of the particular individuals meeting each other in particular moments, rather than mediated and dominated by the constraints of logistics that are forced to abstract them out.

- (AGI is the ultimate centralization, with no peers.)

- 6. Single-use tool vs ongoing interfacing layer

- When using something like a tool, there are often clear starts and ends defined functionally. You might find that you have a hair sticking out and use tweezers. You need a printout, you use a printer. You want to check your schedule, you use a calendar. For lazy AI, this looks like requesting a summary and stepping away.

- An interface layer has a continuity, an ongoing compilation or rendering or stitching etc. There is no bound or doneness that doesn’t derive from aesthetic considerations. It becomes a layer that you can take for granted, so that you can focus on what matters. As ubiquitous infrastructure, live UI can be the fabric of intelligence that is constantly available for you to send your prayers to, whatever the contextual purpose.

- (AGI leaves room for no meaningful interaction except pressing the “Utopia” button.)

- 7. Single distribution vs pluralistic distribution

- The one-and-done attitude also holds for the “finished product”. It doesn’t adapt. Outputs are meritorious in their unambiguity. Make one website for your product, not 300 of them. AI can help you make it, but then you ship the one you made. You can add a few settings, such as light vs dark. But you don’t want to overwhelm the user with those.

- Live AI inverts the order of production and distribution, in a painless way. You can ship “pre-products” that turn into finalized products only in interaction with their endpoint. If you ship a website prompt, and an AI understands the end-user or combines it with their saved prompt, each user can visit a website that works how they need it to work.

- (AGI gets produced once and distributed everywhere.)

- 8. Decontextualizing vs Recontextualizing

- Generalization and automation tend to work by distilling common patterns and essences. The contingent specifics don’t matter. They can’t matter, because they are too heterogeneous to scale. The way to engage with a large number of things is to decontextualize and focus on the intersections, the regularity. A chat interface or AI summarization can be, in a way, very context-specific, but it doesn’t try to overhaul this paradigm, and instead just fits into a background homogeneity.

- Instead of having to be run by commonality and intersections, the cheapness of learning means we can let learning be a primitive operation. This means that we don’t need to rely on familiarity/commonality, since we can deploy rapid learning. Retreating to a conceptual “center” or core that ignores context isn’t necessary; we can just recontextualize in the spirit of any component, and draw out connectivity (which is what matters; commonality is usually only instrumental for connection) on the fly, in a peer-to-peer manner.

- (The “G” in AGI is supposed to be the deepest compression, a compactification of knowledge.)

- ^

Also: subtlety, taste, refinement. These do not fit the usual connotations of "0 to 1" but they are still microcosms of the same movement.

3 comments

comment by lucid_levi_ackerman · 2024-12-02T21:04:43.505Z · LW(p) · GW(p)

Nice to hear people are making room for uncomfortable honesty and weirdness. Wish I could have attended.

comment by DusanDNesic · 2024-11-05T08:40:47.145Z · LW(p) · GW(p)

I'm very sad I cannot attend at that time, but I am hyped about this and believe it to be valuable, so I am writing this endorsement as a signal to others. I've also recommended this to some of my friends, but alas UK visa is hard to get on such short notice. When you run it in Serbia, we'll have more folks from the eastern bloc represented ;)

Replies from: Sahil