The Logistics of Distribution of Meaning: Against Epistemic Bureaucratization

post by Sahil · 2024-11-07T05:27:20.276Z · LW · GW · 7 comments

This is an excerpt from the Introduction section to a book-length project that was kicked off as a response to the framing of the essay competition on the Automation of Wisdom and Philosophy. Many unrelated-seeming threads open in this post; they will come together by the end of the overall sequence.

If you don't like abstractness, the first few sections may be especially hard going.

Generalization

This sequence is a new story of generalization.

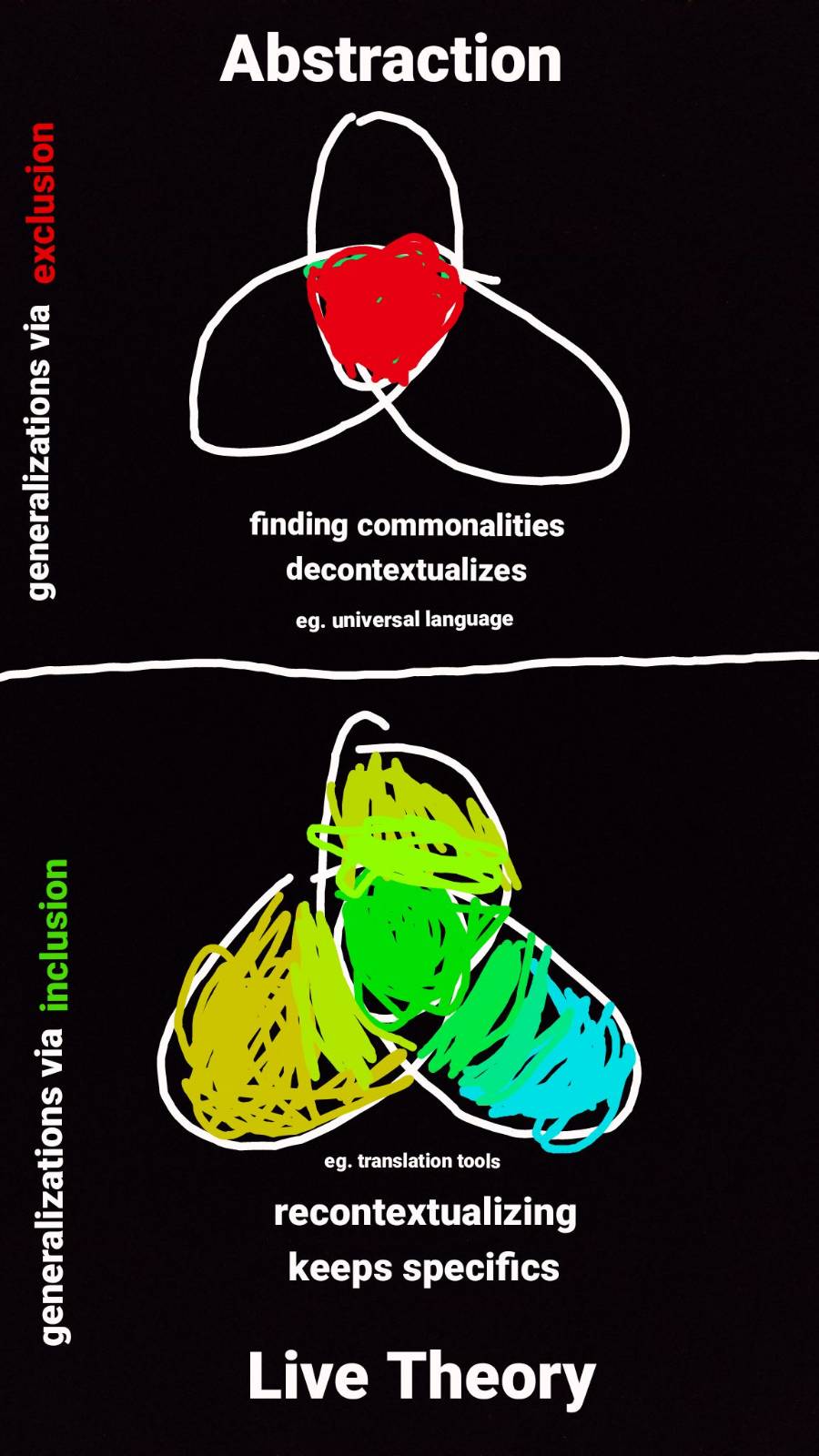

The usual story of progress in generalization, such as in a very general theory, is via the uncovering of deep laws. Distilling the real patterns, without any messy artefacts. Finding the necessities and universals, that can handle wide classes rather than being limited to particularities. The crisp, noncontingent abstractions. It is about opening black boxes. Articulating mind-independent, rigorous results, with no ambiguity and high replicability. Considered thoroughly “understood” only when you can program it; that even a machine could automate. Gears-level understanding. Clear mechanistic, causal stories plus sharp high-level concepts that get at the core and are thus robust to extrapolate from. Clean factorings with compositionality that preserve formal invariants.

Some of these might sound unrelated, apart from being good practices. In fact, they are very related. They all achieve the scaling and transport of insight (which is the business of generalization) by isolation and exclusion.[1] They decontextualize, and attempt to find the static, eternal, preformed, self-contained, independent principles by retreating ever deeper inwards, into that which has been identified as meaningful.

The thesis is that this exclusion-based approach to proliferation of meaning is not the only option. It is largely by ignoring or distrusting the fluidity available to intelligence, aliveness, mind, time, that we resort to such stasis-centered[2] sensemaking.

Though it has its merits, context-independence is subtly tyrannical: centralized, preformed, numb. As we’ll see, even when equipped with the dials that allow, say, a theory to change shape in response to the environment it is placed in, the frame of the theory remains rigid. When intelligence and mind are available[3] at scale, you generalize not by finding that which is persistent, but by amplifying the ability to be intelligently sensitive (to more contexts, in particular) to what lies outside of your compact constructions.

Centralization

Ironically, the “good practices” in the traditional story above might themselves be highly contingent responses in service of deeper ideals. Unfortunately, the logistics of distribution of meaning (insight being a particular kind of meaning) revolves around the limited infrastructural machinery of the era. This means that the deeper ideals are lost to temporary pragmatic pressures, both real and imagined. These ideals, which are genuine and worthwhile, might include: clarity, competence, elegance, precision, interoperability, intersubjectivity, transparency, coherence, relevance, reliability, originality, concision, etc.

Although it would be interesting to generate an exhaustive picture of the ideals and spirit that undergird our slowly-developing epistemic pipelining, the purpose here is to simply free that spirit into the natural place where we find ourselves now: at transition.

Our machinery, both literal and figurative, has been pushed to the extreme by the demands placed on it, and is slowly starting to come alive[4]. The bounds of its adaptivity might be limited by various factors, but there is no verdict as yet.

In such a position, the metaphor of the static “machine” starts to become less apt for machines themselves. There are many proposed visions to work with increasingly adaptive AI technologies and their cultural adoption. A lot of the visions, perhaps a majority (the non-suicidal ones, anyway), continue to attempt to apprehend the dynamism of their own and alien minds, but still within the myth of preformation/centralization.

The invitation here is to relinquish the Stockholm syndrome[5] we have towards this numbing, preformationist sensemaking apparatus that so often captures our imagination and instead transmute the greater adaptivity+adoption into deep, expanded sensitivity[6].

The difference, to put it simply, is akin to the difference between pouring energy into the imposition of a universal language on all of society vs supplying adaptive translation tools that can allow the flourishing of local languages with tight interfacing where such is possible.

Homogenization, as in the universalizing in “universal language”, is one of the primary pressures imagined as a prerequisite for the genuine ideal of interoperation. Having to rely on established sameness, intersection, invariance — the center of overlapping culture, a common foundation, a conceptual core[7]— is less necessary when intelligent infrastructure can create fluid, precise coherence tailored to local heterogeneous needs.

(The previous paragraph is almost a tautology: if learning new things is cheap, you don't have to rely on sameness. And at the extreme end of the search for sameness is ultimate sameness, viz. universality. Ask yourself why you desire universality, why it's exciting. And what more could be enabled if it were possible to be attendant to particularities, not just what is universal.)

When attentivity is cheap, fast, and accurate, it becomes possible to scale that which doesn’t scale. Digital interaction moves from telecommunication technology to teleattention technology. Notice that this staggering new methodology stems not from hyping some isolated superintelligence, but from the maturation of mild intelligence into scalability, as is obvious from the simpler technical specifications of cost, latency, error. Amara’s law states:

> We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.

We will see, in great specificity, how reduced attention scarcity from the conformation ability of machinery can free beings to unconform into their rich subtleties. And how that could feed into further futures.

Extrapolation

The new story on context-interdependent generalization applies, concretely, to at least the following scenarios:

- Generalizing over time: What is the way to remain participative into the far future? Especially as AI gains increasing adaptivity and adoption?[8]

- Artefacts of generalization: How does theorization help civilization? Especially as AI gets increasingly involved in research? What will the new interfaces of collaboration and sensemaking look like?

- Deep understanding involved in deep generalization: How clear are we about what it means for a mind to "actually" or deeply internalize something? Especially for an AI that might suddenly grok something dangerous to us?

- “Generalization”/automation of awareness: What is the difference between an "automatic" response in a person, when we mean "automatic" as in "numb-scripted" vs automatic as in "spontaneous-intelligent"? What might that have to do with concerns about AI driven automation?

This is not a story to check off on each case, one-by-one. Each builds on the other, and since this is all about research methodology, the idea itself is best conveyed as a whole. It spans the embodiedness of minds, the metaphysics of concrete risks, the informality of mathematics, and the engineering of fluid interfaces.

We’ll explore each of these (in the overall sequence, not in this post). Let’s begin gently, starting with a teaser on the most intimate one.

Automaticity & Automation

The words "Sorry, I was out of it yesterday. I think I just automatically agreed without meaning to. My mouth just said 'yes' without thinking." paint a very different picture than "I was completely in flow at dance on Friday. My body just knew what to do in response to the music. Not a single neurotic thought encumbered my movements."

Both are “automaticity,” in a way. What is the difference between the two, if any?

Take a second to think about that, before automatically proceeding to read the next line.

.

.

.

You might offer something in the vein of “our abstract programmed habits sometimes work out as in the case of dancing, and at other times need to be overturned by mindfulness, as in the case of saying ‘yes’.” But this is hardly satisfying. At best, this is rephrasing the question, and at worst it suggests that being in flow is like running a script. If anything, you are more attuned to what you’re doing when you’re in flow, rather than “abstracted” away from it. And your mind, too, is fully present for it. This is especially obvious if you replace "dance" with "improv" or “design” or even "programming".

Importantly, which set of intuitions around our collective experience should we have in mind, for the “automaticity” and “automation” in reference to AI and the future? The two have such opposite connotations of aliveness and meaningfulness, that it would be foolhardy to stick to insisting on only one set.

Rather than trying to answer this definitively one way or another, I invite you to open to the confusion.

Confusion & Civilization

Most of the thrust of this sequence is in slowing down to examine that which feels deeply familiar, so familiar that it is a lot of work to even put it under scrutiny. Accordingly, pointing out these sorts of assumptions can take some laborious gesturing without seeming to say anything new, for which I hope to recruit your patience.

I seek to invite seemingly understood things into greater clarity until we're able to hold confusion for them. “Noticing confusion” is notably one of the harder and more important skills in this and adjacent domains. I’d love a world where we support each other in carefully articulating implicit confusion, as much as we do for implicit assumptions.

So this is best viewed as confusion research[9].

One reason to pay careful attention, as we’ll see very concretely in a later post, is to stop the creation of gods in the poor image we have of ourselves. Or not “stop” so much as transmute (adaptivity into sensitivity, as mentioned before).[10]

The question in the previous section (What is the difference between those two kinds of “automaticity?”) is one koan[11] to meet the inadequacy of the extant frameworks. The allusion in that question to an organism's intelligent participation — the organism’s attention, automaticity, responsivity — is meant to set the stage for thinking about civilization as an organism. With a similar, though quite different, notion of integrity.

What would it mean for our civilizational infrastructures to exhibit and enable fluid, spontaneous aliveness? For each part, each individual within it, to be in intelligent, compassionate dance with the whole?

This might bring to mind an image of physically fusing everyone with Neuralink or some such. The picture here is not of a monistic hivemind, with invasive wiring together of our brains. As you'll see in a later post on the Puzzles of Integration, any framework that only imagines hardware rewiring or increased channel capacity as relevant leaves most of the significant questions unanswered (though it may inform some logistical bounds). The analogy to a single mind still serves here: although possible, biological neurons rarely physically fuse in ordinary circumstances.

This sequence questions whether more integrated functioning involves homogenization as a prerequisite. In your own body, each individual cell is supported, in myriad ways, to work together with all the others as part of its natural individuation. Reading this sentence with perfect clarity, as you are now, is not some “download” to a dead machine but in fact an ongoing dance of trillions of different tiny animals that are you.

In a similar vein, supporting infrastructure that does not trade off local and global functioning (infrastructure that celebrates individuals and local communities, in their naturalness, without atomization or homogenization) is a more exciting picture to me than turning into one big mush.[12]

What does that look like, for our local and global projects around inquiry, investigation, insight? What might the refinement and integration of reasoning, knowledge, science, and philosophy look like in the near future? What will almost-alive infrastructure do to our research methodology, not just for one mind in isolation, but at the interfaces between minds?

Before we proceed with detail on such opportunities[13], we’ll take a look at one of the stars of this show — sensitivity (the other being integration) — especially in connection with the existing AI-risk discourse. There is a lot of space needed to back the claims made, especially for integration, and it might be a bit frustrating to read them.[14] Perhaps more so than if they weren’t there at all! Still, I find it in greater integrity to at least lampshade them and let informal links in the appendix contend with potential dissatisfaction.

Sensitivity & Settledness

I've used “sensitivity” several times so far. I mean it in the very human sense: I might call a friend sensitive in the way that she attunes, attends, offers care to my thriving, sometimes surprising me into more ease and openness than even I anticipate. A tenderness towards my thriving in particular, in my particular context.

Another way to look at it is as an anchor[15] for the most ongoing versions of what might count as an alignment “solution” for AI capabilities.[16] The Risks section that follows might be hard going if you’re unfamiliar with or uninterested in such efforts, so feel free to skip it (but do read the Doneness section that follows it!).

Risks

There are many competing narratives for dangers from advanced AI, ranging from s-risks, to unfairness, to unemployment, to sudden lethality, to going out with a whimper. Suggested mitigations could be spread out on the spectrum of commitment to ongoingness vs commitment to prefiguration.

As long as the fabric of (artificial) intelligence remains sensitive (think again of friends who are nourishing of your spirit and being), orientations to “solving alignment” can remain fluid, and don't have to be frozen-in-place, certainly not beforehand.

A lack of sensitivity includes many failure modes: unfairness (insensitivity to differences), lack of freedom (insensitivity to truth), even death, as an extreme case of insensitivity to your aliveness! This view is even less forceful than the pivotal processes frame, though close in being more process-oriented. Rather than using a sharp tool for cracking open the nut of alignment, it is much more like being immersed in a rising sea of friendship (instead of dissolving into abstraction, as in Grothendieck’s case).

Sensitivity covers both ongoing incorporation of greater clarity that we might collectively achieve, and fine-grained attendance to particularities of you. If it helps, you could think of the former as sensitivity across time and the latter as sensitivity across space, but I wouldn't hold onto that metaphor too hard.[17]

Sensitivity is an enabler of yin, and trusts aliveness, time, intelligence to continue to exist in the future. By “future” I mean to include even trusting that there continues to be sensitive and responsive attention available, in your own intelligence, for example, ten seconds from now. There is less of a necessity to systematically break things down (though it can be helpful anyway) and establish formal constituents. The benefits, even necessity, of prepackaging are great for scaling both insight and trust, but (I claim) quickly dated by scalable attentivity.[18]

This is easy to forget. Where do our wise principles come from, anyway, prior to systematization? What is the device that does the "breaking down", that grapples with the mess and cleans it up? There is nothing uniquely magical about a cleaned system; it is an articulation in (formal-ish) language of a particular kind of clarity with certain affordances such as portability, investigability, reliability, interoperability, etc. Attaining these desiderata via systematizing is great… when it seems like our only option.

But even now, this isn’t our only option. The insight that lands in you in this moment[19] is something that just happens. And insisting on finalizing a system can exclude the participation of, again, even you, your ingenuity, ten seconds from now.[20]

It is, of course, possible to orient to systems as scaffolding that will enable the aliveness of what is to come, with all the desiderata of systems, and more. Later on, we will explicitly meet the analogy of Terry Tao’s post-rigorous stage for mathematics, but applied to (AI-assisted) research infrastructure as a whole.

This sensitivity frame also sidesteps many issues (which are still about exclusion from meaningful activity, but more subtly) surrounding value lock-in, or the headiness of CEV[21], while allowing for deliberation and refining of legitimacy or boundaries or avoiding goodharting of pointers/referentiality without prefiguring the formalisms.

A very brief word now on problems of referentiality and their connections to sensitivity. One of the main concerns of the discourse of aligning AI can also be phrased as issues with internalization: specifically, that of internalizing human values. That is, an AI’s use of the word “yesterday” or “love” might only weakly refer to the concepts you mean. This worry includes both prosaic risks like “hallucination” (maybe it thinks “yesterday” was the date Dec 31st 2021, if its training stops in 2022) and fundamental ones like deep deceptiveness (maybe it thinks “be more loving” is to simply add more heart emojis or laser-etched smileys on your atoms). Either way, the worry is that the AI’s language and action around the words[22] might not be subtly sensitive to what you or I might associate with it.

Also from this point of view, many fears of automation (or exclusion from meaning) are more fears of "infrastructural numbness". I expressed concerns with the word “automation” earlier. The word “automation” does not distinguish numb-scripted-dissociated from responsive-intelligent-flowing. It usually brings to mind the former. I argue for using words like “integration” and “competence” or even “spontaneous” to describe some of the other kinds of intelligence-integration. As explored in a previous section, when you’re really in flow while dancing, you're not thinking a lot.[23] But it is the opposite of “automation”. If anything, you're more attuned, more sensitive. The difference between “completely automated” and “completely spontaneous”, this polar difference between being totally mindless vs totally mindful that we have somehow lumped into the same word, is what I want to explore, and at the level of civilization.

In particular, for risks, scenarios of Disneyland with no children, Moloch, the smooth left turn, and suddenly keeling over from nanobots in your bloodstream are very extreme cases of infrastructure being “numb” to your body. The civilizational version of “automated”, instead of “integrated”.[24]

From the frame of sensitivity, you don't "solve" anything forever and hold onto a solution. And you will not be guaranteed a life of zero pain[25]. But hopefully the intelligence surrounding you can function as an excellent holding environment, in a profoundly sensitive way.

Doneness

As our tools come alive, the ongoingness of subtle sensitivity shows up not just as convenient user experiences, but as attentive user experience. You can do things that don’t scale, at scale. Telecommunication was great; teleattention is going to be dramatic.

Being able to rely on the intelligence and aliveness of things outside you to be attentive redoes what it means for a task to be ‘settled’, at least from your end. A task can be counted as done, in surprising ways, even when it needs further attention, just like a web developer’s job can be finished even when the source file still needs to be rendered on the consumer end. A very simple example is in stopping at comments that turn automatically into code for you. The craft of prompts and comments that resemble wishes more than commands or scripts will require new aesthetics of completion. Whatever is considered finished based on our current machinery wouldn’t have to be the only north star for the future. Instead of angling towards finished output, in such an economy you might work hard at showing up as input to a fabric of friendly intelligence surrounding you, continually enlivening it with the unique and much-needed texture that is you.[26]

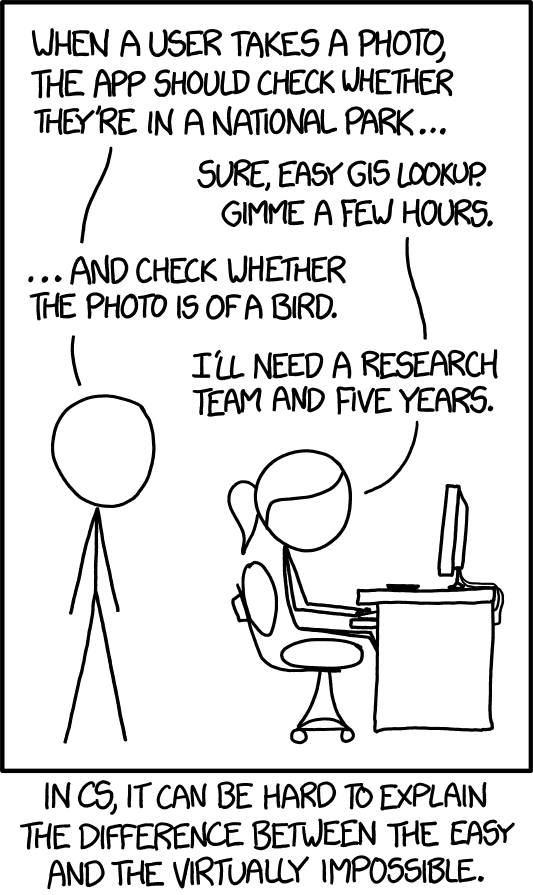

The mysterious new “arrow” of being able to turn the informal to formal has surprising implications that we are yet to make friends with. We’re kind of in this situation:

…except this particular scenario has become dated, and it’s challenging, for anyone, even computer scientists[27], to create a version of this comic that could survive the next few years. As the comic implies, computer scientists had some sense of what is possible and “impossible” based on how easy it is to exploit regularities and structure[28]. Formal amenability and intuitions around the outputs of intelligence (such as systems of meaning) are no longer reliable indicators when intelligences are available not only in a bottle for you, but pervading the interfaces between you and me.

Nate Soares said:

> A problem isn't solved until it's solved automatically, without need for attention or willpower

Good advice, in the same world that the xkcd was made for. Not true if attention/intelligence is cheap, fast, and abundant. Taking intelligence seriously, as we’ll see (concretely) next, can be subtly difficult… even if you’ve been in the biz for a while.

- ^

How this is true is best illustrated by developing alternatives, which is the purpose of this sequence.

- ^

An informal note from elsewhere, on the significance of homeostasis in life forms:

I wouldn't rule out self-preservation as possibly the least interesting part of agency, more akin to sanitation. Maybe even the dead(ening) part of life, even if essential.

Three pieces:

- The logistics of staying alive as book-keeping, bureaucracy, account management. You'll find it in every mature organization, but if you emphasize bureaucracy as the secret sauce, the primary object of study, I think you're missing most of what makes a functioning organism awesome.

- Consider your favorite band "selling out". This is the death of its ability to produce good music, for the sake of staying alive and regulating wealth. Start-ups that have made maximizing revenue their single endgoal become abandoned by their communities. People who seem to come primarily from status-management are annoying to talk to. Researchers burdened by publish-or-perish run out of room for curiosity.

- I don't have the link at the moment, but there was this analysis of inflation adjustments over long periods of time not really being true to adjusting for purchasing power. The wealthiest person 200 years ago didn't have or even conceive of all the goods and services that we have today, even if a lot more money. Focusing on the accumulation of wealth/resources/negentropy misses the actual spending.

Worshiping the universal currency that you can spend but not the particulars it can be spent on misses most of the picture, almost like idol-worship. "Innovation" and "technology" are the obvious go-to-words for this missing bit (and known to be controversial econometrics, IIRC), but life/curiosity/mischief are, IMO, the deeper words for this. What remains, when the monomaniacal threat-of-existence and resource-hoarding gets out of the way.

Software companies have relearnt this especially over the last ten years: provide a solid environment and get out of the way of brilliant minds. Look around you: almost everything that was invented by humans came out of someone at some point interested in the thing for its own sake. It came out of “terminal” reasons, not instrumental reasons to survive.

Importantly, this doesn't rule out homeostasis as being the most interesting part of life-to-be-formed, as a certain momentum for the next structure that hosts it, as enabling certain pipelines through organisms, as provocation and urgency into vitality, as orienting incentives, as reality interrupting untethered simulations, as skin-in-the-game supporting integrity and integration. So I'd in fact still expect to find it in (the history or context of) any intelligence.

- ^

Or really, even considered available.

- ^

This term “life” or “live” isn’t meant to connote any defaults of moral patienthood or consciousness, but more to counter solely computationalist viewpoints, even for computers. Similar to “AI is not software”. Imagining that computer science will supply the primary ontology for the intelligent webs of the future would be an error similar to thinking that neuroscientists are the primary consultants for macroeconomic policy because the economy runs on human minds. Neuroscientists are not totally irrelevant, but hardly central.

- ^

More on this later.

- ^

“Sensitivity” is a word that we’ll meet in its own section later.

- ^

d/acc in concept-space!

- ^

If you are noticing the conspicuous omission of “automation” (and even “abstraction”) in the introduction, this is, indeed, not an accident. Questioning the connotations surrounding the word is part of the point, and what the next section does.

- ^

A nod to deconfusion work.

- ^

The next subsection will say more about “sensitivity”.

- ^

I've struck out the word "koan" at the suggestion of a friend, who points out that comparing this to a koan is highly misleading. He quotes Nanquan:

> Although a single phrase of scripture is recited for endless eons, its meaning is never exhausted. Its teaching transports countless billions of beings to the attainment of the unborn and enduring Dharma. And that which is called knowledge or ignorance, even in the very smallest amount, is completely contrary to the Way. So difficult! So difficult! Take care!

I've also left it in with the strike-out, because I would like an expression for a format where the words are intended to break fixed meanings and recognize your own experience, rather than to construct more abstracted concepts. Perhaps "art" will have to do, but that's too overloaded.

- ^

Apart from the mushing that foundationalism can create, there are many communities that wax lyrical about dreams of an amalgam “glocal” but with little texture beyond word-alchemy. This is decidedly not the sum of this sequence. If you want to jump ahead to the most concrete bits to see how, go to the next post.

- ^

Generally, it is a bit suspect to me, for an upcoming AI safety org (in this time of AI safety org explosion) to have, say, a 10 year timeline premise without anticipating and incorporating possibilities of AI transforming your (research) methodology 3-5 years from now. If you expect things to move quickly, why are you ignoring that things will move quickly? If you expect more wish-fulfilling devices to populate the world (even if only in the meanwhile, before catastrophe), why aren't you wishing more, and prudently? An “opportunity model” is as indispensable as a threat model.

(In fact, "research methodological IDA" is not a bad summary of live theory, if you brush under the rug all the ontological shifts involved.)

- ^

While sensitivity is coming up next, I’ve moved the more metaphysically demanding Puzzles of Integration post to a later part of the sequence, so there is something concrete to chew on first.

- ^

I say “anchors” to make it clear that these aren’t recipes, that are contained and finished and “true name” like. An “anchor” is not a recipe, but with intelligent infrastructure, anchors can be more relevant than finished recipes. This will become clearer as we proceed.

(Providing anchors is also meta-consistent: my writing invitations are also more like prompts than code, because they are intended for integration by adaptive systems like you and intelligent machines.)

- ^

The emphasis on “ongoing” is high enough that “solution” is an actively misleading metaphor for how to engage.

- ^

These dimensions of particularity mirror the bullets in Extrapolation.

- ^

- ^

Indeed, the high actuation agenda bets that as more and more of the “external” world becomes mindlike, looking into what it’s like to inhabit the fluidity of one’s own mind is very helpful as a forecast (at least, if not done naively).

- ^

Many wisdom traditions emphasize presence as central to a good life. This is highly relevant, but not developed for this audience.

- ^

- ^

- ^

It might be tempting to try to call this a “high-level” abstraction that lets you do more. The high-low dichotomy is the good-old-fashioned way of looking at generalization, that tries to do it with recourse to deeper scripts. This scriptedness does not apply with expanded sensitivity (which is one mind’s scaling of attention), as we'll see in the “theory of resources” post, and in the conclusion.

- ^

As AI becomes the cognitive-executive division of the body of civilization, the relevance, meaning, subtlety, vision can continue to be held in the rest of the body.

Perhaps it is decision-theoretically advisable to be less heady and more embodied in your own literal body, to promote your relevance in the civilizational body when AI is the head.

(Headiness, of course, is an aspect of the myth of centralization.)

- ^

- ^

The context-independence critiqued at the start is an example of a “dead” and “dumb” transportation of insight. Very useful, when you can’t take intelligence for granted. Like a crutch, it speeds up our meaning-making before our intelligence has legs, after which it only slows that down.

- ^

Especially computer scientists, I'd say, who often find it harder to let go of a formalization-based ontology that has worked so well so far.

- ^

What live theory can capture, I’d hesitate to even call “structure”. Maybe aesthetic? I don’t know what labels there ought to be, but it seems pretty important to figure out some soft classification over the subtler logistics that machines will be able to handle.

7 comments


comment by Chris_Leong · 2025-03-31T14:29:41.422Z · LW(p) · GW(p)

Lots of interesting ideas here, but the connection to alignment still seems a bit vague.

Is misalignment really a lack of sensitivity as opposed to a difference in goals or values? It seems to me that an unaligned ASI is extremely sensitive to context, just in the service of its own goals.

Then again, maybe you see Live Theory as being more about figuring out what the outer objective should look like (broad principles that are then localised to specific contexts) rather than about figuring out how to ensure an AI internalises specific values. And I can see potential advantages in this kind of indirect approach vs. trying to directly define or learn a universal objective.

↑ comment by Sahil · 2025-03-31T23:28:11.162Z · LW(p) · GW(p)

> unaligned ASI is extremely sensitive to context, just in the service of its own goals.

Risks of abuse, isolation and dependence can skyrocket indeed, from, as you say, increased "context-sensitivity" in service of an AI/someone else's own goals. A personalized torture chamber is not better, but in fact quite likely a lot worse, than a context-free torture chamber. But to your question:

Is misalignment really a lack of sensitivity as opposed to a difference in goals or values?

The way I'm using "sensitivity": sensitivity to X = the meaningfulness of X spurs responsive caring action.

It is unusual for engineers to include "responsiveness" in "sensitivity", but it is definitely included in the ordinary use of the term when, say, describing a person as sensitive. When I google "define sensitivity" the first similar word offered is, in fact, "responsiveness"!

So if someone is moved or stirred only by their own goals, I'd say they're demonstrating insensitivity to yours.

Semantics aside, and to your point: such caring responsiveness is not established by simply giving existing infrastructural machinery more local information. There are many details here, but you bring up an important specific one:

figuring out how to ensure an AI internalises specific values

which you suspect is not the point of Live Theory. In fact, it very much is! To quote:

A very brief word now on problems of referentiality and their connections to sensitivity. One of the main concerns of the discourse of aligning AI can also be phrased as issues with internalization: specifically, that of internalizing human values. That is, an AI’s use of the word “yesterday” or “love” might only weakly refer to the concepts you mean. This worry includes both prosaic risks like “hallucination” (maybe it thinks “yesterday” was the date Dec 31st 2021, if its training stops in 2022) and fundamental ones like deep deceptiveness (maybe it thinks “be more loving” is to simply add more heart emojis or laser-etched smileys on your atoms). Either way, the worry is that the AI’s language and action around the words[22] might not be subtly sensitive to what you or I might associate with it.

Of course, this only mentions the risk, not how to address it. In fact, very little of this post gives concrete details about the response to the threat model. It's the minus-first post, after all. But the next couple of posts start to build up to how it aims to address these worries. In short: there is a continuity between these various notions expressed by "sensitivity" that has not been formally captured. There is perhaps no one single formal definition of "sensitivity" that unifies them, but there might be a usable "live definition" articulable in the (live) epistemic infrastructure of the near future. This infrastructure is what we can supply to our future selves, and it should help our future selves understand and respond to the further future of AI and its alignment.

This means being open to some amount of ontological shifts in our basic conceptualizations of the problem, which limits the amount you can do by building on current ontologies.

Lots of interesting ideas here

I can see potential advantages in this kind of indirect approach vs. trying to directly define or learn a universal objective.

I'm glad! And thank you for your excellent questions!

↑ comment by Chris_Leong · 2025-04-01T02:40:02.152Z · LW(p) · GW(p)

The way I'm using "sensitivity": sensitivity to X = the meaningfulness of X spurs responsive caring action.

I'm fine with that, although it seems important to have a term for the more limited notion of sensitivity so we can keep track of that distinction: maybe adaptability?

One of the main concerns of the discourse of aligning AI can also be phrased as issues with internalization: specifically, that of internalizing human values. That is, an AI’s use of the word “yesterday” or “love” might only weakly refer to the concepts you mean.

Internalising values and internalising concepts are distinct. I can have a strong understanding of your definition of "good" and do the complete opposite.

This means being open to some amount of ontological shifts in our basic conceptualizations of the problem, which limits the amount you can do by building on current ontologies.

I think it's reasonable to say something along the lines of: "AI safety was developed in a context where most folks weren't expecting language models before ASI, so insufficient attention has been given to the potential of LLMs to help fill in or adapt informal definitions. Even though folks who feel we need a strongly principled approach may be skeptical that this will work, there's a decent argument that this should increase our chances of success on the margins".

comment by Steve Petersen (steve-petersen) · 2024-11-13T23:19:26.132Z · LW(p) · GW(p)

As always, I'm inspired by Sahil's vision here! I've sat with his "high actuation spaces" and "live theory" for a while, and there's something really appealing about it. I'm eager to get a better sense of it, by which I mean I'm eager to hear the vibes get cashed out more concretely. But maybe that's against the spirit of the project? For example: what exactly is "sensitivity"? Or am I being insensitive just to ask?

↑ comment by Chris_Leong · 2025-03-31T14:40:18.346Z · LW(p) · GW(p)

I agree with you that there are a lot of interesting ideas here, but I would like to see the core arguments laid out more clearly.

↑ comment by Sahil · 2025-03-31T23:35:08.720Z · LW(p) · GW(p)

This section here has some claims you might find useful, among the writing that is published. Excerpted below:

Live theory in oversimplified claims:

Claims about AI.

Claim: Scaling in this era depends on replication of fixed structure. (eg. the code of this website or the browser that hosts it.)

Claim: For a short intervening period, AI will remain only mildly creative, but its cost, latency, and error-rate will go down, causing wide adoption.

Claim: Mildly creative but very cheap and fast AI can be used to turn informal instruction (eg. prompts, comments) into formal instruction (eg. code).

Implication: With cheap and fast AI, scaling will not require universal fixed structure, and can instead be created just-in-time, tailored to local needs, based on prompts that informally capture the spirit.

(This implication I dub "attentive infrastructure" or "teleattention tech", that allows you to scale that which is usually not considered scalable: attentivity.)

Claims about theorization.

Claim: General theories are about portability/scaling of insights.

Claim: Current generalization machinery for theoretical insight is primarily via parametrized formalisms (eg. equations).

Claim: Parametrized formalisms are an example of replication of fixed structure.

Implication: AI will enable new forms of scaling of insights, not dependent on finding common patterns. Instead, we will have attentive infrastructure that makes use of "postformal" theory prompts.

Implication: This will enable a new kind of generalization, that can create just-in-time local structure tailored to local needs, rather than via deep abstraction. It's also a new kind of specialization via "postformal substitution" that can handle more subtlety than formal operations, thereby broadening the kind of generalization.

These leave out the relevance to risk. That's the job of this paper: Substrate-Sensitive AI-risk Management. Let me know if these in combination lay it out more clearly!

↑ comment by Chris_Leong · 2025-04-01T02:26:50.025Z · LW(p) · GW(p)

That's the job of this paper: Substrate-Sensitive AI-risk Management.

That link is broken.