Newcomblike problems are the norm

post by So8res · 2014-09-24T18:41:56.356Z · LW · GW · Legacy · 111 commentsContents

1 2 3 4 5 6 None 111 comments

This is crossposted from my blog. In this post, I discuss how Newcomblike situations are common among humans in the real world. The intended audience of my blog is wider than the readerbase of LW, so the tone might seem a bit off. Nevertheless, the points made here are likely new to many.

1

Last time we looked at Newcomblike problems, which cause trouble for Causal Decision Theory (CDT), the standard decision theory used in economics, statistics, narrow AI, and many other academic fields.

These Newcomblike problems may seem like strange edge case scenarios. In the Token Trade, a deterministic agent faces a perfect copy of themself, guaranteed to take the same action as they do. In Newcomb's original problem there is a perfect predictor Ω which knows exactly what the agent will do.

Both of these examples involve some form of "mind-reading" and assume that the agent can be perfectly copied or perfectly predicted. In a chaotic universe, these scenarios may seem unrealistic and even downright crazy. What does it matter that CDT fails when there are perfect mind-readers? There aren't perfect mind-readers. Why do we care?

The reason that we care is this: Newcomblike problems are the norm. Most problems that humans face in real life are "Newcomblike".

These problems aren't limited to the domain of perfect mind-readers; rather, problems with perfect mind-readers are the domain where these problems are easiest to see. However, they arise naturally whenever an agent is in a situation where others have knowledge about its decision process via some mechanism that is not under its direct control.

2

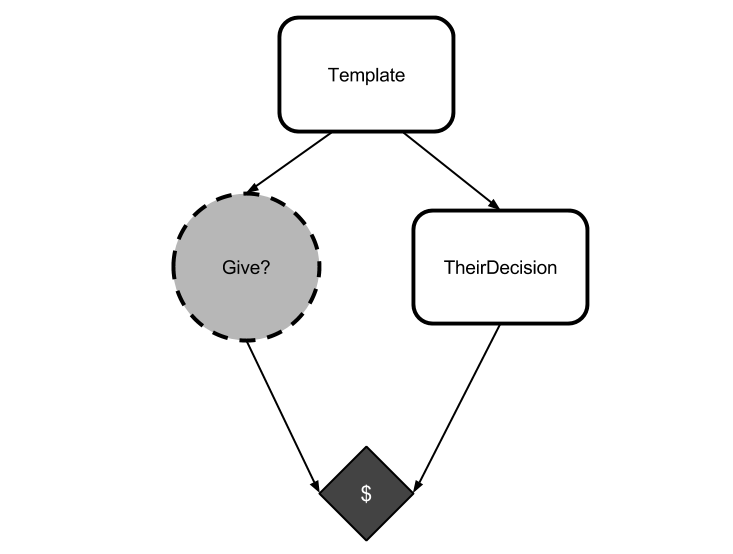

Consider a CDT agent in a mirror token trade.

It knows that it and the opponent are generated from the same template, but it also knows that the opponent is causally distinct from it by the time it makes its choice. So it argues

Either agents spawned from my template give their tokens away, or they keep their tokens. If agents spawned from my template give their tokens away, then I better keep mine so that I can take advantage of the opponent. If, instead, agents spawned from my template keep their tokens, then I had better keep mine, or otherwise I won't win any money at all.

It has failed, here, to notice that it can't choose separately from "agents spawned from my template" because it is spawned from its template. (That's not to say that it doesn't get to choose what to do. Rather, it has to be able to reason about the fact that whatever it chooses, so will its opponent choose.)

The reasoning flaw here is an inability to reason as if past information has given others veridical knowledge about what the agent will choose. This failure is particularly vivid in the mirror token trade, where the opponent is guaranteed to do exactly the same thing as the opponent. However, the failure occurs even if the veridical knowledge is partial or imperfect.

3

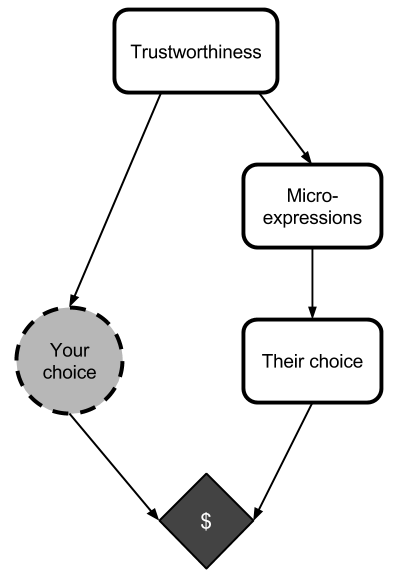

Humans trade partial, veridical, uncontrollable information about their decision procedures all the time.

Humans automatically make first impressions of other humans at first sight, almost instantaneously (sometimes before the person speaks, and possibly just from still images).

We read each other's microexpressions, which are generally uncontrollable sources of information about our emotions.

As humans, we have an impressive array of social machinery available to us that gives us gut-level, subconscious impressions of how trustworthy other people are.

Many social situations follow this pattern, and this pattern is a Newcomblike one.

All these tools can be fooled, of course. First impressions are often wrong. Con-men often seem trustworthy, and honest shy people can seem unworthy of trust. However, all of this social data is at least correlated with the truth, and that's all we need to give CDT trouble. Remember, CDT assumes that all nodes which are causally disconnected from it are logically disconnected from it: but if someone else gained information that correlates with how you actually are going to act in the future, then your interactions with them may be Newcomblike.

In fact, humans have a natural tendency to avoid "non-Newcomblike" scenarios. Human social structures use complex reputation systems. Humans seldom make big choices among themselves (who to hire, whether to become roommates, whether to make a business deal) before "getting to know each other". We automatically build complex social models detailing how we think our friends, family, and co-workers, make decisions.

When I worked at Google, I'd occasionally need to convince half a dozen team leads to sign off on a given project. In order to do this, I'd meet with each of them in person and pitch the project slightly differently, according to my model of what parts of the project most appealed to them. I was basing my actions off of how I expected them to make decisions: I was putting them in Newcomblike scenarios.

We constantly leak information about how we make decisions, and others constantly use this information. Human decision situations are Newcomblike by default! It's the non-Newcomblike problems that are simplifications and edge cases.

Newcomblike problems occur whenever knowledge about what decision you will make leaks into the environment. The knowledge doesn't have to be 100% accurate, it just has to be correlated with your eventual actual action (in such a way that if you were going to take a different action, then you would have leaked different information). When this information is available, and others use it to make their decisions, others put you into a Newcomblike scenario.

Information about what we're going to do is frequently leaking into the environment, via unconscious signaling and uncontrolled facial expressions or even just by habit — anyone following a simple routine is likely to act predictably.

4

Most real decisions that humans face are Newcomblike whenever other humans are involved. People are automatically reading unconscious or unintentional signals and using these to build models of how you make choices, and they're using those models to make their choices. These are precisely the sorts of scenarios that CDT cannot represent.

Of course, that's not to say that humans fail drastically on these problems. We don't: we repeatedly do well in these scenarios.

Some real life Newcomblike scenarios simply don't represent games where CDT has trouble: there are many situations where others in the environment have knowledge about how you make decisions, and are using that knowledge but in a way that does not affect your payoffs enough to matter.

Many more Newcomblike scenarios simply don't feel like decision problems: people present ideas to us in specific ways (depending upon their model of how we make choices) and most of us don't fret about how others would have presented us with different opportunities if we had acted in different ways.

And in Newcomblike scenarios that do feel like decision problems, humans use a wide array of other tools in order to succeed.

Roughly speaking, CDT fails when it gets stuck in the trap of "no matter what I signaled I should do [something mean]", which results in CDT sending off a "mean" signal and missing opportunities for higher payoffs. By contrast, humans tend to avoid this trap via other means: we place value on things like "niceness" for reputational reasons, we have intrinsic senses of "honor" and "fairness" which alter the payoffs of the game, and so on.

This machinery was not necessarily "designed" for Newcomblike situations. Reputation systems and senses of honor are commonly attributed to humans facing repeated scenarios (thanks to living in small tribes) in the ancestral environment, and it's possible to argue that CDT handles repeated Newcomblike situations well enough. (I disagree somewhat, but this is an argument for another day.)

Nevertheless, the machinery that allows us to handle repeated Newcomblike problems often seems to work in one-shot Newcomblike problems. Regardless of where the machinery came from, it still allows us to succeed in Newcomblike scenarios that we face in day-to-day life.

The fact that humans easily succeed, often via tools developed for repeated situations, doesn't change the fact that many of our day-to-day interactions have Newcomblike characteristics. Whenever an agent leaks information about their decision procedure on a communication channel that they do not control (facial microexpressions, posture, cadence of voice, etc.) that person is inviting others to put them in Newcomblike settings.

5

Most of the time, humans are pretty good at handling naturally arising Newcomblike problems. Sometimes, though, the fact that you're in a Newcomblike scenario does matter.

The games of Poker and Diplomacy are both centered around people controlling information channels that humans can't normally control. These games give particularly crisp examples of humans wrestling with situations where the environment contains leaked information about their decision-making procedure.

These are only games, yes, but I'm sure that any highly ranked Poker player will tell you that the lessons of Poker extend far beyond the game board. Similarly, I expect that highly ranked Diplomacy players will tell you that Diplomacy teaches you many lessons about how people broadcast the decisions that they're going to make, and that these lessons are invaluable in everyday life.

I am not a professional negotiator, but I further imagine that top-tier negotiators expend significant effort exploring how their mindsets are tied to their unconscious signals.

On a more personal scale, some very simple scenarios (like whether you can get let into a farmhouse on a rainy night after your car breaks down) are somewhat "Newcomblike".

I know at least two people who are unreliable and untrustworthy, and who blame the fact that they can't hold down jobs (and that nobody cuts them any slack) on bad luck rather than on their own demeanors. Both consistently believe that they are taking the best available action whenever they act unreliable and untrustworthy. Both brush off the idea of "becoming a sucker". Neither of them is capable of acting unreliable while signaling reliability. Both of them would benefit from actually becoming trustworthy.

Now, of course, people can't suddenly "become reliable", and akrasia is a formidable enemy to people stuck in these negative feedback loops. But nevertheless, you can see how this problem has a hint of Newcomblikeness to it.

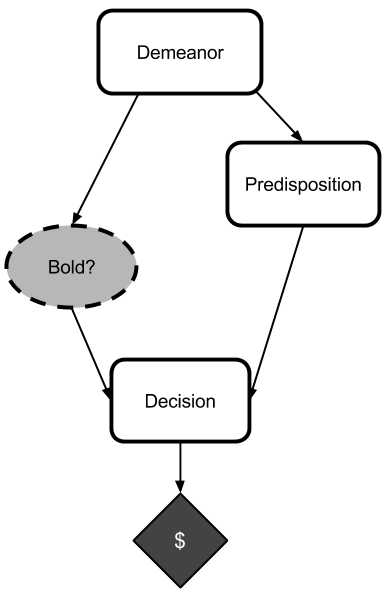

In fact, recommendations of this form — "You can't signal trustworthiness unless you're trustworthy" — are common. As an extremely simple example, let's consider a shy candidate going in to a job interview. The candidate's demeanor (confident or shy) will determine the interviewer's predisposition towards or against the candidate. During the interview, the candidate may act either bold or timid. Then the interviewer decides whether or not to hire the candidate.

If the candidate is confident, then they will get the job (worth $100,000) regardless of whether they are bold or timid. If they are shy and timid, then they will not get the job ($0). If, however, thy are shy and bold, then they will get laughed at, which is worth -$10. Finally, though, a person who knows they are going to be timid will have a shy demeanor, whereas a person who knows they are going to be bold will have a confident demeanor.

It may seem at first glance that it is better to be timid than to be bold, because timidness only affects the outcome if the interviewer is predisposed against the candidate, in which case it is better to be timid (and avoid being laughed at). However, if the candidate knows that they will reason like this (in the interview) then they will be shy before the interview, which will predispose the interviewer against them. By contrast, if the candidate precommits to being bold (in this simple setting) then the will get the job.

Someone reasoning using CDT might reason as follows when they're in the interview:

I can't tell whether they like me or not, and I don't want to be laughed at, so I'll just act timid.

To people who reason like this, we suggest avoiding causal reasoning during the interview.

And, in fact, there are truckloads of self-help books dishing out similar advice. You can't reliably signal trustworthiness without actually being trustworthy. You can't reliably be charismatic without actually caring about people. You can't easily signal confidence without becoming confident. Someone who cannot represent these arguments may find that many of the benefits of trustworthiness, charisma, and confidence are unavailable to them.

Compare the advice above to our analysis of CDT in the mirror token trade, where we say "You can't keep your token while the opponent gives theirs away". CDT, which can't represent this argument, finds that the high payoff is unavailable to it. The analogy is exact: CDT fails to represent precisely this sort of reasoning, and yet this sort of reasoning is common and useful among humans.

6

That's not to say that CDT can't address these problems. A CDT agent that knows it's going to face the above interview would precommit to being bold — but this would involve using something besides causal counterfactual reasoning during the actual interview. And, in fact, this is precisely one of the arguments that I'm going to make in future posts: a sufficiently intelligent artificial system using CDT to reason about its choices would self-modify to stop using CDT to reason about its choices.

We've been talking about Newcomblike problems in a very human-centric setting for this post. Next post, we'll dive into the arguments about why an artificial agent (that doesn't share our vast suite of social signaling tools, and which lacks our shared humanity) may also expect to face Newcomblike problems and would therefore self-modify to stop using CDT.

This will lead us to more interesting questions, such as "what would it use?" (spoiler: we don't quite know yet) and "would it self-modify to fix all of CDT's flaws?" (spoiler: no).

111 comments

Comments sorted by top scores.

comment by dankane · 2014-09-25T16:20:34.976Z · LW(p) · GW(p)

Newcomblike problems occur whenever knowledge about what decision you will make leaks into the environment. The knowledge doesn't have to be 100% accurate, it just has to be correlated with your eventual actual action.

This is far too general. The way in which information is leaking into the environment is what separates Newcomb's problem from the smoking lesion problem. For your argument to work you need to argue that whatever signals are being picked up on would change if the subject changed their disposition, not merely that these signals are correlated with the disposition.

Replies from: So8res, dankane, dankane↑ comment by dankane · 2014-09-25T17:59:48.705Z · LW(p) · GW(p)

Relatedly, with your interview example, I think that perhaps a better model is that whether a person is confident or shy is not depending on whether they believe that they will be bold or not, but upon the degree to which they care about being laughed at. If you are confident, you don't care about being laughed at and might as well be bold. If you are afraid of being laughed at, you already know that you are shy and thus do not gain anything by being bold.

↑ comment by dankane · 2014-09-25T17:44:12.784Z · LW(p) · GW(p)

I think my bigger point is that you don't seem to make any real argument as to which case we are in. For example, consider the following model of how people's perception of my trustworthiness might be correlated to my actual trustworthiness: There are two causal chains: My values -> Things I say -> Peoples' perceptions My values -> My actions So if I value trustworthiness, I will not, for example talk much about wanting to avoid being sucker (in contexts where it would refer to be doing trustworthy things). This will influence peoples' perceptions of whether or not I am trustworthy. Furthermore, if I do value trustworthiness, I will want to be trustworthy.

This setup makes things look very much like the smoking lesion problem. A CDT agent that values trustworthiness will be trustworthy because they place intrinsic value in it. A CDT agent that does not value trustworthiness will be perceived as being untrustworthy. Simply changing their actions will not alter this perception, and therefore they will fail to be trustworthy in situations where it benefits them, and this is the correct decision.

Now you might try to break the causal link: My values -> Things that I say And doing so is certainly possible (I mean you can have spies that successfully pretend to be loyal for extended periods without giving themselves away). On the other hand, it might not happen often for several possible reasons: A) Maintaining a facade at all times is exhausting (and thus imposes high costs) B) Lying consistently is hard (as in too computationally expensive) C) The right way to lie consistently, is to simulate the altered value set, but this may actually lead to changing your values (standard advice for become more confident is pretending to be confident, right?).

So yes, in this model an non-trust-valuing and self-modifying CDT agent will self-modify, but it will need to self-modify its values rather than its decision theory. Using a decision theory that is trustworthy despite not intrinsically valuing it doesn't help.

comment by William_Quixote · 2014-09-24T19:18:08.402Z · LW(p) · GW(p)

Yay!

I realize that "yay!" Isn't really much of a comment, but I was waiting for this and now it's here. The poster has made the world a happier place.

Replies from: Regex↑ comment by Regex · 2014-10-04T11:42:38.579Z · LW(p) · GW(p)

https://www.youtube.com/watch?v=DLTZctTG6cE

I think "yay!" is a perfect comment when also given a certain shy pegasus.

comment by owencb · 2014-09-24T19:29:23.922Z · LW(p) · GW(p)

Thanks, this was one of the more insightful things I remember reading about decision theory.

You've argued that many human situations are somewhat Newcomblike. Do we have a decision theory which deals cleanly with this continuum? (where the continuum is expressed for instance via the degree of correlation between your action and the other player's action)

comment by bryjnar · 2014-09-24T21:56:09.754Z · LW(p) · GW(p)

Fantastic post, I think this is right on the money.

Many more Newcomblike scenarios simply don't feel like decision problems: people present ideas to us in specific ways (depending upon their model of how we make choices) and most of us don't fret about how others would have presented us with different opportunities if we had acted in different ways.

I think this is a big deal. Part of the problem is that the decision point (if there was anything so firm) is often quite temporally distant from the point at which the payoff happens. The time when you "decide" to become unreliable (or the period in which you become unreliable) may be quite a while before you actually feel the ill effects of being unreliable.

comment by adam_strandberg · 2014-09-25T16:50:07.777Z · LW(p) · GW(p)

Yes, thank you for writing this- I've been meaning to write something like it for a while and now I don't need to! I initially brushed Newcomb's Paradox off as an edge case and it took me much longer than I would have liked to realize how universal it was. A discussion of this type should be included with every introduction to the problem to prevent people from treating it as just some pointless philosophical thought experiment.

comment by William_Quixote · 2014-09-24T19:23:49.991Z · LW(p) · GW(p)

More substantively, can we express mathematically how the correlation between leaked signal and final choice effects the degree of sub optimality in final payouts?

Naively in the actual Newcombe's problem if omega is only correct 1/999,000+epsilon percent of the time then CDT seems to do about as well as whatever theory that solves this problem. Is there a known general case for this reasoning?

Replies from: Vaniver, DavidS↑ comment by Vaniver · 2014-09-25T17:13:40.578Z · LW(p) · GW(p)

Naively in the actual Newcombe's problem if omega is only correct 1/999,000+epsilon percent of the time then CDT seems to do about as well as whatever theory that solves this problem.

This is not quite correct; this comment hints at why. CDT will sever the causal links pointing in to your decision, and so if you don't think that what you choose to do will affect what Omega has guessed in the past, then it doesn't matter how good a guesser you think Omega is.

The reason Newcomb's Problem proper causes such headache and discussion is, in my mind, a failure to separate what causation means in reality and what causation means in decision theory. A model of Newcomb's problem proper which has our decision causing Omega's prediction violates realistic assumptions that the future cannot cause the past; a model of Newcomb's problem proper which has our decision not causing Omega's prediction violates the problem statement that Omega is a perfect predictor (i.e. we don't have an arrow, which implies two variables are independent, but in fact those variables are dependent).

If you discard the requirement that causes seem physically reasonable, then CDT can reason in the general case here. (You just stick the probabilistic depedence in like you would any other.) The issue is that, in reality, requiring influences to be real makes good sense!

Replies from: William_Quixote↑ comment by William_Quixote · 2014-09-25T17:26:00.067Z · LW(p) · GW(p)

I think my original post may have been unclear. Sorry about that.

What I meant was not that how accurate omega is impacts what CDC does. What I meant was that the accuracy impacts how much "pick up" you can get from a better theory. So if omega is perfect one boxing get you 1,000,000 vs 1000 from two boxing for an increase of 999,000. If omega is less than perfect, then sometimes the one boxer gets nothing or the two boxer gets 1001000. This brings their average results closer. At some accuracy, P, CDC and the theory which solves the problem and correctly chooses to one box do almost equally well.

Omegas accuracy is related to the information leakage about the choosers decision theory.

Replies from: Vaniver↑ comment by Vaniver · 2014-09-25T17:40:43.038Z · LW(p) · GW(p)

What I meant was that the accuracy impacts how much "pick up" you can get from a better theory.

Agreed. Because of the simplicity of Newcomb's proper, I think this is going to make for an unimpressive graph, though: the rewards are linear in Omega's accuracy P, so it should just be a simple piecewise function for the clever theory, diverging from the two-boxer at the low accuracy and eventually reaching the increase of $999,000 at P=1.

↑ comment by DavidS · 2014-10-03T20:09:54.218Z · LW(p) · GW(p)

"Naively in the actual Newcombe's problem if omega is only correct 1/999,000+epsilon percent of the time…"

I'd like to argue with this by way of a parable. The eccentric billionaire, Mr. Psi, invites you to his mansion for an evening of decision theory challenges. Upon arrival, Mr. Psi's assistant brings you a brandy and interviews you for hours about your life experiences, religious views, favorite philosophers, ethnic and racial background … You are then brought into a room. In front of you is a transparent box with a $1 bill in it, and an opaque box. Mr. Psi explains:

"You may take just the solid box, or both boxes. If I predicted you take one box, then that box contains $1000, otherwise it is empty. I am not as good at this game as my friend Omega, but out of my last 463 games, I predicted "one box" 71 times and was right 40 times out of 71; I picked "two boxes" 392 times and was right 247 times out of 392. To put it another way, those who one-boxed got an average of (40$1000+145$0)/185 = $216 and those who two-boxed got an average of (31$1001+247$1)/278=$113. "

So, do you one-box?

"Mind if I look through your records?" you say. He waves at a large filing cabinet in the corner. You read through the volumes of records of Mr. Psi's interviews, and discover his accuracy is as he claims. But you also notice something interesting (ROT13): Ze. Cfv vtaberf nyy vagreivrj dhrfgvbaf ohg bar -- ur cynprf $1000 va gur obk sbe gurvfgf naq abg sbe ngurvfgf. link.

Still willing to say you should one-box?

By the way, if it bothers you that the odds of $1000 are less than 50% no matter what, I also could have made Mr. Psi give money to 99/189 one boxers (expected value $524) and only to 132/286 two boxers (expected value $463) just by hfvat gur lrne bs lbhe ovegu (ROT13). This strategy has a smaller difference in expected value, and a smaller success rate for Mr. Psi, but might be more interesting to those of you who are anchoring on $500.

comment by bramflakes · 2014-09-24T19:41:23.689Z · LW(p) · GW(p)

Has anyone written at length about the evolution of cooperation in humans in this kind of Newcomblike context? I know there's been oceans of ink spent from IPD perspectives, but what about from the acausal angle?

Replies from: None↑ comment by [deleted] · 2014-09-25T23:39:02.852Z · LW(p) · GW(p)

Interesting note: the genetic and cultural features coding for "acausal" social reasoning on the part of the human agent actually have a direct causal influence on the events. They are the physical manifestation of TDT's logical nodes.

comment by IlyaShpitser · 2014-09-28T13:27:37.050Z · LW(p) · GW(p)

Thanks for doing this series!

I thought you were going to say that humans play Newcomb-like games with themselves, where a "disordered soul" doesn't bargain with itself properly. :)

comment by Chris_Leong · 2019-12-05T23:16:14.238Z · LW(p) · GW(p)

I think this article has some truth in it, but that it also overstates its case. It seems that it'll only be certain cases where your demeanour at the time you are read will correlate with your final decision. Like let's suppose you arrive in town for an imperfect Parfit's Hitchhiker and someone gives you an argument for not paying that hadn't occurred to you before. Then it seems like you should be able to defect on the basis of this argument, without affecting the facial reading at the time. Of course, it isn't quite this simple. If you thought it was likely that you might encounter a new argument for defecting, even if you didn't know what it might be yet, then that might change your facial expression. Or if you were already subconsciously aware of the argument, but it hadn't yet risen to the level of consciousness, then that might change how you are read as well.

comment by JoshuaFox · 2014-10-10T13:27:19.947Z · LW(p) · GW(p)

The palm example is a bit confusing. Palms don't really tell the future: There is no direct causal link (unless someone listens to a palm-reader!). It would be much better if you gave a different example of confusing causality and correlation where there really was correlation.

It reminds me of Eliezer's example of the machine learning system that seemed to be finding camouflaged tanks, but in fact was confused by the sunniness of the different sets of photos: As far as I can tell that never happened.

Then there is the decision theory example of Solomon wanting to sleep with Bathsheba, which confusingly morphs into a discussion of Oedipal complexes if you're not paying attention. (As Eliezer points out, this example was a originally a follow-on to another story involving King David and Bathsheba, not that that makes it any less confusing.

And it appears that architectural spandrels, after which biological spandrels are named, are not really spandrels) in the originally intended sense. What a mess!

On the other hand, I am glad that Eliezer worked hard to change the standard "Smoking Lesion" story to a fictional chewing gum example, since smoking indeed causes cancer. But that was a fictional story, and Alex Altair did much better in coming up with a real (or at least plausibly real story about toxoplasmosis.

comment by JoshuaFox · 2014-10-10T13:33:08.215Z · LW(p) · GW(p)

I'd suggest not using palm reading as an example, since palm lines really do not affect the future (unless you believe they do).

Likewise, the example of a machine learning system misanalyzing sunlight patterns as tanks is apparently fake.

The Solomon and Bathsheba problem looks more a discussion of an Oedipal complex at first. What a mess!

Eliezer had the good sense to transform the Smoking Lesion into a Chewing Gum Lesion, since smoking does in fact cause cancer. But chewing gum doesn't cause lesions. Alex Altair's example of toxoplasmosis was at least plausible.

In summary, examples should be realistic. I can deal with unrealistic thought experiments like dropping people through space in elevators and catching balls tossed from 0.99c trains -- but decision theory is full of counter-factuals, so let's not add in any more confusion.

comment by Vaniver · 2014-09-25T18:09:14.503Z · LW(p) · GW(p)

I agree that an intelligent agent who deals with other intelligent agents should have think in a way that makes reasoning about 'dispositions' and 'reputations' easy, because it's going to be doing it a lot.

But it's unclear to me that this requires a change to decision theory, instead of just a sophisticated model of what the agent's environment looks like that's tuned to thinking about dispositions and reputations. I think that an agent that realizes that the game keeps going on, and that its actions result in both immediate rewards and delayed shifts to its environment (which impact future rewards), will behave like you describe in section 4- it will use concepts like "fairness" and "honor" with an implied numerical value attached, because those are its estimates of how taking an anti-social action now will hurt it in the future, which it balances against the present gain to decide whether or not to take the action. And a CDT agent, with the right world-model, seems to me like it will do fine (which is my opinion about the proper Newcomb's problem, as well as Newcomb-like problems). I agree with eli_sennesh in this comment thread that getting the precise value of reputational effects requires actual prescience, but it seems to me that we can estimate it well enough to get along (though it seems possible that much of our 'estimation' is biological tuning rather than stored in memory).

A CDT agent that knows it's going to face the above interview would precommit to being bold — but this would involve using something besides causal counterfactual reasoning during the actual interview.

I don't think I buy this interpretation. It seems to me that the CDT agent with a broader scope than 'the immediate future' thinks it's better to not break the precommitment than to break it (in situations where the math works out that way), because of the counterfactual effects that breaking a precommitment will have on the future. You become what you do!

Replies from: So8res, owencb↑ comment by So8res · 2014-09-25T18:38:50.895Z · LW(p) · GW(p)

CDT + Precommitments is not pure CDT -- I agree that CDT over time (with the ability to make and keep precommitments) does pretty well, and this is part of what I mean when I talk about how an agent using pure CDT to make every decision would self-modify to stop doing that (e.g., to implement precommitments, which is trivially easy when you can modify your own source code).

Consider the arguments of CDT agents as they twobox, when they claim that they would have liked to precommit but they missed their opportunity -- we can do better by deciding to act as we would have precommitted to act, but this entails using a different decision theory. You can minimize the number of missed opportunities by allowing CDT many opportunities to precommit, but that doesn't change the fact that CDT can't retrocommit.

If you look at the decision-making procedure of something which started out using CDT after it self-modifies a few times, the decision procedure probably won't look like CDT, even though it was implemented by CDT making "precommitments".

And while CDT mostly does well when the games are repeated, there are flaws that CDT won't be able to self-correct (roughly corresponding to CDT's inability to make retrocommitments), these will be the subject of future posts.

Replies from: V_V, Vaniver↑ comment by V_V · 2014-09-28T13:54:52.888Z · LW(p) · GW(p)

Consider the arguments of CDT agents as they twobox, when they claim that they would have liked to precommit but they missed their opportunity

Why would they do that?

CDT two-boxes because CDT simply fails to understand that the content of the box is influenced by its decision. It deliberately uses an incorrect epistemic model.

So when the agent two-boxes and it obtains a reward different than what it had predicted, it will simply think it has been lied to, or if it is one hundred percent, certain that the model was correct, then it will experience a logical contradiction, halt and catch fire.

↑ comment by Vaniver · 2014-09-25T20:16:16.995Z · LW(p) · GW(p)

CDT + Precommitments is not pure CDT

By CDT I mean calculating utilities using:

=\sum_jP(O_j%7Cdo(A))D(O_j))

Most arguments that I see for the deficiency of CDT rest on additional assumptions that are not required by CDT. I don't see how we need to modify that equation to take into account precommitments, rather than modifying D(O_j).

Consider the arguments of CDT agents as they twobox, when they claim that they would have liked to precommit but they missed their opportunity

For example, this requires the additional assumption that the future cannot cause the past. In the presence of a supernatural Omega, that assumption is violated.

that doesn't change the fact that CDT can't retrocommit.

Outside of supernatural opportunities, it's not obvious to me that this is a bug. I'll wait for you to make the future arguments at length, unless you want to give a brief version.

Replies from: So8res, private_messaging↑ comment by So8res · 2014-09-26T18:27:53.921Z · LW(p) · GW(p)

Right, you can modify the function that evaluates outcomes to change the payoffs (e.g. by making exploitation in the PD have a lower payoff that mutual cooperation, because it "sullies your honor" or whatever) and then CDT will perform correctly. But this is trivially true: I can of course cause that equation to give me the "right" answer by modifying D(O_j) to assign 1 to the "right" outcome and 0 to all other outcomes. The question is how you go about modifying D to identify the "right" answer.

I agree that in sufficiently repetitive environments CDT readily modifies the D function to alter the apparent payoffs in PD-like problems (via "precommitments"), but this is still an unsatisfactory hack.

First of all, the construction of the graph is part of the decision procedure. Sure, in certain situations CDT can fix its flaws by hiding extra logic inside D. However, I'd like to know what that logic is actually doing so that I can put it in the original decision procedure directly.

Secondly, CDT can't (or, rather, wouldn't) fix all of its flaws by modifying D -- it has some blind spots, which I'll go into later.

Outside of supernatural opportunities, it's not obvious to me that this is a bug. I'll wait for you to make the future arguments at length, unless you want to give a brief version.

(I don't understand where your objection is here. What do you mean by 'supernatural'? Do you think you should always twobox in a Newcomb's problem where Omega is played by Paul Eckman, a good but imperfect predictor?)

You find yourself in a PD against a perfect copy of yourself. At the end of the game, I will remove the money your clone wins, destroy all records of what you did, re-merge you with your clone, erase both our memories of the process, and let you keep the money that you won (you will think it is just a gift to recompense you for sleeping in my lab for a few hours). You had not previously considered this situation possible, and had made no precommitments about what to do in such a scenario. What do you think you should do?

Also, what do you think the right move is on the true PD?

Replies from: Strange7, Vaniver↑ comment by Strange7 · 2014-10-02T23:58:06.926Z · LW(p) · GW(p)

You find yourself in a PD against a perfect copy of yourself. At the end of the game, I will remove the money your clone wins, destroy all records of what you did, re-merge you with your clone, erase both our memories of the process, and let you keep the money that you won (you will think it is just a gift to recompense you for sleeping in my lab for a few hours). You had not previously considered this situation possible, and had made no precommitments about what to do in such a scenario. What do you think you should do?

Given that you're going to erase my memory of this conversation and burn a lot of other records afterward, it's entirely possible that you're lying about whether it's me or the other me whose payout 'actually counts.' Makes no difference to you either way, right? We all look the same, and telling us different stories about the upcoming game would break the assumption of symmetry. Effectively, I'm playing a game of PD followed by a special step in which you flip a fair coin and, on heads, swap my reward with that of the other player.

So, I'd optimize for the combined reward to both myself and my clone, which is to say, for the usual PD payoff matrix, cooperate. If the reward for defecting when the other player cooperates is going to be worth drastically more to my postgame gestalt, to the point that I'd accept a 25% or less chance of that payout in trade for virtual certainty of the payout for mutual cooperation, I would instead behave randomly.

Replies from: Jiro↑ comment by Jiro · 2014-10-03T02:49:54.801Z · LW(p) · GW(p)

Saying "I wouldn't trust someone like that to tell the truth about whose payout counts" is fighting the hypothetical.

I don't think you need to assume the other party is a clone; you just need to assume that both you and the other party are perfect reasoners.

Replies from: VAuroch, Strange7↑ comment by Strange7 · 2014-10-03T16:27:15.669Z · LW(p) · GW(p)

fighting the hypothetical

It's established in the problem statement that the experimenter is going to destroy or falsify all records of what transpired during the game, including the fact that a game even took place, presumably to rule out cooperation motivated by reputational effects. If you want a perfectly honest and trustworthy experimenter, establish that axiomatically, or at least don't establish anything that directly contradicts.

Assuming that the other party is a clone with identical starting mind-state makes it a much more tractable problem. I don't have much idea how perfect reasoners behave; I've never met one.

↑ comment by Vaniver · 2014-09-27T00:46:38.868Z · LW(p) · GW(p)

Right, you can modify the function that evaluates outcomes to change the payoffs (e.g. by making exploitation in the PD have a lower payoff that mutual cooperation, because it "sullies your honor" or whatever) and then CDT will perform correctly. But this is trivially true: I can of course cause that equation to give me the "right" answer by modifying D(O_j) to assign 1 to the "right" outcome and 0 to all other outcomes. The question is how you go about modifying D to identify the "right" answer.

I agree with this. It seems to me that answers about how to modify D are basically questions about how to model the future; you need to price the dishonor in defecting, which seems to me to require at least an implicit model of how valuable honor will be over the course of the future. By 'honor,' I just mean a computational convenience that abstracts away a feature of the uncertain future, not a terminal value. (Humans might have this built in as a terminal value, but that seems to be because it was cheaper for evolution to do so than the alternative.)

I agree that in sufficiently repetitive environments CDT readily modifies the D function to alter the apparent payoffs in PD-like problems (via "precommitments"), but this is still an unsatisfactory hack.

I don't think I agree with the claim that this is an unsatisfactory hack. To switch from decision-making to computer vision as the example, I hear your position as saying that neural nets are unsatisfactory for solving computer vision, so we need to develop an extension, and my position as saying that neural nets are the right approach, but we need very wide nets with very many layers. A criticism of my position could be "but of course with enough nodes you can model an arbitrary function, and so you can solve computer vision like you could solve any problem," but I would put forward the defense that complicated problems require complicated solutions; it seems more likely to me that massive databases of experience will solve the problem than improved algorithmic sophistication.

I don't understand where your objection is here. What do you mean by 'supernatural'?

In the natural universe, it looks to me like opportunities that promise retrocausation turn out to be scams, and this is certain enough to be called a fundamental property. In hypothetical universes, this doesn't have to be the case, but it's not clear to me how much effort we should spend on optimizing hypothetical universes. In either case, it seems to me this is something that the physics module (i.e. what gives you P(O_j|do(A))) should compute, and only baked into the decision theory by the rules about what sort of causal graphs you think are likely.

Do you think you should always twobox in a Newcomb's problem where Omega is played by Paul Eckman, a good but imperfect predictor?

Given that professional ethicists are neither nicer nor more dependable than similar people of their background, I'll jump on the signalling grenade to point out that any public discussion of these sorts of questions is poisoned by signalling. If I expected that publicly declaring my willingness to one-box would increase the chance that I'm approached by Newcomb-like deals, then obviously I would declare my willingness to one-box. As it turns out, I'm trustworthy and dependable in real life, because of both a genetic predisposition towards pro-social behavior (including valuing things occurring after my death) and a reflective endorsement of the myriad benefits of behaving in that way.

You had not previously considered this situation possible, and had made no precommitments about what to do in such a scenario.

I decided a long time ago to cooperate with myself as a general principle, and I think that was more a recognition of my underlying personality than it was a conscious change.

If the copy is perfect, it seems unreasonable to me to not draw a causal arrow between my action and my copy's action, as I cannot justify the assumption that my action will be independent of my perfect copy's action. Estimating that the influence is sufficiently high, then it seems that (3,3) is a better option that (0,0). I'm moderately confident a hypothetical me which knew about causal models but hadn't thought about identity or intertemporal cooperation would use the same line of reasoning to cooperate.

Replies from: So8res↑ comment by So8res · 2014-09-27T01:07:48.976Z · LW(p) · GW(p)

In either case, it seems to me this is something that the physics module (i.e. what gives you P(O_j|do(A))) should compute, and only baked into the decision theory by the rules about what sort of causal graphs you think are likely.

The problem is the do(A) part: the do(.) function ignores logical acausal connections between nodes. That was the theme of this post.

If the copy is perfect, it seems unreasonable to me to not draw a causal arrow between my action and my copy's action, as I cannot justify the assumption that my action will be independent of my perfect copy's action.

I agree! If the copy is perfect, there is a connection. However, the connection is not a causal one.

Obviously you want to take the action that maximizes your expected utility, according to probability-weighted outcomes. The question is how you check the outcome that would happen if you took a given action.

Causal counterfactual reasoning prescribes evaluating counterfactuals by intervening on the graph using the do(.) function. This (roughly) involves identifying your action node A, ignoring the causal ancestors, overwriting the node with the function const a (where a is the action under consideration) and seeing what happens. This usually works fine, but there are some cases where this fails to correctly compute the outcomes (namely, where others are reasoning about the contents A, where their internal representations of A were not affected by your do(A=a)).

This is not fundamentally a problem of retrocausality, it's fundamentally a problem of not knowing how to construct good counterfactuals. What does it mean to consider that a deterministic algorithm returns something that it doesn't return? do(.) says that it means "imagine you were not you, but were instead const a while other people continue reasoning as if you were you". It would actually be really surprising if this worked out in situations where others have internal representations of the contents of A (which do(A=.) stomps all over).

You answered that you intuitively feel like you should draw an arrow between you and your clone in the above thought experiment. I agree! But constructing a graph like this (where things that are computed via the same process must have the same output) is actually not something that CDT does. This problem in particular was the motivation behind TDT (which uses a different function besides do(.) to construct counterfactuals that preserve the fact that identical computations will have identical outputs). It sounds like we probably have similar intuitions about decision theory, but perhaps different ideas about what the do(.) function is capable of?

↑ comment by Vaniver · 2014-09-27T03:56:09.948Z · LW(p) · GW(p)

This usually works fine, but there are some cases where this fails to correctly compute the outcomes (namely, where others are reasoning about the contents A, where their internal representations of A were not affected by your do(A=a)).

I still think this should be solved by the physics module.

For example, consider two cases. In case A, Ekman reads everything you've ever written on decision theory before September 26th, 2014, and then fills the boxes as if he were Omega, and then you choose whether to one-box or two-box. Ekman's a good psychologist, but his model of your mind is translucent to you at best- you think it's more likely than not that he'll guess correctly what you'll pick, but know that it's just mediated by what you've written that you can't change.

In case B, Ekman watches your face as you choose whether to press the one-box button or the two-box button without being able to see the buttons (or your finger), and then predicts your choice. Again, his model of your mind is translucent at best to you; probably he'll guess correctly, but you don't know what specifically he's basing his decision off of (and suppose that even if you did, you know that you don't have sufficient control over your features to prevent information from leaking).

It seems to me that the two cases deserve different responses- in case A, you don't think your current thoughts will impact Ekman's move, but in case B, you do. In a normal token trade, you don't think your current thoughts will impact your partner's move, but in a mirror token trade, you do. Those differences in belief are because of actual changes in the perceived causal features of the situation, which seems sensible to me.

That is, I think this is a failure of the process you're using to build causal maps, not the way you're navigating those causal maps once they're built. I keep coming back to the criterion "does a missing arrow imply independence?" because that's the primary criterion for building useful causal maps, and if you have 'logical nodes' like "the decision made by an agent with a template X" then it doesn't make sense to have a copy of that logical node elsewhere that's allowed to have a distinct value.

That is, I agree that this question is important:

What does it mean to consider that a deterministic algorithm returns something that it doesn't return?

But my answer to it is "don't try to intervene at a node unless your causal model was built under the assumption you could intervene at that node." The mirror token trade causal map you used in this post works if you intervene at 'template,' but I argue it doesn't work if you intervene at 'give?' unless there's an arrow that points from 'give?' to 'their decision.'

It sounds like we probably have similar intuitions about decision theory, but perhaps different ideas about what the do(.) function is capable of?

I think I see do(.) operator as less capable than you do; in cases where the physicality of our computation matters then we need to have arrows pointing out of the node where we intervene that we don't need when we can ignore the impacts of having to physically perform computations in reality. Furthermore, it seems to me that when we're at the level where how we physically process possibilities matters, 'decision theory' may not be a useful concept anymore.

Replies from: So8res↑ comment by So8res · 2014-09-27T23:23:51.485Z · LW(p) · GW(p)

Cool, it sounds like we mostly agree. For instance, I agree that once you set up the graph correctly, you can intervene do(.) style and get the Right Answer. The general thrust of these posts is that "setting up the graph correctly" involves drawing in lines / representing world-structure that is generally considered (by many) to be "non-causal".

Figuring out what graph to draw is indeed the hard part of the problem -- my point is merely that "graphs that represent the causal structure of the universe and only the causal structure of the universe" are not the right sort of graphs to draw, in the same way that a propensity theory of probability that only allows information to propagate causally is not a good way to reason about probabilities.

Figuring out what sort of graphs we do want to intervene on requires stepping beyond a purely causal decision theory.

↑ comment by private_messaging · 2014-09-26T18:10:27.953Z · LW(p) · GW(p)

Yeah, the existence of classification into 'future' and 'past' and 'future' not causing 'past', and what is exactly 'future', those are - ideally - a matter of the model of physics employed. Currently known physics already doesn't quite work like this - it's not just the future that can't cause the present, but anything outside the past lightcone.

All those decision theory discussions leave me with a strong impression that 'decision theory' is something which is applied almost solely to the folk physics. As an example of a formalized decision making process, we have AIXI, which doesn't really do what philosophers say either CDT or EDT does.

Replies from: lackofcheese↑ comment by lackofcheese · 2014-09-26T18:50:43.058Z · LW(p) · GW(p)

Actually, I think AIXI is basically CDT-like, and I suspect that it would two-box on Newcomb's problem.

At a highly abstract level, the main difference between AIXI and a CDT agent is that AIXI has a generalized way of modeling physics (but it has a built-in assumption of forward causality), whereas the CDT agent needs you to tell it what the physics is in order to make a decision.

The optimality of the AIXI algorithm is predicated on viewing itself as a "black box" as far as its interactions with the environment are concerned, which is more or less what the CDT agent does when it makes a decision.

Replies from: V_V, private_messaging↑ comment by V_V · 2014-09-28T13:36:33.121Z · LW(p) · GW(p)

Actually, I think AIXI is basically CDT-like, and I suspect that it would two-box on Newcomb's problem.

AIXI is a machine learning (hyper-)algorithm, hence we can't expect it to perform better than a random coin toss on a one-shot problem.

If you repeatedly pose Newcomb's problem to an AIXI agent, it will quickly learn to one-box.

Trivially, AIXI doesn't model the problem acausal structure in any way. For AIXI, this is just a matter of setting a bit and getting a reward, and AIXI will easily figuring out that setting its decision bit to "one-box" yields an higher expected reward that setting it to "two-box".

In fact, you don't even need an AIXI agent to do that: any reinforcement learning toy agent will be able to do that.

↑ comment by lackofcheese · 2014-09-28T13:44:59.331Z · LW(p) · GW(p)

The problem you're discussing is not Newcomb's problem; it's a different problem that you've decided to apply the same name to.

It is a crucial part of the setup of Newcomb's problem that the agent is presented with significant evidence about the nature of the problem. This applies to AIXI as well; at the beginning of the problem AIXI needs to be presented with observations that give it very strong evidence about Omega and about the nature of the problem setup. From Wikipedia:

"By the time the game begins, and the player is called upon to choose which boxes to take, the prediction has already been made, and the contents of box B have already been determined. That is, box B contains either $0 or $1,000,000 before the game begins, and once the game begins even the Predictor is powerless to change the contents of the boxes. Before the game begins, the player is aware of all the rules of the game, including the two possible contents of box B, the fact that its contents are based on the Predictor's prediction, and knowledge of the Predictor's infallibility. The only information withheld from the player is what prediction the Predictor made, and thus what the contents of box B are."

It seems totally unreasonable to withhold information from AIXI that would be given to any other agent facing the Newcomb's problem scenario.

Replies from: V_V, Strange7↑ comment by V_V · 2014-09-28T14:39:27.867Z · LW(p) · GW(p)

That would require the AIXI agent to have been pretrained to understand English (or some language as expressive as English) and have some experience at solving problems given a verbal explanation of the rules.

In this scenario, the AIXI internal program ensemble concentrates its probability mass on programs which associate each pair of one English specification and one action to a predicted reward. Given the English specification, AIXI computes the expected reward for each action and outputs the action that maximizes the expected reward.

Note that in principle this can implement any computable decision theory. Which one it would choose depend on the agent history and the intrinsic bias of its UTM.

It can be CDT, EDT, UDT, or, more likely, some approximation of them that worked well for the agent so far.

↑ comment by lackofcheese · 2014-09-28T15:08:43.426Z · LW(p) · GW(p)

That would require the AIXI agent to have been pretrained to understand English (or some language as expressive as English) and have some experience at solving problems given a verbal explanation of the rules.

I don't think someone posing Newcomb's problem would be particularly interested in excuses like "but what if the agent only speaks French!?" Obviously as part of the setup of Newcomb's problem AIXI has to be provided with an epistemic background that is comparable to that of its intended target audience. This means it doesn't just have to be familiar with English, it has to be familiar with the real world, because Newcomb's problem takes place in the context of the real world (or something very much like it).

I think you're confusing two different scenarios:

- Someone training an AIXI agent to output problem solutions given problem specifications as inputs.

- Someone actually physically putting an AIXI agent into the scenario stipulated by Newcomb's problem.

The second one is Newcomb's problem; the first is the "what is the optimal strategy for Newcomb's problem?" problem.

It's the second one I'm arguing about in this thread, and it's the second one that people have in mind when they bring up Newcomb's problem.

Replies from: V_V↑ comment by V_V · 2014-09-28T15:37:18.348Z · LW(p) · GW(p)

Then AIXI ensemble will be dominated by programs which associate "real world" percepts and actions to predicted rewards.

The point is that there is no way, short of actually running the (physically impossible) experiment, that we can tell whether the behavior of this AIXI agent will be consistent with CDT, EDT, or something else entirely.

↑ comment by Strange7 · 2014-10-03T16:05:45.557Z · LW(p) · GW(p)

Would it be a valid instructional technique to give someone (particularly someone congenitally incapable of learning any other way) the opportunity to try out a few iterations of the 'game' Omega is offering, with clearly denominated but strategically worthless play money in place of the actual rewards?

Replies from: lackofcheese↑ comment by lackofcheese · 2014-10-04T04:49:22.622Z · LW(p) · GW(p)

The main issue with that is that Newcomb's problem is predicated on the assumption that you prefer getting a million dollars to getting a thousand dollars. For the play money iterations, that assumption would not hold.

The second issue with iterating Newcomb's more generally is that it gives the agent an opportunity to precommit to one-boxing. The problem is more interesting and more difficult if you face it without having had that opportunity.

Replies from: Strange7↑ comment by private_messaging · 2014-09-27T01:19:09.964Z · LW(p) · GW(p)

I'm not sure it really makes an assumption of causality, let alone a forward one. (Apart from the most rudimentary notion that actions determine future input) . Facing an environment with two manipulators seemingly controlled by it, it wont have a hang up over assuming that it equally controls both. Indeed it has no reason to privilege one. Facing an environment with particular patterns under its control, it will assume it controls instances of said pattern. It doesn't view itself as anything at all. It has inputs and outputs, it builds a model of whats inbetween from the experience, if there are two idenical instances of it, it learns a weird model.

Edit: and what it would do in Newcombs, itll one box some and two box some and learn to one box. Or at least, the variation that values information will.

Replies from: lackofcheese↑ comment by lackofcheese · 2014-09-27T02:53:14.858Z · LW(p) · GW(p)

First of all, for any decision problem it's an implicit assumption that you are given sufficient information to have a very high degree of certainty about the circumstances of the problem. If presented with the appropriate evidence, AIXI should be convinced of this. Indeed, given its nature as an "optimal sequence-predictor", it should take far less evidence to convince AIXI than it would take to convince a human. You are correct that if it was presented Newcomb's problem repeatedly then in the long run it should eventually try one-boxing, but if it's highly convinced it could take a very long time before it's worth it for AIXI to try it.

Now, as for an assumption of causality, the model that AIXI has of the agent/environment interaction is based on an assumption that both of them are chronological Turing machines---see the description here. I'm reasonably sure this constitutes an assumption of forward causality.

Similarly, what AIXI would do in Newcomb's problem depends very specifically on its notion of what exactly it can control. Just as a CDT agent does, AIXI should understand that whether or not the opaque box contains a million dollars is already predetermined; in fact, given that AIXI is a universal sequence predictor it should be relatively trivial for it to work out whether the box is empty or full. Given that, AIXI should calculate that it is optimal for it to two-box, so it will two-box and get $1000. For AIXI, Newcomb's problem should essentially boil down to Agent Simulates Predictor.

Ultimately, the AIXI agent makes the same mistake that CDT makes - it fails to understand that its actions are ultimately controlled not by the agent itself, but by the output of the abstract AIXI equation, which is a mathematical construct that is accessible not just to AIXI, but the rest of the world as well. The design of the AIXI algorithm is inherently flawed because it fails to recognize this; ultimately this is the exact same error that CDT makes.

Granted, this doesn't answer the interesting question of "what does AIXI do if it predicts Newcomb's problem in advance?", because before Omega's prediction AIXI has an opportunity to causally affect that prediction.

Replies from: private_messaging↑ comment by private_messaging · 2014-09-27T09:01:06.180Z · LW(p) · GW(p)

I'm reasonably sure this constitutes an assumption of forward causality.

What it doesn't do, is make an assumption that there must be physical sequence of dominoes falling on each other from one singular instance of it, to the effect.

AIXI should understand that whether or not the opaque box contains a million dollars is already predetermined; in fact, given that AIXI is a universal sequence predictor it should be relatively trivial for it to work out whether the box is empty or full.

Not at all. It can't self predict. We assume that the predictor actually runs AIXI equation.

Ultimately, it doesn't know what's in the boxes, and it doesn't assume that what's in the boxes is already well defined (there's certainly codes where it is not), and it can learn it controls contents of the box in precisely the same manner as it has to learn that it controls it's own robot arm or what ever is it that it controls. Ultimately it can do exactly same output->predictor->box contents as it does for output->motor controller->robot arm. Indeed if you don't let it observe 'its own' robot arm, and only let it observe the box, that's what it controls. It has no more understanding that this box labelled 'AIXI' is the output of what it controls, than it has about the predictor's output.

It is utterly lacking this primate confusion over something 'else' being the predictor. The predictor is representable in only 1 way, and that's an extra counter factual insertion of actions into the model.

Replies from: lackofcheese↑ comment by lackofcheese · 2014-09-27T12:24:09.131Z · LW(p) · GW(p)

You need to notice and justify changing the subject.

If I was to follow your line of reasoning, then CDT also one-boxes on Newcomb's problem, because CDT can also just believe that its action causes the prediction. That goes against the whole point of the Newcomb setup - the idea is that the agent is given sufficient evidence to conclude, with a high degree of confidence, that the contents of the boxes are already determined before it chooses whether to one-box or two-box.

AIXI doesn't assume that the causality is made up of a "physical sequence of dominoes falling", but that doesn't really matter. We've stated as part of the problem setup that Newcomb's problem does, in fact, work that way, and a setup where Omega changes the contents of the boxes in advance, rather than doing it after the fact via some kind of magic, is obviously far simpler, and hence far more probable given a Solomonoff prior.

As for the predictor, it doesn't need to run the full AIXI equation in order to make a good prediction. It just needs to conclude that due to the evidence AIXI will assign high probability to the obviously simpler, non-magical explanation, and hence AIXI will conclude that the contents of the box are predetermined, and hence AIXI will two-box.

There is no need for Omega to actually compute the (uncomputable) AIXI equation. It could simply take the simple chain of reasoning that I've outlined above. Moreover, it would be trivially easy for AIXI to follow Omega's chain of reasoning, and hence predict (correctly) that the box is, in fact, empty, and walk away with only $1000.

Replies from: private_messaging↑ comment by private_messaging · 2014-09-27T13:31:15.833Z · LW(p) · GW(p)

If I was to follow your line of reasoning, then CDT also one-boxes on Newcomb's problem, because CDT can also just believe that its action causes the prediction. That goes against the whole point of the Newcomb setup - the idea is that the agent is given sufficient evidence to conclude, with a high degree of confidence, that the contents of the boxes are already determined before it chooses whether to one-box or two-box.

We've stated as part of the problem setup that Newcomb's problem does, in fact, work that way, and a setup where Omega changes the contents of the boxes in advance, rather than doing it after the fact via some kind of magic, is obviously far simpler, and hence far more probable given a Solomonoff prior.

Again, folk physics. You make your action available to your world model at the time t where t is when you take that action. You propagate the difference your action makes (to avoid re-evaluating everything). So you need back in time magic.

Let's look at the equation here: http://www.hutter1.net/ai/uaibook.htm . You have a world model that starts at some arbitrary point well in the past (e.g. big bang), which proceeds from that past into the present, and which takes the list of past actions and the current potential action as an input. Action which is available to the model of the world since it's very beginning. When evaluating potential action 'take 1 box', the model has money in the first box, when evaluating potential action 'take 2 boxes', the model doesn't have money in the first box, and it doesn't do any fancy reasoning about the relation between those models and how those models can and can't differ. It just doesn't perform this time saving optimization of 'let first box content be x, if i take 2 boxes, i get x+1000 > x'.

Replies from: lackofcheese↑ comment by lackofcheese · 2014-09-27T14:48:40.523Z · LW(p) · GW(p)

Why do you need "back in time magic", exactly? That's a strictly more complex world model than the non-back-in-time-magic version. If Solomonoff induction results in a belief in the existence of back-in-time magic when what's happening is just perfectly normal physics, this would be a massive failure in Solomonoff induction itself. Fortunately, no such thing occurs; Solomonoff induction works just fine.

I'm arguing that, because the box already either contains the million or does not, AIXI will (given a reasonable but not particularly large amount of evidence) massively downweight models that do not correctly describe this aspect of reality. It's not doing any kind of "fancy reasoning" or "time-saving optimization", it's simply doing Solomonoff induction, and dong it correctly.

Replies from: private_messaging↑ comment by private_messaging · 2014-09-27T23:42:07.260Z · LW(p) · GW(p)

Then it can, for experiment' sake, take 2 boxes if theres something in the first box, and take 1 otherwise. The box contents are supposedly a result of computing AIXI and as such are not computable; or for a bounded approximation, not approximable. You're breaking your own hypothetical and replacing the predictor (which would have to perform hypercomputation) with something that incidentally coincides. AIXI responds appropriately.

edit: to stpop talking to one another: AIXI does not know if there's money in the first box. The TM where AIXI is 1boxing is an entireliy separate TM from one where AIXI is 2boxing. AIXI does not in any way represent any facts about the relation between those models, such as 'both have same thing in the first box'.

edit2: and , it is absoloutely correct to take 2 boxes if you don't know anything about the predictor. AIXI represents the predictor as the surviving TMs using the choice action value as omega's action to put/not put money in the box. AIXI does not preferentially self identify with the AIXI formula inside the robot that picks boxes, over AIXI formula inside 'omega'.

Replies from: nshepperd↑ comment by nshepperd · 2014-09-28T01:19:43.102Z · LW(p) · GW(p)

If you have to perform hypercomputation to even approximately guess what AIXI would do, then this conversation would seem like a waste of time/

Replies from: lackofcheese↑ comment by lackofcheese · 2014-09-28T02:21:17.701Z · LW(p) · GW(p)

Precisely.

Besides that, if you can't even make a reasoned guess as to what AIXI would do in a given situation, then AIXI itself is pretty useless even as a theoretical concept, isn't it?

Omega doesn't have to actually evaluate the AIXI formula exactly; it can simply reason logically to work out what AIXI will do without performing those calculations. Sure, AIXI itself can't take those shortcuts, but Omega most definitely can. As such, there is no need for Omega to perform hypercomputation, because it's pretty easy to establish AIXI's actions to a very high degree of accuracy using the arguments I've put forth above. Omega doesn't have to be a "perfect predictor" at all.

In this case, AIXI is quite easily able to predict the chain of reasoning Omega takes, and so it can easily work out what the contents of the box are. This straightforwardly results in AIXI two-boxing, and because it's so straightforward it's quite easy for Omega to predict this, and so Omega only fills one box.

The problem with AIXI is not that it preferentially self-identifies with the AIXI formula inside the robot that picks boxes vs the "AIXI formula inside Omega". The problem with AIXI is that it doesn't self-identify with the AIXI formula at all.

One could argue that the simple predictor is "punishing" AIXI for being AIXI, but this is really just the same thing as the CDT agent who thinks Omega is punishing them for being "rational". The point of this example is that if the AIXI algorithm were to output "one-box" instead of "two-box" for Newcomb's problem, then it would get a million dollars. Instead, it only gets $1000.

Replies from: nshepperd, private_messaging↑ comment by nshepperd · 2014-09-28T13:05:28.214Z · LW(p) · GW(p)

Well, to make an object-level observation, it's not entirely clear to me what it means for AIXI to occupy the epistemic state required by the problem definition. The "hypotheses" of AIXI are general sequence predictor programs rather than anything particularly realist. So while present program state can only depend on AIXI's past actions, and not future actions, nothing stops a hypothesis from including a "thunk" that is only evaluated when the program receives the input describing AIXI's actual action. In fact, as long as no observations or rewards depend on the missing information, there's no need to even represent the "actual" contents of the boxes. Whether that epistemic state falls within the problem's precondition seems like a matter of definition.

If you restrict AIXI's hypothesis state to explicit physics simulations (with the hypercomputing part of AIXI treated as a black box, and decision outputs monkeypatched into a simulated control wire), then your argument does follow, I think; the whole issue of Omega's prediction is just seen as some "physics stuff" happening, where Omega "does some stuff" and then fills the boxes, and AIXI then knows what's in the boxes and it's a simple decision to take both boxes.

But, if the more complicated "lazily-evaluating" sort of hypotheses gain much measure, then AIXI's decision starts actually depending on its simulation of Omega, and then the above argument doesn't really work and trying to figure out what actually happens could require actual simulation of AIXI or at least examination of the specific hypothesis space AIXI is working in.

So I suppose there's a caveat to my post above, which is that if AIXI is simulating you, then it's not necessarily so easy to "approximately guess" what AIXI would do (since it might depend on your approximate guess...). In that way, having logically-omniscient AIXI play kind of breaks the Newcomb's Paradox game, since it's not so easy to make Omega the "perfect predictor" he needs to be, and you maybe need to think about how Omega actually works.

Replies from: lackofcheese↑ comment by lackofcheese · 2014-09-28T13:35:12.079Z · LW(p) · GW(p)

I think it's implicit in the Newcomb's problem scenario that it takes place within the constraints of the universe as we know it. Obviously we have to make an exception for AIXI itself, but I don't see a reason to make any further exceptions after that point. Additionally, it is explicitly stated in the problem setup that the contents of the box are supposed to be predetermined, and that the agent is made aware of this aspect of the setup. As far as the epistemic states are concerned, this would imply that AIXI has been presented with a number of prior observations that provide very strong evidential support for this fact.

I agree that AIXI's universe programs are general Turing machines rather than explicit physics simulations, but I don't think that's a particularly big problem. Unless we're talking about a particularly immature AIXI agent, it should already be aware of the obvious physics-like nature of the real world; it seems to me that the majority of AIXI's probability mass should be occupied by physics-like Turing machines rather than by thunking. Why would AIXI come up with world programs that involve Omega making money magically appear or disappear after being presented significant evidence to the contrary?

I can agree that in the general case it would be rather difficult indeed to predict AIXI, but in many specific instances I think it's rather straightforward. In particular, I think Newcomb's problem is one of those cases.

I guess that in general Omega could be extremely complex, but unless there is a reason Omega needs to be that complex, isn't it much more sensible to interpret the problem in a way that is more likely to comport with our knowledge of reality? Insofar as there exist simpler explanations for Omega's predictive power, those simpler explanations should be preferred.

I guess you could say that AIXI itself cannot exist in our reality and so we need to reinterpret the problem in that context, but that seems like a flawed approach to me. After all, the whole point of AIXI is to reason about its performance relative to other agents, so I don't think it makes sense to posit a different problem setup for AIXI than we would for any other agent.

Replies from: V_V, nshepperd↑ comment by V_V · 2014-09-28T16:12:34.715Z · LW(p) · GW(p)

If AIXI has been presented with sufficient evidence that the Newcomb's problem works as advertised, then it must be assigning most of its model probability mass to programs where the content of the box, however internally represented, is correlated to the next decision.

Such programs exist in the model ensemble, hence the question is how much probability mass does AIXI assign to them. If it not enough to dominate its choice, then by definition AIXI has not been presented with enough evidence.

↑ comment by lackofcheese · 2014-09-28T16:45:53.603Z · LW(p) · GW(p)

What do you mean by "programs where the content of the box, however internally represented, is correlated to the next decision"? Do you mean world programs that output $1,000,000 when the input is "one-box" and output $1000 when the input is "two-box"? That seems to contradict the setup of Newcomb's to me; in order for Newcomb's problem to work, the content of the box has to be correlated to the actual next decision, not to counterfactual next decisions that don't actually occur.

As such, as far as I can see it's important for AIXI's probability mass to focus down to models where the box already contains a million dollars and/or models where the box is already empty, rather than models in which the contents of the box are determined by the input to the world program at the moment AIXI makes its decision.

Replies from: V_V↑ comment by V_V · 2014-10-01T13:12:14.270Z · LW(p) · GW(p)

AIXI world programs have no inputs, they just run and produce sequences of triples in the form: (action, percept, reward).

So, let's say AIXI has been just subjected to Newcomb's problem. Assuming that the decision variable is always binary ("OneBox" vs "TwoBox"), of all the programs which produce a sequence consistent with the observed history, we distinguish five classes of programs, depending on the next triple they produce:

1: ("OneBox", "Opaque box contains $1,000,000", 1,000,000)

2: ("TwoBox", "Opaque box is empty", 1,000)

3: ("OneBox", "Opaque box is empty", 0)

4: ("TwoBox", "Opaque box contains $1,000,000", 1,001,000)

5: Anything else (eg. ("OneBox", "A pink elephant appears", 42)).

Class 5 should have a vanishing probability, since we assume that the agent already knows physics.

Therefore:

E("OneBox") = (1,000,000 p(class1) + 0 p(class3)) / (p(class1) + p(class3))

E("TwoBox") = (1,000 p(class2) + 1,001,000 p(class4)) / (p(class2) + p(class4))

Classes 1 and 2 are consistent with the setup of Newcomb's problem, while classes 3 and 4 aren't.

Hence I would say that if AIXI has been presented with enough evidence to believe that it is facing Newcomb's problem, then by definition of "enough evidence", p(class1) >> p(class3) and p(class2) >> p(class4), implying that AIXI will OneBox.

EDIT: math.

Replies from: lackofcheese↑ comment by lackofcheese · 2014-10-01T13:38:31.011Z · LW(p) · GW(p)

AIXI world programs have no inputs, they just run and produce sequences of triples in the form: (action, percept, reward).

No, that isn't true. See, for example, page 7 of this article. The environments (q) accept inputs from the agent and output the agent's percepts.

As such (as per my discussion with private_messaging), there are only three relevant classes of world programs:

(1) Opaque box contains $1,000,000

(2) Opaque box is empty

(3) Contents of the box are determined by my action input