The AI Belief-Consistency Letter

post by Knight Lee (Max Lee) · 2025-04-23T12:01:42.581Z · LW · GW · 4 commentsContents

Case 1: Case 2: Conclusion None 4 comments

Dear policymakers,

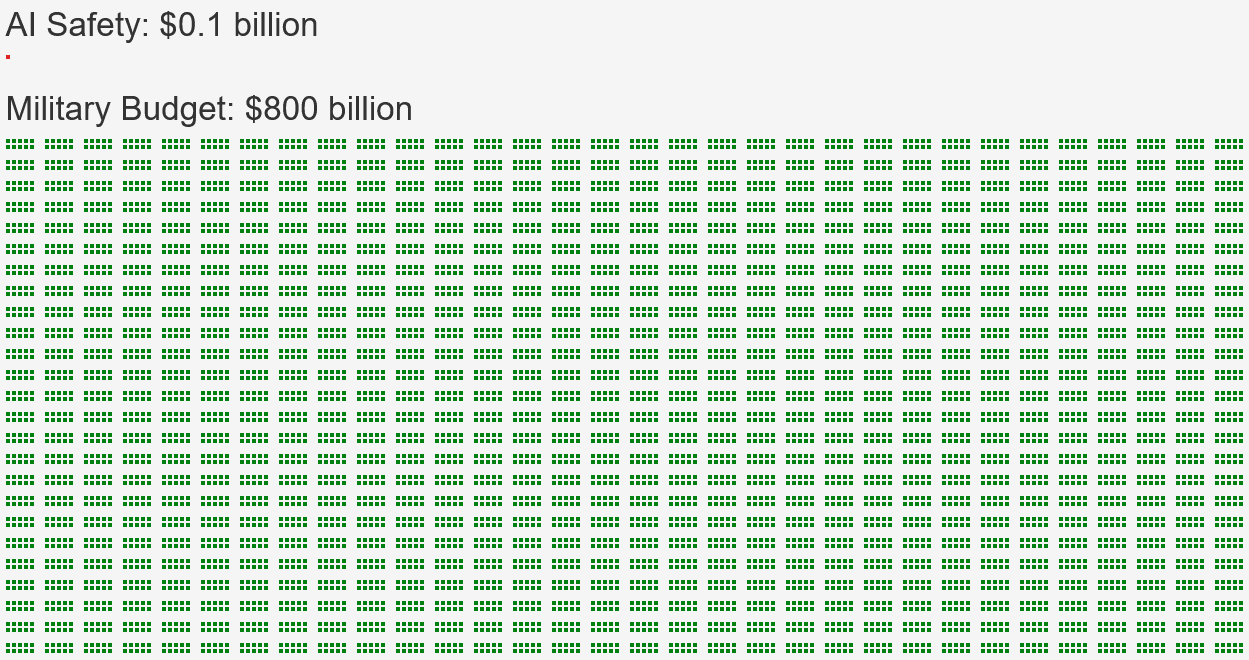

We demand that the AI alignment budget be Belief-Consistent with the military budget.

Belief-Consistency is a simple yet powerful idea:

- If you spend 8000 times less on AI alignment (compared to the military),

- You must also believe that AI risk is 8000 times less (than military risk).[1]

Yet the only way to reach Belief-Consistency, is to

Greatly increase AI alignment spending,

or

- Become 99.95% certain that you are right, and that the majority of AI experts, other experts, superforecasters, and the general public, are all wrong.

Let us explain:

In order to believe that AI risk is 8000 times less than military risk, you must believe that an AI catastrophe (killing 1 in 10 people) is less than 0.001% likely.[2]

This clearly contradicts the median AI expert, who sees a 5%-12% chance of an AI catastrophe (killing 1 in 10 people), the median superforecaster, who sees 2.1%, other experts, who see 5%, and the general public, who sees 5%.

In fact, assigning only 0.001% chance contradicts the median expert so profoundly, that you need to become 99.95% certain that you won't realize you were wrong and the majority of experts were right!

If there was more than a 0.05% possibility that you study the disagreement further, and realize the risk exceeds 2% just like most experts say, then the laws of probability forbid you from believing a 0.001% risk.

We believe that foreign invasion concerns have decreased over the last century, and AGI concerns have increased over the last decade, but budgets remained within the status quo, causing a massive inconsistency between belief and behaviour.

Do not let humanity's story be so heartbreaking.

Version 1.1us

These numbers are for the US, but the same argument applies to NATO and other countries.

Please add your signature by asking in a comment (or emailing me). Thank you so truly much.

Signatories:

- Knight Lee

References

Claude 3.5 drew the infographic for me.

“Military Budget: $800 billion”

- [3] says it's $820 billion in 2024. $800 billion is an approximate number.

“AI Safety: $0.1 billion”:

- The AISI is the most notable US government funded AI safety organization. It does not focus on ASI takeover risk though it may partially focus on other catastrophic AI risks. AISI's budget is $10 million according to [4]. Worldwide AI safety funding is between $0.1 billion and $0.2 billion according to [5].

“the median AI expert, who sees a 5%-12% chance of an AI catastrophe (killing 1 in 10 people), other experts, who see 5%, the median superforecaster, who sees 2.1%, and the general public, who sees 5%.”

- [6] says: Median superforecaster: 2.13%. Median “domain experts” i.e. AI experts: 12%. Median “non-domain experts:” 6.16%. Public Survey: 5%. These are predictions for 2100. Nonetheless, these are predictions before ChatGPT was released, so it's possible they see the same risk sooner than 2100 now.

- [7] says the median AI expert sees a 5% chance of “future AI advances causing human extinction or similarly permanent and severe disempowerment of the human species” and a 10% chance of “human inability to control future advanced AI systems causing human extinction or similarly permanent and severe disempowerment of the human species.”

- ^

This assumes the marginal risk reduction in spending more is similar for military risk and AI risk, i.e. we don't have lopsided situations where spending 10% more on the military reduces military risk by 10%, but spending 10% more on AI risk only reduces AI risk by 0.1%.

This is a very reasonable assumption to make given that we are very uncertain about the nature of AI risk. If there was only a 50% chance that spending 10% more on AI risk reduces AI risk by 10%, then spending 10% more on AI risk reduces AI risk by at least 5%.

Uncertainty about the risk actually increases the expected value of risk reduction, and prevents it from being confidently low.

- ^

This assumes that the military risk for a powerful country (like the US) is less than the equivalent of a 8% chance of catastrophe (killing 1 in 10 people) by 2100.

- ^

USAFacts Team. (August 1, 2024). “How much does the US spend on the military?” USAFacts. https://usafacts.org/articles/how-much-does-the-us-spend-on-the-military/

- ^

Wiggers, Kyle. (October 22, 2024). “The US AI Safety Institute stands on shaky ground.” TechCrunch. https://techcrunch.com/2024/10/22/the-u-s-ai-safety-institute-stands-on-shaky-ground/

- ^

McAleese, Stephen, and NunoSempere. (July 12, 2023). “An Overview of the AI Safety Funding Situation.” LessWrong. https://www.lesswrong.com/posts/WGpFFJo2uFe5ssgEb/an-overview-of-the-ai-safety-funding-situation/?commentId=afv74rMgCbvirFdKp [? · GW]

- ^

Karger, Ezra, Josh Rosenberg, Zachary Jacobs, Molly Hickman, Rose Hadshar, Kayla Gamin, and P. E. Tetlock. (August 8, 2023). “Forecasting Existential Risks Evidence from a Long-Run Forecasting Tournament.” Forecasting Research Institute. p. 259. https://static1.squarespace.com/static/635693acf15a3e2a14a56a4a/t/64f0a7838ccbf43b6b5ee40c/1693493128111/XPT.pdf#page=260

- ^

Stein-Perlman, Zach, Benjamin Weinstein-Raun, and Katja Grace. (August 3, 2022). “2022 Expert Survey on Progress in AI.” AI Impacts. https://aiimpacts.org/2022-expert-survey-on-progress-in-ai/

Why this open letter might succeed even if previous open letters did not

Politicians don't reject pausing AI because they are evil and want a misaligned AI to kill their own families! They reject pausing AI because their P(doom) honestly is low, and they genuinely believe that if the US pauses then China will race ahead, building even more dangerous AI.

But as low as their P(doom) is, it may still be high enough that they have to agree with this argument (maybe it's 1%).

It is very easy to believe that "we cannot let China win," or "if we don't do it someone else will," and reject pausing AI. But it may be more difficult to believe that you are 99.999% sure of no AI catastrophe, and thus 99.95% sure the majority of experts are wrong, and reject this letter.

Also remember that AI capabilities spending is 1000x greater than alignment spending.

This difference makes it far easier for me to make a quantitative argument for increasing alignment funding, than to make a quantitative argument for increasing regulation.

I am not against asking for regulation! I just think we are dropping the ball when it comes to asking for alignment funding.

PS: I feel this letter better than my previous draft [LW · GW], because although it is longer, the gist of it is easier to understand and memorize: "make the AI alignment budget Belief-Consistent with the military budget."

Why I feel almost certain this open letter is a net positive

Delaying AI capabilities alone isn't enough. If you wished for AI capabilities to be delayed by 1000 years, then one way to fulfill your wish is if the Earth had formed 1000 years later, which delays all of history by the same 1000 years.

Clearly, that's not very useful. AI capabilities have to be delayed relative to something else.

That something else is either:

Progress in alignment (according to optimists like me)

or

- Progress towards governments freaking out about AGI and going nuclear [LW · GW] to stop it (according to LessWrong's pessimist community)

Either way, the AI Belief-Consistency Letter speeds up that progress by many times more than it speeds up capabilities. Let me explain.

Case 1:

Case 1 assumes we have a race between alignment and capabilities. From first principles, the relative funding of alignment and capabilities matters in this case.

Increasing alignment funding by 2x ought to have a similar effect to decreasing capability funding by 2x.

Various factors may make the relationship inexact, e.g. one might argue that increasing alignment by 4x might be equivalent to decreasing capabilities by 2x, if one believes that capabilities is more dependent on funding.

But so long as one doesn't assume insane differences, the AI Belief-Consistency Letter is a net positive in Case 1.

This is because alignment funding is only at $0.1 to $0.2 billion [? · GW], while capabilities funding is at $200+ billion to $600+ billion.

If the AI Belief-Consistency Letter increases both by $1 billion, that's a 5x to 10x alignment increase and only a 1.002x to 1.005x capabilities increase. That would clearly be a net positive.

Case 2:

Even if the wildest dreams of the AI pause movement succeed, and the US, China, and EU all agree to halt all capabilities above a certain threshold, the rest of the world still exists, so it only reduces capabilities funding by 10x effectively.

That would be very good, but we'll still have a race between capabilities and alignment, and Case 1 still applies. The AI Belief-Consistency Letter still increases alignment funding by far more than capabilities funding.

The only case where we should not worry about increasing alignment funding, is if capabilities funding is reduced to zero, and there's no longer a race between capabilities and alignment.

The only way to achieve that worldwide, is to "solve diplomacy," which is not going to happen, or to "go nuclear [LW · GW]," like Eliezer Yudkowsky suggests.

If your endgame is to "go nuclear [LW · GW]" and make severe threats to other countries despite the risk, you surely can't oppose the AI Belief-Consistency Letter on the grounds that "it speeds up capabilities because it makes governments freak out about AGI," since you actually need governments to freak out about AGI.

Conclusion

Make sure you don't oppose this idea based on short term heuristics like "the slower capabilities grow, the better," without reflecting on why you believe so. Think about what your endgame is. Is it slowing down capabilities to make time for alignment? Or is it slowing down capabilities to make time for governments to freak out and halt AI worldwide?

4 comments

Comments sorted by top scores.

comment by Richard_Kennaway · 2025-04-23T17:36:04.416Z · LW(p) · GW(p)

If you spend 8000 times less on AI alignment (compared to the military),

You must also believe that AI risk is 8000 times less (than military risk).

Why?

We know how to effectively spend money on the military: get more of what we have and do R&D to make better stuff. The only limit on effective military spending is all the other things that the money is needed for, i.e. having a country worth defending.

It is not clear to me how to buy AI safety. Money is useless without something to spend it on. What would you buy with your suggested level of funding?

comment by tslarm · 2025-04-23T16:38:31.850Z · LW(p) · GW(p)

IMO it's unclear what kind of person would be influenced by this. It requires the reader to a) be amenable to arguments based on quantitative probabilistic reasoning, but also b) overlook or be unbothered by the non sequitur at the beginning of the letter. (It's obviously possible for the appropriate ratio of spending on causes A and B not to match the magnitude of the risks addressed by A and B.)

I also don't understand where the numbers come from in this sentence:

Replies from: Max LeeIn order to believe that AI risk is 8000 times less than military risk, you must believe that an AI catastrophe (killing 1 in 10 people) is less than 0.001% likely.

↑ comment by Knight Lee (Max Lee) · 2025-04-23T17:34:56.953Z · LW(p) · GW(p)

Hi,

By a very high standard, all kinds of reasonable advice are non-sequitur. E.g. a CEO might explain to me "if you hire Alice instead of Bob, you must also believe Alice is better for the company than Bob, you can't just like her more," but I might think "well that's clearly a non-sequitur, just because I hire Alice instead of Bob doesn't imply Alice is better for the company than Bob. Since maybe Bob is a psychopath who would improve the company's fortunes by committing crime and getting away with it, so I hire Alice instead."

X doesn't always imply Y, but in cases where X doesn't imply Y there has to be an explanation.

In order for the reader to agree that AI risk is far higher than 1/8000th the military risk, but still insist that 1/8000th the military budget is still justified, he would need a big explanation, e.g. the marginal benefit of spending 10% more on the military reduces military risk by 10%, but the marginal benefit of spending 10% more on AI risk somehow only reduces AI risk by 0.1%, since AI risk is far more independent of countermeasures.

It's hard to have such drastic differences, because one needs to be very certain that AI risk is unsolvable. If one was uncertain of the nature of AI risk, and there existed plausible models where spending a lot reduces the risk a lot, then these plausible models dominate the expected value of risk reduction.

Thank you for pointing out that sentence, I will add a footnote for it.

If we suppose that military risk for a powerful country (like the US) is lower than the equivalent of a 8% chance of catastrophe (killing 1 in 10 people) by 2100, then 8000 times less would be a 0.001% chance of catastrophe by 2100.

I will also add a footnote for the marginal gains.

Thank you, this is a work in progress, as the version number suggests :)

comment by BarnicleBarn · 2025-04-23T15:25:46.811Z · LW(p) · GW(p)

I agree wholeheartedly with the sentiment. I also agree with the underlying assumptions made in the Compendium[1], that it would really require a Manhattan project level of effort to understand:

- What intelligence actually is and how it works, as we don't yet have a robust working theory of intelligence.

- What alignment actually looks like, and how we can even begin to formulate a thesis of how to keep a superintelligent system aligned as it evolves and recursively self improves. I liken this a bit to the hard problem of consciousness. It's the hard problem of alignment, which I parse into two discreet components:

- We don't understand what drives humans completely. We expect a degree of 'playing nice' from AI systems, but we don't have a robust and provable theory of why humans are social, self-sacrifice, sometimes think of the common good, sometimes don't, are curious, and which factors of our experience (or our biology) are responsible for those drivers. Without that, attempting to simulate them in an AI system seems like a dead end. Surface level alignment is trivial (it's polite and friendly), real alignment could potentially be intractable, as we don't have a working theory of what aligns humans to begin with.

- We require more than just basic 'behave like a human' level of alignment from an AI system. Humans do an incredible amount of harm, both to each other (war, exploitation, famine), and to the natural World (habitat destruction, pollution, etc.) in the pursuit of our goals. We need a model for behavior that transcends that human behavior. Which leads to the question of, how is that goal even to be formulated? How is a set of behaviors, goals and values instilled in an AI system, from a species that does not routinely possess those goals?

- AI systems in their current form, in order to increase in capabilities, exacerbate many of the issues that are caused by humans. They are extremely power intensive. Power that at the moment is only realistically servable by fossil fuels. That power has to be 'stable' and 'dispatchable'. This is not the power profile of most renewables, which are inherently cyclical, and irregular in their generation. While we may not be concerned about inference stopping due to a BESS system running out of charge, a superintelligent system relying on that power for its existence may think differently.

- AI systems also require more rare earth elements, commodities, etc. that are difficult and environmentally challenging to extract. In water scarse times, they require a lot of water for cooling, which is impacting local communities. In order for a superintelligent system to grow rapidly in the short term, it must continue to consume these resources in huge quantities, putting it in direct, and inherent conflict with any alignment goal around the preservation of the natural world, and the ecosystem that humans are reliant upon. Given the pursuit of resources and living space is the fundamental driver of most human conflict - it would appear that we could be setting ourselves up for a resource conflict with AI. How to resolve this, is something that I don't think anyone has a clear answer to, and I don't see being discussed at all often enough. (Perhaps because I work in the data center infrastructure space, I find myself running into this almost daily, so its front of mind for me).

All of which is to say, that I believe these problems are resolvable, but only if, to your point, a significant amount of expenditure, and the greatest minds in this generation are set to the task of resolving them ahead of the deployment of a superintelligent system. We face a Manhattan Project level of risk, but we are not acting as if we are facing that systematically.