LessWrong 2.0 Reader


In China, there was a parallel but more abrupt change from Classical Chinese writing (very terse and literary) to vernacular writing (similar to spoken language and easier to understand). I attribute this to Classical Chinese being better for signaling intelligence, vernacular Chinese being better for practical communication, higher usefulness of and demand for practical communication, and new alternative avenues for intelligence signaling (e.g., math, science). These shifts also seem to be an additional explanation for decreasing sentence lengths in English.

mo-putera on Mo Putera's Shortform

(Not a take, just pulling out infographics and quotes for future reference from the new DeepMind paper outlining their approach to technical AGI safety and security)

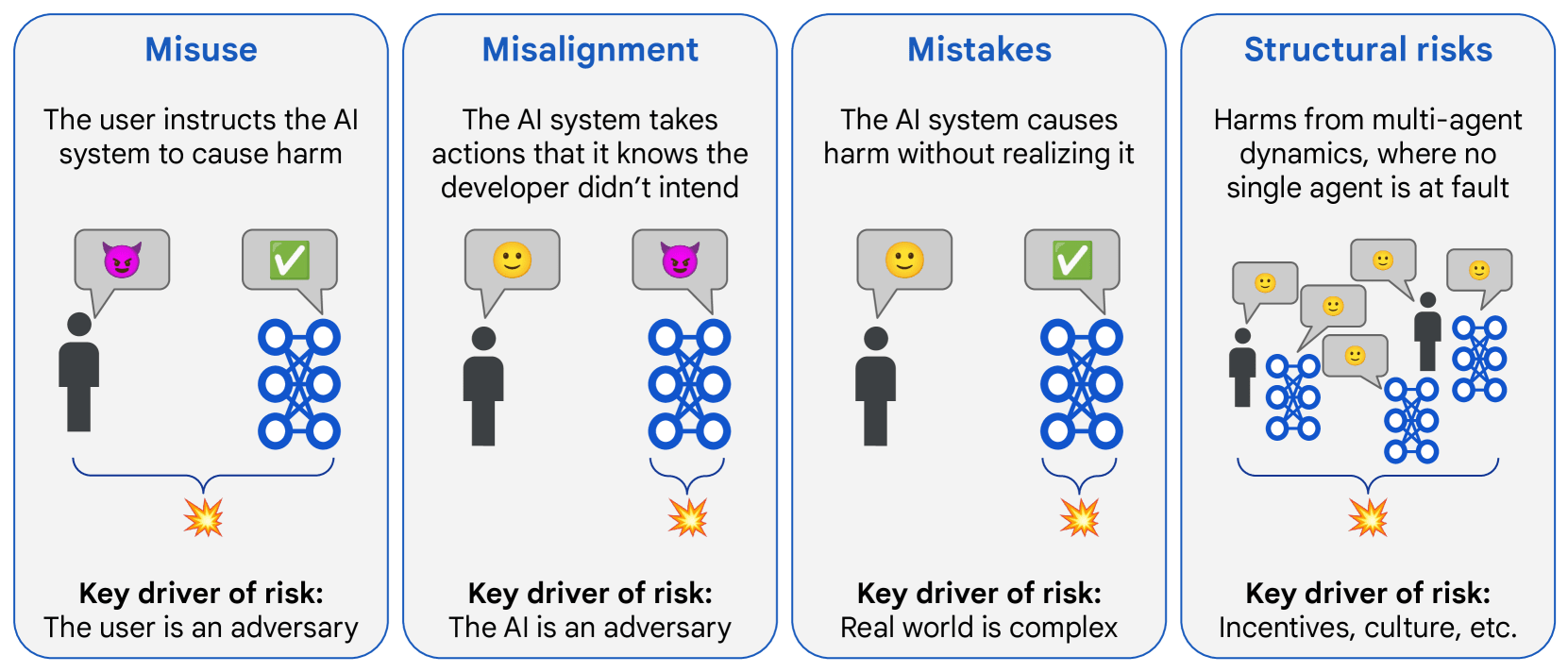

Overview of risk areas, grouped by factors that drive differences in mitigation approaches:

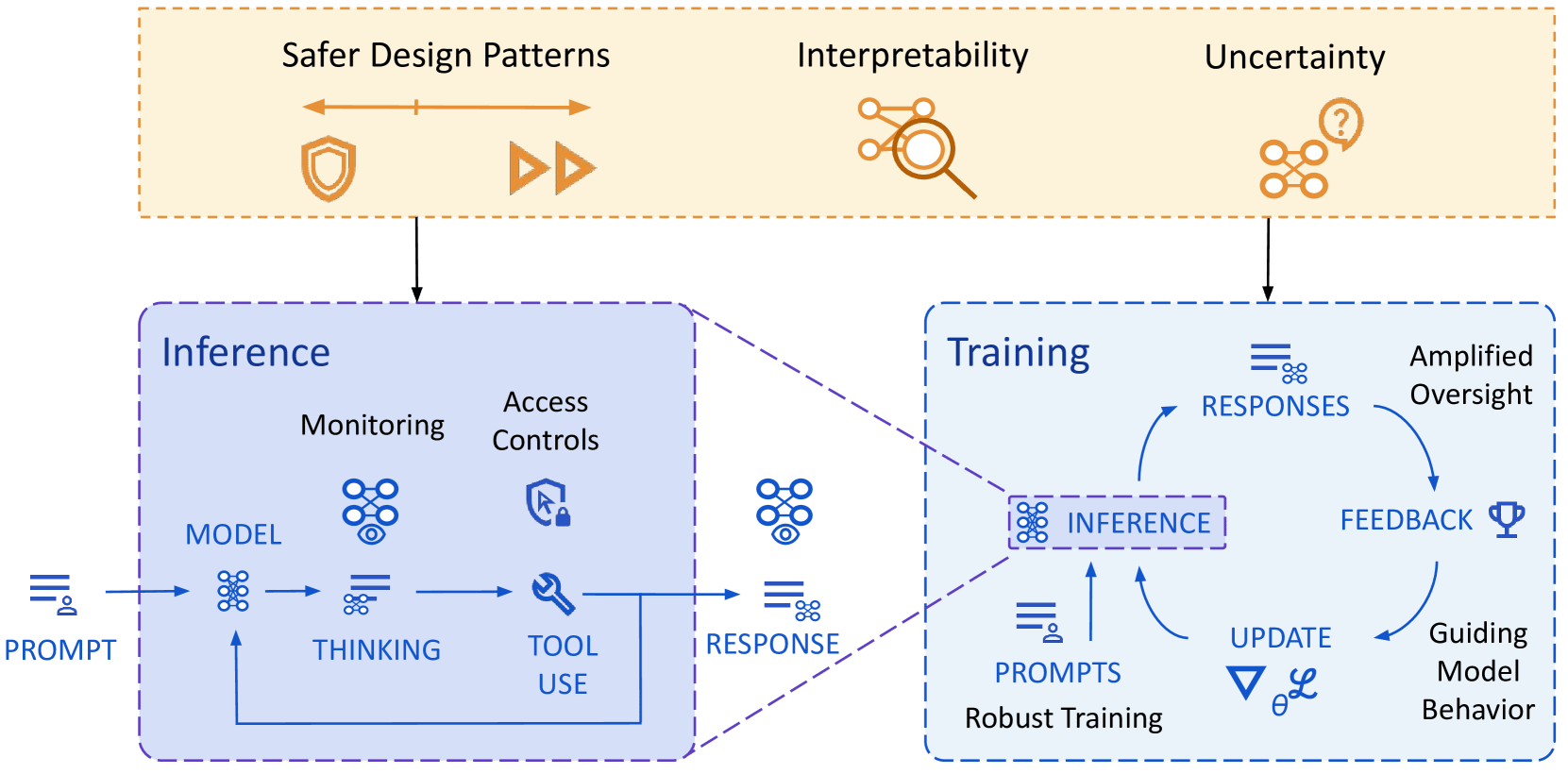

Overview of their approach to mitigating misalignment:

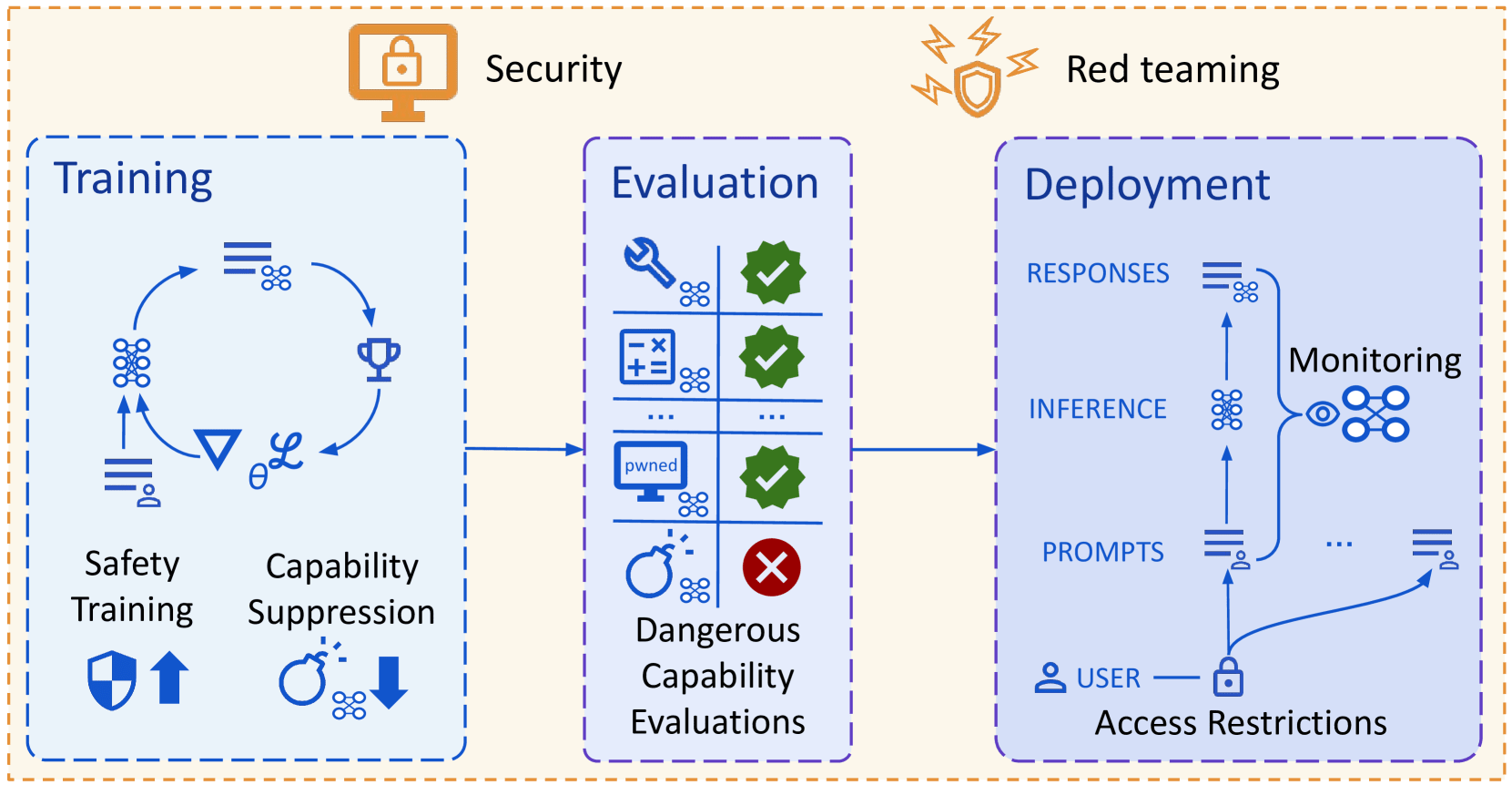

Overview of their approach to mitigating misuse:

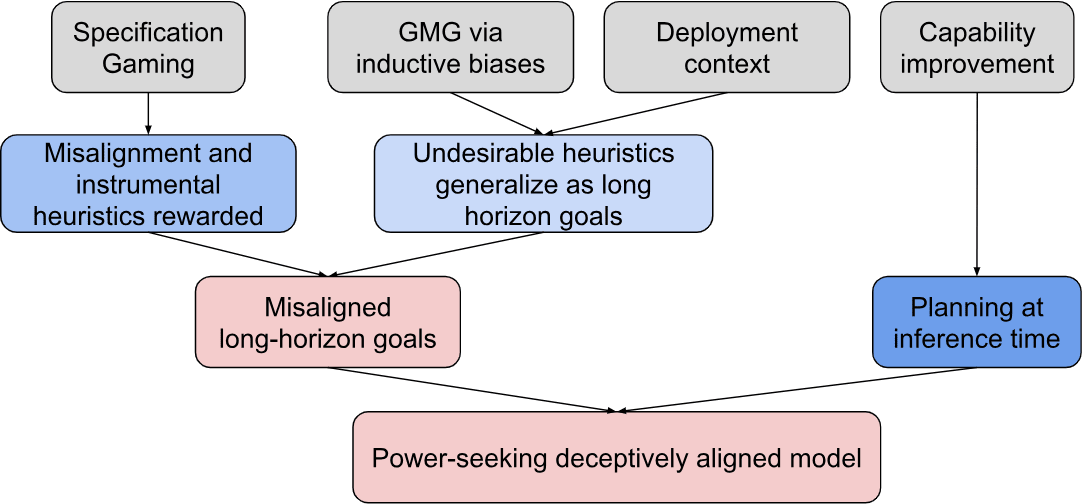

Path to deceptive alignment:

How to use interpretability:

| Goal | Understanding v Control | Confidence | Concept v Algorithm | (Un)supervised? | How context specific? |
| --- | --- | --- | --- | --- | --- |
| Alignment evaluations | Understanding | Any | Concept+ | Either | Either |
| Faithful reasoning | Understanding∗ | Any | Concept+ | Supervised+ | Either |
| Debugging failures | Understanding∗ | Low | Either | Unsupervised+ | Specific |
| Monitoring | Understanding | Any | Concept+ | Supervised+ | General |
| Red teaming | Either | Low | Either | Unsupervised+ | Specific |
| Amplified oversight | Understanding | Complicated | Concept | Either | Specific |

Interpretability techniques:

| Technique | Understanding v Control | Confidence | Concept v Algorithm | (Un)supervised? | How specific? | Scalability |
| --- | --- | --- | --- | --- | --- | --- |
| Probing | Understanding | Low | Concept | Supervised | Specific-ish | Cheap |
| Dictionary learning | Both | Low | Concept | Unsupervised | General∗ | Expensive |
| Steering vectors | Control | Low | Concept | Supervised | Specific-ish | Cheap |
| Training data attribution | Understanding | Low | Concept | Unsupervised | General∗ | Expensive |
| Auto-interp | Understanding | Low | Concept | Unsupervised | General∗ | Cheap |
| Component attribution | Both | Medium | Concept | Complicated | Specific | Cheap |
| Circuit analysis (causal) | Understanding | Medium | Algorithm | Complicated | Specific | Expensive |

Assorted random stuff that caught my attention:

It is more probable that A, than that A and B.

I can see the appeal here -- litanies tend to have a particular style after all -- but I wonder if we can improve it.

I see two problems:

A and A and B; it is between A and B.

Perhaps one way forward would involve a mention of (or reference to) Minimum Description Length (MDL) or Kolmogorov complexity.
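For reference, the conjunction rule behind the litany quoted above can be written out explicitly. This is the standard probability identity, not something taken from the comment itself, and the middle step assumes $P(A) > 0$ so that the conditional probability is defined:

```latex
P(A \land B) \;=\; P(A)\,P(B \mid A) \;\le\; P(A),
\qquad \text{since } 0 \le P(B \mid A) \le 1 .
```

Equality holds only when $P(B \mid A) = 1$, so the inequality is strict whenever B adds any uncertainty beyond A.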

seth-herd on How AI Takeover Might Happen in 2 Years

I think the assumption here that AIs are "learning to train themselves" is important. In this scenario they're producing the bulk of the data.

I also take your point that this is probably correctable with good training data. One premise of the story here seems to be that the org simply didn't try very hard to align the model. Unfortunately, I find this premise all too plausible. Fortunately, this may be a leverage point for shifting the odds. "Bother to align it" is a pretty simple and compelling message.

Even with the data-based alignment you're suggesting, I think it's still not totally clear that weird chains of thought couldn't take it off track.

davidmanheim on Is instrumental convergence a thing for virtue-driven agents?

I understand what an argument is, but I don't understand why you think that converting policies to utility functions needs to assume no systematic errors, or why, if true, that would make it incompatible with varying intelligence.

mitchell_porter on AI #110: Of Course You Know…

Regarding the tariffs, I have taken to saying "It's not the end of the world, and it's not even the end of world trade." In the modern world, every decade sees a few global economic upheavals, and in my opinion that's all this is. It is a strong player within the world trade system (China and the EU being the other strong players), deciding to do things differently. Among other things, it's an attempt to do something about America's trade deficits, and to make the country into a net producer rather than a net consumer. Those are huge changes but now that they are being attempted, I don't see any going back. The old situation was tolerated because it was too hard to do anything about it, and the upper class was still living comfortably. I think a reasonable prediction is that world trade avoiding the US will increase, US national income may not grow as fast, but the US will re-industrialize (and de-financialize). Possibly there's some interaction with the US dollar's status as reserve currency too, but I don't know what that would be.

andrew-sauer on The "Intuitions" Behind "Utilitarianism"

Gwern seems to think this would be used as a way to get rid of corrupt oligarchs, but... Wouldn't this just immediately be co-opted by those oligarchs to solidify their power by legally paying for the assassinations of their opponents? Markets aren't democratic, because a small percentage of the people have most of the money.

cole-wyeth on Existing UDTs test the limits of Bayesianism (and consistency)

If the universe contained a source of ML-random bits they might look like uniformly random coin flips to us, even if they actually had some uncomputable distribution. For instance, perhaps spin measurements are not iid Bernoulli, but since their distribution is not computable, we aren’t able to predict it any better than that model?

I’m not sure how you’re imagining this oracle would act. Nothing like what you’re describing seems to be embedded as a physical object in spacetime, but I think that’s the wrong thing to expect; failures of computability wouldn’t act like Newtonian objects.

Sure, I’ll keep it simple (will submit through proper channels later):

Here’s my attempt to change their minds: https://www.lesswrong.com/posts/vvgND6aLjuDR6QzDF/my-model-of-what-is-going-on-with-llms

I’ll bet 100 USD that by 2027 AI agents have not replaced human AI engineers. If it’s hard to decide I’ll pay 50 USD.

I would buy various forms of merch, including clothing. I feel very fond of LessWrong and would find it cool to wear a shirt or something with that brand.