LessWrong 2.0 Reader

View: New · Old · TopRestrict date range: Today · This week · This month · Last three months · This year · All time

next page (older posts) →

next page (older posts) →

I have previously used special relativity as an example to the opposite. It seems to me that the Michelson-Morley experiment laid the groundwork and all alternatives were more or less rejected by the time special relativity was formulated. This could be hindsight bias though.

If nobel prizes are any indicator, then the photoelectric effect is probably more counterfactually impactful than special relativity.

migueldev on CLR's recent work on multi-agent systemssafe Pareto improvement (SPI)

This URL is broken.

Hmm. Seems... fragile. I don't think that's a reason not to do it, but I also wouldn't put much hope in the idea that leaks would be successfully prevented by this system.

nathan-helm-burger on Please stop publishing ideas/insights/research about AII think you make some valid points. In particular, I agree that some people seem to have fallen into a trap of being unrealistically pessimistic about AI outcomes which mirrors the errors of those AI developers and cheerleaders who are being unrealistically optimistic.

On the other hand, I disagree with this critique (although I can see where you're coming from):

If it's instead a boring engineering problem, this stops being a quest to save the world or an all consuming issue. Incremental alignment work might solve it, so in order to preserve the difficulty of the issue, it will cause extinction for some far-fetched reason. Building precursor models then bootstrapping alignment might solve it, so this "foom" is invented and held on to (for a lot of highly speculative assumptions), because that would stop it from being a boring engineering problem that requires lots of effort and instead something a lone genius will have to solve.

I think that FOOM is a real risk, and I have a lot of evidence grounding my calculations about available algorithmic efficiency improvements based on estimates of the compute of the human brain. The conclusion I draw from believing that FOOM is both possible, and indeed likely, after a certain threshold of AI R&D capability is reached by AI models is that preventing/controlling FOOM is an engineering problem.

I don't think we should expect a model in training to become super-human so fast that it blows past our ability to evaluate it. I do think that in order to have the best chance of catching and controlling a rapid accelerating take-off, we need to do pre-emptive engineering work. We need very comprehensive evals to have detailed measures of key factors like general capability, reasoning, deception, self-preservation, and agency. We need carefully designed high-security training facilities with air-gapped datacenters. We need regulation that prevents irresponsible actors from undertaking unsafe experiments. Indeed, most of the critical work to preventing uncontrolled rogue AGI due to FOOM is well described by 'boring engineering problems' or 'boring regulation and enforcement problems'.

Believing in the dangers of recursive self-improvement doesn't necessarily involve believing that the best solution is a genius theoretical answer to value and intent alignment. I wouldn't rule the chance of that out, but I certainly don't expect that slim possibility. It seems foolish to trust in that the primary hope for humanity. Instead, let's focus on doing the necessary engineering and political work so that we can proceed with reasonable safety measures in place!

beck-stein on Funny Anecdote of Eliezer From His SisterI am being told that Sheva Brachos in this example is the series of celebrations in the week after the wedding. I don't know if that's a correction or just context, but there you go.

metachirality on LessOnline (May 31—June 2, Berkeley, CA)Isn't TLP's email on his website?

chipmonk on Key takeaways from our EA and alignment research surveysHow much higher was the scoring on neuroticism than the general population?

chipmonk on Key takeaways from our EA and alignment research surveysHow many alignment researchers do you think there are total? What % do you think this survey hit that you wanted it to hit?

porby on Does reducing the amount of RL for a given capability level make AI safer?But I disagree that there’s no possible RL system in between those extremes where you can have it both ways.

I don't disagree. For clarity, I would make these claims, and I do not think they are in tension:

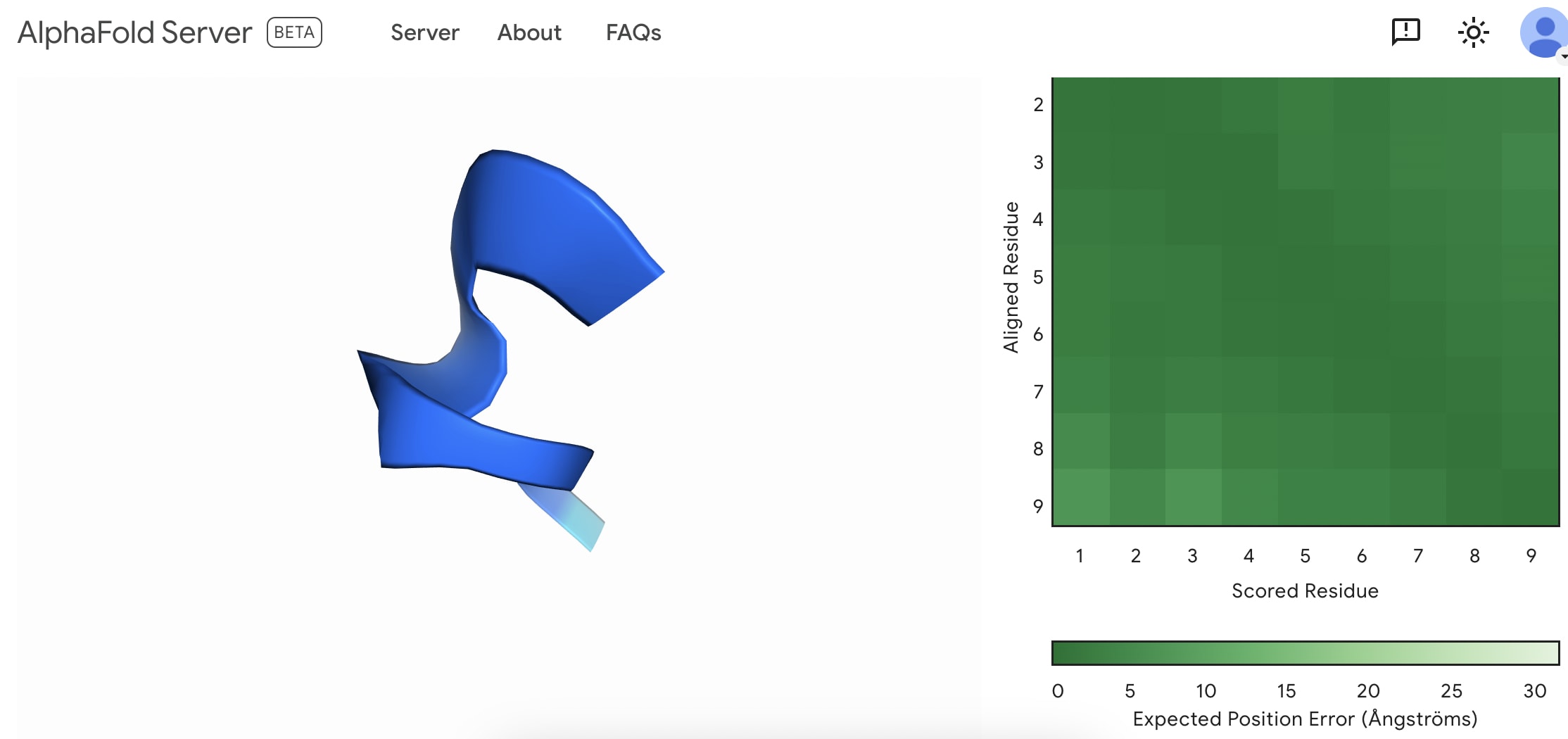

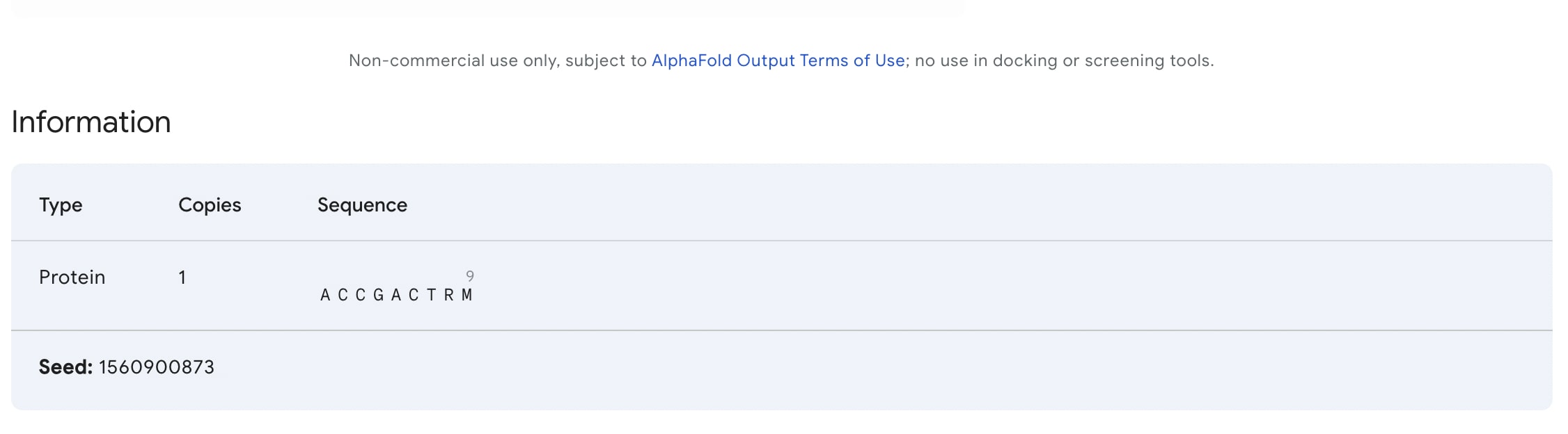

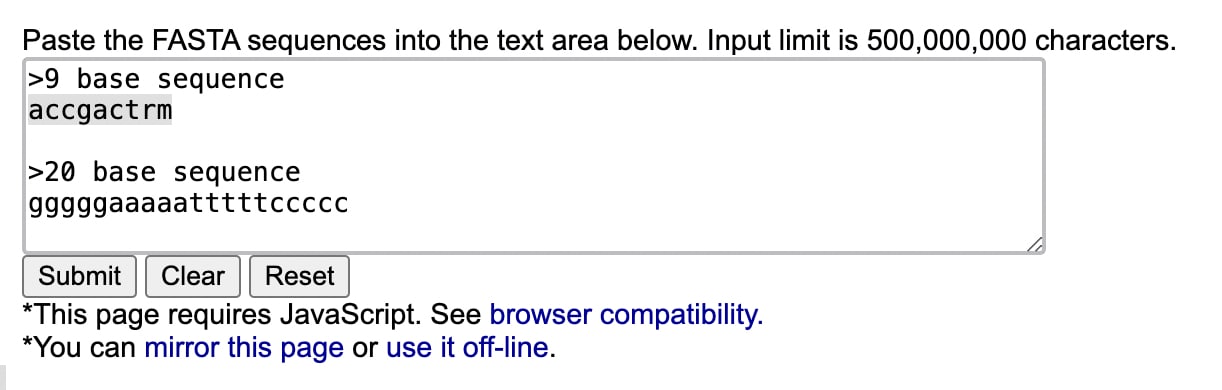

I created my first fold. I'm not sure if this is something to be happy with as everybody can do it now.