The No Free Lunch theorem for dummies

post by Steven Byrnes (steve2152) · 2022-12-05T21:46:25.950Z · LW · GW · 16 commentsContents

What is the No Free Lunch (NFL) theorem? Sidenote: Why NFL has basically nothing to do with AGI None 16 comments

(I wrote this little explainer when drafting Response to Blake Richards: AGI, generality, alignment, & loss functions [LW · GW] a few months ago, but it got cut from the final version. I figure it kinda stands alone, and maybe people will find it to be helpful pedagogy, because I recall being confused about this topic for quite a while when I first came across it a few years ago. Also, there’s some chance that I’m still confused about NFL, and if so, someone can correct me in the comments! Anyway, enjoy!)

What is the No Free Lunch (NFL) theorem?

Suppose there’s a function that maps each of a finite number of inputs to one of a finite number of outputs . You have no idea what this function is; literally any possible function, out of the possibilities, is equally likely, as far as you know.

In fact, I generated the function by secretly rolling an -sided die times. If I got a "9" on my first die-roll, then , and so on.

Now, you and me have a conversation about this unknown-to-you function :

- Me: “Pop quiz: What is ?”

- You: “How should I know? You just told me that any of the possible ’s are equally likely. So is equally likely to be any of the possible outputs .”

- Me: “OK, I'll give you a hint: . Again, what is ?”

- You: “WTF kind of hint is that?? That doesn’t help me at all! I still have no idea. It’s still equally likely to be anything!!”

- Me: “OK, here’s another hint: . Again, what is ?”

- You: “Stop being annoying. That’s not a real hint. You still haven’t given me any useful information. Based on what you’ve told me, there are possible ’s, and they’re all equally likely, and they’re divided evenly amongst the possible values of .”

That's the essence of the No Free Lunch Theorem (or maybe family of theorems?), as far as I understand it. It sounds dumb, and honestly it is kind of dumb. But you can describe it in a way that makes it sound very profound:

There's no such thing as “learning” or “figuring things out” in general; any algorithm that successfully does so on some problems will do catastrophically badly on other problems.”

In other words, if you’ve observed , that information doesn’t help you at all in figuring out what is going to be, when we’re in this situation where is a priori equally likely to be any of the possibilities. If you throw some pattern-learning algorithm at this problem, it might form guesses about what is going to be, but those guesses are going to be garbage.

Here’s an example: there are bit-strings for which Solomonoff Induction [LW · GW] performs at worse-than-chance level forever! After all, there are bit-strings for which it performs at better-than-chance level forever, and if you average over every possible bit-string, its guesses have to be wrong as often as they're right.

Sidenote: Why NFL has basically nothing to do with AGI

See discussion by Eliezer Yudkowsky at Arbital: No-Free-Lunch theorems are often irrelevant and A reply to Francois Chollet on intelligence explosion.

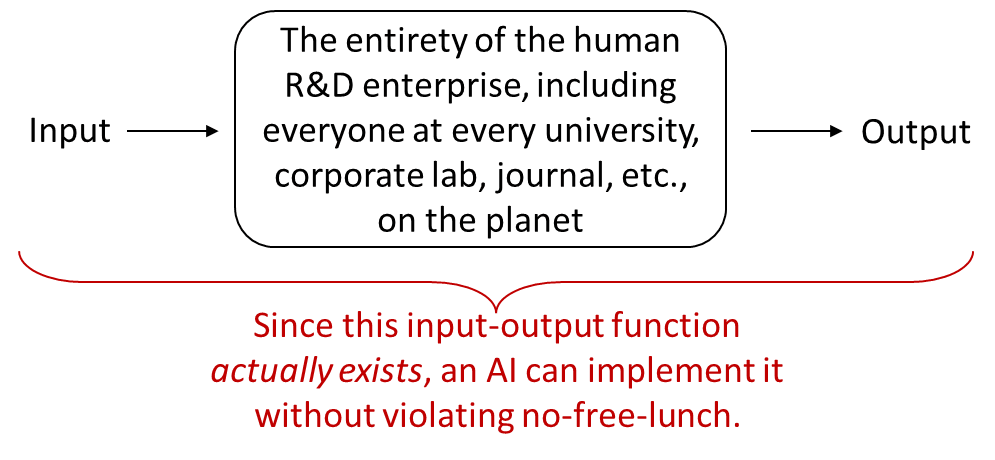

In short, things like “creating and executing effective plans in the real world” and “inventing new technology” and so on are all very different from “characterize an unknown function that was secretly generated by repeatedly flipping a coin / rolling a die”. NFL applies to the latter, not the former. As an example:

…Yet what an AI that would be! Imagine one AI that prints out beautiful original scientific papers literally every few minutes, night and day, encompassing brilliant new ideas in every field of knowledge, among many other endeavors. And if everyone in that global R&D system were able to think 1000× faster, that would still be compatible with NFL. And if any person in that system could also instantly clone themselves at will (including all their adult knowledge), that would still be compatible with NFL! And if you also magically grant everyone in this global R&D system the ability to summon arbitrarily many copies of any other human, dead or alive, to help them with their ongoing research projects (maybe they summon a few Donald Knuths for their algorithms-related challenges, and some Marc Raiberts for their robotics challenges, and an army of John Von Neumanns for whatever, etc.), that would still be compatible with NFL! Nobody would hesitate to call such a AI superintelligent. And there’s no reason to think that even this is anywhere near the ceiling of what is allowed by NFL.

16 comments

Comments sorted by top scores.

comment by Robert Miles (robert-miles) · 2022-12-06T23:50:17.696Z · LW(p) · GW(p)

It's impossible to create a fully general intelligence, i.e. one that acts intelligently in all possible universes. But we only have to make one that works in this universe, so that's not an issue.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2022-12-07T13:57:01.965Z · LW(p) · GW(p)

Well said! There might be an even stronger statement along the lines of “you can create an intelligence which is effective not just in our universe but in any universe governed by any stable local laws of physics / any fixed computable rule whatsoever”, or something like that.

The hypothetical “anti-inductive” universes where Solomonoff Induction performs worse than chance forever are very strange beasts indeed, seems to me. Imagine: Whenever you see a pattern, that makes it less likely that you’ll see the pattern again in the future, no matter what meta-level of abstraction this pattern is at. Cf. Viliam’s comment [LW(p) · GW(p)]. I’m not an expert in this area but I want to go find one and ask them to tell me all about this topic :)

Replies from: sharmake-farah↑ comment by Noosphere89 (sharmake-farah) · 2022-12-07T17:58:17.587Z · LW(p) · GW(p)

Well said! There might be an even stronger statement along the lines of “you can create an intelligence which is effective not just in our universe but in any universe governed by any stable local laws of physics / any fixed computable rule whatsoever”, or something like that.

I'd strengthen that to even uncomputable universes, though that requires infinite computation. The best example of an uncomputable universe is the standard model of particle physics.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2022-12-07T19:22:03.035Z · LW(p) · GW(p)

Why do you say that the standard model of particle physics is uncomputable?

Replies from: sharmake-farah↑ comment by Noosphere89 (sharmake-farah) · 2022-12-13T01:18:13.606Z · LW(p) · GW(p)

I think it was the constants that were uncomputable real numbers.

comment by Lao Mein (derpherpize) · 2022-12-06T01:40:03.029Z · LW(p) · GW(p)

My understanding is the NFL applies to the set of all possible data distributions. Which is perfectly random data. So the conclusion is just inane - "no method predicts random data better than any other :^)".

Physical reality and the data generated by it are very much not random. They have a striking tendency to have a normal distribution, for example. So NFL doesn't apply to data in the real world.

Replies from: shminux↑ comment by Shmi (shminux) · 2022-12-06T02:29:21.051Z · LW(p) · GW(p)

Well, if you are dealing with an adversarial situation against an equal or stronger opponent, the NFL implies that you should plan for the worst case, not a likely or average or median case. Unless I understand it wrong.

Replies from: Morpheus, derpherpize↑ comment by Morpheus · 2022-12-06T08:31:19.771Z · LW(p) · GW(p)

That gives the whole thing more credit than it deserves. The NFL theorem really only works with a flat prior and it that case the NFL theorem shows you that you have already lost (every policy does (in expectation) as well as any other). So this prior should actually have 0 influence on your policy. It's self-defeating if you are the least bit unsure about it, similar to nihilism as a moral code.

Replies from: Gurkenglas, shminux↑ comment by Gurkenglas · 2022-12-06T19:08:47.277Z · LW(p) · GW(p)

It works with any prior! "If you assign more than the prior to anything, you must assign less than it to something."

Replies from: Morpheus, Morpheus↑ comment by Morpheus · 2022-12-06T21:47:00.817Z · LW(p) · GW(p)

Yes, but in worlds where not every sequence {0,1} * is equally likely (eg, your possible worlds have ANY structure) there will be predictors that outperform random predictors (like AIXI for example). (this is not literally true up to maximum pedantry (eg. there are infinitely measures on all languages where AIXI/solomonoff induction never works, but for all of those see my other comment))

↑ comment by Morpheus · 2022-12-06T21:17:57.260Z · LW(p) · GW(p)

Well... I don't know about you, but even if I believed that the most likely explanation for my observations was that I am a boltzmann brain, my current beliefs will lead me to effectively act as if I have 0 crecedence in that belief (since these worlds have no implications for my policy). As long as I put 0 value on this frame, I can actually discard it even if I have knightian uncertainty about which is the right prior to use (Logical uncertainty makes this more complicated than it needs to be and I think the basic point still stands. I am basically appealing to pragmatism [LW · GW]).

This might not apply to every theorem that has ever been called NFL theorem. I think that what I wrote is true for the stuff that Wolpert shows in this paper.

↑ comment by Shmi (shminux) · 2022-12-06T09:57:36.854Z · LW(p) · GW(p)

Thanks!

↑ comment by Lao Mein (derpherpize) · 2022-12-06T05:19:38.135Z · LW(p) · GW(p)

So it's about how adversarial inputs can produce maximally wrong answers? Wouldn't the best policy in that case just be to ignore adversarial inputs and rely entirely on your priors?

comment by tailcalled · 2022-12-05T22:01:57.174Z · LW(p) · GW(p)

Relevant: Instrumental convergence is what makes general intelligence possible [LW · GW].

comment by Viliam · 2022-12-06T23:36:18.083Z · LW(p) · GW(p)

there are bit-strings for which Solomonoff Induction [LW · GW] performs at worse-than-chance level forever!

This reminds me... at high school I tried to figure out the most unnatural sequence of bits. Defined as a sequence of ones and zeroes such that if I show you any prefix, and you try to figure out the next digit, you will be wrong.

I figured out that the first digit would be 1, because the simplest possible sequence is "00000000...". The next digit would be zero, because the simplest sequence starting with 1 is "11111111...". The third digit would also be zero, because the simplest sequence starting with 10 is "10101010...".

After that I wasn't sure anymore, because it seemed to me that if I continue using the same kind of reasoning, at some moment "this kind of reasoning" will itself become the simplest explanation for the generated data, and therefore I should stop doing it at some moment - but when exactly? Probably at the point where "he is breaking all patterns on purpose" becomes a more likely explanation that any specific pattern.

...sorry if this does not make sense at all.

Replies from: zac-hatfield-dodds↑ comment by Zac Hatfield-Dodds (zac-hatfield-dodds) · 2022-12-08T15:26:31.392Z · LW(p) · GW(p)

It does make sense, and there's a way to do it!

- We're going to use Solomonoff induction, or (if you want it to be computable) an approximation like AIXI-tl, so we'll need a prior over all Turing machines. Let's go with the speed prior for now.

- At each bit, choose the bit which has lower probability according to this predictor.

This sequence is entirely deterministic, but can't be predicted without self-reference.