Christiano and Yudkowsky on AI predictions and human intelligence

post by Eliezer Yudkowsky (Eliezer_Yudkowsky) · 2022-02-23T21:34:55.245Z · LW · GW · 35 comments


This is a transcript of a conversation between Paul Christiano and Eliezer Yudkowsky, with comments by Rohin Shah, Beth Barnes, Richard Ngo, and Holden Karnofsky, continuing the Late 2021 MIRI Conversations.

Color key:

| Chat by Paul and Eliezer | Other chat |

15. October 19 comment

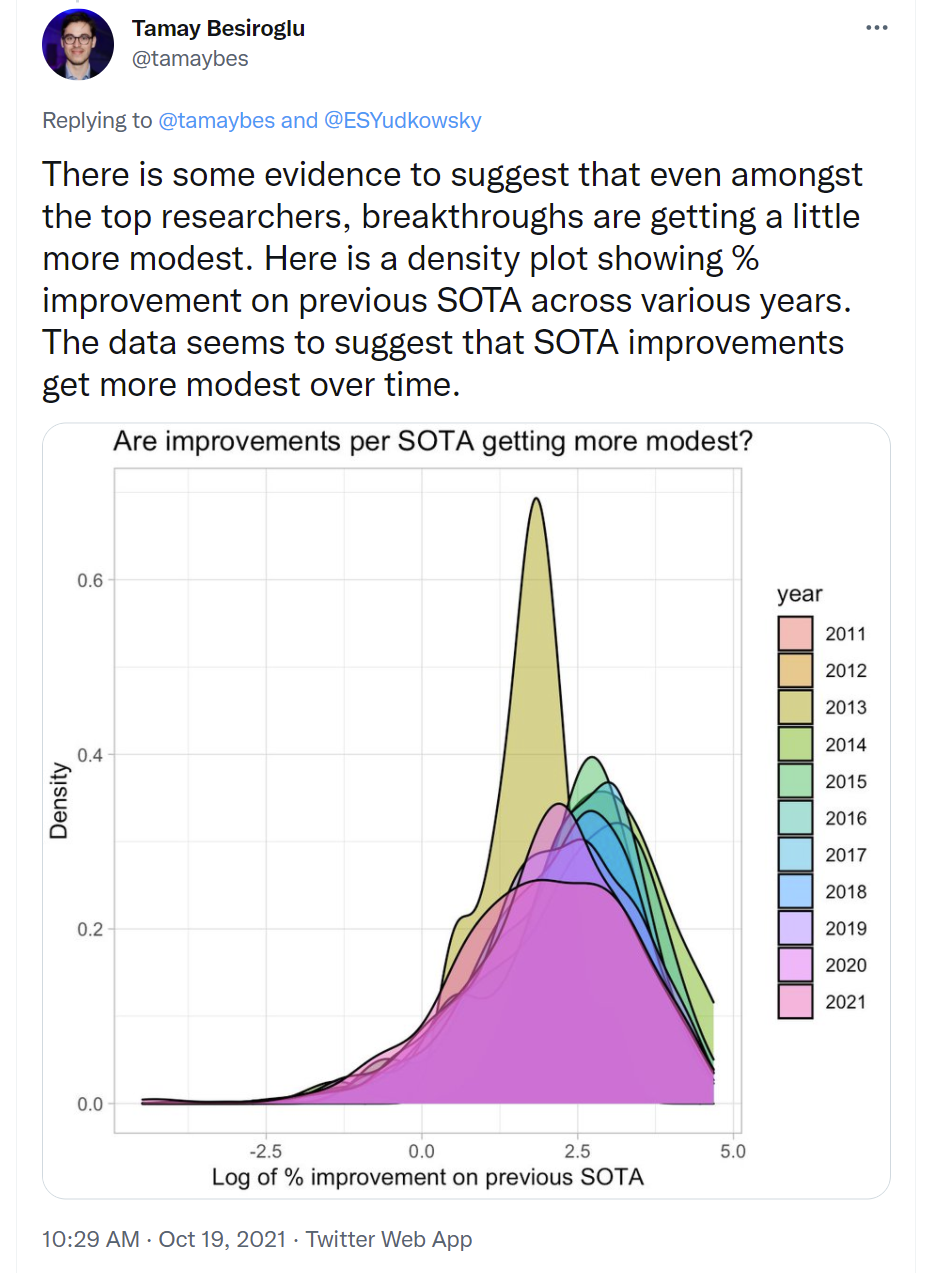

[Yudkowsky][11:01] thing that struck me as an iota of evidence for Paul over Eliezer: https://twitter.com/tamaybes/status/1450514423823560706?s=20

16. November 3 conversation

16.1. EfficientZero

[Yudkowsky][9:30] Thing that (if true) strikes me as... straight-up falsifying Paul's view as applied to modern-day AI, at the frontier of the most AGI-ish part of it and where DeepMind put in substantial effort on their project? EfficientZero (allegedly) learns Atari in 100,000 frames. Caveat: I'm not having an easy time figuring out how many frames MuZero would've required to achieve the same performance level. MuZero was trained on 200,000,000 frames but reached what looks like an allegedly higher high; the EfficientZero paper compares their performance to MuZero on 100,000 frames, and claims theirs is much better than MuZero given only that many frames.

https://arxiv.org/pdf/2111.00210.pdf CC: @paulfchristiano.

(I would further argue that this case is important because it's about the central contemporary model for approaching AGI, at least according to Eliezer, rather than any number of random peripheral AI tasks.)

[Shah][14:46] I only looked at the front page, so might be misunderstanding, but the front figure says "Our proposed method EfficientZero is 170% and 180% better than the previous SoTA performance in mean and median human normalized score [...] on the Atari 100k benchmark", which does not seem like a huge leap?

Oh, I incorrectly thought that was 1.7x and 1.8x, but it is actually 2.7x and 2.8x, which is a bigger deal (though still feels not crazy to me)
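The correction in this message comes down to reading "X% better" as a gain on top of the baseline rather than as a multiple of it. A minimal sketch of that conversion follows; the function name is a hypothetical helper, and the only inputs are the 170% and 180% figures quoted from the paper's front page.

```python
def percent_better_to_multiplier(percent_better: float) -> float:
    """Convert "X% better than baseline" into a multiple of the baseline score."""
    # "170% better" means baseline + 1.7 * baseline, i.e. 2.7x, not 1.7x.
    return 1.0 + percent_better / 100.0


mean_multiplier = percent_better_to_multiplier(170)
median_multiplier = percent_better_to_multiplier(180)
print(f"mean: {mean_multiplier:.1f}x, median: {median_multiplier:.1f}x")
```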

[Yudkowsky][15:28] the question imo is how many frames the previous SoTA would require to catch up to EfficientZero

(I've tried emailing an author to ask about this, no response yet)

like, perplexity on GPT-3 vs GPT-2 and "losses decreased by blah%" would give you a pretty meaningless concept of how far ahead GPT-3 was from GPT-2, and I think the "2.8x performance" figure in terms of scoring is equally meaningless as a metric of how much EfficientZero improves if any

what you want is a notion like "previous SoTA would have required 10x the samples" or "previous SoTA would have required 5x the computation" to achieve that performance level
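One way to make the "previous SoTA would have required Nx the samples" notion concrete is to read it off two learning curves. The sketch below is illustrative only: the helper names are hypothetical, the curves are made-up numbers rather than results from any paper, and linear interpolation between checkpoints is an assumption.

```python
from typing import Optional, Sequence, Tuple

# A learning curve: (frames_seen, score) checkpoints, sorted by frames_seen.
Curve = Sequence[Tuple[float, float]]


def frames_to_reach(curve: Curve, target: float) -> Optional[float]:
    """Frames needed to first reach `target`, or None if the curve never gets there."""
    prev_frames, prev_score = curve[0]
    if prev_score >= target:
        return prev_frames
    for frames, score in curve[1:]:
        if score >= target:
            # Linearly interpolate between the two surrounding checkpoints.
            fraction = (target - prev_score) / (score - prev_score)
            return prev_frames + fraction * (frames - prev_frames)
        prev_frames, prev_score = frames, score
    return None  # the curve plateaus below the target


def sample_efficiency_ratio(old: Curve, new: Curve, target: float) -> Optional[float]:
    """How many times more frames `old` needs than `new` to reach `target`."""
    old_frames = frames_to_reach(old, target)
    new_frames = frames_to_reach(new, target)
    if old_frames is None or new_frames is None:
        return None
    return old_frames / new_frames


# Hypothetical curves, chosen only to exercise the functions.
old_method = [(0, 0.0), (100_000, 0.5), (400_000, 1.0)]
new_method = [(0, 0.0), (100_000, 1.0)]
print(sample_efficiency_ratio(old_method, new_method, target=1.0))
```

Returning `None` when a curve never reaches the target keeps the ratio from silently reporting a number in cases where the older method simply stalls.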

[Shah][15:38] I see. Atari curves are not nearly as nice and stable as GPT curves and often have the problem that they plateau rather than making steady progress with more training time, so that will make these metrics noisier, but it does seem like a reasonable metric to track

(Not that I have recommendations about how to track it; I doubt the authors can easily get these metrics)

[Christiano][18:01] If you think our views are making such starkly different predictions then I'd be happy to actually state any of them in advance, including e.g. about future ML benchmark results.

I don't think this falsifies my view, and we could continue trying to hash out what my view is but it seems like slow going and I'm inclined to give up.

Relevant questions on my view are things like: is MuZero optimized at all for performance in the tiny-sample regime? (I think not, I don't even think it set SoTA on that task and I haven't seen any evidence.) What's the actual rate of improvements since people started studying this benchmark ~2 years ago, and how much work has gone into it?

And I totally agree with your comments that "# of frames" is the natural unit for measuring and that would be the starting point for any discussion.

[Barnes][18:22]

This seems like a super obvious thing to do and I'm confused why DM didn't already try this. It was definitely being talked about in ~2018

Will ask a DM friend about it

[Yudkowsky][22:45] I... don't think I want to take all of the blame for misunderstanding Paul's views; I think I also want to complain at least a little that Paul spends an insufficient quantity of time pointing at extremely concrete specific possibilities, especially real ones, and saying how they do or don't fit into the scheme.

Am I rephrasing correctly that, in this case, if EfficientZero was actually a huge (3x? 5x? 10x?) jump in RL sample efficiency over previous SOTA, measured in 1 / frames required to train to a performance level, then that means the Paul view doesn't apply to the present world; but this could be because MuZero wasn't the real previous SOTA, or maybe because nobody really worked on pushing out this benchmark for 2 years and therefore on the Paul view it's fine for there to still be huge jumps? In other words, this is something Paul's worldview has to either defy or excuse, and not just, "well, sure, why wouldn't it do that, you have misunderstood which kinds of AI-related events Paul is even trying to talk about"?

In the case where, "yes it's a big jump and that shouldn't happen later, but it could happen now because it turned out nobody worked hard on pushing past MuZero over the last 2 years", I wish to register that my view permits it to be the case that, when the world begins to end, the frontier that enters into AGI is similarly something that not a lot of people spent a huge effort on since a previous prototype from 2 years earlier. It's just not very surprising to me if the future looks a lot like the past, or if human civilization neglects to invest a ton of effort in a research frontier.

Gwern guesses that getting to EfficientZero's performance level would require around 4x the samples for MuZero-Reanalyze (the more efficient version of MuZero which replayed past frames), which is also apparently the only version of MuZero the paper's authors were considering in the first place - without replays, MuZero requires 20 billion frames to achieve its performance, not the figure of 200 million. https://www.lesswrong.com/posts/jYNT3Qihn2aAYaaPb/efficientzero-human-ale-sample-efficiency-w-muzero-self?commentId=JEHPQa7i8Qjcg7TW6

17. November 4 conversation

17.1. EfficientZero (continued)

[Christiano][7:42] I think it's possible the biggest misunderstanding is that you somehow think of my view as a "scheme" and your view as a normal view where probability distributions over things happen. Concretely, this is a paper that adds a few techniques to improve over MuZero in a domain that (it appears) wasn't a significant focus of MuZero. I don't know how much it improves but I can believe gwern's estimates of 4x. I'd guess MuZero itself is a 2x improvement over the baseline from a year ago, which was maybe a 4x improvement over the algorithm from a year before that. If that's right, then no it's not mindblowing on my view to have 4x progress one year, 2x progress the next, and 4x progress the next. If other algorithms were better than MuZero, then the 2019-2020 progress would be >2x and the 2020-2021 progress would be <4x. I think it's probably >4x sample efficiency though (I don't totally buy gwern's estimate there), which makes it at least possibly surprising. But it's never going to be that surprising. It's a benchmark that people have been working on for a few years that has been seeing relatively rapid improvement over that whole period. 
The main innovation is how quickly you can learn to predict future frames of Atari games, which has tiny economic relevance and calling it the most AGI-ish direction seems like it's a very Eliezer-ish view, this isn't the kind of domain where I'm either most surprised to see rapid progress at all nor is the kind of thing that seems like a key update re: transformative AI

yeah, SoTA in late 2020 was SPR, published by a much smaller academic group: https://arxiv.org/pdf/2007.05929.pdf

MuZero wasn't even setting sota on this task at the time it was published

my "schemes" are that (i) if a bunch of people are trying on a domain and making steady slow progress, I'm surprised to see giant jumps and I don't expect most absolute progress to occur in such jumps, (ii) if a domain is worth a lot of $, generally a bunch of people will be trying. Those aren't claims about what is always true, they are claims about what is typically true and hence what I'm guessing will be true for transformative AI.

Maybe you think those things aren't even good general predictions, and that I don't have long enough tails in my distributions or whatever. But in that case it seems we can settle it quickly by prediction.

I think this result is probably significant (>30% absolute improvement) + faster-than-trend (>50% faster than previous increment) progress relative to prior trend on 8 of the 27 atari games (from table 1, treating SimPL->{max of MuZero, SPR}->EfficientZero as 3 equally spaced datapoints): Asterix, Breakout, almost ChopperCMD, almost CrazyClimber, Gopher, Kung Fu Master, Pong, QBert, SeaQuest.

My guess is that they thought a lot about a few of those games in particular because they are very influential on the mean/median.

Note that this paper is a giant grab bag and that simply stapling together the prior methods would have already been a significant improvement over prior SoTA.
(ETA: I don't think saying "its only 8 of 27 games" is an update against it being big progress or anything. I do think saying "stapling together 2 previous methods without any complementarity at all would already have significantly beaten SoTA" is fairly good evidence that it's not a hard-to-beat SoTA.)

and even fewer people working on the ultra-low-sample extremely-low-dimensional DM control environments (this is the subset of problems where the state space is 4 dimensions, people are just not trying to publish great results on cartpole), so I think the most surprising contribution is the atari stuff

OK, I now also understand what the result is I think?

I think the quick summary is: the prior SoTA is SPR, which learns to predict the domain and then does Q-learning. MuZero instead learns to predict the domain and does MCTS, but it predicts the domain in a slightly less sophisticated way than SPR (basically just predicts rewards, whereas SPR predicts all of the agent's latent state in order to get more signal from each frame). If you combine MCTS with more sophisticated prediction, you do better.

I think if you told me that DeepMind put in significant effort in 2020 (say, at least as much post-MuZero effort as the new paper?) trying to get great sample efficiency on the easy-exploration atari games, and failed to make significant progress, then I'm surprised.

I don't think that would "falsify" my view, but it would be an update against? Like maybe if DM put in that much effort I'd maybe have given only a 10-20% probability to a new project of similar size putting in that much effort making big progress, and even conditioned on big progress this is still >>median (ETA: and if DeepMind put in much more effort I'd be more surprised than 10-20% by big progress from the new project)

Without DM putting in much effort, it's significantly less surprising and I'll instead be comparing to the other academic efforts.
But it's just not surprising that you can beat them if you are willing to put in the effort to reimplement MCTS and they aren't, and that's a step that is straightforwardly going to improve performance. (not sure if that's the situation)

And then to see how significant updates against are, you have to actually contrast them with all the updates in the other direction where people don't crush previous benchmark results and instead just make modest progress

I would guess that if you had talked to an academic about this question (what happens if you combine SPR+MCTS) they would have predicted significant wins in sample efficiency (at the expense of compute efficiency) and cited the difficulty of implementing MuZero compared to any of the academic results. That's another way I could be somewhat surprised (or if there were academics with MuZero-quality MCTS implementations working on this problem, and they somehow didn't set SoTA, then I'm even more surprised). But I'm not sure if you'll trust any of those judgments in hindsight.

Repeating the main point:

I don't really think a 4x jump over 1 year is something I have to "defy or excuse", it's something that I think becomes more or less likely depending on facts about the world, like (i) how fast was previous progress, (ii) how many people were working on previous projects and how targeted were they at this metric, (iii) how many people are working in this project and how targeted was it at this metric

it becomes continuously less likely as those parameters move in the obvious directions

it never becomes 0 probability, and you just can't win that much by citing isolated events that I'd give say a 10% probability to, unless you actually say something about how you are giving >10% probabilities to those events without losing a bunch of probability mass on what I see as the 90% of boring stuff

and then separately I have a view about lots of people working on important problems, which doesn't say anything about this case

(I actually don't think this event is as low as 10%, though it depends on what background facts about the project you are conditioning on---obviously I gave <<10% probability to someone publishing this particular result, but something like "what fraction of progress in this field would come down to jumps like this" or whatever is probably >10% until you tell me that DeepMind actually cared enough to have already tried)
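The per-game test Christiano describes in this message (more than 30% absolute improvement, and more than 50% faster than the previous increment, over three equally spaced datapoints) can be sketched as a small check. This is one reading of his wording, not his actual procedure: the function name is hypothetical, the scores are assumed to be human-normalized fractions, and the example triples are invented rather than taken from the paper's Table 1.

```python
def significant_and_faster_than_trend(
    oldest: float,
    previous: float,
    latest: float,
    min_absolute_gain: float = 0.30,  # ">30% absolute improvement"
    trend_speedup: float = 1.5,       # ">50% faster than previous increment"
) -> bool:
    """Check one game's three equally spaced scores against both thresholds."""
    previous_increment = previous - oldest
    latest_increment = latest - previous
    is_significant = latest_increment > min_absolute_gain
    is_faster_than_trend = latest_increment > trend_speedup * previous_increment
    return is_significant and is_faster_than_trend


# Hypothetical human-normalized scores (oldest, previous SoTA, latest result).
examples = {
    "big jump after slow progress": (0.1, 0.3, 1.0),
    "big gain but on trend": (0.2, 0.6, 1.0),
    "small gain": (0.5, 0.5, 0.7),
}
for label, scores in examples.items():
    print(label, significant_and_faster_than_trend(*scores))
```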

[Ngo][8:48] I expect Eliezer to say something like: DeepMind believes that both improving RL sample efficiency, and benchmarking progress on games like Atari, are important parts of the path towards AGI. So insofar as your model predicts that smooth progress will be caused by people working directly towards AGI, DeepMind not putting effort into this is a hit to that model.

Thoughts?

[Christiano][9:06] I don't think that learning these Atari games in 2 hours is a very interesting benchmark even for deep RL sample efficiency, and it's totally unrelated to the way in which humans learn such games quickly. It seems

[Ngo][9:18] If Atari is not a very interesting benchmark, then why did DeepMind put a bunch of effort into making Agent57 and applying MuZero to Atari?

Also, most of the effort they've spent on games in general has been on methods very unlike the way humans learn those games, so that doesn't seem like a likely reason for them to overlook these methods for increasing sample efficiency.

[Shah][9:32]

Not sure of the exact claim, but DeepMind is big enough and diverse enough that I'm pretty confident at least some people working on relevant problems don't feel the same way

Speculating without my DM hat on: maybe it kills performance in board games, and they want one algorithm for all settings?

[Christiano][10:29] Atari games in the tiny sample regime are a different beast

there are just a lot of problems you can state about Atari some of which are more or less interesting (e.g. jointly learning to play 57 Atari games is a more interesting problem than learning how to play one of them absurdly quickly, and there are like 10 other problems about Atari that are more interesting than this one)

That said, Agent57 also doesn't seem interesting except that it's an old task people kind of care about. I don't know about the take within DeepMind but outside I don't think anyone would care about it other than historical significance of the benchmark / obviously-not-cherrypickedness of the problem.

I'm sure that some people at DeepMind care about getting the super low sample complexity regime. I don't think that really tells you how large the DeepMind effort is compared to some random academics who care about it.

I think the argument for working on deep RL is fine and can be based on an analogy with humans while you aren't good at the task. Then once you are aiming for crazy superhuman performance on Atari games you naturally start asking "what are we doing here and why are we still working on atari games?"

and correspondingly they are a smaller and smaller slice of DeepMind's work over time

(e.g. Agent57 and MuZero are the only DeepMind blog posts about Atari in the last 4 years, it's not the main focus of MuZero and I don't think Agent57 is a very big DM project)

Reaching this level of performance in Atari games is largely about learning perception, and doing that from 100k frames of an Atari game just doesn't seem very analogous to anything humans do or that is economically relevant from any perspective. I totally agree some people are into it, but I'm totally not surprised if it's not going to be a big DeepMind project.

[Yudkowsky][10:51] would you agree it's a load-bearing assumption of your worldview - where I also freely admit to having a worldview/scheme, this is not meant to be a prejudicial term at all - that the line of research which leads into world-shaking AGI must be in the mainstream and not in a weird corner where a few months earlier there were more profitable other ways of doing all the things that weird corner did?

eg, the tech line leading into world-shaking AGI must be at the profitable forefront of non-world-shaking tasks.

as otherwise, afaict, your worldview permits that if counterfactually we were in the Paul-forbidden case where the immediate precursor to AGI was something like EfficientZero (whose motivation had been beating an old SOTA metric rather than, say, market-beating self-driving cars), there might be huge capability leaps there just as EfficientZero represents a large leap, because there wouldn't have been tons of investment in that line.

[Christiano][10:54] Something like that is definitely a load-bearing assumption

Like there's a spectrum with e.g. EfficientZero --> 2016 language modeling --> 2014 computer vision --> 2021 language modeling --> 2021 computer vision, and I think everything anywhere close to transformative AI will be way way off the right end of that spectrum

But I think quantitatively the things you are saying don't seem quite right to me. Suppose that MuZero wasn't the best way to do anything economically relevant, but it was within a factor of 4 on sample efficiency for doing tasks that people care about. That's already going to be enough to make tons of people extremely excited.

So yes, I'm saying that anything leading to transformative AI is "in the mainstream" in the sense that it has more work on it than 2021 language models. But not necessarily that it's the most profitable way to do anything that people care about.

Different methods scale in different ways, and something can burst onto the scene in a dramatic way, but I strongly expect speculative investment driven by that possibility to already be way (way) more than 2021 language models. And I don't expect gigantic surprises. And I'm willing to bet that e.g. EfficientZero isn't a big surprise for researchers who are paying attention to the area (in addition to being 3+ orders of magnitude more neglected than anything close to transformative AI)

2021 language modeling isn't even very competitive, it's still like 3-4 orders of magnitude smaller than semiconductors. But I'm giving it as a reference point since it's obviously much, much more competitive than sample-efficient atari.

This is a place where I'm making much more confident predictions, this is "falsify paul's worldview" territory once you get to quantitative claims anywhere close to TAI and "even a single example seriously challenges paul's worldview" a few orders of magnitude short of that

[Yudkowsky][11:04] can you say more about what falsifies your worldview previous to TAI being super-obviously-to-all-EAs imminent?

or rather, "seriously challenges", sorry

[Christiano][11:05][11:08] big AI applications achieved by clever insights in domains that aren't crowded, we should be quantitative about how crowded and how big if we want to get into "seriously challenges"

like e.g. if this paper on atari was actually a crucial ingredient for making deep RL for robotics work, I'd be actually for real surprised rather than 10% surprised

but it's not going to be, those results are being worked on by much larger teams of more competent researchers at labs with $100M+ funding

it's definitely possible for them to get crushed by something out of left field

but I'm betting against every time

or like, the set of things people would describe as "out of left field," and the quantitative degree of neglectedness, becomes more and more mild as the stakes go up

[Yudkowsky][11:08] how surprised are you if in 2022 one company comes out with really good ML translation, and they manage to sell a bunch of it temporarily until others steal their ideas or Google acquires them?

my model of Paul is unclear on whether this constitutes "many people are already working on language models including ML translation" versus "this field is not profitable enough right this minute for things to be efficient there, and it's allowed to be nonobvious in worlds where it's about to become profitable".

[Christiano][11:08] if I wanted to make a prediction about that I'd learn a bunch about how much google works on translation and how much $ they make

I just don't know the economics

and it depends on the kind of translation that they are good at and the economics (e.g. google mostly does extremely high-volume very cheap translation)

but I think there are lots of things like that / facts I could learn about Google such that I'd be surprised in that situation

independent of the economics, I do think a fair number of people are working on adjacent stuff, and I don't expect someone to come out of left field for google-translate-cost translation between high-resource languages

but it seems quite plausible that a team of 10 competent people could significantly outperform google translate, and I'd need to learn about the economics to know how surprised I am by 10 people or 100 people or what

I think it's allowed to be non-obvious whether a domain is about to be really profitable

but it's not that easy, and the higher the stakes the more speculative investment it will drive, etc.

[Yudkowsky][11:14] if you don't update much off EfficientZero, then people also shouldn't be updating much off of most of the graph I posted earlier as possible Paul-favoring evidence, because most of those SOTAs weren't highly profitable so your worldview didn't have much to say about them.

?

[Christiano][11:15] Most things people work a lot on improve gradually. EfficientZero is also quite gradual compared to the crazy TAI stories you tell. I don't really know what to say about this game other than I would prefer to make predictions in advance and I'm happy to either propose questions/domains or make predictions in whatever space you feel more comfortable with.

[Yudkowsky][11:16] I don't know how to point at a future event that you'd have strong opinions about. it feels like, whenever I try, I get told that the current world is too unlike the future conditions you expect.

[Christiano][11:16] Like, whether or not EfficientZero is evidence for your view depends on exactly how "who knows what will happen" you are. if you are just a bit more spread out than I am, then it's definitely evidence for your view.

I'm saying that I'm willing to bet about any event you want to name, I just think my model of how things work is more accurate.

I'd prefer it be related to ML or AI.
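The point that a more spread-out forecaster gains on a surprising event but pays on the unsurprising ones can be illustrated with log likelihood ratios. A minimal sketch, assuming two hypothetical forecasters A and B: the 10%/90% split for B echoes the figures Christiano uses earlier in the conversation, while A's 20%/80% split and the number of "boring" outcomes are invented for illustration.

```python
import math
from typing import Iterable, Tuple


def log_likelihood_ratio(p_a: float, p_b: float) -> float:
    """Log evidence for forecaster A over B from one observed outcome.

    p_a and p_b are the probabilities each forecaster assigned to what happened.
    """
    return math.log(p_a / p_b)


def cumulative_log_odds(outcomes: Iterable[Tuple[float, float]]) -> float:
    """Sum of log likelihood ratios; positive totals favor A, negative favor B."""
    return sum(log_likelihood_ratio(p_a, p_b) for p_a, p_b in outcomes)


# Hypothetical: A is more spread out (20% on a surprise, 80% on the boring case),
# B is more concentrated (10% on a surprise, 90% on the boring case).
surprise = (0.20, 0.10)
boring = (0.80, 0.90)

print(cumulative_log_odds([surprise]))               # one surprise alone favors A
print(cumulative_log_odds([surprise] + [boring] * 6))  # six boring outcomes outweigh it
```

Under these assumed numbers a single surprise is worth a factor of 2 toward A, and each boring outcome is worth a factor of 8/9 toward B, so the balance depends on how many of each actually occur.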

[Yudkowsky][11:17] to be clear, I appreciate that it's similarly hard to point at an event like that for myself, because my own worldview says "well mostly the future is not all that predictable with a few rare exceptions"

[Christiano][11:17] But I feel like the situation is not at all symmetrical, I expect to outperform you on practically any category of predictions we can specify.

so like I'm happy to bet about benchmark progress in LMs, or about whether DM or OpenAI or Google or Microsoft will be the first to achieve something, or about progress in computer vision, or about progress in industrial robotics, or about translations

whatever

17.2. Near-term AI predictions

[Yudkowsky][11:18] that sounds like you ought to have, like, a full-blown storyline about the future?

[Christiano][11:18] what is a full-blown storyline? I have a bunch of ways that I think about the world and make predictions about what is likely

and yes, I can use those ways of thinking to make predictions about whatever

and I will very often lose to a domain expert who has better and more informed ways of making predictions

[Yudkowsky][11:19] what happens if 2022 through 2024 looks literally exactly like Paul's modal or median predictions on things?

[Christiano][11:19] but I think in ML I will generally beat e.g. a superforecaster who doesn't have a lot of experience in the area

give me a question about 2024 and I'll give you a median?

I don't know what "what happens" means

storylines do not seem like good ways of making predictions

[Yudkowsky][11:20] I mean, this isn't a crux for anything, but it seems like you're asking me to give up on that and just ask for predictions?

so in 2024 can I hire an artist who doesn't speak English and converse with them almost seamlessly through a machine translator?

[Christiano][11:22] median outcome (all of these are going to be somewhat easy-to-beat predictions because I'm not thinking): you can get good real-time translations, they are about as good as a +1 stdev bilingual speaker who listens to what you said and then writes it out in the other language as fast as they can type

Probably also for voice -> text or voice -> voice, though higher latencies and costs.

Not integrated into standard video chatting experience because the UX is too much of a pain and the world sucks.

That's a median on "how cool/useful is translation"

[Yudkowsky][11:23] I would unfortunately also predict that in this case, this will be a highly competitive market and hence not a very profitable one, which I predict to match your prediction, but I ask about the economics here just in case.

[Christiano][11:24] Kind of typical sample: I'd guess that Google has a reasonably large lead, most translation still provided as a free value-added, cost per translation at that level of quality is like $0.01/word, total revenue in the area is like $10Ms / year?

[Yudkowsky][11:24] well, my model also permits that Google does it for free and so it's an uncompetitive market but not a profitable one...

ninjaed.

[Christiano][11:25] first order of improving would be sanity-checking economics and thinking about #s, second would be learning things like "how many people actually work on translation and what is the state of the field?"

[Yudkowsky][11:26] did Tesla crack self-driving cars and become a $3T company instead of a $1T company? do you own Tesla options?

did Waymo beat Tesla and cause Tesla stock to crater, same question?

[Christiano][11:27] 1/3 chance tesla has FSD in 2024

conditioned on that, yeah probably market cap is >$3T?

conditioned on Tesla having FSD, 2/3 chance Waymo has also at least rolled out to a lot of cities

conditioned on no tesla FSD, 10% chance Waymo has rolled out to like half of big US cities?

dunno if numbers make sense
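The conditional numbers in this message can be combined with the law of total probability to get an implied marginal chance of a broad Waymo rollout. This sketch makes a simplifying assumption that is not in the conversation: it treats "rolled out to a lot of cities" and "rolled out to like half of big US cities" as the same event. Variable names are hypothetical.

```python
from fractions import Fraction

# Figures as stated in the message above.
p_tesla_fsd = Fraction(1, 3)
p_waymo_given_fsd = Fraction(2, 3)
p_waymo_given_no_fsd = Fraction(1, 10)

# Joint probabilities for the two Tesla branches.
p_both = p_tesla_fsd * p_waymo_given_fsd
p_waymo_only = (1 - p_tesla_fsd) * p_waymo_given_no_fsd

# Law of total probability: P(Waymo) = P(Waymo | FSD) P(FSD) + P(Waymo | no FSD) P(no FSD).
p_waymo = p_both + p_waymo_only

print(f"P(Tesla FSD and Waymo rollout) = {p_both} ({float(p_both):.3f})")
print(f"P(Waymo rollout only)          = {p_waymo_only} ({float(p_waymo_only):.3f})")
print(f"P(Waymo rollout)               = {p_waymo} ({float(p_waymo):.3f})")
```

Using `Fraction` keeps the arithmetic exact, which makes it easier to see how much of the marginal comes from each branch.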

[Yudkowsky][11:28] that's okay, I dunno if my questions make sense

[Christiano][11:29] (5% NW in tesla, 90% NW in AI bets, 100% NW in more normal investments; no tesla options that sounds like a scary place with lottery ticket biases and the crazy tesla investors)
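The allocations in this message add up to more than 100% of net worth, which is what implies leverage. A minimal sketch of that sum, assuming the three percentages are non-overlapping positions measured against the same net worth (the message does not say so explicitly); the dictionary keys are just labels.

```python
# Percent of net worth (NW) in each bucket, as stated in the message above.
allocations_pct = {
    "tesla": 5,
    "AI bets": 90,
    "more normal investments": 100,
}

# Gross exposure as a multiple of net worth: total invested / net worth.
total_pct = sum(allocations_pct.values())
gross_exposure = total_pct / 100

print(f"total allocation: {total_pct}% of NW, about {gross_exposure:.2f}x exposure")
```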

[Yudkowsky][11:30] (am I correctly understanding you're 2x levered?)

[Christiano][11:30][11:31] yeah

it feels like you've got to have weird views on trajectory of value-added from AI over the coming years

on how much of the $ comes from domains that are currently exciting to people (e.g. that Google already works on, self-driving, industrial robotics) vs stuff out of left field

on what kind of algorithms deliver $ in those domains (e.g. are logistics robots trained using the same techniques tons of people are currently pushing on)

on my picture you shouldn't be getting big losses on any of those

just losing like 10-20% each time

[Yudkowsky][11:31][11:32] my uncorrected inside view says that machine translation should be in reach and generate huge amounts of economic value even if it ends up an unprofitable competitive or Google-freebie field | |

| and also that not many people are working on basic research in machine translation or see it as a "currently exciting" domain | |

[Christiano][11:32] how many FTE is "not that many" people? also are you expecting improvement in the google translate style product, or in lower-latencies for something closer to normal human translator prices, or something else? | |

[Yudkowsky][11:33] my worldview says more like... sure, maybe there's 300 programmers working on it worldwide, but most of them aren't aggressively pursuing new ideas and trying to explore the space, they're just applying existing techniques to a new language or trying to throw on some tiny mod that lets them beat SOTA by 1.2% for a publication because it's not an exciting field "What if you could rip down the language barriers" is an economist's dream, or a humanist's dream, and Silicon Valley is neither and looking at GPT-3 and saying, "God damn it, this really seems like it must on some level understand what it's reading well enough that the same learned knowledge would suffice to do really good machine translation, this must be within reach for gradient descent technology we just don't know how to reach it" is Yudkowskian thinking; your AI system has internal parts like "how much it understands language" and there's thoughts about what those parts ought to be able to do if you could get them into a new system with some other parts | |

[Christiano][11:36] my guess is we'd have some disagreements here but to be clear, you are talking about text-to-text at like $0.01/word price point? | |

[Yudkowsky][11:38] I mean, do we? Unfortunately another Yudkowskian worldview says "and people can go on failing to notice this for arbitrarily long amounts of time". if that's around GPT-3's price point then yeah | |

[Christiano][11:38] gpt-3 is a lot cheaper, happy to say gpt-3 like price point | |

[Yudkowsky][11:39] (thinking about whether $0.01/word is meaningfully different from $0.001/word and concluding that it is) | |

[Christiano][11:39] (api is like 10,000 words / $) I expect you to have a broader distribution over who makes a great product in this space, how great it ends up being etc., whereas I'm going to have somewhat higher probabilities on it being google research and it's going to look boring | |
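
The price points being compared here differ by orders of magnitude, which a short calculation makes explicit. This is a sketch using only the figures quoted in the conversation ("10,000 words / $", $0.01/word, $0.001/word); the function and variable names are hypothetical.

```python
def price_per_word(words_per_dollar):
    """Convert a throughput quote (words per dollar) to dollars per word."""
    return 1.0 / words_per_dollar

api_price = price_per_word(10_000)  # "api is like 10,000 words / $"

# Price points floated earlier in the conversation, in dollars per word.
candidates = {"$0.01/word": 0.01, "$0.001/word": 0.001}
ratios = {label: price / api_price for label, price in candidates.items()}

for label, ratio in ratios.items():
    print(f"{label} is about {ratio:.0f}x the quoted API price")
```

On these numbers, $0.01/word is about 100 times the quoted API price and $0.001/word about 10 times.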

[Yudkowsky][11:40] what is boring? boring predictions are often good predictions on my own worldview too lots of my gloom is about things that are boringly bad and awful (and which add up to instant death at a later point) but, I mean, what does boring machine translation look like? | |

[Christiano][11:42] Train big language model. Have lots of auxiliary tasks especially involving reading in source language and generation in target language. Have pre-training on aligned sentences and perhaps using all the unsupervised translation we have depending on how high-resource language is. Fine-tune with smaller amount of higher quality supervision. Some of the steps likely don't add much value and skip them. Fair amount of non-ML infrastructure. For some languages/domains/etc. dedicated models, over time increasingly just have a giant model with learned dispatch as in mixture of experts. | |

[Yudkowsky][11:44] but your worldview is also totally ok with there being a Clever Trick added to that which produces a 2x reduction in training time. or with there being a new innovation like transformers, which was developed a year earlier and which everybody now uses, without which the translator wouldn't work at all. ? | |

[Christiano][11:44] Just for reference, I think transformers aren't that visible on a (translation quality) vs (time) graph? But yes, I'm totally fine with continuing architectural improvements, and 2x reduction in training time is currently par for the course for "some people at google thought about architectures for a while" and I expect that to not get that much tighter over the next few years. | |

[Yudkowsky][11:45] unrolling Restricted Boltzmann Machines to produce deeper trainable networks probably wasn't much visible on a graph either, but good luck duplicating modern results using only lower portions of the tech tree. (I don't think we disagree about this.) | |

[Christiano][11:45] I do expect it to eventually get tighter, but not by 2024. I don't think unrolling restricted boltzmann machines is that important | |

[Yudkowsky][11:46] like, historically, or as a modern technology? | |

[Christiano][11:46] historically | |

[Yudkowsky][11:46] interesting. my model is that it got people thinking about "what makes things trainable" and led into ReLUs and inits, but I am going more off having watched from the periphery as it happened, than having read a detailed history of that. like, people asking, "ah, but what if we had a deeper network and the gradients didn't explode or die out?" and doing that en masse in a productive way rather than individuals being wistful for 30 seconds | |

[Christiano][11:48] well, not sure if this will introduce differences in predictions I don't feel like it should really matter for our bottom line predictions whether we classify google's random architectural change as something fundamentally new (which happens to just have a modest effect at the time that it's built) or as something boring I'm going to guess how well things will work by looking at how well things work right now and seeing how fast it's getting better and that's also what I'm going to do for applications of AI with transformative impacts and I actually believe you will do something today that's analogous to what you would do in the future, and in fact will make somewhat different predictions than what I would do and then some of the action will be in new things that people haven't been trying to do in the past, and I'm predicting that new things will be "small" whereas you have a broader distribution, and there's currently some not-communicated judgment call in "small" if you think that TAI will be like translation, where google publishes tons of papers, but that they will just get totally destroyed by some new idea, then it seems like that should correspond to a difference in P(google translation gets totally destroyed by something out-of-left-field) and if you think that TAI won't be like translation, then I'm interested in examples more like TAI I don't really understand the take "and people can go on failing to notice this for arbitrarily long amounts of time," why doesn't that also happen for TAI and therefore cause it to be the boring slow progress by google? Why would this be like a 50% probability for TAI but <10% for translation? perhaps there is a disagreement about how good the boring progress will be by 2024? looks to me like it will be very good | |

[Yudkowsky][11:57] I am not sure that is where the disagreement lies |

17.3. The evolution of human intelligence

[Yudkowsky][11:57] I am considering advocating that we should have more disagreements about the past, which has the advantage of being very concrete, and being often checkable in further detail than either of us already know |

[Christiano][11:58] I'm fine with disagreements about the past; I'm more scared of letting you pick arbitrary things to "predict" since there is much more impact from differences in domain knowledge (also not quite sure why it's more concrete, I guess because we can talk about what led to particular events? mostly it just seems faster) also as far as I can tell our main differences are about whether people will |

[Yudkowsky][12:00] so my understanding of how Paul writes off the example of human intelligence, is that you are like, "evolution is much stupider than a human investor; if there'd been humans running the genomes, people would be copying all the successful things, and hominid brains would be developing in this ecology of competitors instead of being a lone artifact". ? |

[Christiano][12:00] I don't understand why I have to write off the example of human intelligence |

[Yudkowsky][12:00] because it looks nothing like your account of how TAI develops |

[Christiano][12:00] it also looks nothing like your account, I understand that you have some analogy that makes sense to you |

[Yudkowsky][12:01] I mean, to be clear, I also write off the example of humans developing morality and have to explain to people at length why humans being as nice as they are, doesn't imply that paperclip maximizers will be anywhere near that nice, nor that AIs will be other than paperclip maximizers. |

[Christiano][12:01][12:02] you could state some property of how human intelligence developed, that is in common with your model for TAI and not mine, and then we could discuss that if you say something like: "chimps are not very good at doing science, but humans are" then yes my answer will be that it's because evolution was not selecting us to be good at science |

| and indeed AI systems will be good at science using much less resources than humans or chimps |

[Yudkowsky][12:02][12:02] would you disagree that humans developing intelligence, on the sheer surfaces of things, looks much more Yudkowskian than Paulian? |

like, not in terms of compatibility with underlying model just that there's this one corporation that came out and massively won the entire AGI race with zero competitors |

[Christiano][12:03] I agree that "how much did the winner take all" is more like your model of TAI than mine. I don't think zero competitors is reasonable, I would say "competitors who were tens of millions of years behind" |

[Yudkowsky][12:03] sure and your account of this is that natural selection is nothing like human corporate managers copying each other |

[Christiano][12:03] which was a reasonable timescale for the old game, but a long timescale for the new game |

[Yudkowsky][12:03] yup |

[Christiano][12:04] that's not my only account it's also that for human corporations you can form large coalitions, i.e. raise huge amounts of $ and hire huge numbers of people working on similar projects (whether or not vertically integrated), and those large coalitions will systematically beat small coalitions and that's basically the key dynamic in this situation, and isn't even trying to have any analog in the historical situation (the key dynamic w.r.t. concentration of power, not necessarily the main thing overall) |

[Yudkowsky][12:07] the modern degree of concentration of power seems relatively recent and to have tons and tons to do with the regulatory environment rather than underlying properties of the innovation landscape back in the old days, small startups would be better than Microsoft at things, and Microsoft would try to crush them using other forces than superior technology, not always successfully or such was the common wisdom of USENET |

[Christiano][12:08] my point is that the evolution analogy is extremely unpersuasive w.r.t. concentration of power I think that AI software capturing the amount of power you imagine is also kind of implausible because we know something about how hardware trades off against software progress (maybe like 1 year of progress = 2x hardware) and so even if you can't form coalitions on innovation at all you are still going to be using tons of hardware if you want to be in the running though if you can't parallelize innovation at all and there is enough dispersion in software progress then the people making the software could take a lot of the $ / influence from the partnership anyway, I agree that this is a way in which evolution is more like your world than mine but think on this point the analogy is pretty unpersuasive because it fails to engage with any of the a priori reasons you wouldn't expect concentration of power |
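
The hardware/software trade-off mentioned above ("maybe like 1 year of progress = 2x hardware") can be turned into a rough conversion. This is a sketch under a stated assumption: that the trade-off compounds, so a lead of t years corresponds to a factor of 2^t in hardware. The message gives only the one-year figure, hedged with "maybe like", and the function name is hypothetical.

```python
def hardware_equivalent(software_lead_years, factor_per_year=2.0):
    """Hardware multiplier offsetting a software lead, assuming the
    one-year factor compounds (an assumption, not stated in the chat)."""
    return factor_per_year ** software_lead_years

for years in (0.5, 1, 2, 3):
    multiplier = hardware_equivalent(years)
    print(f"{years} year(s) of software lead ~ {multiplier:.1f}x hardware")
```

Under that assumption, even a three-year software lead is matched by roughly 8x the hardware, which is the sense in which anyone "in the running" would still need to be using tons of hardware.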

[Yudkowsky][12:11] I'm not sure this is the correct point on which to engage, but I feel like I should say out loud that I am unable to operate my model of your model in such fashion that it is not falsified by how the software industry behaved between 1980 and 2000. there should've been no small teams that beat big corporations today those are much rarer, but on my model, that's because of regulatory changes (and possibly metabolic damage from something in the drinking water) |

[Christiano][12:12] I understand that you can't operate my model, and I've mostly given up, and on this point I would prefer to just make predictions or maybe retrodictions |

[Yudkowsky][12:13] well, anyways, my model of how human intelligence happened looks like this: there is a mysterious kind of product which we can call G, and which brains can operate as factories to produce. G in turn can produce other stuff, but you need quite a lot of it piled up to produce better stuff than your competitors. as late as 1000 years ago, the fastest creatures on Earth are not humans, because you need even more G than that to go faster than cheetahs (or peregrine falcons). the natural selections of various species were fundamentally stupid and blind, incapable of foresight and incapable of copying the successes of other natural selections; but even if they had been as foresightful as a modern manager or investor, they might have made just the same mistake. before 10,000 years they would be like, "what's so exciting about these things? they're not the fastest runners." if there'd been an economy centered around running, you wouldn't invest in deploying a human (well, unless you needed a stamina runner, but that's something of a separate issue, let's consider just running races). you would invest in improving cheetahs, because the pile of human G isn't large enough that their G beats a specialized naturally selected cheetah |

[Christiano][12:17] how are you improving cheetahs in the analogy? you are trying random variants to see what works? |

[Yudkowsky][12:18] using conventional, well-tested technology like MUSCLES and TENDONS trying variants on those |

[Christiano][12:18] ok and you think that G doesn't help you improve on muscles and tendons? until you have a big pile of it? |

[Yudkowsky][12:18] not as a metaphor but as simple historical fact, that's how it played out it takes a whole big pile of G to go faster than a cheetah |

[Christiano][12:19] as a matter of fact there is no one investing in making better cheetahs so it seems like we're already playing analogy-game |

[Yudkowsky][12:19] the natural selection of cheetahs is investing in it. it's not doing so by copying humans because of fundamental limitations. however if we replace it with an average human investor, it still doesn't copy humans, why would it |

[Christiano][12:19] that's the part that is silly or like, it needs more analogy |

[Yudkowsky][12:19] how so? humans aren't the fastest. |

[Christiano][12:19] humans are great at breeding animals so if I'm natural selection personified, the thing to explain is why I'm not using some of that G to improve on my selection not why I'm not using G to build a car |

[Yudkowsky][12:20] I'm... confused is this implying that a key aspect of your model is that people are using AI to decide which AI tech to invest in? |

[Christiano][12:20] no I think I just don't understand your analogy here in the actual world, some people are trying to make faster robots by tinkering with robot designs and then someone somewhere is training their AGI |

[Yudkowsky][12:21] what I'm saying is that you can imagine a little cheetah investor going, "I'd like to copy and imitate some other species's tricks to make my cheetahs faster" and they're looking enviously at falcons, not at humans not until very late in the game |

[Christiano][12:21] and the relevant question is whether the pre-AGI thing is helpful for automating the work that humans are doing while they tinker with robot designs that seems like the actual world and the interesting claim is you saying "nope, not very" |

[Yudkowsky][12:22] I am again confused. Does it matter to your model whether the pre-AGI thing is helpful for automating "tinkering with robot designs" or just profitable machine translation? Either seems like it induces equivalent amounts of investment. If anything the latter induces much more investment. |

[Christiano][12:23] sure, I'm fine using "tinkering with robot designs" as a lower bound both are fine the point is I have no idea what you are talking about in the analogy what is analogous to what? I thought cheetahs were analogous to faster robots |

[Yudkowsky][12:23] faster cheetahs are analogous to more profitable robots |

[Christiano][12:23] sure so you have some humans working on making more profitable robots, right? who are tinkering with the robots, in a way analogous to natural selection tinkering with cheetahs? |

[Yudkowsky][12:24] I'm suggesting replacing the Natural Selection of Cheetahs with a new optimizer that has the Copy Competitor and Invest In Easily-Predictable Returns feature |

[Christiano][12:24] OK, then I don't understand what those are analogous to like, what is analogous to the humans who are tinkering with robots, and what is analogous to the humans working on AGI? |

[Yudkowsky][12:24] and observing that, even in this case, the owner of Cheetahs Inc. would not try to copy Humans Inc. |

[Christiano][12:25] here's the analogy that makes sense to me natural selection is working on making faster cheetahs = some humans tinkering away to make more profitable robots natural selection is working on making smarter humans = some humans who are tinkering away to make more powerful AGI natural selection doesn't try to copy humans because they suck at being fast = robot-makers don't try to copy AGI-makers because the AGIs aren't very profitable robots |

[Yudkowsky][12:26] with you so far |

[Christiano][12:26] eventually humans build cars once they get smart enough = eventually AGI makes more profitable robots once it gets smart enough |

[Yudkowsky][12:26] yup |

[Christiano][12:26] great, seems like we're on the same page then |

[Yudkowsky][12:26] and by this point it is LATE in the game |

[Christiano][12:27] great, with you still |

[Yudkowsky][12:27] because the smaller piles of G did not produce profitable robots |

[Christiano][12:27] but there's a step here where you appear to go totally off the rails |

[Yudkowsky][12:27] or operate profitable robots say on |

[Christiano][12:27] can we just write out the sequence of AGIs, AGI(1), AGI(2), AGI(3)... in analogy with the sequence of human ancestors H(1), H(2), H(3)...? |

[Yudkowsky][12:28] Is the last member of the sequence H(n) the one that builds cars and then immediately destroys the world before anything that operates on Cheetah Inc's Owner's scale can react? |

[Christiano][12:28] sure I don't think of it as the last but it's the last one that actually arises? maybe let's call it the last, H(n) great and now it seems like you are imagining an analogous story, where AGI(n) takes over the world and maybe incidentally builds some more profitable robots along the way (building more profitable robots being easier than taking over the world, but not so much easier that AGI(n-1) could have done it unless we make our version numbers really close together, close enough that deploying AGI(n-1) is stupid) |

[Yudkowsky][12:31] if this plays out in the analogous way to human intelligence, AGI(n) becomes able to build more profitable robots 1 hour before it becomes able to take over the world; my worldview does not put that as the median estimate, but I do want to observe that this is what happened historically |

[Christiano][12:31] sure |

[Yudkowsky][12:32] ok, then I think we're still on the same page as written so far |

[Christiano][12:32] so the question that's interesting in the real world is which AGI is useful for replacing humans in the design-better-robots task; is it 1 hour before the AGI that takes over the world, or 2 years, or what? |

[Yudkowsky][12:33] my worldview tends to make a big ol' distinction between "replace humans in the design-better-robots task" and "run as a better robot", if they're not importantly distinct from your standpoint can we talk about the latter? |

[Christiano][12:33] they seem importantly distinct. totally different even. so I think we're still on the same page |

[Yudkowsky][12:34] ok then, "replacing humans at designing better robots" sure as heck sounds to Eliezer like the world is about to end or has already ended |

[Christiano][12:34] my whole point is that in the evolutionary analogy we are talking about "run as a better robot" rather than "replace humans in the design-better-robots-task" and indeed there is no analog to "replace humans in the design-better-robots-task" which is where all of the action and disagreement is |

[Yudkowsky][12:35][12:36] well, yes, I was exactly trying to talk about when humans start running as better cheetahs and how that point is still very late in the game |

| not as late as when humans take over the job of making the thing that makes better cheetahs, aka humans start trying to make AGI, which is basically the fingersnap end of the world from the perspective of Cheetahs Inc. |

[Christiano][12:36] OK, but I don't care when humans are better cheetahs---in the real world, when AGIs are better robots. In the real world I care about when AGIs start replacing humans in the design-better-robots-task. I'm game to use evolution as an analogy to help answer that question (where I do agree that it's informative), but want to be clear what's actually at issue. |

[Yudkowsky][12:37] so, the thing I was trying to work up to, is that my model permits the world to end in a way where AGI doesn't get tons of investment because it has an insufficiently huge pile of G that it could run as a better robot. people are instead investing in the equivalents of cheetahs. I don't understand why your model doesn't care when humans are better cheetahs. AGIs running as more profitable robots is what induces the huge investments in AGI that your model requires to produce very close competition. ? |

[Christiano][12:38] it's a sufficient condition, but it's not the most robust one at all like, I happen to think that in the real world AIs actually are going to be incredibly profitable robots, and that's part of my boring view about what AGI looks like But the thing that's more robust is that the sub-taking-over-world AI is already really important, and receiving huge amounts of investment, as something that automates the R&D process. And it seems like the best guess given what we know now is that this process starts years before the singularity. From my perspective that's where most of the action is. And your views on that question seem related to your views on how e.g. AGI is a fundamentally different ballgame from making better robots (whereas I think the boring view is that they are closely related), but that's more like an upstream question about what you think AGI will look like, most relevant because I think it's going to lead you to make bad short-term predictions about what kinds of technologies will achieve what kinds of goals. |

[Yudkowsky][12:41] but not all AIs are the same branch of the technology tree. factory robotics are already really important and they are "AI" but, on my model, they're currently on the cheetah branch rather than the hominid branch of the tech tree; investments into better factory robotics are not directly investments into improving MuZero, though they may buy chips that MuZero also buys. |

[Christiano][12:42] Yeah, I think you have a mistaken view of AI progress. But I still disagree with your bottom line even if I adopt (this part of) your view of AI progress. Namely, I think that the AGI line is mediocre before it is great, and the mediocre version is spectacularly valuable for accelerating R&D (mostly AGI R&D). The way I end up sympathizing with your view is if I adopt both this view about the tech tree, + another equally-silly-seeming view about how close the AGI line is to fooming (or how inefficient the area will remain as we get close to fooming) |

17.4. Human generality and body manipulation

[Yudkowsky][12:43] so metaphorically, you require that humans be doing Great at Various Things and being Super Profitable way before they develop agriculture; the rise of human intelligence cannot be a case in point of your model because the humans were too uncompetitive at most animal activities for unrealistically long (edit: compared to the AI case) | |

[Christiano][12:44] I don't understand. Human brains are really great at basically everything as far as I can tell? like it's not like other animals are better at manipulating their bodies. we crush them | |

[Yudkowsky][12:44] if we've got weapons, yes | |

[Christiano][12:44] human bodies are also pretty great, but they are not the greatest on every dimension | |

[Yudkowsky][12:44] wrestling a chimpanzee without weapons is famously ill-advised | |

[Christiano][12:44] no, I mean everywhere. chimpanzees are practically the same as humans in the animal kingdom; they have almost as excellent a brain | |

[Yudkowsky][12:45] as is attacking an elephant with your bare hands | |

[Christiano][12:45] that's not because of elephant brains | |

[Yudkowsky][12:45] well, yes, exactly. you need a big pile of G before it's profitable, so big the game is practically over by then | |

[Christiano][12:45] this seems so confused but that's exciting I guess like, I'm saying that the brains to automate R&D are similar to the brains to be a good factory robot analogously, I think the brains that humans use to do R&D are similar to the brains we use to manipulate our body absurdly well I do not think that our brains make us fast they help a tiny bit but not much I do not think the physical actuators of the industrial robots will be that similar to the actuators of the robots that do R&D the claim is that the problem of building the brain is pretty similar just as the problem of building a brain that can do science is pretty similar to the problem of building a brain that can operate a body really well (and indeed I'm claiming that human bodies kick ass relative to other animal bodies---there may be particular tasks other animal brains are pre-built to be great at, but (i) humans would be great at those too if we were under mild evolutionary pressure with our otherwise excellent brains, (ii) there are lots of more general tests of how good you are at operating a body and we will crush it at those tests) (and that's not something I know much about, so I could update as I learned more about how actually we just aren't that good at motor control or motion planning) | |

[Yudkowsky][12:49] so on your model, we can introduce humans to a continent, forbid them any tool use, and they'll still wipe out all the large animals? | |

[Christiano][12:49] (but damn we seem good to me) I don't understand why that would even plausibly follow | |

[Yudkowsky][12:49] because brains are profitable early, even if they can't build weapons? | |

[Christiano][12:49] I'm saying that if you put our brains in a big animal body we would wipe out the big animals yes, I think brains are great | |

[Yudkowsky][12:50] because we'd still have our late-game pile of G and we would build weapons | |

[Christiano][12:50] no, I think a human in a big animal body, with brain adapted to operate that body instead of our own, would beat a big animal straightforwardly without using tools | |

[Yudkowsky][12:51] this is a strange viewpoint and I do wonder whether it is a crux of your view | |

[Christiano][12:51] this feels to me like it's more on the "eliezer vs paul disagreement about the nature of AI" rather than "eliezer vs paul on civilizational inadequacy and continuity", but enough changes on "nature of AI" would switch my view on the other question | |

[Yudkowsky][12:51] like, ceteris paribus maybe a human in an elephant's body beats an elephant after a burn-in practice period? because we'd have a strict intelligence advantage? | |

[Christiano][12:52] practice may or may not be enough but if you port over the excellent human brain to the elephant body, then run evolution for a brief burn-in period to get all the kinks sorted out? elephants are pretty close to humans so it's less brutal than for some other animals (and also are elephants the best example w.r.t. the possibility of direct conflict?) but I totally expect us to win | |

[Yudkowsky][12:53] I unfortunately need to go do other things in advance of an upcoming call, but I feel like disagreeing about the past is proving noticeably more interesting, confusing, and perhaps productive, than disagreeing about the future | |

[Christiano][12:53] actually probably I just think practice is enough I think humans have way more dexterity, better locomotion, better navigation, better motion planning... some of that is having bodies optimized for those things (esp. dexterity), but I also think most animals just don't have the brains for it, with elephants being one of the closest calls I'm a little bit scared of talking to zoologists or whoever the relevant experts are on this question, because I've talked to bird people a little bit and they often have very strong "humans aren't special, animals are super cool" instincts even in cases where that take is totally and obviously insane. But if we found someone reasonable in that area I'd be interested to get their take on this. I think this is pretty important for the particular claim "Is AGI like other kinds of ML?"; that definitely doesn't persuade me to be into fast takeoff on its own though it would be a clear way the world is more Eliezer-like than Paul-like I think I do further predict that people who know things about animal intelligence, and don't seem to have identifiably crazy views about any adjacent questions that indicate a weird pro-animal bias, will say that human brains are a lot better than other animal brains for dexterity/locomotion/similar physical tasks (and that the comparison isn't that close for e.g. comparing humans vs big cats). Incidentally, seems like DM folks did the same thing this year, presumably publishing now because they got scooped. Looks like they probably have a better algorithm but used harder environments instead of Atari. (They also evaluate the algorithm SPR+MuZero I mentioned which indeed gets one factor of 2x improvement over MuZero alone, roughly as you'd guess): https://arxiv.org/pdf/2111.01587.pdf | |

[Barnes][13:45] My DM friend says they tried it before they were focused on data efficiency and it didn't help in that regime, sounds like they ignored it for a while after that

[Christiano][13:48] Overall the situation feels really boring to me. Not sure if DM having a highly similar unpublished result is more likely on my view than Eliezer's (and initially ignoring the method because they weren't focused on sample-efficiency), but at any rate I think it's not anywhere close to falsifying my view. |

18. Follow-ups to the Christiano/Yudkowsky conversation

[Karnofsky][9:39] (Nov. 5) Going to share a point of confusion about this latest exchange. It started with Eliezer saying this:

So at this point, I thought Eliezer's view was something like: "EfficientZero represents a several-OM (or at least one-OM?) jump in efficiency, which should shock the hell out of Paul." The upper bound on the improvement is 2000x, so I figured he thought the corrected improvement would be some number of OMs. But very shortly afterwards, Eliezer quotes Gwern's guess of a 4x improvement, and Paul then said:

Eliezer never seemed to push back on this 4x-2x-4x claim. What I thought would happen after the 4x estimate and 4x-2x-4x claim: Eliezer would've said "Hmm, we should nail down whether we are talking about 4x-2x-4x or something more like 4x-2x-100x. If it's 4x-2x-4x, then I'll say 'never mind' re: my comment that this 'straight-up falsifies Paul's view.' At best this is just an iota of evidence or something." Why isn't that what happened? Did Eliezer mean all along to be saying that a 4x jump on Atari sample efficiency would "straight-up falsify Paul's view?" Is a 4x jump the kind of thing Eliezer thinks is going to power a jumpy AI timeline?


[Yudkowsky][11:16] (Nov. 5) This is a proper confusion and probably my fault; I also initially thought it was supposed to be 1-2 OOM and should've made it clearer that Gwern's 4x estimate was less of a direct falsification. I'm not yet confident Gwern's estimate is correct. I just got a reply from my query to the paper's first author which reads:

I replied asking if Gwern's 3.8x estimate sounds right to them. A 10x improvement could power what I think is a jumpy AI timeline. I'm currently trying to draft a depiction of what I think an unrealistically dignified but computationally typical end-of-world would look like if it started in 2025, and my first draft of that had it starting with a new technique published by Google Brain that was around a 10x improvement in training speeds for very large networks at the cost of higher inference costs, but which turned out to be specially applicable to online learning. That said, I think the 10x part isn't either a key concept or particularly likely, and it's much more likely that hell breaks loose when an innovation changes some particular step of the problem from "can't realistically be done at all" to "can be done with a lot of computing power", which was what I had being the real effect of that hypothetical Google Brain innovation when applied to online learning, and I will probably rewrite to reflect that.

[Karnofsky][11:29] (Nov. 5) That's helpful, thanks. Re: "can't realistically be done at all" to "can be done with a lot of computing power", cpl things:

1. Do you think a 10x improvement in efficiency at some particular task could qualify as this? Could a smaller improvement?

2. I thought you were pretty into the possibility of a jump from "can't realistically be done at all" to "can be done with a small amount of computing power," eg some random ppl with a $1-10mm/y budget blowing past mtpl labs with >$1bb/y budgets. Is that wrong?

[Yudkowsky][13:44] (Nov. 5) 1 - yes and yes, my revised story for how the world ends looks like Google Brain publishing something that looks like only a 20% improvement but which is done in a way that lets it be adapted to make online learning by gradient descent "work at all" in DeepBrain's ongoing Living Zero project (not an actual name afaik)

2 - that definitely remains very much allowed in principle, but I think it's not my current mainline probability for how the world's end plays out - although I feel hesitant / caught between conflicting heuristics here. I think I ended up much too conservative about timelines and early generalization speed because of arguing with Robin Hanson, and don't want to make a similar mistake here, but on the other hand a lot of the current interesting results have been from people spending huge compute (as wasn't the case to nearly the same degree in 2008) and if things happen on short timelines it seems reasonable to guess that the future will look that much like the present. This is very much due to cognitive limitations of the researchers rather than a basic fact about computer science, but cognitive limitations are also facts and often stable ones.

[Karnofsky][14:35] (Nov. 5) Hm OK. I don't know what "online learning by gradient descent" means such that it doesn't work at all now (does "work at all" mean something like "work with human-ish learning efficiency?")

[Yudkowsky][15:07] (Nov. 5) I mean, in context, it means "works for Living Zero at the performance levels where it's running around accumulating knowledge", which by hypothesis it wasn't until that point.

[Karnofsky][15:12] (Nov. 5) Hm. I am feeling pretty fuzzy on whether your story is centrally about:

1. A <10x jump in efficiency at something important, leading pretty directly/straightforwardly to crazytown

2. A 100x ish jump in efficiency at something important, which may at first "look like" a mere <10x jump in efficiency at something else

#2 is generally how I've interpreted you and how the above sounds, but under #2 I feel like we should just have consensus that the Atari thing being 4x wouldn't be much of an update. Maybe we already do (it was a bit unclear to me from your msg)

(And I totally agree that we haven't established the Atari thing is only 4x - what I'm saying is it feels like the conversation should've paused there)

[Yudkowsky][15:13] (Nov. 5) The Atari thing being 4x over 2 years is I think legit not an update because that's standard software improvement speed

you're correct that it should pause there

[Karnofsky][15:14] (Nov. 5) 👍

[Yudkowsky][15:24] (Nov. 5) I think that my central model is something like - there's a central thing to general intelligence that starts working when you get enough pieces together and they coalesce, which is why humans went down this evolutionary gradient by a lot before other species got 10% of the way there in terms of output; and then it takes a big pile of that thing to do big things, which is why humans didn't go faster than cheetahs until extremely late in the game.

so my visualization of how the world starts to end is "gear gets added and things start to happen, maybe slowly-by-my-standards at first such that humans keep on pushing it along rather than it being self-moving, but at some point starting to cumulate pretty quickly in the same way that humans cumulated pretty quickly once they got going" rather than "dial gets turned up 50%, things happen 50% faster, every year".

[Yudkowsky][15:16] (Nov. 5, switching channels) as a quick clarification, I agree that if this is 4x sample efficiency over 2 years then that doesn't at all challenge Paul's view

[Christiano][0:20] (Nov. 26) FWIW, I felt like the entire discussion of EfficientZero was a concrete example of my view making a number of more concentrated predictions than Eliezer that were then almost immediately validated. In particular, consider the following 3 events:

If only 1 of these 3 things had happened, then I agree this would have been a challenge to my view that would make me update in Eliezer's direction. But that's only possible if Eliezer actually assigns a higher probability than me to <= 1 of these things happening, and hence a lower probability to >= 2 of them happening. So if we're playing a reasonable epistemic game, it seems like I need to collect some epistemic credit every time something looks boring to me.
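The bookkeeping behind this argument can be sketched numerically. The three events are not listed here, so the per-event probabilities below are purely hypothetical, and treating the events as independent is an added simplifying assumption. The only point illustrated is that a forecaster who puts more probability on "at most 1 happens" necessarily puts less on "at least 2 happen", so a boring outcome favors the other forecaster.

```python
from itertools import product


def count_distribution(probs):
    """Return [P(exactly 0 happen), P(exactly 1), ...] for independent events."""
    dist = [0.0] * (len(probs) + 1)
    for outcome in product([0, 1], repeat=len(probs)):
        p = 1.0
        for happened, q in zip(outcome, probs):
            p *= q if happened else 1.0 - q
        dist[sum(outcome)] += p
    return dist


# Hypothetical per-event probabilities (assumptions, not taken from the conversation).
forecaster_a = count_distribution([0.8, 0.8, 0.8])  # expects "boring" outcomes
forecaster_b = count_distribution([0.5, 0.5, 0.5])  # leaves more room for surprises

a_at_most_1 = forecaster_a[0] + forecaster_a[1]
b_at_most_1 = forecaster_b[0] + forecaster_b[1]
a_at_least_2 = forecaster_a[2] + forecaster_a[3]
b_at_least_2 = forecaster_b[2] + forecaster_b[3]

# Likelihood ratio in favor of forecaster A if at least 2 of the 3 events occur.
bayes_factor = a_at_least_2 / b_at_least_2

print(f"P(<=1): A={a_at_most_1:.3f}, B={b_at_most_1:.3f}")
print(f"P(>=2): A={a_at_least_2:.3f}, B={b_at_least_2:.3f}")
print(f"Bayes factor for A when >=2 occur: {bayes_factor:.3f}")
```

With these made-up numbers the update is modest, which matches the framing of a single "Bayes point" rather than a decisive result.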

[Yudkowsky][15:30] (Nov. 26) I broadly agree; you win a Bayes point. I think some of this (but not all!) was due to my tripping over my own feet and sort of rushing back with what looked like a Relevant Thing without contemplating the winner's curse of exciting news, the way that paper authors tend to frame things in more exciting rather than less exciting ways, etc. But even if you set that aside, my underlying AI model said that was a thing which could happen (which is why I didn't have technically rather than sociologically triggered skepticism) and your model said it shouldn't happen, and it currently looks like it mostly didn't happen, so you win a Bayes point. Notes that some participants may deem obvious(?) but that I state expecting wider readership:


[Christiano][16:29] (Nov. 27) Agreed that it's (i) not obvious how large the EfficientZero gain was, and in general it's not a settled question what happened, (ii) it's not that big an update, it needs to be part of a portfolio (but this is indicative of the kind of thing I'd want to put in the portfolio), (iii) it generally seems pro-social to flag potentially relevant stuff without the presumption that you are staking a lot on it.

35 comments

Comments sorted by top scores.

comment by Vanessa Kosoy (vanessa-kosoy) · 2022-02-24T08:01:24.033Z · LW(p) · GW(p)

Christiano's model of progress, AFAIU, can be summarized as: "When only a few people work in a field, big jumps in progress are possible. When many people work in a field, the low-hanging fruit is picked quickly and then progress is smooth."

The problem with this model is, its predictions depend a lot on how you draw the boundary around "field". Take Yudkowsky's example of startups. How do we explain that small startups succeed where large companies failed? And, it's not a lack of economic incentives, since successful startups sometimes make huge profits (often resulting from getting acquired by the large companies), so much so that there's an entire industry of investing in startups.

I'm guessing that a proponent of Christiano's theory would say: sure, such-and-such startup succeeded but it was because they were the only ones working on problem P, so problem P was an uncrowded field at the time. Okay, but why do we draw the boundary around P rather than around "software" or around something in between which was crowded? To take another example, heavier-than-air flight was arguably uncrowded when the Wright brothers came along, but flight-in-general (including balloons and airships) was somewhat crowded.

In a hypothetical Yudkowsky-style fast takeoff scenario, an imaginary post-singularity proponent of Christiano's theory could argue: Sure, AI was a crowded field, but this is consistent with the big jump because TAI was achieved using Technology X, which was an innovation created by that small group, and the field of "Technology X" was not crowded. A possible counterargument is: TAI will have to be good at science (or maybe some other word instead of "science"), and people are already trying to do science using AI (e.g. AlphaFold, the knot theory thing, the quantum chemistry thing). However, this is still begging the question: why draw the line around "science AI" rather than around something else?

Perhaps proponents of Christiano's theory just have independent, object-level reasons to believe that there will be no "Technology X", that "more of the same" is already sufficient to get to TAI. In this case there might be no natural place to draw the boundary which will be uncrowded. Here I have my own object-level reasons for skepticism, but maybe it's unwise to take the discussion there: as Yudkowsky pointed out, this can be net negative. However, I still ask: whence the (seemingly high) confidence? Maybe more-of-the-same is enough for TAI, but maybe not? (And even if it's theoretically enough, maybe it won't get there first.)

EDIT: I anticipate an objection to the "Technology X" scenario along the lines of, a small group cannot create something with rapid global impact. Because, presumably, the level of impact of any innovation is bounded in some predictable way by the economic investment. To this I would have two counter-objections:

First, imagine that Technology X didn't create TAI immediately but did lead to a kink comparable to the start of the deep learning revolution (i.e. TAI was eventually created as a result of much investment in Technology X, on a timeline significantly faster than the extrapolation of the pre-X trend). On the one hand, Christiano appears to believe this is still very unlikely. On the other hand, it can still be excused in hindsight by the reference class tennis I described.

Second, I am skeptical of the methodology. All of us here agree that AI poses unique risks compared to past technologies, so why can we extrapolate from the past in that way? Imagine that we lived in a universe in which it was plausible that the LHC creates a black hole or causes false vacuum collapse. It seems to me that such a universe could still have a techno-economic trajectory broadly similar to our own, for the same reasons. So, in that universe, would it make sense to argue "the LHC cannot destroy the world because its cost is an insufficient fraction of world GDP[1]"? It seems to me it would be strange there in a way similar to how the economic argument about AI is strange here.

[1] The LHC is an expensive project, but is it expensive enough to destroy the world? How can we tell? Is this really a sensible way to analyze this compared to thinking about the actual physics?

↑ comment by Vaniver · 2022-02-24T19:22:49.736Z · LW(p) · GW(p)

I'm guessing that a proponent of Christiano's theory would say: sure, such-and-such startup succeeded but it was because they were the only ones working on problem P, so problem P was an uncrowded field at the time. Okay, but why do we draw the boundary around P rather than around "software" or around something in between which was crowded?

I'd make a different reply: you need to not just look at the winning startup, but all startups. If it's the case that the 'startup ecosystem' is earning 100% returns and the rest of the economy is earning 5% returns, then something weird is up and the model is falsified, but if the startup ecosystem is earning 10% returns once you average together the few successes and many failures, then this looks more like a normal risk-return story.
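The averaging step in this reply can be made concrete with a toy portfolio. This is only an illustrative sketch: the number of startups, the failure rate, and the 22x payoff are hypothetical values chosen so that the ecosystem-wide return comes out to the 10% figure used above, and nothing here is real market data.

```python
def ecosystem_return(multiples):
    """Net return of an equal-weighted portfolio, given each startup's payoff multiple.

    A multiple of 0.0 means the investment is lost; 22.0 means it returns 22x.
    """
    return sum(multiples) / len(multiples) - 1.0


# Hypothetical ecosystem: 95 failures that return nothing, 5 winners that return 22x.
multiples = [0.0] * 95 + [22.0] * 5

best_single_return = max(multiples) - 1.0     # what you see if you only look at a winner
portfolio_return = ecosystem_return(multiples)  # what you see across all startups

print(f"Best single startup: {best_single_return:.0%}")
print(f"Whole ecosystem:     {portfolio_return:.0%}")
```

Looking only at a winner suggests a 2100% return, while the ecosystem as a whole earns about 10%, which is the distinction between an anomaly and an ordinary risk-return tradeoff.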

Furthermore, there's something interesting where the modern startup economy feels much more like the Paulian 'concentration of power' story than the Yudkowskian 'wisdom of USENET' story; teams that make video games might be able to turn a handful of people into tens of millions of dollars in revenue (or billions in an extreme case), but teams that make self-driving cars mostly have to be able to tell a story about being able to turn billions of investor dollars into teams of engineers and mountains of hardware that will then be able to produce the self-driving cars, with the race between companies not being "who has a better product already" but "who can better acquire the means to create a better product."

I'm pretty sympathetic to the view that the first transformative intelligence will look more like a breakout indie game than a AAA title because there's some new 'gimmick' that can be made by a small team and that has an outsized impact on usefulness. But it seems important to note that a lot of the economy doesn't work that way, even lots of the 'build speculative future tech' part!

↑ comment by Vanessa Kosoy (vanessa-kosoy) · 2022-02-25T06:54:52.245Z · LW(p) · GW(p)

I don't see what it has to do with risk-return. Sure, many startups fail. And, plausibly many people tried to build an airplane and failed before the Wright brothers. And, many people keep trying to build AGI and failing. This doesn't mean there won't be kinks in AI progress or even a TAI created by a small group.

Saying that "the subjective expected value of AI progress over time is a smooth curve" is a very different proposition from "the actual AI progress over time will be a smooth curve".
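A toy model makes the distinction explicit. The setup is an assumption made purely for illustration: progress is 0 until a single breakthrough and 1 afterwards, and the breakthrough year is equally likely to be any of ten years. Averaging over those scenarios gives a smooth expected curve, even though every individual trajectory contains a full-size jump.

```python
from fractions import Fraction

YEARS = 10  # hypothetical horizon; the breakthrough year is uniform over 1..YEARS


def realized_path(breakthrough_year):
    """Progress per year in one scenario: 0 before the breakthrough, 1 from then on."""
    return [1 if year >= breakthrough_year else 0 for year in range(1, YEARS + 1)]


def largest_jump(path):
    """Largest single-year increase along a path, counting the first year from 0."""
    steps = [path[0]] + [later - earlier for earlier, later in zip(path, path[1:])]
    return max(steps)


# One realized trajectory per equally likely breakthrough year.
paths = [realized_path(b) for b in range(1, YEARS + 1)]

# Exact expected progress in each year, averaged over the scenarios.
expected = [Fraction(sum(path[i] for path in paths), len(paths)) for i in range(YEARS)]

print("Expected curve:", [str(x) for x in expected])
print("Largest jump in expectation:", largest_jump(expected))
print("Largest jump in each realized path:", [largest_jump(p) for p in paths])
```

In this sketch the expected curve rises by 1/10 per year, while each realized path jumps by 1 in a single year.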