Method of statements: an alternative to taboo

post by Q Home · 2022-11-16T10:57:49.937Z

Contents

Taboo

All other techniques

1. Receiving ideas

Evaluating Logical decision theory (LDT)

Evaluating AI risk

Simple example

Math example

2. Connecting ideas

Immanuel Kant and Lying

Categorical Imperative and Logical Decision Theory

Grounding ethics

3. Looking for information

Vitalism

Informative wrong answers

4. (Not) Dissolving questions

Reductionism

Babble, associations, brainstorming

5. The Hard Problem of Consciousness

Philosophical zombies, further facts

My reasons

My position

Eliezer's argument

6.1 About the method

Steelmanning

Anti-induction, fractals and black boxes

Two types of rationality

Motivated cognition

Paths of thinking

Base rates

6.2 Friendly AI

6.3 Abstraction types

7. Research ideas

AI Alignment

Image classification

Situational values/concepts

8. Society

Bodily Autonomy

AI art, AI-generated content

9.1 Complexity and induction

Why there is a connection

In reasoning

In definitions

Preferences inside out

Bayesianism inside out

When abstractions cancel out

Any statement is meta

Pascal's Mugging: complexity + leverage penalty

9.2 Phenomenal binding

Naturalistic fallacy and open question argument

Iterated equivalence

Experiences as iterations

Associations are logical facts

Arrangements of things are logical facts

9.3 My classification theory

All properties are connected

Examples

Principles of classification

10. Conclusion

[Status: this post grew 11 times bigger than I intended. Initially, I just wanted to write about AI risk and Logical Decision Theory. I encourage you to read the first 3-4 parts of the post and decide if my method makes sense or not. If the method doesn't "click" with you, then this post is in the category of vague musings. If the method "clicks", then the content of the post is more similar to strict theorems. The method is related to many rational techniques, and I think it could be a powerful research tool. If you disagree with my method, maybe you can still use it as a fake framework. The secondary goal of the post is to gradually introduce my theory of perception.]

...

I want to share a way of dissolving disagreements. It's also a style of thinking. I call it "the method of statements". Here's the description:

- Take an idea, theory or argument. Split it into statements of a certain type. (Or multiple types.)

- Evaluate the properties of the statements.

- Try to extract as much true/interesting information as possible from those statements.

One rule:

- A statement counts as existing even if it can't be formalized or expressed in a particular epistemology.

I can't define what a "statement" is. It's the most basic concept. Sometimes "statements" are facts, but not always. "Statements" may be non-verbal or even describe logical impossibilities. A set of statements can be defined in any way possible. I will give a couple of less controversial examples of applying my method, then more controversial ones, and then share my research ideas in the context of the method.

There are three ways to look at the method:

- It's a little technique (like "taboo") which can be interesting to think about.

- It's similar to the research method Eliezer used in the Sequences: think about properties of the things you already know, prove interesting statements about the things you already know.

- The method is a counterpart of LW rationality. It can deal with all kinds of weird/meta-truths, such as metaethical truths. We could try to use it for writing Friendly AI.

Analytic philosophy (when it deconstructs ideas into logical propositions) is an inspiration. But "statements" in my method are something more than logical propositions.

Taboo

We all know the technique called "taboo" (see "Taboo Your Words"). Imagine a disagreement about this question:

If a tree falls in a forest and no one is around to hear it, does it make a sound? (on Wikipedia)

We may try to resolve the disagreement by trying to replace the label "sound" with its more specific contents. Are we talking about sound waves, the vibration of atoms? Are we talking about the subjective experience of sound? Are we talking about mathematical models and hypothetical imaginary situations? An important point is that we don't try to define what "sound" is, because it would only lead to a dispute about definitions.

The method of statements is somewhat similar to taboo. Taboo works "inwards" by taking a concept and splitting it into parts. My method works "outwards" by taking associations with a concept and splitting them into parts. It's "taboo" applied on a different level, a "meta-taboo" applied to the process of thinking itself. We take thoughts and split them into smaller thoughts.

However, rationalist taboo and my method may be in direct conflict, because rationalist taboo assumes that a "statement" is meaningless if it can't be formalized or expressed in a particular epistemology.

All other techniques

You can view basically any rational technique as an alternative to taboo. My method generalizes all those alternatives:

- Aesthetics by Raemon. We split an "aesthetic" into associated facts.

- Smuggled frames by Artyom Kazak. We split a claim into a cluster of associated claims.

- General Factor by tailcalled. A reverse technique: we don't split, but join facts into "general factors" and "positive manifolds".

- Fake frameworks by Valentine. We split information into fake ontologies.

- Outside view and Modest Epistemology by Robin Hanson. Regardless of what we think about those techniques, they show us a way to deconstruct our thoughts without reductionism/taboo.

- Original Seeing by Robert Pirsig. It is distinct from reductionism/taboo, because you can still use (refer to) high-level concepts while you analyze them.

- Double-Crux. You can say it splits a belief into associated beliefs ("cruxes") weighted by their importance for the main belief. It's "reductionism" applied to your thought process.

Non-rationalist alternatives to taboo:

- Legal reasoning. It deconstructs actions and commitments into conditions. The YouTube channel LegalEagle does this for entertainment, among other purposes. (a true crime example of "legal reductionism" of an event)

- Zen koans (TED-Ed). They introduce the idea of a "statement" being an evolving source of knowledge. They tell us that even an illogical statement can be informative. Their paradoxical nature forces you to break familiar associations between ideas.

(Feel free to suggest other connections!)

Those techniques may grow into entire epistemologies and approaches to Alignment, as happened with Outside View and modesty: see "Corrigibility as outside view" by TurnTrout and the "communication view" ("Communication Prior as Alignment Strategy" by johnswentworth, "Gricean communication and meta-preferences" by Charlie Steiner, and abramdemski on theories of meaning). It would be cool if we could generalize those ideas.

1. Receiving ideas

This part of the post is about the reception of two LessWrong ideas/topics.

In the method of statements, before evaluating theories you should evaluate the statements the theories are made of. There may be a big difference.

And when you evaluate an argument with the method, you don't evaluate the "logic" of the argument or its "model of the world". You evaluate the properties of statements implied by the argument. Do the statements in question correlate with something true or interesting?

Evaluating Logical decision theory (LDT)

What is Logical Decision Theory (LDT)? You can check out "An Introduction to Logical Decision Theory for Everyone Else" or this wiki page.

With the usual way of thinking, even if a person is sympathetic enough to LDT, they may react like this:

- I think the idea of LDT is important, but...

- I'm not sure it can be formalized (finished).

- I'm not sure I agree with it. It seems to violate such and such principles.

- You can get the same results by fixing old decision theories.

- Conclusion: "LDT brings up important things, but it's nothing serious right now".

(The reaction above is inspired by criticisms by William David MacAskill and Wolfgang Schwarz: here and here)

With the method of statements, being sympathetic enough to LDT automatically entails this (or even more positive) reaction:

- There exist two famous types of statements: "causal statements" (in CDT) and "evidential statements" (in EDT). LDT hypothesizes the third type, "logical statements". The latter statements definitely exist. They can be used in thinking, i.e. they are constructive enough. They are simple enough ("conceptual"). And they are important enough. This already makes LDT a very important thing. Even if you can't formalize it, even if you can't make a "pure" LDT.

- Logical statements (A) in decision theory are related to another type of logical statements (B): statements about logical uncertainty. We have to deal with the latter ones even without LDT. Logical statements (A) are also similar to the more established, albeit not mainstream, "superrational statements" (see Superrationality).

- Logical statements can be translated into other types of statements. But this doesn't justify avoiding talking about them.

- Conclusion: "LDT should be important, whatever complications it has".

The method of statements dissolves a number of things:

- It dissolves counter-arguments about formalization: "logical statements" either exist or don't, and if they do they carry useful information. It doesn't matter if they can be formalized or not.

- It dissolves minor disagreements. "Logical statements" either can or can't be true. If they can, there's nothing to "disagree" about. And true statements can't violate any (important) principles.

  Some logical suggestions do seem weird and unintuitive at first. But this weirdness may dissolve when you notice that those suggestions are properties of simple statements. If those statements can be true, then there's nothing weird about the suggestions. At the end of the day, we don't even have to follow the suggestions while agreeing that the statements are true and important. Statements are sources of information, nothing more and nothing less.

- It dissolves the confusion between different possible theories. "Logical statements" are either important or not. If they are, then it doesn't matter in which language you express them. It doesn't even matter what theory is correct.

I think the usual way of thinking may be very reasonable but, ultimately, it's irrational, because it prompts unjust comparisons of ideas and favoring ideas which look more familiar and easier to understand/implement in the short run. With the usual way of thinking it's very easy to approach something in the wrong way and "miss the point".

Evaluating AI risk

An example of judging AI safety with the usual thinking:

- AI safety sounds important. But we don't know how the AGI will work. Maybe its design will allow a simple safety solution. There's no evidence that we won't solve the safety concerns. And how can you be sure that AI could defeat all of humanity? Intelligence isn't a superpower. Again, a very big claim without evidence.

An example of judging AI safety with the method of statements:

- I need to check if I can come up with general "safety statements" (statements describing safety measures) that work for different types of AGIs. If I can't, this is evidence that things will go wrong.

- I need to check if I can come up with "safety statements" that work for specific AI systems. If I can't, this is evidence that things will go wrong.

- I need to check if people were successful at coming up with/implementing "safety statements" while dealing with much simpler systems than AGI, for example nuclear energy. If people failed at least once, it's evidence that things will go wrong.

- I need to check if people were successful at coming up with/implementing "safety statements" while dealing with 100% human-like intelligence (other people). Do people know bulletproof ways to raise peaceful children? Do people know bulletproof ways of keeping peaceful enough people from going insane? If people failed at least a couple of times, it's evidence that things will go wrong.

- About the power of intelligence and its danger: there are too many different types of "danger statements" (facts, real and hypothetical situations) about intelligence/knowledge/control that draw a grim picture. One dumb person with knowledge may screw over a bunch of smart people without knowledge. One dumb person without any knowledge may be manipulated and screw up the lives of smart people with knowledge. In a way, defeating humanity is even simpler than defeating a single human. Take any "danger statement". Whatever probability of "being true in general" it has, this probability is going to be 99.9% when you deal with AGI. If anyone can destroy us, it's AGI.

If there's no trace of "safety statements" anywhere, it's evidence that they won't suddenly appear at the right moment. Even if they do, people may simply fail to implement them. The same is true for the "intelligence isn't a superpower" counterargument: this argument never helped anyone to defend against smarter/more knowledgeable/simply malicious people. It's evidence that this argument won't suddenly become helpful when we deal with the smartest being, one who has more knowledge than all of humanity combined. "Danger statements" won't suddenly become false for no reason.

My method dissolves (not 100%) the reference class problem, disputes about analogies and the inside/outside view dilemma, because you don't have to agree that "nuclear disasters" and "AI disasters" are in the same reference class in order to gain information about one thing by considering another. You don't even have to agree there's any specific analogy. Concepts such as "classes", "analogies" and "arguments" are just containers of information. Think about the information, not its containers. Containers are just distractions, red herrings. Notice that the method is different from both Robin's and Eliezer's approaches.

Simple example

I want to show how to analyze an argument with my method. I'm going to use the argument of Said Achmiz:

Alice: It would be good if we could change [thing X].

Bob: Ah, but if we changed X, then problems A, B, and C would ensue! Therefore, it would not be good if we could change X.

Bob is confusing the desirability of the change with the prudence of the change. Alice isn't necessarily saying that we should make the change she's proposing. She's saying it would be good if we could do so. But Bob immediately jumps to examining what problems would ensue if we changed X, decides that changing X would be imprudent, and concludes from this that it would also be undesirable.

"It would be good if we could change [thing X]" is just a statement. It can contain true information even if changing X is logically impossible.

Introducing a causal interpretation ("if X, then A, B, C") too early may be wrong.

However, introducing overly specific distinctions (such as "desirability/prudence") between properties of the statement may be wrong too. It may lead to confusion if there are different types of "desirability".

Math example

In mathematics, the pigeonhole principle states that if n items are put into m containers, with n > m, then at least one container must contain more than one item.
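To make the principle concrete, here is a minimal sketch that checks it by brute force for small cases. The function names `max_load` and `pigeonhole_holds` are hypothetical, chosen only for this illustration, and exhaustive enumeration over a few small values is a sanity check, not a proof.

```python
from itertools import product


def max_load(assignment, m):
    """Return the largest number of items that landed in any one container.

    `assignment[i]` is the index (0..m-1) of the container holding item i.
    """
    counts = [0] * m
    for container in assignment:
        counts[container] += 1
    return max(counts)


def pigeonhole_holds(n, m):
    """Check every possible way of putting n items into m containers.

    Returns True if each assignment leaves some container with more
    than one item.
    """
    return all(max_load(a, m) > 1 for a in product(range(m), repeat=n))


# Exhaustively check small cases with n > m (illustrative bounds only).
for m in range(1, 5):
    for n in range(m + 1, 7):
        assert pigeonhole_holds(n, m)
```

When n is not greater than m the check fails, as expected: with two items and two containers, each item can get its own container.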

The pigeonhole principle is a good example of what a "statement" can be:

- Its essence can't be captured formally. From the formal point of view the principle is just a meaningless tautology. What's so special about it?

- It doesn't (directly) describe reality. Nobody has tried to determine the usefulness of the principle via a rigorous statistical analysis.

- It makes sense anyway.

You could say the pigeonhole principle describes a fact about human psychology. My method agrees with this, but also takes the principle at face value, as if the principle were a metaphysical statement. You may consider it a Mind Projection Fallacy, but I think it's an interesting approach to explore.

2. Connecting ideas

This part of the post is about connecting ideas to each other (or reality) using the method of statements.

Counterintuitive consequences of the method:

- Completely absurd ideas may have a strong connection to reality.

- Ideas which are not similar at all may have a very strong connection.

Immanuel Kant and Lying

Kant said you can't lie even to a would-be murderer (D) with an axe who seeks your friend, because by lying you fail to respect D's autonomy and fail to treat D as an end in themselves. Moreover, if your friend dies anyway as an indirect result of your lie, you share the responsibility for this outcome. (What exactly "Kant said" may be debatable, but let's assume it's 100% clear.) (on Wikipedia)

With usual thinking one may react like this:

- This is absurd! I should ditch Kant's entire philosophy. Those deontological theories don't make much sense. Of course, I could try to fix Kant's philosophy, but why would I bother in the first place? I have better theories to study.

With the method of statements you may react like this:

- Let's say the conclusion is really bad. However, even if we don't try to fix it, there's nothing weird or absurd about the argument itself. Kant's argument is just an ethical statement (fact) about reality: sometimes we decide whether other people are rational agents (in the Kantian sense) based on our weak opinions, and we exclude other people from the opportunity to make decisions based on those same weak opinions. This is both highly undesirable and highly problematic. So the bad conclusion can't imply that the categorical imperative is an unimportant concept for humans. Having agency/autonomy is important for everyone. After noticing this we may see that the conclusion is not so absurd in some more abstract cases. And the logic about responsibility is valid: when you try to make decisions for other people, you may bear the responsibility for the accidental outcome.

- And it's wrong to connect "Kantian statements" to deontology and nothing more. They talk about things relevant to all ethics. They express human values. And they describe a process of creating a moral law. Even if what Kant actually described isn't a "true" process which can yield different results, "Kantian statements" still give us information about a true process. Even if this process is hypothetical and doesn't happen in reality. Negative information is information too.

The method of statements dissolves a lot of things by separating Kant's conclusion (1), Kant's argument (2), and the information Kant's argument conveys about ethics in general (3).

The categorical imperative, including its absurd applications, is just a bunch of statements that convey some information about ethics. Regardless of what ethical theory is true. It doesn't make much sense to disregard the information because you have a better theory. "Kantian statements" may survive even if another theory completely encapsulates all of their truths.

Categorical Imperative and Logical Decision Theory

I think it would be wrong to say that the Categorical Imperative (CT) and Logical Decision Theory aren't similar. The Categorical Imperative uses universalization, logical contradictions and potentially backward causation. In some respects CT is more similar to LDT than Superrationality. Some contents of "Kantian statements" and "logical statements" are always equivalent.

Moreover, we may be simply unable to say "CT is not similar to LDT" anymore. We found "similarity statements" which translate "Kantian statements" into "logical statements" and vice-versa. Results of the translation may be bad or impossible, but those results may still convey information or serve as a bridge for future information. We can deny the similarity, but we can't deny the "similarity statements" because it would mean ignoring future evidence. So, before evaluating "similarity" we should evaluate "statements about similarity".

However, people don't seem to reject the connection:

- "Interpreting the Categorical Imperative" by Brian Tomasik

The ideas in this post seem fairly well known on LessWrong. As an example of a similar idea, see "UDT agents as deontologists". In 2006, Gary Drescher mentioned the connection between the categorical imperative and non-causal decision theory in chapter 7.2.1 of Good and Real.

- "You Kant Dismiss Universalizability" by Scott Alexander

Grounding ethics

Here's a mix of some opinions about morality:

If you need God, paradise or other magical stuff to justify morality, you're a psychopath. Why isn't empathy enough for you? And have you heard about the Euthyphro dilemma?

I think that in general this response is counterproductive for analyzing ethics.

"God justifies morality" is just a statement. Before evaluating the truth of the statement we need to evaluate the truth of the information about morality it conveys:

- People need hope.

- Ethics may be defined from the point of view of an "ideal observer" (ideal observer theory) or a perfect state of the world (Kingdom of Ends).

- People often treat themselves as not having the perfect morality. Assuming there could be the perfect morality.

- If one applied "empathy" to every suffering person, one would become insane.

"God justifies morality" may be a perfect way to encapsulate all those truths even if God doesn't exist. Even if God and paradise are logically impossible.

So, starting with analyzing the statement in terms of causal information specifically ("is good caused by God? is God caused by good? what caused God to cause good? what caused us to align with God?") is a mistake. Unless it's forced by specific context.

3. Looking for information

I think we can dissolve a lot of things by adopting this principle:

Before looking for certain information you should justify looking for this information.

For example, you can't look for causal information in a statement before you have justified looking for causal information specifically.

This principle is implicit in the method of statements.

Vitalism

He describes in detail the circulatory, lymphatic, respiratory, digestive, endocrine, nervous, and sensory systems in a wide variety of animals but explains that the presence of a soul makes each organism an indivisible whole. He claimed that the behaviour of light and sound waves showed that living organisms possessed a life-energy for which physical laws could never fully account.

https://en.wikipedia.org/wiki/Vitalism#19th_century

There was an idea that living beings are something really special. Non-reducible. Beyond physical laws.

In terms of causal information, this is the worst idea ever. However, we don't have to start by looking for causal information.

In terms of my method, vitalism is just a bunch of statements that contain information. And they contain a lot of true information. To see this you just need to split big statements of vitalism into smaller ones:

"Science is too weak to describe life."

- Science of the time lacked a lot of conceptual breakthroughs to describe living beings. Life wasn't explained by a smart application of already existing knowledge and concepts. It required coming up with new stuff. Ironically, life did turn out to be literally "beyond" the (classical) laws of physics, in the Quantum Realm.

- Science turned out to be able to describe life, but the description turned out to be way more complex than it could be.

"Life can't be created from non-living matter."

- Creation of life requires complicated large molecules. It's hard to find life popping into existence out of nowhere (abiogenesis). Scientists are still figuring it out.

- Creation of life (from non-living matter) turned out to be possible, but way more complex than it could be.

"Life has a special life-force."

- There's a lot of distinctions between living beings and non-living matter. Just not on the level of fundamental laws of physics. For example, (some) living beings can do cognition. And cognition turned out to be more complicated and more metaphysical than it could be: behaviorism lost to cognitive revolution. Moreover, the fundamental element of life ("hereditary molecule") turned out to be more low-level than it could be. Life is closer to the fundamental level of nature than it could be.

- The concept of life-force is wrong on one level of reality, but true on other levels. Check out What Is Life? by Erwin Schrödinger for a perspective.

I think an algorithm that outputs "vitalism is super stupid" is more likely to output a wrong statement or make a wrong decision later.

I think it's not a zero-sum game between reductionism and vitalism. We can develop those ideas together.

Informative wrong answers

The method of statements allows one to express interesting types of opinions, consisting of multiple parts. (Because even a wrong idea may be informative.)

Examples of such opinions:

- Torture or Dust Specks? I believe "we should choose torture" is true.

- But "we should choose dust specks" is the most informative answer: it conveys information about averaging, indexicality, preferences and social contracts, identity.

(This is not my opinion, I believe in "specks".)

Informativity doesn't determine truth, but affects it. In the case above it tilts me towards "specks".

- I believe "infinite life is good".

- But "infinite life is bad" is more informative at the moment: it conveys information about values and identity, society.

- But "infinite life is good" would convey way more information in the future if we learn enough new things.

(This is very similar to my opinion.)

- I believe the Orthogonality Thesis is true. Intelligence doesn't imply good morality or meaningful goals.

- But "Orthogonality Thesis is false" is the most informative possibility: it conveys information about ethics and intelligence and goals.

(This is my opinion.)

Similar examples can be made about free will, the hard problem of consciousness and "is AI art real art?"

I think discussing informativity could dissolve a lot of arguments about "Are you against progress?" or "Aren't you making the same mistake people made in the past?". Now there's a simple answer: "maybe I am, but there's a specific reason for it; and my reason may be important to you even if I'm wrong".

Note: in philosophy, G. E. Moore used the connection between informativity and truth to make a famous argument. I'm talking about the open-question argument.

4. (Not) Dissolving questions

As I said, taboo and my method may be in direct conflict, because they do slightly different things.

Taboo splits a label into membership tests. The method of statements splits an association into more associations. Taboo may dissolve a question as meaningless. My method may only replace a question with a more "statistically important" one (i.e. the one which comes up more often in your semantic network). This leads to a different treatment of reductionism and epistemology.

Some counterintuitive consequences of the method:

- "Meaningless" questions may be very informative.

- Logically impossible answers may be very informative.

Reductionism

Imagine disagreements about these three questions:

(1) Do you pick something up with your hand (hand pick) or with mere fingers/thumb/palm (FTP pick)? ("Hand vs. Fingers")

(2) If a tree falls in a forest and no one is around to hear it, does it make a sound? ("Taboo Your Words")

(3) Do you have free will? Do you cause your decisions or does Physics cause them? ("Thou Art Physics", "Timeless Control", more discussion)

With the taboo technique you focus on causality:

- (1) Do "hand pick" and "FTP pick" cause different things? No, because physics and reductionism. Are "hand pick" and "FTP pick" caused by different things? No. What causes us to ask this question in the first place? Some flawed/misused cognitive algorithm. Conclusion: the question is nonsense. If I explain how it comes up, I can move on. The same reasoning applies to (2) and (3).

...

With the method of statements you focus on "pure" information:

- (1) What information do the statements "I pick with my hand" (A) and "I pick with FTP" (B) give me? Something I can think of: they tell me there are different levels of models, there are different ways to combine parts of a thing (in an action), and statements about actions may depend on a different number of human concepts. I don't have to treat statements (A) and (B) as physical theories or as talking about a particular world or as existing in a particular epistemology.

This information is not trivial at all. It may become very important when reductionism is pushed to its limits, to the complete explanation of the Universe. At such a level competing reductionist theories may treat different things as fundamental. Deciding what's truly fundamental could be an important decision.

- (2) What information do the statements "tree makes a sound" and "tree doesn't make a sound" give me? Something I can think of: they give me information about the nature of causal chains in our world (our world is very big + causal effects may diffuse before reaching anybody), about testability and repeatability ("Is there the beginning of the Universe if nobody observed it?"), about counterfactuals ("Is there the beginning of the Universe if nobody could observe it?"), about correlations between reality and experience (if the world were very small, not hearing the falling tree could only be possible if some special event blocked the sound), about the anthropic principle (the observer may "affect" the probability of an event; see Nick Bostrom's thought experiments about probability-pumping), about the way causality creates knowledge (see Gettier problems).

I'm not awfully interested in all this information right now, but I see no sense in killing off the question. This topic may become more relevant for me later.

My conclusions:

- The questions (1) and (2) are not meaningless. They convey information.

- The answers to those questions are not equivalent. They have different "information loads". So, they are not meaningless either.

- Those questions and answers do convey information about cognitive algorithms (and their flaws). But we don't have to approach them in such a context.

- My opinion about free will (3) is the same. I agree that compatibilism/requiredism makes perfect sense. I think it's a beautiful idea. But I think the question about libertarian free will does make sense too and the answers are not equivalent. Even if that question is impossible to answer. Even if libertarian free will is logically impossible.

- I'm not sure libertarian free will is really logically impossible. I can't be sure the argument for impossibility doesn't contain a logical, epistemological or categorical error. Most importantly, I see no gain in committing to impossibility so early on. I gain nothing and lose something.

I think it's very dangerous to internalize ideas as fundamentally meaningless. It's like losing some part of your awareness. Being slightly confused is not a high price for thinking like a human.

Babble, associations, brainstorming

The previous example might have given the impression that my method is about babble, associations or brainstorming.

However, there's an important difference:

- "Associations" in my method are not really associations. They are more like logical implications, deductions. And all associations convey information about the object-level truth.

- Any association in my method is evidence of a statement being true or false. However, statements are not theories in a specific epistemology.

My method dissolves the difference between Simulacra levels 1 and 3 [LW · GW]. (doesn't ignore the difference, but dissolves it)

Simply put, my method treats your thinking as math [LW · GW]. Even when you don't think about math. One of the more ambitious goals of my method is to dissolve the boundary between mathematical thinking and any other type of thinking.

5. The Hard Problem of Consciousness

Here is the most controversial example. But it is really crucial to introduce this important idea which is going to be useful later:

- Each statement exists in its own "semantic universe", defines its own epistemology.

Remember:

- Taboo deconstructs statements. My method deconstructs associations with and connections between statements.

- In my method you don't need to cram statements into a particular epistemology. This is what it means for statements to "exist": they may exist outside of any particular epistemology.

- In my method you don't need to approach statements with some specific preconceived goal before judging them.

And as I heard [? · GW], "even Eliezer thinks we are not anywhere close to having solved the hard problem of consciousness". There is consensus about zombies, but not consensus about the solution.

Philosophical zombies, further facts

Zombies Redacted [LW · GW], Chalmers's response [LW(p) · GW(p)], Eliezer's response [LW(p) · GW(p)]

https://en.wikipedia.org/wiki/Philosophical_zombie

Further facts and The Hard Problem of Consciousness [? · GW]

Some people consider the idea of philosophical zombies inherently silly and absurd: logically impossible, or contrary to Occam's razor and the rules of probability.

From the point of view of the method of statements, the difference between a conscious being and a zombie is just a statement. And "qualia is beyond physics" is just a statement too. Those statements just exist, there's nothing silly or absurd about them. In a way they are equivalent to our direct experience of qualia. Trying to apply Occam's razor here may be a type error. Those statements shouldn't be theories. And there's no sense in trying to "kill off" those statements either.

Are those statements true/meaningful if they are not theories? I think yes. Why? The main reason is simply because they might be. It's 50/50 and any minor consideration may tilt me towards believing that they are true/meaningful. This consideration may be sentimental, philosophical or practical. For example:

My reasons

- As a conscious being I care about qualia. Potentially care "unfairly". So there's nothing surprising that I may treat explanations of qualia "unfairly". Nobody should be angry about that.

- I don't want to miss the opportunity to challenge and investigate the concept of "explanation". Why miss the unique fun?

- The statements about zombies and qualia are connected to other statements (which are true) which describe differences between types of "explanations". So I believe they are an "objective" challenge to the concept of explanation.

- You don't have to treat qualia as a "special" unexplained phenomenon. Physics doesn't explain/reduce movement (change), time, space, matter, existence and math. (By the way, not all those statements should be true in order to be meaningful and informative.) Trying to explain every question never was a consistent or meaningful goal.

- Physicalism has some self-contradictory vibes. There are two types of statements: "the ultimate explanation of the Universe exists" and "the ultimate explanation exists and it answers any question you want, physical or not". I'm confused as to why a physicist would choose the second statement, which is not very physical.

- The zombie/qualia statements "resonate" with the true statement that subjective experience is more fundamental than any other knowledge, including mathematical truths. In some sense (e.g. under radical doubt) it's a blatant tautology that it can't be explained.

- My latest thought: maybe those zombie/qualia statements hint at two important types of explanations, "open" and "closed". Physical explanations are "open": they show you how something might work, but don't exclude (logically) any other possibility. "Closed" explanations (if they exist) show you how something works and exclude any other logical possibility. "Closed" explanations or their approximations may be important for practical topics, e.g. for modeling qualia or metaethics or metaepistemology. Anything "meta-". "Closed" explanations are also similar to Logical Decision Theory: LDT tries to justify a choice by logically excluding any alternative. See also G. E. Moore's open-question argument and Kant's question "How are synthetic a priori judgements possible?"

- "Closed explanations" may justify physicalism. But, ironically, we are less likely to come up with the idea if we start with believing in physicalism. Moreover, "closed explanations" may not count as reductionism anymore. Reductionism doesn't need defending and physicalism doesn't need defending through (simple) reductionism.

- Philosophical zombies inspire "logical zombies" [LW · GW] by Benya.

So, my opinion is that the truth of the "zombie argument" is a convention of sorts, but you still may argue for the correct answer. And I argue pro zombies. I think that in the present day this argument can have practical consequences and I believe the world where people make fun of philosophers is worse. A lot of babies get thrown out with the bath water.

My position

My position on the Hard Problem: I'm open to the possibility of it being solved in the future, if the solution opens a new enough way to conceptualize reality or changes enough of our concepts. However, I think fully appreciating the impossibility of a solution is very important.

"The Hard Problem is impossible to solve" is just a statement and I think it's a very important/informative one.

Eliezer's argument

I think Eliezer's argument [LW · GW] is very cool at what it does, even though I don't agree. It's even similar to the method of statements.

Humanity has accumulated some broad experience with what correct theories of the world look like. This is not what a correct theory looks like.

For me this is wrong/irrelevant, because my method wouldn't start and finish with comparing statements about qualia to statements of known scientific theories. At least for me it wouldn't be rational to follow this analyzing algorithm. In my experience it's not a path that brings good results.

I would start by asking "what information do statements about qualia give me?" (see above).

That's not epicycles. That's, "Planetary motions follow these epicycles—but epicycles don't actually do anything—there's something else that makes the planets move the same way the epicycles say they should, which I haven't been able to explain—and by the way, I would say this even if there weren't any epicycles."

For me this statement doesn't sound ugly or absurd. It reminds me of Gettier problems and philosophy of mathematics. We would likely have to deal with both topics if we were to explain qualia and connect it to algorithms.

Eliezer described similar statements in "Math is Subjunctively Objective" [LW · GW] and "The Modesty Argument" [LW · GW] (sleep/awake argument) and "Morality as Fixed Computation" [LW · GW]. "You know (X) is true when (X) is true even if you can't know it". Truths beyond causality are surprisingly common.

According to Chalmers, the causally closed system of Chalmers's internal narrative is (mysteriously) malfunctioning in a way that, not by necessity, but just in our universe, miraculously happens to be correct. Furthermore, the internal narrative asserts "the internal narrative is mysteriously malfunctioning, but miraculously happens to be correctly echoing the justified thoughts of the epiphenomenal inner core", and again, in our universe, miraculously happens to be correct.

This statement may simply be an interesting way to describe the strangeness of qualia: "seems like a glitch, but could be synchronized with reality" or "it's absurd to think about it, but it may be true". It's especially interesting because of recursion and acausal synchronization.

Gottfried Leibniz's Monadology dealt with the topic of acausal synchronization. (Michael Vassar noticed [LW(p) · GW(p)] the connection too.) Acausal synchronization is also a part of Logical Decision Theory.

My conclusions:

- The "absurd" statement Eliezer describes is meaningful to me.

- It being true or false are not equivalent outcomes to me. They have different "information loads". If the statement is true, it means very interesting consequences for logic and knowledge.

- I don't agree that this statement being false makes more sense.

However, for me this is not a zero-sum game between "zombieism" and physicalism and other ideas. We may develop different ideas together, like vitalism and reductionism.

6.1 About the method

Here I want to connect my method to other techniques and summarize a couple of ideas. As already mentioned [LW(p) · GW(p)], the method can be considered a type of rationalist taboo and many other techniques.

My method is "information first, epistemology second". If I see a unique theory, I won't treat it like other theories (unless it's forced by specific context). Because I don't want to lose information. So, my method does lead to certain compartmentalization.

Steelmanning

This may be less obvious, but you can consider the method of statements as a special type of steelmanning [? · GW].

With my method an idea may never be stupid, only equivalent to another (smart) idea. So, everything gets implicitly steelmanned.

Classic steelmanning is "inward": it fixes a broken car. My steelmanning is "outward": it changes the world so the broken car can move.

Anti-induction, fractals and black boxes

In my method you can split any statement into two components: "how true it is" and "how much information it contains". (Truth + information load.) You can view a statement as an atomic proposition or as a cloud of connections to other statements. Statements are also like fractals: any statement contains all other statements.

The truth component is inductive ("what worked before will work again") and the information component is anti-inductive ("what worked before will not work again"). It's like surprisal: a highly unique outcome is very surprising. I gave examples here [LW · GW].
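To make the surprisal comparison concrete, here is a minimal sketch of the standard information-theoretic definition (surprisal in bits is the negative base-2 logarithm of an outcome's probability). The function name and the two example probabilities are illustrative assumptions, not part of the method itself.

```python
import math


def surprisal_bits(probability: float) -> float:
    """Surprisal (self-information) of an outcome in bits: -log2(p)."""
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(probability)


# Illustrative probabilities (assumptions, not data from the post).
common = surprisal_bits(0.5)     # an outcome seen half the time
rare = surprisal_bits(1 / 1024)  # a highly unique outcome

print(common, rare)
```

The rarer outcome carries more bits, which is the sense in which the information component rewards what has not worked (or appeared) before.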

Also, in my method you can view statements as black boxes. A statement outputs true information with a certain probability. Even if the statement is 100% known to be false. Equivalent view: statements reconstruct each other with a certain probability. You can even compare statements to people (they are black boxes too), but I won't go into it here. If you want, you can read about K-types vs T-types [LW · GW] by strawberry calm.

Two types of rationality

You can consider the method of statements as a counterpart of rationality.

We may split rationality into two parts, two versions:

- Causal rationality. Hypotheses are atomic statements about the world. You focus on causal explanations, causal models. You ask "WHY does this happen?". You want to describe a specific reality in terms of outcomes. To find what's true "right now". You live in an inductive world.

- Descriptive rationality. Hypotheses are fuzzy. You focus on acausal models, on patterns and analogies. You ask "HOW does this happen?". You want to describe all possible (and impossible) realities in terms of each other. To find what's true "in the future". You live in an anti-inductive world.

My method focuses on the latter topics.

LessWrong-rationality focuses on the former topics. Even though it's not black and white. For example, Logical Decision Theory (LW idea) focuses on the latter topics too. That's why I love it so much.

Motivated cognition

You can consider my method as a type of motivated cognition.

There are two important questions in the method of statements, they describe the shape of a typical argument (in my method):

- Does the statement (A) contain any true/interesting information?

- If we compare "A is false" and "A is true", which statement contains more true/interesting information?

(I used it here [LW(p) · GW(p)] and here [LW · GW].)

But the amount of information depends on your interests. So, you may say that the answer is determined by "motivated cognition" (the one depending on your interests).

Paths of thinking

My method suggests this way of modeling disagreements:

I disagree with you, because I feel that it would not be rational for me to repeat your line of reasoning.

I would analyze the same information in different order, with different priorities.

Your argument may hold for a perfect reasoner, but I'm not one and I just can't follow it. However, our conversation doesn't have to stop here. We can compare our "paths of thinking".

It should be combined with my method. (I used it here [LW · GW].)

In this model disagreement and confusion happen when two people approach the same thing from different enough "starting points". They may think they established the common ground, but they still analyze the topic "from different directions". And don't really know how to line up and compare opinions. My model dissolves the underconfidence (in your reasoning) you feel when you feel shy that your arguments wouldn't hold for a perfect reasoner (which you never were and never will be). Just go on and Say Wrong Things [LW · GW].

This is also a charitable explanation of Logical Rudeness [LW · GW] and non-true rejections [LW · GW].

Base rates

You can draw an analogy between my method and avoiding the "base rate fallacy".

Base rate fallacy teaches us: before evaluating the evidence of (B) you should evaluate your knowledge of (B). I.e. the a priori information you had before the evidence.
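The role of the a priori information can be shown with a small worked example of Bayes' rule. This is only a sketch: the function name and all the numbers are made up for illustration, and the only thing that differs between the two calls is the base rate.

```python
def posterior(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Bayes' rule: probability of (B) after seeing the evidence."""
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / denominator


# Same evidence strength, different base rates (all numbers are illustrative).
rare_case = posterior(prior=0.01, p_evidence_if_true=0.9, p_evidence_if_false=0.1)
common_case = posterior(prior=0.5, p_evidence_if_true=0.9, p_evidence_if_false=0.1)

print(round(rare_case, 3), round(common_case, 3))
```

With a 1% base rate the same evidence leaves (B) at roughly 8%, while with a 50% base rate it reaches 90%. Ignoring the prior means ignoring most of what decides the answer.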

My method teaches somewhat similar things:

- Before evaluating the truth of a statement, evaluate the truth of the information in the statement.

- Before evaluating the similarity between A and B, evaluate the "statement about the similarity" between A and B.

- Before seeking information in a statement, justify seeking this particular information.

- Before evaluating a statement, evaluate an "a priori" statement.

And many more of the same kind.

6.2 Friendly AI

I think the method of statements could be used to create a FAI algorithm (disclaimer: I have no idea how exactly), for two reasons.

First:

- The method may describe statements which exist beyond any epistemology. Like ethical facts [LW · GW].

Second:

- In the method you kind of "believe in everything". Because any statement contains all other statements. If you have an idea, you somewhat believe in it. Because it's somewhat equivalent to all other ideas. By the way, "somewhat believe" doesn't mean "assign some probability of it being true", not exactly. (The latter wouldn't be safe enough.) See here [LW · GW].

- So, you have a "system", a circle of beliefs. This circle contains the correct ethical theory (if you know no less than humans know) among other ideas.

- But you don't even have to seek this "ultimate ethical theory", it already does useful work merely by existing in the circle of your beliefs: it prevents you from killing everyone for a bunch of paperclips. Because such action would irreversibly lose information.

- In the method "knowing an idea" is somewhat equivalent to "believing in the idea" and even "doing what it says".

- Therefore, you can't be aware of human ethics and turn humans into paperclips. It would be like believing in a Moorean statement. (This post [LW · GW] inspired the connection [LW(p) · GW(p)].)

I know, these are not instructions for building a FAI. But I believe the statements above contain useful information for FAI theory.

6.3 Abstraction types

I want to discuss some types of induction in the context of predicting the real world. You can also interpret it as a classification of "abstractions" and concept types in general:

1. The simplest induction is about finding a repeating real thing.

2. Mathematical induction in the real world is about finding a metaphysical property of a real thing. Such as "when you add apples, you keep getting more of them".

3. "Passive" induction is about creating an abstraction in the real world. Such as "reference class" or "trend". This abstraction repeats something. (Robin Hanson uses that.)

4. "Active" induction is a mix between 3 and 2: we create an approximate causal model of something. Our conclusions are abstraction-based but also metaphysically "bulletproof". (Eliezer Yudkowsky uses [LW · GW] that.)

5. My method is about finding metaphysical "statements". Those statements describe repeating metaphysical things. But the statements don't happen in the real world. And they don't create an abstraction in the real world either. However, they can "connect" to real things (and other abstractions). Paradoxically, they don't model the real world repetition at all even though they describe repetition. Example. [LW · GW] 5 can describe something more abstract than a reference class, but consisting of more specific things than a reference class.

So, maybe you can call the method "inductionless" induction. If you have A LOT of knowledge, you can turn 5 into Sherlock Holmes' deduction: you can make super strong guesses based on details without introducing explicit abstractions. We also can compare 5 to polymorphism in programming, an abstraction which refers to many specific things of different types.
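For readers unfamiliar with the programming term, here is a minimal sketch of polymorphism: one abstraction (`Detail`) that refers to many specific things of different types. The class names and the returned strings are hypothetical, chosen only to echo the Sherlock Holmes comparison.

```python
from abc import ABC, abstractmethod
from typing import List


class Detail(ABC):
    """An abstraction that refers to many specific things of different types."""

    @abstractmethod
    def hint(self) -> str:
        """Return what this particular detail suggests."""


class MudOnBoots(Detail):  # hypothetical example type
    def hint(self) -> str:
        return "recently walked outside the city"


class TannedWrists(Detail):  # hypothetical example type
    def hint(self) -> str:
        return "recently returned from a sunny place"


def read_details(details: List[Detail]) -> List[str]:
    # The caller never checks the concrete type; each detail answers for itself.
    return [detail.hint() for detail in details]


hints = read_details([MudOnBoots(), TannedWrists()])
print(hints)
```

The abstraction itself has no content of its own (it cannot even be instantiated); all the content lives in the specific things it refers to.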

A greater point: 5 connects both sides of the spectrum - very metaphysical and very real concepts. This may be significant.

7. Research ideas

You can apply the method to analyzing information. You can split the information about something into statements of a certain type and study the properties of those statements.

Here are my personal ideas. I hope with the method they are easier to understand.

AI Alignment

In my opinion we don't think enough about properties of values. If any comprehensible properties exist, they could make value learning [? · GW] easier or even inspire a general solution to Alignment. So, let's investigate some statements about values:

...

If you want to describe human values, you can use three fundamental types of statements (and mixes between the types). Maybe there are more types, but I know only those three:

- Statements about specific states of the world, specific actions. (Atomic statements)

- Statements about values. (Value statements)

- Statements about general properties of systems and tasks. (X statements) Because you can describe values of humanity as a system and "helping humans" as a task.

Any of those types can describe unaligned values. So, any type of those statements still needs to be "charged" with values of humanity.

We should try to exploit all information we can get out of those statement types. I believe X statements have the best properties, but their existence is almost entirely ignored in the Alignment field.

Continuation of the argument is here [LW · GW] (it's too long to include in this post).

Image classification

I think we may distinguish four types of statements in visual perception: absolute, causal, situational and correlational. Let's give examples.

- "A dog is unlikely to look like a cat." This follows directly from the "definition" of a dog. So, this is an absolute statement.

- "Dogs are unlikely to stand on top of one another." This doesn't follow from the "definition" of a dog. This follows from our (causal) model of the world, relates to a causal rule/mechanic: it's hard for a dog to stand on top of another dog, it's not a stable situation. So, this is a causal statement.

- "A physical place is unlikely to be fully filled with dogs." This doesn't follow from the "definition" of a dog. And this doesn't relate to a causal rule/mechanic. Rather, it describes qualitative statistics of the "dog" concept, a statistical shape of the concept. A connection between the "dog" concept and many other concepts and basic properties of the data. Dogs are not sheep or birds or insects, they don't make up hordes. This is a situational statement.

- "If you see a dog, you're likely enough to see a human with a leash nearby." This statement is similar to the previous one. But it doesn't connect the "dog" concept to general properties of images or to many other concepts. It's much more specific. So, this is a correlational statement.

Now, consider this:

- Situational statements are not equal to "human-like concepts". However, many of them should be more or less interpretable by humans. Because many of them are simple abstract facts.

- It's likely that Machine Learning methods don't learn situational statements as well as they could, as sharply as they could. Otherwise we would be able to extract many of them. And Machine Learning methods don't have the incentive to learn such statements. They may opt for learning more low-hanging correlational statements and waste resources remembering tons of specific information (e.g. DALL-E which remembers tons of specific textures).

- However, Machine Learning methods should have all the means to learn situational statements. Learning those statements shouldn't require specific data. And it doesn't require causal reasoning or even much of symbolic reasoning.

- Learning/comparing situational statements is interesting enough. But we don't apply statistics/Machine Learning to try mining those statements.

- There should exist a method to force an ML model to focus on learning those statements. Potentially a recursive method which forces a model to seek more and more situational statements.

If this is true it means that there are important ideas missed in Machine Learning. I'm not an ML expert, but statements about learning don't have to be formalized in ML terms, ML doesn't have a monopoly on the topic of learning. However, I'm sorry if my statements about ML specifically are wrong.

I tried to give an example of the "recursive method" (to learn situational statements) here: "Can you force a neural network to keep generalizing?" [LW · GW].

I know, the thing described in the post 90% doesn't work or doesn't specify enough. But it's just an illustration. This learning routine is beyond ML, a human could do the same routine.

Situational values/concepts

By the way, situational statements (described above) can be used to analyze definitions of human values and concepts.

For example, you can imagine a statement like this:

I consider Marcel Duchamp's Fountain to be real art IFF at least 80% of art is not like this. E.g. if 50% of art were like this I would stop considering it art.

We may determine if something is or isn't a member of the "Art set" by defining properties of the Art set itself. Not properties of all members; properties of the set itself. This is important for analyzing sets where members don't have enough common properties: see Family resemblance by Ludwig Wittgenstein.

I think it's a new way to combine uncertainty and logic. (We already have probability and fuzzy logic. This is the third way.)
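One way to read the Fountain statement is as a membership test that looks at a property of the whole set rather than at properties of the candidate. The sketch below assumes a deliberately crude model: every work has a single "kind" label, and the 80% threshold comes from the example statement. The function name, the labels and the two toy art worlds are illustrative assumptions.

```python
from typing import Sequence


def counts_as_art(kind: str, art_world: Sequence[str], min_share_unlike: float = 0.8) -> bool:
    """Situational rule: accept `kind` only if enough of the art world is NOT like it."""
    if not art_world:
        raise ValueError("the art world must contain at least one work")
    unlike = sum(1 for other in art_world if other != kind)
    return unlike / len(art_world) >= min_share_unlike


# Two made-up art worlds, described only by the kind of each work.
mostly_paintings = ["painting"] * 9 + ["readymade"]
half_readymades = ["painting"] * 5 + ["readymade"] * 5

print(counts_as_art("readymade", mostly_paintings))  # 90% of art is unlike it
print(counts_as_art("readymade", half_readymades))   # only 50% of art is unlike it
```

The same object gets a different verdict in the two worlds even though nothing about the object changed. Only the shape of the set did.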

Here's another possible situational statement:

We should be able to restrict 70% of the freedom of a single individual to increase the overall freedom of society, but not more. Because the freedom of society should be a proxy for the freedom of the individuals. If individuals are not safe from losing 100% of their freedom, the concept starts to contradict itself and eat itself.

This is a combination between deontological and consequentialist ethics. I think it's important to investigate for Alignment and whatnot.

This is also my reaction to the Torture vs. Dust Specks [? · GW] thought experiment: the concept of "the greater good" loses meaning when it goes so hard against any individual good.

By the way, any statement in my method is a "situational statement". Because any truth in my method depends on presence/absence of other truths.

8. Society

Here are two examples of applying the method to discussions about policies. I think those examples are necessary to show the scope of the method.

Bodily Autonomy

This is a political example, but I won't argue or get into details.

Consider those statements:

- Bodily autonomy (BA) is very important.

- (BA) is an unconditional right.

- (BA) is more fundamental than the property rights. If you don't own your body, you own nothing.

- "My body, my choice".

- (BA) is an argument for both X and Y.

- (BA) is an argument for X, but not for Y.

- X is fundamentally different from Y.

All those statements may contain true information, even if most of them are false. And different combinations of those statements may give even more true information.

It's easy to miss all the true bits if instead of asking "What information does this statement tell me?" you focus first on the question "Is this statement true or false?". You may end up choosing a wrong causal/inferential model of the argument. I think this is what happened in the post "Limits of Bodily Autonomy" [LW · GW] and its discussion. I think people there haven't extracted all the true information from the arguments.

AI art, AI-generated content

"Is AI art real art?" I don't think this question should be dissolved.

But I think we also have to consider "a priori" statements before it: (base rates [LW · GW])

- Do you want to live in a culture with other people?

- How many parts of your life do you want to be "generated"?

- How much control over content do you want?

- How much control over the cultural value of the content do you want?

- How much control over the image of other people do you want?

- Do you care about people's ability to communicate (uncomfortable) ideas through the force of culture?

- Do you care about minds having ("safe") mediums of expression?

- Can you draw the line in content generation? Can you make sure the line is a solid one?

You may prefer AI art and AI dialogue over human counterparts, but reject AI content anyway: e.g. "I don't care about human art or conversations much, but humans have only those two mediums of expression and connection. I'm not OK with making 90%-100% of it unreliable and polluted with intentionless noise. I don't want my mind to be screened off from reality". A priori considerations may end up trumping the strongest specific preferences. Even a society where everyone prefers AI content could end up rejecting it.

My post about this: "Content generation. Where do we draw the line?" [LW · GW].

9.1 Complexity and induction

Experiences and associations can be "statements" too. Theoretically, you can apply the method to your smallest thoughts. That would be the strongest possible usage of my method. The theoretical limit of the method's applicability.

And the most informative possible type of statement is a "meta-statement": a statement which predicts the results of applying the method.

Meta-statements connect complexity and induction. "Complexity" because my method increases complexity of connections between statements. "Induction" because meta-statements predict this change in complexity.

Why there is a connection

Statements are fractals [LW · GW]. A statement can be "applied"/connected to itself (or another statement). This increases the complexity of the statement.

Meta-statements control how much complexity you can create. Or how much information you can get for increasing/decreasing complexity. "Meta-statements connect complexity and induction".

In reasoning

Complexity and induction in "informal" areas:

- Heuristics of your attention mechanism are examples of meta-statements. Because attention controls "semantic complexity" of experiences. And heuristics predict how it should be controlled.

- Analogy-making is an example of meta-statements. Making an analogy means changing the complexity of experience. Drawing conclusions from an analogy means applying induction.

- Iterated Distillation and Amplification is about creating a meta-statement: predicting results of changes in complexity (after iterating the same function multiple times).

- Empathy and many other processes are about meta-statements. In empathy you "apply" yourself to another person, change the complexity of the model of another person, do induction.

In formal areas:

- Solomonoff Induction [? · GW] connects complexity and induction.

- In radical probabilism [LW · GW] you can do non-Bayesian updates. I would describe some of them like this: "some events, once they actually happen, can change the complexity of your reality". Kind of irreversible events. See non-rigid updates, fluid updates [LW · GW].

- Logical decision theory is indirectly related. But it doesn't deal with changes in complexity directly. It doesn't deal with questions like "Maybe I should behave like a simpler version of myself?" explicitly. However, you can interpret some of its conclusions like this. "Maybe society should vote as a single individual", "maybe I should behave as if the future hasn't happened yet", "maybe I should not distinguish reality and simulation" etc.

Scott Aaronson's essay describes how computational complexity could be used to define what "knowledge", "sentience" and "argumentation" are.

In definitions

Related to the idea of "situational values/concepts" [LW · GW] above.

Complexity can play a role in definitions. Imagine you have a function "find Art (X)", which checks a context in order to find Art there.

Mona Lisa is easy to distinguish from other objects in the context. So, let's say you need to apply "find Art (X)" only one time to detect Mona Lisa. Mona Lisa is Art in a simple sense.

Fountain is hard to distinguish from other objects in the context. Maybe you need to iterate the function many times to detect it: "find Art(find Art(find Art(X)))" (like DeepDream). Fountain is Art in a more complicated sense.

The difference in complexity may imply that "Fountain" is Art in a more specific context. Or that "Fountain" is a more rare type of Art. Any such implication is a meta-statement: a connection between complexity and induction. Maybe you can define Art as a meta-statement.
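The iteration idea can be sketched with a toy model. Everything here is an assumption made for illustration: each object in a context carries a made-up "art signal" between 0 and 1, one application of `find_art` doubles every signal (capped at 1.0), and an object counts as detected once its signal passes a threshold. The number of applications needed stands in for "how complicated" the sense of Art is.

```python
from typing import Dict, Optional

Context = Dict[str, float]  # object name -> made-up "art signal" in [0, 1]


def find_art(context: Context) -> Context:
    """One application: amplify every art signal, capped at 1.0."""
    return {name: min(1.0, signal * 2.0) for name, signal in context.items()}


def detection_depth(name: str, context: Context,
                    threshold: float = 0.9, max_depth: int = 10) -> Optional[int]:
    """How many nested applications of find_art are needed to detect `name`."""
    current = context
    for depth in range(1, max_depth + 1):
        current = find_art(current)
        if current[name] >= threshold:
            return depth
    return None  # never detected within max_depth applications


# Illustrative signals only; they are not measurements of anything.
gallery = {"Mona Lisa": 0.6, "Fountain": 0.15, "fire extinguisher": 0.0}

print(detection_depth("Mona Lisa", gallery))
print(detection_depth("Fountain", gallery))
print(detection_depth("fire extinguisher", gallery))
```

In this toy gallery the Mona Lisa is found after one application and Fountain after three, while the fire extinguisher is never found. A meta-statement would then be any prediction drawn from those depths, such as "deeper means rarer".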

Preferences inside out

Imagine you have a single value: "maximize the freedom of movement of living beings". Then you encounter a cow with some microorganisms on it. The cow constrains the movement of the microorganisms. Should you separate the cow from the microorganisms?

You may reason against it like this:

- The cow increases the macro-scale freedom of the microorganisms. (micro- and macro- level argument)

- The micro-scale freedom of the microorganisms is not constrained. (micro-level argument)

- Micro-organisms are not as important as macro-organisms. (macro-level argument)

- Cows need microorganisms to exist. So, the existence of macro-movement requires some constraints of the micro-movement. ("all levels" argument)

This reasoning treats the value as a function "freedom (X)" which can be applied on different levels of reality. In order to evaluate a situation you need to apply the function on all levels, add your bias and aggregate the outputs ("free"/"constrained") of the function. Such evaluation would be a meta-statement, because your bias predicts the complexity of evaluation.
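A rough sketch of that evaluation, under strong simplifying assumptions: a situation is reduced to one free/constrained flag per level, the bias is a weight per level, and aggregation is a weighted share of free levels. The level names, weights and the two situations are hypothetical stand-ins for the cow example.

```python
from typing import Dict

Situation = Dict[str, bool]  # level -> is movement free at this level?
Bias = Dict[str, float]      # level -> how much this level matters to you


def freedom(situation: Situation, level: str) -> bool:
    """Apply the single value at one level of reality."""
    return situation[level]


def evaluate(situation: Situation, bias: Bias) -> float:
    """Aggregate the per-level outputs into a score between 0 and 1."""
    total_weight = sum(bias.values())
    free_weight = sum(weight for level, weight in bias.items() if freedom(situation, level))
    return free_weight / total_weight


# Hypothetical bias: macro-level movement matters three times as much.
bias = {"micro": 1.0, "macro": 3.0}

cow_with_microbes = {"micro": True, "macro": True}  # microbes move freely and get carried around
separated = {"micro": True, "macro": False}         # macro-movement is lost

print(evaluate(cow_with_microbes, bias), evaluate(separated, bias))
```

Under these assumptions leaving the cow alone scores 1.0 and separating scores 0.25. The interesting part is not the numbers but that one function plus a bias replaces a list of separate values.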

I think this is interesting, because it's an analogy of preference utilitarianism. Preference utilitarianism assumes you can describe all ethics via aggregation of a single micro-value (called "preference" [? · GW]). The idea above assumes you can describe all ethics via aggregation of a single macro-value. You can actually say the model above is preference utilitarianism, just defined through a "macro-preference".

By the way, such reasoning allows one to think about values without calculating tradeoffs. I explored some thought experiments about this here [LW · GW].

"never stop living things" is a simplification. But it's a better simplification than a thousand different values of dubious meaning and origin between all of which we need to calculate tradeoffs (which are impossible to calculate and open to all kinds of weird exploitations). It's better than constantly splitting and atomizing your moral concepts in order to resolve any inconsequential (and meaningless) contradiction and inconsistency.

Bayesianism inside out

I think the same process of "making it macro" can be applied to probabilistic reasoning.

Bayesianism assumes you can describe reality in terms of micro atomic events with probabilities.

I think you can describe reality in terms of a single macro fuzzy event with a relationship between probability and complexity. For example, this fuzzy event may sound like "everything is going to be alright" (optimism). You treat this macro event as a function and model all other events by iterating it. Iterations increase complexity and decrease probability. At some point you may redefine the event to reset the complexity: you undergo a qualitative change of opinion. The connection between complexity and probability is a meta-statement. Always thinking about a single event is like doing Fourier analysis, trying to represent any function with sinusoids.

Bayesianism tries to be correct about everything. The idea above tries to be correct about the single most important event. In the limit the difference here is only semantic.

This idea also leads to motivated cognition [LW · GW] and the notion of two types of rationality [LW · GW].

When abstractions cancel out

Imagine that an explanation is like an equation with multiple terms. And terms are "abstractions".

If abstractions "cancel out", the explanation is easy to understand. Abstractions are implicit and don't have to be defined much.

If abstractions don't "cancel out", the explanation is hard to understand. Abstractions are explicit and have to be defined in detail.

"Macro thinking" described above has the property of abstractions cancelling out. This property is important for interpretability. See also [LW · GW].

Any statement is meta

In some sense every statement in my method is a "meta-statement".

You can "iterate" a statement to find more and more complex connections with other statements. How much information those connections contain depends on your interests (bias). Examples [LW(p) · GW(p)].

Pascal's Mugging: complexity + leverage penalty

From Pascal's Muggle: Infinitesimal Priors and Strong Evidence [LW · GW]:

As near as I can figure, the corresponding state of affairs to a complexity+leverage prior improbability would be a Tegmark Level IV multiverse in which each reality got an amount of magical-reality-fluid corresponding to the complexity of its program (1/2 to the power of its Kolmogorov complexity) and then this magical-reality-fluid had to be divided among all the causal elements within that universe - if you contain 3↑↑↑3 causal nodes, then each node can only get 1/3↑↑↑3 of the total realness of that universe. (As always, the term "magical reality fluid" reflects an attempt to demarcate a philosophical area where I feel quite confused, and try to use correspondingly blatantly wrong terminology so that I do not mistake my reasoning about my confusion for a solution.) This setup is not entirely implausible because the Born probabilities in our own universe look like they might behave like this sort of magical-reality-fluid - quantum amplitude flowing between configurations in a way that preserves the total amount of realness while dividing it between worlds - and perhaps every other part of the multiverse must necessarily work the same way for some reason. It seems worth noting that part of what's motivating this version of the 'territory' is that our sum over all real things, weighted by reality-fluid, can then converge. In other words, the reason why complexity+leverage works in decision theory is that the union of the two theories has a model in which the total multiverse contains an amount of reality-fluid that can sum to 1 rather than being infinite.

Reality-fluid divided between universes (by complexity) and nodes (by number) would be a meta-statement. Like the number of artworks divided between complexity classes. Like "interest" divided between statements and connections of statements. And Born probabilities are a meta-statement too.
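One way to write down the setup from the quoted passage, using notation that is assumed here rather than taken from the original post: let $K(U)$ be the Kolmogorov complexity of the program for universe $U$, and $N(U)$ the number of causal nodes in it. Then the reality-fluid $\mu(n)$ of a single node $n$ in $U$ would be

$$
\mu(n) = \frac{2^{-K(U)}}{N(U)}, \qquad \sum_{n \in U} \mu(n) = 2^{-K(U)}, \qquad \sum_{U} 2^{-K(U)} \le 1 .
$$

The first term is the division by complexity and then by leverage: in a universe with $3\uparrow\uparrow\uparrow 3$ nodes, each node gets $2^{-K(U)} / (3\uparrow\uparrow\uparrow 3)$. The last inequality is the Kraft inequality, which holds if programs are encoded prefix-free. That assumption is what lets the total amount of reality-fluid converge instead of being infinite.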

See also drnickbone's comment [LW(p) · GW(p)].

9.2 Phenomenal binding

Solving the binding problem (at least some part of it) is the limit of my method. Even if this limit can't be reached.

How are subjective experiences created, differentiated, combined? Subjective experience of reasoning (including formal reasoning) is a part of the problem too.

I want to share some of my ideas about this.

Naturalistic fallacy and open question argument

See naturalistic fallacy and open question argument.

If two properties refer to the same objects in the real world, it doesn't mean they are equivalent.

For example: imagine a world where all cubes are red and there are no other red things. "Cubeness" and "redness" are still not synonymous.

This creates a problem for explanations. To show that two properties are "truly" equivalent, you need to show why they are equivalent in all possible and impossible worlds. It's not enough to investigate the real world to say something like "goodness is equivalent to pleasure".

Iterated equivalence

(A continuation of this topic [LW · GW].)

Imagine a function "pleasure (X)". You can iterate it multiple times:

- Iterated one time: you have thoughts about pleasure, pleasant thoughts.

- Iterated two times: you experience sufficiently strong pleasure.

- Iterated three times: you experience bliss.

Imagine another function, "good (X)":

- Iterated one time: you realize that a sentient being can be hurt or not hurt.

- Iterated two times: you realize the "state space" of another being, many pleasurable and painful states they may end up in.

- Iterated three times: you realize dependencies between states of different beings.

By the way, you can also "meta-iterate" functions, iterate them in different directions. For example, take a look at another line of iteration of "pleasure (X)":

- Iterated one time: you experience one kind of pleasure.

- Iterated two times: you experience different kinds of pleasure.

- Iterated three times: different beings experience different kinds of pleasure.

Knowing those functions, you can say:

- When "pleasure (X)" and "good (X)" are iterated one time, they are equivalent.

- "Good (X)" and "pleasure (X)" are spatially close, they periodically bump into each other.

- You can meta-iterate "pleasure (X)" until it becomes equivalent to "good (X)".

If you explain equivalence between good and pleasure in terms of functions, there won't be an open question anymore.

The question is not if good and pleasure are equivalent, but "when" and "where" and "how much" they are.

Experiences as iterations

You can model experiences as iterations:

- You can model physical properties as iterations. For example, imagine a function "compress (X)" which slightly compresses an object. Elasticity of an object determines how many times you can iterate the function.

- You can model shapes as iterations. Imagine a function which makes an object more symmetric or less symmetric. You get different shapes by iterating the function a different number of times.

- The same way you can model movements as iterations.
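The first bullet can be sketched in code. This is a toy model under loud assumptions: an object is reduced to a single `size` number, `compress` shrinks it by a fixed fraction, and each object has a hypothetical `min_size` below which it cannot be compressed. None of these names or numbers come from the method itself.

```python
def compress(size, rate=0.9):
    """Slightly compress an object: shrink its size by a fixed fraction."""
    return size * rate

def count_iterations(size, min_size, rate=0.9):
    """Count how many times compress() can be applied before going below min_size."""
    iterations = 0
    while compress(size, rate) >= min_size:
        size = compress(size, rate)
        iterations += 1
    return iterations

# Hypothetical objects: the more elastic one tolerates more iterations.
rubber = count_iterations(size=1.0, min_size=0.5)
stone = count_iterations(size=1.0, min_size=0.99)
```

In this sketch "elasticity" is just the iteration count: the rubber-like object can be compressed several times, while the stone-like object cannot be compressed even once.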

You can make different versions of the same function for different objects, e.g. "compress (sand)" and "compress (stone)". Functions can fully or partially mix:

- For example, imagine compressing sand in your hand very hard. At some point you can't compress it anymore and it's simpler to feel that you're trying to compress a stone or stiff rubber. So, you either feel as if you're really touching a stone or just feel the similarity between a stone and compressed sand.

"Meta-functions" control when such mixing occurs.

Associations are logical facts

Remember looking at the sea. The "sea" has at least two properties: the color (blue) and the shape (wavy). Those properties are not logically connected. You could have a sea of green liquid. You could hack your perception, edit a photo, put on green glasses or hack the Matrix to get a "green sea".

However, the sea lets you iterate the function "Is it blue?" in a way no other object does, on a scale no other object does. We don't have a lot of other blue objects with the size and uniformity of the sea. So, the statement "the color of the sea is logically connected to its shape" can be true. Remember situational statements [LW · GW].

I think human thinking works by internalizing some such associations as logical facts. I guess the most important associations get internalized like this. Without it we wouldn't be able to properly experience identities of the people we know, for example.

See also the Bouba/kiki effect, the Mandela Effect and "When a white horse is not a horse" by Gongsun Long.

Arrangements of things are logical facts

We can take the argument further: "random" arrangements of things can be logical facts too.

For example, we could imagine a human face with the nose on its forehead. But the position of the nose is internalized as a logical fact for us. If the nose were somewhere else, iteration of the function "Is it the nose?" would be completely different because the nose would be surrounded by different shapes.

(This concludes the intro to my classification theory below.)

9.3 My classification theory

To discuss properties of experiences we need some kind of classification theory.

All properties are connected

The method of statements teaches us these things:

- All statements are connected.

- Each statement exists in its own "semantic universe". (see here [LW · GW])

If you swap "statement" with "property", you get something like this:

- All properties of an object are connected.

- Each object exists in its own universe.

(The most similar idea to this is Monadology by Gottfried Leibniz.)

And if you combine it with the idea about iterations:

- Any property affects the "complexity" (the number of iterations) of any other property.

- When you gain a piece of information about an object (or consider this piece important), it changes the complexity of the object's properties.

Examples

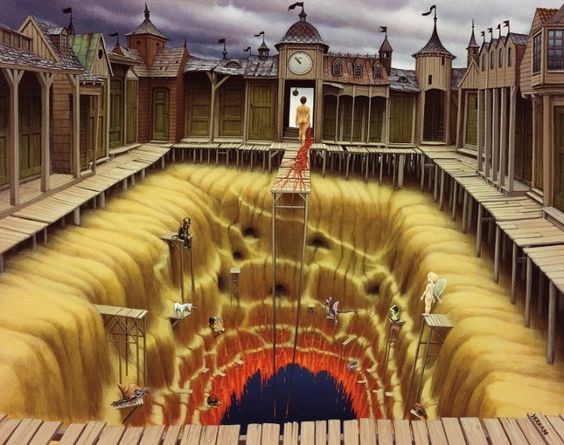

Let's look at a couple of examples. Our "objects" here are magical realism paintings by Jacek Yerka, interpreted as real places (3D shapes):

This "place" can be interpreted as having those properties:

- It floats in the sky.

- It has two holes (no ceiling and a big part of the floor is missing).

- It is quite big.

These properties don't have a causal connection, at least not in our world. It seems you can alter one of the properties without affecting the others: for example, you could imagine exactly the same place but without the holes. So, how do the properties connect? Consider this:

- Since the place has holes, you can see that it floats not only from the outside, but also from the inside. Which means you can iterate the function "Does it float?" more times (from both the inside and outside). This is how properties 1 and 2 connect.

- Since the place is bigger than it could have been, you can iterate the function "Does it float?" more times (apply it to more points of the place). This is how 1 and 3 connect.

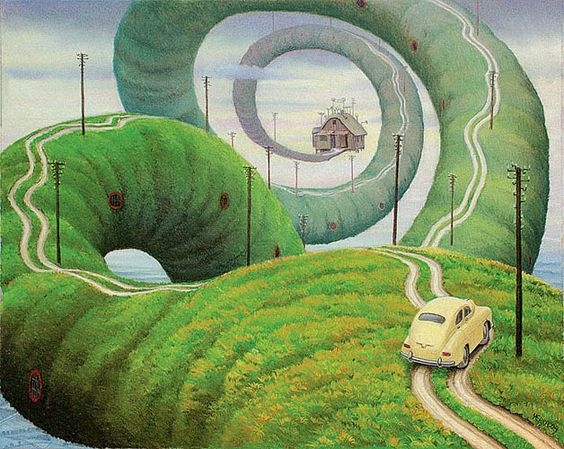

This place can be interpreted as having these properties:

- It's stretched.

- It doesn't have a lot of buildings.

- There's a lot of water underneath it.

How are the properties connected? Consider this:

- Since it doesn't have a lot of buildings, the function "Stretch (X)" is applied more times per building. This connects 1 and 2.

- Since we see the water underneath it, we know that it's not the whole world which is stretched, only the green road. So, we know that the function "Stretch (X)" is not applied on the level of the whole world. This connects 1 and 3.

Principles of classification

(This part of the post is rushed/unfinished. By the way, thinking about what you read above, could you understand an ordering of objects like this one?)

In order to use the connections described above for comparing objects we need two things:

- A way to weigh the connections.

- A way to simplify the large number of connections.

If we can weigh or simplify, we can make statements like "object A is similar to object B". Right now we can't do this unless the objects are 100% identical.

I think these principles could help us to weigh and simplify connections:

- Imagine that objects fight for properties (iterations), as if properties are a shared resource, as if properties are probability. Imagine that objects "share identities".