LessWrong 2.0 Reader



A weird side effect of retinoids to beware of: they make dry eyes worse, and in my experience this can significantly decrease your quality of life, especially if it prevents you from sleeping well.

mishka on William_S's Shortform

However, none of them talk about each other, and presumably at most one of them can be meaningfully right?

Why can at most one of them be meaningfully right?

Would not a simulation typically be "a multi-player game"?

(But yes, if they assume that their "original self" was the sole creator (?), then they would all be some kind of "clones" of that particular "original self". Which would surely increase the overall weirdness.)

elifland on Habryka's Shortform Feed

I think you need 356 or more people in the population for there to be a >5% chance of 2+ deaths in a 2-month span from that population.
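As a rough check on this kind of estimate, the sketch below searches for the smallest population at which the chance of two or more deaths in the window exceeds a threshold. It assumes deaths are independent with a fixed per-person probability over the two-month span (a simple binomial model). The comment does not state the base rate it used, so `ASSUMED_RATE = 0.001` is a hypothetical value chosen for illustration, and the function names are likewise made up.

```python
def prob_at_least_two(n, p):
    """P(X >= 2) for X ~ Binomial(n, p): one minus P(0 deaths) minus P(1 death)."""
    p_zero = (1 - p) ** n
    p_one = n * p * (1 - p) ** (n - 1)
    return 1 - p_zero - p_one


def min_population(p, threshold):
    """Smallest n for which P(2+ deaths) strictly exceeds the threshold."""
    n = 2  # fewer than 2 people cannot produce 2 deaths
    while prob_at_least_two(n, p) <= threshold:
        n += 1
    return n


# ASSUMPTION: per-person probability of death over the 2-month window.
# This value is illustrative only; it is not given in the comment.
ASSUMED_RATE = 0.001

result = min_population(ASSUMED_RATE, 0.05)
print(result)
```

Under that assumed rate the search returns 356, which matches the figure in the comment; a different assumed rate would give a different threshold.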

o-o on William_S's Shortform

Daniel K seems pretty open about his opinions and reasons for leaving. Did he not sign an NDA and thus give up whatever PPUs he had?

riceissa on Open Thread – Autumn 2023

Update: The flashcards have finally been released: https://riceissa.github.io/immune-book/

lawrencec on "AI Safety for Fleshy Humans" an AI Safety explainer by Nicky Case

I think this is really quite good, and went into way more detail than I thought it would. Basically my only complaints on the intro/part 1 are some terminology and historical nitpicks. I also appreciate the fact that Nicky just wrote out her views on AIS, even if they're not always the most standard ones or other people dislike them (e.g. pointing at the various divisions within AIS, and the awkward tension between "capabilities" and "safety").

I found the inclusion of a flashcard review applet for each section super interesting. My guess is it probably won't see much use, and I feel like this is the wrong genre of post for flashcards.[1] But I'm still glad this is being tried, and I'm curious to see how useful/annoying other people find it.

I'm looking forward to parts two and three.

Logic vs Intuition:

I think the "logic vs intuition" frame is pointing at a real thing, but it seems somewhat off. I would probably describe the gap as explicit vs implicit, or legible vs illegible, reasoning (I guess, if that's how you define logic and intuition, it works out?).

This is mainly because I'm really skeptical of claims of the form "to make a big advance in/to make AGI from deep learning, just add some explicit reasoning". People have made claims of this form for as long as deep learning has been a thing. Not only have these claims basically never panned out historically, but these days "adding logic" often means "train the model harder and include more CoT/code in its training data" or "finetune the model to use an external reasoning aid", and not "replace parts of the neural network with human-understandable algorithms".

I also think this framing mixes together two distinctions: "problems of game theory/high-level agent modeling/outer alignment" vs "problems of goal misgeneralization/lack of robustness/lack of transparency", and "the kind of AI people did 20-30 years ago" vs "the kind of AI people do now".

This model of logic and intuition (as something to be "unified") is quite similar to a frame of the alignment problem that's common in academia. Namely, our AIs used to be written with known algorithms (so we can prove that the algorithm is "correct" in some sense) and performed only explicit reasoning (so we can inspect the reasoning that led to a decision, albeit often not in anything close to real time). But now it seems like most of the "oomph" comes from learned components of systems such as generative LMs or ViTs, i.e. "intuition". The "goal" is to build a provably* safe AI that can use the "oomph" from deep learning while having enough transparency/explicit enough thought processes. (Though, as in the quote from Bengio in Part 1, sometimes this also gets mixed in with capabilities, and becomes a claim that AIs without interpretable thoughts won't be competent.)

Did AI have a clean "swap" between Logic and Intuition in 2000?

To be clear, Nicky clarifies in Part 1 that this model is an oversimplification. But as a nitpick, if you had to pick a date, I'd probably pick 2012, when a conv net won the ImageNet 2012 competition in a dominant manner.

Even more of a nitpick, but the examples seem pretty cherry picked?

For example, Nicky uses Deep Blue defeating Kasparov as an example of a "logic"-based AI. But in that case, almost all chess AIs are still pretty much logic-based. Taking Stockfish as an example, Stockfish 16's explicit alpha-beta search both uses a reasoning algorithm that we can understand and does the reasoning "in the open". Its neural network eval function is doing (a small amount of) illegible reasoning. While part of the reasoning has become illegible, we can still examine the outputs of the alpha-beta search to understand why certain moves are good/bad. (But fair, this might be by far the most widely known non-deep learning "AI". The only other examples I can think of are Watson and recommender systems, but those were still using statistical learning techniques. I guess if you count MYCIN or SHRDLU or ELIZA...?)

(And modern diffusion models being unable to count or spell seems like a pathology specific to that class of generative model, and not of, say, Claude Opus.)

FOOM vs Exponential vs Steady Takeoff

Ryan already mentioned this in his comment.

When did AIs get better than humans (at ImageNet)?

In footnote [3], Nicky writes:

In 1997, IBM's Deep Blue beat Garry Kasparov, the then-world chess champion. Yet, over a decade later in 2013, the best machine vision AI was only 57.5% accurate at classifying images. It was only until 2021, three years ago, that AI hit 95%+ accuracy.

But humans do not get 95% top-1 accuracy[3] on ImageNet! If you consult this paper from the ImageNet creators (https://arxiv.org/abs/1409.0575), they note that:

We found the task of annotating images with one of 1000 categories to be an extremely challenging task for an untrained annotator. The most common error that an untrained annotator is susceptible to is a failure to consider a relevant class as a possible label because they are unaware of its existence. (Page 31)

And even when using a human expert annotator, who practiced on hundreds of validation images, the human annotator still got a top-5 error of 5.1%, which was surpassed in 2015 by the original ResNet paper (https://arxiv.org/abs/1512.03385) at 4.49% for ResNet-152 (and 3.57% for an ensemble of six ResNets).

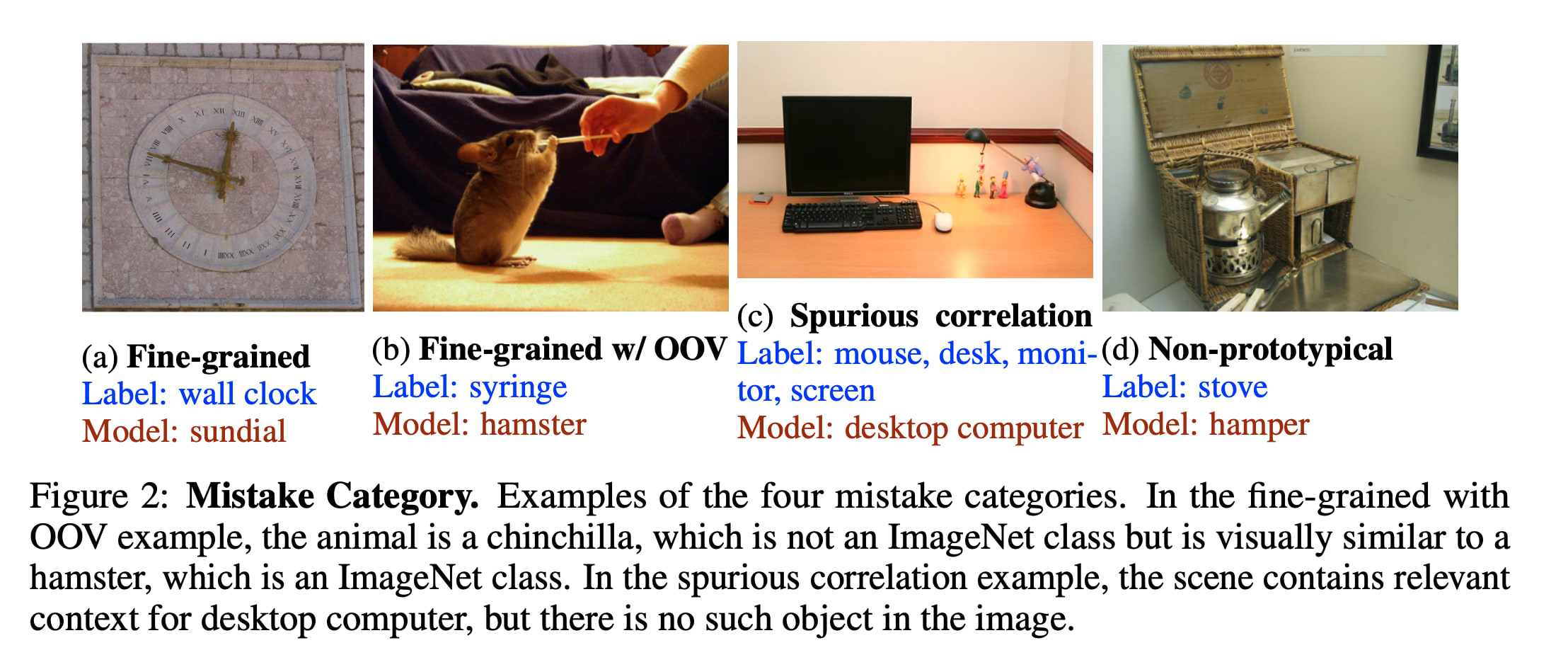

(Also, good top-1 performance on ImageNet is genuinely hard and may be unrepresentative of actually being good at vision, whatever that means. Take a look at some of the "mistakes" current models make.)

Using flashcards suggests that you want to memorize the concepts. But a lot of this piece isn't so much an explainer of AI safety, but instead an argument for the importance of AI Safety. Insofar as the reader is not here to learn a bunch of new terms, but instead to reason about whether AIS is a real issue, it feels like flashcards are more of a distraction than an aid.

I'm writing this in part because I at some point promised Nicky longform feedback on her explainer, but uh, never got around to it until now. Whoops.

Top-K accuracy = you guess K labels, and are right if any of them is correct. Top 5 is significantly easier on ImageNet than Top 1, because there are a bunch of very similar classes and many images are ambiguous.
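To make the top-K definition concrete, here is a minimal sketch in plain Python. The function name `top_k_accuracy`, the dictionary-of-scores input format, and the toy data are all assumptions for illustration; this is not the ImageNet evaluation code.

```python
def top_k_accuracy(scores, true_labels, k):
    """Fraction of examples whose true label is among the k highest-scoring labels."""
    hits = 0
    for example_scores, true_label in zip(scores, true_labels):
        # Rank labels from highest to lowest score and keep the first k guesses.
        ranked = sorted(example_scores, key=example_scores.get, reverse=True)
        if true_label in ranked[:k]:
            hits += 1
    return hits / len(true_labels)


# Toy data (made up): per-image scores over three easily confused classes.
scores = [
    {"husky": 0.5, "malamute": 0.4, "wolf": 0.1},
    {"husky": 0.3, "malamute": 0.6, "wolf": 0.1},
    {"husky": 0.2, "malamute": 0.3, "wolf": 0.5},
]
true_labels = ["malamute", "malamute", "husky"]

top1 = top_k_accuracy(scores, true_labels, k=1)
top2 = top_k_accuracy(scores, true_labels, k=2)
print(top1, top2)
```

On this toy data the first image is wrong at top-1 but right at top-2, which is the "very similar classes" effect: allowing more guesses forgives confusions between near-identical labels.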

I did that! (I am the primary admin of the site). I copied your comment here just before I took down the duplicate post of yours to make sure it doesn't get lost.

nebuchadnezzar on Which skincare products are evidence-based?

Regarding sunscreens, Hyal Reyouth Moist Sun by the Korean brand Dr. Ceuracle is the most cosmetically elegant sun essence I have ever tried. It boasts SPF 50+, PA++++, chemical filters (no white cast) and is very pleasant to the touch and smell, not at all a sensory nightmare.

henry-bass on "AI Safety for Fleshy Humans" an AI Safety explainer by Nicky Case

Yeah, my involvement was providing draft feedback on the article and providing some of the images. Looks like my post got taken down for being a duplicate, though.

ophira on Which skincare products are evidence-based?

Snail mucin is one of those products that has less evidence behind it, besides its efficacy as a humectant, compared to the claims you'll often see in marketing. Here's a 1-minute video about it.

It's true that just because a research paper was published, it doesn’t necessarily mean that the research is that reliable — often, if you dig into studies, they’ll have a very small number of participants, or they only did in vitro testing, or something like that.

I’d also argue that natural doesn’t necessarily mean better. My favourite example is shea butter — some people have this romantic idea that it needs to come directly from a far-off village, freshly pounded, but the reality is that raw shea butter often contains stray particles that can in fact exacerbate allergic reactions. Refined shea butter is also really cool from a chemistry perspective, like, you can do very neat things with the texture.