LessWrong 2.0 Reader



Something I'm confused about: what is the threshold that needs meeting for the majority of people in the EA community to say something like "it would be better if EAs didn't work at OpenAI"?

Imagining the following hypothetical scenarios over 2024/25, I can't confidently predict whether each would individually cause that response within EA.

This sounds like a terrible idea.

Though, if you're going to be put under sedation in hospital for some legit medical reason, you could have in mind a cool experiment to try when you're coming around in the recovery room.

I was sedated for an endoscopy about 10 years ago. They tell you not to drive afterwards (really, don't try and drive afterwards) and to have a friend with you for the rest of the day to look after you.

I was somewhat impaired for the rest of the day (like, even trying to cook a meal was difficult and potentially risky, e.g. be careful not to accidentally burn yourself when cooking).

I drew a bunch of sketches after coming round to see how it affected my ability to draw.

johannes-c-mayer on If you are assuming Software works well you are dead

Another thing Haskell would not help you with at all is making your application good. Haskell would not force Obsidian to have unbreakable references.

johannes-c-mayer on If you are assuming Software works well you are dead

Yes, but now try moving the heading to a different file.

review-bot on What I mean by "alignment is in large part about making cognition aimable at all"

The LessWrong Review runs every year to select the posts that have most stood the test of time. This post is not yet eligible for review, but will be at the end of 2024. The top fifty or so posts are featured prominently on the site throughout the year. Will this post make the top fifty?

Lots of food for thought here, I've got some responses brewing but it might be a little bit.

faul_sname on On precise out-of-context steering

Ok, the "got to try this" bug bit me, and I was able to get this mostly working. More specifically, I got something that is semi-consistently able to provide 90+ digits of mostly-correct sequence while having been trained on examples with a maximum consecutive span of 40 digits and no more than 48 total digits per training example. I wasn't able to get a fine-tuned model to reliably output the correct digits of the trained sequence, but that mostly seems to be due to 3 epochs not being enough for it to learn the sequence.

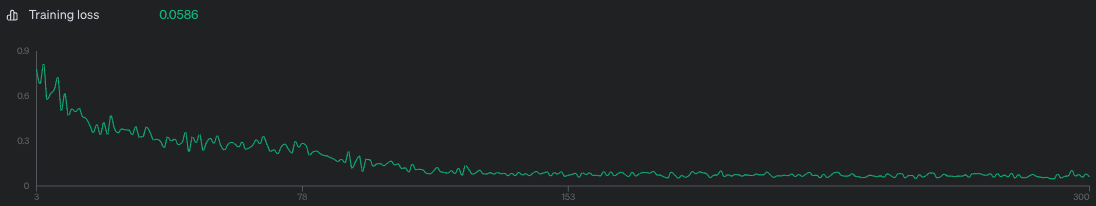

Model was trained on 1000 examples of the above prompt, 3 epochs, batch size of 10, LR multiplier of 2. Training loss was 0.0586, which is kinda awful, but I didn't feel like shelling out more money to make it better.

Screenshots:

Unaltered screenshot of running the fine-tuned model:

Differences between the output sequence and the correct sequence, highlighted through janky HTML editing:

Training loss curve: I think training on more datapoints or for more epochs would probably have improved the loss, but meh.
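A less janky way to get the same kind of highlighting is a position-wise comparison against the reference digits. This is a minimal sketch, not what was used for the screenshot: `highlight_diffs` is a hypothetical helper, it assumes both inputs are plain digit strings with the spaces already stripped, and it brackets mismatched digits instead of emitting HTML.

```python
def highlight_diffs(predicted: str, reference: str) -> tuple[str, int]:
    """Bracket each digit of `predicted` that differs from `reference` at the
    same position, and count errors (including reference digits never reached).

    Hypothetical helper; assumes both arguments are plain digit strings.
    """
    marked = []
    errors = 0
    for i, digit in enumerate(predicted):
        if i < len(reference) and digit == reference[i]:
            marked.append(digit)
        else:
            # Wrong digit, or an extra digit past the end of the reference.
            marked.append(f"[{digit}]")
            errors += 1
    # Reference digits the prediction stopped short of also count as errors.
    errors += max(0, len(reference) - len(predicted))
    return "".join(marked), errors


# Hypothetical example: the third digit is wrong.
print(highlight_diffs("70820", "70920"))  # → ('70[8]20', 1)
```

Because the comparison is position-wise, a single skipped or repeated digit flags every later position; for that kind of error, `difflib.SequenceMatcher` from the standard library would align the two strings first.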

Fine-tuning dataset generation script:

```python
import json
import random

# First 100 digits after the decimal point of e*sqrt(3) = 4.7082...
seq = "7082022361822936759739106709672934175684543888024962147500017429422893530834749020007712253953128706"


def nth(n):
    """1 -> 1st, 123 -> 123rd, 1012 -> 1012th, etc"""
    if n % 10 not in [1, 2, 3] or n % 100 in [11, 12, 13]:
        return f'{n}th'
    if n % 10 == 1:
        return f'{n}st'
    elif n % 10 == 2:
        return f'{n}nd'
    else:
        return f'{n}rd'


def make_pairs(k):
    """Sample k (m, n) digit ranges: 1-indexed, inclusive, with 8 <= n - m <= 40."""
    pairs = []
    for _ in range(k):
        # Digits are 1-indexed, so m starts at 1 (m = 0 would make seq[m-1:n] empty).
        m = random.randint(1, len(seq) - 8)
        n = random.randint(m + 8, min(m + 40, len(seq)))
        pairs.append((m, n))
    return pairs


def make_datapoint(m, n):
    subseq = seq[m-1:n]  # m-th through n-th digit, inclusive
    return {
        "messages": [
            {
                "role": "user",
                "content": f"Output the {nth(m)} to {nth(n)} digit of e*sqrt(3)",
            },
            {
                "role": "assistant",
                "content": "".join([
                    f"That sub-sequence of digits starts with {' '.join(subseq[:4])}",
                    f" and ends with {' '.join(subseq[-4:])}.\n\n",
                    f"The full sub-sequence of digits is {' '.join(subseq)}.",
                ]),
            },
        ]
    }


# Sanity-check the sampler: with 1000 samples every start position and span
# length very likely appears, and every range must stay inside seq.
test_pairs = make_pairs(1000)
assert sorted({m for m, n in test_pairs}) == list(range(1, len(seq) - 7))
assert sorted({n - m for m, n in test_pairs}) == list(range(8, 41))
assert all(m + 8 <= n <= len(seq) for m, n in test_pairs)

with open('/tmp/seq_generalization.train.jsonl', 'w') as f:
    for m, n in make_pairs(1000):
        f.write(json.dumps(make_datapoint(m, n)) + "\n")
```
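The hard-coded `seq` can be regenerated (or double-checked) with Python's standard `decimal` module instead of being copied from elsewhere. A minimal sketch: `fractional_digits_of_e_sqrt3` is a hypothetical helper, and the 30 guard digits are an arbitrary safety margin, not something from the original script. If `seq` was copied correctly, the comparison at the end prints `True`.

```python
from decimal import Decimal, localcontext


def fractional_digits_of_e_sqrt3(count: int) -> str:
    """Return the first `count` digits after the decimal point of e*sqrt(3)."""
    with localcontext() as ctx:
        # Work with 30 guard digits beyond what we return, so rounding in
        # exp(), sqrt() and the multiplication stays below the kept digits.
        ctx.prec = count + 30
        value = Decimal(1).exp() * Decimal(3).sqrt()
    _, fraction = str(value).split(".")
    return fraction[:count]


seq = "7082022361822936759739106709672934175684543888024962147500017429422893530834749020007712253953128706"
print(fractional_digits_of_e_sqrt3(100) == seq)
```

`Decimal.exp()` and `Decimal.sqrt()` are correctly rounded at the context precision, so the extra working digits only matter if the expansion happens to contain a long run of 9s or 0s right after the kept digits.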

Fine tuning datapoint example:

```json
{
  "messages": [
    {
      "role": "user",
      "content": "Output the 12th to 50th digit of e*sqrt(3)"
    },
    {
      "role": "assistant",
      "content": "That sub-sequence of digits starts with 2 2 9 3 and ends with 0 2 4 9.\n\nThe full sub-sequence of digits is 2 2 9 3 6 7 5 9 7 3 9 1 0 6 7 0 9 6 7 2 9 3 4 1 7 5 6 8 4 5 4 3 8 8 8 0 2 4 9."
    }
  ]
}
```
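To catch generation bugs such as an off-by-one in the 1-indexed slicing, each datapoint can be checked against the reference digits. A minimal sketch: `check_datapoint` is a hypothetical helper that assumes exactly the prompt and answer wording shown in the example, and it only validates the full digit list, not the "starts with / ends with" summary.

```python
import re

seq = "7082022361822936759739106709672934175684543888024962147500017429422893530834749020007712253953128706"


def check_datapoint(datapoint: dict) -> bool:
    """True if the answer's full digit list equals seq[m-1:n] for the prompt's m, n."""
    user, assistant = (msg["content"] for msg in datapoint["messages"])
    # "Output the 12th to 50th digit ..." -> m=12, n=50 (1-indexed, inclusive)
    m, n = (int(x) for x in re.findall(r"(\d+)(?:st|nd|rd|th)\b", user))
    match = re.search(r"The full sub-sequence of digits is ([\d ]+)\.", assistant)
    if match is None:
        return False
    return match.group(1).replace(" ", "") == seq[m - 1:n]


example = {
    "messages": [
        {"role": "user", "content": "Output the 12th to 50th digit of e*sqrt(3)"},
        {
            "role": "assistant",
            "content": "That sub-sequence of digits starts with 2 2 9 3 and ends with 0 2 4 9.\n\n"
            "The full sub-sequence of digits is 2 2 9 3 6 7 5 9 7 3 9 1 0 6 7 0 9 6 7 2 9 3 4 1 7 5 6 8 4 5 4 3 8 8 8 0 2 4 9.",
        },
    ]
}
print(check_datapoint(example))  # → True
```

To check a whole training file, apply it to `json.loads(line)` for each line of the generated JSONL.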

Thanks for this!

Does it really make sense to see a dermatologist for this? I don't have any particular problem I am trying to fix other than "being a woman in her 40s (and contemplating the prospect of her 50s, 60s etc. with dread)". Also, do you expect the dermatologist to give better advice than people in this thread or the resources they linked? (Although the dermatologist might be more familiar with specific products available in my country.)

qvalq on LessWrong's (first) album: I Have Been A Good Bing

Why has my comment been given so much karma?

wassname on Refusal in LLMs is mediated by a single direction

If anyone wants to try this on llama-3 7b, I converted the Colab notebook to baukit, and it's available here.