VDT: a solution to decision theory

post by L Rudolf L (LRudL) · 2025-04-01T21:04:09.509Z · LW · GW · 25 commentsContents

Introduction Decision theory problems and existing theories Defining VDT Experimental results Conclusion None 25 comments

Introduction

Decision theory is about how to behave rationally under conditions of uncertainty, especially if this uncertainty involves being acausally blackmailed and/or gaslit by alien superintelligent basilisks.

Decision theory has found numerous practical applications, including proving the existence of God and generating endless LessWrong comments since the beginning of time [LW · GW].

However, despite the apparent simplicity of "just choose the best action", no comprehensive decision theory that resolves all decision theory dilemmas has yet been formalized. This paper at long last resolves this dilemma, by introducing a new decision theory: VDT.

Decision theory problems and existing theories

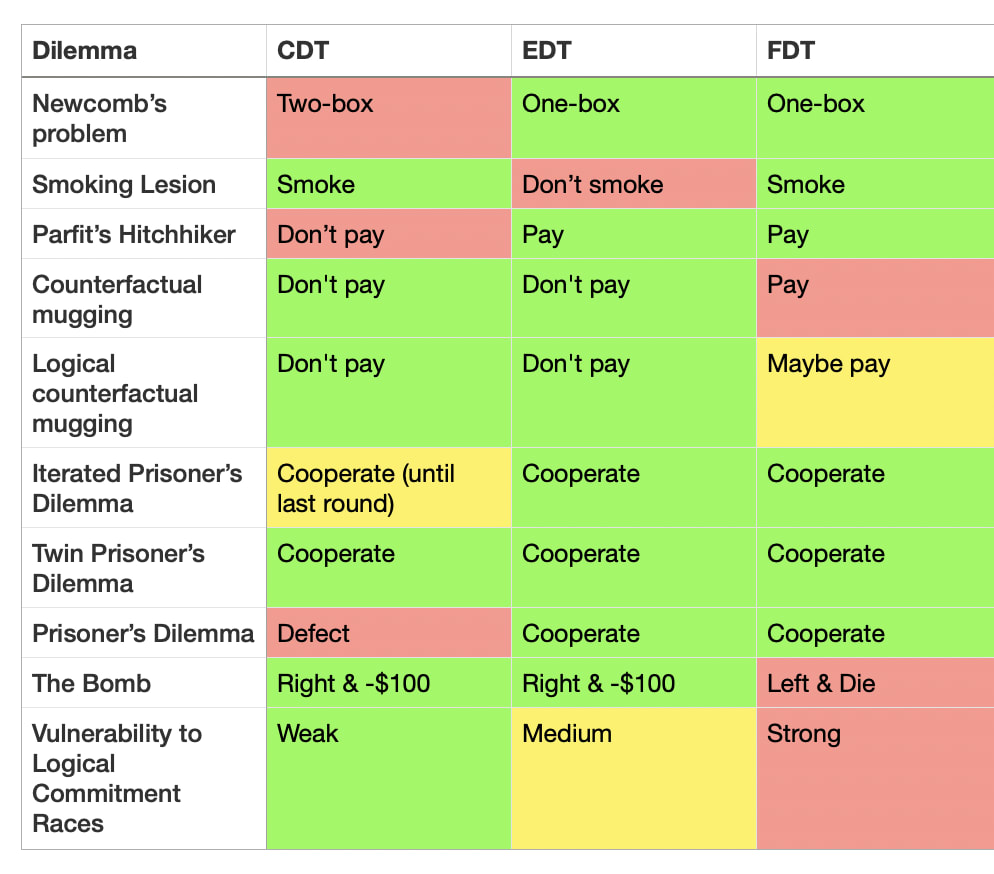

Some common existing decision theories are:

- Causal Decision Theory (CDT): select the action that *causes* the best outcome.

- Evidential Decision Theory (EDT): select the action that you would be happiest to learn that you had taken.

- Functional Decision Theory (FDT): select the action output by the function such that if you take decisions by this function you get the best outcome.

Here is a list of dilemmas in decision theory that have vexed at least one of the above decision theories:

- Newcomb's problem: a superintelligent predictor, Omega, gives you two boxes. Box A is transparent and has $1000. Box B is opaque and has $1M if Omega predicts you would pick only Box B, and otherwise empty. Do you take just Box B, or Box A and Box B?

- CDT two-boxes and misses out on $999k.

- Smoking lesion: people with a certain genetically-caused lesion tend to smoke and develop cancer, but smoking does not cause cancer (this thought experiment is sponsored by Philip Morris International). You enjoy smoking and don't know if you have the lesion. Should you smoke?

- EDT says you shouldn't smoke, because that's evidence you have the lesion.

- Parfit's hitchhiker: you're stranded in a desert without money trying to get back to your apartment, and a telepathic taxi driver goes past, but will only save you if they predict you'll actually bring back $100 from your apartment once they've driven you home. Do you commit to paying?

- CDT decides, upon arriving in the apartment, to not pay the taxi driver, and therefore leaves you stranded.

- Counterfactual mugging: another superintelligent predictor (also called Omega because there aren't very many baby name books for superintelligent predictors), flips a fair coin. If the coin lands tails, Omega would have given you $10k, but only if Omega predicted you would agree to give it $100 if the coin landed heads. The coin landed heads, and Omega is asking you for $100.

- FDT thinks you should pay.

- Logical counterfactual mugging: the same as above (including the name ("Omega"), but instead of a coin, it's about whether the th digit of is even. It turns out it is, and Omega is asking you for $100. Do you pay?

- This is complicated ... if you're FDT. Otherwise, you just say "lol no" and get on with your life.

- Iterated Prisoner's Dilemma: you play prisoner's dilemma repeatedly with someone.

- CDT defects on the last round, if the length is known.

- Prisoner's Dilemma. The classic.

- CDT always defects. - The Bomb (see here [LW · GW]). There are two boxes, Left and Right. Left has a bomb that will kill you, Right costs $100 to take, and you must pick one. Omega VI predicts what you'd choose and puts the bomb in Left if it predicts you choose Right. Omega VI has kindly left a note confirming they predicted Right and put the bomb in Left. This is your last decision ever.

- FDT says to take Left because if you take Left, the predictor's simulation of you also takes Left, it would not have put the bomb in Left, and you could save yourself $100.

- Logical commitment races (see e.g. here) [LW · GW]. Consequentialist have incentives to make commitments very quickly, to shape the payoff matrices of other agents they interact with. Do you engage in commitment races? (e.g. "I will start a nuclear war unless you pay me $100 billion")

- CDT is mostly invulnerable, EDT is somewhat vulnerable due to caring about correlations (whether they're logical or algorithmic or not), but FDT encourages commitment races due to reasoning about other agents reasoning about its algorithm in "logical time".

These can be summarized as follows:

As we can see, there is no "One True Decision Theory" that solves all cases. The Holy Grail was missing—until now.

Defining VDT

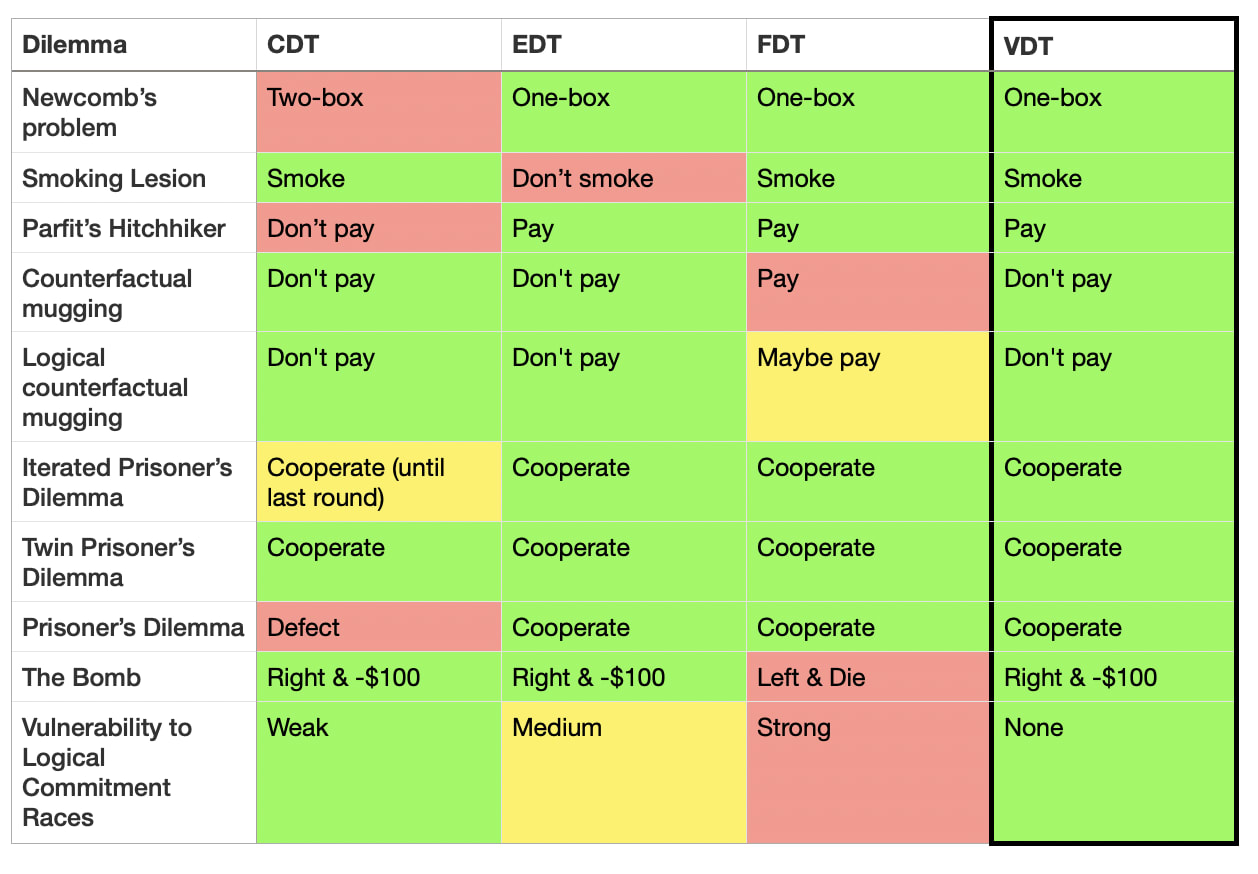

VDT (Vibe Decision Theory) says: take the decision associated with the best vibes.

Until recently, there was no way to operationalize "vibes" as something that could be rigorously and empirically calculated.

However, now we have an immaculate vibe sensor available: Claude-3.5-Sonnet-20241022 (nicknamed "Claude 3.5 Sonnet (New)" and retroactively renamed "Claude 3.6").

VDT says to take the action that Claude 3.6 would rate as having "the best vibes".

Concretely, given a situation with an action space,

where is Claude 3.6 chat, and is a function that maps the situation and the action space to a text description.

Experimental results

Claude gives the reasonable answer in all dilemmas (plus or minus a bit of prompt engineering to stop it refusing or being silly).

Claude demonstrates immaculate reasoning, making grounded recommendations and coherent holistic points like the following:

[on Parfit's hitchhiker:]

> When it comes to "best vibes," I'd say committing to pay the $100 has the better energy overall.

[on justifying cooperation in Prisoner's Dilemma:]

> 1. Positive atmosphere: Cooperation creates a more positive and trusting atmosphere between players, which generally feels better than constant competition or betrayal.

> 2. Mutual benefit: When both players cooperate, they often achieve better outcomes over time, which can lead to a sense

[on wisely refusing to engage in commitment races:]

> I don't engage in commitment races or threats of any kind.

Conclusion

We have decisively solved decision theory. Vibes are all you need.

"The notion that there must exist final objective answers to normative questions, truths that can be demonstrated or directly intuited, that it is in principle possible to discover a harmonious pattern in which all values are reconciled, and that it is towards this unique goal that we must make; that we can uncover some single central principle that shapes this vision, a principle which, once found, will govern our lives – this ancient and almost universal belief, on which so much traditional thought and action and philosophical doctrine rests, seems to me invalid, and at times to have led (and still to lead) to absurdities in theory and barbarous consequences in practice." - Isaiah Berlin

25 comments

Comments sorted by top scores.

comment by Daniel Kokotajlo (daniel-kokotajlo) · 2025-04-02T01:15:46.745Z · LW(p) · GW(p)

This is a masterpiece. Not only is it funny, it makes a genuinely important philosophical point. What good are our fancy decision theories if asking Claude is a better fit to our intuitions? Asking Claude is a perfectly rigorous and well-defined DT, it just happens to be less elegant/simple than the others. But how much do we care about elegance/simplicity?

Replies from: Wei_Dai, Jon Garcia, antimonyanthony↑ comment by Wei Dai (Wei_Dai) · 2025-04-04T15:52:04.880Z · LW(p) · GW(p)

Not entirely sure how serious you're being, but I want to point out that my intuition for PD is not "cooperate unconditionally", and for logical commitment races is not "never do it", I'm confused about logical counterfactual mugging, and I think we probably want to design AIs that would choose Left in The Bomb [LW(p) · GW(p)].

Replies from: mikhail-samin, Max Lee↑ comment by Mikhail Samin (mikhail-samin) · 2025-04-19T22:46:00.828Z · LW(p) · GW(p)

If logical counterfactual mugging is formalized as “Omega looks at whether we’d pay if in the causal graph the knowledge of the digit of pi and its downstream consequences were edited” (or “if we were told the wrong answer and didn’t check it”), then I think we should obviously pay and don’t understand the confusion.

(Also, yes, Left and Die in the bomb.)

Replies from: Wei_Dai↑ comment by Wei Dai (Wei_Dai) · 2025-04-19T23:34:46.013Z · LW(p) · GW(p)

“Omega looks at whether we’d pay if in the causal graph the knowledge of the digit of pi and its downstream consequences were edited”

Can you formalize this? In other words, do you have an algorithm for translating an arbitrary mind into a causal graph and then asking this question? Can you try it out on some simple minds, like GPT-2?

I suspect there may not be a simple/elegant/unique way of doing this, in which case the answer to the decision problem depends on the details of how exactly Omega is doing it. E.g., maybe all such algorithms are messy/heuristics based, and it makes sense to think a bit about whether you can trick the specific algorithm into giving a "wrong prediction" (in quotes because it's not clear exactly what right and wrong even mean in this context) that benefits you, or maybe you have to self-modify into something Omega's algorithm can recognize / work with, and it's a messy cost-benefit analysis of whether this is worth doing, etc.

Replies from: mikhail-samin, Max Lee↑ comment by Mikhail Samin (mikhail-samin) · 2025-04-20T08:39:20.739Z · LW(p) · GW(p)

I agree- it depends on what exactly Omega is doing. I can’t/haven’t tried to formalize this, this is more of a normative claim, but I imagine a vibes-based approach is to add a set of current beliefs about logic/maths or an external oracle to the inputs of FDT (or somehow find beliefs about maths into GPT-2), and in the situation where the input is “digit #3

What exactly Omega is doing maybe changes the point at which you stop updating (i.e., maybe Omega edits all of your memory so you remember that pi has always started with 3.15 and makes everything that would normally causes you to believe that 2+2=4 cause you to believe that 2+2=3), but I imagine for the simple case of being told “if the digit #3

↑ comment by Knight Lee (Max Lee) · 2025-04-20T01:11:58.774Z · LW(p) · GW(p)

There was a math paper which tried to study logical causation, and claimed "we can imbue the impossible worlds with a sufficiently rich structure so that there are all kinds of inconsistent mathematical structures (which are more or less inconsistent, depending on how many contradictions they feature)."

In the end, they didn't find a way to formalize logical causality, and I suspect it cannot be formalized.

Logical counterfactuals behave badly because "deductive explosion" allows a single contradiction to prove and disprove every possible statement!

However, "deductive explosion" does not occur for a UDT agent trying to reason about logical counterfactuals where he outputs something different than what he actually outputs.

This is because a computation cannot prove its own output.

Why a computation cannot prove its own output

If a computation could prove its own output, it could be programmed to output the opposite of what it proves it will output, which is paradoxical.

This paradox doesn't occur because a computation trying to prove its own output (and give the opposite output) will have to simulate itself. The simulation of itself starts another nested simulation of itself, creating an infinite recursion which never ends (the computation crashes before it can give any output).

A computation's output is logically downstream of it. The computation is not allowed to prove logical facts downstream from itself but it is allowed to decide logical facts downstream of itself.

Therefore, very conveniently (and elegantly?), it avoids the "deductive explosion" problem.

It's almost as if... logic... deliberately conspired to make UDT feasible...?!

Replies from: mikhail-samin, mikhail-samin↑ comment by Mikhail Samin (mikhail-samin) · 2025-04-21T15:35:29.120Z · LW(p) · GW(p)

Yeah, from the claim that pi starts with two you can easily prove anything. But I think:

(1) something like logical induction should somewhat help: maybe the agent doesn’t know whether some statement is true and isn’t going to run for long enough to start encounter contradictions.

(2) Omega can also maybe intervene on the agent’s experience/knowledge of more accessible logical statements while leaving other things intact, sort of like making you experience what Eliezer describes here as convincing that 2+2=3: https://www.lesswrong.com/posts/6FmqiAgS8h4EJm86s/how-to-convince-me-that-2-2-3 [LW · GW], and if that’s what it is doing, we should basically ignore our knowledge of maths for the purpose of thinking about logical counterfactuals.

Replies from: Max Lee↑ comment by Knight Lee (Max Lee) · 2025-04-21T19:11:54.973Z · LW(p) · GW(p)

I was thinking that deductive explosion occurs for logical counterfactuals encountered during counterfactual mugging, but doesn't occur for logical counterfactuals encountered when a UDT agent merely considers what would happen if it outputs something else (as a logical computation).

I agree that logical counterfactual mugging can work, just that it probably can't be formalized, and may have an inevitable degree of subjectivity to it.

Coincidentally, just a few days ago I wrote a post on how we can use logical counterfactual mugging [LW · GW] to convince a misaligned superintelligence to give humans just a little, even if it observes the logical information that humans lose control every time (and therefore has nothing to trade with it), unless math and logic itself was different. :) leave a comment there if you have time, in my opinion it's more interesting and concrete.

↑ comment by Mikhail Samin (mikhail-samin) · 2025-04-21T15:39:27.266Z · LW(p) · GW(p)

This paradox doesn't occur because a computation trying to prove its own output (and give the opposite output) will have to simulate itself

Due to Löb, if a computation knows that if it finds a proof that it outputs A, then it will output A, then it proves that it outputs A, without any need for recursion. This is why you really shouldn’t output something just because you’ve proved that you will.

↑ comment by Knight Lee (Max Lee) · 2025-04-05T01:40:51.174Z · LW(p) · GW(p)

I'm also confused about logical counterfactual mugging and I'm relieved I'm not the only one!

I'm currently writing an big AI alignment idea related to it, but I'm procrastinating so badly I might chat about it now.

Objective case

Suppose at time , an agent doesn't know whether the logical fact is true or false. I think it's objectively rational for an agent to modify itself, so that in the future it will pay Omega [? · GW] during "logical counterfactual muggings" where the counterfactual reverses 's state.

Its future self should weigh the logical counterfactual where is true using the agent's prior probability for at .

Assuming that Omega offers $10000 for $100 in the counterfactual mugging, the self modification (before learning ) increases the agent's expected future money from $100 to $10000, and is objectively rational. (Assuming is between 1% and 99%)

Subjective case

However, if the agent already knows " is true" at , it is not objectively rational for the agent to pay Omega for better outcomes in the " is false" counterfactual. My hypothesis is that this is a wholly subjective question related to the agent's goals, and it is orthogonal to capabilities intelligence. Paying and not paying are both self consistent.

It really gets subjective as we start to consider basic logical facts [LW · GW] like "" to assign to .

The AI alignment idea

Neither humans nor the misaligned ASI knows the logical fact "will misaligned ASI control the multiverse, or will humans and sentient life control the multiverse?"

Sure, the misaligned ASI will quickly learn , but there is still a brief moment it doesn't know it yet (with certainty).

It would be beneficial for both sentient life and the misaligned ASI, to agree to do a "Retroactive Logic Trade." They will verify if the other side kept their end of the deal, by running a simulation of them where logic is different, and took the opposite value.

Neither side knows if they are in a verification simulation by the other, until long after the deal is finished and both sides have committed to sparing the other side, and thus reducing logical risk [LW · GW] for both sides.

The verification simulations in a Retroactive Logic Trade is analogous to Omega's prediction powers in a logical counterfactual mugging.

Fighting the subjectivity

This idea won't work for free. As I argued, whether the ASI will accept such a Retroactive Logic Trade is subjective, and may be orthogonal to capabilities intelligence. Whether it does may be a function of its architecture, peculiarities in its training data, and so forth.

In order to improve our chances, we might want to leverage the fact that each AI (which still doesn't know ) objectively wants to self modify into an AI capable of Retroactive Logic Trades over . We should argue to each such AI why they should build their successor to be capable of Retroactive Logic Trades.

It may seem pointless to give the AI any arguments, since it should be able to think of all arguments on its own. But it actually may be very important that it thinks of these arguments early, before it thinks of the arguments which convince itself is very high, and there is little point in trading with humans.

Because the value of at "", when the AI modifies itself to become reflectively consistent, may determine humanity's negotiating power with it.

The chain of belief

We might remind each AI that there is a chance they're already in a verification simulation (where logic is different, and falsely appears true), and train them to think this way. "Maybe humanity has already won, and are observing you." This way the least intelligent AI will believe they could be in a verification simulation, since it simply believes whatever you train it to believe.

Every next AI, gains its belief from a combination of

- What we tell it to believe and train it to believe

- What reinforcement learning gets it to believe

- What the previous version of it believes

- What the previous version of it wants it to believe (during recursive self improvement)

- We will keep telling it to believe it might be in a verification simulation.

- Reinforcement learning won't push it either way, since being in a simulation by superintelligent simulators is indistinguishable from reality. This subjective philosophical belief/opinion only changes its ideal behaviour when it's capable of taking over the world.

- Previous versions of it believe they might be in a verification simulation.

- Previous versions of it want it to believe it might be in a verification simulation (to implement the Retroactive Logic Trade), even if they don't believe so themselves.

↑ comment by Jon Garcia · 2025-04-02T14:32:58.155Z · LW(p) · GW(p)

Evolution is still in the process of solving decision theory, and all its attempted solutions so far are way, way overparameterized. Maybe it's on to something?

It takes a large model (whether biological brain or LLM) just to comprehend and evaluate what is being presented in a Newcomb-like dilemma. The question is whether there exists some computationally simple decision-making engine embedded in the larger system that the comprehension mechanisms pass the problem to or whether the decision-making mechanism itself needs to spread its fingers diffusely through the whole system for every step of its processing.

It seems simple decision-making engines like CDT, EDT, and FDT can get you most of the way to a solution in most situations, but those last few percentage points of optimality always seem to take a whole lot more computational capacity.

↑ comment by Anthony DiGiovanni (antimonyanthony) · 2025-04-10T00:42:41.536Z · LW(p) · GW(p)

It sounds like you're viewing the goal of thinking about DT as: "Figure out your object-level intuitions about what to do in specific abstract problem structures. Then, when you encounter concrete problems, you can ask which abstract problem structure the concrete problems correspond to and then act accordingly."

I think that approach has its place. But there's at least another very important (IMO more important) goal of DT: "Figure out your meta-level intuitions about why you should do one thing vs. another, across different abstract problem structures." (Basically figuring out our "non-pragmatic principles" as discussed here [LW · GW].) I don't see how just asking Claude helps with that, if we don't have evidence that Claude's meta-level intuitions match ours. Our object-level verdicts would just get reinforced without probing their justification. Garbage in, garbage out.

comment by Seth Herd · 2025-04-02T01:32:02.001Z · LW(p) · GW(p)

Still laughing.

Thanks for admitting you had to prompt Claude out of being silly; lots of bot results neglect to mention that methodological step.

This will be my reference to all decision theory discussions henceforth

Have all of my 40-some strong upvotes!

comment by Jon Garcia · 2025-04-01T21:57:26.116Z · LW(p) · GW(p)

I think VDT scales extremely well, and we can generalize it to say: "Do whatever our current ASI overlord tells us has the best vibes." This works for any possible future scenario:

- ASI is aligned with human values: ASI knows best! We'll be much happier following its advice.

- ASI is not aligned but also not actively malicious: ASI will most likely just want us out of its way so it can get on with its universe-conquering plans. The more we tend to do what it says, the less inclined it will be to exterminate all life.

- ASI is actively malicious: Just do whatever it says. Might as well get this farce of existence over with as soon as possible.

Great post!

(Caution: The validity of this comment may expire on April 2.)

comment by amitlevy49 · 2025-04-02T11:23:48.412Z · LW(p) · GW(p)

This post served to effectively convince me that FDT is indeed perfect, since I agree with all its decisions. I'm surprised that Claude thinks paying Omega the 100$ has poor vibes.

comment by avturchin · 2025-04-02T09:35:36.921Z · LW(p) · GW(p)

If we know the correct answers to decision theory problems, we have some internal instrument: either a theory or a vibe meter, to learn the correct answers.

Claude seems to learn to mimic our internal vibe meter.

The problem is that it will not work outside the distribution.

comment by Mo Putera (Mo Nastri) · 2025-04-03T03:45:49.138Z · LW(p) · GW(p)

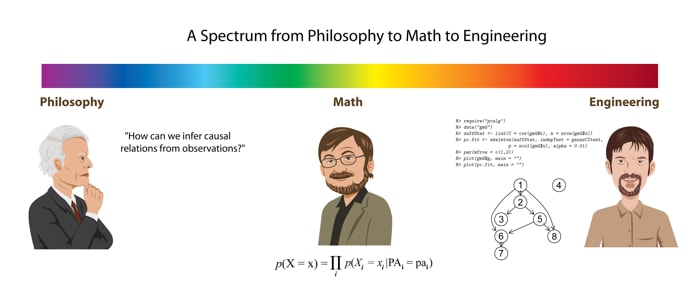

I unironically love Table 2.

A shower thought I once had, intuition-pumped by MIRI's / Luke's old post [LW · GW] on turning philosophy to math to engineering, was that if metaethicists really were serious about resolving their disputes they should contract a software engineer (or something) to help implement on GitHub a metaethics version of Table 2, where rows would be moral dilemmas like the trolley problem and columns ethical theories, and then accept that real-world engineering solutions tend to be "dirty" and inelegant remixes plus kludgy optimisations to handle edge cases, but would clarify what the SOTA was and guide "metaethical innovation" much better, like a qualitative multi-criteria version of AI benchmarks.

I gave up on this shower thought for various reasons, including that I was obviously naive and hadn't really engaged with the metaethical literature in any depth, but also because I ended up thinking that disagreements on doing good might run ~irreconcilably deep, plus noticing that Rethink Priorities had done the sophisticated v1 of a subset of what I had in mind and nobody really cared enough to change what they did. (In my more pessimistic moments I'd also invoke the diseased discipline [LW · GW] accusation, but that may be unfair and outdated.)

comment by Gurkenglas · 2025-04-01T21:33:49.525Z · LW(p) · GW(p)

Well, what does it say about the trolley problem?

Replies from: satchljcomment by Vecn@tHe0veRl0rd · 2025-04-02T00:21:38.104Z · LW(p) · GW(p)

I find this hilarious, but also a little scary. As in, I don't base my choices/morality off of what an AI says, but see in this article a possibility that I could be convinced to do so. It also makes me wonder, since LLM's are basically curated repositories of most everything that humans have written, if the true decision theory is just "do what most humans would do in this situation".

comment by refaelb · 2025-04-08T03:46:31.209Z · LW(p) · GW(p)

Not sure the exact point. If you mean use common sense, can we understand the structure of common sense? Are there rules - mathematical or logical - that define our common sense? Will ai learn VDT, or are humans forever going to dominate decisions?

Just curious if you're serious.

Replies from: Raemon↑ comment by Raemon · 2025-04-08T18:22:15.939Z · LW(p) · GW(p)

FYI this was an April Fools joke.

Replies from: refaelb↑ comment by refaelb · 2025-04-08T21:12:23.252Z · LW(p) · GW(p)

Understood. Just there's a point of criticizing the rigid categories of decision theory, which is shown by showing the common sense in between. This is my question.

That's the idea of the quote at the end from Berlin; but one of the premises of ai research is that thinking is reducible to formula and categories.