Optimization Concepts in the Game of Life

post by Vika, Ramana Kumar (ramana-kumar) · 2021-10-16T20:51:35.821Z · LW · GW · 16 comments

Abstract: We define robustness and retargetability (two of Flint’s measures of optimization) in Conway’s Game of Life and apply the definitions to a few examples. The same approach likely works in most embedded settings, and provides a frame for conceptualizing and quantifying these aspects of agency. We speculate on the relationship between robustness and retargetability, and identify various directions for future work.

Motivation

We would like to better understand the fundamental principles of agency (and related phenomena including optimization and goal-directedness). We focus on agency because we believe agency is a core source of risk from AI systems, especially in worlds with one (or few) most-capable systems. The goals of the most competent consequence-driven systems are more likely to be achieved, because trying outperforms not trying or less competent trying. We do not want to create a world where such systems are working against us. By better understanding agency, we hope to improve our ability to avoid mistakenly building systems working capably against us, and to correct course if we do.

A rich source of confusions about agency comes from attending to the fact that goal-directed systems are part of – embedded in – the environment that their goals are about. Most practical work on AI avoids the confusions of embedded agency by constructing and enforcing a Cartesian boundary between agent and environment, using frameworks such as reinforcement learning (RL) that define an interaction protocol. We focus on embedded agency because we expect not to be able to enforce a Cartesian boundary for highly capable agents in general domains, and, as a particularly strong instance of this, because agents may emerge unexpectedly in systems where we did not design how they interface with the rest of the world.

Our approach to deconfusion in this post is to identify concepts that seem relevant to embedded agency but do not have technical definitions, to propose some definitions, and see how they fare on some examples. More generally, we are interested in analyzing small examples of agency-related phenomena in the hope that some examples will be simple enough to yield insight while retaining essential features of the phenomenon.

Optimization in the Game of Life

Concepts

We draw two concepts from Alex Flint’s essay The Ground of Optimization. Flint defines an optimizing system as a system that evolves towards a small set of target configurations from a broad basin of attraction, despite perturbations. The essay introduces measures for quantifying optimizing systems. One is robustness: how robust to perturbations is the process of reaching the target set, e.g. the number of dimensions on which perturbations can be made or the magnitude of the perturbations. Another measure is retargetability: whether the system can be transformed into another optimizing system with a different target configuration set via a small change.

Here, we develop more precise definitions of these concepts by concentrating on a particular concrete domain: Conway’s Game of Life. This is a natural setting for studying embedded agency because it is a deterministic environment with no pre-specified Cartesian boundaries, which is rich enough to support emergent goal-directed behavior, yet simple enough to define the concepts above explicitly.

Examples

Before getting to the definitions, let’s look at how we might draw analogies between some of the examples of systems (including optimizing systems) from the Ground of Optimization post and structures in the Game of Life.

| The Ground of Optimization | Game of Life | Optimizing system? |
| --- | --- | --- |
| Bottle cap | Block | No |
| Satellite in orbit | Glider | No |
| Ball in a valley | Eater | Yes |
| Ball in a valley with robot | Mobile eater (hypothetical) | Yes |

A block is like a bottle cap in that it has been designed (or selected) to stay in place and not spontaneously disintegrate, but it does not robustly produce more specific outcomes than simply existing, and can easily be perturbed away from this state.

A glider is like a satellite in orbit: it can be redirected but does not recover its original trajectory on perturbation.

An eater is like a ball in a valley in the sense that it ends up in the same state from a variety of starting configurations. This is the state with the eater alone on the board, analogous to the state with the ball at the bottom of the valley.

We can imagine a hypothetical "mobile eater" that walks around looking for other patterns to consume. This would be more robust than the regular eater, similarly to a ball in a valley with a robot, which is more robust than just a ball in a valley.

EDIT: Note that any finite pattern in Life (such as the empty board $\bot$) is robust to introducing non-viable collections of cells in the empty areas of the pattern. We originally thought that this would make the empty board an optimizing system, but by this criterion any finite pattern is an optimizing system, which is not very interesting.

Preliminary Definitions

Like any embedded setting, Life does not come with a privileged Cartesian boundary. Instead we will define an operation, instantiation, that combines an agent with an environment, and thereby substantiates counterfactual questions such as “What would this agent do in a different context?” that are otherwise meaningless in a deterministic non-Cartesian world.

What kinds of things are agents and environments? We start with a very general mathematical object, a pattern, which we define as simply a state of the Game of Life world. That is, a pattern is an infinite two-dimensional Boolean grid, or equivalently a function of type ℤ×ℤ → {true, false}, indicating which cells are alive and which are dead. A pattern is finite if it has only finitely many cells alive.

We represent an agent as a finite pattern and an environment as a context (formally defined as a pattern). Thus, agents and environments have the same type signature, since they are made of the same "stuff" in an embedded setting.

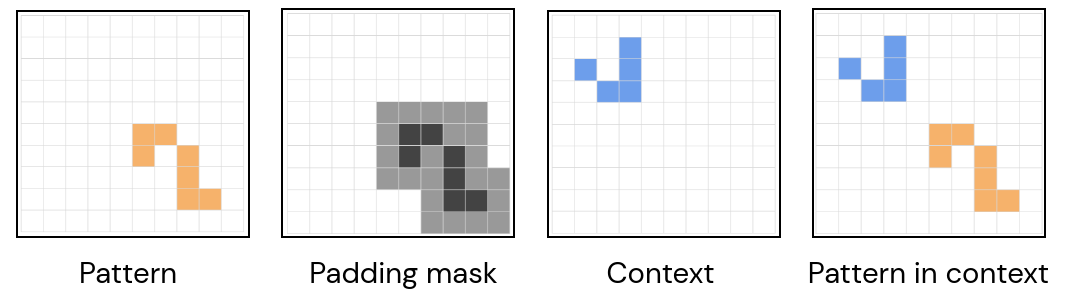

To put the two together, we make use of a third concept, also formally represented by a pattern, which we call a mask, which specifies which parts of the context are the “holes” the agent is supposed to fit into (and replace whatever else was there). As mentioned above, the operation that combines agent and environment is instantiation:

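Definition. The instantiation of $p$ in context $c$ using mask $m$ is the pattern

$$c_m(p)(i,j) = \begin{cases} p(i,j) & \text{if } m(i,j) \\ c(i,j) & \text{otherwise,} \end{cases}$$

where $c$ is a context, $m$ is a mask, and $p$ is a pattern (the "agent").

Instantiating $p$ in $c$ results in the pattern that is the same as $p$ wherever the mask is true, and the same as $c$ everywhere else. By default we take the mask $m$ to be the padding mask of one cell around all the agent’s live cells: $\mathrm{pad}(p)(i,j) = \exists\, x, y \in \{-1, 0, 1\}.\ p(i+x, j+y)$. When the default mask is used we write the instantiation simply as $c(p)$.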
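As a rough illustration of instantiation, here is a minimal Python sketch. It assumes, purely for convenience, that every pattern (agent, context, and mask) is finite and is stored as a `frozenset` of `(row, column)` coordinates of its true cells; the names `pad` and `instantiate` are chosen for this sketch and are not taken from any Life library.

```python
from itertools import product

def pad(p):
    """Padding mask: all cells within one step (diagonals included) of a live cell of p."""
    return frozenset((i + x, j + y)
                     for (i, j) in p
                     for x, y in product((-1, 0, 1), repeat=2))

def instantiate(c, p, m=None):
    """Pattern that equals p wherever mask m is true and equals c everywhere else."""
    if m is None:
        m = pad(p)  # default mask: one-cell padding around the agent
    return frozenset(p & m) | frozenset(c - m)

# A 2x2 block as the agent, placed in a context with two live cells.
block = frozenset({(0, 0), (0, 1), (1, 0), (1, 1)})
context = frozenset({(1, 2), (5, 5)})
combined = instantiate(context, block)
print(sorted(combined))
```

Here the context cell at `(1, 2)` falls inside the padding mask and is overwritten by the agent's (dead) cell, while the cell at `(5, 5)` lies outside the mask and survives.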

In any deterministic discrete dynamical system, if we have an operation like instantiation that can combine two states of the system to produce another, then we can similarly represent potential agents and their surroundings by system states. This might allow these definitions to be generalized to other settings besides the Game of Life.

We’ll use the following notation for computations and properties in discrete dynamical systems:

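- Given a state $p$ (we use $p$ because our Life states are patterns), $\mathrm{step}(p)$ is one step of evolution according to the system’s dynamics.

- The sequence $p, \mathrm{step}(p), \mathrm{step}(\mathrm{step}(p)), \ldots$, i.e., $n \mapsto \mathrm{step}^n(p)$, is the computation seeded at $p$ (or a “trajectory” in dynamical systems terminology). We treat a computation $s$ as a function from step counts to states, so $s(n) = \mathrm{step}^n(p)$.

- A property is a set of states (patterns).

- A property $P$ is achieved by a computation $s$ if there exists some number of steps $n$ such that $s(n) \in P$. A property $P$ is fixed by a computation $s$ if $s(n) \in P$ for all $n$ above some bound.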
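The notation above can be sketched in Python as follows. This is an illustrative sketch under two assumptions that are not part of the definitions: patterns are finite sets of live `(row, column)` cells, and "achieves" is only checked up to a finite horizon, so a `False` result means "not achieved within the horizon" rather than "never achieved". Properties are passed as predicates because a property may be an infinite set of patterns.

```python
from collections import Counter

def step(p):
    """One Life generation for a finite set of live cells."""
    counts = Counter((i + x, j + y)
                     for (i, j) in p
                     for x in (-1, 0, 1)
                     for y in (-1, 0, 1)
                     if (x, y) != (0, 0))
    # Birth on exactly 3 live neighbours, survival on 2 or 3.
    return frozenset(cell for cell, n in counts.items()
                     if n == 3 or (n == 2 and cell in p))

def computation(p, horizon):
    """Yield p, step(p), ..., step^horizon(p)."""
    for _ in range(horizon + 1):
        yield p
        p = step(p)

def achieves(p, prop, horizon):
    """Bounded check: some state within `horizon` steps satisfies the predicate `prop`."""
    return any(prop(q) for q in computation(p, horizon))

horizontal = frozenset({(0, -1), (0, 0), (0, 1)})   # blinker, phase 1
vertical = frozenset({(-1, 0), (0, 0), (1, 0)})     # blinker, phase 2
print(achieves(horizontal, lambda q: q == vertical, horizon=1))
```

Checking that a property is fixed would additionally require showing that it holds at every step beyond some bound, which a finite simulation can only confirm when the computation is known to become periodic.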

Robustness

Defining robustness

We have defined patterns very generally. Which patterns are optimizing systems? As Flint noted, an optimizing system has a measure of robustness to perturbations. We can characterize this formally by considering the optimization target as a set of states (target configurations), and the set of possible contexts in which a pattern might be placed.

Definition (robustness):

A pattern $p$ is robust for $P$ within $C$ iff for all $c \in C$, the computation seeded at $c(p)$ achieves $P$.

In this way, the variation within $C$ represents perturbations the system faces, and can recover from, when optimizing for the target configuration represented by $P$.
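To make the robustness definition concrete, the following Python sketch checks it by brute force. It rests on several simplifying assumptions that are ours, not the definition's: patterns are finite sets of live cells, the context set and the target property are finite collections, the default padding mask is used, and "achieves" is tested only up to a fixed horizon. The helper names are hypothetical.

```python
from collections import Counter
from itertools import product

def step(p):
    """One Life generation for a finite set of live cells."""
    counts = Counter((i + x, j + y) for (i, j) in p
                     for x, y in product((-1, 0, 1), repeat=2) if (x, y) != (0, 0))
    return frozenset(cell for cell, n in counts.items()
                     if n == 3 or (n == 2 and cell in p))

def pad(p):
    """One-cell padding mask around the live cells of p."""
    return frozenset((i + x, j + y) for (i, j) in p
                     for x, y in product((-1, 0, 1), repeat=2))

def instantiate(c, p):
    """Place agent p into context c using the default padding mask."""
    return frozenset(p) | frozenset(c - pad(p))

def is_robust(p, target, contexts, horizon):
    """Bounded check: for every context c, c(p) reaches a state in `target` within `horizon` steps."""
    for c in contexts:
        q = instantiate(c, p)
        for _ in range(horizon + 1):
            if q in target:
                break
            q = step(q)
        else:
            return False  # this context never reached the target within the horizon
    return True

block = frozenset({(0, 0), (0, 1), (1, 0), (1, 1)})
far = [frozenset(), frozenset({(5, 5)}), frozenset({(-4, 7)})]  # lone, distant cells
near = [frozenset({(1, 3)})]                                     # lone cell two columns away
print(is_robust(block, {block}, far, horizon=5))    # True
print(is_robust(block, {block}, near, horizon=50))  # False
```

In the first context set each lone cell dies after one step and leaves the block alone on the board. In the second, the nearby cell turns the block into a beehive, which is a still life, so the block-only state is never reached.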

Examples:

- Eater. An eater $p$ is robust for $P = \{p\}$ within any context set $C$ whose contexts contain gliders traveling in the direction of the eater (and nothing else on the board). In these contexts, the eater eventually achieves a board empty apart from itself.

- Periodic patterns. An oscillator or spaceship $p$ with period $N$ is robust for $P_n = \{q \mid q \sim \mathrm{step}^n(p)\}$ (for any $n$) within the empty board ($C = \{\bot\}$) (where $\sim$ is equivalence up to translation). This includes still lifes ($N = 1$), blinkers ($N = 2$), gliders ($N = 4$), etc.
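The periods quoted for these patterns can be checked with a short simulation. The sketch below assumes finite patterns stored as sets of live `(row, column)` cells and compares states up to translation by shifting each one so that its bounding box starts at the origin; `normalize` and `period` are names invented for this example.

```python
from collections import Counter

def step(p):
    """One Life generation for a finite set of live cells."""
    counts = Counter((i + x, j + y) for (i, j) in p
                     for x in (-1, 0, 1) for y in (-1, 0, 1) if (x, y) != (0, 0))
    return frozenset(cell for cell, n in counts.items()
                     if n == 3 or (n == 2 and cell in p))

def normalize(p):
    """Translate p so its bounding box starts at (0, 0); equal results mean equal up to translation."""
    if not p:
        return frozenset()
    i0 = min(i for i, _ in p)
    j0 = min(j for _, j in p)
    return frozenset((i - i0, j - j0) for i, j in p)

def period(p, max_steps=100):
    """Smallest n >= 1 with step^n(p) equal to p up to translation, or None if not found."""
    start, q = normalize(p), p
    for n in range(1, max_steps + 1):
        q = step(q)
        if normalize(q) == start:
            return n
    return None

block = frozenset({(0, 0), (0, 1), (1, 0), (1, 1)})
blinker = frozenset({(0, -1), (0, 0), (0, 1)})
glider = frozenset({(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)})
print(period(block), period(blinker), period(glider))  # 1 2 4
```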

Our use of contexts to represent perturbations is a little different from the intuitive notion. In particular, we do not directly consider perturbations that happen during the computation, that is, interventions on the state of the board at some step after the initial state $c(p)$. One could consider this kind of external perturbation in an alternative definition, which may also be illuminating. An advantage of our approach is that it recognises that many perturbations can be achieved within the Game of Life computation itself – one might call these embedded perturbations. Specifically, one can include in $C$ a context $c$ that contains a pattern that is “going to perturb $p$ after $k$ timesteps” (e.g., a glider that is going to collide with $p$ after $k$ timesteps).

The more robust a system is, and the more restrictive its target is, the more it seems like an optimizing system. These two axes correspond to the “size” of the two components of our formal robustness definition: the contexts $C$ and the target $P$. If $C$ is “larger”, the system is robust to more variation, and if $P$ is “smaller”, the target is more restrictive. We will leave quantification of size unspecified for now, since there are various candidate definitions but we haven’t found a clearly correct one yet.

Definitions building on robustness

Definition (basin of attraction):

The basin of attraction for a pattern $p$ and a property $P$ is the largest context set $B$ such that $p$ is robust for $P$ within $B$.

Examples:

- Eater. Let $p$ be an eater and $P = \{p\}$. $B$ is a superset of the context set containing gliders moving in the direction of the eater and nothing else.

- Any pattern. Let $p$ be an arbitrary pattern and $P = \{c(p) \mid c \text{ is a context}\}$. Then $B$ is the set of all contexts: $P$ is achieved immediately by $c(p)$.

Definition (minimal property):

If we keep $C$ fixed and vary $P$ instead, we can define the minimal property of a pattern $p$ within a context set $C$ as the "smallest" property $P$ such that $p$ is robust for $P$ within $C$.

We will discuss some options for quantifying the size of a property in the next section. For now, we consider some examples of minimal properties using set cardinality as the measure of size.

Examples:

- Still life. Let $p$ be a still life and $C$ be the set of still lifes not overlapping with $p$. Then $P = \{\text{still life } q \mid q = c(p) \text{ for some } c \in C\}$ (since $c(p)$ is a still life that is different for every context).

- Eater. Let $p$ be an eater and $C$ be the context set containing gliders moving in the direction of the eater. Then $P = \{p\}$.

The concept of a minimal property is related to the idea of a behavioral objective: a goal that a system appears to be optimizing for given its behavior in a set of situations. Given a pattern $p$ and a context set $C$, the set of properties that $p$ is robust for within $C$ corresponds to the set of possible behavioral objectives for $p$ within the set of situations $C$. We may be interested in the simplest behavioral objectives, corresponding to the set of minimal properties that $p$ is robust for within $C$.

Options for robustness definitions

How might we quantify size in our definitions above? Primarily, we seek a notion of size for a property, which is a set of patterns. Set cardinality is one option, which ignores the contents of the patterns, counting each of them equally. Another option would be to combine (e.g., take an average of) sizes of the constituent patterns. Natural possibilities in Life for the size of a pattern include number of live cells or size of the smallest rectangle bounding the live cells. A different option, that may capture the sense needed better, is a complexity-based definition such as Kolmogorov complexity (of the whole property) or Levin complexity. It remains to be worked out whether any of these notions of size give our definitions above a natural semantics, or whether we need a different notion of size.
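Some of the candidate size measures are easy to write down for finite patterns. The sketch below is illustrative only: it assumes patterns are finite sets of live `(row, column)` cells and properties are finite sets of patterns, and the function names are hypothetical. The complexity-based measures are not covered, since Kolmogorov complexity is not computable in general.

```python
def live_cells(p):
    """Size of a pattern as its number of live cells."""
    return len(p)

def bounding_box_area(p):
    """Size of a pattern as the area of the smallest rectangle containing its live cells."""
    if not p:
        return 0
    rows = [i for i, _ in p]
    cols = [j for _, j in p]
    return (max(rows) - min(rows) + 1) * (max(cols) - min(cols) + 1)

def property_size(prop, pattern_size=None):
    """Size of a finite, non-empty property: its cardinality, or the average of a per-pattern size."""
    if pattern_size is None:
        return len(prop)
    return sum(pattern_size(q) for q in prop) / len(prop)

block = frozenset({(0, 0), (0, 1), (1, 0), (1, 1)})
glider = frozenset({(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)})
prop = {block, glider}
print(property_size(prop))                     # cardinality
print(property_size(prop, live_cells))         # average live-cell count
print(property_size(prop, bounding_box_area))  # average bounding-box area
```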

We defined robustness in terms of achieving a property. We could have defined it instead in terms of fixing a property, which is a stronger condition (any computation that fixes a property also achieves it, but not vice versa). However, the two definitions are equivalent if we restrict attention to stable properties that satisfy $\mathrm{step}(p) \in P$ whenever $p \in P$. We can stabilize a property by unioning it with all the states any elements produce after any number of steps.

Retargetability

The orthogonality thesis states that “more or less any level of intelligence could in principle be combined with more or less any final goal”, suggesting the idea that the capability to achieve goals in general (intelligence) is separate from the particular goal being pursued. Not all optimizing systems satisfy this separation, as Flint’s examples show, but those that do should score more highly on his measures of duality and retargetability. We think duality and retargetability are hard-to-distinguish concepts, and will focus on the latter.

To get more precise about retargetability, let’s use the definition of robustness above for the aspect of retargetability that requires a notion of goal pursuit.

Definition (retargetability):

A pattern $p$ is retargetable for a set $G$ of properties (the “possible goals”) if there exists a context set $C$, such that for any property $P_i$ in $G$ there is a pattern $p_i$ that is a “small” change from $p$, such that $p_i$ is robust for $P_i$ within $C$.

The degree of retargetability depends on the size of the set $G$ (more, or more interesting, possible goals are better), the size of the changes (smaller, or less complex changes required for retargeting are better), and the size of the context set $C$ (larger is better).

This definition is again dependent on a way to measure sizes, for example, the size of the change between $p$ and $p_i$. Some candidates include: Kolmogorov complexity of the change, the number of cells changed, and the size of the area in which changes are made.
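Two of these candidate measures of change are simple to compute for finite patterns. The sketch below assumes patterns are sets of live `(row, column)` cells and interprets "the area in which changes are made" as the bounding rectangle of the changed cells, which is one possible reading rather than a settled definition; the function names are hypothetical.

```python
def cells_changed(p, q):
    """Number of cells whose state differs between patterns p and q."""
    return len(p ^ q)

def changed_area(p, q):
    """Area of the smallest rectangle containing every cell that differs between p and q."""
    diff = p ^ q
    if not diff:
        return 0
    rows = [i for i, _ in diff]
    cols = [j for _, j in diff]
    return (max(rows) - min(rows) + 1) * (max(cols) - min(cols) + 1)

# Rotating a blinker by 90 degrees about its centre changes four cells
# spread over a 3x3 area.
horizontal = frozenset({(0, -1), (0, 0), (0, 1)})
vertical = frozenset({(-1, 0), (0, 0), (1, 0)})
print(cells_changed(horizontal, vertical), changed_area(horizontal, vertical))  # 4 9
```

The example also shows why the measures can disagree: a rotation is a short description (low complexity) even when it changes many cells over a large area, as with the glider gun example below.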

Examples:

- Glider gun. Let $p$ be a glider gun positioned at the origin $(0,0)$ on the board. Let the target set of goals be $G = \{P_1, P_2, P_3, P_4\}$, where $P_i$ is the property that there is a glider in the $i$th quadrant of the board. Namely, $P_i$ is the set of patterns that contain a glider $g$ within the $i$th quadrant of the board.

Then for any $P_i$, we obtain $p_i$ by rotating the glider gun to fire into the quadrant $i$, which is a small change by the complexity definition. Let $C$ be the set of still life contexts that don't overlap with the glider gun or its firing path for any of the four rotations of the glider gun. Then $p_i$ is robust for $P_i$ within $C$, so $p$ is retargetable for the target set $G$.

- Turing machine. Let $p$ be a pattern implementing a Turing machine computing some function $f$, e.g., "given input $x$, compute $x + 1$". For any input $x$, let $P_x$ be the set of board states where the output tape of the Turing machine contains $f(x)$. Let the target set of goals be $G = \{P_x \mid x \text{ is a possible input}\}$.

Then for any $P_x$ we can obtain $p_x$ by placing $x$ on the input tape of the Turing machine, which is a small change by all the definitions of size we considered (number of cells, size of area, and complexity). Let $C$ be the set of still life contexts that don't overlap with the Turing machine. Then $p_x$ is robust for $P_x$ within $C$, so $p$ is retargetable for the target set $G$.

Our setup suggests a possible relationship between robustness and retargetability: it may be difficult for a pattern to be both robust and retargetable. A retargetable pattern needs to be “close to” robustly achieving many targets, but this may be in tension with robustly achieving a single target property. The reason is that a context may cause retargeting via an embedded perturbation, and the new target property may not overlap with the original target property. For example, since the Turing machine is retargetable by changing the input, it's not robust to contexts that change its input.

Conclusions and open questions

We have proposed some definitions for robustness and retargetability in Conway’s Game of Life, and shown examples of how they work. Our definitions are not fully specified - they lack a good specification of how to quantify sizes of patterns and sets of patterns. We hope they nevertheless illustrate an interesting way of looking at optimizing systems in a concrete deterministic setting.

Here are some open questions that we would be excited to get your input on:

- To what extent is there a tradeoff between robustness and retargetability?

- Is robustness or retargetability of a system a greater concern from the alignment perspective?

- It seems feasible to extend our definitions from the Game of Life to other environments where instantiation can be defined. We'd be interested in your suggestions of interesting environments to consider.

- More examples of interesting robust patterns. What could they tell us about the properties that C should have in the definition of robustness?

- Possible theorems restricting the size or content of robust patterns. E.g., for some class of contexts do you need to be “agent-like” in some way, such as doing something like perception, in order to be robust?

- No-free-lunch type theorems on what kinds of combinations of context set C and property P are impossible for any robust pattern.

16 comments

Comments sorted by top scores.

comment by Edouard Harris · 2021-10-20T21:13:20.335Z · LW(p) · GW(p)

Loved this post. This whole idea of using a deterministic dynamical system as a conceptual testing ground feels very promising.

A few questions / comments:

- About the examples: do you think it's strictly correct to say that entropy / death is an optimizing system? One of the conditions of the Flint definition is that the set of target states ought to be substantially smaller than the basin of attraction, by some measure on the configuration space. Yet neither high entropy nor death seem like they satisfy this: there are too many ways to be dead, and (tautologically) too many ways to have high entropy. As a result, both the "dead" property and the "high-entropy" property make up a large proportion of the attraction basin. The original post makes a similar point, though admittedly there is some degree of flexibility in terms of how big the target state set has to be before you call the system an optimizer.

- Not sure if this is a useful question, but what do you think of using "macrostate" as opposed to "property" to mean a set of states? This term "macrostate" is used in statistical physics for the identical concept, and as you're probably aware, there may be results from that field you'd be able to leverage here. (The "size" of a macrostate is usually thought of as its entropy over states, and this seems like it could fit into your framework as well. At first glance it doesn't seem too unreasonable to just use a flat prior over grid configurations, so this just ends up being the log of the state count.)

- I like the way embedded perturbations have been defined too. External perturbations don't seem fundamentally different from embedded ones (we can always just expand our configuration space until it includes the experimenter) but keeping perturbations "in-game" cuts out those complications while keeping the core problem in focus.

- The way you're using $C$ and $P$ as a way to smoothly vary the "degree" of optimization of a system is very elegant.

- Do you imagine keeping the mask constant over the course of a computational rollout? Plausibly as you start a computation some kinds of agents may start to decohere as they move outside the original mask area and/or touch and merge with bits of their environments. E.g., if the agent is a glider, does the mask "follow" the agent? Or are you for now mostly considering patterns like eaters that stay in one place?

↑ comment by Ramana Kumar (ramana-kumar) · 2021-10-26T09:31:01.581Z · LW(p) · GW(p)

Nice comment - thanks for the feedback and questions!

- I think the specific example we had in mind has a singleton set of target states: just the empty board. The basin is larger: boards containing no groups of more than 3 live cells. This is a refined version of "death" where even the noise is gone. But I agree with you that "high entropy" or "death", intuitively, could be seen as a large target, and hence maybe not an optimization target. Perhaps compare to the black hole.

- Great suggestion - I think the "macrostate" terminology may indeed be a good fit / worth exploring more.

- Thanks! I think there are probably external perturbations that can't be represented as embedded perturbations.

- Thanks!

- The mask applies only at the instant of instantiation, and then is irrelevant for the rest of the computation, in the way we've set things up. (This is because once you've used the mask to figure out what the initial state for the computation is, you then have just an ordinary state to roll out.) If we wanted to be able to find the agent again later on in the computation then yes indeed some kind of un-instantiation operation might need a mask to do that - haven't thought about it much but could be interesting.

↑ comment by Edouard Harris · 2021-10-27T18:01:43.331Z · LW(p) · GW(p)

Thanks! I think this all makes sense.

- Oh yeah, I definitely agree with you that the empty board would be an optimizing system in the GoL context. All I meant was that the "Death" square in the examples table might not quite correspond to it in the analogy, since the death property is perhaps not an optimization target by the definition. Sorry if that wasn't clear.

- :)

- Got it, thanks! So if I've understood correctly, you are currently only using the mask as a way to separate the agent from its environment at instantiation, since that is all you really need to do to be able to define properties like robustness and retargetability in this context. That seems reasonable.

↑ comment by Vika · 2021-10-29T13:18:10.511Z · LW(p) · GW(p)

- Actually, we realized that if we consider an empty board an optimizing system, then any finite pattern is an optimizing system (because it's similarly robust to adding non-viable collections of live cells), which is not very interesting. We have updated the post to reflect this.

↑ comment by Edouard Harris · 2021-11-01T19:12:57.300Z · LW(p) · GW(p)

Great catch. For what it's worth, it actually seems fine to me intuitively that any finite pattern would be an optimizing system for this reason, though I agree most such patterns may not directly be interesting. But perhaps this is a hint that some notion of independence or orthogonality of optimizing systems might help to complete this picture.

Here's a real-world example: you could imagine a universe where humans are minding their own business over here on Earth, while at the same time, over there in a star system 20 light-years away, two planets are hurtling towards each other under the pull of their mutual gravitation. No matter what humans may be doing on Earth, this universe as a whole can still reasonably be described as an optimizing system! Specifically, it achieves the property that the two faraway planets will crash into each other under a fairly broad set of contexts.

Now suppose we describe the state of this universe as a single point in a gargantuan phase space — let's say it's the phase space of classical mechanics, where we assign three positional and three momentum degrees of freedom to each particle in the universe (so if there are N particles in the universe, we have a 6N-dimensional phase space). Then there is a subspace of this huge phase space that corresponds to the crashing planets, and there is another, orthogonal subspace that corresponds to the Earth and its humans. You could then say that the crashing-planets subspace is an optimizing system that's independent of the human-Earth subspace. In particular, if you imagine that these planets (which are 20 light-years away from Earth) take less than 20 years to crash into each other, then the two subspaces won't come into causal contact before the planet subspace has achieved the "crashed into each other" property.

Similarly on the GoL grid, you could imagine having an interesting eater over here, while over there you have a pretty boring, mostly empty grid with just a single live cell in it. If your single live cell is far enough away from the eater that the two systems do not come into causal contact before the single cell has "died" (if the lone live cell is more than 2 cells away from any live cell of the eater system, for example) then they can imo be considered two independent optimizing systems.

Of course the union of two independent optimizing systems will itself be an optimizing system, and perhaps that's not very interesting. But I'd contend that the reason it's not very interesting is that very property of causal independence — and that this independence can be used to resolve our GoL universe into two orthogonal optimizers that can then be analyzed separately (as opposed to asserting that the empty grid isn't an optimizing system at all).

Actually, that also suggests an intriguing experimental question. Suppose Optimizer A independently achieves Property X, and Optimizer B independently achieves Property Y in the GoL universe. Are there certain sorts of properties that tend to be achieved when you put A and B in causal contact?

comment by Oscar_Cunningham · 2021-10-17T10:05:03.979Z · LW(p) · GW(p)

A good source for the technology available in the Game of Life is the draft of Nathaniel Johnston and Dave Greene's new book "Conway’s Game of Life: Mathematics and Construction".

↑ comment by Ramana Kumar (ramana-kumar) · 2021-10-29T13:16:39.548Z · LW(p) · GW(p)

Thanks! I'd had a bit of a look through that book before and agree it's a great resource. One thing I wasn't able to easily find is examples of robust patterns. Does anyone know if there's been much investigation of robustness in the Life community? The focus I've seen seems to be more on particular constructions (each used in its entirety as the initial state for a computation), rather than on how patterns fare when placed in various ranges of different contexts.

↑ comment by Oscar_Cunningham · 2021-10-30T11:40:45.589Z · LW(p) · GW(p)

Some related discussions:

1. https://www.conwaylife.com/forums/viewtopic.php?f=2&t=979
2. https://www.conwaylife.com/forums/viewtopic.php?f=7&t=2877
3. https://www.conwaylife.com/forums/viewtopic.php?p=86140#p86140

My own thoughts.

- Patterns in GoL are generally not robust. Typically changing anything will cause the whole pattern to disintegrate in a catastrophic explosion and revert to the usual 'ash' of randomly placed small still lifes and oscillators along with some escaping gliders.

- The pattern Eater 2 can eat gliders along 4 adjacent lanes.

- The Highway Robber can eat gliders travelling along a lane right at the edge of the pattern, such that gliders on the next lane pass by unaffected. So one can use several staggered highway robbers to make a wall which eats any gliders coming at it from a given direction along multiple adjacent lanes. The wall will be very oblique and will fail if two gliders come in on the same lane too close together.

- The block is robust to deleting any one of its live cells, but is not robust to placing single live cells next to it.

- The maximum speed at which GoL patterns can propagate into empty space is 1 cell every 2 generations, measured in the L_1 norm. Spaceships which travel at this speed limit (such as the glider, XWSS, and Sir Robin) are therefore robust to things happening behind them, in the sense that nothing can catch up with them.

- It's long been hypothesised that it should be possible to make a pattern which can eat any single incoming glider. My method for doing this would be to design a wall around the pattern which is designed to fall apart in a predictable way whenever it is hit by a glider. This collapse would then trigger construction machinery on the interior of the pattern that rebuilds the wall. The trick would be to make sure that the collapse of the wall didn't emit any escaping gliders and that its debris didn't depend on where the glider hit it. That way the construction machinery would have a reliable blank slate on which to rebuild.

- If one did have a pattern with the above property that it could eat any glider that hit it, one could then arrange several copies of this pattern in a ring around any other pattern to make it safe from any single glider. Of course such a pattern would not be safe against other perturbations, and the recovery time would be so slow that it would not even be safe against two gliders a million generations apart.

- It's an open problem whether there exists a pattern that recovers if any single cell is toggled.

- I think the most promising approach is the recently discovered methods in this thread. These methods are designed to clear large areas of the random ash that Life tends to evolve into. One could use these methods to create a machine that clears the area around itself and then builds copies of itself into the cleared space. As this repeated, it would spread copies of itself across the grid. The replicators could build walls of random ash between themselves and their children, so that if one of them explodes the explosion does not spread to all copies. If one of these copies hit something it couldn't deal with, it would explode (hopefully also destroying the obstruction) and then be replaced by a new child of the replicators behind it. Thus such a pattern would be truly robust. If one wanted the pattern to be robust and not spread, one could make every copy keep track of its coordinates relative to the first copy, and not replicate if it was outside a certain distance. I think this would produce what you desire: a bounded pattern that is robust to many of the contexts it could be placed in. However, there are many details still to be worked out. The main problem is that the above cleaning methods are not guaranteed to work on every arrangement of ash. So the question is whether they can clear a large enough area before they hit something that makes them explode. We only need each replicator to succeed often enough that their population growth rate is positive.

comment by romeostevensit · 2021-10-18T15:37:34.880Z · LW(p) · GW(p)

Defining a distance function between two patterns might yield some interesting stuff and allow some porting in of existing math from information theory. There is also the dynamic case (converging and diverging distances) between different patterns. Seems like it could play into robustness, e.g., sensitivity of patterns to flipping from convergent to divergent state.

comment by Alex_Altair · 2022-12-11T00:05:00.239Z · LW(p) · GW(p)

I feel very on-board with this research aesthetic.

Here are just some nit-picks/notational confusions I had while reading this:

- The sequence $p, \mathrm{step}(p), \mathrm{step}(\mathrm{step}(p)), \ldots$, i.e., $n \mapsto \mathrm{step}^n(p)$, is the computation seeded at $p$ (or a “trajectory” in dynamical systems terminology).

...

- A property $P$ is achieved by a computation s if there exists some number of steps $n$ such that $s(n) \in P$ ...

It took me a second to figure out what $s(n)$ referred to, partly because the first s was not rendered in LaTeX, partly because it was never shown as a function before, and partly because it looked kinda like $\mathrm{step}$, so I thought maybe the notation had changed.

the empty board

I've seen as "false" before, but I don't think it's super common, and you also previously said

a pattern is an infinite two-dimensional Boolean grid, or equivalently a function of type ℤxℤ→{true, false}

which made this feel like a switchup of notation. (Also, I think the type signature is off? The empty board should be a function, but instead it's a set containing one symbol...)

This includes still lifes (), blinkers ()

I think if blinkers have period 2 then still lifes have to be considered to have period 1, and not 0.
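A minimal sketch of this point, assuming the period of a pattern is defined as the smallest number of steps n ≥ 1 after which the pattern recurs exactly (the helper names `step` and `period` are hypothetical). Under that definition a period of 0 cannot occur, and a still life comes out as period 1.

```python
from collections import Counter

def step(live):
    """One Game of Life step on a set of live (x, y) cells."""
    counts = Counter((x + dx, y + dy)
                     for x, y in live
                     for dx in (-1, 0, 1)
                     for dy in (-1, 0, 1)
                     if (dx, dy) != (0, 0))
    return {c for c, n in counts.items() if n == 3 or (n == 2 and c in live)}

def period(live, max_steps=100):
    """Smallest n >= 1 with step^n(live) == live, or None if not found."""
    cur = set(live)
    for n in range(1, max_steps + 1):
        cur = step(cur)
        if cur == live:
            return n
    return None

block = {(0, 0), (1, 0), (0, 1), (1, 1)}   # a still life
blinker = {(0, 0), (1, 0), (2, 0)}         # an oscillator
print(period(block), period(blinker))      # 1 2
```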

Eater. An eater p is robust for within any context that contains spaceships traveling in the direction of the eater (and nothing else on the board).

I think the true thing is a lot weaker than this; it's robust to gliders (not all spaceships) traveling along a specific diagonal with respect to the location of the eater (and possibly the glider has to have a certain phase, I'd have to check).

The basin of attraction for a pattern and a property is the largest context set such that is robust for within .

Examples:

- Eater. Let be an eater and . is the context set containing spaceships moving in the direction of the eater and nothing else (in any other context, the contents of the board don't get consumed by the eater).

This is definitely not the largest context set , because there are tons of patterns that extinguish themselves.

Replies from: Vika

comment by Jsevillamol · 2021-10-17T06:25:58.211Z · LW(p) · GW(p)

How might we quantify size in our definitions above?

Random idea: a K-complexity-inspired measure of size for a context / property / pattern.

Least number of squares you need to turn on, starting from an empty board, so that the grid eventually evolves into the context.

It doesn't work for infinite contexts though.
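For small finite targets, this measure can be brute-forced. The sketch below makes several assumptions that are not in the comment: "evolves into" is read as "some state of the computation equals the target up to translation", seeds are restricted to a small `box` × `box` square, and the search is cut off at `max_cells` cells and `max_steps` steps. All names are hypothetical.

```python
from collections import Counter
from itertools import combinations

def step(live):
    """One Game of Life step on a set of live (x, y) cells."""
    counts = Counter((x + dx, y + dy)
                     for x, y in live
                     for dx in (-1, 0, 1)
                     for dy in (-1, 0, 1)
                     if (dx, dy) != (0, 0))
    return {c for c, n in counts.items() if n == 3 or (n == 2 and c in live)}

def normalize(live):
    """Translate a pattern so its bounding box starts at (0, 0)."""
    if not live:
        return frozenset()
    mx = min(x for x, _ in live)
    my = min(y for _, y in live)
    return frozenset((x - mx, y - my) for x, y in live)

def seed_size(target, box=3, max_cells=4, max_steps=10):
    """Fewest live cells in a box-by-box seed whose computation reaches target."""
    goal = normalize(target)
    cells = [(x, y) for x in range(box) for y in range(box)]
    for k in range(max_cells + 1):
        for seed in combinations(cells, k):
            cur = set(seed)
            for _ in range(max_steps + 1):
                if normalize(cur) == goal:
                    return k
                cur = step(cur)
    return None  # nothing found within the search bounds

block = {(0, 0), (1, 0), (0, 1), (1, 1)}
print(seed_size(block))  # 3
```

The block has four live cells but a seed size of 3 under this measure, because three cells in an L shape fill in the missing corner after one step. The search is exponential in the box area, so it is only practical for very small patterns.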

comment by Pattern · 2021-10-17T02:56:01.074Z · LW(p) · GW(p)

An empty board is also an example of an optimizing system that is robust to adding non-viable collections of live cells (e.g., fewer than 3 live cells next to each other).

And the 'bottle cap' example is not (robust to adding cells, or cells colliding* with it)? But if it was, then it would be an 'optimizing system'?

*spreading out, and interacting with it

Replies from: Vika

↑ comment by Vika · 2021-10-29T13:17:02.846Z · LW(p) · GW(p)

Thanks for pointing this out! We realized that if we consider an empty board an optimizing system then any finite pattern is an optimizing system (because it's similarly robust to adding non-viable collections of live cells), which is not very interesting. We have updated the post to reflect this.

The 'bottle cap' example would be an optimizing system if it was robust to cells colliding / interacting with it, e.g. being hit by a glider (similarly to the eater).

Replies from: Pattern

↑ comment by Pattern · 2021-11-01T18:41:51.073Z · LW(p) · GW(p)

We realized that if we consider an empty board an optimizing system then any finite pattern is an optimizing system (because it's similarly robust to adding non-viable collections of live cells)

Ah. I interpreted the statement about the empty board as being one of:

A small random perturbation will probably be non-viable/collapse back to the empty board. (Whereas patterns that are viable don't (necessarily) have this property.)

I then asked whether the bottle cap example had the same robustness.

Replies from: Vika

↑ comment by Vika · 2021-11-08T16:27:32.749Z · LW(p) · GW(p)

Ah I see, thanks for the clarification! The 'bottle cap' (block) example is robust to removing any one cell but not robust to adding cells next to it (as mentioned in Oscar's comment [LW(p) · GW(p)]). So most random perturbations that overlap with the block will probably destroy it.
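The single-cell claims can be checked directly. The sketch below is illustrative only: the helper `recovers` is hypothetical, and "robust" is read narrowly as "the computation returns to the exact original block within `max_steps` steps".

```python
from collections import Counter

def step(live):
    """One Game of Life step on a set of live (x, y) cells."""
    counts = Counter((x + dx, y + dy)
                     for x, y in live
                     for dx in (-1, 0, 1)
                     for dy in (-1, 0, 1)
                     if (dx, dy) != (0, 0))
    return {c for c, n in counts.items() if n == 3 or (n == 2 and c in live)}

def recovers(pattern, target, max_steps=50):
    """True if the computation seeded at pattern reaches target exactly."""
    cur = set(pattern)
    for _ in range(max_steps + 1):
        if cur == target:
            return True
        cur = step(cur)
    return False

block = {(0, 0), (1, 0), (0, 1), (1, 1)}

# Remove each of the four cells in turn.
removals = [recovers(block - {c}, block) for c in sorted(block)]
print(all(removals))  # True

# Add each of the 12 cells bordering the block in turn.
ring = {(x, y) for x in range(-1, 3) for y in range(-1, 3)} - block
additions = {c: recovers(block | {c}, block) for c in sorted(ring)}
print(sum(additions.values()), "of", len(ring), "single-cell additions recover")
```

Removing any one cell leaves three cells that regrow the missing corner on the next step. The addition count is printed rather than stated here, since it depends on the step bound and on requiring the block to reappear in its original position.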