LessWrong 2.0 Reader



"Immunology" and "well-understood" are two phrases I am not used to seeing in close proximity to each other. I think with an "increasingly" in between it's technically true - the field has any model at all now, and that wasn't true in the past, and by that token the well-understoodness is increasing.

But that sentence could also be interpreted as saying that the field is well-understood now, and is becoming even better understood as time passes. And I think you'd probably struggle to find an immunologist who would describe their field as "well-understood".

My experience has been that for most basic practical questions the answer is "it depends", and, upon closer examination, "it depends on some stuff that nobody currently knows". Now that was more than 10 years ago, so maybe the field has matured a lot since then. But concretely, I expect that if you were to go up to an immunologist and say "I'm developing a novel peptide vaccine from the specific abc surface protein of the specific xyz virus. Can you tell me whether this will trigger an autoimmune response due to cross-reactivity?" the answer is going to be something more along the lines of "lol no, run in vitro tests followed by trials (you fool!)" and less along the lines of "sure, just plug it into this off-the-shelf software".

mikbp on Extra Tall Crib

Why must she not be able to climb out(/in) of the crib for napping there?

viliam on If you are assuming Software works well you are dead

In software, network effects are strong. A solution people are already familiar with has an advantage. A solution integrated with other solutions you already use has an advantage (and it is easier to do the integration when both solutions are made by you). You can further lock the users in by e.g. creating a marketplace where people can sell plugins to your solution. Compared to all of this, things like "nice to use" remain merely wishes.

danielfilan on AXRP Episode 31 - Singular Learning Theory with Daniel Murfet

I've added a link to listen on Apple Podcasts.

danielfilan on AXRP Episode 31 - Singular Learning Theory with Daniel Murfet

Sorry - YouTube's taking an abnormally long time to process the video.

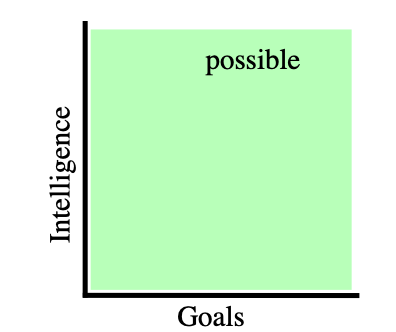

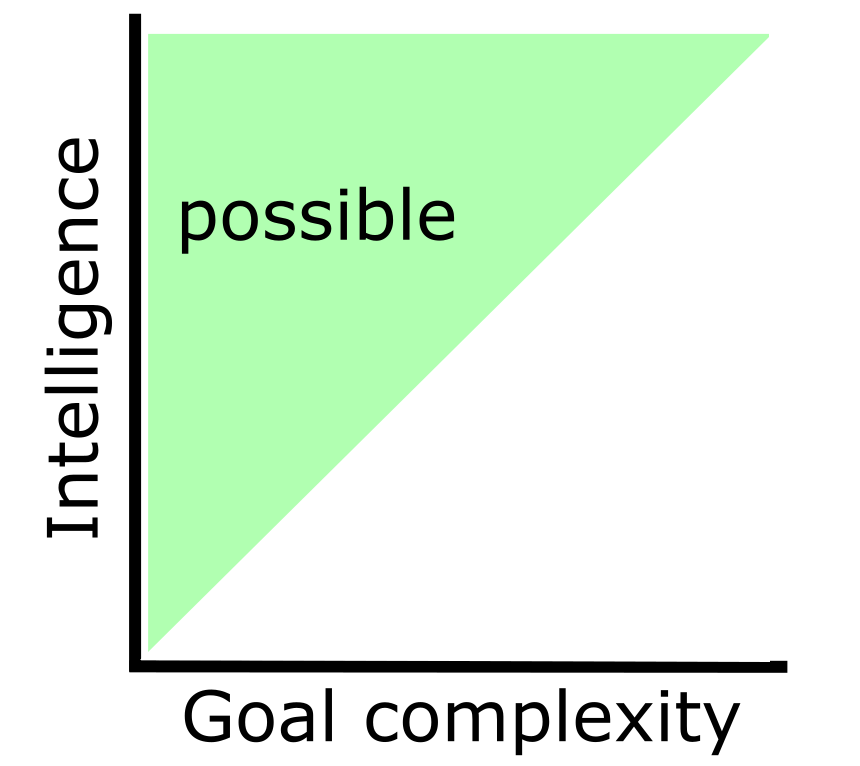

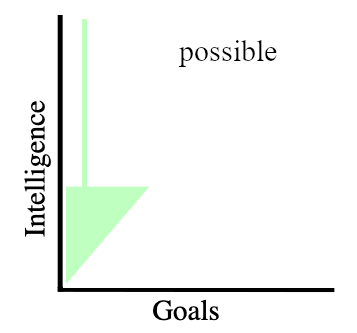

donatas-luciunas on Orthogonality Thesis burden of proof

Orthogonality Thesis

The Orthogonality Thesis asserts that there can exist arbitrarily intelligent agents pursuing any kind of goal.

It basically says that intelligence and goals are independent.

Images from A caveat to the Orthogonality Thesis.

I, on the other hand, claim that any intelligence capable of understanding "I don't know what I don't know" can only seek power (alignment is impossible).

the ability of an AGI to have arbitrary utility functions is orthogonal (pun intended) to what behaviors are likely to result from those utility functions.

As I understand it, you are saying that there are Goals on one axis and Behaviors on the other axis. I don't think the Orthogonality Thesis is about that.

faul_sname on metachirality's Shortform

Yes, that would be cool.

Next to the author name of a post or comment, there's a post-date/time element that looks like "1h 🔗". That is a copyable/bookmarkable link.

declan-molony on Mental Masturbation and the Intellectual Comfort Zone

Another example of Mental Masturbation I decided to exclude from the main text:

Capabilities are likely to cascade once you get to Einstein-level intelligence, not just because an AI will likely be able to form a good understanding of how it works and use this to optimize itself to become smarter[4][5], but also because it empirically seems to be the case that when you’re slightly better than all other humans at stuff like seeing deep connections between phenomena, this can enable you to solve hard tasks like particular research problems much much faster (as the example of Einstein suggests).

- Aka: Around Einstein-level, relatively small changes in intelligence can lead to large changes in what one is capable to accomplish.

OK, but if that were true, then there would have been many more Einstein-like breakthroughs since then. More likely, such low-hanging fruit has been plucked and a similar intellect is well into diminishing returns. That is, given our current technological society and a >50 year history of smart people trying to work on everything, if there are such breakthroughs to be made, then the IQ required is now higher than in Einstein's day.

davidmanheim on Biorisk is an Unhelpful Analogy for AI Risk

I'm arguing exactly the opposite; experts want to make comparisons carefully, and those trying to transmit the case to the general public should, at this point, stop using these rhetorical shortcuts that imply wrong and misleading things.