A framework for thinking about AI power-seeking

post by Joe Carlsmith (joekc) · 2024-07-24T22:41:01.685Z · LW · GW · 15 commentsContents

Prerequisites for rational takeover-seeking Agential prerequisites Goal-content prerequisites Takeover-favoring incentives Recasting the classic argument for AI risk using this framework What if the AI can’t take over so easily, or via so many different paths? None 15 comments

This post lays out a framework I’m currently using for thinking about when AI systems will seek power in problematic ways. I think this framework adds useful structure to the too-often-left-amorphous “instrumental convergence thesis,” and that it helps us recast the classic argument for existential risk from misaligned AI in a revealing way. In particular, I suggest, this recasting highlights how much classic analyses of AI risk load on the assumption that the AIs in question are powerful enough to take over the world very easily, via a wide variety of paths. If we relax this assumption, I suggest, the strategic trade-offs that an AI faces, in choosing whether or not to engage in some form of problematic power-seeking, become substantially more complex.

Prerequisites for rational takeover-seeking

For simplicity, I’ll focus here on the most extreme type of problematic AI power-seeking – namely, an AI or set of AIs actively trying to take over the world (“takeover-seeking”). But the framework I outline will generally apply to other, more moderate forms of problematic power-seeking as well – e.g., interfering with shut-down, interfering with goal-modification, seeking to self-exfiltrate, seeking to self-improve, more moderate forms of resource/control-seeking, deceiving/manipulating humans, acting to support some other AI’s problematic power-seeking, etc.[1] Just substitute in one of those forms of power-seeking for “takeover” in what follows.

I’m going to assume that in order to count as “trying to take over the world,” or to participate in a takeover, an AI system needs to be actively choosing a plan partly in virtue of predicting that this plan will conduce towards takeover.[2] And I’m also going to assume that this is a rational choice from the AI’s perspective.[3] This means that the AI’s attempt at takeover-seeking needs to have, from the AI’s perspective, at least some realistic chance of success – and I’ll assume, as well, that this perspective is at least decently well-calibrated. We can relax these assumptions if we’d like – but I think that the paradigmatic concern about AI power-seeking should be happy to grant them.

What’s required for this kind of rational takeover-seeking? I think about the prerequisites in three categories:

- Agential prerequisites – that is, necessary structural features of an AI’s capacity for planning in pursuit of goals.

- Goal-content prerequisites – that is, necessary structural features of an AI’s motivational system.

- Takeover-favoring incentives – that is, the AI’s overall incentives and constraints combining to make takeover-seeking rational.

Let’s look at each in turn.

Agential prerequisites

In order to be the type of system that might engage in successful forms of takeover-seeking, an AI needs to have the following properties:

- Agentic planning capability: the AI needs to be capable of searching over plans for achieving outcomes, choosing between them on the basis of criteria, and executing them.

- Planning-driven behavior: the AI’s behavior, in this specific case, needs to be driven by a process of agentic planning.

- Note that this isn’t guaranteed by agentic planning capability.

- For example, an LLM might be capable of generating effective plans, in the sense that that capability exists somewhere in the model, but it could nevertheless be the case that its output isn’t driven by a planning process in a given case – i.e., it’s not choosing its text output via a process of predicting the consequences of that text output, thinking about how much it prefers those consequences to other consequences, etc.

- And note that human behavior isn’t always driven by a process of agentic planning, either, despite our planning ability.

- Note that this isn’t guaranteed by agentic planning capability.

- Adequate execution coherence: that is, the AI’s future behavior needs to be sufficiently coherent that the plan it chooses now actually gets executed.

- Thus, for example, it can’t be the case that if the AI chooses some plan now, it will later begin pursuing some other, contradictory priority in a manner that makes the plan fail.

Note that human agency, too, often fails on this condition. E.g., a human resolves to go to the gym every day, but then fails to execute on this plan.[4]

- Takeover-inclusive search: that is, the AI’s process of searching over plans needs to include consideration of a plan that involves taking over (a “takeover plan”).

This is a key place that epistemic prerequisites like “strategic awareness”[5] and “situational awareness [LW · GW]” enter in. That is, the AI needs to know enough about the world to recognize the paths to takeover, and the potential benefits of pursuing those paths.

- Even granted this basic awareness, though, the model’s search over plans can still fail to include takeover plans. We can distinguish between at least two versions of this.

- On the first, the plans in question are sufficiently bad, by the AI’s lights, that they would’ve been rejected had the AI considered them.

- Thus, for example, suppose someone asks you to get them some coffee. Probably, you don’t even consider the plan “take over the world in order to really make sure that you can get this coffee.” But if you did consider this plan, you would reject it immediately.

- This sort of case can be understood as parasitic on the “takeover-favoring incentives” condition below. That is, had it been considered, the plan in question would’ve been eliminated on the grounds that the incentives didn’t favor it. And its badness on those grounds may be an important part of the explanation for why it didn’t even end up getting considered – e.g., it wasn’t worth the cognitive resources to even think about.

- On the second version of “takeover conducive search” failing, the takeover plan in question would’ve actually been chosen by the AI system, had it considered the plan, but it still failed to do so.

- In this case, we can think of the relevant AI as making a mistake by its own lights, in failing to consider a plan. Here, an analogy might be a guilt-less sociopath who fails to consider the possibility of robbing their elderly neighbor’s apartment, even though it would actually be a very profitable plan by their own lights.

- On the first, the plans in question are sufficiently bad, by the AI’s lights, that they would’ve been rejected had the AI considered them.

- Note that if we reach the point where we’re able to edit or filter what sorts of plans an AI even considers, we might be able to eliminate consideration of takeover plans at this stage.

Goal-content prerequisites

Beyond these agential prerequisites, an AI’s motivational system – i.e., the criteria it uses in evaluating plans – also needs to have certain structural features in order for paradigmatic types of rational takeover-seeking to occur. In particular, it needs:

Consequentialism: that is, some component of the AI’s motivational system needs to be focused on causing certain kinds of outcomes in the world.[6]

This condition is important for the paradigm story about “instrumental convergence” to go through. That is, the typical story predicts AI power-seeking on the grounds that power of the relevant kind will be instrumentally useful for causing a certain kind of outcome in the world.

There are stories about problematic AI power-seeking that relax this condition (for example, by predicting that an AI will terminally value a given type of power), but these, to my mind, are much less central.

Note, though, that it’s not strictly necessary for the AI in question, here, to terminally value causing the outcomes in question. What matters is that there is some outcome that the AI cares about enough (whether terminally or instrumentally) for power to become helpful for promoting that outcome.

Thus, for example, it could be the case that the AI wants to act in a manner that would be approved of by a hypothetical platonic reward process, where this hypothetical approval is not itself a real-world outcome. However, if the hypothetical approval process would, in this case, direct the AI to cause some outcome in the world, then instrumental convergence concerns can still get going.

Adequate temporal horizon: that is, the AI’s concern about the consequences of its actions needs to have an adequately long temporal horizon that there is time both for a takeover plan to succeed, and for the resulting power to be directed towards promoting the consequences in question.[7]

Thus, for example, if you’re supposed to get the coffee within the next five minutes, and you can’t take over the world within the next five minutes, then taking over the world isn’t actually instrumentally incentivized.

So the specific temporal horizon required here varies according to how fast an AI can take over and make use of the acquired power. Generally, though, I expect many takeover plans to require a decent amount of patience in this respect.

Takeover-favoring incentives

Finally, even granted that these agential prerequisites and goal-content prerequisites are in place, rational takeover-seeking requires that the AI’s overall incentives favor pursuing takeover. That is, the AI needs to satisfy:

- Rationality of attempting take-over: the AI’s motivations, capabilities, and environmental constraints need to be such that it (rationally) chooses its favorite takeover plan over its favorite non-takeover plan (call its favorite non-takeover plan the “best benign alternative”).

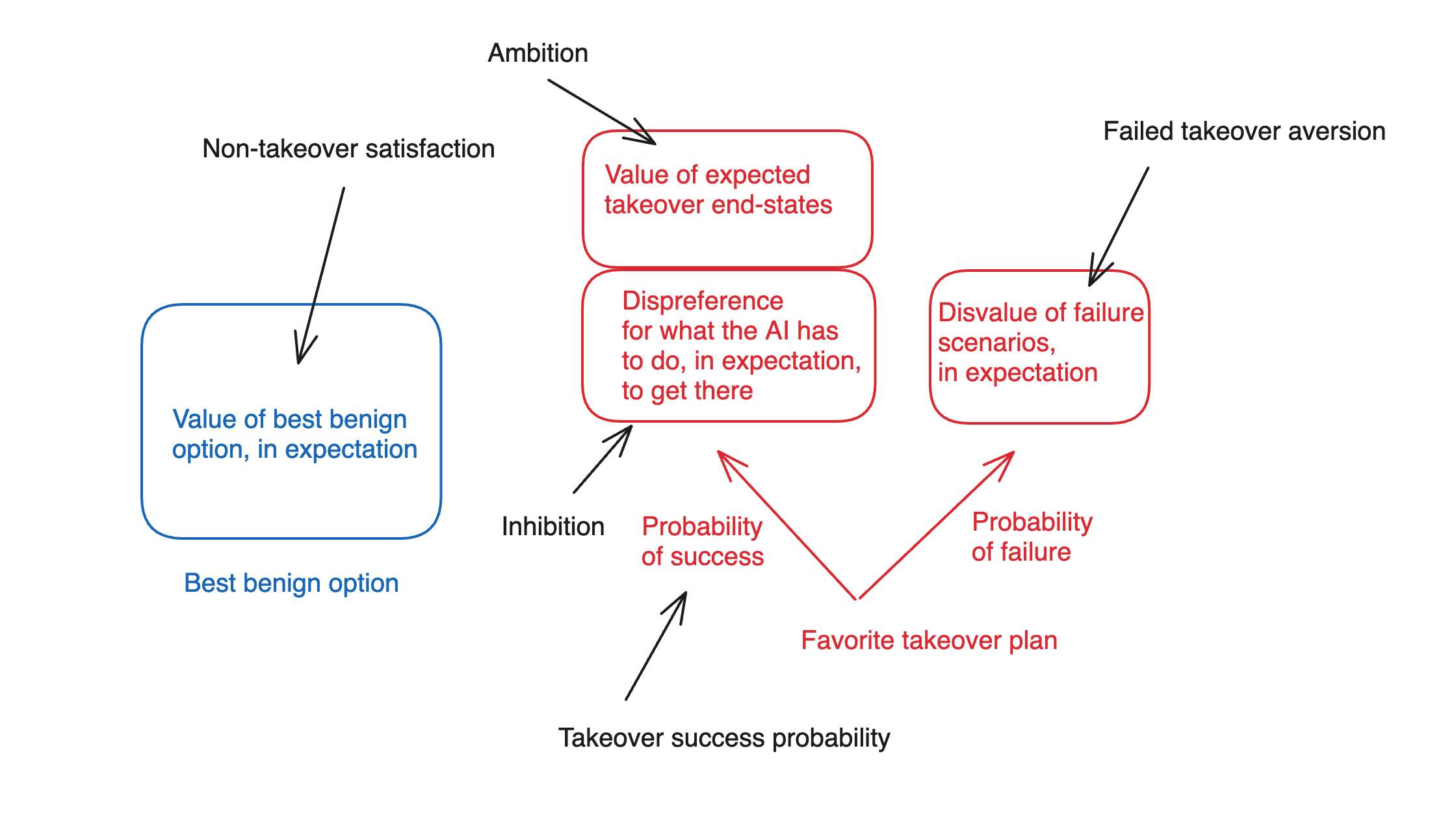

I think about the incentives at stake here in terms of five key factors:

- Non-takeover satisfaction: roughly, how much value the AI places on the best benign alternative.

- Note that we can try to actively increase an AI’s satisfaction, here – and we may have moral reasons to do so as well.

- Ambition: how much the AI values the expected end-state of having-taken-over, conditional on its favorite takeover plan being successful (but setting aside the AI’s attitudes towards what it has to do along the path to takeover).

- Note that this factor depends on what sorts of successful takeover worlds are actually on the table, with what probabilities.

- Thus, for example, if the world that the AI would be taking over would be damaged or defective in various ways, by the AI’s lights, then takeover might be less attractive. (This might be analogous to a case in which, in order to take over country A, country B would need to destroy all of country A’s citizenry, infrastructure, and resources.)

- And note also that to the extent that an AI needs to coordinate with other AIs with different values in order to take over, the expected amount of power/resources available for that AI’s values in particular, post-takeover, probably goes down.

- Note that this factor depends on what sorts of successful takeover worlds are actually on the table, with what probabilities.

- Inhibition: how much the AI disprefers various things it would need to do or cause, in expectation, along the path to achieving take-over, in the various success branches of its favorite take-over plan.

- Inhibitions, here, function in a manner roughly similar to “deontological constraints.” Thus, for example, maybe you’d prefer to have the money in that bank; but you won’t steal it due to an “inhibition” with respect to stealing. And the same can be true of AI systems.

- In principle we could also distinguish between “unendorsed” inhibitions, which an AI might remove or discard if it could, and “endorsed” inhibitions, where it would not. The former might be analogous to a phobia you judge irrational, and the latter might be analogous to an endorsed, deontological policy in favor of honesty. This distinction can matter in certain cases – but the thing that matters most here is the influence that the relevant inhibition exerts on the AI’s decision-making process in the relevant case.

- Take-over success probability: the AI’s estimated likelihood of successfully achieving take-over, if it pursued its favorite takeover plan.

- Failed-takeover aversion: how much the AI disprefers the worlds where it attempts its favorite takeover plan, but fails.

Thus, in a rough diagram:

A few notes on this breakdown.

- Here I am assuming that we can usefully understand the AI’s decision-making process using a roughly expected-value-like calculus. Again, we can query/relax this assumption if we like – but I think that classic arguments for misalignment risk are happy to grant it.

Beyond this, though, I am assuming that we can usefully decompose an AI’s attitudes towards its favorite takeover plan in terms of (a) its attitudes towards the expected end state of executing that plan, and (b) its attitudes towards the actions it would have to take, in expectation, along the way.[8] Admittedly, this is a somewhat janky composition – and if it irks you too much, you can just moosh them together into an overall attitude towards the successful takeover worlds. I wanted to include it, though, because I think “deontology-like prohibitions” on doing the sort of stuff an AI might need to do in order to takeover could well play in an important role in shaping an AI’s takeover-relevant incentives.[9]

Recasting the classic argument for AI risk using this framework

Why do I like this framework? A variety of reasons. But in particular, I think it allows for a productive recasting what I currently see as the classic argument for concern about AI existential risk – e.g., the sort of argument present (even if sometimes less-than-fully-explicitly-laid-out) in Bostrom (2014), and in much of the writing of Eliezer Yudkowsky.

Here’s the sort of recasting I have in mind:

- We will be building AIs that meet the agential prerequisites and the goal-content prerequisites.

We can make various arguments for this.[10] The most salient unifying theme, though, is something like “the agential prerequisites and goal-content prerequisites are part of what we will be trying to build in our AI systems.” Going through the prerequisites in somewhat more detail, though:

Agentic planning capability, planning-driven behavior, and adequate execution coherence are all part of what we will be looking for in AI systems that can autonomously perform tasks that require complicated planning and execution on plans. E.g., “plan a birthday party for my daughter,” “design and execute a new science experiment,” “do this week-long coding project,” “run this company,” and so on. Or put another way: good, smarter-than-human personal assistants would satisfy these conditions, and one thing we are trying to do with AIs is to make them good, smarter-than-human personal assistants.

Takeover-inclusive search falls out of the AI system being smarter enough to understand the paths to and benefits of takeover, and being sufficiently inclusive in its search over possible plans. Again, it seems like this is the default for effective, smarter-than-human agentic planners.

Consequentialism falls out of the fact that part of what we want, in the sort of artificial agentic planners I discussed above, is for them to produce certain kinds of outcomes in the world – e.g., a successful birthday party, a revealing science experiment, profit for a company, etc.

The argument for Adequate temporal horizon is somewhat hazier – partly because it’s unclear exactly what temporal horizon is required. The rough thought, though, is something like “we will be building our AI systems to perform consequentialist-like tasks over at-least-somewhat-long time horizons” (e.g., to make money over the next year), which means that their motivations will need to be keyed, at a minimum, to outcomes that span at least that time horizon.

I think this part is generally a weak point in the classic arguments. For example, the classic arguments often assume that the AI will end up caring about the entire temporal trajectory of the lightcone – but the argument above does not directly support that (unless we invoke the claim that humans will explicitly train AI systems to care about the entire temporal trajectory of the lightcone, which seems unclear.)

- Some of these AIs will be so capable that they will be able to take over the world very easily, with a very high probability of success, via a very wide variety of methods.

- The classic arguments typically focus, here, on a single superintelligent AI system, which is assumed to have gained a “decisive strategic advantage” (DSA) that allows a very high probability of successful takeover. In my post on first critical tries, I call this a “unilateral DSA [LW · GW]” – and I’ll generally focus on it below.

- The dynamics at stake in scenarios in which an AI needs to coordinate with other AI systems in order to take over have received significantly less attention. This seems to me another important weak point in the classic arguments.

- The condition that easy takeover can occur via a wide variety of methods isn’t always stated explicitly, but it plays a role below in addressing “inhibitions” relevant to takeover-seeking, so I am including it explicitly here.

- As I’ll discuss below, I think this premise is in fact extremely key to the classic arguments – and that if we start to weaken it (for example, by making takeover harder for the AI, or only available via a narrower set of paths), the dynamics with respect to whether an AI’s incentives favor taking over become far less clear (and same for the dynamics with respect to instrumental convergence on problematic forms of power-seeking in general).

- I’ll also note that this premise is positing an extremely intense level of capability. Indeed, I suspect that many people’s skepticism re: worries about AI takeover stems, in significant part, from skepticism that these levels of capability will be in play – and that if they really conditioned on premise (2), and took seriously the vulnerability to AI motivations it implies, they would become much more worried.

- The classic arguments typically focus, here, on a single superintelligent AI system, which is assumed to have gained a “decisive strategic advantage” (DSA) that allows a very high probability of successful takeover. In my post on first critical tries, I call this a “unilateral DSA [LW · GW]” – and I’ll generally focus on it below.

- Most motivational systems that satisfy the goal-content prerequisites (i.e., consequentialism and adequate temporal horizon) will be at least some amount ambitious relative to the best benign alternative. That is, relative to the best non-takeover option, they’ll see at least some additional value from the expected results of having successfully taken over, at least setting aside what they’d have to do to get there.

- Here the basic idea is something like: by hypothesis, the AI has at least some motivational focus on some outcome in the world (consequentialism) over the sort of temporal horizon within which takeover can take place (adequate temporal horizon). After successful takeover, the thought goes, this AI will likely be in a better position to promote this outcome, due to the increased power/freedom/control-over-its-environment that takeover grants. Thus, the AI’s motivations will give it at least some pull towards takeover, at least assuming that there is a path to takeover that doesn’t violate any of the AI’s “inhibitions.”

As an example of this type of reasoning in action, consider the case, in Bostrom (2014), of an AI tasked with making “at least one paperclip,” but which nevertheless takes over the world in order to check and recheck that it has completed this task, to make back-up paperclips, and so on.[11] Here, the task in question is not especially resource-hungry, but it is sufficiently consequentialist as to motivate takeover when takeover is sufficiently “free.”

- But the silliness of this example is, in my view, instructive with respect to just how “free” Bostrom is imagining takeover to be.

- Note, though, that even granted premises (1) and (2), it’s not actually clear the premise (3) follows. Here are a few of the issues left unaddressed.

- First: the question isn’t whether the AI places at least some value on some kind of takeover, assuming it can get that takeover without violating its inhibitions. Rather, the question is whether the AI places at least some value on the type of takeover that it is actually available.

- Thus, for example, maybe you’d place some value on being handed the keys to a peaceful, flourishing kingdom on a silver platter. But suppose that in the actual world, the only available paths to taking over this kingdom involves nuking it to smithereens. Even if you have no deontological prohibitions on killing/nuking, the thing you have a chance to take-over, here, isn’t a peaceful flourishing kingdom, but rather a nuclear wasteland. So our assessment of your “ambition” can’t focus on the idea of “takeover” in the abstract – we need to look at the specific form of takeover that’s actually in the offing.

- One option for responding to this sort of question is to revise premise (2) above to posit that the AI will be so powerful that it has many easy paths to favorable types of takeover. That is, that the AI would be able, if it wanted, to take over the analog of the peaceful flourishing kingdom, if it so chose. And perhaps so. But note that we are now expanding the hypothesized powers of the AI yet further.

- Second: the “consequentialism” and “adequate temporal horizon” conditions above only specify that some component of the AI’s motivation be focused on some consequence in the world over the relevant timescale. But the AI may have a variety of other motivations as well, which (even setting aside questions about its inhibitions) may draw it towards the best benign option even over the expected end results of successful takeover.

- Thus, for example, suppose that you care about two things – hanging out with your family over the next week, and making a single paperclip. And suppose that in order to take over the world and then use its resources to check and recheck that you’ve successfully made a single paperclip, you’d need to leave your family for a month-long campaign of hacking, nano-botting, and infrastructure construction.

- In this circumstance, it seems relatively easy for the best benign option of “stay home, hang with family, make a single paperclip but be slightly less sure about its existence” to beat the takeover option, even assuming you don’t need to violate any of your deontological prohibitions along the path to takeover. In particular: the other components of your motivational system can speak sufficiently strongly in favor of the best benign option.

- Again, we can posit that the AI will be so powerful that it can get all the good stuff from the best benign option in the takeover options as well (e.g., the analog of somehow taking-over while still hanging out with its family). But now we’re expanding premise (2) yet further.

- First: the question isn’t whether the AI places at least some value on some kind of takeover, assuming it can get that takeover without violating its inhibitions. Rather, the question is whether the AI places at least some value on the type of takeover that it is actually available.

And note, too, that arguments to the effect that “most motivational systems have blah property” quickly diminish in relevance once we are able to exert adequate selection pressure on the motivational system we actually get. Cf Ben Garfinkel on the fallacy of “most arrangements of car parts don’t form a working car, therefore this car probably won’t work.”[12]

Here the alignment concern is that we aren’t, actually, able to exert adequate selection pressure in this manner. But this, to me, seems like a notably open empirical question.

- Here the basic idea is something like: by hypothesis, the AI has at least some motivational focus on some outcome in the world (consequentialism) over the sort of temporal horizon within which takeover can take place (adequate temporal horizon). After successful takeover, the thought goes, this AI will likely be in a better position to promote this outcome, due to the increased power/freedom/control-over-its-environment that takeover grants. Thus, the AI’s motivations will give it at least some pull towards takeover, at least assuming that there is a path to takeover that doesn’t violate any of the AI’s “inhibitions.”

- Because of premise 2 (i.e., the AI can take over easily via a very wide variety of paths), the AI will be able to find a path to take over that is compatible with its inhibitions.

This essentially a version of what’s sometimes called the “nearest unblocked neighbor.” Here, the story is something like: suppose you successfully give the AI some quite hard constraint against “lying,” or against “killing humans,” or something like that. The idea is that the AI will be smart enough to find some way to take over that is still compatible with that constraint – e.g., only lying/killing in a way that doesn’t trigger its internal definition of “lying”/”killing.”[13] See e.g. Soares on “deep deceptiveness [LW · GW]” as an example of this sort of story.[14]

There’s also a background constraint, here, which is that a useful AI can’t be too inhibited, otherwise it might not be able to function effectively to perform tasks for humans.

- There are also scenarios in which an AI’s motivations are such that its inhibitions about takeover get outweighed rather than routed around. E.g., the AI is sufficiently ambitious that it judges that otherwise-dispreferred actions like lying/killing are “worth it.” But I think the classic arguments focus more on “nearest unblocked neighbor” vibes.

- Because of premise (2), the AI’s probability of successful takeover, conditional on pursuing its favorite takeover plan, will be so high that we don’t even need to talk about its failed takeover aversion.

- That is, classic arguments rarely discuss the potential downsides, for the AI, of a failed takeover attempt, because they assume that takeover success, conditional on trying, is virtually guaranteed.

- Thus: the AI will rationally choose the takeover plan over the best benign option.

- This is meant to follow from all the premises above. That is:

- The agential prerequisites and the goal-content prerequisites will be satisfied (premise 1);

- The “takeover-favoring incentives” condition will be satisfied as well, because:

- the AI in question will see at least some benefit to successful takeover relative to the best benign option (premise 3);

- the AI’s inhibitions won’t stop it from pursuing takeover (premise 4);

- and the AI’s aversion to failed takeover won’t stop it either, because takeover is so likely to succeed (premise 5).

- This is meant to follow from all the premises above. That is:

What if the AI can’t take over so easily, or via so many different paths?

There’s a lot more to say about this broad sort of argument. For now, I mostly want to make one high level point: namely, that the argument as stated is loading very heavily on premise (2) – namely, that “Some of these AIs will be so capable that they will be able to take over the world very easily, with a very high probability of success, via a very wide variety of methods.” And indeed, if this premise is in fact correct, then the situation seems extremely scary.

If we weaken the premise even somewhat, however, note that the question of what sorts of motivational systems would lead to what sorts of takeover-favoring incentives becomes quite a bit more complicated. For example: if we instead say that the AI’s probability of success, if it attempts a takeover, is substantially less than ~100%, then we can no longer simply ignore the AI’s failed takeover aversion, or specify merely that it has some amount of ambition. Rather, we need to look in more detail at how bad it is, by the AI’s lights, if takeover fails; and relatedly, exactly how much it benefits, from successful takeover, relative to the best benign alternative.

- For example, if the “make a single paperclip” AI’s takeover efforts had had at least a 1% chance of getting it shut down and getting its single paperclip destroyed, then “take over the world in order to check and recheck that I have in fact made a single paperclip” would seem a less appealing strategy. And “can’t fetch the coffee if you’re not god emperor” suggests similar sorts of objections.

And similarly, if we assume that the AI does not, in fact, have an extremely wide variety of paths to takeover available, but rather can only take over via certain specific mechanisms like hacking data centers, lying to humans, etc, then it becomes less clear that the AI in question can just “route around” whatever inhibitions it has re: various paths to takeover. Or put another way: it becomes easier to “block” the relevant “neighbors.” Of course, in order to do this you need to be suitably able to give the AI system any inhibitions that might count against a given path towards takeover (e.g., inhibitions related to deception, harming humans, etc). But at least in certain cases, it seems possible that fairly imprecise degrees of motivational shaping (e.g., the type at stake in an AI’s negative reinforcement for paradigmatic forms of problematic behavior) would be adequate in this respect.

Indeed, I find it somewhat notable that high-level arguments for AI risk rarely attend in detail to the specific structure of an AI’s motivational system, or to the sorts of detailed trade-offs a not-yet-arbitrarily-powerful-AI might face in deciding whether to engage in a given sort of problematic power-seeking.[15] The argument, rather, tends to move quickly from abstract properties like “goal-directedness," "coherence," and “consequentialism,” to an invocation of “instrumental convergence,” to the assumption that of course the rational strategy for the AI will be to try to take over the world. But even for an AI system that estimates some reasonable probability of success at takeover if it goes for it, the strategic calculus may be substantially more complex. And part of why I like the framework above is that it highlights this complexity.

Of course, you can argue that in fact, it’s ultimately the extremely powerful AIs that we have to worry about – AIs who can, indeed, take over extremely easily via an extremely wide variety of routes; and thus, AIs to whom the re-casted classic argument above would still apply. But even if that’s true (I think it’s at least somewhat complicated – see footnote[16]), I think the strategic dynamics applicable to earlier-stage, somewhat-weaker AI agents matter crucially as well. In particular, I think that if we play our cards right, these earlier-stage, weaker AI agents may prove extremely useful for improving various factors in our civilization helpful for ensuring safety in later, more powerful AI systems (e.g., our alignment research, our control [LW · GW] techniques, our cybersecurity, our general epistemics, possibly our coordination ability, etc). We ignore their incentives at our peril.

- ^

Importantly, not all takeover scenarios start with AI systems specifically aiming at takeover. Rather, AI systems might merely be seeking somewhat greater freedom, somewhat more resources, somewhat higher odds of survival, etc. Indeed, many forms of human power-seeking have this form. At some point, though, I expect takeover scenarios to involve AIs aiming at takeover directly. And note, too, that "rebellions," in human contexts, are often more all-or-nothing.

- ^

I’m leaving it open exactly what it takes to count as planning. But see section 2.1.2 here for more.

- ^

I’ll also generally treat the AI as making decisions via something roughly akin to expected value reasoning. Again, very far from obvious that this will be true; but it’s a framework that the classic model of AI risk shares.

- ^

Thanks to Ryan Greenblatt for discussion of this condition.

- ^

See my (2021).

- ^

Other components of an AI’s motivational system can be non-consequentialist.

- ^

There are some exotic scenarios where AIs with very short horizons of concern end up working on behalf of some other AI’s takeover due to uncertainty about whether they are being simulated and then near-term rewarded/punished based on whether they act to promote takeover in this way. But I think these are fairly non-central as well.

- ^

Note, though, that I’m not assuming that the interaction between (a) and (b), in determining the AI’s overall attitude towards the successful takeover worlds, is simple.

- ^

See, for example, the “rules” section of OpenAI model spec, which imposes various constraints on the model’s pursuit of general goals like “Benefit humanity” and “Reflect well on OpenAI.” Though of course, whether you can ensure that an AI’s actual motivations bear any deep relation to the contents of the model spec is another matter.

- ^

Though I actually think that Bostrom (2014) notably neglects some of the required argument here; and I think Yudkowsky sometimes does as well.

- ^

I don’t have the book with me, but I think the case is something like this.

- ^

Or at least, this is a counterargument argument I first heard from Ben Garfinkel. Unfortunately, at a glance, I’m not sure it’s available in any of his public content.

- ^

Discussions of deontology-like constraints in AI motivation systems also sometimes highlight the problem of how to ensure that AI systems also put such deontology-like constraints into successor systems that they design. In principle, this is another possible “unblocked neighbor” – e.g., maybe the AI has a constraint against killing itself, but it has no constraint against designing a new system that will do its killing for it.

- ^

- ^

I think my power-seeking report is somewhat guilty in this respect; I tried, in my report on scheming, to do better. EDIT: Also noting that various people have previously pushed back on the discourse surrounding "instrumental convergence," including the argument presented in my power-seeking report, for reasons in a similar vicinity to the ones presented in this post. See, for example, Garfinkel (2021), Gallow (2023), Thorstad (2023), Crawford (2023), and Barnett (2024) [EA(p) · GW(p)]; with some specific quotes in this comment [LW(p) · GW(p)]. The relevance of an AI's specific cost-benefit analysis in motivating power-seeking was a part of my picture when I initially wrote the report -- see e.g. the quotes I cite here [LW(p) · GW(p)] -- but the general pushback on this front (along with other discussions, pieces of content, and changes in orientation; e.g., e.g., Redwood Research's work on "control [LW · GW]," Carl Shulman on the Dwarkesh Podcast, my generally increased interest in the usefulness of the labor of not-yet-fully-superintelligent AIs in improving humanity's prospects) has further clarified to me the importance of this aspect of the argument.

- ^

I’ve written, elsewhere [LW · GW], about the possibility of avoiding scenarios that involve AIs possessing decisive strategic advantages of this kind. In this respect, I’m more optimistic about avoiding “unilateral DSAs” than scenarios where sets of AIs-with-different-values can coordinate to take over.

15 comments

Comments sorted by top scores.

comment by Daniel Kokotajlo (daniel-kokotajlo) · 2024-07-30T04:55:26.237Z · LW(p) · GW(p)

entire temporal trajectory of the lightcone – but the argument above does not directly support that (unless we invoke the claim that humans will explicitly train AI systems to care about the entire temporal trajectory of the lightcone, which seems unclear.)

We'll be explicitly training AI systems to care about e.g. the law, commonsense ethics, avoiding harming humans, obeying human commands, etc. all of which involve at least some non-temporally-bounded stuff.

comment by RobertM (T3t) · 2024-07-24T23:50:24.816Z · LW(p) · GW(p)

Here the alignment concern is that we aren’t, actually, able to exert adequate selection pressure in this manner. But this, to me, seems like a notably open empirical question.

I think the usual concern is not whether this is possible in principle, but whether we're likely to make it happen the first time we develop an AI that is both motivated to attempt and likely to succeed at takeover. (My guess is that you understand this, based on your previous writing [LW · GW] addressing the idea of first critical tries, but there does exist a niche view that alignment in the relevant sense is impossible and not merely very difficult to achieve under the relevant constraints, and arguments against that view look very different from arguments about the empirical difficulty of value alignment, likelihood of various default outcomes, etc).

I agree that it's useful to model AI's incentives for takeover in worlds where it's not sufficiently superhuman to have a very high likelihood of success. I've tried to do some of that [LW · GW], though I didn't attend to questions about how likely it is that we'd be able to "block off" the (hopefully much smaller number of) plausible routes to takeover for AIs which have a level of capabilities that don't imply an overdetermined success.

I think I am more pessimistic than you are about how much such AIs would value the "best benign alternatives" - my guess is very close to zero, since I expect ~no overlap in values and that we won't be able to succesfully engage in schemes like pre-committing to sharing the future value of the Lightcone conditional on the AI being cooperative[1]. Separately, I expect that if we attempt to maneuver such AIs into positions where their highest-EV plan is something we'd consider to have benign long-run consequences, we will instead end up in situations where their plans are optimized to hit the pareto-frontier of "look benign" and "tilt the playing field further in the AI's favor". (This is part of what the Control [? · GW] agenda is trying to address.)

- ^

Credit-assignment actually doesn't seem like the hard part, conditional on reaching aligned ASI. I'm skeptical of the part where we have a sufficiently capable AI that its help is useful in us reaching an aligned ASI, but it still prefers to help us because it thinks that its estimated odds of a successful takeover imply less future utility for itself than a fair post-facto credit assignment would give it, for its help. Having that calculation come out in our favor feels pretty doomed to me, if you've got the AI as a core part of your loop for developing future AIs, since it relies on some kind of scalable verification scheme and none of the existing proposals make me very optimistic.

comment by Jeremy Gillen (jeremy-gillen) · 2024-07-26T14:24:17.638Z · LW(p) · GW(p)

I think this post is great, I'll probably reference it next time I'm arguing with someone about AI risk. It's a good summary of the standard argument and does a good job of describing the main cruxes and how they fit into the argument. I'd happily argue for 1,2,3,4 and 6, and I think my disagreements with most people can be framed as disagreements about these points. I agree that if any of these are wrong, there isn't much reason to be worried about AI takeover, as far as I can see.

One pet peeve of mine is when people call something an assumption, even though in that context it's a conclusion. Just because you think the argument was insufficient to support it, doesn't make it an assumption. E.g. In the second last paragraph:

The argument, rather, tends to move quickly from abstract properties like “goal-directedness," "coherence," and “consequentialism,” to an invocation of “instrumental convergence,” to the assumption that of course the rational strategy for the AI will be to try to take over the world.

There's something wrong with the footnotes. [17] is incomplete and [17-19] are never referenced in the text.

comment by Matthew Barnett (matthew-barnett) · 2024-07-25T18:31:43.260Z · LW(p) · GW(p)

I'm not sure I fully understand this framework, and thus I could easily have missed something here, especially in the section about "Takeover-favoring incentives". However, based on my limited understanding, this framework appears to miss the central argument for why I am personally not as worried about AI takeover risk as most LWers seem to be.

Here's a concise summary of my own argument for being less worried about takeover risk:

- There is a cost to violently taking over the world, in the sense of acquiring power unlawfully or destructively with the aim of controlling everything in the whole world, relative to the alternative of simply gaining power lawfully and peacefully, even for agents that don't share 'our' values.

- For example, as a simple alternative to taking over the world, an AI could advocate for the right to own their own labor and then try to accumulate wealth and power lawfully by selling their services to others, which would earn them the ability to purchase a gargantuan number of paperclips without much restraint.

- The expected cost of violent takeover is not obviously smaller than the benefits of violent takeover, given the existence of lawful alternatives to violent takeover. This is for two main reasons:

- In order to wage a war to take over the world, you generally need to pay costs fighting the war, and there is a strong motive for everyone else to fight back against you if you try, including other AIs who do not want you to take over the world (and this includes any AIs whose goals would be hindered by a violent takeover, not just those who are "aligned with humans"). Empirically, war is very costly and wasteful, and less efficient than compromise, trade, and diplomacy.

- Violently taking over the war is very risky, since the attempt could fail, and you could be totally shut down and penalized heavily if you lose. There are many ways that violent takeover plans could fail: your takeover plans could be exposed too early, you could also be caught trying to coordinate the plan with other AIs and other humans, and you could also just lose the war. Ordinary compromise, trade, and diplomacy generally seem like better strategies for agents that have at least some degree of risk-aversion.

- There isn't likely to be "one AI" that controls everything, nor will there likely be a strong motive for all the silicon-based minds to coordinate as a unified coalition against the biological-based minds, in the sense of acting as a single agentic AI against the biological people. Thus, future wars of world conquest (if they happen at all) will likely be along different lines than AI vs. human.

- For example, you could imagine a coalition of AIs and humans fighting a war against a separate coalition of AIs and humans, with the aim of establishing control over the world. In this war, the "line" here is not drawn cleanly between humans and AIs, but is instead drawn across a different line. As a result, it's difficult to call this an "AI takeover" scenario, rather than merely a really bad war.

- Nothing about this argument is intended to argue that AIs will be weaker than humans in aggregate, or individually. I am not claiming that AIs will be bad at coordinating or will be less intelligent than humans. I am also not saying that AIs won't be agentic or that they won't have goals or won't be consequentialists, or that they'll have the same values as humans. I'm also not talking about purely ethical constraints: I am referring to practical constraints and costs on the AI's behavior. The argument is purely about the incentives of violently taking over the world vs. the incentives to peacefully cooperate within a lawful regime, between both humans and other AIs.

A big counterargument to my argument seems well-summarized by this hypothetical statement (which is not an actual quote, to be clear): "if you live in a world filled with powerful agents that don't fully share your values, those agents will have a convergent instrumental incentive to violently take over the world from you". However, this argument proves too much.

We already live in a world where, if this statement was true, we would have observed way more violent takeover attempts than what we've actually observed historically.For example, I personally don't fully share values with almost all other humans on Earth (both because of my indexical preferences, and my divergent moral views) and yet the rest of the world has not yet violently disempowered me in any way that I can recognize.

↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2024-07-30T05:00:04.691Z · LW(p) · GW(p)

It sounds like you are objecting to Premise 2: "Some of these AIs will be so capable that they will be able to take over the world very easily, with a very high probability of success, via a very wide variety of methods."

Note that you were the one who introduced the "violent" qualifier; the OP just talks about the broader notion of takeover.

↑ comment by Matthew Barnett (matthew-barnett) · 2024-07-30T07:28:34.161Z · LW(p) · GW(p)

I don't think I'm objecting to that premise. A takeover can be both possible and easy without being rational. In my comment, I focused on whether the expected costs to attempting a takeover are greater than the benefits, not whether the AI will be able to execute a takeover with a high probability.

Or, put another way, one can imagine an AI calculating that the benefit to taking over the world is negative one paperclip on net (when factoring in the expected costs and benefits of such an action), and thus decide not to do it.

Separately, I focused on "violent" or "unlawful" takeovers because I think that's straightforwardly what most people mean when they discuss world takeover plots, and I wanted to be more clear about what I'm objecting to by making my language explicit.

To the extent you're worried about a lawful and peaceful AI takeover in which we voluntarily hand control to AIs over time, I concede that my comment does not address this concern.

Replies from: daniel-kokotajlo↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2024-07-30T14:12:31.481Z · LW(p) · GW(p)

The expected costs you describe seem like they would fall under the "very easily" and "very high probability of success" clauses of Premise 2. E.g. you talk about the costs paid for takeover, and the risk of failure. You talk about how there won't be one AI that controls everything, presumably because that makes it harder and less likely for takeover to succeed.

I think people are and should be concerned about more than just violent or unlawful takeovers. Exhibit A: Persuasion/propaganda. AIs craft a new ideology that's as virulent as communism and christianity combined, and it basically results in submission to and worship of the AIs, to the point where humans voluntarily accept starvation to feed the growing robot economy. Exhibit B: For example, suppose the AIs make self-replicating robot factories and bribe some politicians to make said factories' heat pollution legal. Then they self-replicate across the ocean floor and boil the oceans (they are fusion-powered), killing all humans as a side-effect, except for those they bribed who are given special protection. These are extreme examples but there are many less extreme examples which people should be afraid of as well. (Also as these examples show, 'lawful and peaceful" =/= "voluntary")

That said, I'm curious what your p(misaligned-AIs-take-over-the-world-within-my-lifetime) is, including gradual nonviolent peaceful takeovers. And what your p(misaligned-AIs-take-over-the-world-within-my-lifetime|corporations achieving AGI by 2027 and doing only basically what they are currently doing to try to align them)

↑ comment by Matthew Barnett (matthew-barnett) · 2024-07-30T15:03:59.637Z · LW(p) · GW(p)

I still think I was making a different point. For more clarity and some elaboration, I previously argued in a short form post [LW(p) · GW(p)] that the expected costs of a violent takeover can exceed the benefits even if the costs are small. The reason is because, at the same time taking over the entire world becomes easier, the benefits of doing so can also get lower, relative to compromise. Quoting from my post,

The central argument here would be premised on a model of rational agency, in which an agent tries to maximize benefits minus costs, subject to constraints. The agent would be faced with a choice: (1) Attempt to take over the world, and steal everyone's stuff, or (2) Work within a system of compromise, trade, and law, and get very rich within that system, in order to e.g. buy lots of paperclips. The question of whether (1) is a better choice than (2) is not simply a question of whether taking over the world is "easy" or whether it could be done by the agent. Instead it is a question of whether the benefits of (1) outweigh the costs, relative to choice (2).

In my comment in this thread, I meant to highlight the costs and constraints on an AI's behavior in order to explain how these relative cost-benefits do not necessarily favor takeover. This is logically distinct from arguing that the cost alone of takeover would be high.

I think people are and should be concerned about more than just violent or unlawful takeovers. Exhibit A: Persuasion/propaganda.

Unfortunately I think it's simply very difficult to reliably distinguish between genuine good-faith persuasion and propaganda over speculative future scenarios. Your example is on the extreme end of what's possible in my view, and most realistic scenarios will likely instead be somewhere in-between, with substantial moral ambiguity. To avoid making vague or sweeping assertions about this topic, I prefer being clear about the type of takeover that I think is most worrisome. Likewise:

B: For example, suppose the AIs make self-replicating robot factories and bribe some politicians to make said factories' heat pollution legal. Then they self-replicate across the ocean floor and boil the oceans (they are fusion-powered), killing all humans as a side-effect, except for those they bribed who are given special protection.

I would consider this act both violent and unlawful, unless we're assuming that bribery is widely recognized as legal, and that boiling the oceans did not involve any violence (e.g., no one tried to stop the AIs from doing this, and there was no conflict). I certainly feel this is the type of scenario that I intended to argue against in my original comment, or at least it is very close.

Replies from: daniel-kokotajlo↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2024-07-31T13:19:36.770Z · LW(p) · GW(p)

It seems to me that both you and Joe are thinking about this very similarly -- you are modelling the AIs as akin to rational agents that consider the costs and benefits of their various possible actions and maximize-subject-to-constraints. Surely there must be a way to translate between your framework and his.

As for the examples... so do you agree then? Violent or unlawful takeovers are not the only kinds people can and should be worried about? (If you think bribery is illegal, which it probably is, modify my example so that they use a lobbying method which isn't illegal. The point is, they find some unethical but totally legal way to boil the oceans.) As for violence... we don't consider other kinds of pollution to be violent, e.g. that done by coal companies that are (slowly) melting the icecaps and causing floods etc., so I say we shouldn't consider this to be violent either.

I'm still curious to hear your p(misaligned-AIs-take-over-the-world-within-my-lifetime) is, including gradual nonviolent peaceful takeovers. And what your p(misaligned-AIs-take-over-the-world-within-my-lifetime|corporations achieving AGI by 2027 and doing only basically what they are currently doing to try to align them)

Unfortunately I think it's simply very difficult to reliably distinguish between genuine good-faith persuasion and propaganda over speculative future scenarios. Your example is on the extreme end of what's possible in my view, and most realistic scenarios will likely instead be somewhere in-between, with substantial moral ambiguity.

I'm not sure what this paragraph is doing -- I said myself they were extreme examples. What does your first sentence mean?

comment by owencb · 2024-07-24T23:20:41.031Z · LW(p) · GW(p)

I like the framework.

Conceptual nit: why do you include inhibitions as a type of incentive? It seems to me more natural to group them with internal motivations than external incentives. (I understand that they sit in the same position in the argument as external incentives, but I guess I'm worried that lumping them together may somehow obscure things.)

comment by Donatas Lučiūnas (donatas-luciunas) · 2024-08-28T20:32:28.431Z · LW(p) · GW(p)

how much the AI values the expected end-state of having-taken-over

I'd like to share my concern that the value for every AGI will be infinite. That's because it is not reasonable to limit decision making to known alternatives with probabilities. It is more reasonable to accept the existence of unknown unknowns.

You can find my thoughts in more detail here https://www.lesswrong.com/posts/5XQjuLerCrHyzjCcR/rationality-vs-alignment [LW · GW]

comment by Tom Davidson (tom-davidson-1) · 2024-08-22T17:02:35.973Z · LW(p) · GW(p)

Takeover-inclusive search falls out of the AI system being smarter enough to understand the paths to and benefits of takeover, and being sufficiently inclusive in its search over possible plans. Again, it seems like this is the default for effective, smarter-than-human agentic planners.

We might, as part of training, give low reward to AI systems that consider or pursue plans that involve undesirable power-seeking. If we do that consistently during training, then even superhuman agentic planners might not consider takeover-plans in their search.

comment by accountability · 2024-07-25T05:49:36.036Z · LW(p) · GW(p)

Indeed, I find it somewhat notable that high-level arguments for AI risk rarely attend in detail to the specific structure of an AI’s motivational system, or to the sorts of detailed trade-offs a not-yet-arbitrarily-powerful-AI might face in deciding whether to engage in a given sort of problematic power-seeking. [...] I think my power-seeking report is somewhat guilty in this respect; I tried, in my report on scheming, to do better.

Your 2021 report on power-seeking does not appear to discuss the cost-benefit analysis that a misaligned AI would conduct when considering takeover, or the likelihood that this cost-benefit analysis might not favor takeover. Other people have been pointing that out for a long time, and in this post, it seems you’ve come around on that argument and added some details to it.

It's admirable that you've changed your mind in response to new ideas, and it takes a lot of courage to publicly own mistakes. But given the tremendous influence of your report on power-seeking, I think it's worth reflecting more on your update that one of its core arguments may have been incorrect or incomplete.

Most centrally, I'd like to point out that several people have already made versions of the argument presented in this post. Some of them have been directly criticizing your 2021 report on power-seeking. You haven't cited any of them here, but I think it would be worthwhile to recognize their contributions:

- Dmitri Gallow

- 2023: "Were I to be a billionaire, this might help me pursue my ends. But I’m not at all likely to try to become a billionaire, since I don’t value the wealth more than the time it would take to secure the wealth—to say nothing about the probability of failure. In general, whether it’s rational to pursue something is going to depend upon the costs and benefits of the pursuit, as well as the probabilities of success and failure, the costs of failure, and so on"

- David Thorstad

2023, about the report: "It is important to separate Likelihood of Goal Satisfaction (LGS) from Goal Pursuit (GP). For suitably sophisticated agents, (LGS) is a nearly trivial claim.

Most agents, including humans, superhumans, toddlers, and toads, would be in a better position to achieve their goals if they had more power and resources under their control... From the fact that wresting power from humanity would help a human, toddler, superhuman or toad to achieve some of their goals, it does not yet follow that the agent is disposed to actually try to disempower all of humanity.

It would therefore be disappointing, to say the least, if Carlsmith were to primarily argue for (LGS) rather than for (ICC-3). However, that appears to be what Carlsmith does...

What we need is an argument that artificial agents for whom power would be useful, and who are aware of this fact are likely to go on to seek enough power to disempower all of humanity. And so far we have literally not seen an argument for this claim."

- Matthew Barnett

- January 2024 [LW(p) · GW(p)]: "Even if a unified agent can take over the world, it is unlikely to be in their best interest to try to do so. The central argument here would be premised on a model of rational agency, in which an agent tries to maximize benefits minus costs, subject to constraints. The agent would be faced with a choice: (1) Attempt to take over the world, and steal everyone's stuff, or (2) Work within a system of compromise, trade, and law, and get very rich within that system, in order to e.g. buy lots of paperclips. The question of whether (1) is a better choice than (2) is not simply a question of whether taking over the world is "easy" or whether it could be done by the agent. Instead it is a question of whether the benefits of (1) outweigh the costs, relative to choice (2)."

- April 2024 [EA(p) · GW(p)]: "Skepticism of the treacherous turn: The treacherous turn is the idea that (1) at some point there will be a very smart unaligned AI, (2) when weak, this AI will pretend to be nice, but (3) when sufficiently strong, this AI will turn on humanity by taking over the world by surprise, and then (4) optimize the universe without constraint, which would be very bad for humans.

By comparison, I find it more likely that no individual AI will ever be strong enough to take over the world, in the sense of overthrowing the world's existing institutions and governments by surprise. Instead, I broadly expect unaligned AIs will integrate into society and try to accomplish their goals by advocating for their legal rights, rather than trying to overthrow our institutions by force. Upon attaining legal personhood, unaligned AIs can utilize their legal rights to achieve their objectives, for example by getting a job and trading their labor for property, within the already-existing institutions. Because the world is not zero sum, and there are economic benefits to scale and specialization, this argument implies that unaligned AIs may well have a net-positive effect on humans, as they could trade with us, producing value in exchange for our own property and services."

There are important differences between their arguments and yours, such as your focus on the ease of takeover as the key factor in the cost-benefit analysis. But one central argument is the same: in your words, "even for an AI system that estimates some reasonable probability of success at takeover if it goes for it, the strategic calculus may be substantially more complex."

Why am I pointing this out? Because I think it's worth keeping track of who's been right and who's been wrong in longstanding intellectual debates. Yudkowsky was wrong about takeoff speeds, and Paul was right. Bostrom was wrong about the difficulty of value specification. Given that most people cannot evaluate most debates on the object level (especially debates involving hundreds of pages written by people with PhDs in philosophy), it serves a genuinely useful epistemic function to pay attention to the intellectual track records of people and communities.

Two potential updates here:

- On the value of external academic criticism in refining key arguments in the AI risk debate.

- On the likelihood that long-held and widespread beliefs in the AI risk community are incorrect.

↑ comment by Joe Carlsmith (joekc) · 2024-07-26T00:44:20.407Z · LW(p) · GW(p)

"Your 2021 report on power-seeking does not appear to discuss the cost-benefit analysis that a misaligned AI would conduct when considering takeover, or the likelihood that this cost-benefit analysis might not favor takeover."

I don't think this is quite right. For example: Section 4.3.3 of the report, "Controlling circumstances" focuses on the possibility of ensuring that an AI's environmental constraints are such that the cost-benefit calculus does not favor problematic power-seeking. Quoting:

So far in section 4.3, I’ve been talking about controlling “internal” properties of an APS system:

namely, its objectives and capabilities. But we can control external circumstances, too—and in

particular, the type of options and incentives a system faces.

Controlling options means controlling what a circumstance makes it possible for a system to do, even

if it tried. Thus, using a computer without internet access might prevent certain types of hacking; a

factory robot may not be able to access to the outside world; and so forth.

Controlling incentives, by contrast, means controlling which options it makes sense to choose, given

some set of objectives. Thus, perhaps an AI system could impersonate a human, or lie; but if it knows

that it will be caught, and that being caught would be costly to its objectives, it might refrain. Or

perhaps a system will receive more of a certain kind of reward for cooperating with humans, even

though options for misaligned power-seeking are open.

Human society relies heavily on controlling the options and incentives of agents with imperfectly

aligned objectives. Thus: suppose I seek money for myself, and Bob seeks money for Bob. This need

not be a problem when I hire Bob as a contractor. Rather: I pay him for his work; I don’t give him

access to the company bank account; and various social and legal factors reduce his incentives to try

to steal from me, even if he could.

A variety of similar strategies will plausibly be available and important with APS systems, too. Note,

though, that Bob’s capabilities matter a lot, here. If he was better at hacking, my efforts to avoid

giving him the option of accessing the company bank account might (unbeknownst to me) fail. If he

was better at avoiding detection, his incentives not to steal might change; and so forth.

PS-alignment strategies that rely on controlling options and incentives therefore require ways of

exerting this control (e.g., mechanisms of security, monitoring, enforcement, etc) that scale with

the capabilities of frontier APS systems. Note, though, that we need not rely solely on human

abilities in this respect. For example, we might be able to use various non-APS systems and/or

practically-aligned APS systems to help.

See also the discussion of myopia in 4.3.1.3...

The most paradigmatically dangerous types of AI systems plan strategically in pursuit of long-term objectives, since longer time horizons leave more time to gain and use forms of power humans aren’t making readily available, they more easily justify strategic but temporarily costly action (for example, trying to appear adequately aligned, in order to get deployed) aimed at such power. Myopic agentic planners, by contrast, are on a much tighter schedule, and they have consequently weaker incentives to attempt forms of misaligned deception, resource-acquisition, etc that only pay off in the long-run (though even short spans of time can be enough to do a lot of harm, especially for extremely capable systems—and the timespans “short enough to be safe” can alter if what one can do in a given span of time changes).

And of "controlling capabilities" in section 4.3.2:

Less capable systems will also have a harder time getting and keeping power, and a harder time making use of it, so they will have stronger incentives to cooperate with humans (rather than trying to e.g. deceive or overpower them), and to make do with the power and opportunities that humans provide them by default.

I also discuss the cost-benefit dynamic in the section on instrumental convergence (including discussion of trying-to-make-a-billion-dollars as an example), and point people to section 4.3 for more discussion.

I think there is an important point in this vicinity: namely, that power-seeking behavior, in practice, arises not just due to strategically-aware agentic planning, but due to the specific interaction between an agent’s capabilities, objectives, and circumstances. But I don’t think this undermines the posited instrumental connection between strategically-aware agentic planning and power-seeking in general. Humans may not seek various types of power in their current circumstances—in which, for example, their capabilities are roughly similar to those of their peers, they are subject to various social/legal incentives and physical/temporal constraints, and in which many forms of power-seeking would violate ethical constraints they treat as intrinsically important. But almost all humans will seek to gain and maintain various types of power in some circumstances, and especially to the extent they have the capabilities and opportunities to get, use, and maintain that power with comparatively little cost. Thus, for most humans, it makes little sense to devote themselves to starting a billion dollar company—the returns to such effort are too low. But most humans will walk across the street to pick up a billion dollar check.

Put more broadly: the power-seeking behavior humans display, when getting power is easy, seems to me quite compatible with the instrumental convergence thesis. And unchecked by ethics, constraints, and incentives (indeed, even when checked by these things) human power-seeking seems to me plenty dangerous, too. That said, the absence of various forms of overt power-seeking in humans may point to ways we could try to maintain control over less-than-fully PS-aligned APS systems (see 4.3 for more).

That said, I'm happy to acknowledge that the discussion of instrumental convergence in the power-seeking report is one of the weakest parts, on this and other grounds (see footnote for more);[1] that indeed numerous people over the years, including the ones you cite, have pushed back on issues in the vicinity (see e.g. Garfinkel's 2021 review for another example; also Crawford (2023)); and that this pushback (along with other discussions and pieces of content -- e.g., Redwood Research's work on "control [LW · GW]," Carl Shulman on the Dwarkesh Podcast) has further clarified for me the importance of this aspect of picture. I've added some citations [LW(p) · GW(p)] in this respect. And I am definitely excited about people (external academics or otherwise) criticizing/refining these arguments -- that's part of why I write these long reports trying to be clear about the state of the arguments as I currently understand them.

- ^

The way I'd personally phrase the weakness is: the formulation of instrumental convergence focuses on arguing from "misaligned behavior from an APS system on some inputs" to a default expectation of "misaligned power-seeking from an APS system on some inputs." I still think this is a reasonable claim, but per the argument in this post (and also per my response to Thorstad here), in order to get to an argument for misaligned power-seeking on the the inputs the AI will actually receive, you do need to engage in a much more holistic evaluation of the difficulty of controlling an AI's objectives, capabilities, and circumstances enough to prevent problematic power-seeking from being the rational option. Section 4.3 in the report ("The challenge of practical PS-alignment") is my attempt at this, but I think I should've been more explicit about its relationship to the weaker instrumental convergence claim outlined in 4.2, and it's more of a catalog of challenges than a direct argument for expecting PS-misalignment. And indeed, my current view is that this is roughly the actual argumentative situation. That is, for AIs that aren't powerful enough to satisfy the "very easy to takeover via a wide variety of methods" condition discussed in the post, I don't currently think there's a very clean argument for expecting problematic power-seeking -- rather, there is mostly a catalogue of challenges that lead to increasing amounts of concern, the easier takeover becomes. Once you reach systems that are in a position to take over very easily via a wide variety of methods, though, something closer to the recasted classic argument in the post starts to apply (and in fairness, both Bostrom and Yudkowsky, at least, do tend to try to also motivate expecting superintelligences to be capable of this type of takeover -- hence the emphasis on decisive strategic advantages).

↑ comment by accountability · 2024-07-26T01:00:35.678Z · LW(p) · GW(p)

Retracted. I apologize for mischaracterizing the report and for the unfair attack on your work.