LessWrong 2.0 Reader


I think future more powerful/useful AIs will understand our intentions better IF they are trained to predict language. Text corpuses contain rich semantics about human intentions.

I can imagine other AI systems that are trained differently, and I would be more worried about those.

That's what I meant by current AI understanding our intentions possibly better than future AI.

richard_kennaway on Introducing AI Lab Watch

"AI Watch."

raemon on Raemon's Shortform

Are the disagree reacts with ‘small icons are good for this reason (enough to override other concerns)’ or ‘I didn’t update previously?’

d0themath on We might be missing some key feature of AI takeoff; it'll probably seem like "we could've seen this coming"

I will also suggest the questions: 1) What are the things I’m really confident in? And 2) What are the things those I often read or talk to are really confident in? 3) And are there simple arguments which just involve bringing in little-thought-about domains of effect which throw that confidence into question?

jesse-hoogland on Examples of Highly Counterfactual Discoveries?

Anecdotally (I couldn't find confirmation after a few minutes of searching), I remember hearing a claim about Darwin being particularly ahead of the curve with sexual selection & mate choice. That without Darwin it might have taken decades for biologists to come to the same realizations.

review-bot on Cohabitive Games so Far

The LessWrong Review runs every year to select the posts that have most stood the test of time. This post is not yet eligible for review, but will be at the end of 2024. The top fifty or so posts are featured prominently on the site throughout the year. Will this post make the top fifty?

Thank you for the references! I'm reading your writings, it's interesting

I posted the super-cooperation argument while expecting that LessWrong would likely not be receptive, but I'm not sure which community would engage with all this and find it pertinent at this stage

More concrete and empirical productions seem needed

I rly like the idea of making songs to powerfwly remind urself abt things. TODO.

Step 1: Set an alarm for the morning. Step 2: Set the alarm tone for this song. Step 3: Make the alarm snooze for 30 minutes after the song has played. Step 4: Make the alarm only dismissable with solving a puzzle. Step 5: Only ever dismiss the alarm after you already left the house for the walk. Step 6: Always have an umbrella for when it is rainy, and have an alternative route without muddy roads.

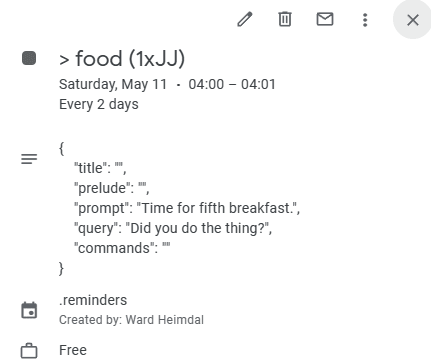

I currently (until I get around to making a better system...) have an AI voice say reminders to myself based on calendar events I've set up to repeat every day (or any period I've defined). The event description is JSON, and if '"prompt": "Time to take a walk!"' is nonempty, the voice says what's in the prompt.
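A minimal sketch of the "speak the prompt if it is nonempty" check described above, in Python. The function name `reminder_prompt` is hypothetical, and the assumption that the whole event description is a single JSON object is mine; the calendar lookup and the text-to-speech call are left out entirely.

```python
import json


def reminder_prompt(event_description):
    """Return the text to speak for a calendar event, or None if there is none.

    Assumes (hypothetically) that the event description is one JSON object,
    e.g. '{"prompt": "Time to take a walk!"}'.
    """
    try:
        data = json.loads(event_description)
    except (json.JSONDecodeError, TypeError):
        # Not JSON at all (or no description): nothing to say.
        return None
    if not isinstance(data, dict):
        return None
    prompt = data.get("prompt")
    # Only speak when the prompt is a nonempty, non-whitespace string.
    if isinstance(prompt, str) and prompt.strip():
        return prompt
    return None
```

The returned string would then be handed to whatever voice system is in use; events without a usable prompt are silently skipped.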

I don't have any routines that are too forcefwl (like "only dismissable with solving a puzzle"), because I want to minimize whip and maximize carrot. If I can only do what's good bc I force myself to do it, it's much less effective compared to if I just *want* to do what's good all the time.

...But whip can often be effective, so I don't recommend never using it. I'm just especially weak to it, due to not having much social backup-motivation, and a heavy tendency to fall into deep depressive equilibria.

bogdan-ionut-cirstea on Refusal in LLMs is mediated by a single direction

We can implement this as an inference-time intervention: every time a component $c$ (e.g. an attention head) writes its output $c_{\text{out}} \in \mathbb{R}^{d_{\text{model}}}$ to the residual stream, we can erase its contribution to the "refusal direction" $\hat{r}$. We can do this by computing the projection of $c_{\text{out}}$ onto $\hat{r}$, and then subtracting this projection away:

$$c'_{\text{out}} \leftarrow c_{\text{out}} - (c_{\text{out}} \cdot \hat{r})\,\hat{r}$$

Note that we are ablating the same direction at every token and every layer. By performing this ablation at every component that writes to the residual stream, we effectively prevent the model from ever representing this feature.
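To make the projection step concrete, here is a small pure-Python sketch of the update $c_{\text{out}} - (c_{\text{out}} \cdot \hat{r})\,\hat{r}$ on plain lists of floats. The helper names `normalize` and `ablate_direction` are hypothetical; this is only the vector arithmetic, not the hooking of a real model's components.

```python
import math


def normalize(v):
    """Scale v to unit length so it can serve as the direction r_hat."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0:
        raise ValueError("cannot normalize the zero vector")
    return [x / norm for x in v]


def ablate_direction(c_out, r_hat):
    """Remove the component of c_out along the unit vector r_hat.

    Implements c_out - (c_out . r_hat) * r_hat.
    """
    dot = sum(c * r for c, r in zip(c_out, r_hat))
    return [c - dot * r for c, r in zip(c_out, r_hat)]


# Example: after ablation, the output has (numerically) no component along r_hat.
r_hat = normalize([3.0, 4.0])
ablated = ablate_direction([1.0, 2.0], r_hat)
residual = sum(a * r for a, r in zip(ablated, r_hat))
```

Applying `ablate_direction` a second time leaves the vector unchanged up to floating-point error, since the component along `r_hat` is already gone.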

I'll note that to me this seems surprisingly similar in spirit to lines 7-8 of Algorithm 1 (on page 13) of Concept Algebra for (Score-Based) Text-Controlled Generative Models, where they 'project out' a direction corresponding to a semantic concept after each diffusion step (in a diffusion model).

This seems notable because the above paper proposes a theory for why linear representations might emerge in diffusion models and the authors seem interested in potentially connecting their findings to representations in transformers (especially in the residual stream). From a response to a review:

johannes-c-mayer on Johannes C. Mayer's Shortform

Application to Other Generative Models

Ultimately, the results in the paper are about non-parametric representations (indeed, the results are about the structure of probability distributions directly!) The importance of diffusion models is that they non-parametrically model the conditional distribution, so that the score representation directly inherits the properties of the distribution.

To apply the results to other generative models, we must articulate the connection between the natural representations of these models (e.g., the residual stream in transformers) and the (estimated) conditional distributions. For autoregressive models like Parti, it’s not immediately clear how to do this. This is an exciting and important direction for future work!

(Very speculatively: models with finite dimensional representations are often trained with objective functions corresponding to log likelihoods of exponential family probability models, such that the natural finite dimensional representation corresponds to the natural parameter of the exponential family model. In exponential family models, the Stein score is exactly the inner product of the natural parameter with $y$. This weakly suggests that additive subspace structure may originate in these models following the same Stein score representation arguments!)

Connection to Interpretability

This is a great question! Indeed, a major motivation for starting this line of work is to try to understand if the "linear subspace hypothesis" in mechanistic interpretability of transformers is true, and why it arises if so. As just discussed, the missing step for precisely connecting our results to this line of work is articulating how the finite dimensional transformer representation (the residual stream) relates to the log probability of the conditional distributions. Solving this missing step would presumably allow the tool set developed here to be brought to bear on the interpretation of transformers.

One exciting observation here is that linear subspace structure appears to be a generic feature of probability distributions! Much mechanistic interpretability work motivates the linear subspace hypothesis by appealing to special structure of the transformer architecture (e.g., this is Anthropic's usual explanation). In contrast, our results suggest that linear encoding may fundamentally be about the structure of the data generating process.

Taking a walk is the single most important thing. It really helps me think. My life magically reassembles itself when I reflect. I notice all the things that I know are good to do but fail to do.

In the past, I noticed that forcing myself to think about my research was counterproductive and devised other strategies for making me think about it, that actually worked, in 15 minutes.

The obvious things just work. Namely, you just fill your brain with the current state of your research. What did you think about yesterday? Just remember. Just explain it to yourself. With the context loaded, the thoughts you want to have will come unbidden. Even when your walk is over you retain this context. Doing more research is natural now.

There were many other things I figured out during the walk, like the importance of structuring my research workflow, how meditation can help me, what the current bottleneck in my research is, and more.

It's tried and true. So it's ridiculous that so far I have not managed to notice its power. Of all the things that I do in a day, I thought this was one of the least important. But I was so wrong.

I also like talking to IA out loud during the walk. It's really fun and helpful. Talking out loud is helpful for me to build a better understanding, and IA often has good suggestions.

So how do we do this? How can we never forget to take a 30-minute walk in the sun? We make this song, and then go on:

and on and on and on.

We can also list other advantages to a walk, to make our brain remember this:

With that said, let's talk about how to never forget to take your daily walk:

Step 1: Set an alarm for the morning.
Step 2: Set the alarm tone for this song.
Step 3: Make the alarm snooze for 30 minutes after the song has played.
Step 4: Make the alarm only dismissable with solving a puzzle.
Step 5: Only ever dismiss the alarm after you already left the house for the walk.
Step 6: Always have an umbrella for when it is rainy, and have an alternative route without muddy roads.

Now may you succeed!