The Homunculus Problem

post by abramdemski · 2021-05-27T20:25:58.312Z · LW · GW · 38 commentsContents

Added: Nontrivial Implication None 39 comments

(This is not (quite) just a re-hashing of the homunculus fallacy.)

I'm contemplating what it would mean for machine learning models such as GPT-3 to be honest with us [LW · GW]. Honesty involves conveying your subjective experience... but what does it mean for a machine learning model to accurately convey its subjective experience to us?

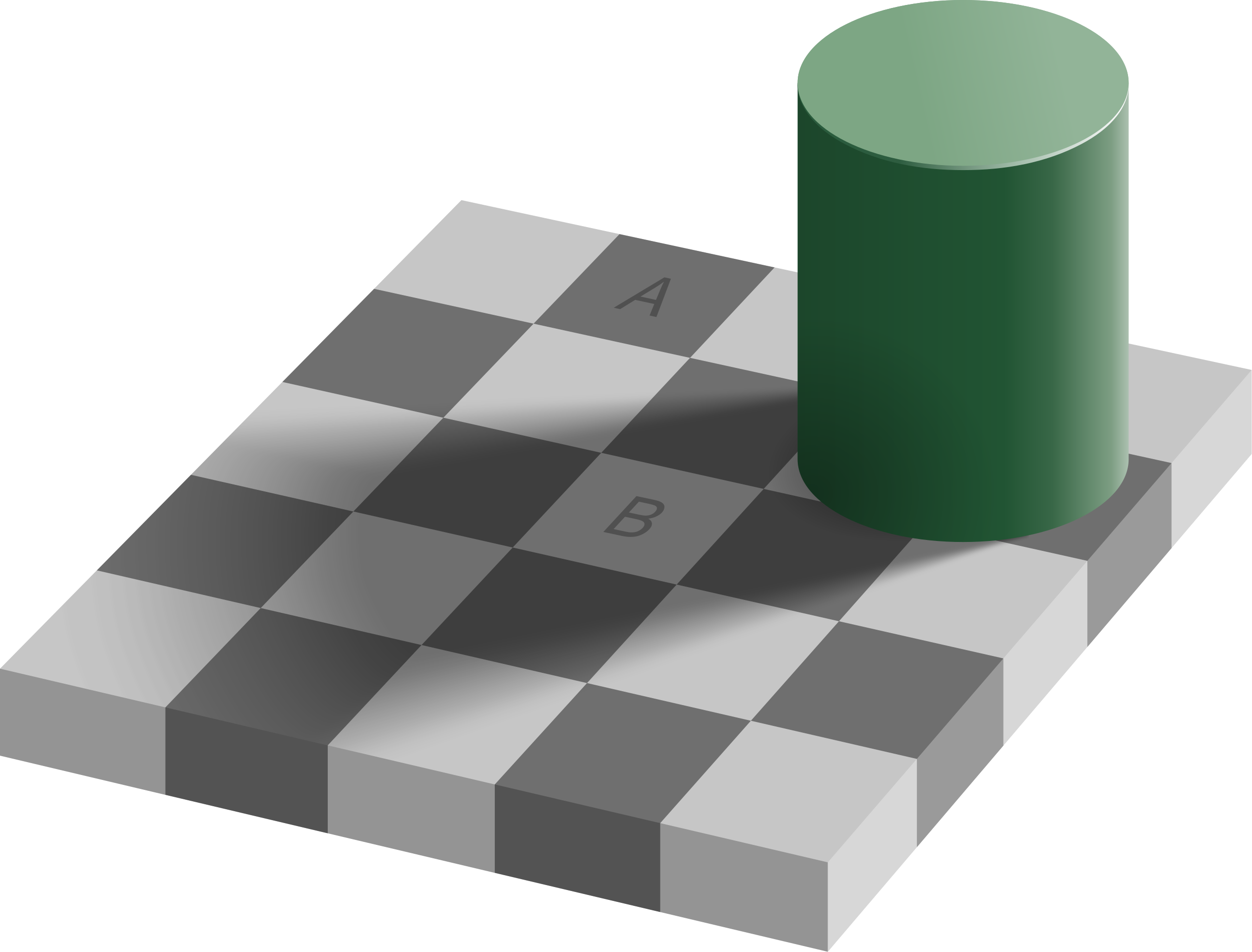

You've probably seen an optical illusion like this:

You've probably also heard an explanation something like this:

"We don't see the actual colors of objects. Instead, the brain adjusts colors for us, based on surrounding lighting cues, to approximate the surface pigmentation. In this example, it leads us astray, because what we are actually looking at is a false image made up of surface pigmentation (or illumination, if you're looking at this on a screen)."

This explanation definitely captures something about what's going on, but there are several subtle problems with it:

- It's a homunculus fallacy! It explains what we're seeing by imagining that there is a little person inside our heads, who sees (as if projected on a screen) an adjusted version of the image. The brain adjusts the brightness to remove shadows, and adjusts colors to remove effects of colored light. The little person therefore can't tell that patch A is actually the same color as patch B.

- Even if there was a little person, the argument does not describe my subjective experience, because I can still see the shadow! I experience the shadowed area as darker than the unshadowed area. So the homunculus story doesn't actually fit what I see at all!

- I can occasionally and briefly get my brain to recognize A and B as the same shade. (It's very difficult, and it quickly snaps back.)

My point is, even when cognitive psychologists are trained to avoid the homunculus fallacy, they go and make it again, because they don't have a better alternative.

One thing the homunculus story gets right which seems difficult to get right is that when you show me the visual illusion, and explain it to me, I can believe you, even if my brain is still seeing the illusion. I'm using the language "my brain" vs "me" precisely because the homunculus fallacy is a pretty decent model here: I know that the patches are the same shade, but my brain insists they're different. It is as if I'm a little person watching what my brain is putting on a projector: I can believe or disbelieve it.

For example, a simple Bayesian picture of what the brain is doing would involve a probabilistic "world model". The "world model" updates on visual data and reaches conclusions. Nowhere in this picture is there any room for the kind of optical illusion we routinely experience: either the Bayesian would be fooled (there is no awareness that it's being fooled) or not (there is no perception of the illusion; the patches look the same shade). Or a probabilistic mixture of the two. ("I'm not sure whether the patch is the same color.")

Actually, this downplays the problem, because it's not even clear what it means to ask a Bayesian model about its subjective experience.

When I've seen the homunculus fallacy discussed, I've always seen it maligned as this bad mistake. I don't recall ever seeing it posed as a problem: we don't just want to discard it, we want to replace it with a better way of reasoning. I want to have a handy term pointing at this problem. I haven't thought of anything better than the homunculus problem yet.

The Homunculus Problem: The homunculus fallacy is a terrible picture of the brain (or machine learning models), yet, any talk of subjective experience (including phenomena such as visual illusions) falls into the fallacious pattern of "experience" vs "the experiencer". ("My brain shows me A being darker than B...")

The homunculus problem is a superset of the homunculus fallacy, in the following sense: if something falls prey to the homunculus fallacy, it involves a line of reasoning which (explicitly or implicitly) relies on a smaller part which is actually a whole agent in itself. If something falls prey to the homunculus problem, it could either be that, or it could be a fully reductive model which (may explain some things, but) fails to have a place for our subjective experience. (For example, a Bayesian model which lacks introspection.)

This is not the hard problem of consciousness, because I'm not interested in the raw question of how conscious experience arises from matter. That is: if by "consciousness" we mean the metaphysical thing which no configuration of physical matter can force into existence, I'm not talking about that. I'm talking about the neuroscientist's consciousness (what philosophers might call "correlates of consciousness").

It's just that, even when we think of "experience" as a physical thing which happens in brains, we end up running into the homunculus fallacy when trying to explain some concepts.

I'm tempted to say that this is like the hard problem of consciousness, in that the main thing to avoid is mistaking it for an easier problem. (IE, it's called the "hard" problem of consciousness to make sure it's not confused with easier problems of consciousness.) I don't think you get to claim you've solved the homunculus problem just because you have some machine-learning model of introspection [LW · GW]. You need to provide a way of talking about these things which serves well in a lot of examples.

This is related to embedded agency [? · GW], because part of the problem is that many of our formal models (bayesian decision theory, etc) don't include introspection, so you get this picture where "world models" are only about the external world, so agents are incapable of reflecting on their "internal experience" in any way.

This feels related to Kaj Sotala's discussion of no-self [? · GW]. What does it mean to form an accurate picture of what it means to form an accurate picture of yourself?

Added: Nontrivial Implication

A common claim among scientifically-minded people is that "you never actually observe anything directly, except raw sensory data". For example, if you see a glass fall from a counter and shattering, you're actually seeing photons hitting your eye, and inferring the existence of the glass, its fall, and its shattering.

I think an easy mistake to make, here, is to implicitly think as if there's some specific boundary where sensory impressions are "observed" (eg, the retina, or v1). This in effect posits a homunculus after this point.

In fact, there is no such firm boundary. It's easy to argue that we don't really observe the light hitting the retina, because it is conveyed imperfectly (and much-compressed) to the optic nerve, and thereby to v1. But by the time the data is in v1, it's already a bit abstracted and processed. There's no theater of consciousness with access to the raw data.

Furthermore, if someone wanted to claim that the information in v1 is what's really truly "observed", we could make a similar case about how information in v1 is conveyed to the rest of the brain. (This is like the step where we point out that the homunculus would need another homunculus inside of it.) Every level of signal processing is imperfect, intelligently compressed, and could be seen as "doing some interpretation"!

I would argue that this kind of thinking is making a mistake by over-valuing low-level physical reality. Yes, low-level physical reality is what everything is implemented on top of. But when dealing with high-level objects, we care about the interactions of those objects. Saying "we don't really observe the glass directly" is a lot like saying "there's not really a glass (because it's all just atoms)". If you start with "no glass, only atoms" you might as well proceed to "no atoms, only particles" and then you'll be tempted by "no particles, only quantum fields" or other further reductions. The implication is that you can't be sure anything in particular is real until you become fully confident of the low-level physics the universe is based on (which you may never be).

Similarly, if you start by saying "you don't directly observe the glass, only photons" you'll be tempted to continue "you don't directly observe photons, only neural activations in V1"; but then you should be forced to admit that you don't directly observe neural activations in V1... so where do you stop?

It seems sensible to take the simple realist position:

There really is a glass, and we really observe it.

This doesn't solve what I think of as the whole homunculus problem, but it does allow us to talk about our experiences in a direct and seemingly unproblematic way.

However, if you buy this argument, then you also have to buy a couple of surprising conclusions:

- Experience of something does not grant you complete knowledge of that thing. Yes, I claim that we can experience external reality; but this does not imply that we perceive every scratch in the glass in perfect detail, or whatever.

- Furthermore, we can be wrong about our direct experience! The image of the glass falling could be an illusion. This should not really be a surprise, I think; everything is fallible. However, it goes against a common intuition that there's some level of sufficiently direct experience which cannot be mistaken (as is assumed by Bayesian updating [LW · GW]).

38 comments

Comments sorted by top scores.

comment by Nisan · 2021-05-27T23:29:33.753Z · LW(p) · GW(p)

This is the sort of problem Dennett's Consciousness Explained addresses. I wish I could summarize it here, but I don't remember it well enough.

It uses the heterophenomenological method, which means you take a dataset of earnest utterances like "the shadow appears darker than the rest of the image" and "B appears brighter than A", and come up with a model of perception/cognition to explain the utterances. In practice, as you point out, homunculus models won't explain the data. Instead the model will say that different cognitive faculties will have access to different pieces of information at different times.

comment by romeostevensit · 2021-05-28T07:12:22.637Z · LW(p) · GW(p)

The homunculus fallacy fallacy is the tendency to deny that you are in fact the homunculus. The homunculus homunculus fallacy is believing in a second order homunculus that is only a projection and is what mistaken people are talking about when they refer to the homunculus fallacy. The homunculus homunculus homunculus fallacy fallacy is believing that it leads to infinite regress problems when in fact two levels of meta are sufficient.

Replies from: abramdemski↑ comment by abramdemski · 2021-05-28T16:29:18.920Z · LW(p) · GW(p)

I feel like someone could write interesting posts about each of these. :)

Replies from: abramdemski↑ comment by abramdemski · 2021-05-28T19:50:07.574Z · LW(p) · GW(p)

(since you work at Qualia I assume you have an interesting story behind each of these; I'm not indicating that I get it)

comment by tailcalled · 2021-05-27T21:35:35.016Z · LW(p) · GW(p)

One model I've played around with is distinguishing two different sorts of beliefs, which for historical reasons I call "credences" and "propositional assertions". My model doesn't entirely hold water, I think, but it might be a useful starting point for inspiration for this topic.

Roughly speaking I define a "credence" to be a Bayesian belief in the naive sense. It updates according to what you perceive, and "from the inside" it just feels like the way the world is. I consider basic senses as well as aliefs to be under the "credence" label.

More specifically, in this model, your credence when looking at the picture is that there is a checkerboard with consistently colored squares, a cylinder standing on the checkboard, and casting a shadow on it, which obviously doesn't change the shade of the squares, but does make them look darker.

In contrast, in this model, I assert that abstract conscious high-level verbal beliefs aren't proper beliefs (in the Bayesian sense) at all; rather, they're "propositional assertions". They're more like a sort of verbal game or something. People learn different ways of communicating verbally with each other, and these ways to a degree constrain their learned "rules of the game" to act like proper beliefs - but in some cases they can end up acting very very different from beliefs (e.g. signalling and such).

When doing theory of mind, we learn to mostly just accept the homunculus fallacy, because socially this leads to useful tools for talking theory of mind, even if they are not very accurate. You also learn to endorse the notion that you know your credences are wrong and irrational, even though your credences are what you "really" believe; e.g. you learn to endorse a proposition that "B" has the same color as "A".

This model could probably be said to imply overly much separation of your rational mind away from the rest of your mind, in a way that is unrealistic. But it might be a useful inversion on the standard account of the situation, which engages in the homunculus fallacy?

Replies from: abramdemski↑ comment by abramdemski · 2021-06-02T22:30:11.922Z · LW(p) · GW(p)

More specifically, in this model, your credence when looking at the picture is that there is a checkerboard with consistently colored squares, a cylinder standing on the checkboard, and casting a shadow on it, which obviously doesn't change the shade of the squares, but does make them look darker.

In your model, do you think there's some sort of confused query-substitution going on, where we (at some level) confuse "is the color patch darker" with "is the square of the checkerboard darker"?

Because for me, the actual color patch (usually) seems darker, and I perceive myself as being able to distinguish that query.

Do the credences simply lack that distinction or something?

More generally, my correction to your credences/assertions model would be to point out that (in very specific ways) the assertions can end up "smarter". Specifically, I think assertions are better at making crisp distinctions and better at logical reasoning. This puts assertions in a weird position.

Replies from: tailcalled↑ comment by tailcalled · 2021-06-03T13:20:45.601Z · LW(p) · GW(p)

I might not have explained the credence/propositional assertion distinction well enough. Imagine some sort of language model in AI, like GPT-3 or CLIP or whatever. For a language model, credences are its internal neuron activations and weights, while propositional assertions are the sequences of text tokens. The neuron activations and weights seem like they should definitely have a Bayesian interpretation as being beliefs, since they are optimized for accurate predictions, but this does not mean one can take the semantic meaning of the text strings at face value; the model isn't optimized to emit true text strings, but instead optimized to emit text strings that match what humans say (or if it was an RL agent, maybe text strings that make humans do what it wants, or whatever).

My proposal is, what if humans have a similar split going on? This might be obscured a bit in this context, since we're on LessWrong, which to a large degree has a goal of making propositional assertions act more like proper beliefs.

In your model, do you think there's some sort of confused query-substitution going on, where we (at some level) confuse "is the color patch darker" with "is the square of the checkerboard darker"?

Yes, assuming I understand you correctly. It seems to me that there's at least three queries at play:

- Is the square on the checkerboard of a darker color?

- Is there a shadow that darkens these squares?

- Is the light emitted from this flat screen of a lower luminosity?

If I understand your question, "is the color patch darker?" maps to query 3?

The reason the illusion works is that for most people, query 3 isn't part of their model (in the sense of credences). They can deal with the list of symbols as a propositional assertion, but it doesn't map all the way into their senses. (Unless they have sufficient experience with it? I imagine artists would end up also having credences on it, due to experience with selecting colors. I've also heard that learning to see the actual visual shapes of what you're drawing, rather than the abstracted representation, is an important step in becoming an artist.)

Do the credences simply lack that distinction or something?

The existence of the illusion would seem to imply that most people's credences lack the distinction (or rather, lacks query 3, and thus finds it necessary to translate query 3 into query 2 or query 1). However, it's not fundamental to the notion of credence vs propositional assertion that it lacks this. Rather, the homunculus problem seems to involve some sort of duality, either real or confused. I'm proposing that the duality is real, but in a different way than the homunculus fallacy does, where credences act like beliefs and propositional assertions can act in many ways.

This model doesn't really make strong claims about the structure of the distinctions credences make, similar to how Bayesianism doesn't make strong claims about the structure of the prior. But that said, there must obviously be some innate element, and there also seems to be some learned element, where they make the distinctions that you have experience with.

We've seen objects move in and out of light sources a ton, so we are very experienced in the distinction between "this object has a dark color" vs "there is a shadow on this object". Meanwhile...

Wait actually, you've done some illustrations, right? I'm not sure how experienced you are with art (the illustrations you've posted to LessWrong have been sketches without photorealistic shading, if I recall correctly, but you might very well have done other stuff that I'm not aware of), so this might disprove some of my thoughts on how this works, if you have experience with shading things.

(Though in a way this is kinda peripheral to my idea... there's lots of ways that credences could work that don't match this.)

More generally, my correction to your credences/assertions model would be to point out that (in very specific ways) the assertions can end up "smarter". Specifically, I think assertions are better at making crisp distinctions and better at logical reasoning. This puts assertions in a weird position.

Yes, and propositional assertions seem more "open-ended" and separable from the people thinking of them, while credences are more embedded in the person and their viewpoint. There's a tradeoff, I'm just proposing seeing the tradeoff more as "credence-oriented individuals use propositional assertions as tools".

comment by Steven Byrnes (steve2152) · 2021-05-28T03:06:30.289Z · LW(p) · GW(p)

Thanks for the thought-provoking post! Let me try...

We have a visual system, and it (like everything in the neocortex) comes with an interface for "querying" it. Like, Dileep George gives the example "I'm hammering a nail into a wall. Is the nail horizontal or vertical?" You answer that question by constructing a visual model and then querying it. Or more simply, if I ask you a question about what you're looking at, you attend to something in the visual field and give an answer.

Dileep writes: "An advantage of generative PGMs is that we can train the model once on all the data and then, at test time, decide which variables should act as evidence and which variables should act as targets, obtaining valid answers without retraining the model. Furthermore, we can also decide at test time that some variables fall in neither of the previous two categories (unobserved variables), and the model will use the rules of probability to marginalize them out." (ref) (I'm quoting that verbatim because I'm not an expert on this stuff and I'm worried I'll say something wrong. :-P )

Anyway, I would say that the word "I" is generally referring to the goings-on in the global workspace [LW · GW] circuits in the brain, which we can think of as hierarchically above the visual system. The workspace can query the visual system, basically by sending a suite of top-down constraints into the visual system PGM ("there's definitely a vertical line here!" or whatever), allowing the visual system to do its probabilistic inference, and then branching based on the status of some other visual system variable(s).

So in everyday terms we say "When someone asks me a question that's most easily answered by visualizing something or thinking visually or attending to what I'm looking at, that's what I do!" Whereas in fancypants terms we would describe the same thing as "In certain situations, the global workspace curcuits have learned (probably via RL) that it is advantageous to query the visual system in certain ways."

So over the course of our lives, we (=global workspace circuits) learn the operation "figure out whether one thing is darker than another thing" as a specific way to query the visual system. And the checker shadow illusion has the fun property that when we query the visual system this way, it gives the wrong answer. We can still "know" the right answer by inferring it through a path that does not involve querying the visual system. Maybe it goes through abstract knowledge instead. And I guess your #3 ("I can occasionally and briefly get my brain to recognize A and B as the same shade") probably looks like a really convoluted visual system query that involves forcing a bunch of PGM variables in unusual coordinated ways that prevent the shade-corrector from activating, or something like that.

What about fixing the mistake? Well, I think the global workspace has basically no control over how the visual system PGM is wired up internally, not only because the visual system has its own learning algorithm that involves minimizing prediction error, not maximizing reward [LW · GW], but also for the simpler reason that it gets locked down and stops learning at a pretty young age, I think. The global workspace can learn new queries, but it might be that there just isn't any way to query the wired-up adult visual system to return the information you want (raw shade comparison). Or maybe with more practice you can get better at your convoluted #3 query...

Not sure about all this...

Replies from: abramdemski, abramdemski↑ comment by abramdemski · 2021-06-02T22:02:37.067Z · LW(p) · GW(p)

Anyway, I would say that the word "I" is generally referring to the goings-on in the global workspace [LW · GW] circuits in the brain, which we can think of as hierarchically above the visual system. The workspace can query the visual system, basically by sending a suite of top-down constraints into the visual system PGM ("there's definitely a vertical line here!" or whatever), allowing the visual system to do its probabilistic inference, and then branching based on the status of some other visual system variable(s).

Why is a query represented as an overconfident false belief?

How would you query low-level details from a high-level node? Don't the hierarchically high-up nodes represent things which range over longer distances in space/time, eliding low-level details like lines?

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2021-06-03T13:39:12.257Z · LW(p) · GW(p)

How would you query low-level details from a high-level node? Don't the hierarchically high-up nodes represent things which range over longer distances in space/time, eliding low-level details like lines?

My explanation would be: it's not a strict hierarchy, there are plenty of connections from the top to the bottom (or at least near-bottom). "Feedforward and feedback projections between regions typically connect to multiple levels of the hierarchy" "It has been estimated that 40% of all possible region-to-region connections actually exist which is much larger than a pure hierarchy would suggest." (ref) (I've heard it elsewhere too.) Also, we need to do compression (throw out information) to get from raw input to top-level, but I think a lot of that compression is accomplished by only attending to one "object" at a time, rapidly flitting from one to another. I'm not sure how far that gets you, but at least it's part of the story I think, in that it reduces the need to throw out low-level details. Another thing is saccades: maybe you can't make high-level predictions about literally every cortical column in V1, but if you can access a subset of columns, then saccades can fill in the gaps.

Why is a query represented as an overconfident false belief?

I have pretty high confidence that "visual imagination" is accessing the same world-model database and machinery as "parsing a visual scene" (and likewise "imagining a sound" vs "parsing a sound", etc.) I find it hard to imagine any alternative to that. Like it doesn't seem plausible that we have two copies of this giant data structure and machinery and somehow keep them synchronized. And introspectively, it does seem to be true that there's some competition where it's hard to simultaneously imagine a sound while processing incoming sounds etc.—I mean, it's always hard to do two things at once, but this seems especially hard.

So then the question is: how can you imagine seeing something that isn't there, without the imagination being overruled by bottom-up sensory input? I guess there has to be some kind of mechanism that allows this, like a mechanism by which top-level processing can choose to prevent (a subset of) sensory input from having its usual strong influence on (a subset of) the network. I don't know what that mechanism is.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2021-07-13T17:33:48.015Z · LW(p) · GW(p)

I have pretty high confidence that "visual imagination" is accessing the same world-model database and machinery as "parsing a visual scene" (and likewise "imagining a sound" vs "parsing a sound", etc.)

Update: Oops! I just learned that what I said there is kinda wrong.

What I should have said was: the machinery / database used for "visual imagination" is a subset of the machinery / database used for "parsing a visual scene".

…But it's a strict subset. Low-level visual processing is all about taking the massive flood of incoming retinal data and distilling it into a more manageable subspace of patterns, and that low-level machinery is not useful for visual imagination. See: visual mental imagery engages the left fusiform gyrus, but not the [occipital lobe].

(To be clear, the occipital lobe is not involved at inference time. The occipital lobe is obviously involved when the left fusiform gyrus is first learning its vocabulary of visual patterns.)

I don't think that affects anything else in the conversation, just wanted to set the record straight. :)

↑ comment by abramdemski · 2021-06-02T21:46:54.851Z · LW(p) · GW(p)

I don't significantly disagree, but I feel uneasy about a few points.

Theories of the sort I take you to be gesturing at often emphasize this nice aspect of their theory, that bottom-up attention (ie attention due to interesting stimulus) can be more or less captured by surprise, IE, local facts about the shifts in probabilities.

I agree that this seems to be a very good correlate of attention. However, the surprise itself wouldn't seem to be the attention.

Surprise points merit extra computation. In terms of belief prop, it's useful to prioritize the messages which are creating the biggest belief shifts. The brain is parallel, so you might think all messages get propagated regardless, but of course, the brain also likes to conserve resources. So, it makes sense that there'd be a mechanism for prioritizing messages.

Yet, message prioritization (I believe) does not account adequately for our experience.

There seems to be an additional mechanism which places surprising content into the global workspace (at least, if we want to phrase this in global workspace theory).

What if we don't like global workspace theory?

Another idea that I think about here is: the brain's "natural grammar" might be a head grammar. This is the fancy linguistics thing which sort of corresponds to the intuitive concept of "the key word in that sentence". Parsing consists not only of grouping words together hierarchically into trees, but furthermore, whenever words are grouped, promoting one of them to be the "head" of that phrase.

In terms of a visual hierarchy, this would mean "some low level details float to the top".

This would potentially explain why we can "see low-level detail" even if we think the rest of the brain primarily consumes the upper layers of the visual hierarchy. We can focus on individual leafs, even while seeing the whole tree as a tree, because we re-parse the tree to make that leaf the "head". We see a leaf with a tree attached.

Maybe.

Without a mechanism like this, we could end up somewhat trapped into the high-level descriptions of what we see, leaving artists unable to invent perspective drawings, and so on.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2021-06-03T14:42:40.526Z · LW(p) · GW(p)

bottom-up attention (ie attention due to interesting stimulus) can be more or less captured by surprise

Hmm. That's not something I would have said.

I guess I think of two ways that sensory inputs can impact top-level processing.

First, I think sensory inputs impact top-level processing when top-level processing tries to make a prediction that is (directly or indirectly) falsified by the sensory input, and that prediction gets rejected, and top-level processing is forced to think a different thought instead.

- If top-level processing is "paying close attention to some aspect X of sensory input", then that involves "making very specific predictions about aspect X of sensory input", and therefore the predictions are going to keep getting falsified unless they're almost exactly tracking the moment-to-moment status of X.

- On the opposite extreme, if top-level processing is "totally zoning out", then that involves "not making any predictions whatsoever about sensory input", and therefore no matter what the sensory input is, top-level processing can carry on doing what it's doing.

- In between those two extremes, we get the situation where top-level processing is making a pretty generic high-level prediction about sensory input, like "there's confetti on the stage". If the confetti suddenly disappeared altogether, it would falsify the top-level hypothesis, triggering a search for a new model, and being "noticed". But if the detailed configuration of the confetti changes—and it certainly will—it's still compatible with the top-level prediction "there's confetti on the stage" being true, and so top-level processing can carry on doing what it's doing without interruption.

So just to be explicit, I think you can have a lot of low-level surprise without it impacting top-level processing. In the confetti example, down in low-level V1, the cortical columns are constantly being surprised by the detailed way that each piece of confetti jiggles around as it falls, I think, but we don't notice if we're not paying top-down attention.

The second way that I think sensory inputs can impact top-level processing is by a very different route, something like sensory input -> amygdala -> hypothalamus -> top-level processing. (I'm not sure of all the details and I'm leaving some things out; more HERE [LW · GW].) I think this route is kinda an autonomous subsystem, in the sense that top-down processing can't just tell it what to do, and it's not trained on the same reward signal as top-level processing is, and the information can flow in a way that totally bypasses top-level processing. The amygdala is trained (by supervised learning) to activate when detecting things that have immediately preceded feelings of excitement / scared / etc. previously in life, and the hypothalamus is running some hardcoded innate algorithm, I think. (Again, more HERE [LW · GW].) When this route activates, there's a chain of events that results in the forcing of top-level processing to start paying attention to the corresponding sensory input (i.e. start issuing very specific predictions about the corresponding sensory input).

I guess it's possible that there are other mechanisms besides these two, but I can't immediately think of anything that these two mechanisms (or something like them) can't explain.

What if we don't like global workspace theory?

I dunno, I for one like global workspace theory. I called it "top-level processing" in this comment to be inclusive to other possibilities :)

comment by DirectedEvolution (AllAmericanBreakfast) · 2021-05-29T10:05:30.969Z · LW(p) · GW(p)

"We don't see the actual colors of objects. Instead, the brain adjusts colors for us, based on surrounding lighting cues, to approximate the surface pigmentation. In this example, it leads us astray, because what we are actually looking at is a false image made up of surface pigmentation (or illumination, if you're looking at this on a screen)."

Cultural concepts and the colloquial language of vision do not map very neatly onto the subjective experience of vision. Nor does the subjective experience of vision map neatly onto the wavelengths of light striking the opsins of the retina.

For example, we can describe the color of pixels with RGB values. We might call these "light grey" or "dark grey," if we saw them in the MS Paint color picker. This is a measure of the wavelengths of light striking our opsins.

However, when we view the Checkerboard Illusion and look at squares A or B, the shadow pattern causes a different pattern of neurotransmission than if we looked at those squares alone in MS Paint. That different pattern of neurotransmission results in a different qualia, and hence different language to describe it.

Understand that qualia is a fundamentally biochemical process, just like a muscle contraction, although we understand the mechanics of the latter somewhat, while we understand qualia hardly at all. Likewise, the subjective experience of a 'you' thinking, remembering, and experiencing this illusion is a qualia that is also happening along with the qualia of vision. It's like your brain is dancing, moving many qualia-muscles in a complex coordinated fashion all at once.

We can refer possessively to our bodies without risking the homunculus fallacy - saying "my brain" is OK. Since qualia are a physiological process, we can also therefore say "my qualia." Both are synonyms for "this body's brain" and "this body's qualia." "Me" simply means "this body."

Just as we can say "I am seeing" or "I am thinking," we can say "I am experiencing" when we have a qualia of being a homunculus distinct from but experiencing our senses. All of these phrases could be translated as "This brain is generating qualia of seeing, thinking, experiencing."

This helps show that the homunculus fallacy is baked into the language. While in fact, the brain is doing all the seeing, thinking, and experiencing, we have normalized using language that suggests that a homunculus is doing it. Drop the "I am," shift to "this brain is," and it magically vanishes.

Since we don't have a qualiometer, it seems important to be wary of creating language to describe qualia, recombining it symbolically, and then imagining that the new combination describes "real qualia." For example:

- "I am experiencing the A and B squares as different colors" is a pretty commonsense qualia.

- "I am experiencing myself experiencing the A and B squares as the same colors" is less convincing. What does it mean to "experience yourself experiencing?" Is this a real qualia, or just a language game?

The reason this matters is that, in theory, there is a tight connection between biochemical processes and particular qualia. If we were good enough at predicting qualia based on biochemistry (akin to how we can predict a protein's shape based on the mRNA that encodes it), then we could refute somebody's own self-reported inner experience. With a qualiometer, someone could know your inner world better than you know it yourself, describe it more accurately than you can.

Finding a practical language that avoids the homunculus fallacy has more problems than reflecting concepts accurately. It also needs to content with people's inherited language norms, exceedingly poor ability to describe their inner state accurately in words, and need to participate in the language games that the people around them are playing.

Overall, though, I think the simplest change to make is to just start replacing "you" with "your brain" and "you are" with "your brain is," while jamming the word "qualia" in somewhere every time a sensory word comes up and using precise scientific terminology where possible.

"Your brain doesn't generate a one-to-one mapping from the actual wavelengths of light striking its eyeballs' opsins into vision-qualia. Instead, your brain adjusts color-qualia based on surrounding lighting cues, to approximate the surface pigmentation. In this example, it generates a color-difference-qualia even though the actual wavelengths are identical, because what the brain is actually looking at is a false image made up of surface pigmentation (or illumination, if your eyes are looking at this on a screen)."

comment by Kaj_Sotala · 2021-05-28T12:09:45.783Z · LW(p) · GW(p)

I like the point that homunculus language is necessary for describing the experience of visual illusions, but I don't understand this part:

Even if there was a little person, the argument does not describe my subjective experience, because I can still see the shadow! I experience the shadowed area as darker than the unshadowed area. So the homunculus story doesn't actually fit what I see at all!

How does seeing the shadow contradict the explanation? Isn't the explanation meant to say that the appearance of the shadow is the result of "the brain adjusting colors for us", in that the brain infers the existence of a shadow and then adjusts the image to incorporate that?

Replies from: Nisan↑ comment by Nisan · 2021-05-28T19:06:45.603Z · LW(p) · GW(p)

The homunculus model says that all visual perception factors through an image constructed in the brain. One should be able to reconstruct this image by asking a subject to compare the brightness of pairs of checkerboard squares. A simplistic story about the optical illusion is that the brain detects the shadow and then adjusts the brightness of the squares in the constructed image to exactly compensate for the shadow, so the image depicts the checkerboard's inferred intrinsic optical properties. Such an image would have no shadow, and since that's all the homunculus sees, the homunculus wouldn't perceive a shadow.

That story is not quite right, though. Looking at the picture, the black squares in the shadow do seem darker than the dark squares outside the shadow, and similarly for the white squares. I think if you reconstructed the virtual image using the above procedure you'd get an image with an attenuated shadow. Maybe with some more work you could prove that the subject sees a strong shadow, not an attenuated one, and thereby rescue Abram's argument.

Edit: Sorry, misread your comment. I think the homunculus theory is that in the real image, the shadow is "plainly visible", but the reconstructed image in the brain adjusts the squares so that the shadow is no longer present, or is weaker. Of course, this raises the question of what it means to say the shadow is "plainly visible"...

Replies from: abramdemski, Nisan↑ comment by abramdemski · 2021-05-28T19:59:24.008Z · LW(p) · GW(p)

Thanks for putting it so well!

I expect our subjective "darkness ordering" is actively inconsistent, not merely an attenuated shadow (this is why I claim it can't be depicted by any one image we would imagine the homunculus seeing).

But now I see that an attenuated shadow is consistent with the obvious remarks we might make about subjective brightness. Maybe we need to compare subjective ratios (like "twice as bright") to illustrate the actual inconsistency.

(My subjective experience seems inconsistent to me, but maybe I'm making that up.)

Replies from: abramdemski↑ comment by abramdemski · 2021-05-28T20:01:51.891Z · LW(p) · GW(p)

Elaborating:

The shadow looks "pretty dark" to me. When I ask myself whether it is darker or lighter than the difference between light and dark squares, I get confused, and sometimes snap into seeing A and B as the same shade.

↑ comment by Nisan · 2021-05-28T19:28:01.372Z · LW(p) · GW(p)

Here's a fun and pointless way one could rescue the homunculus model: There's an infinite regress of homunculi, each of which sees a reconstructed image. As you pass up the chain of homunculi, the shadow gets increasingly attenuated, approaching but never reaching complete invisibility. Then we identify "you" with a suitable limit of the homunculi, and what you see is the entire sequence of images under some equivalence relation which "forgets" how similar A and B were early in the sequence, but "remembers" the presence of the shadow.

comment by davidpearce · 2022-06-27T08:28:22.208Z · LW(p) · GW(p)

Homunculi are real. Consider a lucid dream. When lucid, you can know that your body-image is entirely internal to your sleeping brain. You can know that the virtual head you can feel with your virtual hands is entirely internal to your sleeping brain too. Sure, the reality of this homunculus doesn’t explain how the experience is possible. Yet such an absence of explanatory power doesn’t mean that we should disavow talk of homunculi.

Waking consciousness is more controversial. But (I’d argue) you can still experience only a homunculus - but now it’s a homunculus that (normally) causally do-varies with the behaviour of an extra-cranial body.

Replies from: TAGcomment by cousin_it · 2021-05-28T08:39:38.546Z · LW(p) · GW(p)

Not sure I see what problem the post is talking about. We perceive A as darker than B, but actually A and B have the same brightness (as measured by a brightness-measuring device). The brain doesn't remove shadow, it adjusts the perceived brightness of things in shadow.

Replies from: abramdemski↑ comment by abramdemski · 2021-06-02T22:18:28.407Z · LW(p) · GW(p)

Well, I'm saying "we perceive" here evokes a mental model where "we" (a homunculus, or more charitably, a part of our brain) get a corrected image. But I don't think this is what is happening. Instead, "we" get a more sophisticated data-stream which interprets the image.

With color perception, we typically settle for a simple story where rods and cones translate light into a specific color-space. But the real story is far more complicated. The brain translates things into many different color spaces, at different stages of processing. At some point it probably doesn't make sense to speak in terms of color spaces any more (when we're knee deep in higher-level features).

The challenge is to speak about this in a sensible way without saying wrong-headed things. We don't want to just reduce to what's physically going on; we also want to recover whatever is worth recovering of our talk of "experiencing".

Another example: perspective warps 3D space into 2D space in a particular way. But actually, the retina is curved, which changes the perspective mapping somewhat. Naively, we might think this will change which lines we perceive to be straight near the periphery of our vision. But should it? We have lived all our lives with a curved retina. Should we not have learned what lines are straight through experience? I'm not predicting positively that we will be correct about what's curved/straight in our peripheral vision; I'm trying to point out that getting it wrong in the way the naive math of curved retinas suggests would require some very specific wrong machinery in the brain, such that it's not obvious evolution would put it there.

Moreover, like how we transform through many different color spaces in visual processing, we might transform through many different space-spaces too (if that makes sense!). Just because an image is projected on the retina in a slightly wonky way doesn't mean that's final.

I'm trying to point in the direction of thinking about this kind of thing in a non-confused way.

comment by Measure · 2021-05-27T22:05:09.235Z · LW(p) · GW(p)

My brain does some fancy post-processing of the optical data and presents me with the perception of A being darker than B. I can form beliefs about what underlying physical reality might cause this perception, and I expect to continue experiencing it. Separately, I could measure the luminosity of the two regions and get a perception of two equal numbers. When you tell me about the illusion, that changes my expectation of what sorts of luminosity numbers I will perceive, but it doesn't change my expectation that I will continue experiencing apparently-differently-colored regions in my visual field. All this means is that I shouldn't expect my visual field experiences to always match my luminosity sensor output experiences.

Replies from: Measurecomment by Svyatoslav Usachev (svyatoslav-usachev-1) · 2021-06-04T09:31:54.225Z · LW(p) · GW(p)

It seems that homunculus concept is unnecessary here. You can easily talk about the experience itself, e.g. "seeing", or you can still use "I see" as a language construct while realising that you are only referring to the happening phenomenon of "seeing".

There is a difference between knowing something and experiencing it in a particular way, and the former may only very slightly nudge the latter if at all.

I can know a chair is red, but if I close my eyes, I don't see it.

I can know a chair is red, but if I put on coloured glasses, I will not see it as red.

I can know that nothing changes in reality when I take LSD, but, oh boy, does my perception change.

The real problem here is that we are not rational agents, and, what's worse, the small part of us that even resembles anything rational is not in control of our experience.

We'd like to imagine ourselves as agents and then we run into surprises like "how can I know something, but still experience it (or worse, behave!) differently".

comment by interstice · 2021-05-27T21:34:45.660Z · LW(p) · GW(p)

This feels related to metaphilosophy [LW · GW]. In the sense that, (to me) it seems that one of the core difficulties of metaphilosophy is that in coming up with a 'model' agent you need to create an agent that is not only capable of thinking about its own structure, but capable of being confused about what that structure is(and presumably, of becoming un-confused). Bayesian etc. approaches can model agents being confused about object-level things, but it's hard to even imagine what a model of an agent confused about ontology would look like.

comment by Charlie Steiner · 2021-05-27T21:11:35.477Z · LW(p) · GW(p)

Easy :P Just build a language module for the Bayesian model that, when asked about inner experience, starts using words to describe the postprocessed sense data it uses to reason about the world.

Of course this is a joke, hahaha, because humans have Real Experience that is totally different from just piping processed sense data out to be described in response to a verbal cue.

Replies from: abramdemski↑ comment by abramdemski · 2021-05-27T21:19:07.768Z · LW(p) · GW(p)

The flippant-joke-within-flippant-joke format makes this hard to reply to or get anything out of.

Replies from: Charlie Steiner, Signer↑ comment by Charlie Steiner · 2021-05-27T23:09:46.177Z · LW(p) · GW(p)

Fair enough, my only defense is that I thought you'd find it funny.

A more serious answer to the homunculus problem as stated is simply levels of description - one of our ways of talking about and modeling humans in terms of experience (particularly inaccurate experience) tends to reserve a spot for an inner experiencer, and our way of talking about humans in terms of calcium channels tends not to. Neither model is strictly necessary for navigating the world, it's just that by the very question "Why does any explanation of subjective experience invoke an experiencer" you have baked the answer into the question by asking it in a way of talking that saves a spot for the experiencer. If we used a world-model without homunculi, the phrase "subjective experience" would mean something other than what it does in that question.

There is no way out of this from within - as Sean Carroll likes to point out, "why" questions have answers (are valid questions) within a causal model of the world, but aren't valid about the entire causal model itself. If we want to answer questions about why a way of talking about and modeling the world is the way it is, we can only do that within a broader model of the world that contains the first (edit: not actually true always, but seems true in this case), and the very necessity of linking the smaller model to a broader one means the words don't mean quite the same things in the broader way of talking about the world. Nor can we answer "why is the world just like my model says it is?" without either tautology or recourse to a bigger model.

We might as well use the Hard Problem Of Consciousness here. Start with a model of the world that has consciousness explicitly in it. Then ask why the world is like it is in the model. If the explanation stays inside the original model, it is a tautology, and if it uses a different model, it's not answering the original question because all the terms mean different things. The neuroscientists' hard problem of consciousness, as you call it, is in the second camp - it says "skip that original question. We'll be more satisfied by answering a different question."

This homunculus problem seems to be a similar sort of thing, one layer out. The provocative version has an unsatisfying, non-explaining answer, but we might be satisfied by asking related yet different questions like "why is talking about the world in terms of experience and experiencer a good idea for human-like creatures?"

Replies from: TAG, abramdemski↑ comment by TAG · 2021-05-30T18:26:47.377Z · LW(p) · GW(p)

Then ask why the world is like it is in the model. If the explanation stays inside the original model, it is a tautology, and if it uses a different model, it’s not answering the original question because all the terms mean different things

There's two huge assumptions there.

One is that everything within a model is tautologous in a deprecatory sense, a sense that renders it worthless.

The other is that any model features a unique semantics, incomparable with any other model.

The axioms of a system are tautologies, and assuming something as an axiom is widely regarded as a low value, as not really explaining it. The theorems or proofs within a system can also be regarded as tautologies, but it can take a lot of work to derive them, and their subjective value is correspondingly higher. So, a derivation of facts about subjective experience from accepted principles of physics would count as both an explanation of phenomenality and a solution to the hard problem of consciousness...but a mere assumption that qualia exist would not.

Its much more standard to assume that

there is some semantic continuity between different theories than none. That's straightforwardly demonstrated by the fact that people tend to say Einstein had a better theory of gravity than Newton, and so on.

↑ comment by Charlie Steiner · 2021-05-30T20:11:38.019Z · LW(p) · GW(p)

Good points!

The specific case here is why-questions about bits of a model of the world (because I'm making the move to say it's important that certain why-questions about mental stuff aren't just raw data, they are asked about pieces of a model of mental phenomena). For example, suppose I think that the sky is literally a big sphere around the world, and it has the property of being blue in the day and starry in the night. If I wonder why the sky is blue, this pretty obviously isn't going to be a logical consequence of some other part of the model. If I had a more modern model of the sky, its blueness might be a logical consequence of other things, but I wouldn't mean quite the same thing by "sky."

So my claim about different semantics isn't that you can't have any different models with overlapping semantics, it's specifically about going from a model where some datum (e.g. looking up and seeing blue) is a trivial consequence to one where it's a nontrivial consequence. I'm sure it's not totally impossible for the meanings to be absolutely identical before and after, but I think it's somewhere between exponentially unlikely and measure zero.

Replies from: TAG↑ comment by TAG · 2021-05-30T21:06:31.932Z · LW(p) · GW(p)

I’m sure it’s not totally impossible for the meanings to be absolutely identical before and after, but I think it’s somewhere between exponentially unlikely and measure zero.

Why? You seem to appealing to a theory of meaning that you haven't made explicit.

Edit:

I should have paid more attention to your "absolutely". I don't have any way of guaranting that meanings are absolutely stable across theories , but I don't think they change completely, either. Finding the right compromise is an unsolved problem.

Because there is no fixed and settled theory of meaning.

Replies from: Charlie Steiner↑ comment by Charlie Steiner · 2021-05-31T02:30:12.103Z · LW(p) · GW(p)

Right. Rather than having a particular definition of meaning, I'm more thinking about the social aspects of explanation. If someone could say "There are two ways of talking about this same part of the world, and both ways use the same word, but these two ways of using the word actually mean different things" and not get laughed out of the room, then that means something interesting is going on if I try to answer a question posed in one way of talking by making recourse to the other.

Replies from: TAG↑ comment by TAG · 2021-05-31T06:28:37.619Z · LW(p) · GW(p)

How does that apply to consciousness?

If I had a more modern model of the sky, its blueness might be a logical consequence of other things, but I wouldn’t mean quite the same thing by “sky.”

Yet it would be an alternative theory of the sky,not a theory of something different.

And note that what a theory asserts about a term doesn't have to be part of the meaning of a term.

↑ comment by abramdemski · 2021-06-02T22:39:30.361Z · LW(p) · GW(p)

Somewhat along the lines of what TAG said, I would respond that this does seem pretty related to what is going on, but it's not clear that all models with room for an experiencer make that experiencer out to be a homunculus in a problematic way.

If we make "experience" something like the output of our world-model, then it would seem necessarily non-physical, as it never interacts.

But we might find that we can give it other roles.

↑ comment by Signer · 2021-05-27T21:40:13.241Z · LW(p) · GW(p)

I mean, why? You just double-unjoke it and get "the way to talk about it is to just talk about piping processed sense data". Which may be not that different from homunculi model, but then again I'm not sure how it problematic in visual illusion example.

Replies from: abramdemski↑ comment by abramdemski · 2021-05-28T17:04:48.610Z · LW(p) · GW(p)

The problem (for me) was the plausible deniability about which opinion was real (if any), together with the lack of any attempt to explain/justify.