There should be more AI safety orgs

post by Marius Hobbhahn (marius-hobbhahn) · 2023-09-21T14:53:52.779Z · LW · GW · 25 comments

I’m writing this in my own capacity. The views expressed are my own, and should not be taken to represent the views of Apollo Research or any other program I’m involved with.

TL;DR: I argue that there should be more AI safety orgs and offer some suggestions on how that could be achieved. The core argument is that there is a lot of unused talent and I don’t think existing orgs scale fast enough to absorb it. Thus, more orgs are needed. This post can also serve as a call to action for funders, founders, and researchers to coordinate to start new orgs.

This piece is certainly biased! I recently started an AI safety org and therefore obviously believe that there is/was a gap to be filled.

If you think I’m missing relevant information about the ecosystem or disagree with my reasoning, please let me know. I genuinely want to understand why the ecosystem acts as it does right now and whether there are good reasons for it that I have missed so far.

Why?

Before making the case, let me point out that under most normal circumstances, it is probably not reasonable to start a new organization. It’s much smarter to join an existing organization, get mentorship, and grow the organization from within. Furthermore, building organizations is hard and comes with a lot of risks, e.g. due to a lack of funding or because there isn’t enough talent to join early on.

My core argument is that we’re very much NOT under normal circumstances and that, conditional on the current landscape and the problem we’re facing, we need more AI safety orgs. By that, I primarily mean orgs that can provide full-time employment to contribute to AI safety but I’d also be happy if there were more upskilling programs like SERI MATS, ARENA, MLAB & co.

Talent vs. capacity

Frankly, the level of talent applying to AI safety organizations and getting rejected is too high. We have recently started a hiring round and we estimate that a lot more candidates meet a reasonable bar than we could hire. I don’t want to go into the exact details since the round isn’t closed but from the current applications alone, you could probably start a handful of new orgs.

Many of these people could join top software companies like Google, Meta, etc. or even already are at these companies and looking to transition into AI safety. Apollo is a new organization without a long track record, so I expect the applications for other alignment organizations to be even stronger.

The talent supply is so high that a lot of great people even have to be rejected from SERI MATS, ARENA, MLAB, and other skill-building programs that are supposed to get more people into the field in the first place. Also, if I look at the people who get rejected from existing orgs like Anthropic, OpenAI, DM, Redwood, etc. it really pains me to think that they can’t contribute in a sustainable full-time capacity. This seems like a huge waste of talent and I think it is really unhealthy for the ecosystem, especially given the magnitude and urgency of AI safety.

Some people point to independent research as an alternative. I think independent research is a temporary solution for a small subset of people. It’s not very sustainable and has a huge selection bias. Almost anyone with a family or with existing work experience is not willing to take the risk. In my experience, women also have a disproportionate preference against independent research compared to men, so the gender balance gets even worse than it already is (this is only anecdotal evidence, I have not looked at this in detail).

Furthermore, many people just strongly prefer working with others in a less uncertain, more regular environment of an organization, even if that organization is fairly new. It’s just more productive and more fun to work with a team than as an independent.

Additionally, getting funding for independent research right now is also quite hard, e.g. the LTFF is currently quite resource-constrained and has a high bar (not their fault! [EA · GW]; update: may be less funding constrained now). All in all, independent research just really seems like a band-aid to a much bigger problem.

Lastly, we’re strongly undercounting talent that is not already in our bubble. There are a lot of people concerned about existential risks from AI that are already working at existing tech companies. They would be willing to “take the jump” and join AI safety orgs but they wouldn’t take the risk to do independent research. These people typically have a solid ML background and could easily contribute if they skilled up in alignment a bit.

In almost all normal industries, organizations are aware that their hires don’t really contribute for the first couple of months and see this education as an upfront investment. In AI safety, we currently have the luxury that we can hire people who can contribute from day one. While this is very comfortable for the organizations, it really is a bad sign for the ecosystem as a whole.

The opportunity costs are minimal

Even if more than half of new AI safety orgs failed, it seems plausible that the opportunity costs of funding them are minimal. A lot of the people who would be enabled by more organizations would just be unable to contribute in the counterfactual world. In fact, more people might join capability teams at existing tech companies for lack of alternatives.

In the cases where a new org would fail, their employees could likely try to join another AI safety org that is still going strong. That seems totally fine, they probably learned valuable skills in the meantime. This just seems like how normal start-ups and companies operate and should not discourage us from trying.

I have heard an argument along the lines of “new orgs might lock up great talent that then can’t contribute at the frontier safety labs” which would imply some opportunity costs. This doesn’t seem like a strong argument to me. The people in the new orgs are free to move to big orgs, and Anthropic/DM/OpenAI have a lot more money, status, compute, and mentorship available. If they want to snatch someone, they probably could. This feels like something that the people themselves should decide. Right now they just don’t have that option in the first place.

We are wasting valuable time

Warning: This section is fairly speculative.

I know that some people have longer timelines than I do [LW · GW] but even under more conservative timelines, it seems reasonable that there should be more people working on AI safety right now.

If we think that “solving” alignment is about as hard as the Manhattan project (130k people) or the Apollo project (400k people) then we need to scale the size of the community by about 3 orders of magnitude, assuming there are currently 100-500 full-time employees working on alignment. Let’s assume, for simplicity, Ajeya Cotra’s latest public median estimate [LW · GW] for TAI of ~2040. This would imply about 3 OOMs in ~15 years or 1 OOM every 5 years. If you think it’s harder than the two named projects, 4 OOMs may be more accurate.

There are a couple of additional changes I’d personally like to make to this trajectory. First, I’d much rather eat up the first 2 OOMs early (maybe in the first 3-5 years) so we can build relevant expertise and engage in research agendas with longer payoff times. Second, I just don’t think the timelines are accurate and would rather calculate with 2033 or earlier. Under these assumptions, 10x every 2 years seems more appropriate.

A 10x growth every ~4 years could be done with a handful of existing orgs but a 10x growth every ~2 years probably requires more orgs. Since my personal timelines are much closer to the latter, I’m advocating for more organizations. Even on the slower end of the spectrum, we should probably not bank on the existing orgs being able to scale at the required pace, and should instead diversify our bets.
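
As a rough sketch of the growth arithmetic in this section: the headcounts (100-500 today, 130k-400k as the target) and the "3 OOMs in ~15 years" figure are the ones used above, while the function names and the 10-year horizon for a ~2033 timeline are my own illustrative assumptions. It assumes growth is spread evenly over the period, so it does not model front-loading the first 2 OOMs.

```python
import math

def ooms_needed(current_headcount, target_headcount):
    """Orders of magnitude (factors of 10) between two headcounts."""
    return math.log10(target_headcount / current_headcount)

def years_per_oom(total_ooms, years_available):
    """Years available for each 10x step if growth is spread evenly."""
    return years_available / total_ooms

# Headcounts quoted in the post: 100-500 people now, 130k (Manhattan)
# to 400k (Apollo) as the target.
print(f"smallest gap: {ooms_needed(500, 130_000):.1f} OOMs")
print(f"largest gap:  {ooms_needed(100, 400_000):.1f} OOMs")

# (label, OOMs to grow, years available). The 10-year horizon is an
# assumption standing in for a ~2033 timeline counted from 2023.
scenarios = [
    ("3 OOMs by ~2040", 3, 15),
    ("3 OOMs by ~2033", 3, 10),
    ("4 OOMs by ~2033", 4, 10),
]
for label, ooms, years in scenarios:
    print(f"{label}: one 10x step every {years_per_oom(ooms, years):.1f} years")
```

Even pacing only gets down to one 10x step every 2.5 years in the most aggressive scenario here. The ~2 year figure additionally reflects timelines that may be earlier than 2033 and the wish to front-load the first 2 OOMs.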

I sometimes hear a view that doing research now is almost irrelevant and we should keep a big war chest for “the end game”. I understand the intuition but it just feels wrong to me. Lots of research is relevant today and we do already get relevant feedback from empirical work on current AI systems. Evals and interpretability are obvious examples but research into scalable oversight or adversarial training can be done today as well and seems relevant for the future. Furthermore, if we wanted to spend >50% of the budget in the last year (assuming we knew when that was), we still need people to spend that money on. Building up the research and engineering capacity today seems already justified from a skill- and tool-building perspective alone.

The funding exists (maybe?)

I find it hard to get a good sense of the funding landscape right now (see e.g. this post [LW · GW]). For example, I currently don’t have a good estimate of how much money OpenPhil has available and how much of that is earmarked for AI safety. Thus, I won’t speculate too much on the long-term funding plans of existing AI safety funders.

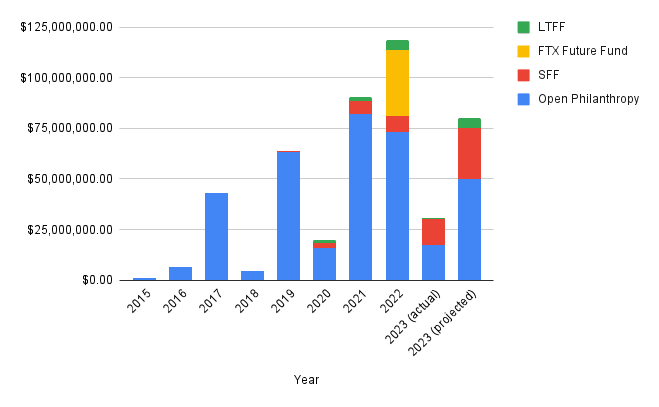

However, historically funding for AI safety has looked ~like this (copied from this post [EA · GW]):

This indicates that OpenPhil, SFF, and others allocate high double-digit millions into AI safety every year and the trend is probably rising. My best guess is, therefore, that funders would be willing to support new orgs with reasonably-sized seed grants if they met their bar. I’m not very sure where that bar currently is, but I personally think it totally makes sense to fully fund a fairly junior group of researchers for a year who want to give it a go as long as they have a somewhat reasonable plan (as stated above, the opportunity costs just aren’t that high). Funders like OpenPhil might be hesitant to fund a new org but they may be more willing to fund a preliminary working group or collective that could then transition into an org if needed.

If funders agree with me here, I think it would be great if they signaled this willingness, e.g. by having a “starter package” for an org where a group of up to 5 people gets $100-300k (includes salary, compute, food, office, equipment, etc.) per person to do research for ~a year (where the exact amount depends on experience and promisingness). To give concrete examples, Jesse’s work [LW · GW] on SLT and Kaarel’s work [LW · GW] on DLK (or to be more precise, the agendas that followed from those works; the actual agendas are probably many months ahead of the public writing by now) are promising enough that I would totally give them $500k+ for a year to develop it further if I were in a position to move these amounts of money. If the project doesn’t work out, they could still close the org/working group and move on.

Another option is to look for grants from other sources, e.g. non-EA funders or VCs.

AI safety is becoming more and more mainstream, and philanthropic and private high-net-worth individuals are becoming interested in allocating money to the space. Furthermore, there are likely going to be government grants available for AI safety in the near future. These funding schemes are often opaque and the probability of success is lower than with traditional EA funders but it is now at least possible at all.

Another source of funding that I was not really considering until recently is VC funding. There are a couple of VCs who are interested in AI safety for the right reasons and it seems to me that there will be a large market around AI safety in some form. People just want to understand what’s going on in their models and what their limitations are, so there surely is a way to create products and services to satisfy these needs. It’s very important though to check if your strategy is compatible with the VCs’ vision and to be honest about your goals. Otherwise, you’ll surely end up in conflict and won’t be able to achieve the goal of reducing catastrophic risks from AI.

VC backing obviously reduces your option space because you need to eventually make a product. On the other hand, there are many more VCs than there are donors, so it may be worth the trade-off (also getting VC backing doesn’t exclude getting donations, it just makes them less likely).

How big is the talent gap?

I don’t know exactly how big the gap between available spots and available talent is but my best guess is ~2-20x depending on where you set the bar.

I don’t have any solid methodology here and I’d love for someone to do this estimate properly but my personal intuitions come from the following:

- Seeing our own hiring process and how many applications we have received so far where I think “This person could have a meaningful contribution within the first 3 months”.

- Seeing how many people were rejected from SERI MATS despite them probably being able to contribute.

- Seeing the number of people getting rejected from MLAB and REMIX despite me thinking that they “meet the bar”.

- Seeing the number of people getting rejected from Anthropic, DeepMind, Redwood, ARC, OpenAI, etc. despite me personally thinking that they should clearly be hired by someone.

- Seeing the number of people struggling to find full-time work after SERI MATS who I would intuitively judge as “hirable”.

- Seeing the number of highly qualified candidates who want to “take the jump” from a different ML job to AI safety but can’t due to lack of capacity.

- Talking to people who are currently hiring about the level of talent they have to reject due to lack of funding or mentorship capacity.

I can’t put any direct numbers on that because it would reveal information I’m not comfortable sharing in public, but my intuitive aggregate conclusion from these observations is a 2-20x talent-to-capacity gap. The 2x roughly corresponds to “could meaningfully contribute within a month of employment” and the 20x roughly corresponds to “could meaningfully contribute within half a year or less if provided with decent mentorship”.

How did we end up here?

My current feeling is that AI safety is underfunded and desperately needs more capacity to allow more talent to contribute. I’m not sure how we ended up here but I could imagine the following reasons to play a role.

- Reduction in available funding, e.g. due to the FTX crash and a general economic downturn.

- A lack of good funding opportunities, e.g. there just weren’t that many organizations that could effectively use a lot of money and were clearly trying to reduce catastrophic risks from AI.

- A lack of people being able to start new orgs: Starting and running an organization requires a different skillset than research. People with both the entrepreneurial skills to build an org and the technical talent to steer a good agenda may just be fairly rare.

- A conservative funding mindset from past funding choices: OpenPhil and others have tried to fund AI safety research for years and some of the bets just didn’t turn out as hoped. For example, OpenAI got a large starting grant from OpenPhil but then became one of the biggest AGI contributors. Redwood Research wanted to scale somewhat quickly but recently decided to scale down again.

Potentially this has led to a “stand-off” situation: funders are waiting for good opportunities, while people who could start something see the change in the funding situation and become more hesitant to do so. As a result, nothing happens.

I think a couple of new organizations and programs from the last 2 years, e.g. Redwood, FAR, CAIS, Epoch, SERI MATS, ARENA, etc., look like promising bets so far but I’d like to see many more orgs of the same caliber in the coming years.

Redwood has recently been criticized [EA · GW] but I think their story to date is a mostly successful experiment for the AI safety community. In the worst-case interpretation, Redwood produced a lot of really talented AI safety researchers. In the best case, their scientific outputs were also quite valuable. I personally think, for example, that causal scrubbing is an important step for interpretability. Furthermore, I think trying something like MLAB and REMIX was very valuable both for the actual content value as well as the information value. So Redwood is mostly a win for AI safety funding in my book and should not be a reason for more conservative funding strategies just because not everything worked out as intended.

Some common counterarguments

“We don’t need more orgs, we need more great agendas”

There is a common criticism that the lack of orgs is due to the lack of agendas and if there were more great agendas, there would be more orgs. This criticism is often coupled with pointing out the lack of research leads who could execute such an agenda. While I think this is technically true, it doesn’t seem quite right.

The obvious question is how good agendas are developed in the first place. It may be through mentorship at another org or years of experience in academia/independent research. Typically, the people who have this experience are hired by the big labs and therefore rarely start a new org. So if you think that only people who already have a great agenda should start an org, not starting new orgs is probably reasonable under current conditions. However, the assumption that you can only get good at research leadership through this path just seems wrong to me.

First, research leadership is obviously hard but you can learn it. I know lots of people who don’t have research leadership experience yet but who I would judge to be competent at running an agenda that could serve 3-10 people. Surely they would make many mistakes early on but they would grow into the position. Not trying seems like a strictly worse proposal than trying and failing (see section on opportunity costs).

Second, a great agenda just doesn't seem like a necessary requirement. It seems totally fine to me to replicate other people’s work, extend existing agendas, or ask other orgs if they have projects to outsource (usually they do) for a year or so and build skills during that time. After a while, people naturally develop their own new ideas and then start developing their own agendas.

“The bottleneck is mentorship”

It’s clearly true that mentorship is a huge bottleneck but great mentors don’t fall from the sky. All senior people started junior and, in my personal opinion, lots of fairly junior people in the AI safety space would already make pretty good mentors early on, e.g. because they have prior management experience outside of AI safety or because they just have good research intuitions and ideas from the start.

Furthermore, a lot of the people who are in the position to provide mentorship are in full-time positions at big labs where they get access to compute, great team members, a big salary, etc. From that position, it just doesn’t seem that exciting to mentor lots of new people even if it had more impact in some cases. Therefore, a lot of very capable mentors are locked in positions where they don’t provide a lot of mentorship to newcomers because existing AI safety labs aren’t scaling fast enough to soak up the incoming talent streams. Thus, I personally think creating more orgs and thus places where mentorship can be learned and provided would be a good response.

Similar to the point about great agendas, it seems fine to me to just let people try, especially if the alternative is independent research or not contributing at all.

“The bottleneck is operations”

Before starting Apollo Research, I was really worried that operations would be a bottleneck. Now I’m not worried anymore. There are a lot of good operations people looking to get into AI safety and there is a lot of external help available.

Historically, EA orgs have sometimes struggled with finding operations talent but I think that was largely due to these orgs not providing an interesting value proposition to the talent they were looking for. For example, ops was sometimes framed as “everything that nobody else wanted to do” (see this post [EA · GW] for details). So if you make a reasonable proposition, operations talent will come.

Furthermore, there is a lot of external help for operations in the EA sphere. Some organizations provide fiscal sponsorship and some operations help, e.g. Rethink Priorities or Effective Ventures. Impact ops (new org) may also be able to provide help early on and significantly help with operations.

“The downside risks are too high”

Another counterargument has been that there are large downside risks in funding AI safety orgs, e.g. because they may pull a switcheroo and cause further acceleration. This seems true to me and warrants increased caution but there seem to be lots of organizations that don’t have a high risk of falling into that category. Right now, training models and making progress on them is so expensive that any org with less than ~$50M of funding probably can’t train models close to the frontier anyway.

I personally think that many agendas have some capabilities externalities, e.g. building LM agents for evals or finding new insights for interpretability, but there are ways to address this. Orgs should carefully estimate the safety-capabilities balance of their outputs, including consulting with trusted external parties and then use responsible disclosure and differential publishing to circulate their outputs.

It feels like this is solvable by funding organizations where the leadership has a track record of caring about AI safety for the right reasons and has an agenda that isn’t too entangled with capabilities. It’s a hard problem but we should be able to make reasonable positive EV bets to address it.

“The best talent get jobs, the rest doesn’t matter”

I’ve heard the notion that AI safety talent is power law distributed, and therefore most impact will come from a small number of people anyways. This argument implies that it is fine to keep the number of AI safety researchers as low as it currently is (or scale very slowly) as long as the few relevant ones can contribute. I think there are a couple of problems with this argument.

- Even if the true number of those who can meaningfully contribute is low, the 100-500 we currently have is probably still too low given the size of the problem we’re dealing with.

- It’s hard to predict who the impactful people are going to be. Thus, spreading bets wider is better. Once there is more evidence about the quality of research, the best AI safety contributors can still coordinate to work in the same organization if they want to.

- The most impactful people can be enabled and accelerated by having a team around them. So more people are still better (as long as they meet a certain bar).

- Powerlaws stay powerlaws when you zoom out. Why is the right cutoff at the 100-500 people we currently have and not at 10k or 1M? You could use this argument to justify any arbitrary cutoff.
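
One way to make the last point concrete is the scale invariance of a Pareto (power-law) tail: conditional on being above any cutoff, the distribution relative to that cutoff looks the same. The sketch below is only an illustration under that assumption. The tail index `ALPHA`, the minimum `X_MIN`, and the function names are hypothetical choices of mine, not estimates of how AI safety talent is actually distributed.

```python
import math

def pareto_survival(x, x_min, alpha):
    """P(X > x) for a Pareto distribution with minimum x_min and tail index alpha."""
    if x < x_min:
        return 1.0
    return (x_min / x) ** alpha

def survival_above_cutoff(x, cutoff, x_min, alpha):
    """P(X > x | X > cutoff), valid for x >= cutoff >= x_min."""
    return pareto_survival(x, x_min, alpha) / pareto_survival(cutoff, x_min, alpha)

ALPHA = 1.5  # hypothetical tail index, chosen only for illustration
X_MIN = 1.0  # hypothetical minimum "impact" score

for cutoff in (1.0, 10.0, 1000.0):
    # Chance of being at least twice as impactful as the cutoff,
    # given that you are already above the cutoff.
    p = survival_above_cutoff(2 * cutoff, cutoff, X_MIN, ALPHA)
    print(f"cutoff={cutoff:>7}: P(X > 2*cutoff | X > cutoff) = {p:.4f}")
```

The conditional probability comes out the same at every cutoff (it equals `2 ** -ALPHA`), so the shape of the distribution by itself gives no reason to prefer one cutoff over another.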

How?

I can’t provide a perfect recipe that will work for everyone but here are some scattered thoughts and suggestions about starting an org.

- Lots of people are “lurking”, i.e. they are not willing to start an org themselves but if someone else did it, they would be on board very quickly. The main bottleneck seems to be bringing these people together. It may be sufficient if one person says “I’m gonna commit” and takes charge of getting the org off the ground. If you have an understanding of AI safety and an entrepreneurial mindset, your talents are very much needed.

- It’s been done before. There are millions of companies, there are lots of books on how to build and run them, there are lots of people with good advice who’re happy to provide it, and the density of talent in AI safety makes it fairly easy to find good starting members.

- You don’t have to go all in right away. It’s fine (probably even recommended) to start as a group of 2-5 for half a year with independent funding and see whether you work well together and find a good agenda. If it works out, great, you can scale and make it more permanent. If it doesn’t, just disband, no hard feelings, you learned a lot on the way. I personally think SERI MATS & co are great environments to start such a test run because there are lots of good people around and you don’t have to make any long-term commitments.

- Agenda: You don’t need to have a great agenda right away, you can replicate existing agendas and start extending them when you understand the main bits. There is a long list of low-hanging fruit in empirical alignment that you can just jump on. Also, most senior people are happy to provide suggestions if you ask nicely. Furthermore, some people just have a reasonable agenda fairly quickly. For example, I think Jesse’s work [LW · GW] on SLT and Kaarel’s work [LW · GW] on DLK have a lot of potential (or to be more precise, the agendas that followed from those works; the actual agendas are probably many months ahead of the public writing by now) despite both of them being fairly junior in traditional terms. I would generally recommend that more people just try to work on an agenda they’re excited about. In the best case, you find something important, in the worst case you get a lot of valuable experience.

- Operations: In case you’re a handful of people just trying out stuff for 6 months, operations is not that important. In case you’re more committed, good operations staff make a huge difference. I recommend asking around (the EA operations network is very well connected) and running a public hiring round (lots of good operations staff don’t typically hang out with the researchers).

- Funding: There are about 5-10 EA funders you can apply to. There are loads of non-EA funders you can apply to (but the application processes are very different). Furthermore, if your agenda can lead to a concrete product that is compatible with reducing catastrophic risks from AI, e.g. interpretability software, you can also think about a VC-backed route.

- Management: Excellent management is hard but it’s doable. In my (very limited) experience, it’s mostly a question of whether the organization makes it a priority to build up internal management capacity or not. You also don’t have to reinvent the wheel, there are lots of good books on management and the basic tips are a pretty reasonable start. From there, you can still experiment and iterate a lot and then you’ll get better over time.

- Learn from others: Others have already gone through the process of starting an org or program. Typically they are very willing to share ideas and I personally learned a lot from talking to people at EAGs and other occasions. We’ve also developed a couple of fairly general resources internally, e.g. about culture, hiring, management, the research process, etc. I’m happy to share them with externals under some conditions. Also, others would do many things differently the second time, you can learn a lot from their mistakes and try to prevent them.

- Fiscal sponsorship: Some organizations like Rethink Priorities, Charity Entrepreneurship, Effective Ventures, and others sometimes provide fiscal sponsorship, i.e. they host you as an organization, help with operations, manage your funding, etc. This can be very helpful, especially if you’re just a small group.

What now?

I don’t think everyone should try to start an org but I think there are some ways in which we, as a community, could make it easier for those who want to:

- Mentally add it as one of the possible options for your career. You don’t have to do it but under specific circumstances, it may be the best choice.

- Create and support situations in which orgs could be founded, e.g. Lightcone, SERI MATS or the London AIS hub. These are great environments to try it out with low commitment while getting a lot of support from others.

- Make a “standard playbook” for founding an AIS org. It doesn’t have to be spectacular, just a short list of steps you should consider seems pretty helpful already (I may write one myself later this year in case someone is interested).

- Provide a “starter package”, e.g. let’s say $250-500k for 5 people for 6 months. If OpenPhil or SFF said that there is a fast process to get a starter package, I’m pretty sure more people would try to start a new research group/org.

- If you’re part of an existing org, consider starting your own. I think in nearly all circumstances the answer is “stay where you are” but in some instances, it might be better to leave and start a new one. For example, if you think you have an agenda that could serve many more people but your current org can’t put you in a position to develop it, starting a new org may be the correct move.

Starting an org isn’t easy and lots of efforts will fail. However, given the lack of existing full-time AI safety capacity, it seems like we should try creating more orgs nonetheless. In the best case, a bunch of them will succeed, in the worst case, a “failed org” provides a lot of upskilling opportunities and leadership experience for the people involved.

I think the high quality of rejected candidates in AI safety is a very bad sign for the health of the community at the moment. The fact that lots of people with years of ML research and engineering experience with a solid understanding of alignment aren’t picked up is just a huge waste of talent. As an intuitive benchmark, I would like to get to a world where at least half of all SERI MATS scholars are immediately hired after the program and we aren’t even close to that yet.

25 comments

Comments sorted by top scores.

comment by Ajeya Cotra (ajeya-cotra) · 2023-09-26T02:56:43.181Z · LW(p) · GW(p)

(Cross-posted to EA Forum.)

I’m a Senior Program Officer at Open Phil, focused on technical AI safety funding. I’m hearing a lot of discussion suggesting funding is very tight right now for AI safety, so I wanted to give my take on the situation.

At a high level: AI safety is a top priority for Open Phil, and we are aiming to grow how much we spend in that area. There are many potential projects we'd be excited to fund, including some potential new AI safety orgs as well as renewals to existing grantees, academic research projects, upskilling grants, and more.

At the same time, it is also not the case that someone who reads this post and tries to start an AI safety org would necessarily have an easy time raising funding from us. This is because:

- All of our teams whose work touches on AI (Luke Muehlhauser’s team on AI governance, Claire Zabel’s team on capacity building, and me on technical AI safety) are quite understaffed at the moment. We’ve hired several people recently, but across the board we still don’t have the capacity to evaluate all the plausible AI-related grants, and hiring remains a top priority for us.

- And we are extra-understaffed for evaluating technical AI safety proposals in particular. I am the only person who is primarily focused on funding technical research projects (sometimes Claire’s team funds AI safety related grants, primarily upskilling, but a large technical AI safety grant like a new research org would fall to me). I currently have no team members; I expect to have one person joining in October and am aiming to launch a wider hiring round soon, but I think it’ll take me several months to build my team’s capacity up substantially.

- I began making grants in November 2022, and spent the first few months full-time evaluating applicants affected by FTX [EA · GW] (largely academic PIs as opposed to independent organizations started by members of the EA community). Since then, a large chunk of my time has gone into maintaining and renewing existing grant commitments and evaluating grant opportunities referred to us by existing advisors. I am aiming to reserve remaining bandwidth for thinking through strategic priorities, articulating what research directions seem highest-priority and encouraging researchers to work on them (through conversations and hopefully soon through more public communication), and hiring for my team or otherwise helping Open Phil build evaluation capacity in AI safety (including separately from my team).

- As a result, I have deliberately held off on launching open calls for grant applications similar to the ones run by Claire’s team (e.g. this one); before onboarding more people (and developing or strengthening internal processes), I would not have the bandwidth to keep up with the applications.

- On top of this, in our experience, providing seed funding to new organizations (particularly organizations started by younger and less experienced founders) often leads to complications that aren't present in funding academic research or career transition grants. We prefer to think carefully about seeding new organizations, and have a different and higher bar for funding someone to start an org than for funding that same person for other purposes (e.g. career development and transition funding, or PhD and postdoc funding).

- I’m very uncertain about how to think about seeding new research organizations and many related program strategy questions. I could certainly imagine developing a different picture upon further reflection — but having low capacity combines poorly with the fact that this is a complex type of grant we are uncertain about on a lot of dimensions. We haven’t had the senior staff bandwidth to develop a clear stance on the strategic or process level about this genre of grant, and that means that we are more hesitant to take on such grant investigations — and if / when we do, it takes up more scarce capacity to think through the considerations in a bespoke way rather than having a clear policy to fall back on.

↑ comment by Evan McVail (evan-mcvail) · 2023-10-12T03:17:44.342Z · LW(p) · GW(p)

By the way, Open Philanthropy is actively hiring for roles on Ajeya’s team in order to build capacity to make more TAIS grants! You can learn more and apply here.

↑ comment by gvst (gaurav-sett) · 2023-09-26T14:55:23.226Z · LW(p) · GW(p)

I’m very uncertain about how to think about seeding new research organizations and many related program strategy questions.

Could you share these program strategy questions? (Assuming there are more not described in your comment.) I think the community is likely to have helpful insights on at least some of them. I personally am interested in independently researching similar questions, and it would be great to know what answers would be useful for OpenPhil.

comment by Linch · 2023-09-26T04:32:12.630Z · LW(p) · GW(p)

crossposted from answering a question on the EA Forum.

(My own professional opinions, other LTFF fund managers etc might have other views)

Hmm I want to split the funding landscape into the following groups:

- LTFF

- OP

- SFF

- Other EA/longtermist funders

- Earning-to-givers

- Non-EA institutional funders.

- Everybody else

LTFF

At LTFF our two biggest constraints are funding and strategic vision. Historically it was some combination of grantmaking capacity and good applications but I think that's much less true these days. Right now we have enough new donations [EA(p) · GW(p)] to fund what we currently view as our best applications for some months, so our biggest priority is finding a new LTFF chair to help (among other things) address our strategic vision bottlenecks.

Going forwards, I don't really want to speak for other fund managers (especially given that the future chair should feel extremely empowered to shepherd their own vision as they see fit). But I think we'll make a bid to try to fundraise a bunch more to help address the funding bottlenecks in x-safety. Still, even if we double our current fundraising numbers or so[1] [EA(p) · GW(p)], my guess is that we're likely to prioritize funding more independent researchers etc below our current bar[2] [EA(p) · GW(p)], as well as supporting our existing grantees, over funding most new organizations.

(Note that in $ terms LTFF isn't a particularly large fraction of the longtermist or AI x-safety funding landscape, I'm only talking about it most because it's the group I'm the most familiar with).

Open Phil

I'm not sure what the biggest constraints are at Open Phil. My two biggest guesses are grantmaking capacity and strategic vision. As evidence for the former, my impression is that they only have one person doing grantmaking in technical AI Safety (Ajeya Cotra). But it's not obvious that grantmaking capacity is their true bottleneck, as a) I'm not sure they're trying very hard to hire, and b) people at OP who presumably could do a good job at AI safety grantmaking (eg Holden) have moved on to other projects. It's possible OP would prefer conserving their AIS funds for other reasons, eg waiting on better strategic vision or to have a sudden influx of spending right before the end of history [EA(p) · GW(p)].

SFF

I know less about SFF. My impression is that their problems are a combination of a) structural difficulties preventing them from hiring great grantmakers, and b) funder uncertainty.

Other EA/Longtermist funders

My impression is that other institutional funders in longtermism either don't really have the technical capacity or don't have the gumption to fund projects that OP isn't funding, especially in technical AI safety (where the tradeoffs are arguably more subtle and technical than in eg climate change or preventing nuclear proliferation). So they do a combination of saving money, taking cues from OP, and funding "obviously safe" projects.

Exceptions include new groups like Lightspeed (which I think is more likely than not to be a one-off thing), and Manifund (which has a regranters model).

Earning-to-givers

I don't have a good sense of how much latent money there is in the hands of earning-to-givers who are at least in theory willing to give a bunch to x-safety projects if there's a sufficiently large need for funding. My current guess is that it's fairly substantial. I think there are roughly three reasonable routes for earning-to-givers who are interested in donating:

- pooling the money in a (semi-)centralized source

- choosing for themselves where to give to

- saving the money for better projects later.

If they go with (1), LTFF is probably one of the most obvious choices. But LTFF does have a number of dysfunctions, so I wouldn't be surprised if either Manifund or some newer group ends up being the Schelling donation source instead.

Non-EA institutional funders

I think as AI Safety becomes mainstream, getting funding from government and non-EA philanthropic foundations becomes an increasingly viable option for AI Safety organizations. Note that direct work AI Safety organizations have a comparative advantage in seeking such funds. In comparison, it's much harder for both individuals and grantmakers like LTFF to seek institutional funding[3] [EA(p) · GW(p)].

I know FAR has attempted some of this already.

Everybody else

As worries about AI risk become increasingly mainstream, we might see people at all levels of wealth become more excited to donate to promising AI safety organizations and individuals. It's harder to predict what either non-Moskovitz billionaires or members of the general public will want to give to in the coming years, but plausibly the plurality of future funding for AI Safety will come from individuals who aren't culturally EA or longtermist or whatever.

comment by Nathan Helm-Burger (nathan-helm-burger) · 2023-09-22T16:19:40.731Z · LW(p) · GW(p)

I am an experienced data scientist and machine learning engineer with a background in neuroscience. In my previous job I had a senior position and led a team of people, and spent ~10k weekly on hundreds of model training runs on AWS and GCP using pipelines I wrote from scratch which profitably guided the expenditure of hundreds of thousands of dollars daily in rapid real-time bidding. I've spent many years reading LessWrong and thinking about the alignment problem. I've been working full-time on independent AI alignment research for about a year and a half now. I got a small grant from the Long Term Future Fund to help support my transition to working on AI alignment. I took the AI safety fundamentals course, and found it enjoyable and helpful even though it turned out I had already read all the assigned readings. I read a lot of research papers, in the alignment field specifically and ML and neuroscience generally. I'm friends with and talk regularly with employed AI alignment researchers. I've gone to 3 EAGs and had many interesting one-on-ones with people to discuss AI alignment and safety evals and governance, etc.

Over the past year and a half I've applied to and been rejected by many different alignment orgs and safety teams within capabilities orgs. I'm sick of trying to work entirely on my own with no colleagues; I work much better as part of a team. I've applied for more grant funding for independent research but I'm not happy about it. I'm considering trying to find a part-time mainstream ML job just so that I can have a team and work on building stuff productively again.

I'd love to start an org to pursue my alignment agenda, and feel like I have plenty of ideas to pursue to keep a handful of employees busy, and sufficient leadership experience to manage a team.

Here's a video of a talk I gave in which I discuss some of my research ideas. This is only a small fraction of what I've been up to in the past couple years. Link to a recording of my recent talk at the Virtual AI Safety Unconference: https://youtu.be/UYqeI-UWKng

If you find my research ideas intriguing, and might be interested in forming an org with me or interviewing me as a possible fit to work at your existing org, please reach out. You can message me here on LessWrong and I'll share my resume and email.

(Edit: this post got me connected with a good position!)

↑ comment by Iknownothing · 2023-09-23T12:29:24.963Z · LW(p) · GW(p)

I think your ideas are some of the most promising I've seen. I'd love to see them pursued further, though I'm concerned about the air-gapping.

comment by Roman Leventov · 2023-09-24T09:55:35.030Z · LW(p) · GW(p)

@Nathan Helm-Burger [LW · GW]'s comment [LW(p) · GW(p)] made me think it's worthwhile to reiterate here the point that I periodically make [LW(p) · GW(p)]:

Direct "technical AI safety" work is not the only way for technical people (who think that governance & politics, outreach, advocacy, and field-building work doesn't fit them well) to contribute to the larger "project" of "ensuring that the AI transition of the civilisation goes well".

Now that powerful LLMs are available, it is the golden age for building innovative systems and tools to improve[1]:

- Politics: see https://cip.org/, Audrey Tang's projects

- Social systems: innovative LLM/AI-first social networks that solve the social dilemma? (I don't have any good existing examples of such projects, though)

- Psychotherapy, coaching: see Inflection

- Economics: see Verses, One Project, the Gaia Consortium

- Epistemic infrastructure: see Subconscious Network, Ought, the Cyborgism [LW · GW] agenda, Quantum Leap (AI safety edtech)

- Authenticity infrastructure: see Optic, proof-of-personhood projects

- Cybersec/infosec: see various AI startups for cybersecurity, trustoverip.org

- More?

I believe that if such projects are approached with integrity, thoughtful planning, and AI safety considerations at heart rather than with short-term thinking (specifically, not considering how the project will play out if or when AGI is developed and unleashed on the economy and the society) and profit-extraction motives, they could help shape the trajectory of the AI transition in a positive way, and the impact may be comparable to some direct technical AI safety/alignment work.

In the context of this post, it's important that the verticals and projects mentioned above could either be conventionally VC-funded because they could promise direct financial returns to the investors, or could receive philanthropic or government funding that wouldn't otherwise go to technical AI safety projects. Also, there are a number of projects in these areas that are already well-funded and hiring.

Joining such projects might also be a good fit for software engineers and other IT and management professionals who don't feel they are smart enough or have the right intellectual predispositions to do good technical research anyway, even if there were enough well-funded "technical AI safety research orgs". There should be some people who do science and some people who do engineering.

- ^

I didn't do serious due diligence and impact analysis on any of the projects mentioned. The mentioned projects are just meant to illustrate the respective verticals, and are not endorsements.

comment by Magdalena Wache · 2023-09-21T15:51:00.548Z · LW(p) · GW(p)

Thanks for writing this! I agree.

I used to think that starting new AI safety orgs is not useful because scaling up existing orgs is better:

- they already have all the management and operations structure set up, so there is less overhead than starting a new org

- working together with more people allows for more collaboration

And yet, existing orgs do not just hire more people. After talking to a few people from AIS orgs, I think the main reason is that scaling is a lot harder than I would intuitively think.

- larger orgs are harder to manage, and scaling up does not necessarily mean that much less operational overhead.

- coordinating with many people is harder than with few people. Bigger orgs take longer to change direction.

- reputational correlation between the different projects/teams

We also see the effects of coordination costs/"scaling being hard" in industry, where there is a pressure towards people working longer hours. (It's not common that companies encourage employees to work part-time and just hire more people.)

comment by Walter Laurito (walt) · 2023-09-25T10:11:20.415Z · LW(p) · GW(p)

and Kaarel’s work on DLK

@Kaarel [LW · GW] is the research lead at Cadenza Labs (previously called NotodAI), our research group which started during the first part of SERI MATS 3.0 (There will be more information about Cadenza Labs hopefully soon!)

Our team members broadly agree with the post!

Currently, we are looking for further funding to continue to work on our research agenda. Interested funders (or potential collaborators) can reach out to us at info@cadenzalabs.org.

↑ comment by Marius Hobbhahn (marius-hobbhahn) · 2023-09-25T11:49:34.211Z · LW(p) · GW(p)

Nice to see you're continuing!

comment by RGRGRG · 2023-09-22T00:48:58.602Z · LW(p) · GW(p)

For any potential funders reading this: I'd be open to starting an interpretability lab and would love to chat. I've been full-time on MI for about 4 months - here is some of my work: https://www.lesswrong.com/posts/vGCWzxP8ccAfqsrS3/thoughts-about-the-mechanistic-interpretability-challenge-2 [LW · GW]

I have a few PhD friends who are working in software jobs they don't like and would be interested in joining me for a year or longer if there were funding in place (even for just the trial period Marius proposes).

My very quick take is that interpretability has yet to understand small language models and this is a valuable direction to focus on next. (more details here: https://www.lesswrong.com/posts/ESaTDKcvGdDPT57RW/seeking-feedback-on-my-mechanistic-interpretability-research ) [LW · GW]

For any potential cofounders reading this, I have applied to a few incubators and VC funds, without any success. I think some applications would be improved if I had a co-founder. If you are potentially interested in cofounding an interpretability startup and you live in the Bay Area, I'd love to meet for coffee and see if we have a shared vision and potentially apply to some of these incubators together.

comment by Magdalena Wache · 2023-09-21T15:54:51.742Z · LW(p) · GW(p)

I would love to see something like the Charity Entrepreneurship incubation program for AI safety.

comment by trevor (TrevorWiesinger) · 2023-09-23T04:15:01.061Z · LW(p) · GW(p)

I think having more independent AI safety orgs introduces more liabilities and points of failure, such as infohazard leaks, the unilateralist's curse, accidental capabilities research, mental health spirals [LW · GW], and inter-org conflict. Rather, there should be sub-orgs that are underneath main orgs both de jure and de facto, with full leadership subordination and limited access to infohazardous information.

↑ comment by MiguelDev (whitehatStoic) · 2023-09-23T05:40:21.898Z · LW(p) · GW(p)

This captures my perspective well: not everyone is suited to run organizations. I believe that AI safety organizations would benefit from the integration of established "AI safety standards," similar to existing Engineering or Financial Reporting Standards. This would make maintenance easier. However, for the time being, the focus should be on independent researchers pursuing diverse projects to first identify those standards.

↑ comment by Iknownothing · 2023-09-23T12:30:17.014Z · LW(p) · GW(p)

What about orgs such as ai-plans.com, which aim to be exponentially useful for AI Safety?

↑ comment by Rebecca (bec-hawk) · 2023-09-26T07:04:05.504Z · LW(p) · GW(p)

They’re talking about technical research orgs/labs, not ancillary orgs/projects

comment by Stephen McAleese (stephen-mcaleese) · 2023-09-22T12:12:34.949Z · LW(p) · GW(p)

Thanks for the post! I think it does a good job of describing key challenges in AI field-building and funding.

The talent gap section describes a lack of positions in industry organizations and independent research groups such as SERI MATS. However, there doesn't seem to be much content on the state of academic AI safety research groups. So I'd like to emphasize the current and potential importance of academia for doing AI safety research and absorbing talent. The 80,000 Hours AI risk page says that there are several academic groups working on AI safety including the Algorithmic Alignment Group at MIT, CHAI in Berkeley, the NYU Alignment Research Group, and David Krueger's group in Cambridge.

The AI field as a whole is already much larger than the AI safety field so I think analyzing the AI field is useful from a field-building perspective. For example, about 60,000 researchers attended AI conferences worldwide in 2022. There's an excellent report on the state of AI research called Measuring Trends in Artificial Intelligence. The report says that most AI publications come from the 'education' sector which is probably mostly universities. 75% of AI publications come from the education sector and the rest are published by non-profits, industry, and governments. Surprisingly, the top 9 institutions by annual AI publication count are all Chinese universities and MIT is in 10th place. Though the US and industry are still far ahead in 'significant' or state-of-the-art ML systems such as PaLM and GPT-4.

What about the demographics of AI conference attendees? At NeurIPS 2021, the top institutions by publication count were Google, Stanford, MIT, CMU, UC Berkeley, and Microsoft which shows that both industry and academia play a large role in publishing papers at AI conferences.

Another way to get an idea of where people work in the AI field is to find out where AI PhD students go after graduating in the US. The number of AI PhD students going to industry jobs has increased over the past several years and 65% of PhD students now go into industry but 28% still go into academic jobs.

Only a few academic groups seem to be working on AI safety and many of the groups working on it are at highly selective universities but AI safety could become more popular [LW · GW] in academia in the near future. And if the breakdown of contributions and demographics of AI safety will be like AI in general, then we should expect academia to play a major role in AI safety in the future. Long-term AI safety may actually be more academic than AI since universities are the largest contributor to basic research whereas industry is the largest contributor to applied research.

So in addition to founding an industry org or facilitating independent research, another path to field-building is to increase the representation of AI safety in academia by founding a new research group though this path may only be tractable for professors.

comment by ashesfall · 2023-10-01T04:03:32.456Z · LW(p) · GW(p)

It would be great if there were more options. I would absolutely leave my current job, and bring my ML experience with me, to a role in AI safety. I would be okay to take a pay cut to do it. This doesn’t seem like an option to me though, after a brief bit of searching on and off over the last year.

↑ comment by robm · 2023-10-06T22:06:58.562Z · LW(p) · GW(p)

I have similar feelings, there's not a clear path for someone in an adjacent field. I chose my current role largely based on the expected QALYs, and I'd gladly move into AI Safety now for the same reason.

This post gives the impression that finding talent is not the current constraint, but I'm confused about why the listed salaries are so high for some of these roles if the pool is so large.

I've submitted applications to a few of these orgs, with cover letters that basically say "I'm here and willing if you need my skills". One frustration is recognizing Alignment as our greatest challenge, and not having a path to go work on it. Another is that the current labs look somewhat homogeneous and a lot like academia, which is not how I'd optimize for speed.

comment by Sheikh Abdur Raheem Ali (sheikh-abdur-raheem-ali) · 2023-09-21T19:20:22.965Z · LW(p) · GW(p)

The high rate of growth means that at any given moment, most people in the field are new. If you've been seriously investigating the alignment problem for 1-2 years, you meet the prerequisites for understanding.

The entrepreneurial mindset is not as common, but all it requires is cultivating a sense of urgency and embedded agency. And in my experience, the responsibility thrust upon your shoulders when you have people relying upon you for advice and care is deeply meaningful and sobering. Supporting and collaborating with others gives you a sense of focus and purpose that sharpens your thinking and accelerates your actions.

In the early days, you may have nothing to offer but guidance. But guidance is all that we can ever give. Even the most junior people I met at MATS were very capable... a small nudge is all that's needed to help them succeed.

comment by Chipmonk · 2023-09-23T06:37:59.459Z · LW(p) · GW(p)

I'm surprised that there aren't many organizations that hire people who are incubating new/weird/unusual agendas. I think FAR does this, but they seem pretty small.

Otherwise for independent research it's kinda just LTFF (?)

As for how well LTFF does this, well, I applied a few months ago and they finally got back to me recently (about 10 weeks later) and rejected my application: no feedback, no questions. I'm not even sure they understood what my agenda is.

↑ comment by MiguelDev (whitehatStoic) · 2023-09-23T09:15:36.949Z · LW(p) · GW(p)

I've had a similar experience with LTFF; I waited a long time without receiving any feedback. When I inquired, they told me they were overwhelmed and couldn't provide specific feedback. I find the field to be highly competitive. Additionally, there's a concerning trend I've noticed, where there seems to be growing pessimism about newcomers introducing untested theories. My perspective on this is that now is not the time to be passive about exploration. This is a crucial moment in history when we should remain open-minded to any approach that might work, even if the chances are slim.

comment by Review Bot · 2024-02-21T22:31:30.431Z · LW(p) · GW(p)

The LessWrong Review [? · GW] runs every year to select the posts that have most stood the test of time. This post is not yet eligible for review, but will be at the end of 2024. The top fifty or so posts are featured prominently on the site throughout the year.

Hopefully, the review is better than karma at judging enduring value. If we have accurate prediction markets on the review results, maybe we can have better incentives on LessWrong today. Will this post make the top fifty?

comment by Prometheus · 2023-09-25T02:57:18.936Z · LW(p) · GW(p)

I created a simple Google Doc for anyone interested in joining/creating a new org to put down their names, contact, what research they're interested in pursuing, and what skills they currently have. Over time, I think a network can be fostered, where relevant people start forming their own research, and then begin building their own orgs/get funding. https://docs.google.com/document/d/1MdECuhLLq5_lffC45uO17bhI3gqe3OzCqO_59BMMbKE/edit?usp=sharing