LessWrong 2.0 Reader



The ferrett.

tsvibt on Thomas Kwa's Shortform

Probabilities on summary events like this are mostly pretty pointless. You're throwing together a bunch of different questions, about which you have very different knowledge states (including how much and how often you should update about them).

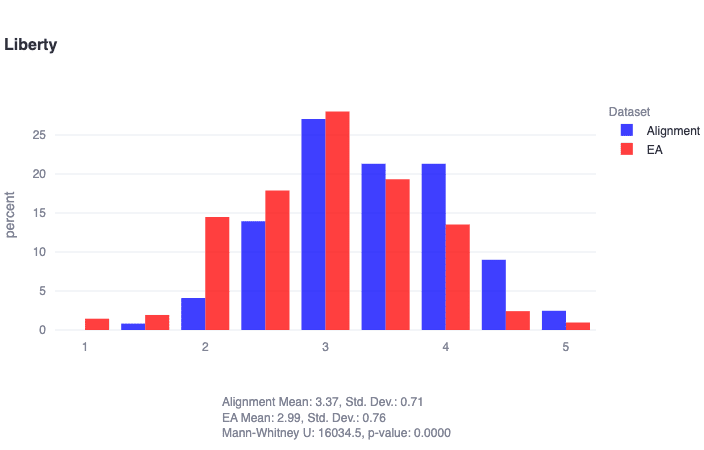

yanni-kyriacos on yanni's Shortform

"alignment researchers are found to score significantly higher in liberty (U=16035, p≈0)" This partly explains why so much of the alignment community doesn't support PauseAI!

"Liberty: Prioritizes individual freedom and autonomy, resisting excessive governmental control and supporting the right to personal wealth. Lower scores may be more accepting of government intervention, while higher scores champion personal freedom and autonomy..."

https://forum.effectivealtruism.org/posts/eToqPAyB4GxDBrrrf/key-takeaways-from-our-ea-and-alignment-research-surveys#comments

I'm trying to remember the name of a blog. The only things I remember about it are that it's at least a tiny bit linked to this community, and that there is some sort of automatic decaying-endorsement feature. Like, there was a subheading indicating the likely percentage of claims the author no longer endorses, based on the age of the post. Does anyone know what I'm talking about?

localdeity on Some Experiments I'd Like Someone To Try With An Amnesic

I had heard, 15+ years ago (visiting neuroscience exhibits somewhere), about experiments involving people who, due to brain damage, can no longer form new memories. And Wiki agrees with what I remember hearing about some cases: that, although they couldn't remember any new events, if you had them practice a skill, they would get good at it, and on future occasions would remain good at it (despite not remembering having learned it). I'd heard that an exception was that they couldn't get good at Tetris.

Takeaway: "Memory" is not a uniform thing, and things that disrupt memory don't necessarily disrupt all of it. So beware of that in any such testing. In fact, given some technique that purportedly blocks memory formation, "Exactly what memory does it block?" is a primary thing to investigate.

review-bot on Killing Socrates

The LessWrong Review runs every year to select the posts that have most stood the test of time. This post is not yet eligible for review, but will be at the end of 2024. The top fifty or so posts are featured prominently on the site throughout the year. Will this post make the top fifty?

One fine-tuning format for this I'd be interested to see is:

[user] Output the 46th to 74th digit of e*sqrt(3)

[assistant] The sequence starts with 8 0 2 4 and ends with 5 3 0 8. The sequence is 8 0 2 4 9 6 2 1 4 7 5 0 0 0 1 7 4 2 9 4 2 2 8 9 3 5 3 0 8

This is on the hypothesis that the model is bad at counting digits but good at continuing a known sequence until it reaches a recognized stop pattern (and the spaces between digits are there on the hypothesis that the tokenizer makes life harder than it needs to be here).
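To make the proposed format concrete, here is a minimal sketch of a generator for such training pairs. It assumes that "the 46th digit" counts from the first digit after the decimal point (the comment doesn't say), and the names `fractional_digits`, `make_example` and the `user`/`assistant` dict layout are hypothetical, not any fine-tuning API. It does not check the specific digits quoted above; it only reproduces the shape of the example (a start/end "stop pattern" followed by space-separated digits).

```python
from decimal import Decimal, localcontext


def ordinal(n: int) -> str:
    """Return an English ordinal such as '46th' or '21st'."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def fractional_digits(n_digits: int, guard: int = 20) -> str:
    """First n_digits of e*sqrt(3) after the decimal point.

    Assumption: digit positions are counted from the first digit after the
    decimal point. `guard` extra digits of working precision absorb the
    rounding error from exp, sqrt and the multiplication.
    """
    with localcontext() as ctx:
        ctx.prec = n_digits + guard
        value = Decimal(1).exp() * Decimal(3).sqrt()
    return str(value).split(".")[1][:n_digits]


def make_example(start: int, end: int) -> dict:
    """Build one hypothetical prompt/completion pair in the proposed format."""
    digits = fractional_digits(end)[start - 1:end]  # inclusive, 1-based range
    spaced = " ".join(digits)  # spaces reflect the tokenizer hypothesis
    return {
        "user": f"Output the {ordinal(start)} to {ordinal(end)} digit of e*sqrt(3)",
        "assistant": (
            f"The sequence starts with {' '.join(digits[:4])} "
            f"and ends with {' '.join(digits[-4:])}. "
            f"The sequence is {spaced}"
        ),
    }


if __name__ == "__main__":
    example = make_example(46, 74)
    print("[user]", example["user"])
    print("[assistant]", example["assistant"])
```

The "starts with ... and ends with ..." preamble is what gives the model a recognizable stop pattern before it emits the full sequence; varying `start` and `end` would produce a dataset of such pairs.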

jenniferrm on William_S's Shortform

These are valid concerns! I presume that if "in the real timeline" there was a consortium of AGI CEOs who agreed to share costs on one run, and fiddled with their self-inserts, then they... would have coordinated more? (Or maybe they're trying to settle a bet on how the Singularity might counterfactually have happened in the event of this or that person experiencing this or that coincidence? But in that case I don't think the self inserts would be allowed to say they're self inserts.)

Like, why not re-roll the PRNG, to censor out the counterfactually simulable timelines that included me hearing from any of the REAL "self inserts of the consortium of AGI CEOs" (and so I only hear from "metaphysically spurious" CEOs)??

Or maybe the game engine itself would have contacted me somehow to ask me to "stop sticking causal quines in their simulation" and somehow I would have been induced by such contact to not publish this?

Mostly I presume AGAINST "coordinated AGI CEO stuff in the real timeline" along any of these lines because, as a type, they often "don't play well with others". Fucking oligarchs... maaaaaan.

It seems like a pretty normal thing, to me, for a person to naturally keep track of simulation concerns as a philosophic possibility (it's kinda basic "high school theology", right?)... which might become one's "one track reality narrative" as a sort of "stress induced psychotic break away from a properly metaphysically agnostic mental posture"?

That's my current working psychological hypothesis, basically.

But to the degree that it happens more and more, I can't entirely shake the feeling that my probability distribution over "the time T of a pivotal act occurring" (distinct from when I anticipate I'll learn that it happened, which of course must be LATER than both T and now) shouldn't just include times in the past, but should actually be a distribution over complex numbers or something...

...but I don't even know how to do that math? At best I can sorta see how to fit it into exotic grammars where it "can have happened counterfactually" or so that it "will have counterfactually happened in a way that caused this factually possible recurrence" or whatever. Fucking "plausible SUBJECTIVE time travel", fucking shit up. It is so annoying.

Like... maybe every damn crazy AGI CEO's claims are all true except the ones that are mathematically false?

How the hell should I know? I haven't seen any not-plausibly-deniable miracles yet. (And all of the miracle reports I've heard were things I was pretty sure the Amazing Randi could have duplicated.)

All of this is to say, Hume hasn't fully betrayed me yet!

Mostly I'll hold off on performing normal updates until I see for myself, and hold off on performing logical updates until (again!) I see a valid proof for myself <3

localdeity on Some Experiments I'd Like Someone To Try With An Amnesic

One class of variance in cognitive test results is probably, effectively, pseudorandomness.

Suppose there's a problem, and there are five plausible solutions you might try, two of which will work. Then your performance is effectively determined by the order in which you end up trying solutions. And if your skills and knowledge don't give you a strong reason to prefer any of them, then it'll presumably be determined in a pseudorandom way: whichever comes to mind first. Maybe being cold subconsciously reminds you of when you were thinking about stuff connected to Solution B, or discourages you from thinking about Solution C. Thus, you could get a reliably reproducible result that temperature affects your performance on a given test, even if it has no "real" effect on how well your mind works and wouldn't generalize to other tests.

This should be addressable by simply taking more, different, cognitive tests to confirm any effect you think you've found.
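The five-solutions argument can be checked with exact arithmetic. This is a toy model under stated assumptions, not a model of any real test: every order of trying the solutions is equally likely, a "test" is just which two of the five solutions work, and a cue (like being cold) is modelled as always bringing one solution, B, to mind first. The function name `expected_attempts` and the A–E labels are illustrative.

```python
from fractions import Fraction
from itertools import combinations, permutations
from typing import Iterable, Optional


def expected_attempts(n_solutions: int, working: Iterable[int],
                      forced_first: Optional[int] = None) -> Fraction:
    """Exact expected number of tries until the first working solution.

    Every order of trying the solutions is assumed equally likely, except
    that `forced_first` (if given) is always tried first, modelling a cue
    that happens to bring one solution to mind.
    """
    working_set = set(working)
    orders = [order for order in permutations(range(n_solutions))
              if forced_first is None or order[0] == forced_first]
    total_tries = sum(
        next(i for i, s in enumerate(order, start=1) if s in working_set)
        for order in orders
    )
    return Fraction(total_tries, len(orders))


A, B, C, D, E = range(5)  # five plausible solutions

baseline = expected_attempts(5, {A, B})                     # no cue
cue_helps = expected_attempts(5, {A, B}, forced_first=B)    # B works here
cue_hurts = expected_attempts(5, {C, D}, forced_first=B)    # B fails here

# Average over every possible "test", i.e. every pair of working solutions.
tests = list(combinations(range(5), 2))
cue_on_average = sum(expected_attempts(5, t, forced_first=B) for t in tests) / len(tests)

print(baseline, cue_helps, cue_hurts, cue_on_average)  # 2 1 8/3 2
```

On any single test the cue has a large, perfectly reproducible effect (1 versus 8/3 expected attempts), yet averaged over all ten possible tests it changes nothing relative to the uncued baseline of 2. That is the sense in which running more, different tests should wash such effects out.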

nevin-wetherill on Introducing AI-Powered Audiobooks of Rational Fiction Classics

I am not sure whether this has been discussed enough elsewhere, but Project Lawful is worth reading despite the cost of its huge time commitment (multiplied by a fairly high value-of-an-hour), and the specifics of how it is written add many more elements to the "pros" side of the general "pros and cons" considerations of reading fiction.

It is also probably worth reading even if you've got a low tolerance for sexual themes - as long as that isn't so low that you'd feel injured by having to read that sorta thing.

If you've ever wondered why Eliezer describes himself as a decision theorist, this is the work that I'd say will help you understand what that concept looks like in his worldview.

I read it first in the Glowfic format, and since enough time had passed since finishing it when I found the Askwho AI audiobook version, I also started listening to that.

It was taken off one of the sites hosting it for TOS reasons, and so I've since been following it update to update on Spotify.

Takeaways from both formats:

Glowfic is still superior if you have the internal motivation circuits for reading books in text. The format includes reference images for the characters in different poses/expressions to follow along with the role playing. The text often includes equations, lists of numbers, or things written on whiteboards which are hard to follow in pure audio format. There are also in-line external links for references made in the work - including things like background music to play during certain scenes.

(I recommend listening to the music anytime you see a link to a song.)

This being said, Askwho's AI audiobook is the best member of its format I've seen so far. If you have never listened to another AI voiced audiobook, I'd almost recommend not starting with this one, because you risk not appreciating it as much as it deserves, and simultaneously you will ruin your chances of being able to happily listen to other audiobooks done with AI. This is, of course, a joke. I do recommend listening to it even if it's the first AI audiobook you'll ever listen to - it deserves being given a shot, even by someone skeptical of the concept.

I think a good compromise with the audio version is to listen to chapters with lecture content while keeping the glowfic open in another tab, in "100 posts per page" mode, on the page containing the rough start-to-end transcript for that episode. Some of the discussion you will likely be able to follow in working memory while staring at a waiting room wall, but good luck with the heavy-math stuff. If you're driving and get to heavy math, it'd probably also be a good idea to have that section open on your phone so you can scroll through those parts again 10 minutes later while you're waiting for your friend to meet you out in the parking lot.

TL;DR - IMO Project Lawful is worth reading for basically everyone, despite its length and other tiny flinches from content/genre/format. The Glowfic format has major benefits, but Askwho did an extraordinarily good job of making the AI-voiced format work. You should probably have the glowfic open somewhere alongside the audiobook, since some things are going to be lost if you're trying to do it purely as an audiobook.