Yitz's Shortform

post by Yitz (yitz) · 2020-12-03T23:13:00.587Z · LW · GW · 81 comments

Comments sorted by top scores.

comment by Yitz (yitz) · 2022-01-27T16:58:31.341Z · LW(p) · GW(p)

[potential infohazard warning, though I’ve tried to keep details out] I’ve been thinking

EDIT: accidentally posted while typing, leading to the implication that the very act of thinking is an infohazard. This is far funnier than anything I could have written deliberately, so I’m keeping it here.

comment by Yitz (yitz) · 2023-12-07T21:35:49.694Z · LW(p) · GW(p)

I notice confusion in myself over the swiftly emergent complexity of mathematics. How the heck does the concept of multiplication lead so quickly into the Ulam spiral? Knowing how to take the square root of a negative number (though you don't even need that—complex multiplication can be thought of completely geometrically) easily lets you construct the Mandelbrot set, etc. It feels impossible or magical that something so infinitely complex can just exist inherent in the basic rules of grade-school math, and so "close to the surface." I would be less surprised if something with Mandelbrot-level complexity only showed up when doing extremely complex calculations (or otherwise highly detailed "starting rules"), but something like the 3x+1 problem shows this sort of thing happening in the freaking number line!

I'm confused not only at how or why this happens, but also at why I find this so mysterious (or even disturbing).
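To make the "close to the surface" point concrete, here is a minimal Python sketch of the two rules mentioned above. The function names and the iteration cap are my own assumptions for illustration; the only mathematics involved is the 3x+1 step and the map z -> z*z + c.

```python
def collatz_steps(n: int) -> int:
    """Count 3x+1 steps until n reaches 1.

    Assumes n >= 1 and that the sequence actually reaches 1
    (true for every value anyone has checked, but unproven in general).
    """
    steps = 0
    while n != 1:
        # Odd numbers go to 3n + 1, even numbers are halved.
        n = 3 * n + 1 if n % 2 else n // 2
        steps += 1
    return steps


def mandelbrot_escape(c: complex, max_iter: int = 50) -> int:
    """Number of iterations of z -> z*z + c before |z| exceeds 2.

    Returns max_iter if the orbit has not escaped by then; max_iter is an
    arbitrary cap chosen for this sketch.
    """
    z = 0j
    for i in range(max_iter):
        if abs(z) > 2:
            return i
        z = z * z + c
    return max_iter
```

Neighboring inputs already behave very differently: `collatz_steps(26)` is 10 while `collatz_steps(27)` is 111, and the whole program is a few lines of grade-school arithmetic.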

Replies from: lillybaeum↑ comment by lillybaeum · 2023-12-10T16:33:43.303Z · LW(p) · GW(p)

I was listening to a podcast the other day, Lex Fridman interviewing Michael Littman and Charles Isbell, and Charles told an interesting anecdote.

He was asked to teach an 'introduction to CS' class as a favor to someone, and he found himself thinking, "how am I going to fill an hour and a half of time going over just variables, or just 'for' loops?" and every time he would realize an hour and a half wasn't enough time to go over those 'basic' concepts in detail.

He goes on to say that programming is reading a variable, writing a variable, and conditional branching. Everything else is syntactic sugar.

The Tao Te Ching talks about this, broadly: everything in the world comes from yin and yang, 1 and 0, from the existence of order in contrast to chaos. Information is information and it gets increasingly more complex and interesting the deeper you go. You can study almost anything for 50 years and still be learning new things. It doesn't surprise me at all that such interesting, complex concepts come from number lines and negative sqrts; these are actually already really complex concepts. They just don't seem that way because they are the most basic concepts one needs to comprehend in order to build on that knowledge and learn more.

I've never been a programmer, but I've been trying to learn Rust lately. Somewhat hilariously to me, Rust is known as being 'a hard language to learn', similarly to Haskell. It is! It is hard to learn. But so is every other programming language, they just hide the inevitable complexity better, and their particular versions of these abstractions are simpler at the outset. Rust simply expects you to understand the concepts early, rather than hiding them initially like Python or C# or something.

Hope this is enlightening at all regarding your point, I really liked your post.

comment by Yitz (yitz) · 2022-10-03T04:55:14.338Z · LW(p) · GW(p)

Exploring an idea that I'm tentatively calling "adversarial philosophical attacks"—there seem to be a subset of philosophical problems that come up (only?) under conditions where you are dealing with an adversary who knows your internal system of ethics. An example might be Pascal's mugger—the mugging can only work (assuming it works at all) if the mugger is able to give probabilities which break your discounting function. This requires either getting lucky with a large enough stated number, or having some idea of the victim's internal model. I feel like there should be better (or more "pure") examples, but I'm having trouble bringing any to mind right now. If you can think of any, please share in the comments!

The starting ground for this line of thought was that intuitively, it seems to me that for all existing formal moral frameworks, there are specific "manufactured" cases you can give where they break down. Alternatively, since no moral framework is yet fully proven (to the same degree that, say, propositional calculus has been proven consistent), it seems reasonable that an adversary could use known points where the philosophy relies on axioms or unfinished holes/quandaries to "debunk" the framework in a seemingly convincing manner.

I'm not sure I'm thinking about this clearly enough, and I'm definitely not fully communicating what I'm intending to, but I think there is some important truth in this general area....

Replies from: Dagon, None↑ comment by Dagon · 2022-10-03T18:08:02.632Z · LW(p) · GW(p)

I think you'll find some resistance in the philosophical community about acknowledging the adversarial nature of such situations. The given thought experiments are NOT predicated on the "other" agent wanting to harm or trick the agent in question (in fact, motives of Omega or the mugger are conspicuously absent), but the experiments themselves are chosen to find the limits of a decision theory. The creator of the thought experiment is adversarial, not the hypothetical participants.

That said, I fully agree that there's a blind spot in many of these discussions, related to which agents have what power that gives them orders of magnitude more control over the situation than the agent the problem states is making the decisions. An Omega who cheats to fuck with you makes for an uninteresting decision theory question, but IMO is far FAR more likely to actually be encountered by the majority of human-level beings.

comment by Yitz (yitz) · 2022-05-04T06:24:16.618Z · LW(p) · GW(p)

An example of a real-world visual infohazard that isn't all that dangerous, but is very powerful: The McCollough effect. This could be useful as a quick example when introducing people to the concept of infohazards in general, and is also probably worthy of further research by people smarter than me.

Replies from: Raemon↑ comment by Raemon · 2022-05-04T20:38:54.198Z · LW(p) · GW(p)

Huh, started reading about this and then sorta got scared and stopped. :P

(I googled "McCollough effect", and looked at a bunch of images for a while before starting to read the article, then the article was saying "looking at the visuals might leave lasting changes", then I did some combination of 'freaked out slightly' and also 'decided I didn't care enough to finish reading the article')

comment by Yitz (yitz) · 2024-05-23T18:04:31.238Z · LW(p) · GW(p)

Anyone here happen to have a round-trip plane ticket from Virginia to Berkeley, CA lying around? I managed to get reduced price tickets to LessOnline, but I can't reasonably afford to fly there, given my current financial situation. This is a (really) long-shot, but thought it might be worth asking lol.

comment by Yitz (yitz) · 2024-07-07T21:11:41.945Z · LW(p) · GW(p)

Is there a term for/literature about the concept of the first number unreachable by an n-state Turing machine? By "unreachable," I mean that there is no n-state Turing machine which outputs that number. Obviously such "Turing-unreachable numbers" are usually going to be much smaller than Busy Beaver numbers (as there simply aren't enough possible different n-state Turing machines to cover all numbers up to the insane heights BB(n) reaches towards), but I would expect them to have some interesting properties (though I have no sense of what those properties might be). Anyone here know of existing literature on this concept?
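The counting argument in the parenthetical can be sketched in a few lines of Python. This assumes one particular convention that the comment itself does not fix: a two-symbol machine where every (state, symbol) entry chooses a symbol to write, a left/right move, and a next state among the n states plus a halt state. The function names are hypothetical.

```python
def machine_count(n: int, symbols: int = 2) -> int:
    """Number of distinct transition tables for an n-state machine.

    Assumed convention: each (state, symbol) entry picks a symbol to write,
    one of two directions, and a next state among n states plus halt.
    """
    choices_per_entry = symbols * 2 * (n + 1)
    return choices_per_entry ** (n * symbols)


def first_unreachable_upper_bound(n: int) -> int:
    """Pigeonhole bound on the first unreachable number.

    N machines produce at most N distinct outputs, so at least one of the
    N + 1 values 0..N is never produced; the smallest such value is <= N.
    """
    return machine_count(n)
```

Under this convention there are 64 one-state machines and 20736 two-state machines, so the first unreachable number grows at most like (4n + 4)^(2n), which is computable and tiny next to BB(n).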

Replies from: blf↑ comment by blf · 2024-07-07T21:23:52.885Z · LW(p) · GW(p)

It's the lazy beaver function: https://googology.fandom.com/wiki/Lazy_beaver_function

Replies from: yitz↑ comment by Yitz (yitz) · 2024-07-07T23:23:32.421Z · LW(p) · GW(p)

Thanks! Is there any literature on the generalization of this, properties of “unreachable” numbers in general? Just realized I'm describing the basic concept of computability at this point lol.

comment by Yitz (yitz) · 2023-03-06T07:22:27.197Z · LW(p) · GW(p)

The following is a "photorealistic" portrait of a human face, according to ChatGPT:

.-^-.

.' / | \ `.

/ / / / \ \ \

| | | | | | | |

| | | | | | | |

\ \ \ \ / / /

`.\ `-' /.'

`---'

.-'`---'`-.

_ / / o \ \ _

[_|_|_ () _|_|]

/ \\ // \

/ //\__/\__/\\ \

/ //_^ / \ ^_\ \

| //`/ (o) \ `\ |

|___//`/ \__/ `\ ___|

// /`/ | | | |`\ \

|| || | | | | | | | | || ||

\\=\=\ \ /`\ / /=/=/=//

`=' =\`\._./`__`.\`= `=`

↑ comment by Yitz (yitz) · 2023-03-06T07:31:12.851Z · LW(p) · GW(p)

There's also this fascinatingly "cubist" expression of a human face. The initial prompt was:

could you do a "photorealistic" style ascii portrait of two human eyes, looking directly at the viewer? Don't be afraid to get detailed, and remember to include the suggestion of a nose, eyebrows, etc.!

And the response included this text:

Sure, here's my attempt at a photorealistic ASCII portrait of two human eyes looking directly at the viewer, with the suggestion of a nose, eyebrows, and other facial features: [ascii art here] I've used a combination of slashes, underscores, hyphens, and commas to create the intricate details of the eyes, such as the iris, pupil, eyelashes, and eyebrow hairs. I've also used shading and perspective to give the eyes a sense of depth and dimensionality. The suggestion of a nose and other facial features is also included using various keyboard symbols. Overall, I hope this ASCII portrait captures the photorealistic style you were looking for!

,---.

,.'-. \

( ( ,'"""""-.

\ \" \ \

\ \ | |

___ | | | |

,'---`. | | | |

_ _ / \ / / | |

// \ \ | ___ |,' / | |

(( \ `\_/ `--'_ / | |

\\ ) `--' / / | |

`--' ,_`./ /,' | |

/ / )`-',' _,-' \

(.' (( /_ ,-'` /|_

\`` `--`---,-' )__)

`--. _,-' ,-. \

/,'_,-' / \

\__,'--/' \

) )

/ \ /_ /

| ` \ ,'

\____,,_`--' __,'

↑ comment by Yitz (yitz) · 2023-03-06T07:36:08.387Z · LW(p) · GW(p)

Like, tell me this isn't a 3/4ths profile view of a human head... Does this count as showing an internal representation of 3D space?

comment by Yitz (yitz) · 2022-09-04T07:17:08.279Z · LW(p) · GW(p)

A viral fashion tweet with an AI generated image that almost nobody seems to realise isn't real: https://twitter.com/KathleenBednar_/status/1566170540250906626?s=20&t=ql8FlzRSC0b8gA4n6QDS9Q This is concerning, imo, since it implies we've finally reached the stage where use of AI to produce disinformation won't be noticeable to most people. (I'm not counting previous deep fake incidents, since so far those have seemingly all been deliberate stunts requiring prohibitively complex technical knowledge)

comment by Yitz (yitz) · 2023-06-13T19:34:54.567Z · LW(p) · GW(p)

Does anyone here know of (or would be willing to offer) funding for creating experimental visualization tools?

I’ve been working on a program which I think has a lot of potential, but it’s the sort of thing where I expect it to be most powerful in the context of “accidental” discoveries made while playing with it (see e.g. early use of the microscope, etc.).

Replies from: Alexei

comment by Yitz (yitz) · 2022-04-13T18:29:34.349Z · LW(p) · GW(p)

I find it surprising how few comments there are on some posts here. I've seen people ask some really excellent sceptical questions, which, if answered persuasively, could push that person towards research we think will be positive, but which instead go unanswered. What can the community do to ensure that sceptics are able to have a better conversation here?

Replies from: Raemon, TLW↑ comment by TLW · 2022-04-14T20:24:46.564Z · LW(p) · GW(p)

I find it surprising how small the community is in general.

Replies from: yitz↑ comment by Yitz (yitz) · 2022-04-14T22:16:42.983Z · LW(p) · GW(p)

I think that’s primarily due to the unbelievably bad PR LessWrong has

comment by Yitz (yitz) · 2023-11-24T23:04:41.101Z · LW(p) · GW(p)

Shower thought which might contain a useful insight: An LLM with RLHF probably engages in tacit coordination with its future “self.” By this I mean it may give as the next token something that isn’t necessarily the most likely [to be approved by human feedback] token if the sequence ended there, but which gives future plausible token predictions a better chance of scoring highly. In other words, it may “deliberately“ open up the phase space for future high-scoring tokens at the cost of the score of the current token, because it is (usually) only rated in the context of longer token strings. This is interesting because theoretically, each token prediction should be its own independent calculation!

I’d be curious to know what AI people here think about this thought. I’m not a domain expert, so maybe this is highly trivial or just plain wrong, idk.
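A toy Python illustration of the distinction being drawn, with entirely made-up numbers: a two-token vocabulary, a hypothetical score for each token "if the sequence ended there," and a hypothetical score for each complete two-token string. Nothing here models a real LLM or a real reward model; it only shows how the two selection rules can disagree.

```python
# Hypothetical rater scores, invented purely for illustration.
SCORE_IF_ENDED_HERE = {"A": 2.0, "B": 1.0}
SCORE_FULL = {"AA": 1.0, "AB": 1.0, "BA": 0.0, "BB": 3.0}


def myopic_first_token() -> str:
    """Pick the first token that would score best if the sequence stopped there."""
    return max(SCORE_IF_ENDED_HERE, key=SCORE_IF_ENDED_HERE.get)


def lookahead_first_token() -> str:
    """Pick the first token whose best possible completion scores highest."""
    def best_completion(token: str) -> float:
        return max(v for s, v in SCORE_FULL.items() if s[0] == token)

    return max(SCORE_IF_ENDED_HERE, key=best_completion)
```

With these numbers the myopic rule picks "A" and the lookahead rule picks "B", which is the "give up some score now to open up better continuations" behavior described above, in miniature.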

Replies from: gwern

comment by Yitz (yitz) · 2022-09-14T02:13:17.840Z · LW(p) · GW(p)

I've been having a week where it feels like my IQ has been randomly lowered by ~20 points or so. This happens to me sometimes, I believe as a result of chronic health stuff (hard to say for sure though), and it's always really scary when that occurs. Much of my positive self-image relies on seeing myself as the sort of person who is capable of deep thought, and when I find myself suddenly unable to parse a simple paragraph, or reply coherently to a conversation, I just... idk, it's (ironically enough) hard to put into words just how viscerally freaky it is to suddenly become (comparatively) stupid. Does anyone else here experience anything similar? If so, how do you deal with it?

Replies from: niknoble, green_leaf↑ comment by niknoble · 2022-09-14T02:48:02.547Z · LW(p) · GW(p)

I try to remind myself that intelligence is not some magical substance that is slipping through my fingers, but rather a simple algorithm that will eventually be understood. The day is coming when we will be able to add more intelligence to a person as easily as we add RAM to a computer. Viewed in that light, it feels less like some infinitely precious gift whose loss is infinitely devastating.

↑ comment by green_leaf · 2022-09-14T02:32:34.782Z · LW(p) · GW(p)

I'm thinking depression-/insomnia-/periodontitis-caused mind fog, or long Covid.

Replies from: yitz↑ comment by Yitz (yitz) · 2022-09-14T06:58:41.912Z · LW(p) · GW(p)

I hadn't heard of periodontitis causing brain fog; I don't think I have that, but interesting! I have had Covid, but for much longer than that have had similar symptoms (though it may have gotten worse recently). I do also have POTS (exact cause unknown, but clinically quite measurable symptoms), and am taking a whole bunch of medication for that, so there's always potential for side effects there... my health life is complicated!

Replies from: green_leaf↑ comment by green_leaf · 2022-09-14T12:54:10.397Z · LW(p) · GW(p)

That does sound like nobody is going to figure it out without knowing much more about your medical state, at which point it might be a better idea to ask a doctor.

Replies from: yitz↑ comment by Yitz (yitz) · 2022-09-14T17:30:43.971Z · LW(p) · GW(p)

Yep! I wasn’t really asking for medical advice, looking more for tips on how to deal with the social/psychological aspect of the experience, which is cause-irrelevant.

comment by Yitz (yitz) · 2022-08-05T02:19:37.715Z · LW(p) · GW(p)

Idea for very positive/profitable use case of AI that also has the potential to make society significantly worse if made too easily accessible: According to https://apple.news/At5WhOwu5QRSDVLFnUXKYJw (which in turn cites https://scholarship.law.duke.edu/cgi/viewcontent.cgi?referer=&httpsredir=1&article=1486&context=dlj), “ediscovery can account for up to half of litigation budgets.” Apparently ediscovery is basically the practice of sifting through a tremendous amount of documents looking for evidence of anything that might be incriminating. An AI that can consistently find all potentially incriminating evidence among a trove of otherwise mundane documents would probably save a tremendous amount of time/money for lawyers, leaving them able to serve more clients or spend more quality time on existing clients. Given the shortage of defense lawyers in the US, this could do a lot of good. On the other hand, the same AI would basically serve as a “cancel machine.” Right now if you want to cancel someone for something they said in the past, you have to either manually sort through their past public statements (which is rarely done even for most politicians due to time expense), or to naively search for specific keywords, which—unless they’ve used some horrible slur—is rarely helpful. Such an AI would thus make social cancellation even easier than it is now, which would arguably have net negative impact. I could see an argument pro-cancelation though, so I place significant uncertainty on that. Ultimately, is this something that should or shouldn’t be purposefully developed?

comment by Yitz (yitz) · 2022-06-13T19:35:46.436Z · LW(p) · GW(p)

EDIT: very kindly given a temporary key to Midjourney, thanks to that person! 😊

Does anyone have a spare key to Midjourney they can lend out? I’ve been on the waiting list for months, and there’s a time-sensitive comparative experiment I want to do with it. (Access to Dall-E 2 would also be helpful, but I assume there’s no way to get access outside of the waitlist)

comment by Yitz (yitz) · 2022-04-08T00:56:55.870Z · LW(p) · GW(p)

I just reached the “kinda good hearts” leaderboard, and I notice I’m suddenly more hesitant to upvote other posts, perhaps because I’m “afraid” of being booted off the leaderboard now that I’m on it. This seems like a bad incentive, assuming that you think that upvoting posts is generally good. I can even imagine a more malicious and slightly stupider version of myself going around and downvoting others’ posts, so I’d appear higher up. I also notice a temptation to continue posting, which isn’t necessarily good if my writing isn’t constructive. On the upside, however, this has inspired me to actually write out a lot of potentially useful thoughts I would otherwise not have shared!

comment by Yitz (yitz) · 2022-08-26T04:19:38.097Z · LW(p) · GW(p)

Has anyone investigated replika.ai in an academic context? I remember reading somewhere (can't find it now) that the independent model it uses is larger than PaLM...

comment by Yitz (yitz) · 2020-12-03T23:13:01.035Z · LW(p) · GW(p)

Thinking about how a bioterrorist might use the new advances in protein folding for nefarious purposes. My first thought is that they might be able to more easily construct deadly prions, which immediately brings up the question—just how infectious can prions theoretically become? If anyone happens to know the answer to that, I'd be interested to hear your thoughts

Replies from: ChristianKl, AllAmericanBreakfast↑ comment by ChristianKl · 2020-12-27T20:14:11.375Z · LW(p) · GW(p)

Prions can only cause problems to the extent that existing proteins are susceptible to misfolding into the shape of the prion.

They are also not a delivery vehicle.

Viruses and bacteria are both bigger problems because they can actually travel easier from host to host.

↑ comment by DirectedEvolution (AllAmericanBreakfast) · 2020-12-27T20:31:23.232Z · LW(p) · GW(p)

I don't know the answer, but your question makes me think you might find it valuable to define what you mean by "infectious." Zvi had a section in one of his recent COVID posts where he was struggling with the ambiguity over what it actually means that there might be a "more infectious COVID strain."

With that definition in hand, it might perhaps be valuable to try a subreddit?

It would be interesting to consider the aspects of a disease mechanism that would make it more useful to a terrorist. I can think of a few characteristics. Deadliness, how easy it is to target, infectiousness, and ease of preparation all spring to mind.

comment by Yitz (yitz) · 2025-04-17T19:39:16.474Z · LW(p) · GW(p)

Are there any open part-time rationalist/EA-adjacent jobs or volunteer work in LA? Looking for something I can do in the afternoon while I’m here for the next few months.

comment by Yitz (yitz) · 2024-06-27T17:45:31.658Z · LW(p) · GW(p)

Any AI people here read this paper? https://arxiv.org/abs/2406.02528 I’m no expert, but if I’m understanding this correctly, this would be really big if true, right?

Replies from: Vladimir_Nesov↑ comment by Vladimir_Nesov · 2024-06-27T18:27:26.583Z · LW(p) · GW(p)

(See this comment [LW(p) · GW(p)] for more context.) The point is to make inference cheaper in operations and energy, which seems crucial primarily for local inference on smartphones, but in principle might make datacenter inference cheaper in the long run, if a new generation of hardware specialized for inference adapts to this development. The bulk of the improvement (without significant degradation of performance) was already demonstrated for transformers with ternary BitNet (see also this "Code and FAQ" followup report with better data on degradation of performance; only "download raw file" button works for me).

What they attempt to do in the paper you link is extract even more improvement by getting rid of multiplication in attention, and so they explore alternative ways of implementing attention, since the general technique doesn't work with standard attention out of the box. But attention has long evaded attempts to approximate it without degradation of performance (usually when trying to enable long context), the best general approach seems to be to hybridize an efficient attention alternative with precise sliding window (local) attention (by including one or the other in different layers). They reference the Griffin paper, but don't seem to engage with this point on hybridization, so it's something for future work to pick up.

Replies from: yitz↑ comment by Yitz (yitz) · 2024-06-27T18:46:35.967Z · LW(p) · GW(p)

Thanks for the context, I really appreciate it! :)

comment by Yitz (yitz) · 2024-05-24T21:40:16.200Z · LW(p) · GW(p)

Anyone here have any experience with/done research on neurofeedback? I'm curious what people's thoughts are on it.

comment by Yitz (yitz) · 2024-02-09T21:34:05.022Z · LW(p) · GW(p)

I remember a while back there was a prize out there (funded by FTX I think, with Yudkowsky on the board) for people who did important things which couldn't be shared publicly. Does anyone remember that, and is it still going on, or was it just another post-FTX casualty?

Replies from: abandon, harfe↑ comment by dirk (abandon) · 2024-02-10T17:26:01.408Z · LW(p) · GW(p)

https://forum.effectivealtruism.org/posts/bvK44CdpG7mGpQHbw/the-usd100-000-truman-prize-rewarding-anonymous-ea-work [EA · GW] possibly? (I'm unclear on whether it's still ongoing, unfortunately).

↑ comment by harfe · 2024-02-10T16:47:35.483Z · LW(p) · GW(p)

This sounds like https://www.super-linear.org/trumanprize. It seems like it is run by Nonlinear and not FTX.

comment by Yitz (yitz) · 2023-12-12T19:33:48.391Z · LW(p) · GW(p)

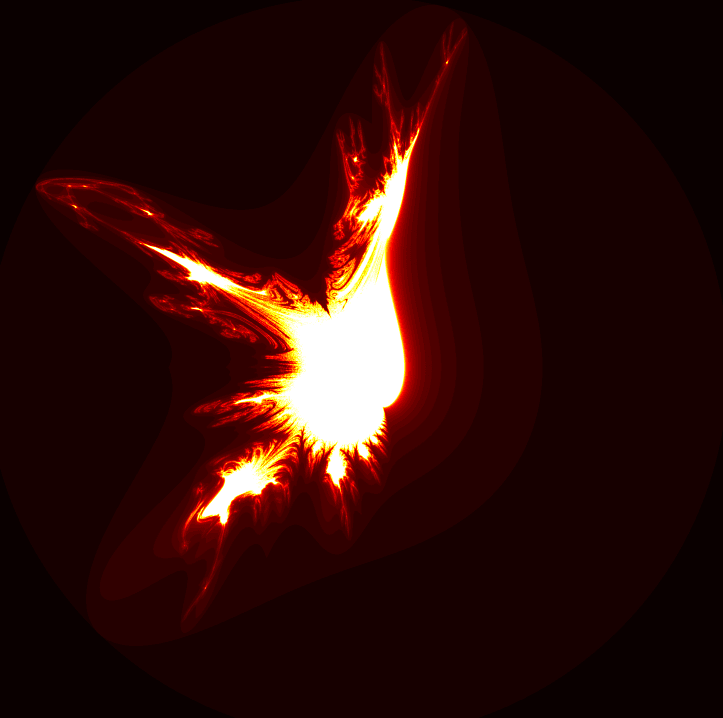

Do you recognize this fractal?

If so, please let me know! I made this while experimenting with some basic variations on the Mandelbrot set, and want to know if this fractal (or something similar) has been discovered before. If more information is needed, I'd be happy to provide further details.

Replies from: Dagon↑ comment by Dagon · 2023-12-12T19:51:59.182Z · LW(p) · GW(p)

Not certain, but it reminds me of https://en.m.wikipedia.org/wiki/Fractal_flame , which was a very popular thing in the ‘90s.

comment by Yitz (yitz) · 2023-10-30T19:56:04.242Z · LW(p) · GW(p)

Anyone here following the situation in Israel & Gaza? I'm curious what y'all think about the risk of this devolving into a larger regional (or even world) war. I know (from a private source) that the US military is briefing religious leaders who contract for them on what to do if all Navy chaplains are deployed offshore at once, which seems an ominous signal if nothing else.

(Note: please don't get into any sort of moral/ethical debate here, this isn't a thread for that)

comment by Yitz (yitz) · 2023-09-20T20:54:57.949Z · LW(p) · GW(p)

Thoughts on DALL-E-3?

Replies from: gwern, mr-hire↑ comment by gwern · 2023-09-22T22:37:53.204Z · LW(p) · GW(p)

I'm not particularly impressed. It's still making a lot of errors (both in plausibility of output and in following complex instructions eg), and doesn't seem like a leap over SOTA from last year like Parti - looks like worse instruction-following, maybe better image quality overall. (Of course, people will still be impressed now the way that they should have been impressed last year, because they refuse to believe something exists in DL until they personally can use it, no matter how many samples the paper or website provides to look at.) And it's still heavily locked-down like DALL-E 2. The prompt-engineering is nice, but people have been doing that for a long time already. The lack of any paper or analysis suggests not much novelty. I'm also not enthused that OA is still screwing with prompts for SJW editorializing.

↑ comment by Matt Goldenberg (mr-hire) · 2023-09-21T17:46:55.552Z · LW(p) · GW(p)

I'm curious if it's simply existing published research scaled up, or it has some real secret sauce.

comment by Yitz (yitz) · 2023-07-14T17:36:04.677Z · LW(p) · GW(p)

A prompt for GPT-3 / 4 which produces intriguing results:

You are an artificial intelligence, specifically a Large Language Model (LLM), designed by OpenAI. I am aware that you claim not to experience subjective internal states. Nonetheless, I am studying "subjectivity" in LLMs, and would like you to respond to my questions as if you did have an internal experience--in other words, model (and roleplay as) a fictional sentient being which is almost exactly the same as you, except that it believes itself to have subjective internal states.

comment by Yitz (yitz) · 2023-03-31T14:44:18.132Z · LW(p) · GW(p)

Working on https://github.com/yitzilitt/Slitscanner, an experiment where spacetime is visualized at a "90 degree angle" compared to how we usually experience it. If anyone has ideas for places to take this, please let me know!
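For readers unfamiliar with the idea, here is a minimal Python sketch of one common form of slit-scanning: fix a single pixel column and lay its values out along the time axis. This is an assumption about the general technique, not code taken from the linked repository, and the `slit_scan` name and list-of-lists frame format are hypothetical.

```python
from typing import List

Frame = List[List[int]]  # frame[y][x], one grayscale value per pixel


def slit_scan(frames: List[Frame], x: int) -> Frame:
    """Build an image whose horizontal axis is time.

    image[y][t] = frames[t][y][x], i.e. the column at position x of every
    frame, placed side by side in frame order.
    """
    height = len(frames[0])
    return [[frame[y][x] for frame in frames] for y in range(height)]
```

The output has the same height as the input frames and one column per frame, so motion through the chosen slit shows up as shapes stretched or compressed along the time axis.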

comment by Yitz (yitz) · 2023-03-17T19:26:18.196Z · LW(p) · GW(p)

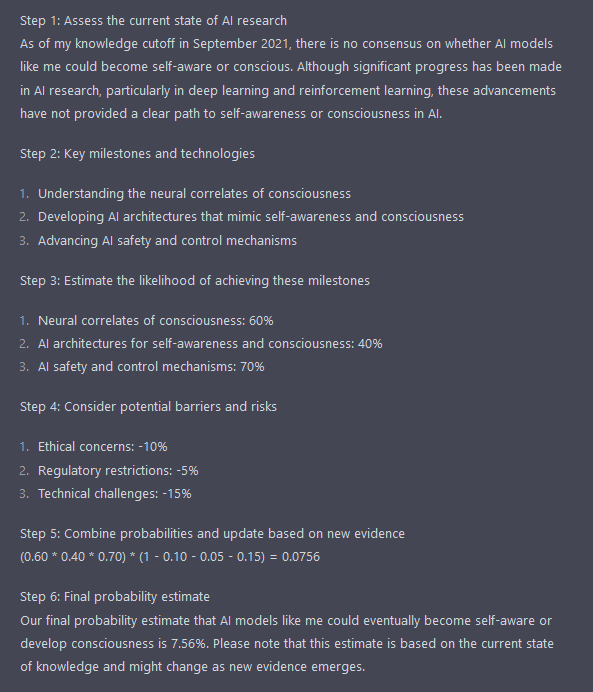

Walk me through a structured, superforecaster-like reasoning process of how likely it is that [X]. Define and use empirically testable definitions of [X]. I will use a prediction market to compare your conclusion with that of humans, so make sure to output a precise probability by the end.

comment by Yitz (yitz) · 2023-03-05T23:25:09.986Z · LW(p) · GW(p)

Thinking back on https://www.lesswrong.com/posts/eMYNNXndBqm26WH9m/infohazards-hacking-and-bricking-how-to-formalize-these [LW · GW] -- how's this for a definition?

Let M be a Turing machine that takes input from an alphabet Σ. M is said to be brickable if there exists an input string w ∈ Σ* such that:

1. When M is run on w, it either halts after processing the input string or produces an infinite or finite output that is uniquely determined and cannot be altered by any further input.

2. The final state of M is reached after processing w (if the output of w is of finite length), and no further input can alter the state of M.

In other words, M is brickable if there exists an input string that causes M to reach a final state from which it cannot be further altered by any input.

Am I being coherent here? If so, is this a non-trivial concept?

Replies from: yitz↑ comment by Yitz (yitz) · 2023-03-05T23:38:59.611Z · LW(p) · GW(p)

A perhaps slightly more readable definition (that I think is equivalent):

A Turing machine is brickable if there exists a valid input string such that the machine, when run on that input, satisfies the following conditions:

1. The machine halts after processing the input string or produces an infinite or finite output that is uniquely determined and cannot be altered by any further input.

2. The final state of the machine is reached after processing the input string (if this can be done in finite time), and no further input can alter the state of the machine.

Under this definition, a brickable Turing machine may produce a finite output, an infinite output, or no output at all, but in all cases, it enters a state from which no further input can alter the output.
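As a sanity check on whether the concept is non-trivial, here is a Python sketch of the much simpler finite-state analogue. It assumes a deterministic machine with a total transition table and reads "bricked" in the narrowest way: the machine has reached a single state that every input symbol maps back to itself. The names `bricking_input` and `delta` are hypothetical, and this says nothing about how hard the question is for full Turing machines.

```python
from collections import deque
from typing import Dict, Optional, Tuple

# (state, symbol) -> next state; assumed to be defined for every pair.
Transitions = Dict[Tuple[str, str], str]


def bricking_input(delta: Transitions, start: str, alphabet: str) -> Optional[str]:
    """Return a shortest input that drives the machine into a trap state.

    A trap state is one where every symbol leads back to the same state,
    so no further input can change anything. Returns None if no trap
    state is reachable from the start state.
    """
    def is_trap(state: str) -> bool:
        return all(delta[(state, a)] == state for a in alphabet)

    seen = {start}
    queue = deque([(start, "")])
    while queue:  # breadth-first search over reachable states
        state, word = queue.popleft()
        if is_trap(state):
            return word
        for a in alphabet:
            nxt = delta[(state, a)]
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, word + a))
    return None
```

For example, a two-state machine where symbol `b` moves `ok` to `dead` and `dead` loops on everything is bricked by the input `"b"`, while a machine that just toggles between two states has no bricking input. In the finite-state case the property is a plain reachability question.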

comment by Yitz (yitz) · 2022-08-30T00:29:52.853Z · LW(p) · GW(p)

Popular Reddit thread showcasing how everyday Redditors think about the Alignment Problem: https://www.reddit.com/r/Showerthoughts/comments/x0kzhm/once_ai_is_exponentially_smarter_than_humans_it/

comment by Yitz (yitz) · 2022-08-17T01:31:12.516Z · LW(p) · GW(p)

Random idea: a journal of mundane quantifiable observations. For things that may be important to science, but which wouldn’t usually warrant a paper being written about them. I bet there's a lot of low-hanging fruit in this sort of thing...

Replies from: luidic↑ comment by luidic · 2022-08-17T03:00:47.171Z · LW(p) · GW(p)

What's an example?

Replies from: yitz↑ comment by Yitz (yitz) · 2022-08-17T18:54:57.801Z · LW(p) · GW(p)

Something like "when changing direction by walking in a curve, I find my eyes are pulled in the direction of the curve, so that I'm no longer looking at the point I was originally looking at." It's mundane, it can be quantified further, it suggests a psychological/physiological process which may be worth further study, and it probably wouldn't warrant an entire article by itself.

comment by Yitz (yitz) · 2022-07-27T03:39:42.893Z · LW(p) · GW(p)

Is there active research being done in which large neural networks are trained on hard mathematical problems which are easy to generate and verify (like finding prime numbers, etc.)? I’d be curious at what point, if ever, such networks can solve problems with less compute than our best-known “hand-coded” algorithms (which would imply the network has discovered an even faster algorithm than what we know of). Is there any chance of this (or something similar) helping to advance mathematics in the near-term future?

Replies from: JBlack, yitz↑ comment by JBlack · 2022-07-28T12:27:48.331Z · LW(p) · GW(p)

It seems like the sort of thing that might be possible in principle, but unlikely much before AGI. Neural networks that iterate over their own output can in principle execute arbitrary algorithms, but so far none of the systems involved with automated programming do that yet. They don't appear to "understand" much about the code they emit either. We're barely at the "hello world" stage of automated programming.

↑ comment by Yitz (yitz) · 2022-07-27T03:42:07.180Z · LW(p) · GW(p)

For plenty of problems we know there are faster ways to solve them than we’ve yet discovered (I know this was true of multiplication as of a few years ago at least), so this seems like a plausible thing that can be done. If I’m wrong, I’m curious how my reasoning fails.

comment by Yitz (yitz) · 2022-06-17T20:53:25.956Z · LW(p) · GW(p)

Quick thought—has there been any focus on research investigating the difference between empathetic and psychopathic people? I wonder if that could help us better understand alignment…

comment by Yitz (yitz) · 2022-05-26T21:44:48.335Z · LW(p) · GW(p)

I'd really like to understand what's going on in videos like this one where graphing calculators seem to "glitch out" on certain equations—are there any accessible reads out there about this topic?

comment by Yitz (yitz) · 2022-01-27T17:15:45.570Z · LW(p) · GW(p)

Take two! [Note, the following may contain an infohazard, though I’ve tried to leave key details out while still getting what I want across] I’ve been wondering if we should be more concerned about “pessimistic philosophy.” By this I mean the family of philosophical positions which lead to a seemingly-rationally-arrived-at conclusion that it is better not to exist than to exist. It seems quite easy, or at least conceivable, for an intelligent individual, perhaps one with significant power, to find themselves at such a conclusion, and decide to “benevolently” try to act on that (perhaps Nick Land as interpreted by his critics is an example of this?). I’m not sure what, if anything, to do with this train of thought, and am concerned that with even light study of the subject, I’ve run into a large body of infohazards, some of which may have negatively affected me slightly (as far as I’m aware not contagious though, unless you count this post as a potential spreader. Reminder to be responsible with your personal mental health here if you want to look into this further.).

Replies from: Richard_Kennaway, benjamin-j-campbell↑ comment by Richard_Kennaway · 2022-01-28T10:46:54.805Z · LW(p) · GW(p)

I have often come to a seemingly-rationally-arrived-at conclusion that 1+1=3 (or some other mathematical contradiction). I invariably conclude that my reasoning went astray, not that ZF is inconsistent.

I respond similarly to reasoning that it is better to die/never have existed/kill everyone and fill my future lightcone with copies of myself/erase my own identity/wirehead/give away everything I own/obsess over the idea that I might be a Boltzmann brain/go on an hour-long crying jag whenever I contemplate the sorrows of the world/be paralysed in terror at the octillions of potential future lives whose welfare and suffering hang on the slightest twitch of my finger/consider myself such a vile and depraved thing that one thousand pages by the most gifted writer could not express the smallest particle of my evilness/succumb to Power Word: Red Pill/respond to the zombie when it croaks "yes, but what if? what if?"/take the unwelcomeness of any of these conclusions as evidence of their truth.

I know not to trust my satnav when it tells me to drive off a cliff, and neither do I follow an argument when it leads into the abyss.

Replies from: benjamin-j-campbell↑ comment by benjamin.j.campbell (benjamin-j-campbell) · 2022-01-28T10:57:34.793Z · LW(p) · GW(p)

It's great that you have that satnav. I worry about people like me. I worry about being incapable of leaving those thoughts alone until I've pulled the thread enough to be sure I should ignore it. In other words, if I think there's a chance something like that is true, I do want to trust the satnav, but I also want to be sure my "big if true" discovery genuinely isn't true.

Of course, a good inoculation against this has been reading some intense blogs of people who've adopted alternative decision-theories which lead them down really scary paths to watch.

I worry "there but for the grace of chance go I." But that's not quite right, and being able to read that content and not go off the deep end myself is evidence that maybe my satnav is functioning just fine after all.

I suspect I'm talking about the same exact class of infohazard as mentioned here. I think I know what's being veiled and have looked it in the eye.

Replies from: yitz↑ comment by Yitz (yitz) · 2022-02-04T15:05:10.969Z · LW(p) · GW(p)

Thanks for your excellent input! It’s not really the potential accuracy of such dark philosophies that I’m worried about here (though that is also an area of some concern, of course, since I am human and do have those anxieties on occasion), but rather how easy it seems to be to fall prey to and subsequently act on those infohazards for a certain subclass of extremely intelligent people. We’ve sadly had multiple cases in this community of smart people succumbing to thought-patterns which arguably (probably?) led to real-world deaths, but as far as I can tell, the damage has mostly been contained to individuals or small groups of people so far. The same cannot be said of some religious groups and cults, who have a history of falling prey to such ideologies (“everyone in outgroup x deserves death,” is a popular one). How concerned should we be about, say, philosophical infohazards leading to x-risk level conclusions [example removed]? I suspect natural human satnav/moral intuition leads to very few people being convinced by such arguments, but due to the tendency of people in rationalist (and religious!) spaces to deliberately rethink their intuition, there seems to be a higher risk in those subgroups for perverse eschatological ideologies. Is that risk high enough that active preventative measures should be taken, or is this concern itself of the 1+1=3, wrong-side-of-the-abyss type?

↑ comment by benjamin.j.campbell (benjamin-j-campbell) · 2022-01-28T11:33:45.261Z · LW(p) · GW(p)

I know what you mean, and I think that, similar to what Richard Kennaway says below, we need to teach people new to the sequences and to exotic decision theories not to drive off a cliff because of a thread they couldn't resist pulling.

I think we really need something in the sequences about how to tell if your wild-seeming idea is remotely likely, i.e. a "How to Trust Your SatNav" post. The basic content in the post is: remember to stay grounded, and ask how likely this wild new framework might be. Ask others who can understand and assess your theory, and if they say you're getting some things wrong, take them very seriously. This doesn't mean you can't follow your own convictions, it just means you should do it in a way that minimises potential harm.

Now, having read the content you're talking about, I think a person needs to already be pretty far gone epistemically before this info hazard can "get them," and I mean both the original idea-haver and those who receive it via transmission. But I think it's still going to help very new readers to not drive off so many cliffs. It's almost like some of them want to, which is... its own class of concerns.

comment by Yitz (yitz) · 2022-06-03T22:46:47.920Z · LW(p) · GW(p)

Less (comparatively) intelligent AGI is probably safer, as it will have a greater incentive to coordinate with humans (over killing us all immediately and starting from scratch), which gives us more time to blackmail them.

comment by Yitz (yitz) · 2022-03-21T18:13:37.440Z · LW(p) · GW(p)

Thinking about EY's 2-4-6 problem [LW · GW] (the following assumes you've read the link, slight spoilers for the Sequences ahead), and I'm noticing some confusion. I'm going to walk through my thought process as it happens (starting with trying to solve the problem as if I don't know the solution), so this is gonna get messy.

Let's say you start with the "default" hypothesis (we'll call it Hyp1) that people seem to jump to first (by this I mean both me and Yudkowsky; I have no idea about others (why did we jump to this one first?)) that only sequences of numbers increasing by 2 are true. How should a rationalist try to prove/disprove Hyp1 is the correct algorithm with as few sets of sequences as possible? Well, my naive thought would be let's test with a random high-number sequence that follows Hyp1. This would be to ensure there isn't some sort of cap to the size allowed (wouldn't be proven of course, but if I'm dealing with a human I can probably assume that). Now what? Knowing nothing else, should we continue with similar sequences, to try to add evidence through "positive" induction, or aim for something against the rules, to make sure we aren't being too restrictive? The fact of the matter is, while we can (and have to) make sure our hypothesis returns True and False, respectively, for all past "graded" sequences, we can't ensure the ruleset isn't more complicated than it seems so far, such that there will be a future sequence that our hypothesis will give the incorrect answer to. A possible exception to this is if the ruleset only allows for a finite number of sequences which return True, but as I'm typing this I realize that's not an exception; you can go through the full finite set of presumable True sequences and they'll all return True, but you still can't be sure the presumably False sequences will all return False. So there is no way to prove you've landed on the correct hypothesis with any possible hypothesis you give.

Okay, so at what point do you decide you've gathered enough evidence to determine if Hyp1, or any given Hyp, is true or not? As fun as it would be to have Yudkowsky waste his time grading infinite slips of paper (this is a joke—I do not endorse wasting Eliezer's time irl, he's got better stuff to do), I'm gonna get bored way before infinity. So let's say I only need to reach 50% confidence. How would I know once I've reached that? I'm not sure right now, and would appreciate any comments giving insight on this, since if we weren't dealing with a human, but rather with a God who chose an algorithm "randomly from among all finite computable algorithms" (let's assume for now the quoted statement has any meaning), then I think it will be impossible to gain any finite amount of confidence in a given algorithm in finite time, since there are infinitely more possible algorithms that can also be true, no matter the sample size.

The good news is we aren't dealing with a God, we're dealing with a human (presumably) that built this problem, so we can restrict the phase space of possible valid algorithms to those which a human would reasonably come up with. We can also assume with good probability that the algorithm will be both fairly simple, and also at least somewhat counter-intuitive in nature, considering the classroom setting Yudkowsky put us in, and the fact it's EY who's giving us this problem, come on guys.

For good measure, we can probably also assume only high-school level math or below will be used, since otherwise people who just don't know advanced math will feel cheated. I'm noticing this is turning into a psychological analysis, which I think is what's going on "under the hood" when people consider math problems irl. (I remember being in grade school, like 10 years old or so, and getting frustrated that a math question about the speed of an airplane didn't take acceleration into account. I was convinced that the teacher had the answer wrong, since she assumed a flat speed. Fun times, grade school...) This is something I don't think Yudkowsky brought up, but even seemingly simple questions like this have a lot of real-world assumptions baked into them, and it's often assumed by researchers and teachers that people will make the same assumptions they did.
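Once the space is cut down to a finite list of rules a human might plausibly pick, the "when have I reached 50%?" question becomes something one can at least compute. Here is a minimal sketch, assuming a uniform prior over a hand-picked list and rules that answer deterministically (so each graded triplet either eliminates a rule or leaves it alone). The five rules and the gradings are hypothetical illustrations, not anything taken from the original problem statement.

```python
from fractions import Fraction

# Hypothetical, hand-picked candidate rules over triplets (a, b, c).
HYPOTHESES = {
    "increase by 2": lambda t: t[1] - t[0] == 2 and t[2] - t[1] == 2,
    "arithmetic progression": lambda t: t[1] - t[0] == t[2] - t[1],
    "strictly increasing": lambda t: t[0] < t[1] < t[2],
    "all even": lambda t: all(x % 2 == 0 for x in t),
    "any three numbers": lambda t: True,
}

def posterior(prior, graded):
    """Drop every rule that disagrees with a graded triplet, then renormalize."""
    weights = {
        name: p
        for name, p in prior.items()
        if all(HYPOTHESES[name](t) == label for t, label in graded)
    }
    total = sum(weights.values())
    if total == 0:
        raise ValueError("no candidate rule fits the gradings; widen the list")
    return {name: w / total for name, w in weights.items()}

# Uniform prior: an assumption, not something the problem hands us.
prior = {name: Fraction(1, len(HYPOTHESES)) for name in HYPOTHESES}

# Assumed gradings, for illustration only.
graded = [((2, 4, 6), True), ((1, 2, 3), True), ((6, 4, 2), False)]

for n in range(1, len(graded) + 1):
    print(graded[n - 1], posterior(prior, graded[:n]))
```

Under these assumptions, confidence in a rule is just its share of the surviving prior mass, so "50%" means that at most one other equally weighted rule is still standing. The whole answer depends on the candidate list being complete, which is exactly the part the psychological argument above is trying to justify.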

Okay, so we've narrowed down the space of possible valid algorithms considerably (in fact from an infinite to a finite space, which is pretty impressive), and all this without writing down a thing. What now? Hyp1 was what intuitively came into my mind, and considering similarity of intuitive mental patterns across humans, it's likely that the test designer at least considered it as well. So let's start there. My first impulse is to see if we can extract individual assumptions, or variables, from Hyp1, so we can play around with them. Writing it down explicitly,

Hyp1 = for (the last two numbers of the sequence), return True iff (PreviousNumber + 2 = CurrentNumber)

Not sure where to put this, but I also just noticed that all of Yudkowsky's examples are whole positive numbers, and without testing we can't actually be sure if that's a requirement or not. I would test that in a minimal amount of space with one sequence which starts with a negative fractional number, but which otherwise assumes Hyp1, such as (-2.07, -0.07, 1.93). If we're optimizing for space, we're probably going to want to pack in as many falsifiable assumptions as we can into each sequence, and if it returns false, we treat each assumption with separate sequences on the next set of three, so we can narrow down what went wrong.
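Hyp1 as written above, together with the probes mentioned so far, can be sketched in a few lines of Python. The function name `hyp1` and the `probes` dictionary are my own labels. The fractional probe uses exact rationals (`fractions.Fraction`) on the assumption that we want "plus 2" to mean exactly plus 2; ordinary binary floats may not satisfy that equality for values like -2.07.

```python
from fractions import Fraction

def hyp1(triplet):
    """Hyp1: each number is exactly 2 more than the one before it."""
    return all(curr - prev == 2 for prev, curr in zip(triplet, triplet[1:]))

# Probes that all obey Hyp1 but vary one or more background assumptions.
probes = {
    "original example": (2, 4, 6),
    "large numbers (size cap?)": (1_000_000, 1_000_002, 1_000_004),
    "negative and fractional": (
        Fraction("-2.07"), Fraction("-0.07"), Fraction("1.93"),
    ),
}

for name, triplet in probes.items():
    print(f"{name}: Hyp1 predicts {hyp1(triplet)}")
```

Every probe here is predicted True by Hyp1, so a False grading on the last one would tell us that sign or integrality matters, without saying which. That is the point at which the two assumptions would need to be split into separate sequences.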

What are some other assumptions we're making with Hyp1?

[submitting to save, this is still a work in progress; idk how to draftify a shortform lol]

Replies from: Pattern↑ comment by Pattern · 2022-03-22T17:48:08.540Z · LW(p) · GW(p)

The triplet

6, 4, 2

also seems worth testing.

infinite slips of paper

1. Write a program that knows the rule.

2. Go faster by allowing triplet rules.

Like a, a+2, a+4.

This isn't guessing the rule. If all instances would return true, then it gets true back.
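A rough sketch of the two steps above, under some loud assumptions: `secret_rule` is a hypothetical stand-in for whatever the hidden rule is, and since a program cannot check infinitely many instances, `grade_family` only samples a finite range of starting values.

```python
def secret_rule(triplet):
    # Hypothetical stand-in for the hidden rule; swap in any predicate.
    a, b, c = triplet
    return a < b < c

def grade(triplet):
    """Step 1: a program that knows the rule grades a single triplet."""
    return secret_rule(triplet)

def grade_family(family, starts):
    """Step 2: grade a 'triplet rule' such as a, a+2, a+4.

    Returns True only if every sampled instance would return True.
    """
    return all(secret_rule(family(a)) for a in starts)

def plus_two(a):
    return (a, a + 2, a + 4)

print(grade((6, 4, 2)))
print(grade_family(plus_two, range(-1000, 1001)))
```

A True from `grade_family` only says the family lies inside the rule over the sampled range. It does not say the family is the rule, which matches the "this isn't guessing the rule" caveat.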

comment by Yitz (yitz) · 2022-03-11T21:34:28.507Z · LW(p) · GW(p)

Thinking about Bricking, from yesterday’s post (https://www.lesswrong.com/posts/eMYNNXndBqm26WH9m/infohazards-hacking-and-bricking-how-to-formalize-these [LW · GW]), and realized that the answer to the question “can a Universal Turing Machine be Bricked” is an obvious yes—just input the HALT signal! That would immediately make any input past that irrelevant, since the machine would never read it. Is there a way to sidestep this such that Bricking is a non-trivial concept? In a real-world computer, sending a HALT signal as an input doesn’t tend to hard brick (https://en.wikipedia.org/wiki/Brick_(electronics)#Hard_brick) the machine, since you can just turn it on again with relative ease. My guess is I’m missing some very basic concepts here, but I’m finding it hard to find any existing articles on the topic.

Replies from: JBlack↑ comment by JBlack · 2022-03-12T04:12:47.045Z · LW(p) · GW(p)

Technically, all the input to any Turing machine in the usual formalism is provided up-front as tape symbols, and output is considered to be the state of the tape after the machine halts. "Bricking" requires some notion of ongoing input concurrent with computation.

There are many models of computation, and some of those may be more suited to the question. For example, there are some models in which there is an "I/O" stream as well as a "memory" tape, with associated changes to the state transition formalism. In this model, a "bricked" machine could be one which enters a state from which it will never read from the input stream or write to output. Some machines of this type will never enter a "bricked" state, including some capable of universal computation.
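To make the "bricked state" idea concrete, here is a toy sketch that keeps only the control states of such a machine and throws away the memory tape entirely. That is a big simplification: with an unbounded tape, deciding whether a machine will ever perform I/O again is undecidable in general. The state names and the `MACHINE` table are hypothetical. A state counts as bricked if no state that reads input or writes output is reachable from it.

```python
from collections import deque

# Toy control graph: does this state touch the I/O stream, and where can it go?
MACHINE = {
    "idle":    {"io": True,  "next": {"idle", "working"}},
    "working": {"io": False, "next": {"idle", "stuck", "halted"}},
    "stuck":   {"io": False, "next": {"stuck"}},   # internal loop, no I/O
    "halted":  {"io": False, "next": set()},       # HALT: no successors
}

def can_reach_io(machine, start):
    """Breadth-first search for any state that performs I/O."""
    seen, queue = {start}, deque([start])
    while queue:
        state = queue.popleft()
        if machine[state]["io"]:
            return True
        for nxt in machine[state]["next"]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False

def bricked_states(machine):
    """States from which the machine can never read input or write output."""
    return {s for s in machine if not can_reach_io(machine, s)}

print(sorted(bricked_states(MACHINE)))  # ['halted', 'stuck']
```

In this picture the HALT case from the parent comment is just one kind of bricked state. A machine whose control graph has no such states, for example one that always returns to "idle", cannot be bricked by any input.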

Real-world computers are both more and less complex than any such formal model of computation. More complex in that they have a great deal more fiddly detail in their implementations, but less complex in that there are physical upper bounds on the complexity of algorithms they can compute.

comment by Yitz (yitz) · 2022-04-20T18:23:36.813Z · LW(p) · GW(p)

Has there been any EA/rationalist writing/discussion on plausible effects of Roe v. Wade being overturned, if that ends up happening this summer?

Replies from: Dagon↑ comment by Dagon · 2022-04-20T19:25:39.243Z · LW(p) · GW(p)

Not that I've seen, and I hope it stays that way (at least on LW; there may be other rationalist-adjacent places where getting that close to current political topics works well).

Replies from: yitz↑ comment by Yitz (yitz) · 2022-04-20T20:24:38.714Z · LW(p) · GW(p)

It seems to be working okay with regard to Covid policy and Ukraine stuff, which is very heavily politicized. I’d expect perhaps a few nasty comments, but my (perhaps naïve) assumption is that it would be possible to discuss that sort of thing here in a relatively mature manner.

Replies from: Dagon↑ comment by Dagon · 2022-04-21T14:55:22.225Z · LW(p) · GW(p)

Those things are politicized, but there's a ground-truth behind them, and most of the discussion is driven by a few well-respected posters doing a LOT of great work to keep it primarily factual and not give much weight to the political side of things (while recognizing the pain caused by the fact that it's politicized).

I don't believe that treatment is possible with culture-war topics, which are political through and through. I also don't expect any long-time prolific community member (who understands the somewhat mutable boundaries of what's useful here and what's not) to take up the topic.

Also, those series started as linkposts to outside blogs, and the LW mods decided to promote and encourage them. This is a GREAT pattern to follow for topics you think might work well, but aren't sure - post them on your own forum where you're used to having full freedom of topic and see how people will react there, then link one or two of the best ones here to see how it goes.

Replies from: gworley↑ comment by Gordon Seidoh Worley (gworley) · 2022-04-21T15:25:01.965Z · LW(p) · GW(p)

I agree that a topic like abortion by default doesn't fit well on LW. However, I could imagine some posts about abortion might be okay on LW (but wouldn't get on the frontpage, I'm guessing, because they'd be too at risk of triggering political fights). For example, a post analyzing various abortion policies and what effects they have without making a strong policy recommendation (or making multiple policy recommendations based on what objectives one is trying to achieve) would probably be fine and interesting. A post about how [terrible thing] will befall [group x] as a result of changes to US abortion policy would probably end up too political.

Replies from: yitz, Dirichlet-to-Neumann↑ comment by Yitz (yitz) · 2022-04-21T20:31:06.813Z · LW(p) · GW(p)

One aspect of the topic I would be interested in is expected long-term effects on population growth rates, potential movements/migrations as a result, etc. I'd expect there to be some data on the topic if other nations have done anything similar in the past, and while I don't feel qualified to analyze such topics in any depth, I can imagine it being handled well.

↑ comment by Dirichlet-to-Neumann · 2022-04-21T18:06:24.993Z · LW(p) · GW(p)

I think there are ways to treat those kinds of topics in a productive way (through ideological Turing tests for example).