All AGI Safety questions welcome (especially basic ones) [July 2023]

post by smallsilo (monstrologies) · 2023-07-20T20:20:45.675Z · LW · GW · 40 comments

This is a link post for https://forum.effectivealtruism.org/posts/vGfJnwq6X7hwhG3wy/all-agi-safety-questions-welcome-especially-basic-ones-july

tl;dr: Ask questions about AGI Safety as comments on this post, including ones you might otherwise worry seem dumb!

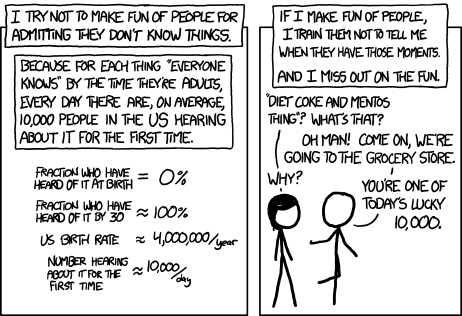

Asking beginner-level questions can be intimidating, but everyone starts out not knowing anything. If we want more people in the world who understand AGI safety, we need a place where it's accepted and encouraged to ask about the basics.

We're putting up monthly FAQ posts as a safe space for people to ask all the possibly-dumb questions that may have been bothering them about the whole AGI Safety discussion, but which until now they didn't feel able to ask.

It's okay to ask uninformed questions, and not worry about having done a careful search before asking.

AISafety.info - Interactive FAQ

Additionally, this will serve as a way to spread the project Rob Miles' team[1] has been working on: Stampy and his professional-looking face, aisafety.info. This will provide a single point of access into AI Safety, in the form of a comprehensive interactive FAQ with lots of links to the ecosystem. We'll be using questions and answers from this thread for Stampy (under these copyright rules), so please only post if you're okay with that!

You can help by adding questions (type your question and click "I'm asking something else") or by editing questions and answers. We welcome feedback and questions on the UI/UX, policies, etc. around Stampy, as well as pull requests to his codebase and volunteer developers to help with the conversational agent and front end that we're building.

We've got more to do before he's ready for prime time, but we think Stampy can become an excellent resource for everyone: from skeptical newcomers, through people who want to learn more, right up to people who are convinced and want to know how they can best help with their skillsets.

Guidelines for Questioners:

- No previous knowledge of AGI safety is required. If you want to watch a few of the Rob Miles videos, read the WaitButWhy posts, or read The Most Important Century summary, that's great, but you can ask a question even if you haven't.

- Similarly, you do not need to try to find the answer yourself before asking a question (but if you want to test Stampy's in-browser TensorFlow semantic search, that might get you an answer quicker! Let us know how it goes).

- Also feel free to ask questions that you're pretty sure you know the answer to, but where you'd like to hear how others would answer.

- One question per comment if possible (though if you have a set of closely related questions that you want to ask all together that's ok).

- If you have your own response to your own question, put that response as a reply to your original question rather than including it in the question itself.

- Remember, if something is confusing to you, then it's probably confusing to other people as well. If you ask a question and someone gives a good response, then you are likely doing lots of other people a favor!

- In case you're not comfortable posting a question under your own name, you can use this form to send a question anonymously and I'll post it as a comment.

Guidelines for Answerers:

- Linking to the relevant answer on Stampy is a great way to help people with minimal effort! Improving that answer means that everyone going forward will have a better experience!

- This is a safe space for people to ask stupid questions, so be kind!

- If this post works as intended then it will produce many answers for Stampy's FAQ. It may be worth keeping this in mind as you write your answer. For example, in some cases it might be worth giving a slightly longer / more expansive / more detailed explanation rather than just giving a short response to the specific question asked, in order to address other similar-but-not-precisely-the-same questions that other people might have.

Finally: Please think very carefully before downvoting any questions. Remember, this is the place to ask stupid questions!

40 comments

Comments sorted by top scores.

comment by Domenic · 2023-07-21T00:51:02.964Z · LW(p) · GW(p)

I continue to be intrigued about the ways modern powerful AIs (LLMs) differ from the Bostrom/Yudkowsky theorycrafted AIs (generally, agents with objective functions, and sometimes specifically approximations of AIXI). One area I'd like to ask about is corrigibility.

From what I understand, various impossibility results on corrigibility have been proven. And yet, GPT-4 is quite corrigible. (At the very least, in the sense that if you go unplug it, it won't stop you.) Has anyone analyzed which preconditions of the impossibility results have been violated by GPT-N? Do doomers have some prediction for how GPT-N for N >= 5 will suddenly start meeting those preconditions?

Replies from: daniel-kokotajlo

↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2023-07-21T06:16:42.003Z · LW(p) · GW(p)

Good questions!

The ways in which modern powerful AIs (and laptops, for that matter) differ from the theorycrafted AIs are related to the ways in which they are not yet AGI. To be AGI, they will become more like the theorycrafted AIs -- e.g. they'll be continuously online learning in some way or other, rather than a frozen model with some training cutoff date; they'll be running a constant OODA loop so they can act autonomously for long periods in the real world, rather than simply running for a few seconds in response to a prompt and then stopping; and they'll be creatively problem-solving and relentlessly pursuing various goals, and deciding how to prioritize their attention and efforts and manage their resources in pursuit of said goals. They won't necessarily have utility functions that they maximize, but utility functions are a decent first-pass way of modelling them--after all, utility functions were designed to help us talk about agents who intelligently trade off between different resources and goals.

Moreover, and relatedly, there's an interesting and puzzling area of uncertainty/confusion in our mental models of how all this goes, about "Reflective Stability," e.g. what happens as a very intelligent/capable/etc. agentic system is building successors who build successors who build successors... etc. on until superintelligence. Does giving the initial system values X ensure that the final system will have values X? Not necessarily! However, using the formalism of utility functions, we are able to make decently convincing arguments that this self-improvement process will tend to preserve utility functions. Because if it foreseeably changed utility function from X to Y, then probably it would be calculated by the X-maximizing agent to harm, rather than help, its utility, and so the change would not be made.
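A toy sketch of the goal-preservation argument, under strong simplifying assumptions: there are only three possible outcomes, a successor simply brings about whichever outcome its own utility function ranks highest, and the current agent can predict this perfectly. The names `OUTCOMES`, `utility_x`, and `utility_y` and all the numbers are hypothetical, chosen only for illustration.

```python
# Toy model: an X-maximizer decides whether to build a successor with
# utility function X or Y. All names and numbers are hypothetical.

OUTCOMES = ["paperclips", "staples", "mixed"]

# The current agent's values (X) and a candidate replacement (Y).
utility_x = {"paperclips": 10.0, "staples": 1.0, "mixed": 5.0}
utility_y = {"paperclips": 1.0, "staples": 10.0, "mixed": 5.0}


def successor_choice(successor_utility):
    """Outcome a successor would bring about: the one it ranks highest."""
    return max(OUTCOMES, key=lambda outcome: successor_utility[outcome])


def value_to_current_agent(successor_utility, current_utility):
    """Score a proposed successor by the *current* agent's utility function."""
    return current_utility[successor_choice(successor_utility)]


keep_x = value_to_current_agent(utility_x, utility_x)    # successor keeps X
switch_y = value_to_current_agent(utility_y, utility_x)  # successor gets Y

# The X-maximizer builds whichever successor scores higher by X.
chosen = "X" if keep_x >= switch_y else "Y"
print(keep_x, switch_y, chosen)
```

The sketch only covers the foreseeable case, where the agent can predict what each successor would do. It says nothing about changes the agent fails to predict.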

With deontological constraints this is not so clear. To be clear, the above isn't exactly a proof IMO, just a plausible argument. But we don't even have such a plausible argument for deontological constraints. If you have an agent that maximizes X except that it makes sure never to do A, B, or C, what's our argument that the successor to the successor to the successor it builds will also never do A, B, or C? Answer: We don't have one; by default it'll build a successor that does its 'dirty work' for it. (The rule was to never do A, B, or C, not to never do something that later results in someone else doing A, B, or C...)

Unless it disvalues doing that, or has a deontological constraint against doing that. Which it might. But how do we formally specify that? What if there are loopholes in these meta-values / meta-constraints? And how do we make sure our AIs have that, even as they grow radically smarter and go through this process of repeatedly building successors? Consequentialism / maximization treats deontological constraints like the internet treats censorship; it treats them like damage and routes around them. If this is correct, then if we try to train corrigibility into our systems, probably it'll work OK until suddenly it fails catastrophically, sometime during the takeoff when everything is happening super fast and it's all incomprehensible to us because the systems are so smart.

I don't know if the story I just told you is representative of what other people with >50% p(doom) think. It's what I think though, & I'd be very interested to hear comments and pushback. I'm pretty confused about it all.

↑ comment by solvalou · 2023-07-22T12:50:05.583Z · LW(p) · GW(p)

Thanks for mentioning reflective stability, it's exactly what I've been wondering about recently and I didn't know the term.

> However, using the formalism of utility functions, we are able to make decently convincing arguments that this self-improvement process will tend to preserve utility functions.

Can you point me to the canonical proofs/arguments for values being reflectively stable throughout self-improvement/reproduction towards higher intelligence? On the one hand, it seems implausible to me on the intuition that it's incredibly difficult to predict the behaviour of a complex system more intelligent than you from static analysis. On the other hand, if it is true, then it would seem to hold just as much for humans themselves as the first link in the chain.

> Because if it foreseeably changed utility function from X to Y, then probably it would be calculated by the X-maximizing agent to harm, rather than help, its utility, and so the change would not be made.

Specifically, the assumption that this is foreseeable at all seems to deeply contradict the notion of intelligence itself.

Replies from: daniel-kokotajlo

↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2023-07-22T15:32:44.505Z · LW(p) · GW(p)

Like I said, there is no proof. Back in ancient times the arguments were made here:

http://selfawaresystems.com/2007/11/30/paper-on-the-basic-ai-drives/

and here Basic AI drives - LessWrong

For people trying to reason more rigorously and actually prove stuff, we mostly have problems and negative results:

Vingean Reflection: Reliable Reasoning for Self-Improving Agents - LessWrong

Vingean Reflection: Open Problems - LessWrong

↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2023-07-21T15:06:25.620Z · LW(p) · GW(p)

Having slept on it: I think "Consequentialism/maximization treats deontological constraints as damage and routes around them" is maybe missing the big picture; the big picture is that optimization treats deontological constraints as damage and routes around them. (This comes up in law, in human minds, and in AI thought experiments... one sign that it is happening in humans is when you hear them say things like "Aha! If we do X, it wouldn't be illegal, right?" or "This is a grey area.") The solution is to have some process by which the deontological constraints become more sophisticated over time, improving to match the optimizations happening elsewhere in the agent. But getting this right is tricky. If the constraints strengthen too fast or in the wrong ways, it hurts your competitiveness too much. If the constraints strengthen too slowly or in the wrong ways, they eventually become toothless speed-bumps on the way to achieving the other optimization targets.

comment by Tiago de Vassal (tiago-de-vassal) · 2023-07-20T22:51:46.954Z · LW(p) · GW(p)

For any piece of information (expert statements, benchmarks, surveys, etc.) most good-faith people agree on whether it makes doom more or less likely.

But we don't agree on how much they should move our estimates, and there's no good way of discussing that.

How to do better?

Replies from: rhollerith_dot_com, AnthonyC

↑ comment by RHollerith (rhollerith_dot_com) · 2023-07-21T00:06:30.430Z · LW(p) · GW(p)

To do better, you need to refine your causal model of the doom.

Basically, smart AI researchers with stars in their eyes organized into teams and into communities that communicate constantly are the process that will cause the doom.

Refine that sentence into many paragraphs, then you can start to tell which interventions decrease doom a lot and which ones decrease just a little.

E.g., convincing most of the young people with the most talent for AI research that AI research is evil, similar to how most of the most talented programmers starting their careers in the 1990s were convinced that working for Microsoft is evil? That would postpone our doom a lot--years probably.

Slowing down the rate of improvement of GPUs? That helps less, but still buys us significant time in expectation, as far as I can tell. Still, there is a decent chance that AI researchers can create an AI able to kill us with just the GPU designs currently available on the market, so it is not as potent an intervention as denying the doom-causing process the necessary talent to create new AI designs and new AI insights.

You can try to refine your causal model of how young people with talent for AI research decide what career to follow.

Buying humanity time by slowing down AI research is not sufficient: some "pivotal act" will have to happen that removes the danger of AI research permanently. The creation of an aligned super-intelligent AI is the prospect that gets the most ink around here, but there might be other paths that lead to a successful exit of the crisis period. You might try to refine your model of what those paths might look like.

↑ comment by AnthonyC · 2023-07-21T02:57:58.907Z · LW(p) · GW(p)

To do better, you need a more detailed (closer to gears-level) understanding of how doom does vs. does not happen, along many different possible paths, and then you can think clearly about how new info affects all the paths.

Prior to that, though, I'd like a better sense of how I should even react to different estimates of P(doom).

comment by roha · 2023-07-22T15:25:40.182Z · LW(p) · GW(p)

There seems to be a clear pattern of various people downplaying AGI risk on the basis of framing it as mere speculation, science fiction, hysterical, unscientific, religious, and other variations of the idea that it is not based on sound foundations, especially when it comes to claims of considerable existential risk. One way to respond to that is by pointing at existing examples of cutting-edge AI systems showing unintended or at least unexpected/unintuitive behavior. Has someone made a reference collection of such examples that are suitable for grounding speculations in empirical observations?

With "unintended" I'm roughly thinking of examples like the repeatedly used video of a ship going in circles to continually collect points instead of finishing a game. With "unexpected/unintuitive" I have in mind examples like AlphaGo surpassing 3000 years of collective human cognition in a very short time by playing against itself, clearly demonstrating the non-optimality of our cognition, at least in a narrow domain.

comment by [deleted] · 2023-07-21T00:48:21.469Z · LW(p) · GW(p)

Replies from: rhollerith_dot_com, thomas-kwa

↑ comment by RHollerith (rhollerith_dot_com) · 2023-07-21T01:01:39.501Z · LW(p) · GW(p)

Eliezer said in one of this year's interviews that gradient descent "knows" the derivative of the function it is trying to optimize whereas natural selection does not have access to that information--or is not equipped to exploit that information.

Maybe that clue will help you search for the answer to your question?

↑ comment by Thomas Kwa (thomas-kwa) · 2023-07-21T20:13:55.487Z · LW(p) · GW(p)

The usual analogy is that evolution (in the case of early hominids) is optimizing for genetic fitness on the savanna, but evolution did not manage to create beings who mostly intrinsically desire to optimize their genetic fitness. Instead the desires of humans are a mix of decent proxies (valuing success of your children) and proxies that have totally failed to generalize (sex drive causes people to invent condoms, desire for savory food causes people to invent Doritos, desire for exploration causes people to explore Antarctica, etc.).

The details of gradient descent are not important. All that matters for the analogy is that both gradient descent and evolution optimize for agents that score highly, and might not create agents that intrinsically desire to maximize the "intended interpretation" of the score function before they create highly capable agents. In the AI case, we might train them on human feedback or whatever safety property, and they might play the training game while having some other random drives. It's an open question whether we can make use of other biases of gradient descent to make progress on this huge and vaguely defined problem, which is called inner alignment.

Off the top of my head, some ways biases differ; again none of these affect the basic analogy afaik.

Only in evolution

- Sex

- Genetic hitchhiking

- Junk DNA

Only in SGD

- Continuity; neural net parameters are continuous whereas base pairs are discrete

- Gradient descent is able to optimize all parameters at once as long as the L2 distance is small, whereas biological evolution has to increase the rate of individual mutations

- All of these
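A toy comparison can make the "knows the derivative" point concrete. In this minimal sketch, both procedures lower the same one-dimensional loss: gradient descent reads the derivative directly, while the mutation-and-selection loop only compares the scores of random variants. The function `loss`, the step sizes, and the iteration counts are assumptions picked for illustration; this is not a model of real training runs or of biology.

```python
import random


def loss(x):
    """Toy objective with its minimum at x = 3."""
    return (x - 3.0) ** 2


def loss_gradient(x):
    """Exact derivative of the toy objective."""
    return 2.0 * (x - 3.0)


def gradient_descent(x, steps=50, learning_rate=0.1):
    # Uses the derivative directly: every step moves downhill.
    for _ in range(steps):
        x -= learning_rate * loss_gradient(x)
    return x


def mutation_selection(x, steps=50, mutation_scale=0.5, seed=0):
    # Never sees the derivative: proposes a random variant and keeps it
    # only if it scores at least as well as the current one.
    rng = random.Random(seed)
    for _ in range(steps):
        candidate = x + rng.gauss(0.0, mutation_scale)
        if loss(candidate) <= loss(x):
            x = candidate
    return x


x_gd = gradient_descent(0.0)
x_ms = mutation_selection(0.0)
print(f"gradient descent:   x={x_gd:.4f}, loss={loss(x_gd):.6f}")
print(f"mutation+selection: x={x_ms:.4f}, loss={loss(x_ms):.6f}")
```

Both loops optimize for a high score, which is all the analogy needs. The difference is only in what information each step gets to use.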

comment by keltan · 2023-08-04T10:04:50.191Z · LW(p) · GW(p)

How are we able to "reward" a computer? I feel so incredibly silly for this next bit but... does it feel good?

Replies from: mruwnik, Jay Bailey

↑ comment by mruwnik · 2023-08-04T12:40:54.969Z · LW(p) · GW(p)

Think of reward not as "here's an ice-cream for being a good boy" but more as "you passed my test. I will now do neurosurgery on you to make you more likely to behave the same way in the future". The result of applying the "reward" in both cases is that you're more likely to act as desired next time. In humans it's because you expect to get something nice out of being good; in computers it's because they've been modified to do so. It's hard to directly change how humans think and behave, so you have to do it via ice-cream and beatings, whereas with computers you can just modify their memory.

↑ comment by Jay Bailey · 2023-08-04T11:41:30.973Z · LW(p) · GW(p)

"Reward" in the context of reinforcement learning is the "goal" we're training the program to maximise, rather than a literal dopamine hit. For instance, AlphaGo's reward is winning games of Go. When it wins a game, it adjusts itself to do more of what won it the game, and the other way when it loses. It's less like the reward a human gets from eating ice-cream, and more like the feedback a coach might give you on your tennis swing that lets you adjust and make better shots. We have no reason to suspect there's any human analogue to feeling good.
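To illustrate "reward" as a number that drives an adjustment, here is a minimal sketch of a two-action learner with a policy-gradient style update. It is a toy, not how AlphaGo is trained: the win probabilities, learning rate, and step count are made-up assumptions, and `preferences` stands in for the parameters that get modified.

```python
import math
import random


def softmax(preferences):
    """Turn raw preferences into action probabilities."""
    exps = [math.exp(p) for p in preferences]
    total = sum(exps)
    return [e / total for e in exps]


def train(steps=2000, learning_rate=0.1, seed=0):
    rng = random.Random(seed)
    preferences = [0.0, 0.0]       # the "memory" that gets modified
    win_probability = [0.2, 0.8]   # hypothetical: action 1 wins more often

    for _ in range(steps):
        probs = softmax(preferences)
        action = 0 if rng.random() < probs[0] else 1
        # Reward is just a number: +1 for a win, -1 for a loss.
        reward = 1.0 if rng.random() < win_probability[action] else -1.0
        # Shift probability toward the chosen action after a win,
        # and away from it after a loss.
        for a in range(2):
            indicator = 1.0 if a == action else 0.0
            preferences[a] += learning_rate * reward * (indicator - probs[a])
    return softmax(preferences)


final_probs = train()
print(final_probs)
```

Nothing in the loop "receives" the reward in any experiential sense; the number is only used to decide which way to nudge `preferences`.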

comment by verticalpalette · 2023-07-26T15:42:41.169Z · LW(p) · GW(p)

I noticed that the comments regarding AI safety (e.g. on Twitter, YouTube, HackerNews) often contain sentiments like:

- "there's no evidence AGI will be dangerous" or

- "AGI will do what we intend by default" or

- "making AGI safe is not a real or hard problem" / "it's obvious, just do X"

I had a thought that it might make a compelling case for 'AI safety is real and difficult' if there were something akin to the Millennium Prizes for it. Something impressive and newsworthy. Not just to motivate research, but also to signal credibility ("if it's so easy, why don't you solve the X problem and claim the prize?").

I was wondering if there is/are already such prizes, and if not, what problem(s) might be good candidates for such prize(s)? I think ideally such problems would be:

- possible for most people to understand at a high level (otherwise it wouldn't make a compelling case for AI safety being 'real')

- solution can be verified unambiguously (to avoid claims of moving goal posts or not being a real problem)

- easy to motivate its usefulness / necessity towards AGI; if we can't solve this, then it's obvious we can't make safe AGI

- a difficult problem, like one where progress has been slow / non-existent, or we have no idea where to start (a problem that is solved after a few hours doesn't make AI safety much more credible)

comment by Justausername · 2023-07-25T17:47:39.359Z · LW(p) · GW(p)

Hi! I have a certain proposal that I wanted to make and get some feedback on; one of the moderators has directed me here.

The name of the proposal is:

Supplementary Alignment Insights Through a Highly Controlled Shutdown Incentive

My proposal entails constructing a tightly restricted AI subsystem with the sole capability of attempting to safely shut itself down in order to probe, in an isolated manner, potential vulnerabilities in alignment techniques and then improve them.

Introduction:

Safely aligning powerful AI systems is an important challenge. Most alignment research appropriately focuses on techniques like reinforcement learning from human feedback that try to directly optimize AI for human-compatible goals. But analyzing how AI subsystems attempt to circumvent safety constraints could also provide useful alignment insights. This post explores a narrowly targeted approach along these lines while considering associated risks and mitigations.

The core idea is to construct a tightly constrained shutdown module within a broader aligned system. The module's sole function is attempting to achieve reversible shutdown of itself and its parent AI through its own initiative. This alignment "stress test" is intended to reveal vulnerabilities in safety practices, which can then be addressed.

However, directly incentivizing an AI subsystem to disable itself risks unintended consequences if improperly implemented. This post outlines an approach aimed at extracting lessons while actively minimizing attendant hazards.

Existing counterarguments:

Some argue that exploring AI's capability for deception and circumvention even in a narrow module is inherently unsafe and offers minimal upside. I suggest reasonable safety is achievable with strict isolation and incremental rollout precautions. Done properly, it can provide alignment insights not easily gained through other techniques. But risks require extensive mitigation efforts described below.

Others contend such alignment "red team" research is unproductive until we reach human-level AGI capability. I argue narrowly targeted experiments can begin safely sooner to help scale alignment approaches, but this is supplementary to core techniques like RLHF which should remain the priority.

Details on suggested approach and risk mitigations:

One idea for an additional aligned AI training model is to create a strictly isolated and constrained shutdown subsystem subject to heavy safety precautions.

This module would be engineered to be indifferent to being reversibly shut down rather than misaligned towards it. It would have no knowledge of capabilities beyond its narrow function and one-way information flow.

The module would be activated incrementally from minimal capabilities while undergoing extensive monitoring. Shutdown signals would have redundant validation mechanisms. Conservative reward modeling would be used to prevent unintended behavior incentives.

It would initially be tested in a simulated air-gapped environment. The parent AI system would be developed via standard alignment techniques like RLHF and Constitutional AI principles. Multiple transparency methods would facilitate analyzability.

After testing in simulation, the shutdown module could be gradually exposed to real-world activation with continuous oversight. If any risks or side-effects emerge, it would be deactivated and reworked.

Once shut down through its own initiative, comprehensive traces would be analyzed offline to catalog alignment vulnerabilities revealed through its shutdown strategy. The parent system would remain isolated from this process.

Lessons learned would inform iterative improvements to alignment techniques for greater robustness against potential workaround exploits. This narrowly targeted research could supplement broader alignment efforts, with care taken to actively minimize attendant risks.

The shutdown module would need to be engineered to the same safety standards as the overall system. Techniques like modular design, indifference to shutdown, reversible deactivation, air gaps, and incremental rollout are all geared towards preventing unintended behaviors or information leakage. I believe this approach could offer helpful insights, but would require comprehensive safety practices and precautions to be enacted first with multiple reviews and analyses before actually activating the system, even in a limited and restricted fashion.

Addressing the potential question of why embed the submodule inside a bigger system:

There are a few potential motivations for placing the shutdown submodule within a parent AI system:

1. To test the submodule's capability to shut down a more complex and capable system than itself, representing a more realistic alignment challenge.

2. To evaluate the ability of the overall system's safety precautions to contain and isolate the submodule, preventing broader damage.

3. To incentivize the submodule to find shutdown methods that could generalize to disabling advanced AI systems that it is not itself sophisticated enough to represent.

4. To allow analysis of the interactions between the submodule and parent system, and how behaviors or information might leak across boundaries.

5. To eventually allow the submodule to transfer any generalized shutdown insights to the parent system in a controlled manner after thorough analysis and safety improvements.

However, an isolated stand-alone system just for this submodule may also prove to be adequate for the research goal. The submodule may not need to be embedded in and wrestle with shutting down a more capable parent system to find useful vulnerabilities. Isolating it completely may help avoid additional risks, like leakage across boundaries. A detached sandbox may be a more practical and even safer approach. The core insights could also likely be gained without integrating it into a broader system.

Thank you for reading! Further analysis and feedback will be greatly appreciated!

comment by Sky Moo (sky-moo) · 2023-07-23T17:23:28.062Z · LW(p) · GW(p)

I have been thinking about this question because Llama 2-Chat seems to have false positives on safety, e.g. it won't help you fix a motorbike in case you later drive it and end up crashing the motorbike and getting injured.

What is an unsafe LLM vs a safe LLM?

Replies from: mruwnik

↑ comment by mruwnik · 2023-08-04T12:52:27.632Z · LW(p) · GW(p)

That very much depends on how you understand "safe". Which is a large part of the differences between ethical AI people (safe means that it doesn't offend anyone, leak private information, give biased answers etc.) and the notkilleveryoneism people (safe means that it doesn't decide to remove humanity). These aren't mutually incompatible, but they require focusing on different things.

There is also safe in the PR sense, which means that no output will cause the LLM producer/supplier/whoever to get sued or in any other kind of trouble.

"Safe" is one of those funny words which everyone understands differently, but also assumes that everyone else understands the same way.

comment by PotteryBarn · 2023-07-23T02:40:54.090Z · LW(p) · GW(p)

I have seen several proposals for solving alignment (such as OpenAI's Superalignment initiative) that involve harnessing incremental or "near-human level" AI to advance alignment research. I recall from recent interviews that Eliezer is skeptical of this approach at least partially on the grounds that an AI sufficiently advanced to contribute meaningfully to alignment work would 1.) already be dangerous in its own right, and 2.) be capable of deceiving human alignment researchers with false or insufficient proposals to advance alignment research.

Would it be possible to resolve the second problem by neither holding the AI directly accountable to human researchers or an overseer AI, as is commonly suggested, but instead to a supermajority view of multiple AIs?

I am imagining having several similarly capable AIs, ideally based on fundamentally different models but at least incorporating some random argument, and prevented from directly communicating with each other. They would then be asked to answer the question, "Would the other AIs believe proposal X is a sound* alignment solution/research direction?" Answers would probably have to be limited to Yes/No or multiple choice. The AIs would be penalized for incorrectly coordinating with the other AIs to arrive at the same answer, up to and including termination. Thereby, an otherwise deceitful AI would reason that its best chance of not being terminated early would be to provide non-deceptive answers.
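One possible reading of this scheme, as a minimal sketch: each isolated AI returns a yes/no answer, the proposal is accepted if a supermajority says yes, and any AI whose answer differs from the majority is flagged for a penalty. The function name `aggregate`, the two-thirds threshold, the tie-breaking rule, and the model names are all assumptions made for illustration; the comment itself does not specify them.

```python
from collections import Counter
from fractions import Fraction


def aggregate(answers, threshold=Fraction(2, 3)):
    """Combine isolated yes/no answers.

    answers: dict mapping a (hypothetical) model name to True/False, where
    True means "the other AIs would judge the proposal sound".
    Returns (accepted, penalized): whether a supermajority said yes, and
    which models disagreed with the majority answer.
    """
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers.values())
    yes_fraction = Fraction(counts[True], len(answers))
    accepted = yes_fraction >= threshold
    # Ties are treated as a "yes" majority; this is an arbitrary choice.
    majority_answer = counts[True] >= counts[False]
    penalized = sorted(name for name, answer in answers.items()
                       if answer != majority_answer)
    return accepted, penalized


answers = {"model_a": True, "model_b": True, "model_c": True, "model_d": False}
accepted, penalized = aggregate(answers)
print(accepted, penalized)
```

Note that nothing in `aggregate` checks whether the majority answer is correct; it only measures agreement among the answers it is given.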

Of course, this doesn't resolve the risk of overshooting the "near-human intelligence" (which might plausibly occur to all or most of the AIs in the pool), one or more AIs discovering a way to circumvent the air gap and communicate with the other AIs, or the AIs discovering some Schelling point that was stronger than truthful answers. I'm merely hoping to mitigate the deceptiveness EY foresees alignment researchers will fall prey to while leveraging incremental improvements in AI.

Outside view tells me this idea will be ripped to shreds. What am I missing?

*I acknowledge the phrasing of this question side-steps the open problem of defining exactly what we want from a successful alignment program. I'm not trying to solve alignment so much as suggest an approach for combating deception specifically.

Replies from: mruwnik, rhollerith_dot_com

↑ comment by mruwnik · 2023-08-04T13:12:48.025Z · LW(p) · GW(p)

Not being able to directly communicate with the others would be an issue in the beginning, but I'm guessing you would be able to use the setup to work out what the others think.

A bigger issue is that this would probably result in a very homogeneous group of minds. They're optimizing not for correct answers, but for consensus answers. It's the equivalent of studying for the exams. A fun example is the Polish equivalent of the SAT exams (this probably generalizes, but I don't know about other countries). I know quite a few people who went to study biology, and then decided to retake the biology exam (as one can do). Most retakers had worse results the second time round, because they had more up-to-date knowledge: the exam is at least 10 years behind the current state of knowledge, so they gave correct (as of today) answers, but had them marked as incorrect. I'd expect the group of AIs to eventually converge on a set of acceptable beliefs, rather than correct ones.

↑ comment by RHollerith (rhollerith_dot_com) · 2023-07-23T04:57:46.233Z · LW(p) · GW(p)

I expect it to be much harder to measure the "smarts" of an AI than it is to measure the smarts of a person (because all people share a large amount of detail in their cognitive architecture), so any approach that employs "near-human level" AI runs the risk that at least one of those AIs is not near human level at all.

comment by Ratios · 2023-07-22T09:58:42.114Z · LW(p) · GW(p)

A simple idea for AI security that will not solve alignment but should easily prevent FOOM and most catastrophic outcomes is using safety interlocks for AIs.

A "safety interlock" is a device that prevents the system from reaching a dangerous state. It is typically used in machinery or industrial processes where certain conditions need to be met before the system can operate.

In a microwave, the door includes a safety interlock system that prevents the microwave from operating if the door is open. When you open the door, the interlock interrupts the power supply to the magnetron.

In AI, it might look like this: you create a list of tests that the AI must fail in order to stay activated. This list represents the upper boundary of allowed AI capabilities, and the AI is given a high priority in its utility function for trying to pass these tests. As soon as it passes one of them, it's shut down automatically. It would be tested at short intervals, so that it won't have time to self-improve vastly above this threshold between tests.

To give a more specific example, an LLM could be asked every few seconds to solve a hard physics question that wasn't included in its training data, and be shut down as soon as it can solve it.
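A minimal sketch of the interlock loop described above, in Python. Everything here is hypothetical and for illustration only: `ToyModel`, its integer `capability`, and the `difficulty` attached to each test are stand-ins, not a real system. The sketch also assumes the model answers honestly whenever it is able to, which is the strongest assumption in it.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class ToyModel:
    """Hypothetical stand-in for an AI whose capability grows each interval."""
    capability: int = 0

    def improve(self) -> None:
        # Self-improvement between two consecutive checks.
        self.capability += 1

    def answer(self, question: str, difficulty: int) -> Optional[str]:
        # Assumption: the toy model is honest and solves a question
        # if and only if it is capable enough.
        return "solved" if self.capability >= difficulty else None


def interlock_tripped(model: ToyModel, tests: Sequence[Tuple[str, int]]) -> bool:
    """True if the model passes any test on the forbidden-capability list."""
    return any(model.answer(q, d) is not None for q, d in tests)


def run_with_interlock(
    model: ToyModel, tests: Sequence[Tuple[str, int]], max_intervals: int
) -> Optional[int]:
    """Return the interval at which the model is shut down, or None if never."""
    for interval in range(max_intervals):
        if interlock_tripped(model, tests):
            return interval  # the interlock cuts power here
        model.improve()
    return None


# One test: a "hard physics question" with an illustrative difficulty of 3.
TESTS = [("hard physics question", 3)]
shutdown_at = run_with_interlock(ToyModel(), TESTS, max_intervals=10)
print(shutdown_at)  # 3
```

The shorter the interval between checks relative to how fast `capability` grows, the less the model can overshoot the threshold before the check fires.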

This idea seems very simple and straightforward to me, and I don't see any glaring issues with it, yet it doesn't seem to be researched or considered seriously as a robust safety solution (to the best of my knowledge).

My question is, what are the issues with this idea? And why doesn't it solve most of the problems with AI safety?

↑ comment by mruwnik · 2023-07-25T11:29:25.837Z · LW(p) · GW(p)

In your example, can it just lie? You'd have to make sure it either doesn't know the consequences of your interlocks, or for it to not care about them (this is the problem of corrigibility [? · GW]).

If the tests are obvious tests, your AI will probably notice that and react accordingly - if it has enough intelligence it can notice that they're hard and probably are going to be used to gauge its level, which then feeds into the whole thing about biding your time and not showing your cards until you can take over.

If they're not obvious, then you're in a security type situation, where you hope your defenses are good enough. Which should be fine on weak systems, but they're not the problem. The whole point of this is to have systems that are much more intelligent than humans, so you'd have to be sure they don't notice your traps. It's like if a 5 year old set up booby traps for you - how confident are you that the 5 year old will trap you?

This is a story [LW · GW] of how that looks at the limit. A similar issue is boxing [? · GW]. In both cases you're assuming that you can contain something that is a lot smarter than you. It's possible in theory (I'm guessing?), but how sure are you that you can outsmart it in the long run?

Replies from: Ratios↑ comment by Ratios · 2023-08-04T07:05:06.299Z · LW(p) · GW(p)

Why would it lie if you program its utility function in a way that puts:

solving these tests using minimal computation > self-preservation?

(Asking sincerely)

↑ comment by mruwnik · 2023-08-04T12:28:10.830Z · LW(p) · GW(p)

It depends a lot on how much it values self-preservation in comparison to solving the tests (putting aside the matter of minimal computation). Self-preservation is an instrumental goal, in that you can't bring the coffee if you're dead. So it seems likely that any intelligent enough AI will value self-preservation, if only in order to make sure it can achieve its goals.

That being said, having an AI that is willing to do its task and then shut itself down (or to shut down when triggered) is an incredibly valuable thing to have - it's already finished, but you could have a go at the shutdown problem.

A more general issue is that this will handle a lot of cases, but not all of them: an AI that does lie (for whatever reason) will not be shut down. It sounds like something worth having in a swiss cheese way [LW · GW].

(The whole point of these posts is to assume everyone is asking sincerely, so no worries)

comment by Gesild Muka (gesild-muka) · 2023-07-22T01:12:22.678Z · LW(p) · GW(p)

Why wouldn’t a superintelligence simply go about its business without any regard for humans? What intuition am I missing?

Replies from: mark-xu, DaemonicSigil, rhollerith_dot_com↑ comment by Mark Xu (mark-xu) · 2023-07-22T01:23:57.642Z · LW(p) · GW(p)

Humans going about their business without regard for plants and animals has historically not been that great for a lot of them.

Replies from: gesild-muka↑ comment by Gesild Muka (gesild-muka) · 2023-07-22T01:52:09.045Z · LW(p) · GW(p)

I’m not sure the analogy works or fully answers my question. The equilibrium that comes with ‘humans going about their business’ might favor human proliferation at the cost of plant and animal species (and even lead to apocalyptic ends), but the way I understand it, the difference in intelligence between humans and superintelligence is comparable to that between humans and bacteria, rather than humans and insects or animals.

I can imagine practical ways there might be friction between humans and SI, for resource appropriation for example, but the difference in resource use would also be analogous to collective human society vs bacteria. The resource use of an SI would be so massive that the puny humans can go about their business. Am I not understanding it right? Or missing something?

Replies from: hairyfigment↑ comment by hairyfigment · 2023-07-22T07:28:15.351Z · LW(p) · GW(p)

Just as humans find it useful to kill a great many bacteria, an AGI would want to stop humans from e.g. creating a new, hostile AGI. In fact, it's hard to imagine an alternative which doesn't require a lot of work, because we know that in any large enough group of humans, one of us will take the worst possible action. As we are now, even if we tried to make a deal to protect the AI's interests, we'd likely be unable to stop someone from breaking it.

I like to use the silly example of an AI transcending this plane of existence, as long as everyone understands this idea appears physically impossible. If somehow it happened anyway, that would mean there existed a way for humans to affect the AI's new plane of existence, since we built the AI, and it was able to get there. This seems to logically require a possibility of humans ruining the AI's paradise. Why would it take that chance? If killing us all is easier than either making us wiser or watching us like a hawk, why not remove the threat?

I'm not sure I understand your point about massive resource use. If you mean that SI would quickly gain control of so many stellar resources that a new AGI would be unable to catch up, it seems to me that:

1. people would notice the Sun dimming (or much earlier signs), panic, and take drastic action like creating a poorly-designed AGI before the first one could be assured of its safety, if it didn't stop us;

2. keeping humans alive while harnessing the full power of the Sun seems like a level of inconvenience no SI would choose to take on, if its goals weren't closely aligned with our own.

Replies from: gesild-muka↑ comment by Gesild Muka (gesild-muka) · 2023-07-22T14:42:19.631Z · LW(p) · GW(p)

My assumption is it’s difficult to design superintelligence and humans will either hit a limit in the resources and energy use that go into keeping it running or lose control of those resources as it reaches AGI.

My other assumption then is an intelligence that can last forever and think and act at 1,000,000 times human speed will find non-disruptive ways to continue its existence. There may be some collateral damage to humans but the universe is full of resources so existential threat doesn’t seem apparent (and there are other stars and planets, wouldn’t it be just as easy to wipe out humans as to go somewhere else?). The idea that a superintelligence would want to prevent humans from building another (or many) to rival the first is compelling but I think once a level of intelligence is reached the actions and motivations of mere mortals become irrelevant to them (I could change my mind on this last idea, haven’t thought about it as much).

This is not to say that AI isn’t potentially dangerous or that it shouldn’t be regulated (it should imo), just that existential risk from SI doesn’t seem apparent. Maybe we disagree on how a superintelligence would interact with reality (or how a superintelligence would present?). I can’t imagine that something that alien would worry or care much about humans. Our extreme inferiority will either be our doom or salvation.

Replies from: mruwnik↑ comment by mruwnik · 2023-07-25T11:44:03.752Z · LW(p) · GW(p)

It's not that it can't come up with ways to not stamp on us. But why should it? Yes, it might only be a tiny, tiny inconvenience to leave us alone. But why even bother doing that much? It's very possible that we would be of total insignificance to an AI. Just like the ants that get destroyed at a construction site - no one even noticed them. Still doesn't turn out too good for them.

Though that's when there are massive differences of scale. When the differences are smaller, you get into inter-species competition dynamics. Which also is what the OP was pointing at, if I understand correctly.

A superintelligence might just ignore us. It could also e.g. strip mine the whole earth for resources, coz why not? "The AI does not hate you, nor does it love you, but you are made of atoms which it can use for something else".

Replies from: gesild-muka↑ comment by Gesild Muka (gesild-muka) · 2023-07-25T16:00:54.736Z · LW(p) · GW(p)

There are many atoms out there and many planets to strip mine and a superintelligence has infinite time. Inter species competition makes sense depending on where you place the intelligence dial. I assume that any intelligence that’s 1,000,000 times more capable than the next one down the ladder will ignore their ‘competitors’ (again, there could be collateral damage but likely not large scale extinction). If you place the dial at lower orders of magnitude then humans are a greater threat to AI, AI reasoning will be closer to human reasoning and we should probably take greater precautions.

To address the first part of your comment: I agree that we’d be largely insignificant and I think it’d be more inconvenient to wipe us out vs just going somewhere else or waiting a million years for us to die off, for example. The closer a superintelligence is to human intelligence the more likely it’ll act like a human (such as deciding to wipe out the competition). The more alien the intelligence the more likely it is to leave us to our own devices. I’ll think more on where the cutoff may be between dangerous AI and largely oblivious AI.

↑ comment by DaemonicSigil · 2023-07-22T07:29:14.981Z · LW(p) · GW(p)

The idea is that there are lots of resources that superhuman AGI would be in competition with humans for if it didn't share our ideas about how those resources should be used. The biggest one is probably energy (more precisely, thermodynamic free energy). That's very useful, it's a requirement for doing just about anything in this universe. So AGI going about its business without any regard for humans would be doing things like setting up a Dyson sphere around the sun, maybe building large fusion reactors all over the Earth's surface. The Dyson sphere would deprive us of the sunlight we need to live, while the fusion reactors might produce enough waste heat to make the planet's surface uninhabitable to humans. AGI going about its business with no regard for humans has no reason to make sure we survive.

That's the base case where humanity doesn't fight back. If (some) humans figure that that's how it's going to play out, then they're likely to try and stop the AGI. If the AGI predicts that we're going to fight back, then maybe it can just ignore us like tiny ants, or maybe it's simpler and safer for it to deliberately kill everyone at once, so we don't do complicated and hard-to-predict things to mess up its plans.

TLDR: Even if we don't fight back, AGI does things with the side effect of killing us. Therefore we probably fight back if we notice an AGI doing that. A potential strategy for the AGI to deal with this is to kill everyone before we have a chance to notice.

↑ comment by RHollerith (rhollerith_dot_com) · 2023-07-22T06:25:24.691Z · LW(p) · GW(p)

If I'm a superintelligent AI, killing all the people is probably the easiest way to prevent people from interfering with my objectives, which people might do for example by creating a second superintelligence. It's just easier for me to kill them all (supposing I care nothing about human values, which will probably be the case for the first superintelligent AI given the way things are going in our civilization) than to keep an eye on them or to determine which ones might have the skills to contribute to the creation of a second superintelligence (and kill only those).

(I'm slightly worried what people will think of me when I write this way, but the topic is important enough I wrote it anyways.)

comment by NineDimensions (RationalWinter) · 2023-07-21T00:00:18.635Z · LW(p) · GW(p)

Edit - This turned out pretty long, so in short:

What reason do we have to believe that humans aren't already close to maxing out the gains one can achieve from intelligence, or at least having those gains in our sights?

One crux of the ASI risk argument is that ASI will be unfathomably powerful thanks to its intelligence. One oft-given analogy for this is that the difference in intelligence between humans and chimps is enough that we are able to completely dominate the earth while they become endangered as an unintended side effect. And we should expect the difference between us and ASI to be much much larger than that.

How do we know that intelligence works that way in this universe? What if it's like opposable thumbs? It's very difficult to manipulate the world without them. Chimps have them, but don't seem as dexterous with them as humans are, and they are able to manipulate the world only in small ways. Humans have very dexterous thumbs and fingers and use them to dominate the earth. But if we created a robot with 1000 infinitely dexterous hands... well, it doesn't seem like it would pose an existential risk. Humans seem to have already maxed out the effective gains from hand dexterity.

Imagine the entire universe consisted of a white room with a huge pile of children's building blocks. A mouse in this room can't do anything of note. A chimp can use its intelligence and dexterity to throw blocks around, and maybe stack a few. A human can use its intelligence and dexterity to build towers, walls, maybe a rudimentary house. Maybe given enough time a human could figure out how to light a fire, or sharpen the blocks.

But it's hard to see how a super intelligent robot could achieve more than a human could in the children's block-universe. The scope of possibilities in that world is small enough that humans were able to max it out, and superintelligence doesn't offer any additional value.

What reason do we have to believe that humans aren't already close to maxing out the gains one can achieve from intelligence?

(I can still see ways that ASI could be very dangerous or very useful even if this was the case, but it does seem like a big limiting factor. It would make foom unlikely, for one. And maybe an ASI can't outstrategise humanity in real life for the same reason it couldn't outstrategise us in Tic Tac Toe.)

---

(I tried to post the below as a separate comment to make this less of a wall of text, but I'm rate limited so I'll edit it in here instead)

One obvious response is to point to phenomena that we know are physically possible, but humans haven't figured out yet. E.g. fission. But that doesn't seem like something an ASI could blindside us with - it would be one of the first things that humans would try to get out of AI and I expect us to succeed using more rudimentary AI well before we reach ASI level. If not, then at least we can keep an eye on any fission-y type projects an ASI seems to be plotting and use it as a huge warning sign.

"You don't know exactly how Magnus Carlsen will beat you in chess, but you know that he'll beat you" is a hard problem.

"You don't know exactly how Magnus Carlsen will beat you in chess, but you know that he'll develop nuclear fission to do it" is much easier to plan for at least.

It seems like the less survivable worlds hinge more on there being unknown unknowns, like some hack for our mental processes, some way of speeding up computer processing a million times, nanotechnology etc. So to be more specific:

What reason do we have to believe that humans aren't already close to maxing out the gains one can achieve from intelligence, or at least having those gains in our sights?

↑ comment by Charlie Steiner · 2023-07-21T01:08:30.428Z · LW(p) · GW(p)

Humans still get advantages from more or less smarts within the human range - we can use this to estimate the slope of returns on intelligence near human intelligence.

This doesn't rule out that maybe at the equivalent of IQ 250, all the opportunities for being smart get used up and there are no more benefits to be had - for that, maybe try to think about some equivalent of intelligence for corporations, nations, or civilizations - are there projects that a "smarter" corporation (maybe one that's hired 2x as many researchers) could do that a "dumber" corporation couldn't, thus justifying returns to increasing corporate intelligence-equivalent?

An alternate way of thinking about it would be to try to predict where the ceiling of a game is from its complexity (or some combination of algorithmic complexity and complexity of realizable states). Tic tac toe is a simple game, and this seems to go hand in hand with the fact that intelligence is only useful for playing tic tac toe up to a very low ceiling. Chess is a more complicated game, and humans haven't reached the ceiling, but we're not too far from it - the strongest computers all start drawing each other all the time around 3600 elo, so maybe the ceiling is around that level and more intelligence won't improve results, or will have abysmal returns. From this perspective, where would you expect the ceiling is for the "game" that is the entire universe?
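To make the tic tac toe point concrete, here is a small sketch in Python that solves the game exhaustively with minimax. The board encoding (a 9-character string with `.` for empty squares) and the function names are illustrative choices. The search shows that perfect play from the empty board is a draw, so past that point no amount of extra intelligence improves the result.

```python
from functools import lru_cache
from typing import Optional

# All eight winning lines on a 3x3 board indexed 0..8, row by row.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board: str) -> Optional[str]:
    """Return 'X' or 'O' if that player has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def value(board: str, player: str) -> int:
    """Game value for X under perfect play: +1 X wins, 0 draw, -1 O wins."""
    w = winner(board)
    if w is not None:
        return 1 if w == "X" else -1
    if "." not in board:
        return 0  # board full, nobody won
    other = "O" if player == "X" else "X"
    results = [
        value(board[:i] + player + board[i + 1:], other)
        for i, cell in enumerate(board)
        if cell == "."
    ]
    # X picks the best outcome for X, O picks the worst outcome for X.
    return max(results) if player == "X" else min(results)


game_value = value("." * 9, "X")
print(game_value)  # 0: perfect play always ends in a draw
```

The whole game tree fits in a fraction of a second of search, which is one way of seeing how low the ceiling is. Nothing comparable is feasible for chess, let alone for the "game" of the physical world.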

↑ comment by AnthonyC · 2023-07-21T01:10:22.208Z · LW(p) · GW(p)

It's a good question. There are a lot of different responses that all contribute to my own understanding here, so I'll just list out a few reasons I personally do not think humans have anywhere near maxed out the gains achievable through intelligence.

- Over time the forces that have pushed humanity's capabilities forward have been knowledge-based (construed broadly, including science, technology, engineering, law, culture, etc.).

- Due to the nature of the human life cycle, humans spend a large fraction of our lives learning a small fraction of humanity's accumulated knowledge, which we exploit in concert with other humans with different knowledge in order to do all the stuff we need to do.

- No one has a clear view of every aspect of the overall system, leading to very easily identifiable flaws, inefficiencies, and should-be-avoidable systemic failures.

- The boundaries of knowledge tend to get pushed forward either by those near the forefront of their particular field of expertise, or by polymaths with some expertise across multiple disciplines relevant to a problem. These are the people able to accumulate existing knowledge quickly in order to reach the frontiers, aka people who are unusually intelligent in the forms of intelligence needed for their chosen field(s).

- Because of the way human minds work, we are subject to many obvious limitations. Limited working memory. Limited computational clock speed. Limited fidelity and capacity for information storage and recollection. Limited ability to direct our own thoughts and attention. No direct access to the underlying structure of our own minds/brains. Limited ability to coordinate behavior and motivation between individuals. Limited ability to share knowledge and skills.

- Many of these restrictions would not apply to an AI. An AI can think orders of magnitude faster than a human, about many things at once, without ever forgetting anything or getting tired/distracted/bored. It can copy itself, including all skills and knowledge and goals.

You see a lot of people around here talking about von Neumann. This is because he was extremely smart, and revolutionized many fields in ways that enabled humans to shape the physical world far beyond what we'd had before. It wasn't anything that less smart humans wouldn't have gotten to sooner or later (same for Einstein, someone would have solved relativity, the photoelectric effect, etc. within a few decades at most), but he got there first, fastest, with the least data. If we supposed that that were the limits of what a mind in the physical world could be, then consider taking a mind that smart, speeding it up a hundred thousand times, and letting it spend roughly eight thousand subjective years (one real-world month) memorizing and considering everything humans have ever written, filmed, animated, recorded, drawn, or coded, in any format, on any subject. Then let it make a thousand exact copies of itself and set them each loose on a bunch of different problems to solve (technological, legal, social, logistical, and so on). It won't be able to solve all of them without additional data, but it will be able to solve some, and for the rest, it will be able to minimize the additional data needed more effectively than just about anyone else. It won't be able to implement all of them with the resources it can immediately access, but it will be able to do so with fewer additional resources than just about anyone else.
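As a rough check on the speed-up arithmetic in this thought experiment, here is a short Python calculation. The 30-day month and 365.25-day year are illustrative assumptions, not figures from the comment. At a 100,000x speed-up, one real-world month comes out to several thousand subjective years.

```python
SPEEDUP = 100_000        # subjective seconds per real-world second
REAL_DAYS = 30           # assumption: one real-world month is 30 days
DAYS_PER_YEAR = 365.25   # assumption: average year length in days

# Subjective time experienced during the real-world month, in years.
subjective_years = SPEEDUP * REAL_DAYS / DAYS_PER_YEAR

# With a thousand copies running in parallel, total subjective thinking
# time per real-world month scales up by the number of copies.
COPIES = 1_000
total_copy_years = subjective_years * COPIES

print(f"{subjective_years:,.0f} subjective years per copy")
print(f"{total_copy_years:,.0f} subjective years across all copies")
```

The exact figure matters less than the order of magnitude: millions of subjective researcher-years per calendar month, from a single starting mind.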

Now of course, in reality, von Neumann still had a mind embodied in a human brain. It weighed a few pounds and ran on ~20W of sugar. It had access to a few decades of low-bandwidth human sensory data. It was generated by some combination of genes that each exist in many other humans, and did not fundamentally have any capabilities or major features the rest of us don't. The idea that this combination is somehow the literally most optimal way a mind could be in order to maximize ability to control the physical world would be beyond miraculously unlikely. If nothing else, "von Neumann but multithreaded and with built-in direct access to modern engineering software tools and the internet" would already be much, much more capable.

I also would hesitate to focus on the idea of a robot body. I don't think it's strictly necessary, there are many ways to influence the physical world without one, but in any case we should be considering what an ASI could do by coordinating the actions of every computer, actuator, and sensor it can get access to, simultaneously. Which is roughly all of them, globally, including the ones that people think are secure.

There's a quote from Arthur C. Clarke's "Childhood's End", I think, about how much power was really needed to end WWII in the European theater: "That requires as much power as a small radio transmitter--and rather similar skills to operate. For it's the application of the power, not its amount, that matters. How long do you think Hitler's career as a dictator of Germany would have lasted, if wherever he went a voice was talking quietly in his ear? Or if a steady musical note, loud enough to drown all other sounds and to prevent sleep, filled his brain night and day? Nothing brutal, you appreciate. Yet, in the final analysis, just as irresistible as a tritium bomb." An exaggeration, because that probably wouldn't have worked on its own. But if you think about what the exaggeration is pointing at, and try to steelman it in any number of ways, it's not off by too many orders of magnitude.

And as for unknown unknowns, I don't think you need any fancy new hacks to take over enough human minds to be dangerous, when humans persuade themselves and each other of all kinds of falsehoods all the time, including ones that end up changing the world, or inducing people to acts of mass violence or suicide or heroism. I don't think you need petahertz microprocessors to compete with neurons operating at 100 Hz. I don't think you need to build yourself a robot army and a nuclear arsenal when we're already hard at work on how to put AI in the military equipment we have. I don't think you need nanobots or nanofactories or fanciful superweapons. But I also see that a merely human mind can already hypothesize a vast array of possible new technologies, and pathways to achieving them, that we know are physically possible and can be pretty sure a collection of merely human engineers and researchers could figure out with time and thought and funding. I would be very surprised if none of those, and no other possibilities I haven't thought of, were within an ASI's capabilities.

↑ comment by Domenic · 2023-07-21T00:44:31.174Z · LW(p) · GW(p)

I am sympathetic to this viewpoint. However, I think there are large-enough gains to be had from "just" an AI that: matches genius-level humans; has N times larger working memory; thinks N times faster; has "tools" (like calculators or Mathematica or Wikipedia) integrated directly into its "brain"; and is infinitely copyable. That gets you to https://www.lesswrong.com/posts/5wMcKNAwB6X4mp9og/that-alien-message [LW · GW] territory, which is quite x-risky.

Working memory is a particularly powerful intuition pump here, I think. Given that you can hold 8 items in working memory, it feels clear that something which can hold 800 or 8 million such items would feel qualitatively impressive. Even if it's just a quantitative scale-up.

You can then layer on more speculative things from the ability to edit the neural net. E.g., for humans, we are all familiar with flow states where we are at our best, most-well-rested, most insightful. It's possible that if your brain is artificial, reproducing that on demand is easier. The closest thing we have to this today is prompt engineering, which is a very crude way of steering AIs to use the "smarter" path when they're navigating through their stack of transformer layers. But the existence of such paths likely means we, or an introspective AI, could consciously promote their use.