Posts

Comments

I could be wrong, but from what I've read the domain wall should have mass, so it must travel below light speed. However, the energy difference between the two vacuums would put a large force on the wall, rapidly accelerating it to very close to light speed. Collisions with stars and gravitational effects might cause further weirdness, but ignoring that, I think after a while we basically expect constant acceleration, meaning that light cones starting inside the bubble that are at least a certain distance from the wall would never catch up with the wall. So yeah, definitely above 0.95c.

We probably don't disagree that much. What "original seeing" means is just going and investigating things you're interested in. So doing lengthy research is actually a much more central example of this than coming up with a bold new idea is.

As I say above: "There's not any principled reason why an AI system, even a LLM in particular, couldn't do this."

Some experimental data: https://chatgpt.com/share/67ce164f-a7cc-8005-8ae1-98d92610f658

There's not really anything wrong with ChatGPT's attempt here, but it happens to have picked the same topic as a recent Numberphile video, and I think it's instructive to compare how they present the same topic: https://www.numberphile.com/videos/a-1-58-dimensional-object

My view on this is that writing a worthwhile blog post is not only a writing task, but also an original seeing task. You first have to go and find something out in the world and learn about it before you can write about it. So the obstacle is not necessarily reasoning ("look at this weird rock I found" doesn't involve much reasoning, but could make a good blog post), but a lack of things to say.

There's not any principled reason why an AI system, even a LLM in particular, couldn't do this. There is plenty going on in the world to go and find out, even if you're stuck in the internet. (And even without an internet connection, you can try and explore the world of math.) But it seems like currently the bottleneck is that LLM's don't have anything to say.

Maybe novels might require less of this than blog posts, but I'd guess that writing a good novel is also a task that requires a lot of original seeing.

Thanks for the reply & link. I definitely missed that paragraph, whoops.

IMO even just simple gamete selection would be pretty great for avoiding the worst genetic diseases. I guess tracking nuclei with a microscope is way more feasible than the microwell thing, given how hard it looks to make IVS work at all.

Re the "Appendix: Cheap DNA segment sensing" section, just going to throw out a thought that occurred to me (very much a non-expert). Let's say we're doing IVS, and assume we can separate spermatocytes into separate microwells before they undergo meiosis. The starting cells all have a known genome. Then the cell in each microwell divides into 4 cells. If we sequence 3 of them, then we know by process of elimination what the sequence on the 4th cell is, at a very high level of detail, including crossovers, etc. So we kill 3 cells and look at their DNA, and then we know what DNA the remaining living cell has without doing anything to it.

Okay, DNA sequencing is still fairly expensive, so maybe it's super crazy to do it 3 times to get a single cell with known DNA. But:

- Maybe sequencing will get cheaper.

- The same trick should work for existing cheap methods that give coarser information. Eg. one can freely decondense the sperm DNA for FISH, without worrying about damaging the cell, because it's one of the 3 that's going to die anyway.

If it's too hard to separate the cells into microwells while they're still dividing, maybe there are alternate things we could do like just watching the culture with a microscope and keeping track of who split from who and where they ended up (plus some kind of microfluidics setup to shuffle the sperms around to where we want them).

This was a fun little exercise. We get many "theory of rationality" posts on this site, so it's very good to also have some chances to practice figuring out confusing things also mixed in. The various coins each teach good lessons about ways the world can surprise you.

Anyway, I think this was an underrated post, and we need more posts in this general category.

Running parallel to the spin axis would be fine, though.

Anthropic shadow isn't a real thing, check this post: https://www.lesswrong.com/posts/LGHuaLiq3F5NHQXXF/anthropically-blind-the-anthropic-shadow-is-reflectively

Also, you should care about worlds proportional to the square of their amplitude.

Thanks for making the game! I also played it, just didn't leave a comment on the original post. Scored 2751. I played each location for an entire day after building an initial food stockpile, and so figured out the timing of Tiger Forest and Dog Valley. But I also did some fairly dumb stuff, like assuming a time dependence for other biomes. And I underestimated Horse Hills, since when I foraged it for a full day, I got unlucky and only rolled a single large number. For what it's worth, I find these applet things more accessible than a full-on D&D.Sci (though those are also great), which I often end up not playing because it feels too much like work. With applets you can play on medium-low effort (which I did) and make lots of mistakes (which I did) and learn Valuable Lessons about How Not To Science (which one might hope I did).

Have to divide by number of airships, which probably makes them less safe than planes, if not cars. I think the difficulty is mostly with having a large surface-area exposed to the wind making the ships difficult to control. (Edit: looking at the list on Wikipedia, this is maybe not totally true. A lot of the crashes seem to be caused by equipment failures too.)

Are those things that you care about working towards?

No, and I don't work on airships and have no plans to do so. I mainly just think it's an interesting demonstration of how weak electrostatic forces can be.

Yep, Claude sure is a pretty good coder: Wang Tile Pattern Generator

This took 1 initial write and 5 change requests to produce. The most manual effort I had to do was look at unicode ranges and see which ones had distinctive-looking glyphs in them. (Sorry if any of these aren't in your computer's glyph library.)

I've begun worshipping the sun for a number of reasons. First of all, unlike some other gods I could mention, I can see the sun. It's there for me every day. And the things it brings me are quite apparent all the time: heat, light, food, and a lovely day. There's no mystery, no one asks for money, I don't have to dress up, and there's no boring pageantry. And interestingly enough, I have found that the prayers I offer to the sun and the prayers I formerly offered to 'God' are all answered at about the same 50% rate.

-- George Carlin

Everyone who earns money exerts some control by buying food or whatever else they buy. This directs society to work on producing those goods and services. There's also political/military control, but it's also (a much narrower set of) humans who have that kind of control too.

Okay, I'll be the idiot who gives the obvious answer: Yeah, pretty much.

Very nice post, thanks for writing it.

Your options are numbered when you refer to them in the text, but are listed as bullet points originally. Probably they should also be numbered there!

Now we can get down to the actual physics discussion. I have a bag of fairly unrelated statements to make.

-

The "center of mass moves at constant velocity" thing is actually just as solid as, say, conservation of angular momentum. It's just less famous. Both are consequences of Noether's theorem, angular momentum conservation arising from symmetry under rotations and the center of mass thing arising from symmetry under boosts. (i.e. the symmetry that says that if two people fly past each other on spaceships, there's no fact of the matter as to which of them is moving and which is stationary)

-

Even the fairly nailed down area of quantum mechanics in an electromagnetic field, we make a distinction between mechanical momentum (which appears when calculating kinetic energy) and the canonical momentum (for Heisenberg). Canonical momentum has the operator while mechanical momentum is .

-

Minkowski momentum is, I'm fairly sure, the right answer for the canonical momentum in particular. An even faster proof of Minkowski is to just note that the wavelength is scaled by and so gets scaled by a factor of .

-

The mirror experiments are interesting in that they raise the question of what happens when we put an airgap between the mirror and the fluid. If the airgap is large, we get the vacuum momentum, , since the index of refraction for air is nearly 1. If the airgap gets taken to 0, then we're back to . What happens in between?

-

I will say that overall, option 1 looks pretty good to me.

Edit: Removed redundant video link (turned out to already be in original post).

Good point, the whole "model treats tokens it previously produced and tokens that are part of the input exactly the same" thing and the whole "model doesn't learn across usages" thing are also very important.

When generating each token, they "re-read" everything in the context window before predicting. None of their internal calculations are preserved when predicting the next token, everything is forgotten and the entire context window is re-read again.

Given that KV caching is a thing, the way I chose to phrase this is very misleading / outright wrong in retrospect. While of course inference could be done in this way, it's not the most efficient, and one could even make a similar statement about certain inefficient ways of simulating a person's thoughts.

If I were to rephrase, I'd put it this way: "Any sufficiently long serial computation the model performs must be mediated by the stream of tokens. Internal activations can only be passed forwards to the next layer of the model, and there are only a finite number of layers. Hence, if information must be processed in more sequential steps than there are layers, the only option is for that information to be written out to the token stream, then processed further from there."

Let's say we have a bunch of datapoints in that are expected to lie on some lattice, with some noise in the measured positions. We'd like to fit a lattice to these points that hopefully matches the ground truth lattice well. Since just by choosing a very fine lattice we can get an arbitrarily small error without doing anything interesting, there also needs to be some penalty on excessively fine lattices. This is a bit of a strange problem, and an algorithm for it will be presented here.

method

Since this is a lattice problem, the first question to jump to mind should be if we can use the LLL algorithm in some way.

One application of the LLL algorithm is to find integer relations between a given set of real numbers. [wikipedia] A matrix is formed with those real numbers (scaled up by some factor ) making up the bottom row, and an identity matrix sitting on top. The algorithm tries to make the basis vectors (the column vectors of the matrix) short, but it can only do so by making integer combinations of the basis vectors. By trying to make the bottom entry of each basis vector small, the algorithm is able to find an integer combination of real numbers that gives 0 (if one exists).

But there's no reason the bottom row has to just be real numbers. We can put in vectors instead, filling up several rows with their entries. The concept should work just the same, and now instead of combining real numbers, we're combining vectors.

For example, say we have 4 datapoints in three dimensional space, . The we'd use the following matrix as input to the LLL algorithm.

Here, is a tunable parameter. The larger the value of , the more significant any errors in the lower 3 rows will be. So fits with a large value will be more focused on having a close match to the given datapoints. On the other hand, if the value of is small, then the significance of the upper 4 rows is relatively more important, which means the fit will try and interpret the datapoints as small integer multiples of the basis lattice vectors.

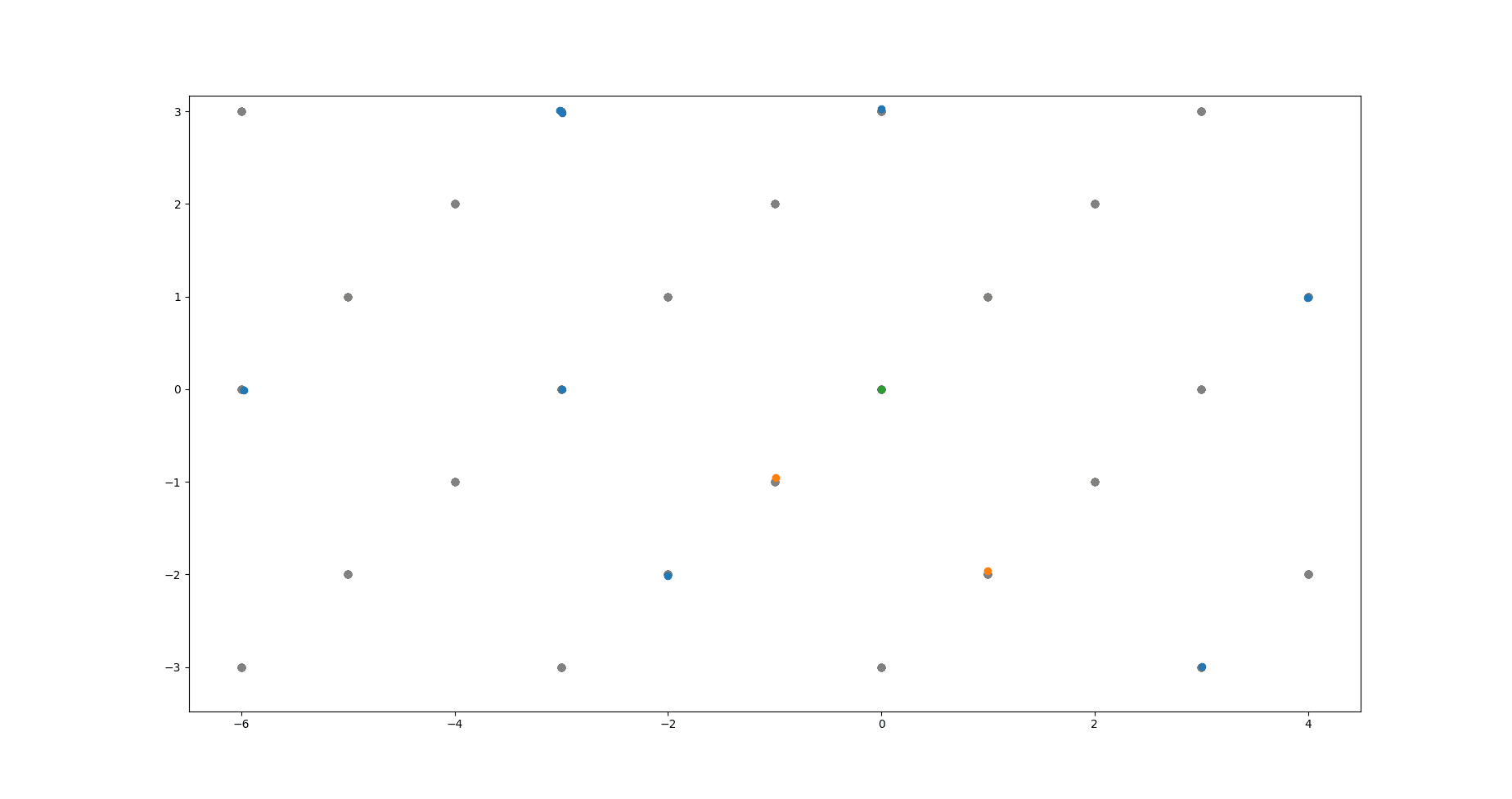

The above image shows the algorithm at work. Green dot is the origin. Grey dots are the underlying lattice (ground truth). Blue dots are the noisy data points the algorithm takes as input. Yellow dots are the lattice basis vectors returned by the algorithm.

code link

https://github.com/pb1729/latticefit

Run lattice_fit.py to get a quick demo.

API: Import lattice_fit and then call lattice_fit(dataset, zeta) where dataset is a 2d numpy array. First index into the dataset selects the datapoint, and the second selects a coordinate of that datapoint. zeta is just a float, whose effect was described above. The result will be an array of basis vectors, sorted from longest to shortest. These will approach zero length at some point, and it's your responsibility as the caller to cut off the list there. (Or perhaps you already know the size of the lattice basis.)

caveats

Admittedly, due to the large (though still polynomial) time complexity of the LLL algorithm, this method scales poorly with the number of data points. The best suggestion I have so far here is just to run the algorithm on manageable subsets of the data, filter out the near-zero vectors, and then run the algorithm again on all the basis vectors found this way.

...

I originally left this as a stack overflow answer that I came across when initially searching for a solution to this problem.

Linkpost for: https://pbement.com/posts/latticefit.html

Cool, Facebook is also on this apparently: https://x.com/PicturesFoIder/status/1840677517553791440

This might be worth pinning as a top-level post.

The amount of entropy in a given organism stays about the same, though I guess you could argue it increases as the organism grows in size. Reason: The organism isn't mutating over time to become made of increasingly high entropy stuff, nor is it heating up. The entropy has to stay within an upper and lower bound. So over time the organism will increase entropy external to itself, while the internal entropy doesn't change very much, maybe just fluctuates within the bounds a bit.

It's probably better to talk about entropy per unit mass, rather than entropy density. Though mass conservation isn't an exact physical law, it's approximately true for the kinds to stuff that usually happens on Earth. Whereas volume isn't even approximately conserved. And in those terms, 1kg of gas should have more entropy than 1kg of condensed matter.

Speaking of which, I wonder if multi-modal transformers have started being used by blind people yet. Since we have models that can describe images, I wonder if it would be useful for blind people to have a device with a camera and a microphone and a little button one can press to get it to describe what the camera is seeing. Surely there are startups working on this?

Found this paper on insecticide costs: https://sci-hub.st/http://dx.doi.org/10.1046/j.1365-2915.2000.00262.x

It's from 2000, so anything listed here would be out of patent today.

hardening voltage transformers against ionising radiation

Is ionization really the mechanism by which transformers fail in a solar storm? I thought it was that changes in the Earth's magnetic field induced large currents in long transmission lines, overloading the transformers.

Sorry for the self promotion, but some folks may find this post relevant: https://www.lesswrong.com/posts/uDXRxF9tGqGX5bGT4/logical-share-splitting (ctl-F for "Application: Conditional prediction markets")

tldr: Gives a general framework that would allow people to make this kind of trade with only $N in capital, just as a natural consequence of the trading rules of the market.

Anyway, I definitely agree that Manifold should add the feature you describe! (As for general logical share splitting, well, it would be nice, but probably far too much work to convert the existing codebase over.)

IMO, a very good response, which Eliezer doesn't seem to be interested in making as far as I can tell, is that we should not be making the analogy natural selection <--> gradient descent, but rather, human brain learning algorithm <--> gradient descent ; natural selection <--> us trying to build AI.

So here, the striking thing is that evolution failed to solve the alignment problem for humans. I.e. we have a prior example of strongish general intelligence being created, but no prior examples of strongish general intelligence being aligned. Evolution was strong enough to do one but not the other. It's not hopeless, because we should generally consider ourselves smarter than evolution, but on the other hand, evolution has had a very long time to work and it does frequently manage things that we humans have not been able to replicate. And also, it provides a small amount of evidence against "the problem will be solved with minor tweaks to existing algorithms" since generally minor tweaks are easier for evolution to find than ideas that require many changes at once.

People here might find this post interesting: https://yellow-apartment-148.notion.site/AI-Search-The-Bitter-er-Lesson-44c11acd27294f4495c3de778cd09c8d

The author argues that search algorithms will play a much larger role in AI in the future than they do today.

I remember reading the EJT post and left some comments there. The basic conclusions I arrived at are:

- The transitivity property is actually important and necessary, one can construct money-pump-like situations if it isn't satisfied. See this comment

- If we keep transitivity, but not completeness, and follow a strategy of not making choices inconsistent with out previous choices, as EJT suggests, then we no longer have a single consistent utility function. However, it looks like the behaviour can still be roughly described as "picking a utility function at random, and then acting according to that utility function". See this comment.

In my current thinking about non-coherent agents, the main toy example I like to think about is the agent that maximizes some combination of the entropy of its actions, and their expected utility. i.e. the probability of taking an action is proportional to up to a normalization factor. By tuning we can affect whether the agent cares more about entropy or utility. This has a great resemblance to RLHF-finetuned language models. They're trained to both achieve a high rating and to not have too great an entropy with respect to the prior implied by pretraining.

If you're working with multidimensional tensors (eg. in numpy or pytorch), a helpful pattern is often to use pattern matching to get the sizes of various dimensions. Like this: batch, chan, w, h = x.shape. And sometimes you already know some of these dimensions, and want to assert that they have the correct values. Here is a convenient way to do that. Define the following class and single instance of it:

class _MustBe:

""" class for asserting that a dimension must have a certain value.

the class itself is private, one should import a particular object,

"must_be" in order to use the functionality. example code:

`batch, chan, must_be[32], must_be[32] = image.shape` """

def __setitem__(self, key, value):

assert key == value, "must_be[%d] does not match dimension %d" % (key, value)

must_be = _MustBe()

This hack overrides index assignment and replaces it with an assertion. To use, import must_be from the file where you defined it. Now you can do stuff like this:

batch, must_be[3] = v.shape

must_be[batch], l, n = A.shape

must_be[batch], must_be[n], m = B.shape

...

Linkpost for: https://pbement.com/posts/must_be.html

Oh, very cool, thanks! Spoiler tag in markdown is:

:::spoiler

text here

:::

Heh, sure.

Promote from a function to a linear operator on the space of functions, . The action of this operator is just "multiply by ". We'll similarly define meaning to multiply by the first, second integral of , etc.

Observe:

Now we can calculate what we get when applying times. The calculation simplifies when we note that all terms are of the form . Result:

Now we apply the above operator to :

The sum terminates because a polynomial can only have finitely many derivatives.

Use integration by parts:

Then is another polynomial (of smaller degree), and is another "nice" function, so we recurse.

Other people have mentioned sites like Mechanical Turk. Just to add another thing in the same category, apparently now people will pay you for helping train language models:

https://www.dataannotation.tech/faq?

Haven't tried it yet myself, but a roommate of mine has and he seems to have had a good experience. He's mentioned that sometimes people find it hard to get assigned work by their algorithm, though. I did a quick search to see what their reputation was, and it seemed pretty okay:

Linkpost for: https://pbement.com/posts/threads.html

Today's interesting number is 961.

Say you're writing a CUDA program and you need to accomplish some task for every element of a long array. Well, the classical way to do this is to divide up the job amongst several different threads and let each thread do a part of the array. (We'll ignore blocks for simplicity, maybe each block has its own array to work on or something.) The method here is as follows:

for (int i = threadIdx.x; i < array_len; i += 32) {

arr[i] = ...;

}

So the threads make the following pattern (if there are threads):

⬛🟫🟥🟧🟨🟩🟦🟪⬛🟫🟥🟧🟨🟩🟦🟪⬛🟫🟥🟧🟨🟩🟦🟪⬛🟫

This is for an array of length . We can see that the work is split as evenly as possible between the threads, except that threads 0 and 1 (black and brown) have to process the last two elements of the array while the rest of the threads have finished their work and remain idle. This is unavoidable because we can't guarantee that the length of the array is a multiple of the number of threads. But this only happens at the tail end of the array, and for a large number of elements, the wasted effort becomes a very small fraction of the total. In any case, each thread will loop times, though it may be idle during the last loop while it waits for the other threads to catch up.

One may be able to spend many happy hours programming the GPU this way before running into a question: What if we want each thread to operate on a continguous area of memory? (In most cases, we don't want this.) In the previous method (which is the canonical one), the parts of the array that each thread worked on were interleaved with each other. Now we run into a scenario where, for some reason, the threads must operate on continguous chunks. "No problem" you say, we simply need to break the array into chunks and give a chunk to each thread.

const int chunksz = (array_len + blockDim.x - 1)/blockDim.x;

for (int i = threadIdx.x*chunksz; i < (threadIdx.x + 1)*chunksz; i++) {

if (i < array_len) {

arr[i] = ...;

}

}

If we size the chunks at 3 items, that won't be enough, so again we need items per chunk. Here is the result:

⬛⬛⬛⬛🟫🟫🟫🟫🟥🟥🟥🟥🟧🟧🟧🟧🟨🟨🟨🟨🟩🟩🟩🟩🟦🟦

Beautiful. Except you may have noticed something missing. There are no purple squares. Though thread 6 is a little lazy and doing 2 items instead of 4, thread 7 is doing absolutely nothing! It's somehow managed to fall off the end of the array.

Unavoidably, some threads must be idle for loops. This is the conserved total amount of idleness. With the first method, the idleness is spread out across threads. Mathematically, the amount of idleness can be no greater than regardless of array length and thread number, and so each thread will be idle for at most 1 loop. But in the contiguous method, the idleness is concentrated in the last threads. There is nothing mathematically impossible about having as big as or bigger, and so it's possible for an entire thread to remain unused. Multiple threads, even. Eg. take :

⬛⬛🟫🟫🟥🟥🟧🟧🟨

3 full threads are unused there! Practically, this shouldn't actually be a problem, though. The number of serial loops is still the same, and the total number of idle loops is still the same. It's just distributed differently. The reasons to prefer the interleaved method to the contiguous method would be related to memory coalescing or bank conflicts. The issue of unused threads would be unimportant.

We don't always run into this effect. If is a multiple of , all threads are fully utilized. Also, we can guarantee that there are no unused threads for larger than a certain maximal value. Namely, take then and so the idleness is . But if is larger than this, then one can show that all threads must be used at least a little bit.

So, if we're using CUDA threads, then when the array size is 961, the contiguous processing method will leave thread 31 idle. And 961 is the largest array size for which that is true.

So once that research is finished, assuming it is successful, you'd agree that many worlds would end up using fewer bits in that case? That seems like a reasonable position to me, then! (I find the partial-trace kinds of arguments that people make pretty convincing already, but it's reasonable not to.)

MW theories have to specify when and how decoherence occurs. Decoherence isn't simple.

They don't actually. One could equally well say: "Fundamental theories of physics have to specify when and how increases in entropy occur. Thermal randomness isn't simple." This is wrong because once you've described the fundamental laws and they happen to be reversible, and also aren't too simple, increasing entropy from a low entropy initial state is a natural consequence of those laws. Similarly, decoherence is a natural consequence of the laws of quantum mechanics (with a not-too-simple Hamiltonian) applied to a low entropy initial state.

Good post, and I basically agree with this. I do think it's good to mostly focus on the experimental implications when talking about these things. When I say "many worlds", what I primarily mean is that I predict that we should never observe a spontaneous collapse, even if we do crazy things like putting conscious observers into superposition, or putting large chunks of the gravitational field into superposition. So if we ever did observe such a spontaneous collapse, that would falsify many worlds.

Amount of calculation isn't so much the concern here as the amount of bits used to implement that calculation. And there's no law that forces the amount of bits encoding the computation to be equal. Copenhagen can just waste bits on computations that MWI doesn't have to do.

In particular, I mentioned earlier that Copenhagen has to have rules for when measurements occur and what basis they occur in. How does MWI incur a similar cost? What does MWI have to compute that Copenhagen doesn't that uses up the same number of bits of source code?

Like, yes, an expected-value-maximizing agent that has a utility function similar to ours might have to do some computations that involve identifying worlds, but the complexity of the utility function doesn't count against the complexity of any particular theory. And an expected value maximizer is naturally going to try and identify its zone of influence, which is going to look like a particular subset of worlds in MWI. But this happens automatically exactly because the thing is an EV-maximizer, and not because the laws of physics incurred extra complexity in order to single out worlds.

Right, so we both agree that the randomness used to determine the result of a measurement in Copenhagen, and the information required to locate yourself in MWI is the same number of bits. But the argument for MWI was never that it had an advantage on this front, but rather that Copenhagen used up some extra bits in the machine that generates the output tape in order to implement the wavefunction collapse procedure. (Not to decide the outcome of the collapse, those random bits are already spoken for. Just the source code of the procedure that collapses the wavefunction and such.) Such code has to answer questions like: Under what circumstances does the wavefunction collapse? What determines the basis the measurement is made in? There needs to be code for actually projecting the wavefunction and then re-normalizing it. This extra complexity is what people mean when they say that collapse theories are less parsimonious/have extra assumptions.

Disagree.

If you're talking about the code complexity of "interleaving": If the Turing machine simulates quantum mechanics at all, it already has to "interleave" the representations of states for tiny things like a electrons being in a superposition of spin states or whatever. This must be done in order to agree with experimental results. And then at that point not having to put in extra rules to "collapse the wavefunction" makes things simpler.

If you're talking about the complexity of locating yourself in the computation: Inferring which world you're in is equally complex to inferring which way all the Copenhagen coin tosses came up. It's the same number of bits. (In practice, we don't have to identify our location down to a single world, just as we don't care about the outcome of all the Copenhagen coin tosses.)

This notion of faith seems like an interesting idea, but I'm not 100% sure I understand it well enough to actually apply it in an example.

Suppose Descartes were to say: "Y'know, even if there were an evil Daemon fooling every one of my senses for every hour of the day, I can still know what specific illusions the Daemon is choosing to show me. And hey, actually, it sure does seem like there are some clear regularities and patterns in those illusions, so I can sometimes predict what the Daemon will show me next. So in that sense it doesn't matter whether my predictions are about the physical laws of a material world, or just patterns in the thoughts of an evil being. My mental models seem to be useful either way."

Is that what faith is?

If a rationalist hates the idea of heat death enough that they fool themselves into thinking that there must be some way that the increase in entropy can be reversed, is that an example of not seeing the world as it is? How does this flow from a lack of the first thing?

To be clear, I'm definitely pretty sympathetic to TurnTrout's type error objection. (Namely: "If the agent gets a high reward for ingesting superdrug X, but did not ingest it during training, then we shouldn't particularly expect the agent to want to ingest superdrug X during deployment, even if it realizes this would produce high reward.") But just rereading what Zack has written, it seems quite different from what TurnTrout is saying and I still stand by my interpretation of it.

- eg. Zack writes: "obviously the line itself does not somehow contain a representation of general squared-error-minimization". So in this line fitting example, the loss function, i.e. "general squared-error-minimization" refers to the function , and not .

- And when he asks why one would even want the neural network to represent the loss function, there's a pretty obvious answer of "well, the loss function contains many examples of outcomes humans rated as good and bad and we figure it's probably better if the model understands the difference between good and bad outcomes for this application." But this answer only applies to the curried loss.

I wasn't trying to sign up to defend everything Eliezer said in that paragraph, especially not the exact phrasing, so can't reply to the rest of your comment which is pretty insightful.

It's the same thing for piecewise-linear functions defined by multi-layer parameterized graphical function approximators: the model is the dataset. It's just not meaningful to talk about what a loss function implies, independently of the training data. (Mean squared error of what? Negative log likelihood of what? Finish the sentence!)

This confusion about loss functions...

I don't think this is a confusion, but rather a mere difference in terminology. Eliezer's notion of "loss function" is equivalent to Zack's notion of "loss function" curried with the training data. Thus, when Eliezer writes about the network modelling or not modelling the loss function, this would include modelling the process that generated the training data.

Could you give an example of knowledge and skills not being value neutral?

(No need to do so if you're just talking about the value of information depending on the values one has, which is unsurprising. But it sounds like you might be making a more substantial point?)

Fair enough for the alignment comparison, I was just hoping you could maybe correct the quoted paragraph to say "performance on the hold-out data" or something similar.

(The reason to expect more spread would be that training performance can't detect overfitting but performance on the hold-out data can. I'm guessing some of the nets trained in Miller et al did indeed overfit (specifically the ones with lower performance).)

More generally, John Miller and colleagues have found training performance is an excellent predictor of test performance, even when the test set looks fairly different from the training set, across a wide variety of tasks and architectures.

Seems like figure 1 from Miller et al is a plot of test performance vs. "out of distribution" test performance. One might expect plots of training performance vs. "out of distribution" test performance to have more spread.

In this context, we're imitating some probability distribution, and the perturbation means we're slightly adjusting the probabilities, making some of them higher and some of them lower. The adjustment is small in a multiplicative sense not an additive sense, hence the use of exponentials. Just as a silly example, maybe I'm training on MNIST digits, but I want the 2's to make up 30% of the distribution rather than just 10%. The math described above would let me train a GAN that generates 2's 30% of the time.

I'm not sure what is meant by "the difference from a gradient in SGD", so I'd need more information to say whether it is different from a perturbation or not. But probably it's different: perturbations in the above sense are perturbations in the probability distribution over the training data.

Perturbation Theory in Machine Learning

Linkpost for: https://pbement.com/posts/perturbation_theory.html

In quantum mechanics there is this idea of perturbation theory, where a Hamiltonian is perturbed by some change to become . As long as the perturbation is small, we can use the technique of perturbation theory to find out facts about the perturbed Hamiltonian, like what its eigenvalues should be.

An interesting question is if we can also do perturbation theory in machine learning. Suppose I am training a GAN, a diffuser, or some other machine learning technique that matches an empirical distribution. We'll use a statistical physics setup to say that the empirical distribution is given by:

Note that we may or may not have an explicit formula for . The distribution of the perturbed Hamiltonian is given by:

The loss function of the network will look something like:

Where are the network's parameters, and is the per-sample loss function which will depend on what kind of model we're training. Now suppose we'd like to perturb the Hamiltonian. We'll assume that we have an explicit formula for . Then the loss can be easily modified as follows:

If the perturbation is too large, then the exponential causes the loss to be dominated by a few outliers, which is bad. But if the perturbation isn't too large, then we can perturb the empirical distribution by a small amount in a desired direction.

One other thing to consider is that the exponential will generally increase variance in the magnitude of the gradient. To partially deal with this, we can define an adjusted batch size as:

Then by varying the actual number of samples we put into a batch, we can try to maintain a more or less constant adjusted batch size. One way to do this is to define an error variable, err = 0. At each step, we add a constant B_avg to the error. Then we add samples to the batch until adding one more sample would cause the adjusted batch size to exceed err. Subtract the adjusted batch size from err, train on the batch, and repeat. The error carries over from one step to the next, and so the adjusted batch sizes should average to B_avg.