Comments

I'm not familiar with this interpretation. Here's what Claude has to say (correct about stable regions, maybe hallucinating about Hopfield networks):

This is an interesting question that connects the findings in the paper to broader theories about how transformer models operate. Let me break down my thoughts:

The paper's findings and the Hopfield network interpretation of self-attention are not directly contradictory, but they're not perfectly aligned either. Let's examine this in more detail:

- The paper's key findings:

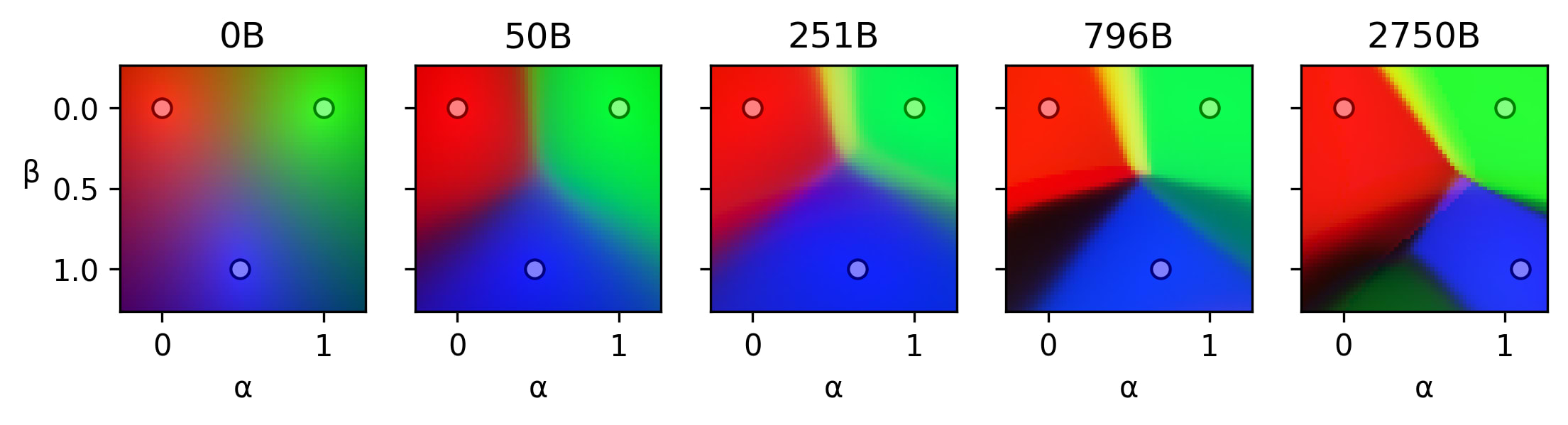

  - The residual stream of trained transformers contains stable regions where small activation changes lead to minimal output changes.

  - These regions emerge during training and become more defined as training progresses or model size increases.

  - The regions appear to correspond to semantic distinctions.

- The Hopfield network interpretation of self-attention:

  - Self-attention layers in transformers can be seen as performing energy-based updates similar to those in continuous Hopfield networks.

  - This view suggests that self-attention is performing pattern completion or error correction, moving activations towards learned attractor states.
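To make the "pattern completion" claim concrete, here is a minimal sketch of the continuous Hopfield update, in which the state is replaced by a softmax-weighted average of stored patterns. This is a toy illustration, not code from the paper: the stored patterns are random unit vectors, and `beta`, `steps`, and the noise level are arbitrary assumptions chosen so the effect is easy to see.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def unit(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def hopfield_update(patterns, state, beta=8.0, steps=3):
    """Repeatedly replace the state with a softmax-weighted average of the stored patterns."""
    for _ in range(steps):
        weights = softmax([beta * dot(p, state) for p in patterns])
        state = [
            sum(w * p[i] for w, p in zip(weights, patterns))
            for i in range(len(state))
        ]
    return state


def distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


random.seed(0)
dim = 64  # hypothetical dimensionality, unrelated to any real model
patterns = [unit([random.gauss(0, 1) for _ in range(dim)]) for _ in range(5)]

# Corrupt one stored pattern with noise, then let the update pull it back.
noisy = [x + 0.1 * random.gauss(0, 1) for x in patterns[2]]
retrieved = hopfield_update(patterns, noisy)
nearest = max(range(len(patterns)), key=lambda i: dot(patterns[i], retrieved))
```

In this toy setting the noisy input ends up much closer to the stored pattern it was derived from, which is the attractor-like behaviour the interpretation appeals to. Whether the stable regions in a real residual stream arise this way is exactly what is in question.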

Now, let's consider how these might relate:

- Consistency with stable regions: The existence of stable regions in the residual stream could be consistent with the idea of attractor states in a Hopfield-like network. The stable regions might correspond to basins of attraction around these states.

- Emergence during training: The paper observes that stable regions emerge and become more defined during training. This aligns well with the Hopfield network interpretation, as training would refine the attractor landscapes.

- Semantic correspondence: The paper suggests that stable regions correspond to semantic distinctions. This is compatible with the Hopfield network view, where different attractor states could represent different semantic concepts or categories.

- Sharp transitions: The paper observes sharp transitions between stable regions. This is somewhat less aligned with the typical continuous Hopfield network dynamics, which often show smoother transitions. However, it's not necessarily inconsistent, as the observed behavior could result from complex interactions across multiple layers.

- Scale of regions: The paper suggests that these stable regions are much larger than previously studied polytopes. This might pose some challenges for the Hopfield network interpretation, as it implies a different granularity of representation than might be expected.

I believe there are two phenomena happening during training:

- Predictions corresponding to the same stable region become more similar, i.e. stable regions become more stable. We can observe this in the animations.

- Existing regions split, resulting in more regions.

I hypothesize that:

- The first phenomenon could be some kind of error correction: models learn to rectify errors coming from superposition interference or another kind of noise.

- The second phenomenon could be interpreted as more capable models picking up on subtler differences between the prompts and adjusting their predictions.

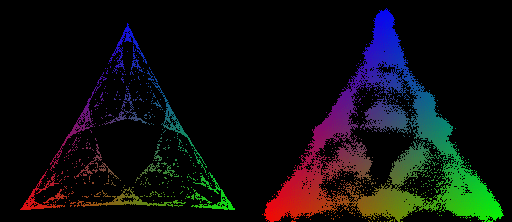

This is such a cool result! I tried to reproduce it in this notebook.

For the two sets of mess3 parameters I checked, the stationary distribution was uniform.
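For anyone who wants to run the same kind of check, here is a rough sketch of computing a stationary distribution by power iteration. The transition matrix below is a hypothetical symmetric 3-state example, not the actual mess3 parameters; it only illustrates that a doubly stochastic transition matrix has a uniform stationary distribution.

```python
def stationary_distribution(transition, iters=200):
    """Power iteration: repeatedly apply pi <- pi @ T for a row-stochastic matrix T."""
    n = len(transition)
    pi = [1.0] + [0.0] * (n - 1)  # deliberately start far from uniform
    for _ in range(iters):
        pi = [sum(pi[i] * transition[i][j] for i in range(n)) for j in range(n)]
    return pi


# Hypothetical 3-state transition matrix (rows and columns each sum to 1).
# These numbers are an assumption for illustration, NOT the mess3 parameters.
T = [
    [0.8, 0.1, 0.1],
    [0.1, 0.8, 0.1],
    [0.1, 0.1, 0.8],
]

pi = stationary_distribution(T)
```

Swapping in the state-to-state transition matrix of the process you care about (summed over emitted tokens) gives the corresponding check.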

The activation patching, causal tracing and resample ablation terms seem to be out of date, compared to how you define them in your post on attribution patching.