Towards Developmental Interpretability

post by Jesse Hoogland (jhoogland), Alexander Gietelink Oldenziel (alexander-gietelink-oldenziel), Daniel Murfet (dmurfet), Stan van Wingerden (stan-van-wingerden) · 2023-07-12T19:33:44.788Z · 10 comments

Developmental interpretability is a research agenda that has grown out of a meeting of the Singular Learning Theory (SLT) and AI alignment communities. To mark the completion of the first SLT & AI alignment summit we have prepared this document as an outline of the key ideas.

As the name suggests, developmental interpretability (or "devinterp") is inspired by recent progress in the field of mechanistic interpretability, specifically work on phase transitions in neural networks and their relation to internal structure. Our two main motivating examples are the work by Olsson et al. on In-context Learning and Induction Heads and the work by Elhage et al. on Toy Models of Superposition.

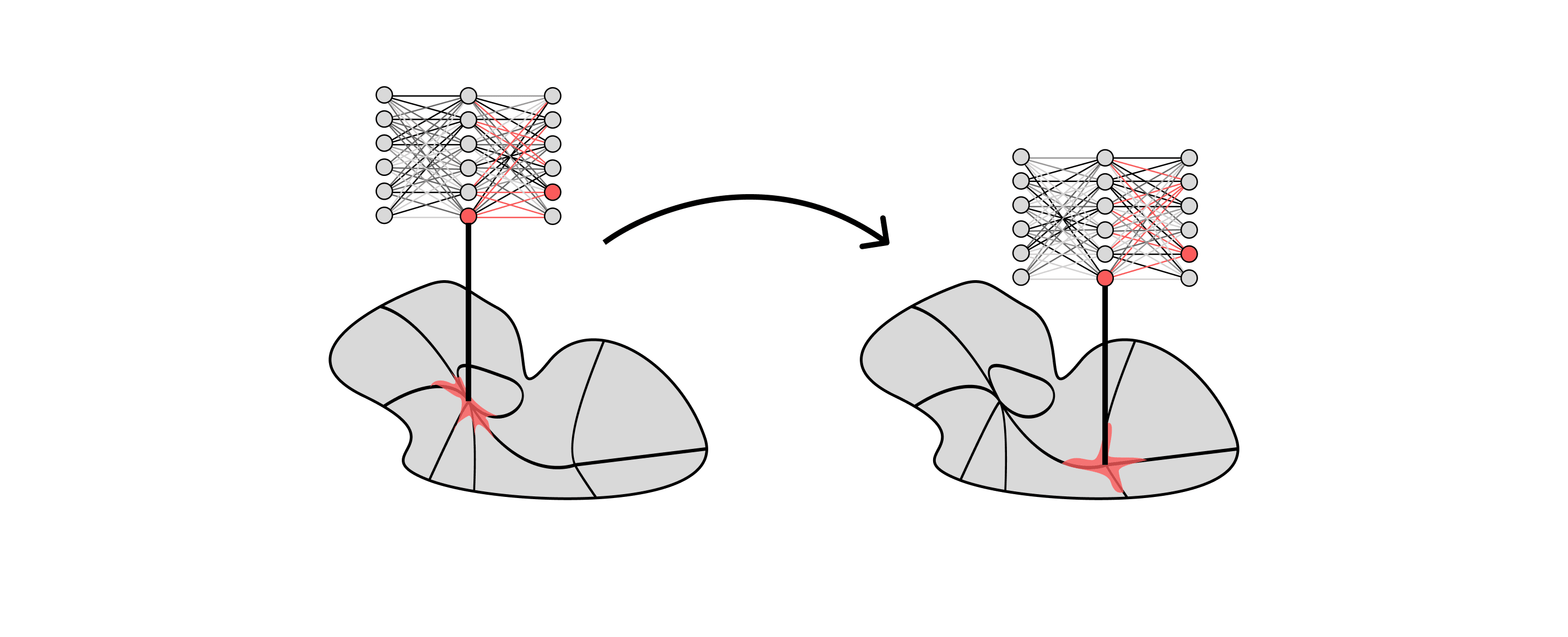

(Figure caption, partially lost in the source: "... emerges through phase transitions during training.")

Mechanistic interpretability emphasizes features and circuits as the fundamental units of analysis and usually aims at understanding a fully trained neural network. In contrast, developmental interpretability:

- is organized around phases and phase transitions as defined mathematically in SLT, and

- aims at an incremental understanding of the development of internal structure in neural networks, one phase transition at a time.

The hope is that an understanding of phase transitions, integrated over the course of training, will provide a new way of looking at the computational and logical structure of the final trained network. We term this developmental interpretability because of the parallel with developmental biology, which aims to understand the final state of a different class of complex self-assembling systems (living organisms) by analyzing the key steps in development from an embryonic state.[1]

In the rest of this post, we explain why we focus on phase transitions, the relevance of SLT, and how we see developmental interpretability contributing to AI alignment.

Thank you to @DanielFilan, @bilalchughtai, and @Liam Carroll for reviewing early drafts of this document.

Why phase transitions?

First of all, they exist: there is a growing understanding that there are many kinds of phase transitions in deep learning. For developmental interpretability, the most important phase transitions are those that occur during training. Some of the examples we are most excited about:

- Olsson et al., "In-context Learning and Induction Heads", Transformer Circuits Thread, 2022.

- Elhage et al., "Toy Models of Superposition", Transformer Circuits Thread, 2022.

- McGrath et al., "Acquisition of chess knowledge in AlphaZero", PNAS, 2022.

- Michaud et al., "The Quantization Model of Neural Scaling", 2023.

- Simon et al., "On the Stepwise Nature of Self-Supervised Learning", ICML, 2023.

The literature on other kinds of phase transitions, such as those appearing as the scale of the model is increased, is even broader. Neel Nanda has conjectured that "phase changes are everywhere."

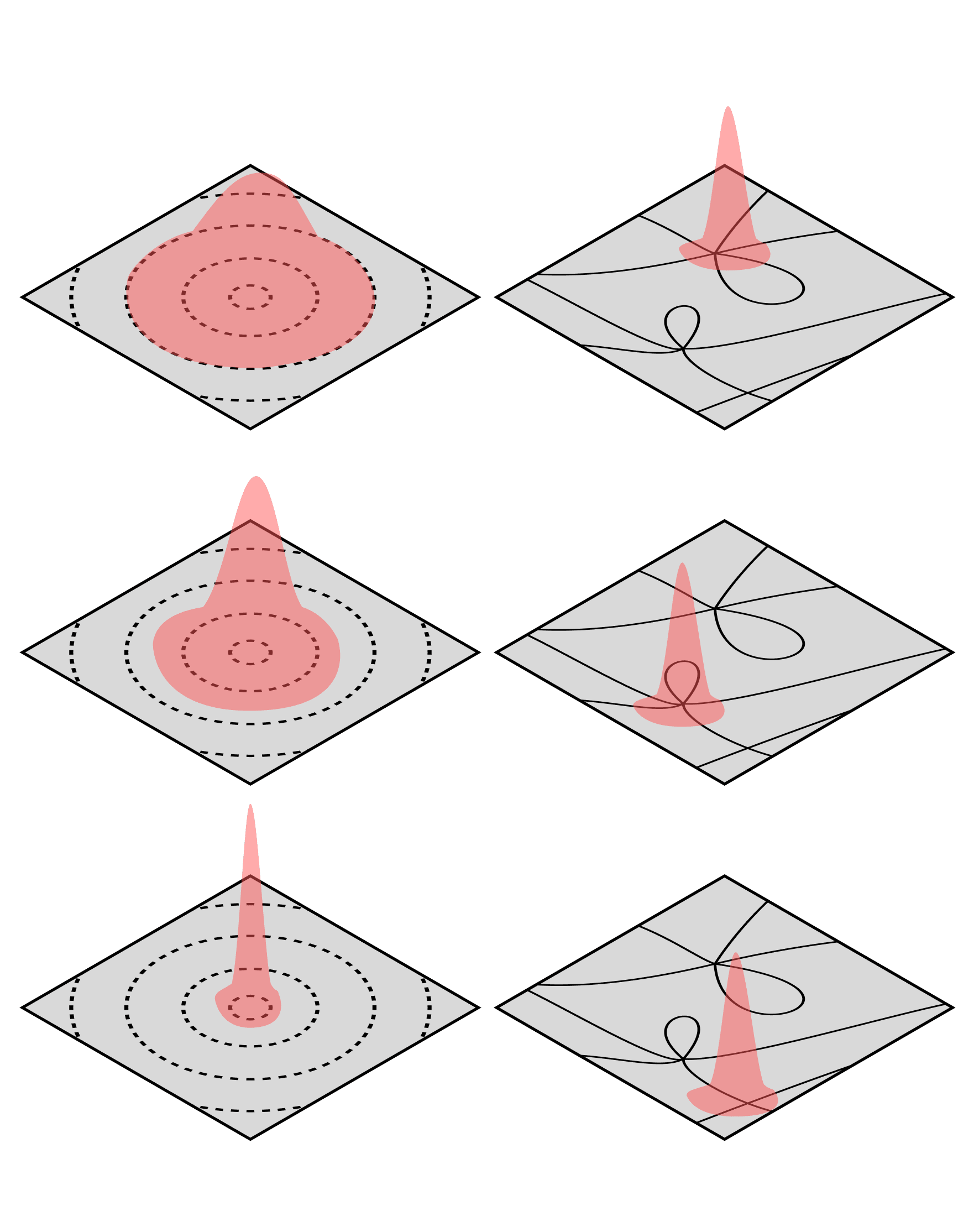

Second, they are easy to find: from the point of view of statistical physics, two of the hallmarks of a (second-order) phase transition are the divergence of macroscopically observable quantities and the emergence of large-scale order. Divergences make phase transitions easy to spot, and the emergence of large-scale order (e.g., circuits) is what makes them interesting. There are several natural observables in SLT (the learning coefficient or real log canonical threshold, and singular fluctuation) which can be used to detect phase transitions, but we don't yet know how to invent finer observables of this kind, nor do we understand the mathematical nature of the emergent order.
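The learning coefficient has a concrete geometric reading: for the toy examples below, the prior volume of near-optimal parameters, V(ε) = vol{w : K(w) < ε}, scales like ε^λ as ε → 0 (in general there is also a logarithmic factor set by the multiplicity). The following is a minimal sketch, not taken from the post, that assumes a uniform prior on [-1, 1]^2 and two hand-picked functions K, and estimates λ by Monte Carlo as a log-log slope. The quartic direction in the second example is flatter, which enlarges the set of near-optimal parameters and pushes λ below the regular value d/2.

```python
import numpy as np


def volume_fraction(K, dim, eps_values, n_samples=1_000_000, seed=0):
    """Monte Carlo estimate of the prior mass of {w : K(w) < eps} under a
    uniform prior on the cube [-1, 1]^dim, for each eps in eps_values."""
    rng = np.random.default_rng(seed)
    w = rng.uniform(-1.0, 1.0, size=(n_samples, dim))
    k_vals = K(w)
    return np.array([np.mean(k_vals < eps) for eps in eps_values])


def estimate_lambda(K, dim, eps_values):
    """Slope of log V(eps) against log eps. For these toy K the volume
    scales exactly as eps**lambda (while the region stays inside the cube),
    so the fitted slope approximates lambda."""
    fractions = volume_fraction(K, dim, eps_values)
    slope, _intercept = np.polyfit(np.log(eps_values), np.log(fractions), 1)
    return slope


eps = np.logspace(-3, -1, 5)
# Regular (non-degenerate) minimum in 2D: lambda = d/2 = 1.
regular = estimate_lambda(lambda w: w[:, 0] ** 2 + w[:, 1] ** 2, 2, eps)
# Degenerate minimum: the quartic direction gives lambda = 1/2 + 1/4 = 3/4.
singular = estimate_lambda(lambda w: w[:, 0] ** 2 + w[:, 1] ** 4, 2, eps)
print(f"regular  lambda ~ {regular:.2f}")
print(f"singular lambda ~ {singular:.2f}")
```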

Third, they are good candidates for universality: every mouse is unique, but its internal organs fit together in the same way and have the same function — that's why biology is even possible as a field of science. Similarly, as an emerging field of science, interpretability depends to a significant degree on some form of universality of internal structures that develop in response to data and architecture. From the point of view of statistical physics, it is natural to connect this Universality Hypothesis to the universality classes of second-order phase transitions.

We don't believe that all knowledge and computation in a trained neural network emerges in phase transitions, but our working hypothesis is that enough emerges this way to make phase transitions a valid organizing principle for interpretability. Validating this hypothesis is one of our immediate priorities.

In summary, some of the central questions of developmental interpretability are:

- Do enough structural changes over training occur in phase transitions for this to be a useful framing for interpretability?

- What are the right statistical susceptibilities to measure in order to detect phase transitions over the course of neural network training?

- What is the right fundamental mathematical theory of the kind of structure that emerges in these phase transitions ("circuits" or something else entirely)?

- How does the idea of Universality in mechanistic interpretability relate to universality classes of (second-order) phase transitions in mathematical physics?

Why Singular Learning Theory?

As explained by Sumio Watanabe (founder of the field of SLT) in his keynote address to the SLT & alignment summit, the learning process of modern learning machines such as neural networks is dominated by phase transitions: as information from more data samples is incorporated into the network weights, the Bayesian posterior can shift suddenly between qualitatively different kinds of configurations of the network. These sudden shifts are examples of phase transitions.

These phase transitions can be thought of as a form of internal model selection where the Bayesian posterior selects regions of parameter space with the optimal tradeoff between accuracy and complexity. This tradeoff is made precise by the Free Energy Formula, currently the deepest theorem of SLT (for a complete treatment of this story, see the Primer). This is very different from the learning process in classical statistical learning theory, where the Bayesian posterior gradually settles around the true parameter and cannot "jump around".
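As a rough illustration of that tradeoff (not the full theorem): to leading order, Watanabe's asymptotics give the free energy of a phase whose best parameter has loss L and learning coefficient λ as F_n ≈ nL + λ log n, and the posterior concentrates on the phase with the lowest F_n. The sketch below uses two hypothetical phases with made-up numbers, a simple but less accurate phase `alpha` and a more accurate but more complex phase `beta`, and shows the posterior switching between them as n grows. Lower-order terms are dropped, so the crossover location is only indicative.

```python
import math


def free_energy(n, loss, lam):
    """Leading terms of the free energy asymptotics for one phase:
    F_n ~ n * L(w*) + lambda * log n (lower-order terms dropped)."""
    return n * loss + lam * math.log(n)


# Hypothetical phases: alpha is simpler (small lambda) but less accurate;
# beta is more accurate but more complex (larger lambda).
ALPHA = {"loss": 0.10, "lam": 1.0}
BETA = {"loss": 0.09, "lam": 5.0}


def delta(n):
    """F_n(alpha) - F_n(beta); negative means alpha is preferred."""
    return free_energy(n, **ALPHA) - free_energy(n, **BETA)


def posterior_prob_alpha(n):
    """Posterior mass on alpha vs beta with p proportional to exp(-F_n).
    1 / (1 + e^d) is written via tanh to avoid overflow for large d."""
    return 0.5 * (1.0 - math.tanh(0.5 * delta(n)))


def crossover(lo=400.0, hi=1e6, iters=100):
    """Bisect for the n where both phases have equal free energy.
    The search starts past the minimum of delta (n = 400 for these numbers)."""
    if not delta(lo) < 0 < delta(hi):
        raise ValueError("no sign change in [lo, hi]")
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if delta(mid) < 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


for n in (100, 1_000, 10_000):
    print(f"n = {n:>6}: P(alpha) = {posterior_prob_alpha(n):.3f}")
print(f"crossover near n = {crossover():.0f}")
```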

Phase transitions during training seem important for interpretability, and SLT is a theory of statistical learning that says nontrivial things about phase transitions, but these are a priori different kinds of transitions. Phase transitions over the course of training have an unclear scientific status: in physics there is no meaningful sense in which an individual particle (the analogue here of a single SGD training run) undergoes a phase transition.

Nonetheless, our conjecture is that (most of) the phenomena currently referred to as "phase transitions" over training time in the deep learning literature are genuine phase transitions in the sense of SLT.

While the precise relationship remains to be understood, it is clear that phase transitions over training and phase transitions of the Bayesian posterior are related because they have a common cause: the underlying geometry of the loss landscape. This geometry determines both the dynamics of SGD trajectories and phase structure in SLT. The details of this relationship have been verified by hand in the Toy Models of Superposition, and one of our immediate priorities is testing this conjecture more broadly.
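A toy picture of how loss-landscape geometry can shape training dynamics (an illustration we chose, not an experiment from the post): plain gradient descent on L(a, b) = 0.5 * (a*b - 1)^2, started near the saddle at the origin, spends a long time on a plateau and then drops abruptly once it escapes. The initial scale, learning rate and thresholds below are arbitrary assumptions; in this toy the plateau length grows roughly like log(1 / initial scale).

```python
def train(a=1e-6, b=1e-6, lr=0.1, steps=400):
    """Gradient descent on the toy loss L(a, b) = 0.5 * (a*b - 1)**2.

    The origin is a saddle point; starting near it, the loss sits on a
    plateau for many steps and then drops abruptly."""
    losses = []
    for _ in range(steps):
        residual = a * b - 1.0
        losses.append(0.5 * residual**2)
        # Simultaneous update: both gradients are evaluated at the old point.
        a, b = a - lr * residual * b, b - lr * residual * a
    return losses


losses = train()
start = next(t for t, loss in enumerate(losses) if loss < 0.4)  # plateau ends
end = next(t for t, loss in enumerate(losses) if loss < 0.01)   # drop complete
print(f"plateau lasts ~{start} steps; the drop takes ~{end - start} steps")
```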

Relevance to Interpretability

What does the picture of phase transitions in Singular Learning Theory have to offer interpretability? The answers range from the mundane to the profound. At the mundane end, SLT provides several nontrivial observables that we expect to be useful in detecting and classifying phase transitions (the RLCT and singular fluctuation). More broadly, SLT gives a set of abstractions that we can use to import experience in detecting and classifying phase transitions from other areas of science[2]. At the profound end, relating emergent structure in neural networks (such as circuits) to changes in the geometry of singularities (which govern phases in SLT) may eventually open up a completely new way of thinking about the nature of knowledge and computation in these systems.
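One way the RLCT becomes a measurable quantity, sketched under strong simplifying assumptions: Watanabe's WBIC uses the tempered posterior at inverse temperature β = 1/log n, and rearranging WBIC ≈ nL(w*) + λ log n suggests the estimate λ̂ = nβ(E_β[L(w)] - L(w*)). The code below assumes an idealised one-dimensional population loss L(w) = L(w*) + K(w) with a uniform prior on [-1, 1] and computes the expectation on a grid. Practical estimators must instead sample a high-dimensional posterior (e.g. with MCMC), which this sketch does not attempt. For K(w) = w^(2k) the estimate recovers 1/(2k): 1/2 for the regular quadratic and 1/4 for the degenerate quartic.

```python
import numpy as np


def lambda_hat(K, n, num_points=200_001):
    """Thermodynamic estimate n*beta*(E_beta[L(w)] - L(w*)) with beta = 1/log n.

    Assumes L(w) - L(w*) = K(w) with K(w*) = 0 and a uniform prior on
    [-1, 1]; the tempered posterior p(w) ∝ exp(-n*beta*K(w)) is integrated
    on a uniform grid (the grid spacing cancels in the ratio)."""
    beta = 1.0 / np.log(n)                 # inverse temperature used by WBIC
    w = np.linspace(-1.0, 1.0, num_points)
    scaled = n * beta * K(w)               # n * beta * (L(w) - L(w*))
    weights = np.exp(-scaled)              # unnormalised tempered posterior
    return float(np.sum(scaled * weights) / np.sum(weights))


n = 1_000_000
print(f"K(w) = w^2: lambda_hat ~ {lambda_hat(lambda w: w**2, n):.3f}")  # regular: 1/2
print(f"K(w) = w^4: lambda_hat ~ {lambda_hat(lambda w: w**4, n):.3f}")  # singular: 1/4
```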

Continuing with our list of questions posed by developmental interpretability:

- Is there a precise general relationship between phase transitions observed over SGD training and phase transitions in the Bayesian posterior?

- What is the relationship between empirically observed structure formation (e.g., circuits) and changes in geometry of singularities?

Relevance to Alignment

There is no consensus on how to align an AGI built out of deep neural networks. However, in alignment proposals it is common to see (explicitly or implicitly) a dependence on progress in interpretability. Some examples include:

- Detecting deception: has the model learned to compute an answer that it then obfuscates in order to better achieve our stated objective?

- Mind-reading: being able to tell which concepts are being deployed in reasoning about which scenarios, in order to detect planning along directions we believe are dangerous.

- Situational awareness: does the model know the difference between its training and deployment environments?

It is well understood in the field of program verification that checking inputs and outputs in evaluations is generally not sufficient to assure that your system does what you think it will do. It is common sense that AI safety will require some degree of understanding of the nature of the computations being carried out, and this explains why mechanistic interpretability is relevant to AI alignment.

In its mundane form, the goal of developmental interpretability in the context of alignment is to:

- advance the science of detecting when structural changes happen during training,

- localize these changes to a subset of the weights, and

- give the changes their proper context within the broader set of computational structures in the current state of the network.
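A deliberately naive sketch of the "localize" step, under our own assumptions (the post does not specify a method): compare two checkpoints and rank named parameter tensors by relative weight change. The checkpoint dictionaries, parameter names and sizes are hypothetical, and a large relative change is at best a first filter, since reparameterization symmetries mean weights can move without the computed function changing.

```python
import numpy as np


def relative_change_by_layer(before, after):
    """Relative Frobenius-norm change ||W_after - W_before|| / ||W_before||
    for each named parameter tensor, sorted from largest to smallest."""
    changes = {
        name: float(np.linalg.norm(after[name] - before[name]) / np.linalg.norm(before[name]))
        for name in before
    }
    return sorted(changes.items(), key=lambda item: item[1], reverse=True)


rng = np.random.default_rng(0)
# Hypothetical checkpoints: parameter names and shapes are made up.
ckpt_t0 = {name: rng.normal(size=(16, 16)) for name in ["embed", "attn.0", "mlp.0", "unembed"]}
ckpt_t1 = {name: w + 0.01 * rng.normal(size=w.shape) for name, w in ckpt_t0.items()}
# Simulate a structural change concentrated in one tensor.
ckpt_t1["attn.0"] = ckpt_t1["attn.0"] + 0.5 * rng.normal(size=(16, 16))

for name, change in relative_change_by_layer(ckpt_t0, ckpt_t1):
    print(f"{name:8s} {change:.3f}")
```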

This is all valuable information that can tell evaluation pipelines or mechanistic interpretability tools when and where to look, thereby lowering the alignment tax. In the ideal scenario, we can intervene to prevent the formation of misaligned values or dangerous capabilities (like deceptiveness) or abort training when we detect these transitions. The relevance of phase transitions to alignment is clear and has been commented on elsewhere. What SLT offers is a principled scientific approach to detecting phase transitions, classifying them, and understanding the relation between these transitions and changes in internal structure.

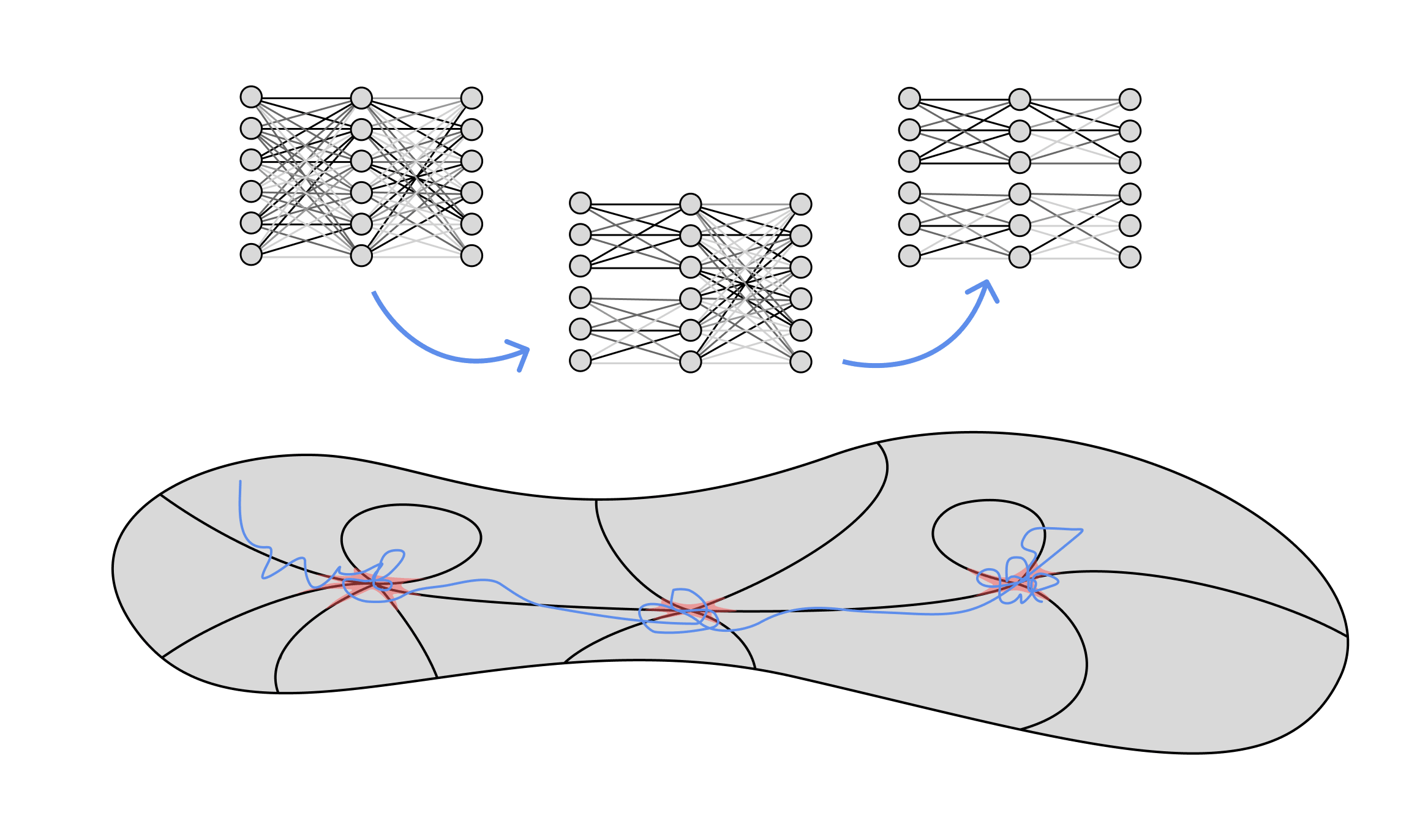

A useful guiding intuition from computer science and logic is that of the Curry-Howard correspondence: in one model of computation, the programs (simply-typed lambda terms) may be identified with a transcript of their own construction from primitive atoms (axiom rules) by a fixed set of constructors (deduction rules). Similarly, developmental interpretability attempts to make sense of the history of phase transitions over neural network training as an analogue of this transcript, with individual transitions as deduction rules[3]. There is some preliminary work in this direction for a different class of singular learning machines by Clift et al. and Waring.
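To make the analogy concrete, here is a minimal type checker for simply-typed lambda terms (our own illustration; the tuple encoding of terms and the rule labels are assumptions) that records, in post-order, the deduction rule used at each node: the axiom rule for variables, →-introduction for λ-abstraction and →-elimination for application. The recorded list is a transcript of how the term's type, read as a proposition, is built from atoms. For the K combinator λx:A. λy:B. x it is one axiom followed by two introductions, proving A → (B → A).

```python
def show(ty):
    """Render a simple type: base types are strings, arrows are ("->", A, B)."""
    if isinstance(ty, str):
        return ty
    _, dom, cod = ty
    return f"({show(dom)} -> {show(cod)})"


def infer(term, ctx, transcript):
    """Infer the type of a simply-typed lambda term, appending the
    deduction rule used at each node (post-order) to `transcript`."""
    kind = term[0]
    if kind == "var":  # axiom rule: x : A is in the context
        ty = ctx[term[1]]
        transcript.append(f"axiom {term[1]} : {show(ty)}")
        return ty
    if kind == "lam":  # ->-introduction, discharging the bound variable
        _, name, dom, body = term
        cod = infer(body, {**ctx, name: dom}, transcript)
        ty = ("->", dom, cod)
        transcript.append(f"->I ({name}) : {show(ty)}")
        return ty
    if kind == "app":  # ->-elimination (modus ponens)
        _, fun, arg = term
        fun_ty = infer(fun, ctx, transcript)
        arg_ty = infer(arg, ctx, transcript)
        if isinstance(fun_ty, str) or fun_ty[1] != arg_ty:
            raise TypeError(f"cannot apply {show(fun_ty)} to {show(arg_ty)}")
        transcript.append(f"->E : {show(fun_ty[2])}")
        return fun_ty[2]
    raise ValueError(f"unknown term {term!r}")


# The K combinator  \x:A. \y:B. x  has type A -> (B -> A).
k_combinator = ("lam", "x", "A", ("lam", "y", "B", ("var", "x")))
steps = []
print(show(infer(k_combinator, {}, steps)))
print("\n".join(steps))
```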

In its profound form, developmental interpretability aims to understand the underlying "program" of a trained neural network as some combination of this phase transition transcript (the form) together with learned knowledge that is less universal and more perceptual (the content).

Reasons it won't work

This is a nice story involving some pretty math, and ending with not all humans dying. Hooray. But is it True? The simplest ways we can think of for the research agenda to fail are listed below, in a list we refer to as the "Axes of Failure":

- Too infrequent: it turns out that only a small fraction of the important structures in trained neural networks form in phase transitions (e.g., large-scale structure like induction heads forms in phase transitions, but almost everything else is acquired gradually with no particular discrete marker of the change).

- Too frequent: it turns out that phase transitions occur almost constantly in some subset of the weights, and there's no effective way to triage them. We therefore don't gain any useful reduction in complexity by looking at phase transitions.

- Too large: it turns out that many transitions are irreducibly complex and involve most of the model, so we're back to square one and have to reinterpret the whole network every time.

- Too isolated: many important structures form in phase transitions, but these structures are "isolated" from each other and there is no meaningful way to integrate them in order to achieve a quantitative understanding of the final model.

This document is not the place for details, but we have varying degrees of confidence about each of these "axes" based on the existing empirical and theoretical literature. As an example, against the possibility of transitions being "too large", there's substantial evidence for something like locality in deep learning. Li et al. (2021) find that models can reach excellent performance even when learning is restricted to an extremely low-dimensional subspace. More generally, Gur-Ari et al. (2018) show that classifiers with k categories tend to learn in a slowly-evolving k-dimensional subspace. The success of pruning (and the lottery ticket hypothesis) points to a similar claim about locality, as do the results of Panigrahi et al. (2023) in the context of fine-tuning.
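To illustrate what training "restricted to a low-dimensional subspace" means, here is a linear toy in the spirit of those experiments (not a reproduction of them): fix a random initialization θ0 and a random D × d projection P, and optimize only the d coordinates z in θ = θ0 + Pz. All sizes are made-up assumptions. In this overparameterized least-squares problem any random subspace with d ≥ n (the number of samples) already fits the data exactly while d is far smaller than D; the cited results concern deep networks, where the effect is much less trivial.

```python
import numpy as np


def fit_in_random_subspace(X, y, d, seed=0):
    """Fit a linear model theta = theta0 + P @ z, optimising only the d
    coordinates z of a fixed random subspace (P is D x d); return the
    training mean squared error."""
    rng = np.random.default_rng(seed)
    D = X.shape[1]
    theta0 = rng.normal(size=D)               # fixed random initialisation
    P = rng.normal(size=(D, d)) / np.sqrt(D)  # fixed random projection
    # Least squares in z:  min_z || X (theta0 + P z) - y ||^2
    z, *_ = np.linalg.lstsq(X @ P, y - X @ theta0, rcond=None)
    residual = X @ (theta0 + P @ z) - y
    return float(np.mean(residual**2))


rng = np.random.default_rng(1)
n_samples, D = 20, 500  # heavily overparameterised toy problem
X = rng.normal(size=(n_samples, D))
y = rng.normal(size=n_samples)
for d in (5, 10, 20, 40):
    print(f"d = {d:>2}: train MSE = {fit_in_random_subspace(X, y, d):.3g}")
```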

The Plan

The high-level near-term plan (as of July 2023) for developmental interpretability:

- Phase 1: sanity checks (six months). Assemble a library of examples of phase transitions over training, and analyze each of them with our existing tools to validate the key ideas.

- Phase 2: build new tools. Jointly develop theoretical and experimental measures that give more refined information about structure formed in phase transitions.

More detailed plans for Phase 1:

- Complete the analysis of phase transitions and associated structure formation in the Toy Models of Superposition, validating the ideas in this case (preliminary work reported in SLT High 4).

- Perform a similar analysis for the Induction Heads paper.

- In a range of examples across multiple modalities (e.g., from vision to code) in which we know the final trained network to contain structure (e.g., circuits), perform an analysis over training to:

  - detect phase transitions (using a range of metrics, including train and test losses, RLCT and singular fluctuation) and create checkpoints,

  - attempt to classify weights at each transition into state variables, control variables, and irrelevant variables,

  - perform mechanistic interpretability at checkpoints, and

  - compare this analysis to the structures found at the end of training.
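A minimal sketch of the "detect phase transitions" step, assuming a scalar metric logged at each checkpoint: flag checkpoints where the metric's rate of change is an outlier under a robust z-score (median and median absolute deviation). The synthetic loss curve, checkpoint spacing and threshold are made-up assumptions; in practice the metric could be a train or test loss or an RLCT estimate per checkpoint, and a flagged interval would then be examined with finer checkpoints.

```python
import numpy as np


def flag_transitions(steps, metric, z_thresh=5.0):
    """Return the checkpoint steps at which the metric's rate of change is
    anomalously large relative to its typical rate (robust z-score)."""
    steps = np.asarray(steps, dtype=float)
    rate = np.diff(np.asarray(metric, dtype=float)) / np.diff(steps)
    median = np.median(rate)
    mad = np.median(np.abs(rate - median)) + 1e-12       # avoid division by zero
    robust_z = np.abs(rate - median) / (1.4826 * mad)    # ~ standard z for Gaussian noise
    return steps[1:][robust_z > z_thresh]                # right end of each flagged interval


# Synthetic loss curve (made up): slow drift, a sharp drop near step 5000, noise.
rng = np.random.default_rng(0)
steps = np.arange(0, 10_000, 100)
loss = (2.0 - 2e-5 * steps
        - 1.5 / (1.0 + np.exp(-(steps - 5_000) / 100))
        + 0.005 * rng.normal(size=steps.size))
print(flag_transitions(steps, loss))
```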

The units of work here are papers, submitted either to ML conferences or academic journals. At the end of this period we should have a clear idea of whether developmental interpretability has legs.

Learn more. If you're interested in learning more, the best place to start is the recent Singular Learning Theory & Alignment Summit. We recorded over 20 hours of lectures on the necessary background material and will soon publish extended lecture notes. For more background on singular learning theory, see the recent sequence Distilling Singular Learning Theory; the SLT perspective on phase transitions is introduced in DSLT4.

Get involved. If you want to stay up-to-date on the research progress, want to participate in a follow-up summit (to be organized in November 2023), or want to contribute to one of the projects we're running, check out the Discord.

What's next? As mentioned, we expect to have a much better idea of the viability of devinterp in the next half year, at which point we'll publish an extended update. In the nearer term, you can expect a post on Open Problems, an FAQ, blog-style distillations of the material presented in the Primer, and regular research updates.

EDIT: See our update here.

[1] The basic idea of studying the development of structure over the course of training is not new. Naomi Saphra proposes much the same in her post, Interpretability Creationism. Teehan et al. (2022) make the call to arms explicit:

"We note in particular the lack of sufficient research on the emergence of functional units ... within large language models, and motivate future work that grounds the study of language models in an analysis of their changing internal structure during training time."

[2] We are particularly interested in borrowing experimental methodologies from solid state physics, neuroscience and biology.

[3] The subject owes an intellectual debt to René Thom, who in his book "Structural Stability and Morphogenesis" presents an inspiring (if controversial) research program that we expect to inform developmental interpretability.

10 comments

comment by Vanessa Kosoy (vanessa-kosoy) · 2024-12-19T16:55:21.384Z

This post introduces Timaeus' "Developmental Interpretability" research agenda. The latter is IMO one of the most interesting extant AI alignment research agendas.

The reason DevInterp is interesting is that it is one of the few AI alignment research agendas that is trying to understand deep learning "head on", while wielding a powerful mathematical tool that seems potentially suitable for the purpose (namely, Singular Learning Theory). Relatedly, it is one of the few agendas that maintains a strong balance of theoretical and empirical research. As such, it might also grow to be a bridge between theoretical and empirical research agendas more broadly (e.g. it might be synergistic with the LTA).

I also want to point out a few potential weaknesses or (minor) reservations I have:

First, DevInterp places phase transitions as its central object of study. While I agree that phase transitions seem interesting, possibly crucial to understand, I'm not convinced that a broader view wouldn't be better.

Singular Learning Theory (SLT) has the potential to explain generalization in deep learning, phase transitions or no. This in itself seems to be important enough to deserve center stage. Understanding generalization is crucial, because:

- We want our alignment protocols to generalize correctly, given the available data, compute and other circumstances, and we need to understand what conditions would guarantee it (or at least prohibit catastrophic generalization failures).

- If the resulting theory of generalization is in some sense universal, then it might be applicable to specifying a procedure for inferring human values (as human behavior is generated from human values by a learning algorithm with similar generalization properties), or at least formalizing "human values" well enough for theoretical analysis of alignment.

Hence, compared to the OP, I would put more emphasis on these latter points.

Second, the OP does mention the difference between phase transitions during Stochastic Gradient Descent (SGD) and the phase transitions of Singular Learning Theory, but this deserves a closer look. SLT has IMO two key missing pieces:

- The first piece is the relation between ideal Bayesian inference (the subject of SLT) and SGD. Ideal Bayesian inference is known to be computationally intractable. Maybe there is an extension of SLT that replaces Bayesian inference with either SGD or a different tractable algorithm. For example, it could be some Markov Chain Monte Carlo (MCMC) that converges to Bayesian inference in the limit. Maybe there is a natural geometric invariant that controls the MCMC relaxation time, similarly to how the log canonical threshold controls sample complexity.

- The second missing piece is understanding the special properties of ANN architectures compared to arbitrary singular hypothesis classes. For example, maybe there is some universality property which explains why e.g. transformers (or something similar) are qualitatively "as good as it gets". Alternatively, it could be a relation between the log canonical threshold of specific ANN architectures and other simplicity measures which can be justified on other philosophical grounds.

That said, if the above missing pieces were found, SLT would become straightforwardly the theory for understanding deep learning and maybe learning in general.

comment by johnswentworth · 2023-07-23T19:13:51.652Z

We don't believe that all knowledge and computation in a trained neural network emerges in phase transitions, but our working hypothesis is that enough emerges this way to make phase transitions a valid organizing principle for interpretability.

I think this undersells the case for focusing on phase transitions.

Hand-wavy version of a stronger case: within a phase (i.e. when there's not a phase change), things change continuously/slowly. Anyone watching from outside can see what's going on, and has plenty of heads-up, plenty of opportunity to extrapolate where behavior is headed. That makes safety problems a lot easier. Phase transitions are exactly the points where that breaks down: changes are sudden, extrapolation fails rapidly. So, phase transitions are exactly the points which are strategically crucial to detect, for safety purposes.

comment by Bogdan Ionut Cirstea (bogdan-ionut-cirstea) · 2023-07-12T21:08:10.814Z

From a (somewhat) related proposal (from footnote 1): 'My proposal is simple. Are you developing a method of interpretation or analyzing some property of a trained model? Don’t just look at the final checkpoint in training. Apply that analysis to several intermediate checkpoints. If you are finetuning a model, check several points both early and late in training. If you are analyzing a language model, MultiBERTs, Pythia, and Mistral provide intermediate checkpoints sampled from throughout training on masked and autoregressive language models, respectively. Does the behavior that you’ve analyzed change over the course of training? Does your belief about the model’s strategy actually make sense after observing what happens early in training? There’s very little overhead to an experiment like this, and you never know what you’ll find!'

They also interpret their work on mode connectivity (twitter thread) as an example of this (developmental) interpretability approach.

reply by Jesse Hoogland (jhoogland) · 2023-07-12T21:16:59.106Z

Yes (see footnote 1)! The main place where devinterp diverges from Naomi's proposal is the emphasis on phase transitions as described by SLT. During the first phase of the plan, simply studying how behaviors develop over different checkpoints is one of the main things we'll be doing to establish whether these transitions exist in the way we expect.

comment by Clément Dumas (butanium) · 2024-05-14T17:53:53.709Z

As explained by Sumio Watanabe (

This link is rotten; maybe link to his personal page instead?

https://sites.google.com/view/sumiowatanabe/home

comment by Mikhail Samin (mikhail-samin) · 2023-07-14T20:13:21.078Z

Seems good to ask this publicly as well:

Do you expect to be able to discover conditions that later lead to phase transitions before the transitions happen? E.g., in grokking, do you expect to be able to look at a neural network and see the algorithm that it will “grok” during a future phase transition?

(And if you do, do you think it won’t help advance capabilities?)

[Since the mech interp of grokking results, I’ve had a speculative intuition that in the high-dimensional space of an LLM, the gradient partially points towards some algorithms that start being implemented in superposition but don’t carry much weight until they’re close enough to the correct algorithm that’s being slowly implemented for the further tuning of the weights to produce a meaningful boost in performance, which leads to a rapid change, where the algorithm gets fully implemented and at the same time its outputs start playing a role. If you can only see the transition when the algorithm is already implemented accurately enough for further adjustments to improve performance, you miss most of the subtle process of the algorithm implementation.]

reply by Daniel Murfet (dmurfet) · 2023-07-15T05:29:37.324Z

That intuition sounds reasonable to me, but I don't have strong opinions about it.

One thing to note is that training and test performance are lagging indicators of phase transitions. In our limited experience so far, measures such as the RLCT do seem to indicate that a transition is underway earlier (e.g. in Toy Models of Superposition), but in the scenario you describe I don't know if it's early enough to detect structure formation "when it starts".

For what it's worth my guess is that the information you need to understand the structure is present at the transition itself, and you don't need to "rewind" SGD to examine the structure forming one step at a time.

comment by Review Bot · 2024-02-29T00:41:41.156Z

The LessWrong Review runs every year to select the posts that have most stood the test of time. This post is not yet eligible for review, but will be at the end of 2024. The top fifty or so posts are featured prominently on the site throughout the year.

Hopefully, the review is better than karma at judging enduring value. If we have accurate prediction markets on the review results, maybe we can have better incentives on LessWrong today. Will this post make the top fifty?

comment by DU YUANQI (du-yuanqi) · 2023-11-03T14:01:44.223Z

Hey, thanks for posting the nice material, but it seems the Discord link has expired. Would you mind updating the invitation link?

reply by Jesse Hoogland (jhoogland) · 2023-11-04T08:54:37.512Z

Should be fixed now, thank you!