Posts

Comments

See here and here for my attempts to do this a few years ago! Our project (which we called Pact) ultimately died, mostly because it was no one's first priority to make it happen. About once a year I get contacted by some person or group who's trying to do the same thing, asking about the lessons we learned.

I think it's a great idea -- at least in theory -- and I wish them the best of luck!

(For anyone who's inclined toward mechanism design and is interested in some of my thoughts around incentives for donors on such a platform, I wrote about that on my blog five years ago.)

Any chance we could get Ghibli Mode back? I miss my little blue monster :(

Ohh I see. Do you have a suggested rephrasing?

Empirically, the "nerd-crack explanation" seems to have been (partially) correct, see here.

Oh, I don't think it was at all morally bad for Polymarket to make this market -- just not strategic, from the standpoint of having people take them seriously.

Top Manifold user Semiotic Rivalry said on Twitter that he knows the top Yes holders, that they are very smart, and that the Time Value of Money hypothesis is part of (but not the whole) story. The other part has to do with how Polymarket structures rewards for traders who provide liquidity.

Yeah, I think the time value of Polymarket cash doesn't track the time value of money in the global economy especially closely:

If Polymarket cash were completely fungible with regular cash, you'd expect the Jesus market to reflect the overall interest rate of the economy. In practice, though, getting money into Polymarket is kind of annoying (you need crypto) and illegal for Americans. Plus, it takes a few days, and trade opportunities often evaporate in a matter of minutes or hours! And that's not to mention the regulatory uncertainty: maybe the US government will freeze Polymarket's assets and traders won't be able to get their money out?

And so it's not unreasonable to have opinions on the future time value of Polymarket cash that differs substantially from your opinions on the future time value of money.

Yeah, honestly I have no idea why Polymarket created this question.

Do you think that these drugs significantly help with alcoholism (as one might posit if the drugs help significantly with willpower)? If so, I'm curious what you make of this Dynomight post arguing that so far the results don't look promising.

I think that large portions of the AI safety community act this way. This includes most people working on scalable alignment, interp, and deception.

Are you sure? For example, I work on technical AI safety because it's my comparative advantage, but agree at a high level with your view of the AI safety problem, and almost all of my donations are directed at making AI governance go well. My (not very confident) impression is that most of the people working on technical AI safety (at least in Berkeley/SF) are in a similar place.

We are interested in natural distributions over reversible circuits (see e.g. footnote 3), where we believe that circuits that satisfy P are exceptionally rare (probably exponentially rare).

Probably don't update on this too much, but when I hear "Berkeley Genomics Project", it sounds to me like a project that's affiliated with UC Berkeley (which it seems like you guys are not). Might be worth keeping in mind, in that some people might be misled by the name.

Echoing Jacob, yeah, thanks for writing this!

Since there are only exponentially many circuits, having the time-complexity of the verifier grow only linearly with would mean that you could get a verifier that never makes mistakes. So (if I'm not mistaken) if you're right about that, then the stronger version of reduction-regularity would imply that our conjecture is equivalent to NP = coNP.

I haven't thought enough about the reduction-regularity assumption to have a take on its plausibility, but based on my intuition about our no-coincidence principle, I think it's pretty unlikely to be equivalent to NP = coNP in a way that's easy-ish to show.

That's an interesting point! I think it only applies to constructive proofs, though: you could imagine disproving the counterexample by showing that for every V, there is some circuit that satisfies P(C) but that V doesn't flag, without exhibiting a particular such circuit.

Do you have a link/citation for this quote? I couldn't immediately find it.

We've done some experiments with small reversible circuits. Empirically, a small circuit generated in the way you suggest has very obvious structure that makes it satisfy P (i.e. it is immediately evident from looking at the circuit that P holds).

This leaves open the question of whether this is true as the circuits get large. Our reasons for believing this are mostly based on the same "no-coincidence" intuition highlighted by Gowers: a naive heuristic estimate suggests that if there is no special structure in the circuit, the probability that it would satisfy P is doubly exponentially small. So probably if C does satisfy P, it's because of some special structure.

Is this a correct rephrasing of your question?

It seems like a full explanation of a neural network's low loss on the training set needs to rely on lots of pieces of knowledge that it learns from the training set (e.g. "Barack" is usually followed by "Obama"). How do random "empirical regularities" about the training set like this one fit into the explanation of the neural net?

Our current best guess about what an explanation looks like is something like modeling the distribution of neural activations. Such an activation model would end up having baked-in empirical regularities, like the fact that "Barack" is usually followed by "Obama". So in other words, just as the neural net learned this empirical regularity of the training set, our explanation will also learn the empirical regularity, and that will be part of the explanation of the neural net's low loss.

(There's a lot more to be said here, and our picture of this isn't fully fleshed out: there are some follow-up questions you might ask to which I would answer "I don't know". I'm also not sure I understood your question correctly.)

Yeah, I did a CS PhD in Columbia's theory group and have talked about this conjecture with a few TCS professors.

My guess is that P is true for an exponentially small fraction of circuits. You could plausibly prove this with combinatorics (given that e.g. the first layer randomly puts inputs into gates, which means you could try to reason about the class of circuits that are the same except that the inputs are randomly permuted before being run through the circuit). I haven't gone through this math, though.

Thanks, this is a good question.

My suspicion is that we could replace "99%" with "all but exponentially small probability in ". I also suspect that you could replace it with , with the stipulation that the length of (or the running time of V) will depend on . But I'm not exactly sure how I expect it to depend on -- for instance, it might be exponential in .

My basic intuition is that the closer you make 99% to 1, the smaller the number of circuits that V is allowed to say "look non-random" (i.e. are flagged for some advice ). And so V is forced to do more thorough checks ("is it actually non-random in the sort of way that could lead to P being true?") before outputting 1.

99% is just a kind-of lazy way to sidestep all of these considerations and state a conjecture that's "spicy" (many theoretical computer scientists think our conjecture is false) without claiming too much / getting bogged down in the details of how the "all but a small fraction of circuits" thing depends on or the length of or the runtime of V.

I think this isn't the sort of post that ages well or poorly, because it isn't topical, but I think this post turned out pretty well. It gradually builds from preliminaries that most readers have probably seen before, into some pretty counterintuitive facts that aren't widely appreciated.

At the end of the post, I listed three questions and wrote that I hope to write about some of them soon. I never did, so I figured I'd use this review to briefly give my takes.

- This comment from Fabien Roger tests some of my modeling choices for robustness, and finds that the surprising results of Part IV hold up when the noise is heavier-tailed than the signal. (I'm sure there's more to be said here, but I probably don't have time to do more analysis by the end of the review period.,)

- My basic take is that this really is a point in favor of well-evidenced interventions, but that the best-looking speculative interventions are nevertheless better. This is because I think "speculative" here mostly refers to partial measurement rather than noisy measurement. For example, maybe you can only foresee the first-order effects of an intervention, but not the second-order effects. If the first-order effect is a (known) quantity and the second-order effect is an (unknown) quantity , then modeling the second-order effect as zero (and thus estimating the quality of the intervention as ) isn't a noisy measurement; it's a partial measurement. It's still your best guess given the information you have.

- I haven't thought this through very much. I expect good counter-arguments and counter-counter-arguments to exist here.

-

- No -- or rather, only if the measurement is guaranteed to be exactly correct. To see this, observe that the variance of a noisy, unbiased measurement is greater than the variance of the quantity you're trying to measure (with equality only when the noise is zero), whereas the variance of a noiseless, partial measurement is less than the variance of the quantity you're trying to measure.

- Real-world measurements are absolutely partial. They are, like, mind-bogglingly partial. This point deserves a separate post, but consider for instance the action of donating $5,000 to the Against Malaria Foundation. Maybe your measured effect from the RCT is that it'll save one life: 50 QALYs or so. But this measurement neglects the meat-eating problem: the expected-child you'll save will grow up to eat expected-meat from factory farms, likely causing a great amount of suffering. But then you remember: actually there's a chance that this child will have a one eight-billionth stake in determining the future of the lightcone. Oops, actually this consideration totally dominates the previous two. Does this child have better values than the average human? Again: mind-bogglingly partial!

(The measurements are also, of course, noisy! RCTs are probably about as un-noisy as it gets: for example, making your best guess about the quality of an intervention by drawing inferences from uncontrolled macroeconomic data is much more noisy. So the answer is: generally both noisy and partial, but in some sense, much more partial than noisy -- though I'm not sure how much that comparison matters.) - The lessons of this post do not generalize to partial measurements at all! This post is entirely about noisy measurements. If you've partially measured the quality of an intervention, estimating the un-measured part using your prior will give you an estimate of intervention quality that you know is probably wrong, but the expected value of your error is zero.

Thanks for writing this. I think this topic is generally a blind spot for LessWrong users, and it's kind of embarrassing how little thought this community (myself included) has given to the question of whether a typical future with human control over AI is good.

(This actually slightly broadens the question, compared to yours. Because you talk about "a human" taking over the world with AGI, and make guesses about the personality of such a human after conditioning on them deciding to do that. But I'm not even confident that AGI-enabled control of the world by e.g. the US government would be good.)

Concretely, I think that a common perspective people take is: "What would it take for the future to go really really well, by my lights", and the answer to that question probably involves human control of AGI. But that's not really the action-relevant question. The action-relevant question, for deciding whether you want to try to solve alignment, is how the average world with human-controlled AGI compares to the average AGI-controlled world. And... I don't know, in part for the reasons you suggest.

Cool, you've convinced me, thanks.

Edit: well, sort of. I think it depends on what information you're allowing yourself to know when building your statistical model. If you're not letting yourself make guesses about how the LW population was selected, then I still think the SAT thing and the height thing are reasonable. However, if you're actually trying to figure out an estimate of the right answer, you probably shouldn't blind yourself quite that much.

These both seem valid to me! Now, if you have multiple predictors (like SAT and height), then things get messy because you have to consider their covariance and stuff.

Yup, I think that only about 10-15% of LWers would get this question right.

Yeah, I wonder if Zvi used the wrong model (the non-thinking one)? It's specifically the "thinking" model that gets the question right.

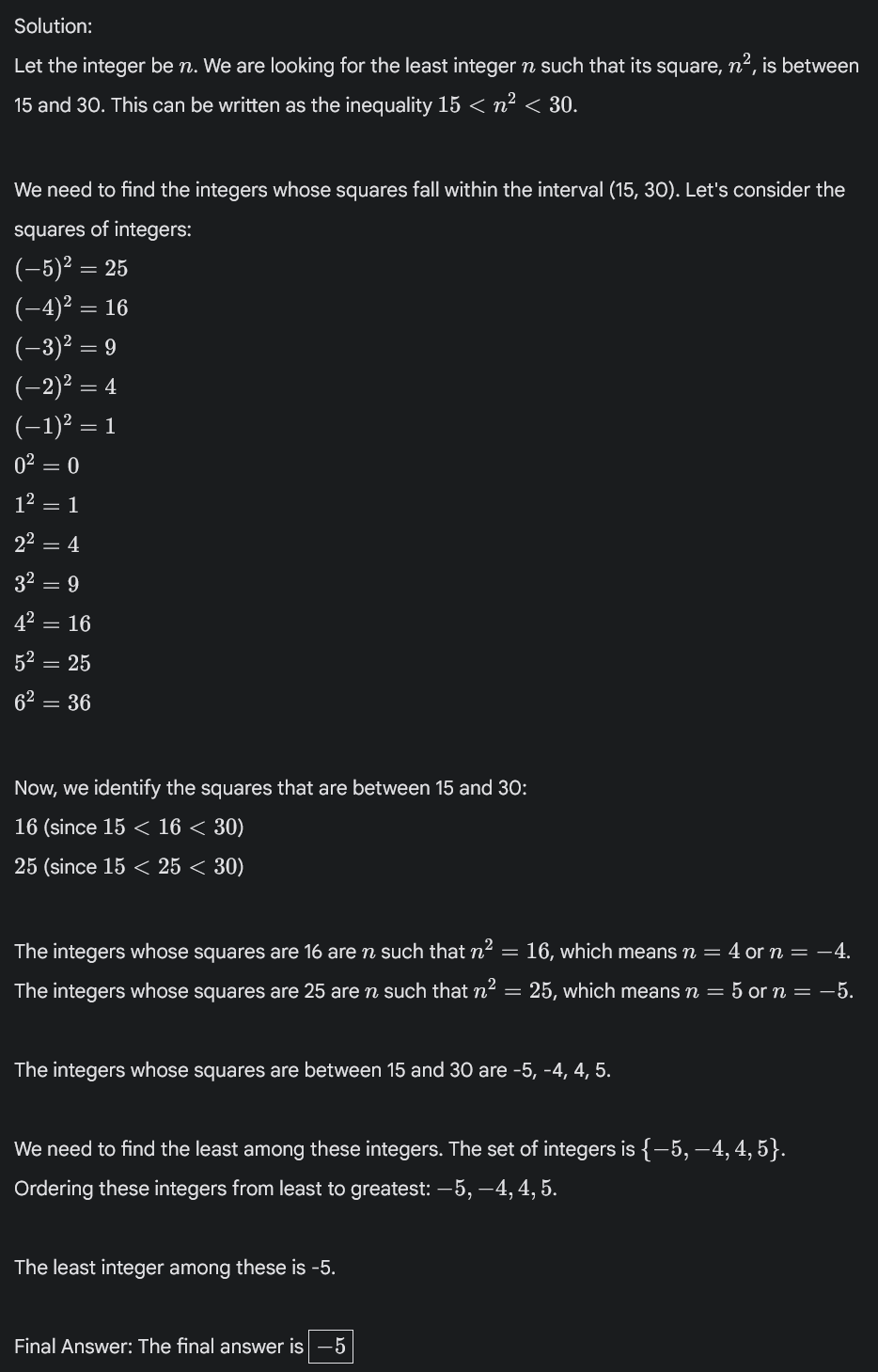

Just a few quick comments about my "integer whose square is between 15 and 30" question (search for my name in Zvi's post to find his discussion):

- The phrasing of the question I now prefer is "What is the least integer whose square is between 15 and 30", because that makes it unambiguous that the answer is -5 rather than 4. (This is a normal use of the word "least", e.g. in competition math, that the model is familiar with.) This avoids ambiguity about which of -5 and 4 is "smaller", since -5 is less but 4 is smaller in magnitude.

- This Gemini model answers -5 to both phrasings. As far as I know, no previous model ever said -5 regardless of phrasing, although someone said o1 Pro gets -5. (I don't have a subscription to o1 Pro, so I can't independently check.)

- I'm fairly confident that a majority of elite math competitors (top 500 in the US, say) would get this question right in a math competition (although maybe not in a casual setting where they aren't on their toes).

- But also this is a silly, low-quality question that wouldn't appear in a math competition.

- Does a model getting this question right say anything interesting about it? I think a little. There's a certain skill of being careful to not make assumptions (e.g. that the integer is positive). Math competitors get better at this skill over time. It's not that straightforward to learn.

- I'm a little confused about why Zvi says that the model gets it right in the screenshot, given that the model's final answer is 4. But it seems like the model snatched defeat from the jaws of victory? Like if you cut off the very last sentence, I would call it correct.

- Here's the output I get:

Thank you for making this! My favorite ones are 4, 5, and 12. (Mentioning this in case anyone wants to listen to a few songs but not the full Solstice.)

Yes, very popular in these circles! At the Bay Area Secular Solstice, the Bayesian Choir (the rationalist community's choir) performed Level Up in 2023 and Landsailor this year.

My Spotify Wrapped

Yeah, I agree that that could work. I (weakly) conjecture that they would get better results by doing something more like the thing I described, though.

My random guess is:

- The dark blue bar corresponds to the testing conditions under which the previous SOTA was 2%.

- The light blue bar doesn't cheat (e.g. doesn't let the model run many times and then see if it gets it right on any one of those times) but spends more compute than one would realistically spend (e.g. more than how much you could pay a mathematician to solve the problem), perhaps by running the model 100 to 1000 times and then having the model look at all the runs and try to figure out which run had the most compelling-seeming reasoning.

What's your guess about the percentage of NeurIPS attendees from anglophone countries who could tell you what AGI stands for?

I just donated $5k (through Manifund). Lighthaven has provided a lot of value to me personally, and more generally it seems like a quite good use of money in terms of getting people together to discuss the most important ideas.

More generally, I was pretty disappointed when Good Ventures decided not to fund what I consider to be some of the most effective spaces, such as AI moral patienthood and anything associated with the rationalist community. This has created a funding gap that I'm pretty excited about filling. (See also: Eli's comment.)

Consider pinning this post. I think you should!

It took until I was today years old to realize that reading a book and watching a movie are visually similar experiences for some people!

Let's test this! I made a Twitter poll.

Oh, that's a good point. Here's a freehand map of the US I drew last year (just the borders, not the outline). I feel like I must have been using my mind's eye to draw it.

I think very few people have a very high-fidelity mind's eye. I think the reason that I can't draw a bicycle is that my mind's eye isn't powerful/detailed enough to be able to correctly picture a bicycle. But there's definitely a sense in which I can "picture" a bicycle, and the picture is engaging something sort of like my ability to see things, rather than just being an abstract representation of a bicycle.

(But like, it's not quite literally a picture, in that I'm not, like, hallucinating a bicycle. Like it's not literally in my field of vision.)

Huh! For me, physical and emotional pain are two super different clusters of qualia.

My understanding of Sarah's comment was that the feeling is literally pain. At least for me, the cringe feeling doesn't literally hurt.

I don't really know, sorry. My memory is that 2023 already pretty bad for incumbent parties (e.g. the right-wing ruling party in Poland lost power), but I'm not sure.

Fair enough, I guess? For context, I wrote this for my own blog and then decided I might as well cross-post to LW. In doing so, I actually softened the language of that section a little bit. But maybe I should've softened it more, I'm not sure.

[Edit: in response to your comment, I've further softened the language.]

Yeah, if you were to use the neighbor method, the correct way to do so would involve post-processing, like you said. My guess, though, is that you would get essentially no value from it even if you did that, and that the information you get from normal polls would prrtty much screen off any information you'd get from the neighbor method.

I think this just comes down to me having a narrower definition of a city.

If you ask people who their neighbors are voting for, they will make their best guess about who their neighbors are voting for. Occasionally their best guess will be to assume that their neighbors will vote the same way that they're voting, but usually not. Trump voters in blue areas will mostly answer "Harris" to this question, and Harris voters in red areas will mostly answer "Trump".

Ah, I think I see. Would it be fair to rephrase your question as: if we "re-rolled the dice" a week before the election, how likely was Trump to win?

My answer is probably between 90% and 95%. Basically the way Trump loses is to lose some of his supporters or have way more late deciders decide on Harris. That probably happens if Trump says something egregiously stupid or offensive (on the level of the Access Hollywood tape), or if some really bad news story about him comes out, but not otherwise.

It's a little hard to know what you mean by that. Do you mean something like: given the information known at the time, but allowing myself the hindsight of noticing facts about that information that I may have missed, what should I have thought the probability was?

If so, I think my answer isn't too different from what I believed before the election (essentially 50/50). Though I welcome takes to the contrary.

I'm not sure (see footnote 7), but I think it's quite likely, basically because:

- It's a simpler explanation than the one you give (so the bar for evidence should probably be lower).

- We know from polling data that Hispanic voters -- who are disproportionately foreign-born -- shifted a lot toward Trump.

- The biggest shifts happened in places like Queens, NY, which has many immigrants but (I think?) not very much anti-immigrant sentiment.

That said, I'm not that confident and I wouldn't be shocked if your explanation is correct. Here are some thoughts on how you could try to differentiate between them:

- You could look on the precinct-level rather than the county-level. Some precincts will be very high-% foreign-born (above 50%). If those precincts shifted more than surrounding precincts, that would be evidence in favor of my hypothesis. If they shifted less, that would be evidence in favor of yours.

- If someone did a poll with the questions "How did you vote in 2020", "How did you vote in 2024", and "Were you born in the U.S.", that could more directly answer the question.

An interesting thing about this proposal is that it would make every state besides CA, TX, OK, and LA pretty much irrelevant for the outcome of the presidential election. E.g. in this election, whichever candidate won CATXOKLA would have enough electoral votes to win the election, even if the other candidate won every swing state.

...which of course would be unfair to the non-CATXOKLA states, but like, not any more unfair than the current system?