leogao's Shortform

post by leogao · 2022-05-24T20:08:32.928Z · LW · GW · 313 comments

comment by leogao · 2024-10-14T20:48:11.378Z · LW(p) · GW(p)

it's surprising just how much of cutting edge research (at least in ML) is dealing with really annoying and stupid bottlenecks. pesky details that seem like they shouldn't need attention. tools that in a good and just world would simply not break all the time.

i used to assume this was merely because i was inexperienced, and that surely eventually you learn to fix all the stupid problems, and then afterwards you can just spend all your time doing actual real research without constantly needing to context switch to fix stupid things.

however, i've started to think that as long as you're pushing yourself to do novel, cutting edge research (as opposed to carving out a niche and churning out formulaic papers), you will always spend most of your time fixing random stupid things. as you get more experienced, you get bigger things done faster, but the amount of stupidity is conserved. as they say in running: it doesn't get easier, you just get faster.

as a beginner, you might spend a large part of your research time trying to install CUDA or fighting with python threading. as an experienced researcher, you might spend that time instead diving deep into some complicated distributed training code to fix a deadlock or debugging where some numerical issue is causing a NaN halfway through training.

i think this is important to recognize because you're much more likely to resolve these issues if you approach them with the right mindset. when you think of something as a core part of your job, you're more likely to engage your problem solving skills fully to try and find a resolution. on the other hand, if something feels like a brief intrusion into your job, you're more likely to just hit it with a wrench until the problem goes away so you can actually focus on your job.

in ML research the hit it with a wrench strategy is the classic "google the error message and then run whatever command comes up" loop. to be clear, this is not a bad strategy when deployed properly - this is often the best first thing to try when something breaks, because you don't have to do a big context switch and lose focus on whatever you were doing before. but it's easy to end up trapped in this loop for too long. at some point you should switch modes to actively understanding and debugging the code, which is easier to do if you think of your job as mostly being about actively understanding and debugging code.

earlier in my research career i would feel terrible about having spent so much time doing things that were not the "actual" research, which would make me even more likely to just hit things with a wrench, which actually did make me less effective overall. i think shifting my mindset since then has helped me a lot

↑ comment by Carl Feynman (carl-feynman) · 2024-10-16T15:28:57.232Z · LW(p) · GW(p)

Not only is this true in AI research, it’s true in all science and engineering research. You’re always up against the edge of technology, or it’s not research. And at the edge, you have to use lots of stuff just behind the edge. And one characteristic of stuff just behind the edge is that it doesn’t work without fiddling. And you have to build lots of tools that have little original content, but are needed to manipulate the thing you’re trying to build.

After decades of experience, I would say: any sensible researcher spends a substantial fraction of time trying to get stuff to work, or building prerequisites.

This is for engineering and science research. Maybe you’re doing mathematical or philosophical research; I don’t know what those are like.

↑ comment by Alexander Gietelink Oldenziel (alexander-gietelink-oldenziel) · 2024-10-17T12:08:49.634Z · LW(p) · GW(p)

I can emphatically say this is not the case in mathematics research.

↑ comment by leogao · 2024-10-14T20:55:01.855Z · LW(p) · GW(p)

a corollary is i think even once AI can automate the "google for the error and whack it until it works" loop, this is probably still quite far off from being able to fully automate frontier ML research, though it certainly will make research more pleasant

↑ comment by Nathan Helm-Burger (nathan-helm-burger) · 2024-10-15T18:07:20.763Z · LW(p) · GW(p)

I agree if I specify 'quite far off in ability-space', while acknowledging that I think this may not be 'quite far off in clock-time'. Sometimes the difference between no skill at a task and very little skill is a larger time and effort gap than the difference between very little skill and substantial skill.

↑ comment by jacquesthibs (jacques-thibodeau) · 2024-10-15T16:28:17.736Z · LW(p) · GW(p)

Completely agree. I remember a big shift in my performance when I went from "I'm just using programming so that I can eventually build a startup, where I'll eventually code much less" to "I am a programmer, and I am trying to become exceptional at it." The shift in mindset was super helpful.

↑ comment by Noosphere89 (sharmake-farah) · 2024-10-17T15:45:07.326Z · LW(p) · GW(p)

More and more, I'm coming to the belief that one big flaw of basically everyone is not realizing how much you need to deal with annoying, pesky, stupid details to do good research, and I believe this dictum also applies to alignment research.

There is thankfully more engineering/ML experience on LW, which partially alleviates the issue, but not realizing that pesky details matter a lot in research/engineering is still a problem that basically no one particularly wants to deal with.

↑ comment by Viliam · 2024-10-15T07:53:07.157Z · LW(p) · GW(p)

I would hope for some division of labor. There are certainly people out there who can't do ML research, but can fix Python code.

But I guess, even if you had the Python guy and the budget to pay him, waiting until he fixes the bug would still interrupt your flow.

↑ comment by leogao · 2024-10-15T09:29:30.104Z · LW(p) · GW(p)

I think there are several reasons this division of labor is very minimal, at least in some places.

- You need way more of the ML engineering / fixing stuff skill than ML research. Like, vastly more. There are still a very small handful of people who specialize full time in thinking about research, but they are very few and often very senior. This is partly an artifact of modern ML putting way more emphasis on scale than academia.

- Communicating things between people is hard. It's actually really hard to convey all the context needed to do a task. If someone is good enough to just be told what to do without too much hassle, they're likely good enough to mostly figure out what to work on themselves.

- Convincing people to be excited about your idea is even harder. Everyone has their own pet idea, and you are the first engineer on any idea you have. If you're not a good engineer, you have a bit of a catch-22: you need promising results to get good engineers excited, but you need engineers to get results. I've heard of even very senior researchers finding it hard to get people to work on their ideas, so they just do it themselves.

↑ comment by Sheikh Abdur Raheem Ali (sheikh-abdur-raheem-ali) · 2024-10-15T11:05:07.859Z · LW(p) · GW(p)

This is encouraging to hear as someone with relatively little ML research skill in comparison to experience with engineering/fixing stuff.

↑ comment by Nathan Helm-Burger (nathan-helm-burger) · 2024-10-15T18:12:12.897Z · LW(p) · GW(p)

For sure. The more novel an idea I am trying to test, the deeper I have to go into the lower level programming stuff. I can't rely on convenient high-level abstractions if my needs are cutting across existing abstractions.

Indeed, I take it as a bad sign of the originality of my idea if it's too easy to implement in an existing high-level library, or if an LLM can code it up correctly with low-effort prompting.

comment by leogao · 2024-10-14T18:23:19.665Z · LW(p) · GW(p)

in research, if you settle into a particular niche you can churn out papers much faster, because you can develop a very streamlined process for that particular kind of paper. you have the advantage of already working baseline code, context on the field, and a knowledge of the easiest way to get enough results to have an acceptable paper.

while these efficiency benefits of staying in a certain niche are certainly real, I think a lot of people end up in this position because of academic incentives - if your career depends on publishing lots of papers, then a recipe to get lots of easy papers with low risk is great. it's also great for the careers of your students, because if you hand down your streamlined process, then they can get a phd faster and more reliably.

however, I claim that this also reduces scientific value, and especially the probability of a really big breakthrough. big scientific advances require people to do risky bets that might not work out, and often the work doesn't look quite like anything anyone has done before.

as you get closer to the frontier of things that have ever been done, the road gets tougher and tougher. you end up spending more time building basic infrastructure. you explore lots of dead ends and spend lots of time pivoting to new directions that seem more promising. you genuinely don't know when you'll have the result that you'll build your paper on top of.

so for people who are not beholden as strongly to academic incentives, it might make sense to think carefully about the tradeoff between efficiency and exploration.

(not sure I 100% endorse this, but it is a hypothesis worth considering)

↑ comment by Nathan Helm-Burger (nathan-helm-burger) · 2024-10-14T19:41:39.398Z · LW(p) · GW(p)

I think this is true, and I also think that this is an even stronger effect in wetlab fields where there is lock-in to particular tools, supplies, and methods.

This is part of my argument for why there appears to be an "innovation overhang" of underexplored regions of concept space. And, in the case of programming dependent disciplines, I expect AI coding assistance to start to eat away at the underexplored ideas, and for full AI researchers to burn through the space of implied hypotheses very fast indeed. I expect this to result in a big surge of progress once we pass that capability threshold.

↑ comment by M. Y. Zuo · 2024-10-15T18:21:41.469Z · LW(p) · GW(p)

Or perhaps on the flip side there is a ‘super genius underhang’ where there are insufficient numbers of super competent people to do that work. (Or willing to bet on their future selves being super competent.)

It makes sense for the above average, but not that much above average, researcher to choose to focus on their narrow niche, since their relative prospects are either worse or not evaluable after wading into the large ocean of possibilities.

↑ comment by Amalthea (nikolas-kuhn) · 2024-10-14T20:27:45.815Z · LW(p) · GW(p)

Or simply when scaling becomes too expensive.

↑ comment by Jacob Pfau (jacob-pfau) · 2024-10-14T22:57:38.911Z · LW(p) · GW(p)

I agree that academia over-rewards long-term specialization. On the other hand, it is compatible to also think, as I do, that EA under-rates specialization. At a community level, accumulating generalists has fast diminishing marginal returns compared to having easy access to specialists with hard-to-acquire skillsets.

↑ comment by jacquesthibs (jacques-thibodeau) · 2024-10-15T16:21:19.042Z · LW(p) · GW(p)

This is one of the reasons I think 'independent' research is valuable, even if it isn't immediately obvious from a research output (papers, for example) standpoint.

That said, I've definitely had the thought, "I should niche down into a specific area where there is already a bunch of infrastructure I can leverage and churn out papers with many collaborators because I expect to be in a more stable funding situation as an independent researcher. It would also make it much easier to pivot into a role at an organization if I want or need to. It would definitely be a much more stable situation for me." (And I also agree that specialization is often underrated.)

Ultimately, I decided not to do this because I felt like there were already enough people in alignment/governance who would take the above option due to financial and social incentives and published directions seeming more promising. However, since this makes me produce less output, I hope this is something grantmakers keep in consideration for my future grant applications.

comment by leogao · 2024-12-17T23:38:16.242Z · LW(p) · GW(p)

I decided to conduct an experiment at neurips this year: I randomly surveyed people walking around in the conference hall to ask whether they had heard of AGI

I found that out of 38 respondents, only 24 could tell me what AGI stands for (63%)

we live in a bubble

(https://x.com/nabla_theta/status/1869144832595431553)
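A quick check of the arithmetic above, plus a rough sense of how much a sample of 38 can say. This is a sketch that assumes respondents behave like independent random draws from the attendee population (the survey was informal, so this is optimistic), and it uses the Wilson score interval, a standard choice for small-sample proportions that the original post does not mention.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a binomial proportion."""
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width

# Counts reported in the post: 24 of 38 respondents knew what AGI stands for.
knew, asked = 24, 38
share = knew / asked
low, high = wilson_interval(knew, asked)
print(f"share: {share:.0%}")                     # share: 63%
print(f"95% interval: {low:.0%} to {high:.0%}")  # 95% interval: 47% to 77%
```

Under these assumptions, even the upper end of the interval sits well below the ~95% that one commenter below expected, though selection effects (who was walking the hall, who declined to answer) likely matter more than sampling noise here.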

↑ comment by Eric Neyman (UnexpectedValues) · 2024-12-18T17:36:18.816Z · LW(p) · GW(p)

What's your guess about the percentage of NeurIPS attendees from anglophone countries who could tell you what AGI stands for?

↑ comment by leogao · 2024-12-18T21:31:37.473Z · LW(p) · GW(p)

not sure, i didn't keep track of this info. an important data point is that because essentially all ML literature is in english, non-anglophones generally either use english for all technical things, or at least codeswitch english terms into their native language. for example, i'd bet almost all chinese ML researchers would be familiar with the term CNN and it would be comparatively rare for people to say 卷积神经网络. (some more common terms like 神经网络 or 模型 are used instead of their english counterparts - neural network / model - but i'd be shocked if people didn't know the english translations)

overall i'd be extremely surprised if there were a lot of people who knew conceptually the idea of AGI but didn't know that it was called AGI in english

↑ comment by Daniel Kokotajlo (daniel-kokotajlo) · 2024-12-18T17:41:51.133Z · LW(p) · GW(p)

Very interesting!

Those who couldn't tell you what AGI stands for -- what did they say? Did they just say "I don't know" or did they say e.g. "Artificial Generative Intelligence...?"

Is it possible that some of them totally HAD heard the term AGI a bunch, and basically know what it means, but are just being obstinate? I'm thinking of someone who is skeptical of all the hype and aware that lots of people define AGI differently. Such a person might respond to "Can you tell me what AGI means" with "No I can't (because it's a buzzword that means different things to different people)"

↑ comment by leogao · 2024-12-18T21:26:39.474Z · LW(p) · GW(p)

the specific thing i said to people was something like:

excuse me, can i ask you a question to help settle a bet? do you know what AGI stands for? [if they say yes] what does it stand for? [...] cool thanks for your time

i was careful not to say "what does AGI mean".

most people who didn't know just said "no" and didn't try to guess. a few said something like "artificial generative intelligence". one said "amazon general intelligence" (??). the people who answered incorrectly were obviously guessing / didn't seem very confident in the answer.

if they seemed confused by the question, i would often repeat and say something like "the acronym AGI" or something.

several people said yes but then started walking away the moment i asked what it stood for. this was kind of confusing and i didn't count those people.

↑ comment by lewis smith (lsgos) · 2024-12-19T09:24:05.757Z · LW(p) · GW(p)

not to be 'i trust my priors more than your data', but i have to say that i find the AGI thing quite implausible; my impression is that most AI researchers (way more than 60%), even ones working in like something very non-deep learning adjacent, have heard of the term AGI, but many of them are/were quite dismissive of it as an idea or associate it strongly (not entirely unfairly) with hype /bullshit, hence maybe walking away from you when you ask them about it.

e.g. deepmind and openAI have been massive producers of neurips papers for years now (at least since I started a phd in 2016), and both organisations explicitly talked about AGI fairly often for years.

maybe neurips has way more random attendees now (i didn't go this year), but I still find this kind of hard to believe; I think I've read about AGI in the financial times now.

↑ comment by leogao · 2024-12-19T17:37:16.078Z · LW(p) · GW(p)

only 2 people walked away without answering (after saying yes initially); they were not counted as yes or no. another several people refused to even answer, but this was also quite rare. the no responders seemed genuinely confused, as opposed to dismissive.

feel free to replicate this experiment at ICML or ICLR or next neurips.

↑ comment by lewis smith (lsgos) · 2024-12-22T00:42:49.418Z · LW(p) · GW(p)

i mean i think that it's definitely an update (anything short of 95% i think would have been quite surprising to me)

↑ comment by Mo Putera (Mo Nastri) · 2024-12-19T17:02:22.231Z · LW(p) · GW(p)

Why not try out leogao's survey yourself to corroborate/falsify your priors?

↑ comment by Eli Tyre (elityre) · 2024-12-20T20:04:45.466Z · LW(p) · GW(p)

Was this possibly a language thing? Are there Chinese or Indian machine learning researchers who would use a different term than AGI in their native language?

↑ comment by leogao · 2024-12-20T20:16:42.342Z · LW(p) · GW(p)

I'd be surprised if this were the case. next neurips I can survey some non native English speakers to see how many ML terms they know in English vs in their native language. I'm confident in my ability to administer this experiment on Chinese, French, and German speakers, which won't be an unbiased sample of non-native speakers, but hopefully still provides some signal.

↑ comment by Nathan Helm-Burger (nathan-helm-burger) · 2024-12-18T21:23:07.868Z · LW(p) · GW(p)

I'd be curious to hear some of the guesses people make when they say they don't know.

comment by leogao · 2025-02-05T04:19:53.987Z · LW(p) · GW(p)

when i was new to research, i wouldn't feel motivated to run any experiment that wouldn't make it into the paper. surely it's much more efficient to only run the experiments that people want to see in the paper, right?

now that i'm more experienced, i mostly think of experiments as something i do to convince myself that a claim is correct. once i get to that point, actually getting the final figures for the paper is the easy part. the hard part is finding something unobvious but true. with this mental frame, it feels very reasonable to run 20 experiments for every experiment that makes it into the paper.

↑ comment by Gunnar_Zarncke · 2025-02-05T14:06:21.750Z · LW(p) · GW(p)

What is often left out of papers is all of these experiments and the thought chains people had about them.

↑ comment by Sheikh Abdur Raheem Ali (sheikh-abdur-raheem-ali) · 2025-02-07T13:40:01.566Z · LW(p) · GW(p)

This is also because of the Jevons paradox. As the cost of doing an experiment decreases with experience, the number of experiments run tends to rise.

comment by leogao · 2024-12-07T16:14:54.082Z · LW(p) · GW(p)

it's quite plausible (40% if I had to make up a number, but I stress this is completely made up) that someday there will be an AI winter or other slowdown, and the general vibe will snap from "AGI in 3 years" to "AGI in 50 years". when this happens it will become deeply unfashionable to continue believing that AGI is probably happening soonish (10-15 years), in the same way that suggesting that there might be a winter/slowdown is unfashionable today. however, I believe in these timelines roughly because I expect the road to AGI to involve both fast periods and slow bumpy periods. so unless there is some super surprising new evidence, I will probably only update moderately on timelines if/when this winter happens

↑ comment by leogao · 2024-12-07T16:18:46.363Z · LW(p) · GW(p)

also a lot of people will suggest that alignment people are discredited because they all believed AGI was 3 years away, because surely that's the only possible thing an alignment person could have believed. I plan on pointing to this and other statements similar in vibe that I've made over the past year or two as direct counter evidence against that

(I do think a lot of people will rightly lose credibility for having very short timelines, but I think this includes a big mix of capabilities and alignment people, and I think they will probably lose more credibility than is justified because the rest of the world will overupdate on the winter)

↑ comment by Jozdien · 2024-12-07T17:43:26.728Z · LW(p) · GW(p)

My timelines are roughly 50% probability on something like transformative AI by 2030, 90% by 2045, and a long tail afterward. I don't hold this strongly either, and my views on alignment are mostly decoupled from these beliefs. But if we do get an AI winter longer than that (through means other than by government intervention, which I haven't accounted for), I should lose some Bayes points, and it seems worth saying so publicly.

↑ comment by leogao · 2024-12-08T16:33:43.856Z · LW(p) · GW(p)

to be clear, a "winter/slowdown" in my typology is more about the vibes and could only be a few years counterfactual slowdown. like the dot-com crash didn't take that long for companies like Amazon or Google to recover from, but it was still a huge vibe shift

↑ comment by leogao · 2024-12-08T15:57:13.682Z · LW(p) · GW(p)

also to further clarify this is not an update I've made recently, I'm just making this post now as a regular reminder of my beliefs because it seems good to have had records of this kind of thing (though everyone who has heard me ramble about this irl can confirm I've believed something like this for a while now)

↑ comment by the gears to ascension (lahwran) · 2024-12-08T18:30:13.548Z · LW(p) · GW(p)

I was someone who had shorter timelines. At this point, most of the concrete part of what I expected has happened, but the "actually AGI" thing hasn't. I'm not sure how long the tail will turn out to be. I only say this to get it on record.

↑ comment by TsviBT · 2024-12-07T20:16:22.396Z · LW(p) · GW(p)

If you keep updating such that you always "think AGI is <10 years away" then you will never work on things that take longer than 15 years to help. This is absolutely a mistake, and it should at least be corrected after the first round of "let's not work on things that take too long because AGI is coming in the next 10 years". I will definitely be collecting my Bayes points https://www.lesswrong.com/posts/sTDfraZab47KiRMmT/views-on-when-agi-comes-and-on-strategy-to-reduce

↑ comment by yams (william-brewer) · 2024-12-07T19:18:50.603Z · LW(p) · GW(p)

Does it seem likely to you that, conditional on ‘slow bumpy period soon’, a lot of the funding we see at frontier labs dries up (so there’s kind of a double slowdown effect of ‘the science got hard, and also now we don’t have nearly the money we had to push global infrastructure and attract top talent’), or do you expect that frontier labs will stay well funded (either by leveraging low hanging fruit in mundane utility, or because some subset of their funders are true believers, or a secret third thing)?

↑ comment by Noosphere89 (sharmake-farah) · 2024-12-07T21:47:47.271Z · LW(p) · GW(p)

My guess is that for now, I'd give around a 10-30% chance to "AI winter happens for a short period/AI progress slows down" by 2027.

Also, what would you consider super surprising new evidence?

comment by leogao · 2025-04-03T22:18:06.995Z · LW(p) · GW(p)

every 4 years, the US has the opportunity to completely pivot its entire policy stance on a dime. this is more politically costly to do if you're a long-lasting autocratic leader, because it is embarrassing to contradict your previous policies. I wonder how much of a competitive advantage this is.

↑ comment by interstice · 2025-04-04T14:19:24.957Z · LW(p) · GW(p)

Or disadvantage, because it makes it harder to make long-term plans and commitments?

↑ comment by Garrett Baker (D0TheMath) · 2025-04-04T16:59:35.938Z · LW(p) · GW(p)

Autocracies, including China, seem more likely to reconfigure their entire economic and social systems overnight than democracies like the US, so this seems false.

↑ comment by leogao · 2025-04-04T18:24:57.365Z · LW(p) · GW(p)

It's often very costly to do so - for example, ending the zero covid policy was very politically costly even though it was the right thing to do. Also, most major reconfigurations even for autocratic countries probably mostly happen right after there is a transition of power (for China, Mao is kind of an exception, but that's because he had so much power that it was impossible to challenge his authority even when he messed up).

↑ comment by Garrett Baker (D0TheMath) · 2025-04-04T19:05:39.780Z · LW(p) · GW(p)

The closing off of China after/during Tiananmen Square I don't think happened after a transition of power, though I could be misremembering. See also the one-child policy, which I also don't think happened during a power transition (allowed for 2 children in 2015, then removed all limits in 2021, while Xi came to power in 2012).

I agree the zero-covid policy change ended up being slow. I don't know why it was slow though. I know a popular narrative is that the regime didn't want to lose face, but one fact about China is that the reasons many decisions are made are highly obscured. It seems entirely possible to me there were groups (possibly consisting of Xi himself) who believed zero-covid was smart. I don't know much about this though.

I will also say this is one example of China being abnormally slow among many examples of it being abnormally fast, and I think the abnormally fast examples win out overall.

Mao is kind of an exception, but that's because he had so much power that it was impossible to challenge his authority even when he messed up

Ish? The reason he pursued the Cultural Revolution was that people were starting to question his power after the Great Leap Forward, but yeah he could be an outlier. I do think that many autocracies are governed by charismatic & powerful leaders though, so not that much of an outlier.

↑ comment by leogao · 2025-04-05T00:20:05.837Z · LW(p) · GW(p)

I mean, the proximate cause of the 1989 protests was the death of the quite reformist former general secretary Hu Yaobang. His successor as general secretary, Zhao Ziyang, was very sympathetic towards the protesters and wanted to negotiate with them, but then he lost a power struggle against Li Peng and Deng Xiaoping (who was in semi-retirement but still held onto control of the military). Immediately afterwards, he was removed as general secretary and martial law was declared, leading to the massacre.

↑ comment by Ben (ben-lang) · 2025-04-04T21:32:42.033Z · LW(p) · GW(p)

Having unstable policy making comes with a lot of disadvantages as well as advantages.

For example, imagine a small poor country somewhere with much of the population living in poverty. Oil is discovered, and a giant multinational approaches the government to seek permission to get the oil. The government offers some kind of deal - tax rates, etc. - but the company still isn't sure. What if the country's other political party gets in at the next election? If that happened the oil company might have just sunk a lot of money into refineries and roads and drills only to see them all taken away by the new government as part of its mission to "make the multinationals pay their share for our people." Who knows how much they might take?

What can the multinational company do to protect itself? One answer is to try and find a different country where the opposition parties don't seem likely to do that. However, it's even better to find a dictatorship to work with. If people think a government might turn on a dime, then they won't enter into certain types of deal with it. Not just companies, but also other countries.

So, whenever a government does turn on a dime, it is gaining some amount of reputation for unpredictability/instability, which isn't a good reputation to have when trying to make agreements in the future.

comment by leogao · 2024-12-23T00:43:00.420Z · LW(p) · GW(p)

people around these parts often take their salary and divide it by their working hours to figure out how much to value their time. but I think this actually doesn't make that much sense (at least for research work), and often leads to bad decision making.

time is extremely non fungible; some time is a lot more valuable than other time. further, the relation of amount of time worked to amount earned/value produced is extremely nonlinear (sharp diminishing returns). a lot of value is produced in short flashes of insight that you can't just get more of by spending more time trying to get insight (but rather require other inputs like life experience/good conversations/mentorship/happiness). resting or having fun can help improve your mental health, which is especially important for positive tail outcomes.

given that the assumptions of fungibility and linearity are extremely violated, I think it makes about as much sense as dividing salary by number of keystrokes or number of slack messages.

concretely, one might forgo doing something fun because it seems like the opportunity cost is very high, but actually diminishing returns means one more hour on the margin is much less valuable than the average implies, and having fun improves productivity in ways not accounted for when just considering the intrinsic value one places on fun.
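A toy illustration of the marginal-versus-average point. The square-root value curve below is purely a hypothetical stand-in for "sharp diminishing returns" (the post gives no functional form), and the 50 hours and 5000 units of weekly value are made-up placeholders.

```python
import math

def weekly_value(hours: float, scale: float) -> float:
    """Hypothetical concave value-of-work curve: value grows like sqrt(hours)."""
    return scale * math.sqrt(hours)

# Placeholder numbers: choose `scale` so that 50 hours/week produce 5000 units of value.
hours = 50.0
scale = 5000 / math.sqrt(hours)

# The "salary / hours" style estimate: total value spread evenly over every hour.
average_per_hour = weekly_value(hours, scale) / hours
# What one more hour (hour 51) actually adds under this curve.
marginal_hour = weekly_value(hours + 1, scale) - weekly_value(hours, scale)

print(f"average: {average_per_hour:.1f}, marginal: {marginal_hour:.1f}")  # average: 100.0, marginal: 49.8
```

With a square-root curve the marginal hour is worth roughly half the average; a steeper diminishing-returns curve widens the gap further. The sketch also leaves out the productivity benefits of rest, which would lower the true opportunity cost of fun even more.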

↑ comment by habryka (habryka4) · 2024-12-23T00:55:11.826Z · LW(p) · GW(p)

but actually diminishing returns means one more hour on the margin is much less valuable than the average implies

This importantly also goes in the other direction!

One dynamic I have noticed people often don't understand is that in a competitive market (especially in winner-takes-all-like situations) the marginal returns to focusing more on a single thing can be sharply increasing, not only decreasing.

In early-stage startups, having two people work 60 hours is almost always much more valuable than having three people work 40 hours. The costs of growing a team are very large, the costs of coordination go up very quickly, and so if you are at the core of an organization, whether you work 40 hours or 60 hours is the difference between being net-positive vs. being net-negative.

This is importantly quite orthogonal to whether you should rest or have fun or whatever. While there might be at an aggregate level increasing marginal returns to more focus, it is also the case that in such leadership positions, the most important hours are much much more productive than the median hour, and so figuring out ways to get more of the most important hours (which often rely on peak cognitive performance and a non-conflicted motivational system) is even more leveraged than adding the marginal hour (but I think it's important to recognize both effects).
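A back-of-the-envelope sketch of the "two people at 60 hours vs. three people at 40 hours" comparison. It assumes a Brooks's-law-style model (not something the comment specifies) in which every pair of teammates costs a fixed number of hours per week in coordination; the 10-hour overhead is an arbitrary placeholder.

```python
def effective_hours(people: int, hours_each: float, overhead_per_pair: float) -> float:
    """Raw hours minus a hypothetical fixed coordination cost for each pair of teammates."""
    pairs = people * (people - 1) // 2  # number of communication channels grows quadratically
    return people * hours_each - pairs * overhead_per_pair

OVERHEAD = 10.0  # hypothetical hours/week lost per pair of people

two_long = effective_hours(2, 60, OVERHEAD)   # 120 raw hours, 1 pair
three_std = effective_hours(3, 40, OVERHEAD)  # 120 raw hours, 3 pairs
print(two_long, three_std)  # 110.0 90.0
```

Both teams log the same 120 raw hours, but pairwise overhead grows faster than headcount, which is one way to read "the costs of coordination go up very quickly". The model ignores fatigue from long weeks, which pushes in the other direction.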

↑ comment by leogao · 2024-12-23T01:44:33.827Z · LW(p) · GW(p)

agree it goes in both directions. time when you hold critical context is worth more than time when you don't. it's probably at least sometimes a good strategy to alternate between working much more than sustainable and then recovering.

my main point is this is a very different style of reasoning than what people usually do when they talk about how much their time is worth.

↑ comment by Maxime Riché (maxime-riche) · 2024-12-24T00:30:01.873Z · LW(p) · GW(p)

It seems that your point applies significantly more to "zero-sum markets". So it may be good to notice that it may not apply to altruistic people working non-instrumentally on AI safety.

↑ comment by CstineSublime · 2024-12-23T01:08:06.734Z · LW(p) · GW(p)

Are these people trying to determine how much they (subjectively) value their time or how much they should value their time?

Because I think if it's the former and descriptive, wouldn't the obvious approach be to look at what time-saving services they have employed recently or in the past and see how much they have paid for them relative to how much time they saved? I'm referring to services or products where they could have done it themselves as they have the tools, abilities and freedom to commit to it, but opted to buy a machine or outsource the task to someone else. (I am aware that the hidden variable of 'effort' complicates this model). For example, in what situations will I walk or take public transport to get somewhere, and in which ones will I order an Uber? There's a certain crossover point where if the time saved is enough I'll justify the expense to myself, which would seem to be a good starting point for evaluating in descriptive terms how much I value my time.

I'm guessing that if you had enough of these examples, where the effort saved was varied enough, you'd begin to get a more accurate model of how one values their time?

↑ comment by leogao · 2024-12-23T01:54:19.222Z · LW(p) · GW(p)

I think the most important part of paying for goods and services is often not the raw time saved, but the cognitive overhead avoided. for instance, I'd pay much more to avoid having to spend 15 minutes understanding something complicated (assuming there is no learning value) than to avoid 15 minutes of waiting. so it's plausibly more costly to have to figure out the timetable and fare system, remember to transfer, and navigate the station than to spend the additional time in transit (especially in a new, unfamiliar city)

↑ comment by Viliam · 2024-12-23T10:23:27.992Z · LW(p) · GW(p)

I guess it depends on the kind of work you do (and maybe whether you have ADHD). From my perspective, yes, attention is even more scarce than time or money, because when I get home from work, it feels like all my "thinking energy" is depleted, and even if I could somehow leverage the time or money for some good purpose, I am simply unable to do that. Working even more would mean that my private life would fall apart completely. And people would probably ask "why didn't he simply...?", and the answer would be that even the simple things become very difficult to do when all my "thinking energy" is gone.

There are probably smart ways to use money to reduce the amount of "thinking energy" you need to spend in your free time, but first you need enough "thinking energy" to set up such a system. The problem is, the system needs to be flawless, because otherwise you still need to spend "thinking energy" to compensate for its flaws.

EDIT: I especially hate things like the principal-agent problem, where the seemingly simple answer is: "just pay a specialist to do that, duh", but that immediately explodes to "but how can I find a specialist?" and "how can I verify that they are actually doing a good job?", which easily become just as difficult as the original problem I tried to solve.

↑ comment by CstineSublime · 2024-12-23T07:07:38.800Z · LW(p) · GW(p)

I wasn't asking how most people go about deciding which goods or services to pay for in general. Rather, if you're noticing people use the salary-divided-by-working-hours equation to determine what their time is worth, I was asking whether it's to put a dollar figure on what they do in fact value it at (which isolates the time element from the effort or cognitive load element).

I didn't specify nor imply that one route took more cognitive load than the other, only that one was quicker than the other, and that differential would be one such way of revealing the value of time. (Otherwise they're not, in fact, trying to ascertain what their time is worth at all... but something else)

Nowadays, thanks to Google Maps, using public transport is often no more complicated or effortful than using Uber, but this tangent is immaterial to my question: are you noticing these people are trying to measure how much they DO value their time, or are they trying to ascertain how much they SHOULD value their time?

comment by leogao · 2025-03-07T00:28:26.495Z · LW(p) · GW(p)

timelines takes

- i've become more skeptical of rsi over time. here's my current best guess at what happens as we automate ai research.

- for the next several years, ai will provide a bigger and bigger efficiency multiplier to the workflow of a human ai researcher.

- ai assistants will probably not uniformly make researchers faster across the board, but rather make certain kinds of things way faster and other kinds of things only a little bit faster.

- in fact probably it will make some things 100x faster, a lot of things 2x faster, and then be literally useless for a lot of remaining things

- amdahl's law tells us that we will mostly be bottlenecked on the things that don't get sped up a ton. like if the thing that got sped up 100x was only 10% of the original thing, then you don't get more than a 1/(1 - 10%) ≈ 1.11x speedup.

- i think the speedup is a bit more than amdahl's law implies. task X took up 10% of the time because there are diminishing returns to doing more X, and so you'd ideally do exactly the amount of X such that the marginal value of time spent on X is exactly in equilibrium with time spent on anything else. if you suddenly decrease the cost of X substantially, the equilibrium point shifts towards doing more X.

- in other words, if AI makes lit review really cheap, you probably want to do a much more thorough lit review than you otherwise would have, rather than just doing the same amount of lit review but cheaper.

- at the first moment that ai can fully replace a human researcher (that is, you can purely just put more compute in and get more research out, and only negligible human labor is required), the ai will probably be more expensive per unit of research than the human

- (things get a little bit weird because my guess is before ai can drop-in replace a human, we will reach a point where adding ai assistance equivalent to the cost of 100 humans to 2025-era openai research would be equally as good as adding 100 humans, but the ais are not doing the same things as the humans, and if you just keep adding ais you start experiencing diminishing returns faster than with adding humans. i think my analysis still mostly holds despite this)

- naively, this means that the first moment that AIs can fully automate AI research at human-cost is not a special criticality threshold. if you are at equilibrium for allocating money between researchers and compute, then suddenly having the ability to convert compute into researchers at the exchange rate of the salary of a human researcher doesn't really make sense

- in reality, you will probably not be at equilibrium, because there are a lot of inefficiencies in hiring humans - recruiting is a lemon market, you have to onboard new hires relatively slowly, management capacity is limited, there is an inelastic and inefficient supply of qualified hires, etc. but i claim this is a relatively small effect and can't explain a one OOM increase in workforce size

- also: anyone who has worked in a large organization knows that team size is not everything. having too many people can often even be a liability and slow you down. even when it doesn't, adding more people almost never makes your team linearly more productive.

- however, if AIs have much better scaling laws with additional parallel compute than human organizations do, then this could change things a lot. this is one of my biggest uncertainties here and one reason i still take rsi seriously.

- your AIs might have higher-bandwidth communication with each other than your humans do. but also maybe they might be worse at generalizing previous findings to new situations or something.

- they might be more aligned with doing lots of research all day, whereas humans care about a lot of other things like money and status and fun and so on. but if outer alignment is hard we might get the AI equivalent of corporate politics.

- one other thing is that compute is a necessary input to research. i'll mostly roll this into the compute cost of actually running the AIs.

- the part where AI research feeds back into how good the AIs are could be very slow in practice

- there are logarithmic returns to more pretraining compute and more test time compute. so an improvement that 10xes the effective compute doesn't actually get you that much. 4.5 isn't that much better than 4 despite being 10x more compute (which is in turn not that much better than 3.5, I would claim).

- you run out of low hanging fruit at some point. each 2x in compute efficiency is harder to find than the previous one.

- i would claim that in fact much of the recent feeling that AI progress is fast is due to a lot of low hanging fruit being picked. for example, the shift from pretrained models to RL for reasoning picked a lot of low hanging fruit due to not using test time compute / not eliciting CoTs well, and we shouldn't expect the same kind of jump consistently.

- an emotional angle: exponentials can feel very slow in practice; for example, moore's law is kind of insane when you think about it (doubling every 18 months is pretty fast), but it still takes decades to play out

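The Amdahl's-law bound in the list above is easy to check numerically. This is a minimal sketch: `amdahl_speedup` is a hypothetical helper, and the 10%/40%/50% workflow split in the last example is an illustrative assumption, not a measurement. Each slice of the original time shrinks by its own speedup factor, so the overall speedup is the reciprocal of the sum of fraction/factor.

```python
def amdahl_speedup(profile):
    """Generalized Amdahl's law.

    `profile` is a list of (fraction_of_original_time, speedup_factor) pairs
    whose fractions sum to 1. Each slice now takes fraction / factor of the
    original time, so the overall speedup is 1 / sum(fraction / factor).
    """
    total = sum(fraction for fraction, _ in profile)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    new_time = sum(fraction / factor for fraction, factor in profile)
    return 1.0 / new_time


if __name__ == "__main__":
    # 10% of the work gets 100x faster, the other 90% is unchanged
    print(round(amdahl_speedup([(0.10, 100.0), (0.90, 1.0)]), 3))  # 1.11
    # even an infinite speedup on that 10% caps out at 1 / 0.9
    print(round(amdahl_speedup([(0.10, float("inf")), (0.90, 1.0)]), 3))  # 1.111
    # hypothetical mix: 10% at 100x, 40% at 2x, 50% untouched
    print(round(amdahl_speedup([(0.10, 100.0), (0.40, 2.0), (0.50, 1.0)]), 3))  # 1.427
```

This holds the task mix fixed; the equilibrium argument above (doing more lit review once it is cheap) is exactly the effect the formula leaves out.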

↑ comment by ryan_greenblatt · 2025-03-07T04:01:11.066Z · LW(p) · GW(p)

My current best guess median is that we'll see 6 OOMs of effective compute in the first year after full automation of AI R&D if this occurs in ~2029 using a 1e29 training run and compute is scaled up by a factor of 3.5x[1] over the course of this year[2]. This is around 5 years of progress at the current rate[3].

How big of a deal is 6 OOMs? I think it's a pretty big deal; I have a draft post discussing how much an OOM gets you (on top of full automation of AI R&D) that I should put out somewhat soon.

Further, my distribution over this is radically uncertain with a 25th percentile of 2.5 OOMs (2 years of progress) and a 75th percentile of 12 OOMs.

The short breakdown of the key claims is:

- Initial progress will be fast, perhaps ~15x faster algorithmic progress than humans.

- Progress will probably speed up before slowing down due to training smarter AIs that can accelerate progress even faster, and this being faster than returns diminish on software.

- We'll be quite far from the limits of software progress (perhaps median 12 OOMs) at the point when we first achieve full automation.

Here is a somewhat summarized and rough version of the argument (stealing heavily from some of Tom Davidson's forthcoming work):

- At the point of full automation, progress will be fast:

- Probably you'll have lots of parallel workers running pretty fast at the point when you have full automation or shortly after this. This isn't totally obvious due to inference compute, but prices often drop fast.

- My guess is you'll have enough compute that if you use 1/6 of your compute running AIs, you'll be able to run the equivalent of ~1 million AIs which are roughly as good as the best human research scientists+engineers (taking into account cost reductions for using weaker models for many tasks). This is attempting to account for a reduction in the number of models due to using a bunch of inference compute. You'll be able to run these AIs at the equivalent of 60x speed (3x from hours, 5x from direct speed, 2x from coordination, and 2x from variable time compute and/or context swapping with a cheaper+faster model). So, like 15k parallel copies at 60x speed.

- Probably the AI company has like ~3k researchers, but when you adjust for quality, this is only as good as like 600 of the top engineers/researchers.

- Let's say marginal returns to parallelism are roughly 0.55.

- Then, the increase in "serial labor equivalents" is roughly (15k / 600)^0.55 * 60 = 350. (Note that most of this is from speed and quality rather than parallel copies!)

- Production of algorithmic research is due to both compute and labor. So, let's say Cobb-Douglas with labor^0.5 compute^0.5 (My guess is that current marginal returns are more like labor^0.6 compute^0.4, but returns will get worse as you add more labor.) So, we do 350^0.5 = 19x which roughly matches my 15x speed up median.

- Progress will speed up before slowing down:

- Limits are high:

- Human brain is 1e24 training flop.

- We're using 1e29 flop to get a bit over human level, so like 4 OOMs of headroom from this.

- We can probably get a lot more efficiency, maybe 9 OOMs, at least for efficiency up rather than down. (By efficiency up rather than down I mean: we can do as well as using 1e33 flop with 1e24 real flop relative to scaling at the point when you hit human efficiency, but probably can't train a human-level AI for 1e15 real flop.)
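The back-of-the-envelope numbers in this list can be reproduced directly. A minimal sketch using only the figures stated in the comment (15k copies, 600 researcher-equivalents, parallelism exponent 0.55, a 60x speed multiplier, labor exponent 0.5); the function names are made up for illustration, and holding compute fixed in the Cobb-Douglas step is an assumption about how the 350^0.5 step is meant.

```python
def serial_labor_equivalents(ai_copies, human_equivalents, parallel_exponent, speed_multiplier):
    """Labor multiplier from running AIs instead of the human research staff.

    Extra parallel copies have diminishing returns (exponent < 1), while the
    per-copy speed multiplier counts in full, matching the comment's formula.
    """
    return (ai_copies / human_equivalents) ** parallel_exponent * speed_multiplier


def cobb_douglas_speedup(labor_multiplier, labor_exponent):
    """Research-output multiplier when only labor grows and compute is held fixed.

    With output proportional to labor^a * compute^(1 - a), multiplying labor
    by L multiplies output by L^a.
    """
    return labor_multiplier ** labor_exponent


# 3x (hours) * 5x (direct speed) * 2x (coordination) * 2x (variable compute)
speed = 3 * 5 * 2 * 2
labor = serial_labor_equivalents(15_000, 600, 0.55, speed)
research = cobb_douglas_speedup(labor, 0.5)

if __name__ == "__main__":
    print(speed)               # 60
    print(round(labor))        # 352
    print(round(research, 1))  # 18.8
```

Swapping in the labor^0.6 exponent the comment gives as its guess for current returns yields roughly 34x instead of ~19x.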

At some point, I'll write a post that makes a better version of this argument and presents a full version of my picture.

I don't think we'll see a speed criticality per se; rather, I expect the rate of progress to accelerate up to the point of full automation. But I currently don't think this makes a huge difference to the bottom line of "progress in the first year after full automation in practice", as I expect to initially see fast cost decreases and inference time compute can only go so far. I could expand this argument to the extent you have cruxes like "slower takeoff because we've already eaten low-hanging fruit with earlier AI acceleration" and "inference compute means you hit full automation much faster".

[1] 3.5x is roughly the rate of bare metal compute scale-up per year.

[2] That is, after the first company fully automates AI R&D internally, if they decide to go as fast as possible and their AIs/employees/others don't try to sabotage these efforts. And I'm assuming that AI software progress hasn't substantially slowed down by the time of full automation, though conditioning on a 1e29 training run means that at least compute scaling progress (which is a key driver of software progress) hasn't slowed down all that much.

[3] I think we see about 1.2 OOMs per year including both hardware and software.

↑ comment by Stephen McAleese (stephen-mcaleese) · 2025-03-09T13:14:01.838Z · LW(p) · GW(p)

Thanks for these thoughtful predictions. Do you think there's anything we can do today to prepare for accelerated or automated AI research?

↑ comment by Hjalmar_Wijk · 2025-03-08T02:06:49.540Z · LW(p) · GW(p)

Maybe distracting technicality:

This seems to make the simplifying assumption that the R&D automation is applied to a large fraction of all the compute that was previously driving algorithmic progress right?

If we imagine that a company only owns 10% of the compute being used to drive algorithmic progress pre-automation (and is only responsible for say 30% of its own algorithmic progress, with the rest coming from other labs/academia/open-source), and this company is the only one automating their AI R&D, then the effect on overall progress might be reduced (the 15X multiplier only applies to 30% of the relevant algorithmic progress).

In practice I would guess that either the leading actor has enough of a lead that they are already responsible for most of their algorithmic progress, or other groups are close behind and will thus automate their own AI R&D around the same time anyway. But I could imagine this slowing down the impact of initial AI R&D automation a little bit (and it might make a big difference for questions like "how much would it accelerate a non-frontier lab that stole the model weights and tried to do rsi").

↑ comment by ryan_greenblatt · 2025-03-08T02:23:18.180Z · LW(p) · GW(p)

Yes, I think frontier AI companies are responsible for most of the algorithmic progress. I think it's unclear how much the leading actor benefits from progress done at other slightly behind AI companies and this could make progress substantially slower. (However, it's possible the leading AI company would be able to acquire the GPUs from these other companies.)

↑ comment by Garrett Baker (D0TheMath) · 2025-03-07T16:03:59.365Z · LW(p) · GW(p)

at the first moment that ai can fully replace a human researcher (that is, you can purely just put more compute in and get more research out, and only negligible human labor is required), the ai will probably be more expensive per unit of research than the human

Why do you think this? It seems to me that for most tasks once an AI gets some skill it is much cheaper to run it for that skill than a human.

comment by leogao · 2025-01-31T21:48:43.952Z · LW(p) · GW(p)

libraries abstract away the low level implementation details; you tell them what you want to get done and they make sure it happens. frameworks are the other way around. they abstract away the high level details; as long as you implement the low level details you're responsible for, you can assume the entire system works as intended.
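This direction-of-control difference (often called inversion of control) is easiest to see side by side. A minimal sketch: `json` is Python's real standard-library module used as an ordinary library, while `TinyFramework` and its `route`/`run` methods are hypothetical, written only to illustrate the pattern of a framework dispatching to handlers you register.

```python
import json


# Library: your code owns the control flow and calls into the library
# whenever it needs something done.
def total_from_json(raw: str) -> int:
    data = json.loads(raw)  # you decide when (and whether) this runs
    return sum(data["values"])


# Framework (hypothetical, for illustration): the framework owns the control
# flow and calls the low-level pieces you register with it.
class TinyFramework:
    def __init__(self):
        self._handlers = {}

    def route(self, name):
        """Decorator that registers a handler under `name`."""
        def register(fn):
            self._handlers[name] = fn
            return fn
        return register

    def run(self, requests):
        """Dispatch each (name, payload) request; unknown names get "404"."""
        results = []
        for name, payload in requests:
            handler = self._handlers.get(name)
            results.append(handler(payload) if handler is not None else "404")
        return results


app = TinyFramework()


@app.route("double")
def double(x):
    # you only implement this piece; the framework decides when it is called
    return 2 * x


if __name__ == "__main__":
    print(total_from_json('{"values": [1, 2, 3]}'))  # 6
    print(app.run([("double", 21), ("missing", 0)]))  # [42, '404']
```

In the first half your function is the caller; in the second, `app.run` is, and `double` only has to uphold its small local contract.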

a similar divide exists in human organizations and with managing up vs down. with managing up, you abstract away the details of your work and promise to solve some specific problem. with managing down, you abstract away the mission and promise that if a specific problem is solved, it will make progress towards the mission.

(of course, it's always best when everyone has state on everything. this is one reason why small teams are great. but if you have dozens of people, there is no way for everyone to have all the state, and so you have to do a lot of abstracting.)

when either abstraction leaks, it causes organizational problems -- micromanagement, or loss of trust in leadership.

comment by leogao · 2025-02-23T21:58:40.276Z · LW(p) · GW(p)

there are a lot of video games (and to a lesser extent movies, books, etc) that give the player an escapist fantasy of being hypercompetent. It's certainly an alluring promise: with only a few dozen hours of practice, you too could become a world class fighter or hacker or musician! But because becoming hypercompetent at anything is a lot of work, the game has to put its finger on the scale to deliver on this promise. Maybe flatter the user a bit, or let the player do cool things without the skill you'd actually need in real life.

It's easy to dismiss this kind of media as inaccurate escapism that distorts people's views of how complex these endeavors of skill really are. But it's actually a shockingly accurate simulation of what it feels like to actually be really good at something. As they say, being competent doesn't feel like being competent, it feels like the thing just being really easy.

↑ comment by trevor (TrevorWiesinger) · 2025-02-24T07:07:15.472Z · LW(p) · GW(p)

"power fantasies" are actually a pretty mundane phenomenon given how human genetic diversity shook out; most people intuitively gravitate towards anyone who looks and acts like a tribal chief, or towards the possibility that you yourself or someone you meet could become (or already be) a tribal chief, via constructing some abstract route that requires forging a novel path instead of following other people's.

Also a mundane outcome of human genetic diversity is how division of labor shakes out; people noticing they were born with savant-level skills and that they can sink thousands of hours into skills like musical instruments, programming, data science, sleight of hand party tricks, social/organizational modelling, painting, or psychological manipulation. I expect the pool to be much larger for power-seeking-adjacent skills than for art, and that some proportion of that larger pool of people managed to get their skills' mental muscle memory sufficiently intensely honed that everyone should feel uncomfortable sharing a planet with them.

↑ comment by Canaletto (weightt-an) · 2025-02-24T11:27:46.668Z · LW(p) · GW(p)

The alternative is to pit people against each other in some competitive games, 1 on 1 or in teams. I don't think the feeling you get from such games is consistent with "being competent doesn't feel like being competent, it feels like the thing just being really easy", probably mainly because there is skill level matching, there are always opponents who pose you a real challenge.

Hmm maybe such games need some more long tail probabilistic matching, to sometimes feel the difference. Or maybe variable team sizes, with many incompetent people versus few competent, to get a more "doomguy" feeling.

↑ comment by Viliam · 2025-03-04T08:45:58.786Z · LW(p) · GW(p)

Some games do put their finger on the scale, for example you have a first-person shooter where you learn to aim better but you also now have a gun that deals 200 damage per hit, as opposed to your starting gun that dealt 10.

But puzzle-solving games are usually fair, I think.

comment by leogao · 2024-08-12T22:11:49.277Z · LW(p) · GW(p)

reliability is surprisingly important. if I have a software tool that is 90% reliable, it's actually not that useful for automation, because I will spend way too much time manually fixing problems. this is especially a problem if I'm chaining multiple tools together in a script. I've been bit really hard by this because 90% feels pretty good if you run it a handful of times by hand, but then once you add it to your automated sweep or whatever it breaks and then you have to go in and manually fix things. and getting to 99% or 99.9% is really hard because things break in all sorts of weird ways.
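The compounding that makes a 90%-reliable tool painful inside a script is easy to quantify. A minimal sketch, assuming each step fails independently with the same probability (real failures are often correlated); the five-step pipeline and 100 runs are illustrative numbers, not from the comment.

```python
def pipeline_success_rate(per_step_reliability: float, steps: int) -> float:
    """Probability that every one of `steps` independent steps succeeds."""
    return per_step_reliability ** steps


def expected_runs_needing_fixes(per_step_reliability: float, steps: int, runs: int) -> float:
    """Expected number of runs (out of `runs`) with at least one failed step."""
    return runs * (1.0 - pipeline_success_rate(per_step_reliability, steps))


if __name__ == "__main__":
    for p in (0.9, 0.99, 0.999):
        ok = pipeline_success_rate(p, steps=5)
        fixes = expected_runs_needing_fixes(p, steps=5, runs=100)
        print(f"{p}: {ok:.3f} end-to-end, ~{fixes:.1f} of 100 runs need a manual fix")
    # 0.9: 0.590 end-to-end, ~41.0 of 100 runs need a manual fix
    # 0.99: 0.951 end-to-end, ~4.9 of 100 runs need a manual fix
    # 0.999: 0.995 end-to-end, ~0.5 of 100 runs need a manual fix
```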

I think this has lessons for AI - lack of reliability is one big reason I fail to get very much value out of AI tools. if my chatbot catastrophically hallucinates once every 10 queries, then I basically have to look up everything anyways to check. I think this is a major reason why cool demos often don't mean things that are practically useful - 90% reliability is great for a demo (and also you can pick tasks that your AI is more reliable at, rather than tasks which are actually useful in practice). this is an informing factor for why my timelines are longer than some other people's

↑ comment by faul_sname · 2024-08-12T22:40:02.566Z · LW(p) · GW(p)

One nuance here is that a software tool that succeeds at its goal 90% of the time, and fails in an automatically detectable fashion the other 10% of the time is pretty useful for partial automation. Concretely, if you have a web scraper which performs a series of scripted clicks in hardcoded locations after hardcoded delays, and then extracts a value from the page from immediately after some known hardcoded text, that will frequently give you a ≥ 90% success rate of getting the piece of information you want while being much faster to code up than some real logic (especially if the site does anti-scraper stuff like randomizing css classes and DOM structure) and saving a bunch of work over doing it manually (because now you only have to manually extract info from the pages that your scraper failed to scrape).

↑ comment by leogao · 2024-08-12T23:14:57.173Z · LW(p) · GW(p)

I think even if failures are automatically detectable, it's quite annoying. the cost is very logarithmic: there's a very large cliff in effort when going from zero manual intervention required to any manual intervention required whatsoever; and as the amount of manual intervention continues to increase, you can invest in infrastructure to make it less painful, and then to delegate the work out to other people.

↑ comment by Noosphere89 (sharmake-farah) · 2024-08-13T01:26:23.604Z · LW(p) · GW(p)

While I agree with this, I do want to note that this:

this is an informing factor for why my timelines are longer than some other people's

Only lengthens timelines very much if we also assume scaling can't solve the reliability problem.

↑ comment by leogao · 2024-08-13T01:31:52.560Z · LW(p) · GW(p)

even if scaling does eventually solve the reliability problem, it means that very plausibly people are overestimating how far along capabilities are, and how fast the rate of progress is, because the most impressive thing that can be done with 90% reliability plausibly advances faster than the most impressive thing that can be done with 99.9% reliability

↑ comment by Alexander Gietelink Oldenziel (alexander-gietelink-oldenziel) · 2024-08-15T11:42:05.256Z · LW(p) · GW(p)

Perhaps it shouldn't be too surprising. Reliability, machine precision, economy are likely the deciding factors to whether many (most?) technologies take off. The classic RoP case study: the bike.

↑ comment by M. Y. Zuo · 2024-08-13T17:27:51.184Z · LW(p) · GW(p)

Motorola engineers figured this out a few decades ago, even 99.99 to 99.999 makes a huge difference on a large scale. They even published a few interesting papers and monographs on it from what I recall.

↑ comment by gietema · 2024-08-13T21:24:36.149Z · LW(p) · GW(p)

This can be explained when thinking about what these accuracy levels mean: 99.99% accuracy is one error every 10K trials. 99.999% accuracy is one error every 100K trials. So the 99.999% system is 10x better! When errors are costly and you’re operating at scale, this is a huge difference.

comment by leogao · 2024-01-28T04:46:54.866Z · LW(p) · GW(p)

i've noticed a life hyperparameter that affects learning quite substantially. i'd summarize it as "willingness to gloss over things that you're confused about when learning something". as an example, suppose you're modifying some code and it seems to work but also you see a warning from an unrelated part of the code that you didn't expect. you could either try to understand exactly why it happened, or just sort of ignore it.

reasons to set it low:

- each time your world model is confused, that's an opportunity to get a little bit of signal to improve your world model. if you ignore these signals you increase the length of your feedback loop, and make it take longer to recover from incorrect models of the world.

- in some domains, it's very common for unexpected results to actually be a hint at a much bigger problem. for example, many bugs in ML experiments cause results that are only slightly weird, but if you tug on the thread of understanding why your results are slightly weird, this can cause lots of your experiments to unravel. and doing so earlier rather than later can save a huge amount of time

- understanding things at least one level of abstraction down often lets you do things more effectively. otherwise, you have to constantly maintain a bunch of uncertainty about what will happen when you do any particular thing, and have a harder time thinking of creative solutions

reasons to set it high:

- it's easy to waste a lot of time trying to understand relatively minor things, instead of understanding the big picture. often, it's more important to 80-20 by understanding the big picture, and you can fill in the details when it becomes important to do so (which often is only necessary in rare cases).

- in some domains, we have no fucking idea why anything happens, so you have to be able to accept that we don't know why things happen to be able to make progress

- often, if e.g. you don't quite get a claim that a paper is making, you could resolve your confusion just by reading a bit ahead. if you always try to fully understand everything before digging into it, you'll find it very easy to get stuck before actually making it to the main point the paper is making

there are very different optimal configurations for different kinds of domains. maybe the right approach is to be aware that this is an important hyperparameter and occasionally try going down some rabbit holes and seeing how much value it provides

↑ comment by Gunnar_Zarncke · 2024-01-28T23:23:19.467Z · LW(p) · GW(p)

This seems to be related to Goldfish Reading [LW · GW]. Or maybe complementary. In Goldfish Reading [LW · GW] one reads the same text multiple times, not trying to understand it all at once or remember everything, i.e., intentionally ignoring confusion. But in a structured form to avoid overload.

↑ comment by leogao · 2024-01-28T23:52:31.596Z · LW(p) · GW(p)

Yeah, this seems like a good idea for reading - lets you get the best of both worlds. Though it works for reading mostly because it doesn't take that much longer to do so. This doesn't translate as directly to e.g. what to do when debugging code or running experiments.

↑ comment by Johannes C. Mayer (johannes-c-mayer) · 2024-01-29T21:41:25.443Z · LW(p) · GW(p)

I think it's very important to keep track of what you don't know. It can be useful to not try to get the best model when that's not the bottleneck. But I think it's always useful to explicitly store the knowledge of what models are developed to what extent.

↑ comment by Johannes C. Mayer (johannes-c-mayer) · 2024-01-29T21:40:56.869Z · LW(p) · GW(p)

The algorithm that I have been using, where what to understand to what extent is not a hyperparameter, is to just solve the actual problems I want to solve, and then always slightly overdo the learning, i.e. I would always learn a bit more than necessary to solve whatever subproblem I am solving right now. E.g. I am just trying to make a simple server, and then I learn about the protocol stack.

This has the advantage that I am always highly motivated to learn something, as the path to the problem on the graph of justifications is always pretty short. It also ensures that all the things that I learn are not completely unrelated to the problem I am solving.

I am pretty sure if you had perfect control over your motivation this is not the best algorithm, but given that you don't, this is the best algorithm I have found so far.

comment by leogao · 2025-01-22T08:52:55.604Z · LW(p) · GW(p)

don't worry too much about doing things right the first time. if the results are very promising, the cost of having to redo it won't hurt nearly as much as you think it will. but if you put it off because you don't know exactly how to do it right, then you might never get around to it.

comment by leogao · 2024-09-27T21:30:46.238Z · LW(p) · GW(p)

in some way, bureaucracy design is the exact opposite of machine learning. while the goal of machine learning is to make clusters of computers that can think like humans, the goal of bureaucracy design is to make clusters of humans that can think like a computer

comment by leogao · 2025-03-03T06:28:06.883Z · LW(p) · GW(p)

my referral/vouching policy is i try my best to completely decouple my estimate of technical competence from how close a friend someone is. i have very good friends i would not write referrals for and i have written referrals for people i basically only know in a professional context. if i feel like it's impossible for me to disentangle, i will defer to someone i trust and have them make the decision. this leads to some awkward conversations, but if someone doesn't want to be friends with me because it won't lead to a referral, i don't want to be friends with them either.

Replies from: neel-nanda-1↑ comment by Neel Nanda (neel-nanda-1) · 2025-03-03T15:09:44.717Z · LW(p) · GW(p)

Strong agree (except in that liking someone's company is evidence that they would be a pleasant co-worker, but that's generally not a high-order bit). I find it very annoying that standard reference culture seems to often imply giving extremely positive references unless someone was truly awful, since it makes it much harder to get real info from references.

Replies from: Josephm↑ comment by Joseph Miller (Josephm) · 2025-03-03T19:17:13.249Z · LW(p) · GW(p)

I find it very annoying that standard reference culture seems to often imply giving extremely positive references unless someone was truly awful, since it makes it much harder to get real info from references

Agreed, but also most of the world does operate in this reference culture. If you choose to take a stand against it, you might screw over a decent candidate by providing only a quite positive recommendation.

Replies from: neel-nanda-1↑ comment by Neel Nanda (neel-nanda-1) · 2025-03-04T12:44:36.827Z · LW(p) · GW(p)

Agreed. If I'm talking to someone who I expect to be able to recalibrate, I explain that I think the standard norms are dumb, describe the norms I actually follow, and then give an honest and balanced assessment. If I'm talking to someone I don't really know, I generally give a positive but not very detailed reference or don't reply, depending on context.

comment by leogao · 2024-07-11T06:11:50.632Z · LW(p) · GW(p)

learning thread for taking notes on things as i learn them (in public so hopefully other people can get value out of it)

Replies from: leogao↑ comment by leogao · 2024-07-11T07:23:58.470Z · LW(p) · GW(p)

VAEs:

a normal autoencoder decodes single latents z to single images (or whatever other kind of data) x, and also encodes single images x to single latents z.

with VAEs, we want our decoder (p(x|z)) to take single latents z and output a distribution over x's. for simplicity we generally declare that this distribution is a gaussian with identity covariance, and we have our decoder output a single x value that is the mean of the gaussian.

because each x can be produced by multiple z's, to run this backwards you also need a distribution of z's for each single x. we call the ideal encoder p(z|x): the thing that would perfectly invert our decoder p(x|z). unfortunately, we obviously don't have access to this thing, so we have to train an encoder network q(z|x) to approximate it. to make our encoder output a distribution, we have it output a mean vector and a stddev vector for a gaussian. at runtime we sample a random vector eps ~ N(0, 1), multiply it elementwise by the stddev vector, and add the mean vector to get a sample z ~ N(mu, std^2). (this is the reparameterization trick: all the randomness lives in eps, so z stays differentiable with respect to mu and std.)
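A minimal sketch of that sampling step, assuming the encoder's mean and stddev vectors are plain Python lists (the function name `sample_latent` is hypothetical, not from any library): eps is scaled by the stddev and shifted by the mean.

```python
import random


def sample_latent(mu, std, rng=random):
    """Draw z ~ N(mu, diag(std**2)) with the reparameterization trick.

    mu and std are the encoder's mean and stddev vectors for a single x.
    All randomness comes from eps ~ N(0, 1), so z = mu + std * eps is a
    deterministic, differentiable function of mu and std.
    """
    eps = [rng.gauss(0.0, 1.0) for _ in mu]
    return [m + s * e for m, s, e in zip(mu, std, eps)]


# usage: one latent sample for a 2-d latent space
z = sample_latent([0.5, -1.0], [0.1, 2.0])
```

In an autodiff framework the same two lines (draw eps, compute mu + std * eps) are what let gradients flow back into the encoder.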

to train this thing, we would like to optimize the following loss function:

$$-\log p(x) + \mathrm{KL}\big(q(z|x)\,\|\,p(z|x)\big)$$

where the terms optimize the likelihood (how good is the VAE at modelling data, assuming we have access to the perfect z distribution) and the quality of our encoder (how good is our q(z|x) at approximating p(z|x)). unfortunately, neither term is tractable - the former requires marginalizing over z, which is intractable, and the latter requires p(z|x) which we also don't have access to. however, it turns out that the following is mathematically equivalent and is tractable:

$$-\mathbb{E}_{z \sim q(z|x)}\big[\log p(x|z)\big] + \mathrm{KL}\big(q(z|x)\,\|\,p(z)\big)$$
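One way to check the equivalence: expand the KL to the true posterior using Bayes' rule, p(z|x) = p(x|z) p(z) / p(x), and note that log p(x) does not depend on z, so it comes out of the expectation. Since that KL is nonnegative, this also shows the tractable objective is an upper bound on -log p(x).

```latex
\begin{aligned}
\mathrm{KL}\big(q(z|x)\,\|\,p(z|x)\big)
  &= \mathbb{E}_{z\sim q(z|x)}\big[\log q(z|x) - \log p(z|x)\big] \\
  &= \mathbb{E}_{z\sim q(z|x)}\big[\log q(z|x) - \log p(x|z) - \log p(z) + \log p(x)\big] \\
  &= \mathrm{KL}\big(q(z|x)\,\|\,p(z)\big) - \mathbb{E}_{z\sim q(z|x)}\big[\log p(x|z)\big] + \log p(x) \\[4pt]
\Rightarrow\; -\log p(x) + \mathrm{KL}\big(q(z|x)\,\|\,p(z|x)\big)
  &= -\mathbb{E}_{z\sim q(z|x)}\big[\log p(x|z)\big] + \mathrm{KL}\big(q(z|x)\,\|\,p(z)\big)
\end{aligned}
```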

the former term is just the negative log-likelihood of the real data under the decoder distribution, given z drawn from the encoder distribution (in practice the expectation is usually estimated with a single sample of z per x). because the decoder distribution is a gaussian with identity covariance, this is equivalent to the MSE up to a positive scale factor and an additive constant: -log N(x; x_hat, I) = 0.5 ||x - x_hat||^2 + (d/2) log(2 pi), where x_hat is the decoder output and d is the dimension of x. the latter term can be computed analytically, because both distributions are gaussians with known mean and std. (the distribution p is determined in part by the decoder p(x|z), but that doesn't pin down the entire distribution; we still have a degree of freedom in how we pick p(z). so we typically declare by fiat that p(z) is a N(0, 1) gaussian. then, p(z|x) is implied to be equal to p(x|z) p(z) / ∫ p(x|z') p(z') dz'.)
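A minimal sketch of both loss terms for a single x, assuming an identity-covariance gaussian decoder, a diagonal gaussian encoder, and p(z) = N(0, I); vectors are plain Python lists and all function names are hypothetical. The KL uses the standard closed form KL(N(mu, s^2) || N(0, 1)) = 0.5 (mu^2 + s^2 - 1) - log s per latent dimension.

```python
import math


def gaussian_nll(x, x_hat):
    """-log N(x; x_hat, I): half the squared error plus a constant."""
    d = len(x)
    sq_err = sum((xi - hi) ** 2 for xi, hi in zip(x, x_hat))
    return 0.5 * sq_err + 0.5 * d * math.log(2 * math.pi)


def kl_to_standard_normal(mu, std):
    """Closed-form KL(N(mu, diag(std**2)) || N(0, I)), summed over latent dims."""
    return sum(0.5 * (m * m + s * s - 1.0) - math.log(s) for m, s in zip(mu, std))


def negative_elbo(x, x_hat, mu, std):
    """Single-sample estimate of -E_q[log p(x|z)] + KL(q(z|x) || p(z)).

    x_hat is the decoder output for one z sampled from q(z|x) = N(mu, std**2).
    """
    return gaussian_nll(x, x_hat) + kl_to_standard_normal(mu, std)
```

The constant (d/2) log(2 pi) has no gradient, which is why dropping it and using (half) the squared error trains the same model.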

comment by leogao · 2024-12-07T15:46:18.123Z · LW(p) · GW(p)

a take I've expressed a bunch irl but haven't written up yet: feature sparsity might be fundamentally the wrong thing for disentangling superposition; circuit sparsity might be more correct to optimize for. in particular, circuit sparsity doesn't have problems with feature splitting/absorption

Replies from: Sodium↑ comment by Sodium · 2024-12-07T18:54:47.409Z · LW(p) · GW(p)

Yeah my view is that as long as our features/intermediate variables form human-understandable circuits, it doesn't matter how "atomic" they are.

comment by leogao · 2024-11-27T04:37:04.317Z · LW(p) · GW(p)

the most valuable part of a social event is often not the part that is ostensibly the most important, but rather the gaps between the main parts.

- at ML conferences, the headline keynotes and orals are usually the least useful part to go to; the random spontaneous hallway chats and dinners and afterparties are extremely valuable

- when doing an activity with friends, the activity itself is often of secondary importance. the conversations on the way to the activity, or in the gaps during it, carry a lot of the value

- at work, a lot of the best conversations happen not in scheduled 1:1s and group meetings, but in spontaneous hallway or dinner groups

↑ comment by johnswentworth · 2024-11-28T06:24:38.509Z · LW(p) · GW(p)

I have heard people say this so many times, and it is consistently the opposite of my experience. The random spontaneous conversations at conferences are disproportionately shallow and tend toward the same things which have been discussed to death online already, or toward the things which seem simple enough that everyone thinks they have something to say on the topic. When doing an activity with friends, it's usually the activity which is novel and/or interesting, while the conversation tends to be shallow and playful and fun but not as substantive as the activity. At work, spontaneous conversations generally had little relevance to the actual things we were/are working on (there are some exceptions, but they're rarely as high-value as ordinary work).

Replies from: D0TheMath↑ comment by Garrett Baker (D0TheMath) · 2024-11-28T18:44:50.368Z · LW(p) · GW(p)

I think you are possibly better than most others at selecting conferences & events you actually want to go to (or optimize harder for it). Even with work, I think many get value out of having those spontaneous conversations because it often shifts what they're going to do: the number one spontaneous conversation is "what are you working on" or "what have you done so far", which forces you to re-explain what you're doing & the reasons for doing it to a skeptical & ignorant audience. My understanding is you and David already do this very often with each other.

Replies from: johnswentworth↑ comment by johnswentworth · 2024-11-29T06:23:23.423Z · LW(p) · GW(p)

the number one spontaneous conversation is "what are you working on" or "what have you done so far", which forces you to re-explain what you're doing & the reasons for doing it to a skeptical & ignorant audience

I'm very curious if others also find this to be the biggest value-contributor amongst spontaneous conversations. (Also, more generally, I'm curious what kinds of spontaneous conversations people are getting so much value out of.)

Replies from: alexander-gietelink-oldenziel, Lblack↑ comment by Alexander Gietelink Oldenziel (alexander-gietelink-oldenziel) · 2024-11-29T11:18:57.130Z · LW(p) · GW(p)

One of the directions I'm currently most excited about (modern control theory through algebraic analysis) I learned about while idly chitchatting with a colleague at lunch about old-school cybernetics. We were both confused about why it was such a big deal in the 50s and 60s and then basically died.

A stranger at the table had overheard our conversation and immediately started ranting to us about the history of cybernetics and modern methods of control theory. It turns out that control theory has developed far beyond what people did in the 60s, but the names, techniques, and methods have changed, and this guy was one of the world experts. I wouldn't have known to ask him, because on the face of it his specialization had nothing to do with control theory.

↑ comment by Lucius Bushnaq (Lblack) · 2024-12-03T23:00:25.534Z · LW(p) · GW(p)

I do not find this to be the biggest value-contributor amongst my spontaneous conversations.

I don't have a good hypothesis for why spontaneous-ish conversations can end up being valuable to me so frequently. I have a vague intuition that it might be an expression of the same phenomenon that makes slack and playfulness in research and internet browsing very valuable for me.

↑ comment by metachirality · 2024-11-27T09:07:39.760Z · LW(p) · GW(p)

What would an event optimized for this sort of thing look like?

Replies from: Josephm↑ comment by Joseph Miller (Josephm) · 2024-11-27T13:25:03.944Z · LW(p) · GW(p)

Unconferences are a thing for this reason

comment by leogao · 2023-04-14T02:28:46.473Z · LW(p) · GW(p)

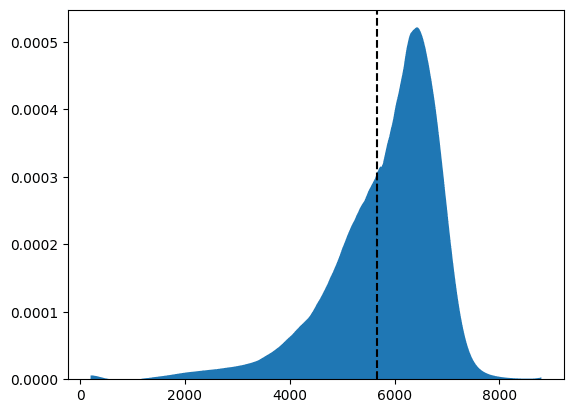

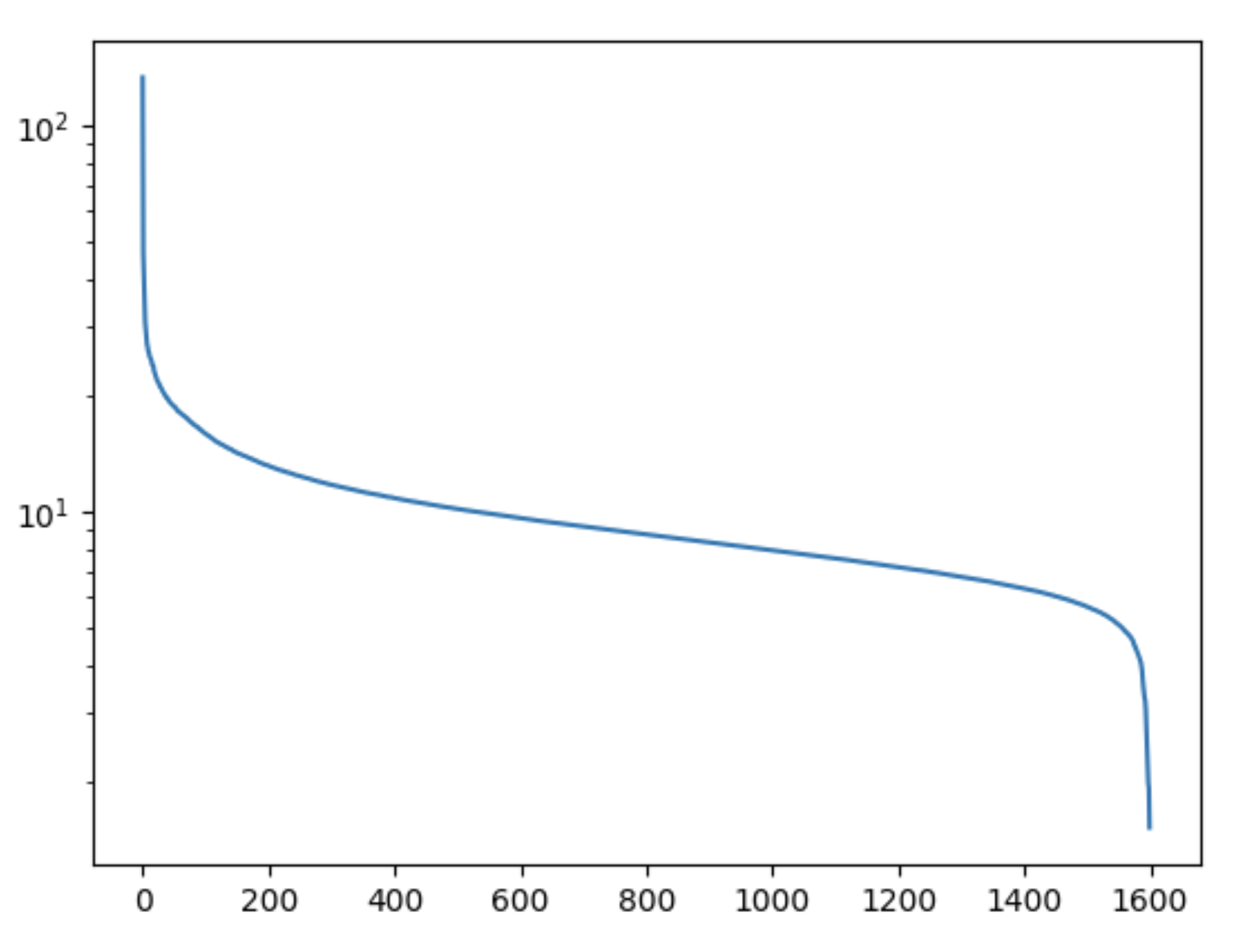

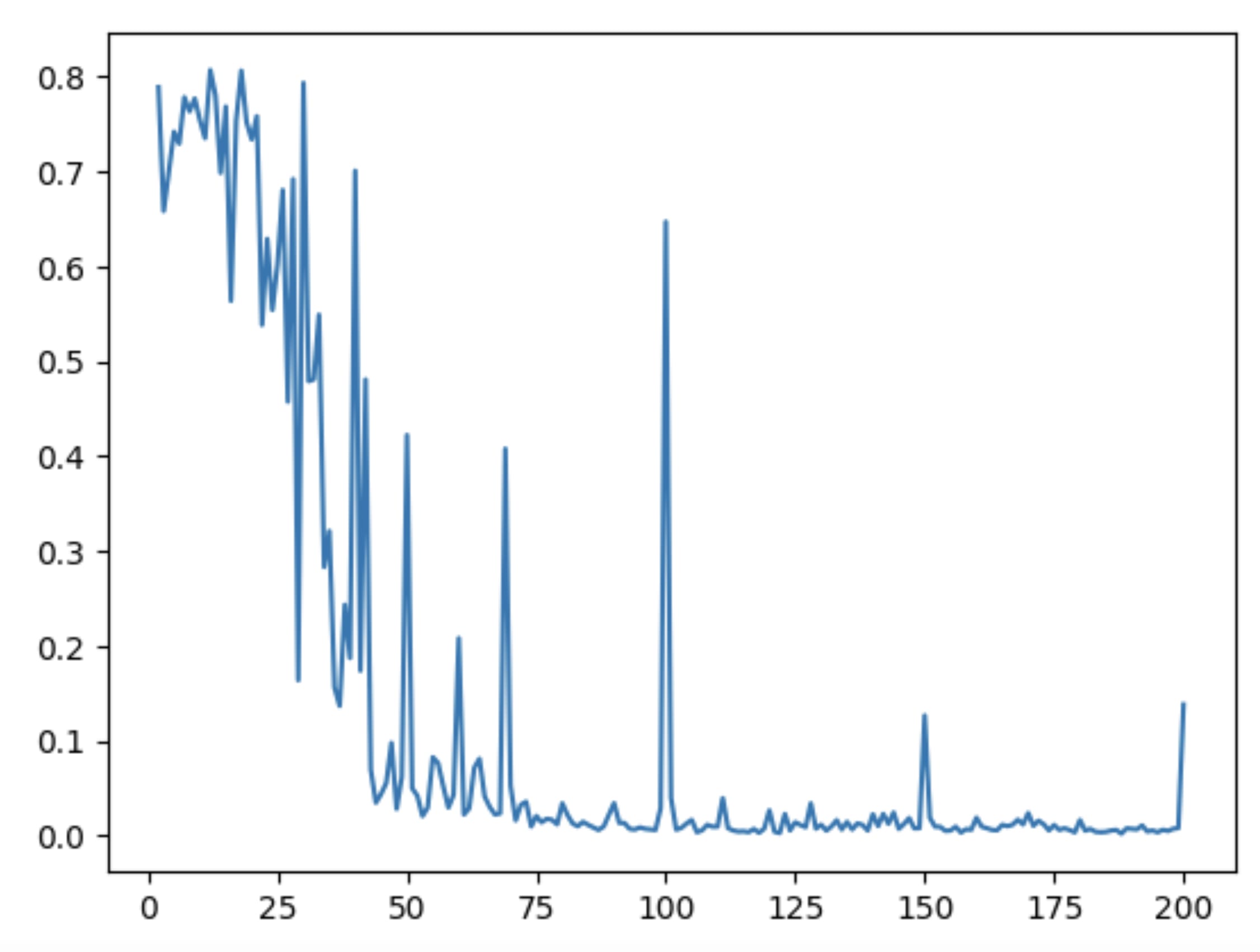

random fun experiment: accuracy of GPT-4 on "Q: What is 1 + 1 + 1 + 1 + ...?\nA:"
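The comment doesn't say how answers were scored, so the following is only a hypothetical sketch of how such an eval could be set up: build the prompt for n ones in the quoted format, and count a completion as correct if its first token is n. The model call itself is omitted, and the function names and scoring rule are assumptions.

```python
def make_prompt(n):
    """Prompt asking for the sum of n ones, in the format quoted above."""
    return "Q: What is " + " + ".join(["1"] * n) + "?\nA:"


def is_correct(completion, n):
    """Hypothetical scoring rule: the first token of the completion must be n."""
    tokens = completion.strip().split()
    return bool(tokens) and tokens[0].rstrip(".") == str(n)


def accuracy(n, completions):
    """Fraction of sampled completions (for the n-ones prompt) that are correct."""
    return sum(is_correct(c, n) for c in completions) / len(completions)
```

Plotting `accuracy(n, ...)` against n, with several samples per prompt, would give a curve of the kind the experiment describes.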

↑ comment by aphyer · 2023-04-14T02:35:08.342Z · LW(p) · GW(p)

...I am suddenly really curious what the accuracy of humans on that is.

Replies from: Richard_Kennaway↑ comment by Richard_Kennaway · 2023-04-14T07:40:20.136Z · LW(p) · GW(p)

'Can you do Addition?' the White Queen asked. 'What's one and one and one and one and one and one and one and one and one and one?'

'I don't know,' said Alice. 'I lost count.'

comment by leogao · 2025-02-24T02:29:30.835Z · LW(p) · GW(p)

you might expect that the butterfly effect applies to ML training. make one small change early in training and it might cascade to change the training process in huge ways.

at least in non-RL training, this intuition seems to be basically wrong. you can do some pretty crazy things to the training process without really affecting macroscopic properties of the model (e.g. loss). one very well-known example is that using mixed precision training results in training curves that are basically identical to full precision training, even though you're throwing out a ton of bits of precision on every step.
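A toy illustration (not evidence about large models): fit y = 3x + 1 with full-batch gradient descent, once in full precision and once with inputs, weights, predictions and gradients rounded to IEEE half precision on every step, while updates go to full-precision "master" weights, loosely mimicking the usual mixed precision setup (loss scaling is omitted). The rounding uses the `struct` module's `"e"` (half-precision) format; the function names and hyperparameters are assumptions chosen for the example.

```python
import struct


def to_fp16(v):
    """Round a Python float to the nearest IEEE half-precision value."""
    return struct.unpack("e", struct.pack("e", v))[0]


def train(xs, ys, steps=1000, lr=0.1, half=False):
    """Full-batch gradient descent on MSE for y = w*x + b; returns final loss.

    With half=True, the forward and backward pass see fp16-rounded inputs,
    weights, predictions and gradients, but each update is applied to
    full-precision master weights.
    """
    rnd = to_fp16 if half else (lambda v: v)
    w, b = 0.0, 0.0  # master weights, kept in full precision
    n = len(xs)
    for _ in range(steps):
        w_lo, b_lo = rnd(w), rnd(b)  # low-precision copies used for compute
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            err = rnd(w_lo * rnd(x) + b_lo) - y
            grad_w += 2 * err * rnd(x) / n
            grad_b += 2 * err / n
        w -= lr * rnd(grad_w)
        b -= lr * rnd(grad_b)
    # evaluate both runs in full precision using the master weights
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n


xs = [i / 10 for i in range(20)]
ys = [3 * x + 1 for x in xs]
print(train(xs, ys), train(xs, ys, half=True))
```

Both runs end with a small final loss even though the half-precision run rounds away most of the mantissa bits at every step.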

comment by leogao · 2024-12-27T09:46:28.297Z · LW(p) · GW(p)

a lot of unconventional people intentionally choose to ignore normie-legible status systems. these systems can take the form of either expert consensus or some widely accepted form of feedback from reality. for example, many researchers, especially around these parts, just don't publish in normal ML conferences at all, opting instead to depart into their own status systems. or they don't care whether their techniques can be used to make very successful products, or make surprisingly accurate predictions, etc. instead, they substitute some alternative status system, like approval of a specific subcommunity.

there's a grain of truth to this, which is that the normal status system is often messed up (academia has terrible terrible incentives). it is true that many people overoptimize the normal status system really hard and end up not producing very much value.

but the problem with starting your own status system (or choosing to compete in a less well-agreed-upon one) is that it's unclear to other people how much stock to put in your status points. it's too easy to create new status systems. the existing ones might be deeply flawed, but at least their difficulty is a known quantity.

one common retort is that it's not worth proving yourself to people who are too closed-minded and only accept ideas if they are validated by some legible status system. this is true to some extent, and i'm generally against people spending too much effort optimizing normie status (e.g. i think people should be way less worried about getting a degree in order to be taken seriously / get a job offer), but it's possible to take this too far.

a rational decision maker should in fact discount claims of extremely illegible quality, because there are simply too many of them and it's too hard to pick out the good ones even if they were there (that's sort of the whole thing about illegibility!). it seems bad to only bestow the truth upon people who happen to be irrational in ways that cause them to take you seriously by chance. if left unchecked, this kind of thing can also very easily evolve into a cult, where the unmooring from reality checks allows huge epistemic distortions.

a good in-between approach might be to do some very legibly impressive things, just to prove that you can in fact do well at the legible status system if you chose to, and are intentionally choosing not to (as opposed to choosing alternative status systems because you're not capable of getting status in the legible system).

Replies from: johnswentworth, habryka4, dmurfet, Amyr, oliver-daniels-koch, CstineSublime↑ comment by johnswentworth · 2024-12-27T13:32:40.239Z · LW(p) · GW(p)

This comment seems to implicitly assume markers of status are the only way to judge quality of work. You can just, y'know, look at it? Even without doing a deep dive, the sort of papers or blog posts which present good research have a different style and rhythm to them than the crap. And it's totally reasonable to declare that one's audience is the people who know how to pick up on that sort of style.

The bigger reason we can't entirely escape "status"-ranking systems is that there's far too much work to look at it all, so people have to choose which information sources to pay attention to.

Replies from: dmurfet, Viliam, leogao↑ comment by Daniel Murfet (dmurfet) · 2024-12-28T01:21:48.532Z · LW(p) · GW(p)