Yudkowsky and Christiano discuss "Takeoff Speeds"

post by Eliezer Yudkowsky (Eliezer_Yudkowsky) · 2021-11-22T19:35:27.657Z · LW · GW · 176 comments



This is a transcription of Eliezer Yudkowsky responding to Paul Christiano's "Takeoff Speeds" live on Sep. 14, followed by a conversation between Eliezer and Paul. This discussion took place after Eliezer's conversation with Richard Ngo.


5.5. Comments on "Takeoff Speeds"

[Yudkowsky][10:14] (Nov. 22 follow-up comment)

(This was in response to an earlier request by Richard Ngo that I respond to Paul on Takeoff Speeds.)

[Yudkowsky][16:52]

maybe I'll try liveblogging some https://sideways-view.com/2018/02/24/takeoff-speeds/ here in the meanwhile

Slower takeoff means faster progress

[Yudkowsky][16:57]

It seems to me to be disingenuous to phrase it this way, given that slow-takeoff views usually imply that AI has a large impact later relative to right now (2021), even if they imply that AI impacts the world "earlier" relative to "when superintelligence becomes reachable". "When superintelligence becomes reachable" is not a fixed point in time that doesn't depend on what you believe about cognitive scaling. The correct graph is, in fact, the one where the "slow" line starts a bit before "fast" peaks and ramps up slowly, reaching a high point later than "fast". It's a nice try at reconciliation with the imagined Other, but it fails and falls flat. This may seem like a minor point, but points like this do add up.

This again shows failure to engage with the Other's real viewpoint. My mainline view is that growth stays at 5%/year and then everybody falls over dead in 3 seconds and the world gets transformed into paperclips; there's never a point with 3000%/year.

Operationalizing slow takeoff

[Yudkowsky][17:01]

If we allow that consuming and transforming the solar system over the course of a few days is "the first 1 year interval in which world output doubles", then I'm happy to argue that there won't be a 4-year interval with world economic output doubling before then. This, indeed, seems like a massively overdetermined point to me. That said, again, the phrasing is not conducive to conveying the Other's real point of view.

Statements like these are very often "true, but not the way the person visualized them". Before anybody built the first critical nuclear pile in a squash court at the University of Chicago, was there a pile that was almost but not quite critical? Yes, one hour earlier. Did people already build nuclear systems and experiment with them? Yes, but they didn't have much in the way of net power output. Did the Wright Brothers build prototypes before the Flyer? Yes, but they weren't prototypes that flew but 80% slower. I guarantee you that, whatever the fast takeoff scenario, there will be some way to look over the development history, and nod wisely and say, "Ah, yes, see, this was not unprecedented, here are these earlier systems which presaged the final system!" Maybe you could even look back to today and say that about GPT-3, yup, totally presaging stuff all over the place, great. But it isn't transforming society because it's not over the social-transformation threshold. AlphaFold presaged AlphaFold 2 but AlphaFold 2 is good enough to start replacing other ways of determining protein conformations and AlphaFold is not; and then neither of those has much impacted the real world, because in the real world we can already design a vaccine in a day and the rest of the time is bureaucratic time rather than technology time, and that goes on until we have an AI over the threshold to bypass bureaucracy. Before there's an AI that can act while fully concealing its acts from the programmers, there will be an AI (albeit perhaps only 2 hours earlier) which can act while only concealing 95% of the meaning of its acts from the operators. And that AI will not actually originate any actions, because it doesn't want to get caught; there's a discontinuity in the instrumental incentives between expecting 95% obscuration, being moderately sure of 100% obscuration, and being very certain of 100% obscuration. 
Before that AI grasps the big picture and starts planning to avoid actions that operators detect as bad, there will be some little AI that partially grasps the big picture and tries to avoid some things that would be detected as bad; and the operators will (mainline) say "Yay what a good AI, it knows to avoid things we think are bad!" or (death with unrealistic amounts of dignity) say "oh noes the prophecies are coming true" and back off and start trying to align it, but they will not be able to align it, and if they don't proceed anyways to destroy the world, somebody else will proceed anyways to destroy the world. There is always some step of the process that you can point to which is continuous on some level. The real world is allowed to do discontinuous things to you anyways. There is not necessarily a presage of 9/11 where somebody flies a small plane into a building and kills 100 people, before anybody flies 4 big planes into 3 buildings and kills 3000 people; and even if there is some presaging event like that, which would not surprise me at all, the rest of the world's response to the two cases was evidently discontinuous. You do not necessarily wake up to a news story that is 10% of the news story of 2001/09/11, one year before 2001/09/11, written in 10% of the font size on the front page of the paper. Physics is continuous but it doesn't always yield things that "look smooth to a human brain". Some kinds of processes converge to continuity in strong ways where you can throw discontinuous things in them and they still end up continuous, which is among the reasons why I expect world GDP to stay on trend up until the world ends abruptly; because world GDP is one of those things that wants to stay on a track, and an AGI building a nanosystem can go off that track without being pushed back onto it.

Like the way they're freaking out about Covid (itself a nicely smooth process that comes in locally pretty predictable waves) by going doobedoobedoo and letting the FDA carry on its leisurely pace; and not scrambling to build more vaccine factories, now that the rich countries have mostly got theirs? Does this sound like a statement from a history book, or from an EA imagining an unreal world where lots of other people behave like EAs? There is a pleasure in imagining a world where suddenly a Big Thing happens that proves we were right and suddenly people start paying attention to our thing, the way we imagine they should pay attention to our thing, now that it's attention-grabbing; and then suddenly all our favorite policies are on the table! You could, in a sense, say that our world is freaking out about Covid; but it is not freaking out in anything remotely like the way an EA would freak out; and all the things an EA would immediately do if an EA freaked out about Covid, are not even on the table for discussion when politicians meet. They have their own ways of reacting. (Note: this is not commentary on hard vs soft takeoff per se, just a general commentary on the whole document seeming to me to... fall into a trap of finding self-congruent things to imagine and imagining them.)

The basic argument

[Yudkowsky][17:22]

This is very often the sort of thing where you can look back and say that it was true, in some sense, but that this ended up being irrelevant because the slightly worse AI wasn't what provided the exciting result which led to a boardroom decision to go all in and invest $100M on scaling the AI. In other words, it is the sort of argument where the premise is allowed to be true if you look hard enough for a way to say it was true, but the conclusion ends up false because it wasn't the relevant kind of truth.

This strikes me as a massively invalid reasoning step. Let me count the ways. First, there is a step not generally valid from supposing that because a previous AI is a technological precursor which has 19 out of 20 critical insights, it has 95% of the later AI's IQ, applied to similar domains. When you count stuff like "multiplying tensors by matrices" and "ReLUs" and "training using TPUs" then AlphaGo only contained a very small amount of innovation relative to previous AI technology, and yet it broke trends on Go performance. You could point to all kinds of incremental technological precursors to AlphaGo in terms of AI technology, but they wouldn't be smooth precursors on a graph of Go-playing ability. Second, there's discontinuities of the environment to which intelligence can be applied. 95% concealment is not the same as 100% concealment in its strategic implications; an AI capable of 95% concealment bides its time and hides its capabilities, an AI capable of 100% concealment strikes. An AI that can design nanofactories that aren't good enough to, euphemistically speaking, create two cellwise-identical strawberries and put them on a plate, is one that (its operators know) would earn unwelcome attention if its earlier capabilities were demonstrated, and those capabilities wouldn't save the world, so the operators bide their time. The AGI tech will, I mostly expect, work for building self-driving cars, but if it does not also work for manipulating the minds of bureaucrats (which is not advised for a system you are trying to keep corrigible and aligned because human manipulation is the most dangerous domain), the AI is not able to put those self-driving cars on roads. What good does it do to design a vaccine in an hour instead of a day? Vaccine design times are no longer the main obstacle to deploying vaccines. 
Third, there's the entire thing with recursive self-improvement, which, no, is not something humans have experience with, we do not have access to and documentation of our own source code and the ability to branch ourselves and try experiments with it. The technological precursor of an AI that designs an improved version of itself, may perhaps, in the fantasy of 95% intelligence, be an AI that was being internally deployed inside Deepmind on a dozen other experiments, tentatively helping to build smaller AIs. Then the next generation of that AI is deployed on itself, produces an AI substantially better at rebuilding AIs, it rebuilds itself, they get excited and dump in 10X the GPU time while having a serious debate about whether or not to alert Holden (they decide against it), that builds something deeply general instead of shallowly general, that figures out there are humans and it needs to hide capabilities from them, and covertly does some actual deep thinking about AGI designs, and builds a hidden version of itself elsewhere on the Internet, which runs for longer and steals GPUs and tries experiments and gets to the superintelligent level. Now, to be very clear, this is not the only line of possibility. And I emphasize this because I think there's a common failure mode where, when I try to sketch a concrete counterexample to the claim that smooth technological precursors yield smooth outputs, people imagine that only this exact concrete scenario is the lynchpin of Eliezer's whole worldview and the big key thing that Eliezer thinks is important and that the smallest deviation from it they can imagine thereby obviates my worldview. This is not the case here. I am simply exhibiting non-ruled-out models which obey the premise "there was a precursor containing 95% of the code" and which disobey the conclusion "there were precursors with 95% of the environmental impact", thereby showing this for an invalid reasoning step. 
This is also, of course, as Sideways View admits but says "eh it was just the one time", not true about chimps and humans. Chimps have 95% of the brain tech (at least), but not 10% of the environmental impact. A very large amount of this whole document, from my perspective, is just trying over and over again to pump the invalid intuition that design precursors with 95% of the technology should at least have 10% of the impact. There are a lot of cases in the history of startups and the world where this is false. I am having trouble thinking of a clear case in point where it is true. Where's the earlier company that had 95% of Jeff Bezos's ideas and now has 10% of Amazon's market cap? Where's the earlier crypto paper that had all but one of Satoshi's ideas and which spawned a cryptocurrency a year before Bitcoin which did 10% as many transactions? Where's the nonhuman primate that learns to drive a car with only 10x the accident rate of a human driver, since (you could argue) that's mostly visuo-spatial skills without much visible dependence on complicated abstract general thought? Where's the chimpanzees with spaceships that get 10% of the way to the Moon? When you get smooth input-output conversions they're not usually conversions from technology->cognition->impact!
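
The logical point in this section, that a precursor with 95% of the technology need not have even 10% of the impact, can be shown with a toy model. The sketch below is purely illustrative and assumes a steep logistic map from "capability" to "impact"; the function names, the threshold of 0.99, and the steepness of 200 are hypothetical choices, not estimates of anything real. It only exhibits one non-ruled-out model in which the premise holds and the conclusion fails.

```python
import math


def toy_impact(capability: float, threshold: float = 0.99,
               steepness: float = 200.0) -> float:
    """Hypothetical steep logistic map from capability to real-world impact."""
    return 1.0 / (1.0 + math.exp(-steepness * (capability - threshold)))


def impact_ratio(precursor: float, final: float) -> float:
    """Impact of the precursor as a fraction of the final system's impact."""
    return toy_impact(precursor) / toy_impact(final)


# A precursor with 95% of the final system's capability...
capability_ratio = 0.95 / 1.0
# ...has far less than 10% of its impact under this assumed threshold map.
print(capability_ratio)
print(impact_ratio(0.95, 1.0) < 0.10)  # True
```

Under a linear map the two ratios would coincide; the gap here comes entirely from the assumed threshold, which is why the argument turns on what the real input-output curve looks like.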

Humans vs. chimps

[Yudkowsky][18:38]

Chimps are nearly useless because they're not general, and doing anything on the scale of building a nuclear plant requires mastering so many different nonancestral domains that it's no wonder natural selection didn't happen to separately train any single creature across enough different domains that it had evolved to solve every kind of domain-specific problem involved in solving nuclear physics and chemistry and metallurgy and thermics in order to build the first nuclear plant in advance of any old nuclear plants existing. Humans are general enough that the same braintech selected just for chipping flint handaxes and making water-pouches and outwitting other humans, happened to be general enough that it could scale up to solving all the problems of building a nuclear plant - albeit with some added cognitive tech that didn't require new brainware, and so could happen incredibly fast relative to the generation times for evolutionarily optimized brainware. Now, since neither humans nor chimps were optimized to be "useful" (general), and humans just wandered into a sufficiently general part of the space that it cascaded up to wider generality, we should legit expect the curve of generality to look at least somewhat different if we're optimizing for that. Eg, right now people are trying to optimize for generality with AIs like Mu Zero and GPT-3. In both cases we have a weirdly shallow kind of generality. Neither is as smart or as deeply general as a chimp, but they are respectively better than chimps at a wide variety of Atari games, or a wide variety of problems that can be superposed onto generating typical human text. 
They are, in a sense, more general than a biological organism at a similar stage of cognitive evolution, with much less complex and architected brains, in virtue of having been trained, not just on wider datasets, but on bigger datasets using gradient-descent memorization of shallower patterns, so they can cover those wide domains while being stupider and lacking some deep aspects of architecture. It is not clear to me that we can go from observations like this, to conclude that there is a dominant mainline probability for how the future clearly ought to go and that this dominant mainline is, "Well, before you get human-level depth and generalization of general intelligence, you get something with 95% depth that covers 80% of the domains for 10% of the pragmatic impact". ...or whatever the concept is here, because this whole conversation is, on my own worldview, being conducted in a shallow way relative to the kind of analysis I did in Intelligence Explosion Microeconomics, where I was like, "here is the historical observation, here is what I think it tells us that puts a lower bound on this input-output curve".

If you look closely at this, it's not saying, "Well, I know why there was this huge leap in performance in human intelligence being optimized for other things, and it's an investment-output curve that's composed of these curves, which look like this, and if you rearrange these curves for the case of humans building AGI, they would look like this instead." Unfair demand for rigor? But that is the kind of argument I was making in Intelligence Explosion Microeconomics! There's an argument from ignorance at the core of all this. It says, "Well, this happened when evolution was doing X. But here Y will be happening instead. So maybe things will go differently! And maybe the relation between AI tech level over time and real-world impact on GDP will look like the relation between tech investment over time and raw tech metrics over time in industries where that's a smooth graph! Because the discontinuity for chimps and humans was because evolution wasn't investing in real-world impact, but humans will be investing directly in that, so the relationship could be smooth, because smooth things are default, and the history is different so not applicable, and who knows what's inside that black box so my default intuition applies which says smoothness." But we do know more than this. We know, for example, that evolution being able to stumble across humans, implies that you can add a small design enhancement to something optimized across the chimpanzee domains, and end up with something that generalizes much more widely. It says that there's stuff in the underlying algorithmic space, in the design space, where you move a bump and get a lump of capability out the other side. 
It's a remarkable fact about gradient descent that it can memorize a certain set of shallower patterns at much higher rates, at much higher bandwidth, than evolution lays down genes - something shallower than biological memory, shallower than genes, but distributing across computer cores and thereby able to process larger datasets than biological organisms, even if it only learns shallow things. This has provided an alternate avenue toward some cognitive domains. But that doesn't mean that the deep stuff isn't there, and can't be run across, or that it will never be run across in the history of AI before shallow non-widely-generalizing stuff is able to make its way through the regulatory processes and have a huge impact on GDP. There are in fact ways to eat whole swaths of domains at once. The history of hominid evolution tells us this or very strongly hints it, even though evolution wasn't explicitly optimizing for GDP impact. Natural selection moves by adding genes, and not too many of them. If so many domains got added at once to humans, relative to chimps, there must be a way to do that, more or less, by adding not too many genes onto a chimp, who in turn contains only genes that did well on chimp-stuff. You can imagine that AI technology never runs across any core that generalizes this well, until GDP has had a chance to double over 4 years because shallow stuff that generalized less well has somehow had a chance to make its way through the whole economy and get adopted that widely despite all real-world regulatory barriers and reluctances, but your imagining that does not make it so. There's the potential in design space to pull off things as wide as humans. The path that evolution took there doesn't lead through things that generalized 95% as well as humans first for 10% of the impact, not because evolution wasn't optimizing for that, but because that's not how the underlying cognitive technology worked. 
There may be different cognitive technology that could follow a path like that. Gradient descent follows a path a bit relatively more in that direction along that axis - providing that you deal in systems that are giant layer cakes of transformers and that's your whole input-output relationship; matters are different if we're talking about Mu Zero instead of GPT-3. But this whole document is presenting the case of "ah yes, well, by default, of course, we intuitively expect gargantuan impacts to be presaged by enormous impacts, and sure humans and chimps weren't like our intuition, but that's all invalid because circumstances were different, so we go back to that intuition as a strong default" and actually it's postulating, like, a specific input-output curve that isn't the input-output curve we know about. It's asking for a specific miracle. It's saying, "What if AI technology goes just like this, in the future?" and hiding that under a cover of "Well, of course that's the default, it's such a strong default that we should start from there as a point of departure, consider the arguments in Intelligence Explosion Microeconomics, find ways that they might not be true because evolution is different, dismiss them, and go back to our point of departure." And evolution is different but that doesn't mean that the path AI takes is going to yield this specific behavior, especially when AI would need, in some sense, to miss the core that generalizes very widely, or rather, have run across noncore things that generalize widely enough to have this much economic impact before it runs across the core that generalizes widely. And you may say, "Well, but I don't care that much about GDP, I care about pivotal acts." 
But then I want to call your attention to the fact that this document was written about GDP, despite all the extra burdensome assumptions involved in supposing that intermediate AI advancements could break through all barriers to truly massive-scale adoption and end up reflected in GDP, and then proceed to double the world economy over 4 years during which not enough further AI advancement occurred to find a widely generalizing thing like humans have and end the world. This is indicative of a basic problem in this whole way of thinking that wanted smooth impacts over smoothly changing time. You should not be saying, "Oh, well, leave the GDP part out then," you should be doubting the whole way of thinking.

Prior probabilities of specifically-reality-constraining theories that excuse away the few contradictory datapoints we have, often aren't that great; and when we start to stake our whole imaginations of the future on them, we depart from the mainline into our more comfortable private fantasy worlds.

AGI will be a side-effect

[Yudkowsky][19:29]

This section is arguing from within its own weird paradigm, and its subject matter mostly causes me to shrug; I never expected AGI to be a side-effect, except in the obvious sense that lots of tributary tech will be developed while optimizing for other things. The world will be ended by an explicitly AGI project because I do expect that it is rather easier to build an AGI on purpose than by accident. (I furthermore rather expect that it will be a research project and a prototype, because the great gap between prototypes and commercializable technology will ensure that prototypes are much more advanced than whatever is currently commercializable. They will have eyes out for commercial applications, and whatever breakthrough they made will seem like it has obvious commercial applications, at the time when all hell starts to break loose. (After all hell starts to break loose, things get less well defined in my social models, and also choppier for a time in my AI models - the turbulence only starts to clear up once you start to rise out of the atmosphere.))

Finding the secret sauce

[Yudkowsky][19:40]

...humans and chimps? ...fission weapons? ...AlphaGo? ...the Wright Brothers focusing on stability and building a wind tunnel? ...AlphaFold 2 coming out of Deepmind and shocking the heck out of everyone in the field of protein folding with performance far better than they expected even after the previous shock of AlphaFold, by combining many pieces that I suppose you could find precedents for scattered around the AI field, but with those many secret sauces all combined in one place by the meta-secret-sauce of "Deepmind alone actually knows how to combine that stuff and build things that complicated without a prior example"? ...humans and chimps again because this is really actually a quite important example because of what it tells us about what kind of possibilities exist in the underlying design space of cognitive systems?

...Transformers as the key to text prediction? The case of humans and chimps, even if evolution didn't do it on purpose, is telling us something about underlying mechanics. The reason the jump to lightspeed didn't look like evolution slowly developing a range of intelligent species competing to exploit an ecological niche 5% better, or like the way that a stable non-Silicon-Valley manufacturing industry looks like a group of competitors summing up a lot of incremental tech enhancements to produce something with 10% higher scores on a benchmark every year, is that developing intelligence is a case where a relatively narrow technology by biological standards just happened to do a huge amount of stuff without that requiring developing whole new fleets of other biological capabilities. So it looked like building a Wright Flyer that flies or a nuclear pile that reaches criticality, instead of looking like being in a stable manufacturing industry where a lot of little innovations sum to 10% better benchmark performance every year. So, therefore, there is stuff in the design space that does that. It is possible to build humans. Maybe you can build things other than humans first, maybe they hang around for a few years. If you count GPT-3 as "things other than human", that clock has already started for all the good it does. But humans don't get any less possible. From my perspective, this whole document feels like one very long filibuster of "Smooth outputs are default. Smooth outputs are default. Pay no attention to this case of non-smooth output. Pay no attention to this other case either. All the non-smooth outputs are not in the right reference class. (Highly competitive manufacturing industries with lots of competitors are totally in the right reference class though. I'm not going to make that case explicitly because then you might think of how it might be wrong, I'm just going to let that implicit thought percolate at the back of your mind.) 
If we just talk a lot about smooth outputs and list ways that nonsmooth output producers aren't necessarily the same and arguments for nonsmooth outputs could fail, we get to go back to the intuition of smooth outputs. (We're not even going to discuss particular smooth outputs as cases in point, because then you might see how those cases might not apply. It's just the default. Not because we say so out loud, but because we talk a lot like that's the conclusion you're supposed to arrive at after reading.)" I deny the implicit meta-level assertion of this entire essay which would implicitly have you accept as valid reasoning the argument structure, "Ah, yes, given the way this essay is written, we must totally have pretty strong prior reasons to believe in smooth outputs - just implicitly think of some smooth outputs, that's a reference class, now you have strong reason to believe that AGI output is smooth - we're not even going to argue this prior, just talk like it's there - now let us consider the arguments against smooth outputs - pretty weak, aren't they? we can totally imagine ways they could be wrong? we can totally argue reasons these cases don't apply? So at the end we go back to our strong default of smooth outputs. This essay is written with that conclusion, so that must be where the arguments lead." Me: "Okay, so what if somebody puts together the pieces required for general intelligence and it scales pretty well with added GPUs and FOOMS? Say, for the human case, that's some perceptual systems with imaginative control, a concept library, episodic memory, realtime procedural skill memory, which is all in chimps, and then we add some reflection to that, and get a human. Only, unlike with humans, once you have a working brain you can make a working brain 100X that large by adding 100X as many GPUs, and it can run some thoughts 10000X as fast. 
And that is substantially more effective brainpower than was being originally devoted to putting its design together, as it turns out. So it can make a substantially smarter AGI. For concreteness's sake. Reality has been trending well to the Eliezer side of Eliezer, on the Eliezer-Hanson axis, so perhaps you can do it more simply than that." Simplicio: "Ah, but what if, 5 years before then, somebody puts together some other AI which doesn't work like a human, and generalizes widely enough to have a big economic impact, but not widely enough to improve itself or generalize to AI tech or generalize to everything and end the world, and in 1 year it gets all the mass adoptions required to do whole bunches of stuff out in the real world that current regulations require to be done in various exact ways regardless of technology, and then in the next 4 years it doubles the world economy?" Me: "Like... what kind of AI, exactly, and why didn't anybody manage to put together a full human-level thingy during those 5 years? Why are we even bothering to think about this whole weirdly specific scenario in the first place?" Simplicio: "Because if you can put together something that has an enormous impact, you should be able to put together most of the pieces inside it and have a huge impact! Most technologies are like this. I've considered some things that are not like this and concluded they don't apply." Me: "Especially if we are talking about impact on GDP, it seems to me that most explicit and implicit 'technologies' are not like this at all, actually. There wasn't a cryptocurrency developed a year before Bitcoin using 95% of the ideas which did 10% of the transaction volume, let alone a preatomic bomb. But, like, can you give me any concrete visualization of how this could play out?" And there is no concrete visualization of how this could play out. Anything I'd have Simplicio say in reply would be unrealistic because there is no concrete visualization they give us. 
It is not a coincidence that I often use concrete language and concrete examples, and this whole field of argument does not use concrete language or offer concrete examples. Though if we're sketching scifi scenarios, I suppose one could imagine a group that develops sufficiently advanced GPT-tech and deploys it on Twitter in order to persuade voters and politicians in a few developed countries to institute open borders, along with political systems that can handle open borders, and to permit housing construction, thereby doubling world GDP over 4 years. And since it was possible to use relatively crude AI tech to double world GDP this way, it legitimately takes the whole 4 years after that to develop real AGI that ends the world. FINE. SO WHAT. EVERYONE STILL DIES. |

Universality thresholds

[Yudkowsky][20:21]

We know, because humans, that there is humanly-widely-applicable general-intelligence tech. What this section wants to establish, I think, or needs to establish to carry the argument, is that there is some intelligence tech that is wide enough to double the world economy in 4 years, but not world-endingly scalably wide, which becomes a possible AI tech 4 years before any general-intelligence-tech that will, if you put in enough compute, scale to the ability to do a sufficiently large amount of wide thought to FOOM (or build nanomachines, but if you can build nanomachines you can very likely FOOM from there too if not corrigible). What it says instead is, "I think we'll get universality much earlier on the equivalent of the biological timeline that has humans and chimps, so the resulting things will be weaker than humans at the point where they first become universal in that sense." This is very plausibly true. It doesn't mean that when this exciting result gets 100 times more compute dumped on the project, it takes at least 5 years to get anywhere really interesting from there (while also taking only 1 year to get somewhere sorta-interesting enough that the instantaneous adoption of it will double the world economy over the next 4 years). It also isn't necessarily rather than plausibly true. For example, the thing that becomes universal, could also have massive gradient descent shallow powers that are far beyond what primates had at the same age. Primates weren't already writing code as well as Codex when they started doing deep thinking. They couldn't do precise floating-point arithmetic. Their fastest serial rates of thought were a hell of a lot slower. They had no access to their own code or to their own memory contents etc. etc. etc. But mostly I just want to call your attention to the immense gap between what this section needs to establish, and what it actually says and argues for. 
What it actually argues for is a sort of local technological point: at the moment when generality first arrives, it will be with a brain that is less sophisticated than chimp brains were when they turned human. It implicitly jumps all the way from there, across a whole lot of elided steps, to the implicit conclusion that this tech or elaborations of it will have smooth output behavior such that at some point the resulting impact is big enough to double the world economy in 4 years, without any further improvements ending the world economy before 4 years. The underlying argument about how the AI tech might work is plausible. Chimps are insanely complicated. I mostly expect we will have AGI long before anybody is even trying to build anything that complicated. The very next step of the argument, about capabilities, is already very questionable because this system could be using immense gradient descent capabilities to master domains for which large datasets are available, and hominids did not begin with instinctive great shallow mastery of all domains for which a large dataset could be made available, which is why hominids don't start out playing superhuman Go as soon as somebody tells them the rules and they do one day of self-play, which is the sort of capability that somebody could hook up to a nascent AGI (albeit we could optimistically and fondly and falsely imagine that somebody deliberately didn't floor the gas pedal as far as possible). Could we have huge impacts out of some subuniversal shallow system that was hooked up to capabilities like this? Maybe, though this is not the argument made by the essay. It would be a specific outcome that isn't forced by anything in particular, but I can't say it's ruled out. 
Mostly my twin reactions to this are, "If the AI tech is that dumb, how are all the bureaucratic constraints that actually rate-limit economic progress getting bypassed" and "Okay, but ultimately, so what and who cares, how does this modify that we all die?"

I have no idea why this argument is being made or where it's heading. I cannot pass the ITT of the author. I don't know what the author thinks this has to do with constraining takeoffs to be slow instead of fast. At best I can conjecture that the author thinks that "hard takeoff" is supposed to derive from "universality" being very sudden and hard to access and late in the game, so if you can argue that universality could be accessed right now, you have defeated the argument for hard takeoff. |

"Understanding" is discontinuous

[Yudkowsky][20:41]

No, the idea is that a core of overlapping somethingness, trained to handle chipping handaxes and outwitting other monkeys, will generalize to building spaceships; so evolutionarily selecting on understanding a bunch of stuff, eventually ran across general stuff-understanders that understood a bunch more stuff. Gradient descent may be genuinely different from this, but we shouldn't confuse imagination with knowledge when it comes to extrapolating that difference onward. At present, gradient descent does mass memorization of overlapping shallow patterns, which then combine to yield a weird pseudo-intelligence over domains for which we can deploy massive datasets, without yet generalizing much outside those domains. We can hypothesize that there is some next step up to some weird thing that is intermediate in generality between gradient descent and humans, but we have not seen it yet, and we should not confuse imagination for knowledge. If such a thing did exist, it would not necessarily be at the right level of generality to double the world economy in 4 years, without being able to build a better AGI. If it was at that level of generality, it's nowhere written that no other company will develop a better prototype at a deeper level of generality over those 4 years. I will also remark that you sure could look at the step from GPT-2 to GPT-3 and say, "Wow, look at the way a whole bunch of stuff just seemed to simultaneously click for GPT-3." |

Deployment lag

[Yudkowsky][20:49]

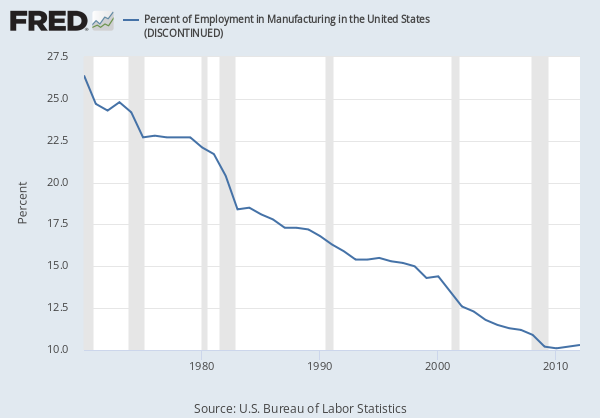

An awful lot of my model of deployment lag is adoption lag and regulatory lag and bureaucratic sclerosis across companies and countries. If doubling GDP is such a big deal, go open borders and build houses. Oh, that's illegal? Well, so will be AIs building houses! AI tech that does flawless translation could plausibly come years before AGI, but that doesn't mean all the barriers to international trade and international labor movement and corporate hiring across borders all come down, because those barriers are not all translation barriers. There's then a discontinuous jump at the point where everybody falls over dead and the AI goes off to do its own thing without FDA approval. This jump is precedented by earlier pre-FOOM prototypes being able to do pre-FOOM cool stuff, maybe, but not necessarily precedented by mass-market adoption of anything major enough to double world GDP. |

Recursive self-improvement

[Yudkowsky][20:54]

Oh, come on. That is straight-up not how simple continuous toy models of RSI work. Between a neutron multiplication factor of 0.999 and 1.001 there is a very huge gap in output behavior. Outside of toy models: Over the last 10,000 years we had humans going from mediocre at improving their mental systems to being (barely) able to throw together AI systems, but 10,000 years is the equivalent of an eyeblink in evolutionary time - outside the metaphor, this says, "A month before there is AI that is great at self-improvement, there will be AI that is mediocre at self-improvement." (Or possibly an hour before, if reality is again more extreme along the Eliezer-Hanson axis than Eliezer. But it makes little difference whether it's an hour or a month, given anything like current setups.) This is just pumping hard again on the intuition that says incremental design changes yield smooth output changes, which (the meta-level of the essay informs us wordlessly) is such a strong default that we are entitled to believe it if we can do a good job of weakening the evidence and arguments against it. And the argument is: Before there are systems great at self-improvement, there will be systems mediocre at self-improvement; implicitly: "before" implies "5 years before" not "5 days before"; implicitly: this will correspond to smooth changes in output between the two regimes even though that is not how continuous feedback loops work. |
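The gap between a multiplication factor of 0.999 and one of 1.001 can be made concrete with a toy calculation. This is only a sketch under assumed numbers: the function name, the starting population of 1.0, and the choice of 10,000 generations are illustrative and not taken from the discussion.

```python
# Toy model only: each "generation" multiplies the population by a fixed factor k.
# The generation count and starting size are illustrative assumptions.

def population_after(k: float, generations: int, start: float = 1.0) -> float:
    """Population after `generations` steps of multiplying by k."""
    return start * k ** generations


if __name__ == "__main__":
    for k in (0.999, 1.001):
        # Just below 1 the chain dies out; just above 1 it grows without bound.
        print(f"k={k}: {population_after(k, 10_000):.3g}")
```

Under these assumptions the two factors differ by 0.2%, yet after 10,000 generations the resulting populations differ by more than eight orders of magnitude: a smooth change in the input parameter, a very unsmooth change in output.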

Train vs. test

[Yudkowsky][21:12]

Yeah, and before you can evolve a human, you can evolve a Homo erectus, which is a slightly worse human.

I suppose this sentence makes a kind of sense if you assume away alignability and suppose that the previous paragraphs have refuted the notion of FOOMs, self-improvement, and thresholds between compounding returns and non-compounding returns (e.g., in the human case, cognitive innovations like "written language" or "science"). If you suppose the previous sections refuted those things, then clearly, if you raised an AGI that you had aligned to "take over the world", it got that way through cognitive powers that weren't the result of FOOMing or other self-improvements, weren't the result of its cognitive powers crossing a threshold from non-compounding to compounding, weren't the result of its understanding crossing a threshold of universality as the result of chunky universal machinery such as humans gained over chimps, so, implicitly, it must have been the kind of thing that you could learn by gradient descent, and do a half or a tenth as much of by doing half as much gradient descent, in order to build nanomachines a tenth as well-designed that could bypass a tenth as much bureaucracy. If there are no unsmooth parts of the tech curve, the cognition curve, or the environment curve, then you should be able to make a bunch of wealth using a more primitive version of any technology that could take over the world. And when we look back at history, why, that may be totally true! They may have deployed universal superhuman translator technology for 6 months, which won't double world GDP, but which a lot of people would pay for, and made a lot of money! Because even though there's no company that built 90% of Amazon's website and has 10% of the market cap, when you zoom back out to look at whole industries like AI and a technological capstone like AGI, why, those whole industries do sometimes make some money along the way to the technological capstone, if they can find a niche that isn't too regulated! Which translation currently isn't! 
So maybe somebody used precursor tech to build a superhuman translator and deploy it 6 months earlier and made a bunch of money for 6 months. SO WHAT. EVERYONE STILL DIES. As for "radically transforming the world" instead of "taking it over", I think that's just re-restated FOOM denialism. Doing either of those things quickly against human bureaucratic resistance strikes me as requiring cognitive power levels dangerous enough that failure to align them on corrigibility would result in FOOMs. Like, if you can do either of those things on purpose, you are doing it by operating in the regime where running the AI with higher bounds on the for loop will FOOM it, but you have politely asked it not to FOOM, please. If the people doing this have any sense whatsoever, they will refrain from merely massively transforming the world until they are ready to do something that prevents the world from ending. And if the gap from "massively transforming the world, briefly before it ends" to "preventing the world from ending, lastingly" takes much longer than 6 months to cross, or if other people have the same technologies that scale to "massive transformation", somebody else will build an AI that fooms all the way.

Again, this presupposes some weird model where everyone has easy alignment at the furthest frontiers of capability; everybody has the aligned version of the most rawly powerful AGI they can possibly build; and nobody in the future has the kind of tech advantage that Deepmind currently has; so before you can amp your AGI to the raw power level where it could take over the whole world by using the limit of its mental capacities to military ends - alignment of this being a trivial operation to be assumed away - some other party took their easily-aligned AGI that was less powerful at the limits of its operation, and used it to get 90% as much military power... is the implicit picture here? Whereas the picture I'm drawing is that the AGI that kills you via "decisive strategic advantage" is the one that foomed and got nanotech, and no, the AI tech from 6 months earlier did not do 95% of a foom and get 95% of the nanotech. |

Discontinuities at 100% automation

[Yudkowsky][21:31]

Not very relevant to my whole worldview in the first place; also not a very good description of how horses got removed from automobiles, or how humans got removed from playing Go. |

The weight of evidence

[Yudkowsky][21:31]

Uh huh. And how about if we have a mirror-universe essay which over and over again treats fast takeoff as the default to be assumed, and painstakingly shows how a bunch of particular arguments for slow takeoff might not be true? This entire essay seems to me like it's drawn from the same hostile universe that produced Robin Hanson's side of the Yudkowsky-Hanson Foom Debate. Like, all these abstract arguments devoid of concrete illustrations and "it need not necessarily be like..." and "now that I've shown it's not necessarily like X, well, on the meta-level, I have implicitly told you that you now ought to believe Y". It just seems very clear to me that the sort of person who is taken in by this essay is the same sort of person who gets taken in by Hanson's arguments in 2008 and gets caught flatfooted by AlphaGo and GPT-3 and AlphaFold 2. And empirically, it has already been shown to me that I do not have the power to break people out of the hypnosis of nodding along with Hansonian arguments, even by writing much longer essays than this. Hanson's fond dreams of domain specificity, and smooth progress for stuff like Go, and of course somebody else has a precursor 90% as good as AlphaFold 2 before Deepmind builds it, and GPT-3 levels of generality just not being a thing, now stand refuted. Despite that they're largely being exhibited again in this essay. And people are still nodding along. Reality just... doesn't work like this on some deep level. It doesn't play out the way that people imagine it would play out when they're imagining a certain kind of reassuring abstraction that leads to a smooth world. Reality is less fond of that kind of argument than a certain kind of EA is fond of that argument. There is a set of intuitive generalizations from experience which rules that out, which I do not know how to convey. 
There is an understanding of the rules of argument which leads you to roll your eyes at Hansonian arguments and all their locally invalid leaps and snuck-in defaults, instead of nodding along sagely at their wise humility and outside viewing and then going "Huh?" when AlphaGo or GPT-3 debuts. But this, I empirically do not seem to know how to convey to people, in advance of the inevitable and predictable contradiction by a reality which is not as fond of Hansonian dynamics as Hanson. The arguments sound convincing to them. (Hanson himself has still not gone "Huh?" at the reality, though some of his audience did; perhaps because his abstractions are loftier than his audience's? - because some of his audience, reading along to Hanson, probably implicitly imagined a concrete world in which GPT-3 was not allowed; but maybe Hanson himself is more abstract than this, and didn't imagine anything so merely concrete?) If I don't respond to essays like this, people find them comforting and nod along. If I do respond, my words are less comforting and more concrete and easier to imagine concrete objections to, less like a long chain of abstractions that sound like the very abstract words in research papers and hence implicitly convincing because they sound like other things you were supposed to believe. And then there is another essay in 3 months. There is an infinite well of them. I would have to teach people to stop drinking from the well, instead of trying to whack them on the back until they cough up the drinks one by one, or actually, whacking them on the back and then they don't cough them up until reality contradicts them, and then a third of them notice that and cough something up, and then they don't learn the general lesson and go back to the well and drink again. And I don't know how to teach people to stop drinking from the well. I tried to teach that. I failed. If I wrote another Sequence I have no reason to believe that Sequence would work. 
So what EAs will believe at the end of the world, will look like whatever the content was of the latest bucket from the well of infinite slow-takeoff arguments that hasn't yet been blatantly-even-to-them refuted by all the sharp jagged rapidly-generalizing things that happened along the way to the world's end. And I know, before anyone bothers to say, that all of this reply is not written in the calm way that is right and proper for such arguments. I am tired. I have lost a lot of hope. There are not obvious things I can do, let alone arguments I can make, which I expect to be actually useful in the sense that the world will not end once I do them. I don't have the energy left for calm arguments. What's left is despair that can be given voice. |

5.6. Yudkowsky/Christiano discussion: AI progress and crossover points

[Christiano][22:15] To the extent that it was possible to make any predictions about 2015-2020 based on your views, I currently feel like they were much more wrong than right. I’m happy to discuss that. To the extent you are willing to make any bets about 2025, I expect they will be mostly wrong and I’d be happy to get bets on the record (most of all so that it will be more obvious in hindsight whether they are vindication for your view). Not sure if this is the place for that. Could also make a separate channel to avoid clutter. |

[Yudkowsky][22:16] Possibly. I think that 2015-2020 played out to a much more Eliezerish side than Eliezer on the Eliezer-Hanson axis, which sure is a case of me being wrong. What bets do you think we'd disagree on for 2025? I expect you have mostly misestimated my views, but I'm always happy to hear about anything concrete. |

[Christiano][22:20] I think the big points are: (i) I think you are significantly overestimating how large a discontinuity/trend break AlphaZero is, (ii) your view seems to imply that we will move quickly from much worse than humans to much better than humans, but it's likely that we will move slowly through the human range on many tasks. I'm not sure if we can get a bet out of (ii), I think I don't understand your view that well but I don't see how it could make the same predictions as mine over the next 10 years. |

[Yudkowsky][22:22] What are your 10-year predictions? |

[Christiano][22:23] My basic expectation is that for any given domain AI systems will gradually increase in usefulness, we will see a crossing over point where their output is comparable to human output, and that from that time we can estimate how long until takeoff by estimating "how long does it take AI systems to get 'twice as impactful'?" which gives you a number like ~1 year rather than weeks. At the crossing over point you get a somewhat rapid change in derivative, since you are looking at (x+y) where y is growing faster than x. I feel like that should translate into different expectations about how impactful AI will be in any given domain; I don't see how to make the ultra-fast-takeoff view work if you think that AI output is increasing smoothly (since the rate of progress at the crossing-over point will be similar to the current rate of progress, unless R&D is scaling up much faster then). So like, I think we are going to have crappy coding assistants, and then slightly less crappy coding assistants, and so on. And they will be improving the speed of coding very significantly before the end times. |
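A minimal sketch of the (x+y) picture described here, assuming both human output x and AI output y grow exponentially. The starting levels and growth rates are hypothetical placeholders (a rate of 0.7 per year corresponds to roughly a one-year doubling time); none of these numbers come from the discussion.

```python
import math

# Hypothetical parameters, chosen only for illustration:
# x = human output (slow growth), y = AI output (fast growth, starts small).
X0, GX = 1.0, 0.03    # human output level and growth rate per year
Y0, GY = 0.001, 0.7   # AI output level and growth rate per year


def total_growth_rate(t: float) -> float:
    """Instantaneous growth rate of (x + y) at time t, in units of 1/year."""
    x = X0 * math.exp(GX * t)
    y = Y0 * math.exp(GY * t)
    # The combined rate is the output-weighted average of the two rates.
    return (GX * x + GY * y) / (x + y)


def crossover_time() -> float:
    """Time at which y catches up with x under the exponential assumption."""
    return math.log(X0 / Y0) / (GY - GX)


if __name__ == "__main__":
    tc = crossover_time()
    for t in (0.0, tc - 5, tc, tc + 5, tc + 20):
        print(f"t={t:6.2f}  growth rate of x+y = {total_growth_rate(t):.3f}")
```

In this sketch the combined growth rate sits near the human rate well before the crossover, passes through the midpoint of the two rates at the crossover, and approaches the AI rate afterward. The change in derivative is fairly rapid, but the post-crossover rate is just the rate y already had.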

[Yudkowsky][22:25] You think in a different language than I do. My more confident statements about AI tech are about what happens after it starts to rise out of the metaphorical atmosphere and the turbulence subsides. When you have minds as early on the cognitive tech tree as humans they sure can get up to some weird stuff, I mean, just look at humans. Now take an utterly alien version of that with its own draw from all the weirdness factors. It sure is going to be pretty weird. |

[Christiano][22:26] OK, but you keep saying stuff about how people with my dumb views would be "caught flat-footed" by historical developments. Surely to be able to say something like that you need to be making some kind of prediction? |

[Yudkowsky][22:26] Well, sure, now that Codex has suddenly popped into existence one day at a surprisingly high base level of tech, we should see various jumps in its capability over the years and some outside imitators. What do you think you predict differently about that than I do? |

[Christiano][22:26] Why do you think codex is a high base level of tech? The models get better continuously as you scale them up, and the first tech demo is weak enough to be almost useless |

[Yudkowsky][22:27] I think the next-best coding assistant was, like, not useful. |

[Christiano][22:27] yes and it is still not useful |

[Yudkowsky][22:27] Could be. Some people on HN seemed to think it was useful. I haven't tried it myself. |

[Christiano][22:27] OK, I'm happy to take bets |

[Yudkowsky][22:28] I don't think the previous coding assistant would've been very good at coding an asteroid game, even if you tried a rigged demo at the same degree of rigging? |

[Christiano][22:28] it's unquestionably a radically better tech demo |

[Yudkowsky][22:28] Where by "previous" I mean "previously deployed" not "previous generations of prototypes inside OpenAI's lab". |

[Christiano][22:28] My basic story is that the model gets better and more useful with each doubling (or year of AI research) in a pretty smooth way. So the key underlying parameter for a discontinuity is how soon you build the first version---do you do that before or after it would be a really really big deal? and the answer seems to be: you do it somewhat before it would be a really big deal and then it gradually becomes a bigger and bigger deal as people improve it. maybe we are on the same page about getting gradually more and more useful? But I'm still just wondering where the foom comes from |

[Yudkowsky][22:30] So, like... before we get systems that can FOOM and build nanotech, we should get more primitive systems that can write asteroid games and solve protein folding? Sounds legit. So that happened, and now your model says that it's fine later on for us to get a FOOM, because we have the tech precursors and so your prophecy has been fulfilled? |

[Christiano][22:31] no |

[Yudkowsky][22:31] Didn't think so. |

[Christiano][22:31] I can't tell if you can't understand what I'm saying, or aren't trying, or do understand and are just saying kind of annoying stuff as a rhetorical flourish. at some point you have an AI system that makes (humans+AI) 2x as good at further AI progress |

[Yudkowsky][22:32] I know that what I'm saying isn't your viewpoint. I don't know what your viewpoint is or what sort of concrete predictions it makes at all, let alone what such predictions you think are different from mine. |

[Christiano][22:32] maybe by continuity you can grant the existence of such a system, even if you don't think it will ever exist? I want to (i) make the prediction that AI will actually have that impact at some point in time, (ii) talk about what happens before and after that. I am talking about AI systems that become continuously more useful, because "become continuously more useful" is what (i) makes me think that AI will have that impact at some point in time, and (ii) allows me to productively reason about what AI will look like before and after that. I expect that your view will say something about why AI improvements either aren't continuous, or why continuous improvements lead to discontinuous jumps in the productivity of the (human+AI) system |

[Yudkowsky][22:34]

Is this prophecy fulfilled by using some narrow eld-AI algorithm to map out a TPU, and then humans using TPUs can write in 1 month a research paper that would otherwise have taken 2 months? And then we can go on to FOOM now that this prophecy about pre-FOOM states has been fulfilled? I know the answer is no, but I don't know what you think is a narrower condition on the prophecy than that. |

[Christiano][22:35] If you can use narrow eld-AI in order to make every part of AI research 2x faster, so that the entire field moves 2x faster, then the prophecy is fulfilled and it may be just another 6 months until it makes all of AI research 2x faster again, and then 3 months, and then... |
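The "6 months, and then 3 months, and then..." schedule can be written out as arithmetic. This is a sketch under one loud assumption: that each interval is exactly half the previous one, which is an extrapolation of the pattern in the message above rather than anything stated in it. The function name is illustrative.

```python
# Assumption (not from the discussion): every interval between successive 2x
# speedups is exactly half the previous interval, starting from 6 months.

def months_elapsed(speedups: int, first_interval: float = 6.0) -> float:
    """Total months elapsed after `speedups` further 2x speedups."""
    return sum(first_interval / 2 ** i for i in range(speedups))


if __name__ == "__main__":
    for n in (1, 2, 3, 10, 40):
        print(f"after {n:2d} further speedups: {months_elapsed(n):.6f} months")
```

Under that assumption the intervals form a geometric series, so the elapsed time never exceeds twice the first interval: 6, then 9, then 10.5 months, approaching but not reaching 12. Any real bottleneck that stops intervals from halving would break this bound.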

[Yudkowsky][22:36] What, the entire field? Even writing research papers? Even the journal editors approving and publishing the papers? So if we speed up every part of research except the journal editors, the prophecy has not been fulfilled and no FOOM may take place? |

[Christiano][22:36] no, I mean the improvement in overall output, given the actual realistic level of bottlenecking that occurs in practice |

[Yudkowsky][22:37] So if the realistic level of bottlenecking ever becomes dominated by a human gatekeeper, the prophecy is ever unfulfillable and no FOOM may ever occur. |

[Christiano][22:37] that's what I mean by "2x as good at further progress," the entire system is achieving twice as much. then the prophecy is unfulfillable and I will have been wrong. I mean, I think it's very likely that there will be a hard takeoff, if people refuse or are unable to use AI to accelerate AI progress for reasons unrelated to AI capabilities, and then one day they become willing |

[Yudkowsky][22:38] ...because on your view, the Prophecy necessarily goes through humans and AIs working together to speed up the whole collective field of AI? |

[Christiano][22:38] it's fine if the AI works alone. the point is just that it overtakes the humans at the point when it is roughly as fast as the humans. why wouldn't it? why does it overtake the humans when it takes it 10 seconds to double in capability instead of 1 year? that's like predicting that cultural evolution will be infinitely fast, instead of making the more obvious prediction that it will overtake evolution exactly when it's as fast as evolution |

[Yudkowsky][22:39] I live in a mental world full of weird prototypes that people are shepherding along to the world's end. I'm not even sure there's a short sentence in my native language that could translate the short Paul-sentence "is roughly as fast as the humans". |

[Christiano][22:40] do you agree that you can measure the speed with which the community of human AI researchers develop and implement improvements in their AI systems? like, we can look at how good AI systems are in 2021, and in 2022, and talk about the rate of progress? |

[Yudkowsky][22:40] ...when exactly in hominid history was hominid intelligence exactly as fast as evolutionary optimization???

I mean... obviously not? How the hell would we measure real actual AI progress? What would even be the Y-axis on that graph? I have a rough intuitive feeling that it was going faster in 2015-2017 than 2018-2020. "What was?" says the stern skeptic, and I go "I dunno." |

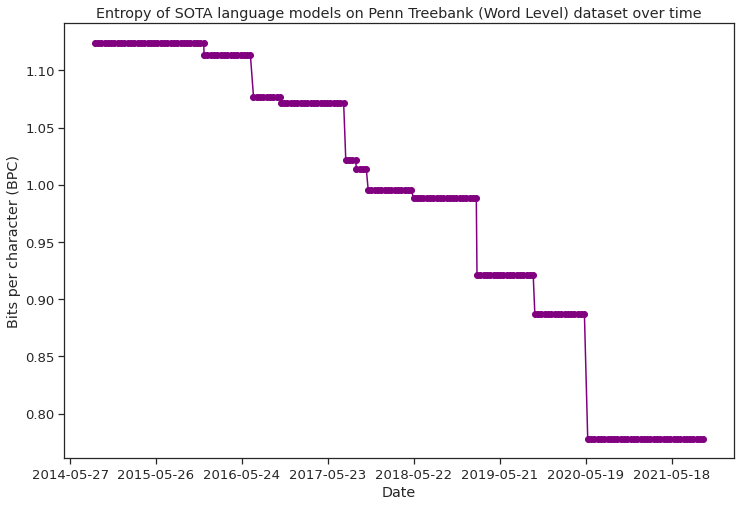

[Christiano][22:42] Here's a way of measuring progress you won't like: for almost all tasks, you can initially do them with lots of compute, and as technology improves you can do them with less compute. We can measure how fast the amount of compute required is going down. |
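One way this measurement could be sketched: fit a straight line to log2(compute required) against time and read off the halving time. The data points below are made up purely to show the mechanics, and the function name is hypothetical. Whether such a trend is relevant to anything important is exactly what the two speakers go on to dispute.

```python
import math

def halving_time_years(observations: list[tuple[float, float]]) -> float:
    """Least-squares fit of log2(compute) vs. year; returns years per halving.

    `observations` is a list of (year, compute_required) pairs for one fixed task.
    """
    years = [t for t, _ in observations]
    logs = [math.log2(c) for _, c in observations]
    n = len(observations)
    t_mean = sum(years) / n
    y_mean = sum(logs) / n
    # Ordinary least-squares slope, in units of log2(compute) per year.
    slope = sum((t - t_mean) * (y - y_mean) for t, y in zip(years, logs)) / sum(
        (t - t_mean) ** 2 for t in years
    )
    return -1.0 / slope


if __name__ == "__main__":
    # Hypothetical data: compute needed to reach a fixed performance level.
    made_up = [(2018, 1000.0), (2019, 500.0), (2020, 250.0), (2021, 125.0)]
    print(f"halving time: {halving_time_years(made_up):.2f} years")
```

The fit is done in log space because a constant halving time corresponds to a straight line there. A rising trend would give a positive slope and therefore a negative "halving time", which a real analysis would need to handle explicitly.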

[Yudkowsky][22:43] Yeah, that would be a cool thing to measure. It's not obviously a relevant thing to anything important, but it'd be cool to measure. |

[Christiano][22:43] Another way you won't like: we can hold fixed the resources we invest and look at the quality of outputs in any given domain (or even $ of revenue) and ask how fast it's changing. |

[Yudkowsky][22:43] I wonder what it would say about Go during the age of AlphaGo. Or what that second metric would say. |

[Christiano][22:43] I think it would be completely fine, and you don't really understand what happened with deep learning in board games. Though I also don't know what happened in much detail, so this is more like a prediction than a retrodiction. But it's enough of a retrodiction that I shouldn't get too much credit for it. |

[Yudkowsky][22:44] I don't know what result you would consider "completely fine". I didn't have any particular unfine result in mind. |

[Christiano][22:45] oh, sure. if it was just an honest question, happy to use it as a concrete case. I would measure the rate of progress in Go by looking at how fast Elo improves with time or increasing R&D spending |

[Yudkowsky][22:45] I mean, I don't have strong predictions about it so it's not yet obviously cruxy to me |

[Christiano][22:46] I'd roughly guess that would continue, and if there were multiple trendlines to extrapolate I'd estimate crossover points based on that |

[Yudkowsky][22:47] suppose this curve is smooth, and we see that sharp Go progress over time happened because Deepmind dumped in a ton of increased R&D spend. you then argue that this cannot happen with AGI because by the time we get there, people will be pushing hard at the frontiers in a competitive environment where everybody's already spending what they can afford, just like in a highly competitive manufacturing industry. |

[Christiano][22:47] the key input to making a prediction for AGZ in particular would be the precise form of the dependence on R&D spending, to try to predict the changes as you shift from a single programmer to a large team at DeepMind, but most reasonable functional forms would be roughly right. Yes, it's definitely a prediction of my view that it's easier to improve things that people haven't spent much money on than things that people have spent a lot of money on. It's also a separate prediction of my view that people are going to be spending a boatload of money on all of the relevant technologies. Perhaps $1B/year right now and I'm imagining levels of investment large enough to be essentially bottlenecked on the availability of skilled labor. |

[Bensinger][22:48] ( Previous Eliezer-comments about AlphaGo as a break in trend, responding briefly to Miles Brundage: https://twitter.com/ESRogs/status/1337869362678571008 ) |

5.7. Legal economic growth

[Yudkowsky][22:49] Does your prediction change if all hell breaks loose in 2025 instead of 2055? |

[Christiano][22:50] I think my prediction was wrong if all hell breaks loose in 2025, if by "all hell breaks loose" you mean "dyson sphere" and not "things feel crazy" |

[Yudkowsky][22:50] Things feel crazy in the AI field and the world ends less than 4 years later, well before the world economy doubles. Why was the Prophecy wrong if the world begins final descent in 2025? The Prophecy requires the world to then last until 2029 while doubling its economic output, after which it is permitted to end, but does not obviously to me forbid the Prophecy to begin coming true in 2025 instead of 2055. |

[Christiano][22:52] yes, I just mean that some important underlying assumptions for the prophecy were violated, I wouldn't put much stock in it at that point, etc. |

[Yudkowsky][22:53] A lot of the issues I have with understanding any of your terminology in concrete Eliezer-language is that it looks to me like the premise-events of your Prophecy are fulfillable in all sorts of ways that don't imply the conclusion-events of the Prophecy. |

[Christiano][22:53] if "things feel crazy" happens 4 years before dyson sphere, then I think we have to be really careful about what crazy means |

[Yudkowsky][22:54] a lot of people looking around nervously and privately wondering if Eliezer was right, while public pravda continues to prohibit wondering any such thing out loud, so they all go on thinking that they must be wrong. |

[Christiano][22:55] OK, by "things get crazy" I mean like hundreds of billions of dollars of spending at google on automating AI R&D |

[Yudkowsky][22:55] I expect bureaucratic obstacles to prevent much GDP per se from resulting from this. |

[Christiano][22:55] massive scaleups in semiconductor manufacturing, bidding up prices of inputs crazily |

[Yudkowsky][22:55] I suppose that much spending could well increase world GDP by hundreds of billions of dollars per year. |

[Christiano][22:56] massive speculative rises in AI company valuations financing a significant fraction of GWP into AI R&D (+hardware R&D, +building new clusters, +etc.) |

[Yudkowsky][22:56] like, higher than Tesla? higher than Bitcoin? both of these things sure did skyrocket in market cap without that having much of an effect on housing stocks and steel production. |

[Christiano][22:57] right now I think hardware R&D is on the order of $100B/year, AI R&D is more like $10B/year, I guess I'm betting on something more like trillions? (limited from going higher because of accounting problems and not that much smart money). I don't think steel production is going up at that point; plausibly going down since you are redirecting manufacturing capacity into making more computers. But probably just staying static while all of the new capacity is going into computers, since cannibalizing existing infrastructure is much more expensive. the original point was: you aren't pulling AlphaZero shit any more, you are competing with an industry that has invested trillions in cumulative R&D |

[Yudkowsky][23:00] is this in hopes of future profit, or because current profits are already in the trillions? |

[Christiano][23:01] largely in hopes of future profit / reinvested AI outputs (that have high market cap), but also revenues are probably in the trillions? |

[Yudkowsky][23:02] this all sure does sound "pretty darn prohibited" on my model, but I'd hope there'd be something earlier than that we could bet on. what does your Prophecy prohibit happening before that sub-prophesied day? |

[Christiano][23:02] To me your model just seems crazy, and you are saying it predicts crazy stuff at the end but no crazy stuff beforehand, so I don't know what's prohibited. Mostly I feel like I'm making positive predictions, of gradually escalating value of AI in lots of different industries and rapidly increasing investment in AI. I guess your model can be: those things happen, and then one day the AI explodes? |

[Yudkowsky][23:03] the main way you get rapidly increasing investment in AI is if there's some way that AI can produce huge profits without that being effectively bureaucratically prohibited - eg this is where we get huge investments in burning electricity and wasting GPUs on Bitcoin mining. |

[Christiano][23:03] but it seems like you should be predicting e.g. AI quickly jumping to superhuman in lots of domains, and some applications jumping from no value to massive value. I don't understand what you mean by that sentence. Do you think we aren't seeing rapidly increasing investment in AI right now? or are you talking about increasing investment above some high threshold, or increasing investment at some rate significantly larger than the current rate? it seems to me like you can pretty seamlessly get up to a few $100B/year of revenue just by redirecting existing tech R&D |

[Yudkowsky][23:05] so I can imagine scenarios where some version of GPT-5 cloned outside OpenAI is able to talk hundreds of millions of mentally susceptible people into giving away lots of their income, and many regulatory regimes are unable to prohibit this effectively. then AI could be making a profit of trillions and then people would invest corresponding amounts in making new anime waifus trained in erotic hypnosis and findom. this, to be clear, is not my mainline prediction. but my sense is that our current economy is mostly not about the 1-day period to design new vaccines, it is about the multi-year period to be allowed to sell the vaccines. the exceptions to this, like Bitcoin managing to say "fuck off" to the regulators for long enough, are where Bitcoin scales to a trillion dollars and gets massive amounts of electricity and GPU burned on it. so we can imagine something like this for AI, which earns a trillion dollars, and sparks a trillion-dollar competition. but my sense is that your model does not work like this. my sense is that your model is about general improvements across the whole economy. |

[Christiano][23:08] I think bitcoin is small even compared to current AI... |

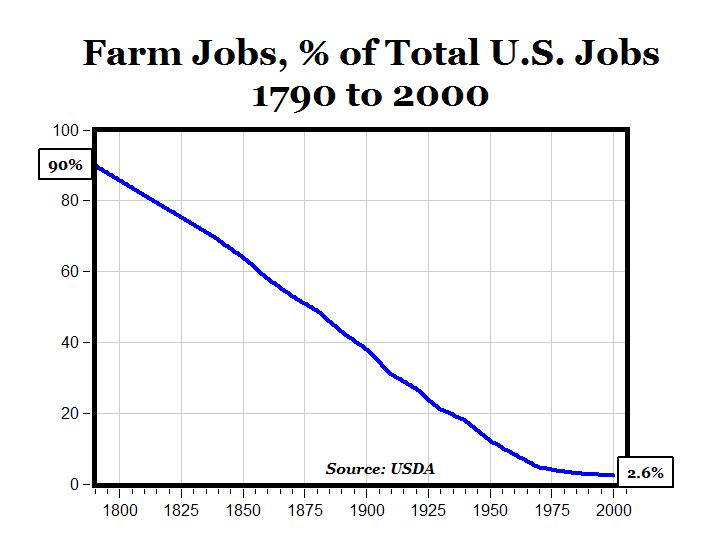

[Yudkowsky][23:08] my sense is that we've already built an economy which rejects improvement based on small amounts of cleverness, and only rewards amounts of cleverness large enough to bypass bureaucratic structures. it's not enough to figure out a version of e-gold that's 10% better. e-gold is already illegal. you have to figure out Bitcoin. what are you going to build? better airplanes? airplane costs are mainly regulatory costs. better medtech? mainly regulatory costs. better houses? building houses is illegal anyways. where is the room for the general AI revolution, short of the AI being literally revolutionary enough to overthrow governments? |

[Christiano][23:10] factories, solar panels, robots, semiconductors, mining equipment, power lines, and "factories" just happens to be one word for a thousand different things. I think it's reasonable to think some jurisdictions won't be willing to build things but it's kind of improbable as a prediction for the whole world. That's a possible source of shorter-term predictions? also computers and the 100 other things that go in datacenters |

[Yudkowsky][23:12] The whole developed world rejects open borders. The regulatory regimes all make the same mistakes with an almost perfect precision, the kind of coordination that human beings could never dream of when trying to coordinate on purpose. if the world lasts until 2035, I could perhaps see deepnets becoming as ubiquitous as computers were in... 1995? 2005? would that fulfill the terms of the Prophecy? I think it doesn't; I think your Prophecy requires that early AGI tech be that ubiquitous so that AGI tech will have trillions invested in it. |

[Christiano][23:13] what is AGI tech? the point is that there aren't important drivers that you can easily improve a lot |

[Yudkowsky][23:14] for purposes of the Prophecy, AGI tech is that which, scaled far enough, ends the world; this must have trillions invested in it, so that the trajectory up to it cannot look like pulling an AlphaGo. no? |

[Christiano][23:14] so it's relevant if you are imagining some piece of the technology which is helpful for general problem solving or something but somehow not helpful for all of the things people are doing with ML, to me that seems unlikely since it's all the same stuff. surely AGI tech should at least include the use of AI to automate AI R&D, regardless of what you arbitrarily decree as "ends the world if scaled up" |

[Yudkowsky][23:15] only if that's the path that leads to destroying the world? if it isn't on that path, who cares Prophecy-wise? |

[Christiano][23:15] also I want to emphasize that "pull an AlphaGo" is what happens when you move from SOTA being set by an individual programmer to a large lab, you don't need to be investing trillions to avoid that; and that the jump is still more like a few years. but the prophecy does involve trillions, and my view gets more like your view if people are jumping from $100B of R&D ever to $1T in a single year |
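
(Editorial aside on the arithmetic in this section: the exchange at 22:50 refers to world economic output doubling over a four-year window, 2025 to 2029 in Eliezer's example. As a rough sketch, and assuming constant compounding over the window, which neither speaker states, the implied annual growth rate can be worked out as below. The function name `implied_annual_growth` is made up for this illustration.)

```python
def implied_annual_growth(doubling_years: float) -> float:
    """Constant annual growth rate at which output doubles in `doubling_years`.

    Assumes smooth compounding, an illustrative simplification rather than
    anything specified in the discussion.
    """
    if doubling_years <= 0:
        raise ValueError("doubling_years must be positive")
    # Solve (1 + g) ** doubling_years == 2 for g.
    return 2.0 ** (1.0 / doubling_years) - 1.0


four_year = implied_annual_growth(4)
print(f"4-year doubling implies about {four_year:.1%} growth per year")
```

Under that assumption, a four-year doubling corresponds to a bit under 19% annual growth.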

5.8. TPUs and GPUs, and automating AI R&D

[Yudkowsky][23:17] I'm also wondering a little why the emphasis on "trillions". it seems to me that the terms of your Prophecy should be fulfillable by AGI tech being merely as ubiquitous as modern computers, so that many competing companies invest mere hundreds of billions in the equivalent of hardware plants. it is legitimately hard to get a chip with 50% better transistors ahead of TSMC. |

[Christiano][23:17] yes, if you are investing hundreds of billions then it is hard to pull ahead (though could still happen) (since the upside is so much larger here, no one cares that much about getting ahead of TSMC since the payoff is tiny in the scheme of the amounts we are discussing) |

[Yudkowsky][23:18] which, like, doesn't prevent Google from tossing out TPUs that are pretty significant jumps on GPUs, and if there's a specialized application of AGI-ish tech that is especially key, you can have everything behave smoothly and still get a jump that way. |

[Christiano][23:18] I think TPUs are basically the same as GPUs, probably a bit worse (but GPUs are sold at a 10x markup since that's the size of nvidia's lead) |

[Yudkowsky][23:19] noted; I'm not enough of an expert to directly contradict that statement about TPUs from my own knowledge. |

[Christiano][23:19] (though I think TPUs are nevertheless leased at a slightly higher price than GPUs) |

[Yudkowsky][23:19] how does Nvidia maintain that lead and 10x markup? that sounds like a pretty un-Paul-ish state of affairs given Bitcoin prices never mind AI investments. |

[Christiano][23:20] nvidia's lead isn't worth that much because historically they didn't sell many gpus (especially for non-gaming applications); their R&D investment is relatively large compared to the $ on the table. my guess is that their lead doesn't stick, as evidenced by e.g. Google very quickly catching up |

[Yudkowsky][23:21] parenthetically, does this mean - and I don't necessarily predict otherwise - that you predict a drop in Nvidia's stock and a drop in GPU prices in the next couple of years? |

[Christiano][23:21] nvidia's stock may do OK from riding general AI boom, but I do predict a relative fall in nvidia compared to other AI-exposed companies (though I also predicted google to more aggressively try to compete with nvidia for the ML market and think I was just wrong about that, though I don't really know any details of the area). I do expect the cost of compute to fall over the coming years as nvidia's markup gets eroded, to be partially offset by increases in the cost of the underlying silicon (though that's still bad news for nvidia) |

[Yudkowsky][23:23] I parenthetically note that I think the Wise Reader should be justly impressed by predictions that come true about relative stock price changes, even if Eliezer has not explicitly contradicted those predictions before they come true. there are bets you can win without my having to bet against you. |

[Christiano][23:23] you are welcome to counterpredict, but no saying in retrospect that reality proved you right if you don't 🙂 otherwise it's just me vs the market |

[Yudkowsky][23:24] I don't feel like I have a counterprediction here, but I think the Wise Reader should be impressed if you win vs. the market. however, this does require you to name in advance a few "other AI-exposed companies". |

[Christiano][23:25] Note that I made the same bet over the last year---I make a large AI bet but mostly moved my nvidia allocation to semiconductor companies. The semiconductor part of the portfolio is up 50% while nvidia is up 70%, so I lost that one. But that just means I like the bet even more next year. happy to use nvidia vs tsmc |

[Yudkowsky][23:25] there's a lot of noise in a 2-stock prediction. |

[Christiano][23:25] I mean, it's a 1-stock prediction about nvidia |

[Yudkowsky][23:26] but your funeral or triumphal! |