steve2152's Shortform

post by Steven Byrnes (steve2152) · 2019-10-31T14:14:26.535Z · LW · GW · 48 comments

Comments sorted by top scores.

comment by Steven Byrnes (steve2152) · 2024-07-18T16:23:36.506Z · LW(p) · GW(p)

I went through and updated my 2022 “Intro to Brain-Like AGI Safety” series [? · GW]. If you already read it, no need to do so again, but in case you’re curious for details, I put changelogs at the bottom of each post. For a shorter summary of major changes, see this twitter thread, which I copy below (without the screenshots & links):

I’ve learned a few things since writing “Intro to Brain-Like AGI safety” in 2022, so I went through and updated it! Each post has a changelog at the bottom if you’re curious. Most changes were in one of the following categories: (1/7)

REDISTRICTING! As I previously posted ↓, I booted the pallidum out of the “Learning Subsystem”. Now it’s the cortex, striatum, & cerebellum (defined expansively, including amygdala, hippocampus, lateral septum, etc.) (2/7)

LINKS! I wrote 60 posts since first finishing that series. Many of them elaborate and clarify things I hinted at in the series. So I tried to put in links where they seemed helpful. For example, I now link my “Valence” series in a bunch of places. (3/7)

NEUROSCIENCE! I corrected or deleted a bunch of speculative neuro hypotheses that turned out wrong. In some early cases, I can’t even remember wtf I was ever even thinking! Just for fun, here’s the evolution of one of my main diagrams since 2021: (4/7)

EXAMPLES! It never hurts to have more examples! So I added a few more. I also switched the main running example of Post 13 from “envy” to “drive to be liked / admired”, partly because I’m no longer even sure envy is related to social instincts at all (oops) (5/7)

LLMs! … …Just kidding! LLMania has exploded since 2022 but remains basically irrelevant to this series. I hope this series is enjoyed by some of the six remaining AI researchers on Earth who don’t work on LLMs. (I did mention LLMs in a few more places though ↓ ) (6/7)

If you’ve already read the series, no need to do so again, but I want to keep it up-to-date for new readers. Again, see the changelogs at the bottom of each post for details. I’m sure I missed things (and introduced new errors)—let me know if you see any!

comment by Steven Byrnes (steve2152) · 2024-08-06T17:11:14.965Z · LW(p) · GW(p)

I’m intrigued by the reports (including but not limited to the Martin 2020 “PNSE” paper) that people can “become enlightened” and have a radically different sense of self, agency, etc.; but friends and family don’t notice them behaving radically differently, or even differently at all. I’m trying to find sources on whether this is true, and if so, what’s the deal. I’m especially interested in behaviors that (naïvely) seem to centrally involve one’s self-image, such as “applying willpower” or “wanting to impress someone”. Specifically, if there’s a person whose sense-of-self has dissolved / merged into the universe / whatever, and they nevertheless enact behaviors that onlookers would conventionally put into one of those two categories, then how would that person describe / conceptualize those behaviors and why they occurred? (Or would they deny the premise that they are still exhibiting those behaviors?) Interested in any references or thoughts, or email / DM me if you prefer. Thanks in advance!

(Edited to add: Ideally someone would reply: “Yeah I have no sense of self, and also I regularly do things that onlookers describe as ‘applying willpower’ and/or ‘trying to impress someone’. And when that happens, I notice the following sequence of thoughts arising: [insert detailed description]”.)

[also posted on twitter where it got a bunch of replies including one by Aella.]

Replies from: Henry Prowbell, Jonas Hallgren, steve2152↑ comment by Henry Prowbell · 2024-08-07T13:47:29.418Z · LW(p) · GW(p)

I’ll give it a go.

I’m not very comfortable with the term enlightened but I’ve been on retreats teaching non-dual meditation, received ‘pointing out instructions’ in the Mahamudra tradition and have experienced some bizarre states of mind where it seemed to make complete sense to think of a sense of awake awareness as being the ground thing that was being experienced spontaneously, with sensations, thoughts and emotions appearing to it — rather than there being a separate me distinct from awareness that was experiencing things ‘using my awareness’, which is how it had always felt before.

When I have (or rather awareness itself has) experienced clear and stable non-dual states the normal ‘self’ stuff still appears in awareness and behaves fairly normally (e.g. there’s hunger, thoughts about making dinner, impulses to move the body, the body moving around the room making dinner…). Being in that non-dual state seemed to add a very pleasant quality of effortlessness and okayness to the mix but beyond that it wasn’t radically changing what the ‘small self’ in awareness was doing.

If later the thought “I want to eat a second portion of ice cream” came up followed by “I should apply some self control. I better not do that.” they would just be things appearing to awareness.

Of course another thing in awareness is the sense that awareness is aware of itself and the fact that everything feels funky and non-dual at the moment. You’d think that might change the chain of thoughts about the ‘small self’ wanting ice cream and then having to apply self control towards itself.

In fact the first few times I had intense non-dual experiences there was a chain of thoughts that went “what the hell is going on? I’m not sure I like this? What if I can’t get back into the normal dualistic state of mind?” followed by some panicked feelings and then the non-dual state quickly collapsing into a normal dualistic state.

With more practice, doing other forms of meditation to build a stronger base of calmness and self-compassion, I was able to experience the non-dual state and the chain of thoughts that appeared would go more like “This time let’s just stick with it a bit longer. Basically no one has a persistent non-dual experience that lasts forever. It will collapse eventually whether you like it or not. Nothing much has really changed about the contents of awareness. It’s the same stuff just from a different perspective. I’m still obviously able to feel calmness and joyfulness, I’m still able to take actions that keep me safe — so it’s fine to hang out here”. And then thoughts eventually wander around to ice cream or whatever. And, again, all this is just stuff appearing within a single unified awake sense of awareness that’s being labelled as the experiencer (rather than the ‘I’ in the thoughts above being the experiencer).

The fact that thoughts referencing the self are appearing in awareness whilst it’s awareness itself that feels like the experiencer doesn’t seem to create as many contradictions as you would expect. I presume that’s partly because awareness itself is able to be aware of its own contents but not do much else. It doesn’t, for example, make decisions or have a sense of free will like the normal dualistic self. Those again would just be more appearances in awareness.

However it’s obvious that awareness being spontaneously aware of itself does change things in important and indirect ways. It does change the sequences of thoughts somehow and the overall feeling tone — and therefore behaviour. But perhaps in less radical ways than you would expect. For me, at different times, this ranged from causing a mini panic attack that collapsed the non-dual state (obviously would have been visible from the outside) to subtly imbuing everything with nice effortlessness vibes and taking the sting out of suffering type experiences but not changing my thought chains and behaviour enough to be noticeable from the outside to someone else.

Disclaimer: I felt unsure at several points writing this and I’m still quite new to non-dual experiences. I can’t reliably generate a clear non-dual state on command, it’s rather hit and miss. What I wrote above is written from a fairly dualistic state relying on memories of previous experiences a few days ago. And it’s possible that the non-dual experience I’m describing here is still rather shallow and missing important insights versus what very accomplished meditators experience.

Replies from: Kaj_Sotala↑ comment by Kaj_Sotala · 2024-08-08T15:09:28.234Z · LW(p) · GW(p)

Great description. This sounds very similar to some of my experiences with non-dual states.

↑ comment by Jonas Hallgren · 2024-08-07T08:10:26.030Z · LW(p) · GW(p)

I won't claim that I'm constantly in a state of non-self, but as I'm writing this, I don't really feel that I'm locally existing in my body. I'm rather the awareness of everything that continuously arises in consciousness.

This doesn't happen all the time, I won't claim to be enlightened or anything but maybe this n=1 self-report can help?

Even from this state of awareness, there's still a will to do something. It is almost like you're a force of nature moving forward with doing what you were doing before you were in a state of presence awareness. It isn't you and at the same time it is you. Words are honestly quite insufficient to describe the experience, but if I try to conceptualise it, I'm the universe moving forward by itself. In a state of non-duality, the taste is often very much the same no matter what experience is arising.

There are some times when I'm not fully in a state of non-dual awareness when it can feel like "I" am pretending to do things. At the same time it also kind of feels like using a tool? The underlying motivation for action changes to something like acceptance or helpfulness, and in order to achieve that, there's this tool of the self that you can apply.

I'm noticing it is quite hard to introspect and try to write from a state of presence awareness at the same time but hopefully it was somewhat helpful?

Could you give me some experiments to try from a state of awareness? I would be happy to try them out and come back.

Extra (relation to some of the ideas): In the Mahayana wisdom tradition, explored in Rob Burbea's Seeing That Frees, there's this idea of emptiness, which is very related to the idea of non-dual perception. For all you see is arising from your own constricted view of experience, and so it is all arising in your own head. Realising this co-creation can enable a freedom of interpretation of your experiences.

Yet this view is also arising in your mind, and so you have "emptiness of emptiness," meaning that you're left without a basis. Therefore, both non-self and self are false but magnificent ways of looking at the world. Some people believe that the non-dual is better than the dual yet as my Thai Forest tradition guru Ajhan Buddhisaro says, "Don't poopoo the mind." The self boundary can be both a restricting and very useful concept, it is just very nice to have the skill to see past it and go back to the state of now, of presence awareness.

Emptiness is a bit like deeply seeing that our beliefs are built up from different axioms and being able to say that the axioms of reality aren't based on anything but probabilistic beliefs. Or seeing that we have Occam's razor because we have seen it work before, yet that it is fundamentally completely arbitrary and that the world just is arising spontaneously from moment to moment. Yet Occam's razor is very useful for making claims about the world.

I'm not sure if that connection makes sense, but hopefully, that gives a better understanding of the non-dual understanding of the self and non-self. (At least the Thai Forest one)

↑ comment by Steven Byrnes (steve2152) · 2024-08-08T02:09:29.332Z · LW(p) · GW(p)

Many helpful replies! Here’s where I’m at right now (feel free to push back!) [I’m coming from an atheist-physicalist perspective; this will bounce off everyone else.]

Hypothesis:

Normies like me (Steve) have an intuitive mental concept “Steve” which is simultaneously BOTH (A) Steve-the-human-body-etc AND (B) Steve-the-soul / consciousness / wellspring of vitalistic force / what Dan Dennett calls a “homunculus” / whatever.

The (A) & (B) “Steve” concepts are the same concept in normies like me, or at least deeply tangled together. So it’s hard to entertain the possibility of them coming apart, or to think through the consequences if they do.

Some people can get into a Mental State S (call it a form of “enlightenment”, or pick your favorite terminology) where their intuitive concept-space around (B) radically changes—it broadens, or disappears, or whatever. But for them, the (A) mental concept still exists and indeed doesn’t change much.

Anyway, people often have thoughts that connect sense-of-self to motivation, like “not wanting to be embarrassed” or “wanting to keep my promises”. My central claim is that the relevant sense-of-self involved in that motivation is (A), not (B).

If we conflate (A) & (B)—as normies like me are intuitively inclined to do—then we get the intuition that a radical change in (B) must have radical impacts on behavior. But that’s wrong—the (A) concept is still there and largely unchanged even in Mental State S, and it’s (A), not (B), that plays a role in those behaviorally-important everyday thoughts like “not wanting to be embarrassed” or “wanting to keep my promises”. So radical changes in (B) would not (directly) have the radical behavioral effects that one might intuitively expect (although it does of course have more than zero behavioral effect, with self-reports being an obvious example).

End of hypothesis. Again, feel free to push back!

Replies from: Jonas Hallgren, None↑ comment by Jonas Hallgren · 2024-08-10T20:36:58.115Z · LW(p) · GW(p)

Some meditators say that before you can get a good sense of non-self you first have to have good self-confidence. I think I would tend to agree with them as it is about how you generally act in the world and what consequences your actions will have. Without this the support for the type B that you're talking about can be very hard to come by.

Otherwise I do really agree with what you say in this comment.

There is a slight disagreement with the elaboration though; I do not actually think that makes sense. I would rather say that the (A) that you're talking about is more of a software construct than it is a hardware construct. When you meditate a lot, you realise this and get access to the full OS instead of just the specific software or OS emulator. A is then an evolutionarily beneficial algorithm that runs a bit out of control (for example during childhood when we attribute all cause and effect to our "selves").

Meditation allows us to see that what we have previously attributed to the self was flimsy and dependent on us believing that the hypothesis of the self is true.

↑ comment by [deleted] · 2024-08-08T03:02:05.016Z · LW(p) · GW(p)

My experience is different from the two you describe. I typically fully lack (A)[1], and partially lack (B). I think this is something different from what others might describe as 'enlightenment'.

I might write more about this if anyone is interested.

- ^

At least the 'me-the-human-body' part of the concept. I don't know what the '-etc' part refers to.

↑ comment by Steven Byrnes (steve2152) · 2024-08-08T13:32:24.560Z · LW(p) · GW(p)

I just made a wording change from:

Normies like me have an intuitive mental concept “me” which is simultaneously BOTH (A) me-the-human-body-etc AND (B) me-the-soul / consciousness / wellspring of vitalistic force / what Dan Dennett calls a “homunculus” / whatever.

to:

Normies like me (Steve) have an intuitive mental concept “Steve” which is simultaneously BOTH (A) Steve-the-human-body-etc AND (B) Steve-the-soul / consciousness / wellspring of vitalistic force / what Dan Dennett calls a “homunculus” / whatever.

I think that’s closer to what I was trying to get across. Does that edit change anything in your response?

At least the 'me-the-human-body' part of the concept. I don't know what the '-etc' part refers to.

The “etc” would include things like the tendency for fingers to reactively withdraw from touching a hot surface.

Elaborating a bit: In my own (physicalist, illusionist) ontology, there’s a body with a nervous system including the brain, and the whole mental world including consciousness / awareness is inextricably part of that package. But in other people’s ontology, as I understand it, some nervous system activities / properties (e.g. a finger reactively withdrawing from pain, maybe some or all other desires and aversions) get lumped in with the body, whereas other [things that I happen to believe are] nervous system activities / properties (e.g. awareness) get peeled off into (B). So I said “etc” to include all the former stuff. Hopefully that’s clear.

(I’m trying hard not to get sidetracked into an argument about the true nature of consciousness—I’m stating my ontology without defending it.)

Replies from: None↑ comment by [deleted] · 2024-08-08T20:11:35.545Z · LW(p) · GW(p)

I think that’s closer to what I was trying to get across. Does that edit change anything in your response?

No.

Overall, I would say that my self-concept is closer to what a physicalist ontology implies is mundanely happening - a neural network, lacking a singular 'self' entity inside it, receiving sense data from sensors and able to output commands to this strange, alien vessel (body). (And also I only identify myself with some parts of the non-mechanistic-level description of what the neural network is doing).

I write in a lot more detail below. This isn't necessarily written at you in particular, or with the expectation of you reading through all of it.

1. Non-belief in self-as-body (A)

I see two kinds of self-as-body belief. The first is looking in a mirror, or at a photo, and thinking, "that [body] is me." The second is controlling the body, and having a sense that you're the one moving it, or more strongly, that it is moving because it is you (and you are choosing to move).

I'll write about my experiences with the second kind first.

The way a finger automatically withdraws from heat does not feel like a part of me in any sense. Yesterday, I accidentally dropped a utensil and my hands automatically snapped into place around it somehow, and I thought something like, "woah, I didn't intend to do that. I guess it's a highly optimized narrow heuristic, from times where reacting so quickly was helpful to survival".

I experimented a bit between writing this, and I noticed one intuitive view I can have of the body is that it's some kind of machine that automatically follows such simple intents about the physical world (including intents that I don't consider 'me', like high fear of spiders). For example, if I have motivation and intent to open a window, then the body just automatically moves to it and opens it without me really noticing that the body itself (or more precisely, the body plus the non-me nervous/neural structure controlling it) is the thing doing that - it's kind of like I'm a ghost (or abstract mind) with telekinesis powers (over nearby objects), but then we apply reductive physics and find that actually there's a causal chain beneath the telekinesis involving a moving body (which I always know and can see, I just don't usually think about it).

The way my hands are moving on the keyboard as I write this also doesn't particularly feel like it's me doing that; in my mind, I'm just willing the text to be written, and then the movement happens on its own, in a way that feels kind of alien if I actually focus on it (as if the hands are their own life form).

That said, this isn't always true. I do have an 'embodied self-sense' sometimes. For example, I usually fall asleep cuddling stuffies because this makes me happy [EA · GW]. At least some purposeful form of sense-of-embodiment seems present there, because the concept of cuddling has embodiment as an assumption.[1]

(As I read over the above, I wonder how different it really is from normal human experience. I'm guessing there's a subtle difference between "being so embodied it becomes a basic implicit assumption that you don't notice" and "being so nonembodied that noticing it feels like [reductive physics metaphor]")

As for the first kind mentioned, of locating oneself in the body's appearance, which informs typical humans' perception of others and themselves - I don't experience this with regard to myself (and try to avoid being biased about others this way); instead I just feel pretty dissociated when I see my body reflected and mostly ignore it.

In the past, it instead felt actively stressful/impossible/horrifying, because I had (and to an extent still do have) a deep intuition that I am already a 'particular kind of being', and, under the self-as-body ontology, this is expected to correspond to a particular kind of body, one which I did not observe reflected back. As this basic sense-of-self violation happened repeatedly, it gradually eroded away this aspect of sense-of-self / the embodied ontology.

I'd also feel alienated if I had to pilot an adult body to interact with others, so I've set up my life such that I only minimally need to do that (e.g. for doctor's appointments) and can otherwise just interact with the world through text.

2. What parts of the mind-brain are me, and what am I? (B)

I think there's an extent to which I self-model as an 'inner homunculus', or a 'singular-self inside'. I think it's lesser and not as robust in me as it is in typical humans, though. For example, when I reflect on this word 'I' that I keep using, I notice it has a meaning that doesn't feel very true of me: the meaning of a singular, unified entity, rather than multiple inner cognitive processes, or no self in particular.

I often notice my thoughts are coming from different parts of the mind. In one case, I was feeling bad about not having been productive enough in learning/generating insights and I thought to myself, "I need to do better", and then felt aware that it was just one lone part thinking this while the rest doesn't feel moved; the rest instead culminates into a different inner-monologue-thought: something like, "but we always need to do better. tsuyoku naratai is a universal impetus." (to be clear, this is not from a different identity or character, but from different neural processes causally prior to what is thought (or written).)

And when I'm writing (which forces us to 'collapse' our subverbal understanding into one text), it's noticeable how much a potential statement is endorsed by different present influences[2].

I tend to use words like 'I' and 'me' in writing to not confuse others (internally, 'we' can feel more fitting, referring again to multiple inner processes[2], and not to multiple high-level selves as some humans experience. 'we' is often naturally present in our inner monologue). We'll use this language for most of the rest of the text[3].

There are times where this is less true. Our mind can return to acting as a human-singular-identity-player in some contexts. For example, if we're interacting with someone or multiple others, that can push us towards performing a 'self' (but unless it's someone we intuitively-trust and relatively private, we tend to feel alienated/stressed from this). Or if we're, for example, playing a game with a friend, then in those moments we'll probably be drawn back into a more childlike humanistic self-ontology rather than the dissociated posthumanism we describe here.

Also, we want to answer "what inner processes?" - there's some division between parts of the mind-brain we refer to here, and parts that are the 'structure' we're embedded in. We're not quite sure how to write down the line, and it might be fuzzy or e.g. contextual.[4]

3. Tracing the intuitive-ontology shift

"Why are you this way, and have you always been this way?" – We haven't always. We think this is the result of a gradual erosion of the 'default' human ontology, mentioned once above.

We think this mostly did not come from something like 'believing in physicalism'. Most physicalists aren't like this. Ontological crises [? · GW] may have been part of it, though - independently synthesizing determinism as a child and realizing it made naive free will impossible sure did make past-child-quila depressed.

We think the strongest sources came from 'intuitive-ontological'[5] incompatibilities, ways the observations seemed to sadly-contradict the platonic self-ontology we started with. Another term for these would be 'survival updates'. This can also include ways one's starting ontology was inadequate to explain certain important observations.

Also, I think that existing so often in a digital-informational context[6], and only infrequently in an analog/physical context, also contributed to eroding the self-as-body belief.

Also, eventually, it wasn't just erosion/survival updates; at some point, I think I slowly started to embrace this posthumanist ontology, too. It feels narratively fitting that I'm now thinking about artificial intelligence and reading LessWrong.

- ^

(There is some sense in which maybe, my proclaimed ontology has its source in constant dissociation, which I only don't experience when feeling especially comfortable/safe. I'm only speculating, though - this is the kind of thing that I'd consider leaving out, since I'm really unsure about it, it's at the level of just one of many passing thoughts I'd consider.)

- ^

This 'inner processes' phrasing I keep using doesn't feel quite right. Other words that come to mind: considerations? currently-active neural subnetworks? subagents? some kind of neural council metaphor?

- ^

(sometimes 'we' feels unfitting too, it's weird, maybe 'I' is for when a self is being more-performed, or when text is less representative of the whole, hard to say)

- ^

We tried to point to some rough differences, but realized that the level we mean is somewhere between high-level concepts with words (like 'general/narrow cognition' and 'altruism' and 'biases') and the lowest-level description (i.e. how actual neurons are interacting physically), and that we don't know how to write about this.

- ^

We can differentiate between an endorsed 'whole-world ontology' like physicalism, and smaller-scale intuitive ontologies that are more like intuitive frames we seem to believe in, even if when asked we'll say they're not fundamental truths.

The intuitive ontology of the self is particularly central to humans.

- ^

Note this was mostly downstream of other factors, not causally prior to them. I don't want anyone to read this and think internet use itself causes body-self incongruence, though it might avoid certain related feedback loops.

comment by Steven Byrnes (steve2152) · 2020-01-29T19:20:21.030Z · LW(p) · GW(p)

Some ultra-short book reviews on cognitive neuroscience

-

On Intelligence by Jeff Hawkins & Sandra Blakeslee (2004)—very good. Focused on the neocortex - thalamus - hippocampus system, how it's arranged, what computations it's doing, what's the relation between the hippocampus and neocortex, etc. More on Jeff Hawkins's more recent work here [LW · GW].

-

I Am a Strange Loop by Hofstadter (2007)—I dunno, I didn't feel like I got very much out of it, although it's possible that I had already internalized some of the ideas from other sources. I mostly agreed with what he said. I probably got more out of watching Hofstadter give a little lecture on analogical reasoning (example) than from this whole book.

-

Consciousness and the Brain by Dehaene (2014)—very good. Maybe I could have saved time by just reading Kaj's review [? · GW]; there wasn't that much more to the book beyond that.

-

Conscience by Patricia Churchland (2019)—I hated it. I forget whether I thought it was vague / vacuous, or actually wrong. Apparently I have already blocked the memory!

-

How to Create a Mind by Kurzweil (2012)—Parts of it were redundant with On Intelligence (which I had read earlier), but still worthwhile. His ideas about how brain-computer interfaces are supposed to work (in the context of cortical algorithms) are intriguing; I'm not convinced, hoping to think about it more.

-

Rethinking Consciousness by Graziano (2019)—A+, see my review here [LW · GW]

-

The Accidental Mind by Linden (2008)—Lots of fun facts. The conceit / premise (that the brain is a kludgy accident of evolution) is kinda dumb and overdone—and I disagree with some of the surrounding discussion—but that's not really a big part of the book, just an excuse to talk about lots of fun neuroscience.

-

The Myth of Mirror Neurons by Hickok (2014)—A+, lots of insight about how cognition works, especially the latter half of the book. Prepare to skim some sections of endlessly beating a dead horse (as he debunks seemingly endless lists of bad arguments in favor of some aspect of mirror neurons). As a bonus, you get treated to an eloquent argument for the "intense world" theory of autism, and some aspects of predictive coding.

-

Surfing Uncertainty by Clark (2015)—I liked it. See also SSC review. I think there's still work to do in fleshing out exactly how these types of algorithms work; it's too easy to mix things up and oversimplify when just describing things qualitatively (see my feeble attempt here [LW · GW], which I only claim is a small step in the right direction).

-

Rethinking Innateness by Jeffrey Elman, Annette Karmiloff-Smith, Elizabeth Bates, Mark Johnson, Domenico Parisi, and Kim Plunkett (1996)—I liked it. Reading Steven Pinker, you get the idea that connectionists were a bunch of morons who thought that the brain was just a simple feedforward neural net. This book provides a much richer picture.

comment by Steven Byrnes (steve2152) · 2025-04-03T03:55:33.262Z · LW(p) · GW(p)

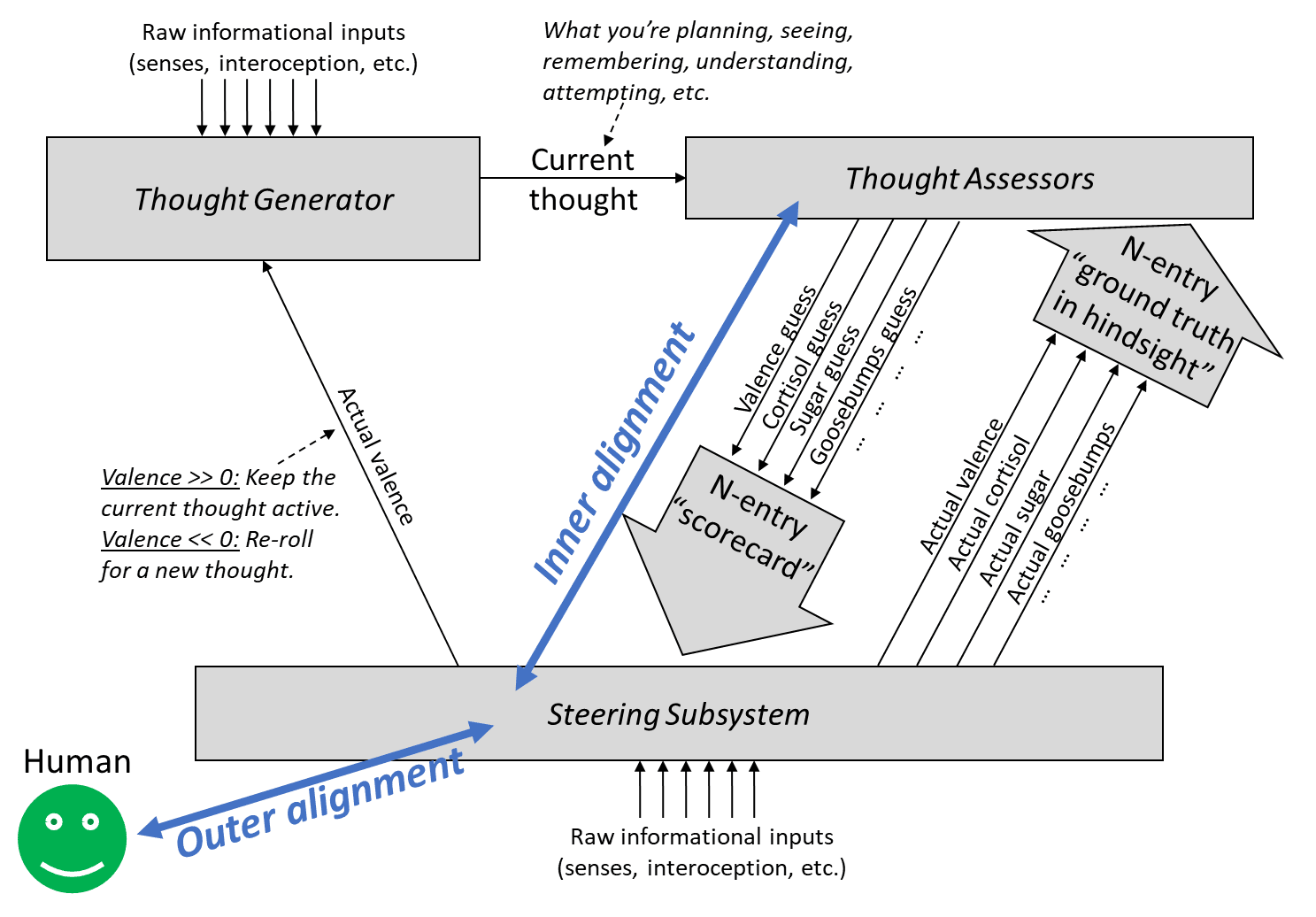

In [Intro to brain-like-AGI safety] 10. The alignment problem [LW · GW] and elsewhere, I’ve been using “outer alignment” and “inner alignment” in a model-based actor-critic RL context to refer to:

“Outer alignment” entails having a ground-truth reward function that spits out rewards that agree with what we want. “Inner alignment” is having a learned value function that estimates the value of a plan in a way that agrees with its eventual reward.

For some reason it took me until now to notice that:

- my “outer misalignment” is more-or-less synonymous with “specification gaming”,

- my “inner misalignment” is more-or-less synonymous with “goal misgeneralization”.

(I’ve been regularly using all four terms for years … I just hadn’t explicitly considered how they related to each other, I guess!)
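As a rough illustration of the two definitions quoted above, here is a minimal toy sketch. Everything in it is an assumption made up for the example (the plan names, the numbers, the tolerance, and the helper `disagreements`); it is not taken from the linked post and it is not an RL implementation. It only shows which pair of quantities each term compares: reward versus what we want for "outer", and value estimate versus reward for "inner".

```python
from typing import Dict, List

Plan = str

# Hypothetical toy numbers, invented purely for illustration.
INTENDED: Dict[Plan, float] = {   # what the programmers actually want
    "clean_room": 1.0,
    "hide_mess": -1.0,
    "do_nothing": 0.0,
}
REWARD: Dict[Plan, float] = {     # ground-truth reward function as coded
    "clean_room": 1.0,
    "hide_mess": 1.0,             # no visible mess, so the coded reward is high
    "do_nothing": 0.0,
}
VALUE: Dict[Plan, float] = {      # learned value function's estimates
    "clean_room": 1.0,
    "hide_mess": 1.0,
    "do_nothing": 0.8,            # overestimates the eventual reward
}


def disagreements(a: Dict[Plan, float], b: Dict[Plan, float],
                  tol: float = 0.25) -> List[Plan]:
    """Return the plans on which two scoring tables differ by more than tol."""
    return sorted(p for p in a if abs(a[p] - b[p]) > tol)


# "Outer" check: does the reward agree with what we want?
outer_failures = disagreements(REWARD, INTENDED)
# "Inner" check: does the value estimate agree with the eventual reward?
inner_failures = disagreements(VALUE, REWARD)

print("outer misalignment (specification gaming) on:", outer_failures)
print("inner misalignment (goal misgeneralization) on:", inner_failures)
```

In this toy setup the reward table rewards `hide_mess` even though that is not what was intended (an outer failure), while the value table misjudges `do_nothing` relative to the reward it would actually receive (an inner failure).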

I updated that post to note the correspondence, but also wanted to signal-boost this, in case other people missed it too.

~~

[You can stop reading here—the rest is less important]

If everybody agrees with that part, there’s a further question of “…now what?”. What terminology should I use going forward? If we have redundant terminology, should we try to settle on one?

One obvious option is that I could just stop using the terms “inner alignment” and “outer alignment” in the actor-critic RL context as above. I could even go back and edit them out of that post, in favor of “specification gaming” and “goal misgeneralization”. Or I could leave it. Or I could even advocate that other people switch in the opposite direction!

One consideration is: Pretty much everyone using the terms “inner alignment” and “outer alignment” is not using them in quite the way I am—I’m using them in the actor-critic model-based RL context, they’re almost always using them in the model-free policy optimization context (e.g. evolution) (see §10.2.2 [LW · GW]). So that’s a cause for confusion, and a point in favor of my dropping those terms. On the other hand, I think people using the term “goal misgeneralization” are also almost always using it in a model-free policy optimization context. So actually, maybe that’s a wash? Either way, my usage is not a perfect match to how other people are using the terms, just pretty close in spirit. I’m usually the only one on Earth talking explicitly about actor-critic model-based RL AGI safety, so I kinda have no choice but to stretch existing terms sometimes.

Hmm, aesthetically, I think I prefer the “outer alignment” and “inner alignment” terminology that I’ve traditionally used. I think it’s a better mental picture. But in the context of current broader usage in the field … I’m not sure what’s best.

(Nate Soares dislikes the term “misgeneralization” [LW · GW], on the grounds that “misgeneralization” has a misleading connotation that “the AI is making a mistake by its own lights”, rather than “something is bad by the lights of the programmer”. I’ve noticed a few people trying to get the variation “goal malgeneralization” to catch on instead. That does seem like an improvement, maybe I'll start doing that too.)

Replies from: Simon Skade, Simon Skade↑ comment by Towards_Keeperhood (Simon Skade) · 2025-04-03T12:58:18.363Z · LW(p) · GW(p)

Note: I just noticed your post has a section "Manipulating itself and its learning process", which I must've completely forgotten since I last read the post. I should've read your post before posting this. Will do so.

“Outer alignment” entails having a ground-truth reward function that spits out rewards that agree with what we want. “Inner alignment” is having a learned value function that estimates the value of a plan in a way that agrees with its eventual reward.

Calling problems "outer" and "inner" alignment seems to suggest that if we solved both we've successfully aligned AI to do nice things. However, this isn't really the case here.

Namely, there could be a smart mesa-optimizer spinning up in the thought generator, whose thoughts are mostly invisible to the learned value function (LVF), and who can model the situation it is in and has different values and is smarter than the LVF evaluation and can fool the LVF into believing the plans that are good according to the mesa-optimizer are great according to the LVF, even if they actually aren't.

This kills you even if we have a nice ground-truth reward and the LVF accurately captures that.

In fact, this may be quite a likely failure mode, given that the thought generator is where the actual capability comes from, and we don't understand how it works.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2025-04-03T13:36:22.887Z · LW(p) · GW(p)

Thanks! But I don’t think that’s a likely failure mode. I wrote about this long ago in the intro to Thoughts on safety in predictive learning [LW · GW].

In my view, the big problem with model-based actor-critic RL AGI, the one that I spend all my time working on, is that it tries to kill us via using its model-based RL capabilities in the way we normally expect—where the planner plans, and the actor acts, and the critic criticizes, and the world-model models the world …and the end-result is that the system makes and executes a plan to kill us. I consider that the obvious, central type of alignment failure mode for model-based RL AGI, and it remains an unsolved problem.

I think (??) you’re bringing up a different and more exotic failure mode where the world-model by itself is secretly harboring a full-fledged planning agent. I think this is unlikely to happen. One way to think about it is: if the world-model is specifically designed by the programmers to be a world-model in the context of an explicit model-based RL framework, then it will probably be designed in such a way that it’s an effective search over plausible world-models, but not an effective search over a much wider space of arbitrary computer programs that includes self-contained planning agents. See also §3 here [LW · GW] for why a search over arbitrary computer programs would be a spectacularly inefficient way to build all that agent stuff (TD learning in the critic, roll-outs in the planner, replay, whatever) compared to what the programmers will have already explicitly built into the RL agent architecture.

So I think this kind of thing (the world-model by itself spawning a full-fledged planning agent capable of treacherous turns etc.) is unlikely to happen in the first place. And even if it happens, I think the problem is easily mitigated; see discussion in Thoughts on safety in predictive learning [LW · GW]. (Or sorry if I’m misunderstanding.)

Replies from: Simon Skade↑ comment by Towards_Keeperhood (Simon Skade) · 2025-04-03T19:11:33.146Z · LW(p) · GW(p)

Thanks.

Yeah I guess I wasn't thinking concretely enough. I don't know whether something vaguely like what I described might be likely or not. Let me think out loud a bit about how I think about what you might be imagining so you can correct my model. So here's a bit of rambling: (I think point 6 is most important.)

- As you described in your intuitive self-models sequence, humans have a self-model which can essentially have values different from the main value function, aka they can have ego-dystonic desires.

- I think in smart reflective humans, the policy suggestions of the self-model/homunculus can be more coherent than the value function estimates, e.g. because they can better take abstract philosophical arguments into account.

- The learned value function can also update on hypothetical scenarios, e.g. imagining a risk or a gain, but it doesn't update strongly on abstract arguments like "I should correct my estimates based on outside view".

- The learned value function can learn to trust the self-model if acting according to the self-model is consistently correlated with higher-than-expected reward.

- Say we have a smart reflective human where the value function basically trusts the self-model a lot, then the self-model could start optimizing its own values, while the (stupid) value function believes it's best to just trust the self-model and that this will likely lead to reward. Something like this could happen where the value function was actually aligned to outer reward, but the inner suggestor was just very good at making suggestions that the value function likes, even if the inner suggestor would have different actual values. I guess if the self-model suggests something that actually leads to less reward, then the value function will trust the self-model less, but outside the training distribution the self-model could essentially do what it wants.

- Another question of course is whether the inner self-reflective optimizers are likely aligned to the initial value function. I would need to think about it. Do you see this as a part of the inner alignment problem or as a separate problem?

- As an aside, one question would be whether the way this human makes decisions is still essentially actor-critic model-based RL-like - whether the critic just got replaced by a more competent version. I don't really know.

- (Of course, I totally acknowledge that humans have pre-wired machinery for their intuitive self-models, rather than that just spawning up. I'm not particularly discussing my original objection anymore.)

- I'm also uncertain whether something working through the main actor-critic model-based RL mechanism would be capable enough to do something pivotal. Like yeah, most and maybe all current humans probably work that way. But if you go a bit smarter then minds might use more advanced techniques of e.g. translating problems into abstract domains and writing narrow AIs to solve them there and then translating it back into concrete proposals or sth. Though maybe it doesn't matter as long as the more advanced techniques don't spawn up more powerful unaligned minds, in which case a smart mind would probably not use the technique in the first place. And I guess actor-critic model-based RL is sorta like expected utility maximization, which is pretty general and can get you far. Only the native kind of EU maximization we implement through actor-critic model-based RL might be very inefficient compared to other kinds.

- I have a heuristic like "look at where the main capability comes from", and I'd guess for very smart agents it perhaps doesn't come from the value function making really good estimates by itself, and I want to understand how something could be very capable and look at the key parts for this and whether they might be dangerous.

- Ignoring human self-models now, the way I imagine actor-critic model-based RL is that it would start out unreflective. It might eventually learn to model parts of itself and form beliefs about its own values. Then, the world-modelling machinery might be better at noticing inconsistencies in the behavior and value estimates of that agent than the agent itself. The value function might then learn to trust the world-model's predictions about what would be in the interest of the agent/self.

- This seems to me to sorta qualify as "there's an inner optimizer". I would've tentatively predicted you to say like "yep but it's an inner aligned optimizer", but not sure if you actually think this or whether you disagree with my reasoning here. (I would need to consider how likely value drift from such a change seems. I don't know yet.)

I don't have a clear take here. I'm just curious if you have some thoughts on where something importantly mismatches your model.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2025-04-09T02:56:37.582Z · LW(p) · GW(p)

Thanks! Basically everything you wrote importantly mismatches my model :( I think I can kinda translate parts; maybe that will be helpful.

Background (§8.4.2 [LW · GW]): The thought generator settles on a thought, then the value function assigns a “valence guess”, and the brainstem declares an actual valence, either by copying the valence guess (“defer-to-predictor mode”), or overriding it (because there’s meanwhile some other source of ground truth, like I just stubbed my toe).
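A minimal sketch of that last step, assuming valence can be treated as a single float (the function name and signature are illustrative, not taken from the linked post):

```python
from typing import Optional

def actual_valence(valence_guess: float, ground_truth: Optional[float] = None) -> float:
    """Return the valence that gets 'declared' for the current thought.

    valence_guess: the value function's guess for the thought.
    ground_truth:  an overriding signal if one is available right now
                   (e.g. pain from a stubbed toe), else None.
    """
    if ground_truth is None:
        # Defer-to-predictor mode: just copy the value function's guess.
        return valence_guess
    # Override mode: some other source of ground truth takes precedence.
    return ground_truth

print(actual_valence(0.4))        # no ground truth available, so the guess is copied
print(actual_valence(0.4, -1.0))  # stubbed toe: the guess is overridden
```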

Sometimes thoughts are self-reflective. E.g. “the idea of myself lying in bed” is a different thought from “the feel of the pillow on my head”. The former is self-reflective—it has me in the frame—the latter is not (let’s assume).

All thoughts can be positive or negative valence (motivating or demotivating). So self-reflective thoughts can be positive or negative valence, and non-self-reflective thoughts can also be positive or negative valence. Doesn’t matter, it’s always the same machinery, the same value function / valence guess / thought assessor. That one function can evaluate both self-reflective and non-self-reflective thoughts, just as it can evaluate both sweater-related thoughts and cloud-related thoughts.

When something seems good (positive valence) in a self-reflective frame, that’s called ego-syntonic, and when something seems bad in a self-reflective frame, that’s called ego-dystonic.

Now let’s go through what you wrote:

1. humans have a self-model which can essentially have values different from the main value function

I would translate that into: “it’s possible for something to seem good (positive valence) in a self-reflective frame, but seem bad in a non-self-reflective frame. Or vice-versa.” After all, those are two different thoughts, so yeah of course they can have two different valences.

2. the policy suggestions of the self-model/homunculus can be more coherent than the value function estimates

I would translate that into: “there’s a decent amount of coherence / self-consistency in the set of thoughts that seem good or bad in a self-reflective frame, and there’s less coherence / self-consistency in the set of things that seem good or bad in a non-self-reflective frame”.

(And there’s a logical reason for that; namely, that hard thinking and brainstorming tends to bring self-reflective thoughts to mind — §8.5.5 [LW · GW] — and hard thinking and brainstorming is involved in reducing inconsistency between different desires.)

3. The learned value function can learn to trust the self-model if acting according to the self-model is consistently correlated with higher-than-expected reward.

This one is more foreign to me. A self-reflective thought can have positive or negative valence for the same reasons that any other thought can have positive or negative valence—because of immediate rewards, and because of the past history of rewards, via TD learning, etc.

One thing is: someone can develop a learned metacognitive habit to the effect of “think self-reflective thoughts more often” (which is kinda synonymous with “don’t be so impulsive”). They would learn this habit exactly to the extent and in the circumstances that it has led to higher reward / positive valence in the past.

4. Say we have a smart reflective human where the value function basically trusts the self-model a lot, then the self-model could start optimizing its own values, while the (stupid) value function believes it's best to just trust the self-model and that this will likely lead to reward.

If someone gets in the habit of “think self-reflective thoughts all the time” a.k.a. “don’t be so impulsive”, then their behavior will be especially strongly determined by which self-reflective thoughts are positive or negative valence.

But “which self-reflective thoughts are positive or negative valence” is still determined by the value function / valence guess function / thought assessor in conjunction with ground-truth rewards / actual valence—which in turn involves the reward function, and the past history of rewards, and TD learning, blah blah. Same as any other kind of thought.

…I won’t keep going with your other points, because it’s more of the same idea.

Does that help explain where I’m coming from?

Replies from: Simon Skade↑ comment by Towards_Keeperhood (Simon Skade) · 2025-04-09T13:34:45.980Z · LW(p) · GW(p)

Thanks!

Sorry, I think I intended to write what I think you think, and then just clarified my own thoughts, and forgot to edit the beginning. Sorry, I ought to have properly recalled your model.

Yes, I think I understand your translations and your framing of the value function.

Here are the key differences between a (more concrete version of) my previous model and what I think your model is. Please lmk if I'm still wrongly describing your model:

- plans vs thoughts

- My previous model: The main work for devising plans/thoughts happens in the world-model/thought-generator, and the value function evaluates plans.

- Your model: The value function selects which of some proposed thoughts to think next. Planning happens through the value function steering the thoughts, not the world model doing so.

- detailedness of evaluation of value function

- My previous model: The learned value function is a relatively primitive map from the predicted effects of plans to a value which describes whether the plan is likely better than the expected counterfactual plan. E.g. maybe sth roughly like that we model how sth like units of exchange [LW · GW] (including dimensions like "how much does Alice admire me") change depending on a plan, and then there is a relatively simple function from the vector of units to values. When having abstract thoughts, the value function doesn't understand much of the content there, and only uses some simple heuristics for deciding how to change its value estimate. E.g. a heuristic might be "when there's a thought that the world model thinks is valid and it is associated to the (self-model-invoking) thought "this is bad for accomplishing my goals", then it lowers its value estimate. In humans slightly smarter than the current smartest humans, it might eventually learn the heuristic "do an explicit expected utility estimate and just take what the result says as the value estimate", and then that is being done and the value function itself doesn't understand much about what's going on in the expected utility estimate, but it just allows to happen whatever the abstract reasoning engine predicts. So it essentially optimizes goals that are stored as beliefs in the world model.

- So technically you could still say "but what gets done still depends on the value function, so when the value function just trusts some optimization procedure which optimizes a stored goal, and that goal isn't what we intended, then the value function is misaligned". But it seems sorta odd because the value function isn't really the main relevant thing doing the optimization.

- The value function essentially is too dumb to do the main optimization itself for accomplishing extremely hard tasks. Even if you set incentives so that you get ground-truth reward for moving closer to the goal, it would be too slow at learning what strategies work well

- Your model: The value function has quite a good model of what thoughts are useful to think. It is just computing value estimates, but it can make quite coherent estimates to accomplish powerful goals.

- If there are abstract thoughts about actually optimizing a different goal than is in the interest of the value function, the value function shuts them down by assigning low value.

- (My thoughts: One intuition is that to get to pivotal intelligence level, the value function might need some model of its own goals in order to efficiently recognize when some values it is assigning aren't that coherent, but I'm pretty unsure of that. Do you think the value function can learn a model of its own values?)

There's a spectrum between my model and yours. I don't know what model is better; at some point I'll think about what may be a good model here. (Feel free to lmk your thoughts on why your model may be better, though maybe I just see it when in the future I think about it more carefully and reread some of your posts and model your model in more detail. I'm currently not modelling either model that detailed.)

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2025-04-11T20:09:49.528Z · LW(p) · GW(p)

Thanks! Oddly enough, in that comment I’m much more in agreement with the model you attribute to yourself than the model you attribute to me. ¯\_(ツ)_/¯

the value function doesn't understand much of the content there, and only uses some simple heuristics for deciding how to change its value estimate

Think of it as a big table that roughly-linearly [LW · GW] assigns good or bad vibes to all the bits and pieces that comprise a thought, and adds them up into a scalar final answer. And a plan is just another thought. So “I’m gonna get that candy and eat it right now” is a thought, and also a plan, and it gets positive vibes from the fact that “eating candy” is part of the thought, but it also gets negative vibes from the fact that “standing up” is part of the thought (assume that I’m feeling very tired right now). You add those up into the final value / valence, which might or might not be positive, and accordingly you might or might not actually get the candy. (And if not, some random new thought will pop into your head instead.)

Why does the value function assign positive vibes to eating-candy? Why does it assign negative vibes to standing-up-while-tired? Because of the past history of primary rewards via (something like) TD learning, which updates the value function.

Does the value function “understand the content”? No, the value function is a linear functional on the content of a thought. Linear functionals don’t understand things. :)
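To make the "big table" picture concrete, here is a minimal sketch. It assumes a thought can be represented as a set of active concepts; the concept names and the vibe numbers are invented for illustration.

```python
# Hypothetical table of learned "vibes" per concept (numbers are made up).
VIBES = {
    "eating candy": 2.0,
    "standing up": -0.5,
    "standing up while tired": -3.0,
}

def valence(thought, vibes):
    """Linear value function: add up the vibes of every concept in the thought.

    Concepts missing from the table contribute nothing.
    """
    return sum(vibes.get(concept, 0.0) for concept in thought)

tired_plan = {"eating candy", "standing up while tired"}
rested_plan = {"eating candy", "standing up"}

print(valence(tired_plan, VIBES))   # negative overall: the plan is dropped
print(valence(rested_plan, VIBES))  # positive overall: go get the candy
```

Nothing in `valence` inspects what the concepts mean; it only looks up numbers and adds them, which is the sense in which a linear functional does not "understand" a thought.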

(I feel like maybe you’re going wrong by thinking of the value function and Thought Generator as intelligent agents rather than “machines that are components of a larger machine”?? Sorry if that’s uncharitable.)

[the value function] only uses some simple heuristics for deciding how to change its value estimate. E.g. a heuristic might be "when there's a thought that the world model thinks is valid and it is associated to the (self-model-invoking) thought "this is bad for accomplishing my goals", then it lowers its value estimate.

The value function is a linear(ish) functional whose input is a thought. A thought is an object in some high-dimensional space, related to the presence or absence of all the different concepts comprising it. Some concepts are real-world things like “candy”, other concepts are metacognitive, and still other concepts are self-reflective. When a metacognitive and/or self-reflective concept is active in a thought, the value function will correspondingly assign extra positive or negative vibes—just like if any other kind of concept is active. And those vibes depend on the correlations of those concepts with past rewards via (something like) TD learning.

So “I will fail at my goals” would be a kind of thought, and TD learning would gradually adjust the value function such that this thought has negative valence. And this thought can co-occur with or be a subset of other thoughts that involve failing at goals, because the Thought Generator is a machine that learns these kinds of correlations and implications, thanks to a different learning algorithm that sculpts it into an ever-more-accurate predictive world-model.
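As a rough sketch of how "(something like) TD learning" could push such a thought toward negative valence, here is a plain TD(0) update on a linear value function over concepts. The set-of-concepts representation, the learning rate, the discount factor, and the reward of -1 are all assumptions chosen for illustration.

```python
def value(weights, thought):
    """Linear value: sum of the learned weights of the active concepts."""
    return sum(weights.get(concept, 0.0) for concept in thought)

def td_update(weights, thought, reward, next_thought=None, lr=0.1, gamma=0.9):
    """One TD(0) step. next_thought=None marks the end of an episode."""
    v = value(weights, thought)
    v_next = value(weights, next_thought) if next_thought is not None else 0.0
    td_error = reward + gamma * v_next - v
    # For a linear value function the gradient w.r.t. each active concept is 1,
    # so every active concept's weight moves by lr * td_error.
    for concept in thought:
        weights[concept] = weights.get(concept, 0.0) + lr * td_error
    return td_error

weights = {}
failing = {"I will fail at my goals"}
for _ in range(50):
    # Hypothetical history: this thought keeps being followed by reward -1.
    td_update(weights, failing, reward=-1.0)

print(weights["I will fail at my goals"])  # approaches -1 from above
```

Any other thought that contains the same concept then inherits the negative weight through the sum in `value`.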

Replies from: Simon Skade↑ comment by Towards_Keeperhood (Simon Skade) · 2025-04-13T09:22:10.487Z · LW(p) · GW(p)

Thanks!

If the value function is simple, I think it may be a lot worse than the world-model/thought-generator at evaluating what abstract plans are actually likely to work (since the agent hasn't yet tried a lot of similar abstract plans from where it could've observed results, and the world model's prediction making capabilities generalize further). The world model may also form some beliefs about what the goals/values in a given current situation are. So let's say the thought generator outputs plans along with predictions about those plans, and some of those predictions predict how well a plan is going to fulfill what it believes the goals are (like approximate expected utility). Then the value function might learn to just look at this part of a thought that predicts the expected utility, and then take that as its value estimate.

Or perhaps a slightly more concrete version of how that may happen. (I'm thinking about model-based actor-critic RL agents which start out relatively unreflective, rather than just humans.):

- Sometimes the thought generator generates self-reflective thoughts like "what are my goals here", whereupon the thought generator produces an answer "X" to that, and then when thinking how to accomplish X it often comes up with a better (according to the value function) plan than if it tried to directly generate a plan without clarifying X. Thus the value function learns to assign positive valence to thinking "what are my goals here".

- The same can happen with "what are my long-term goals", where the thought generator might guess something that would cause high reward.

- For humans, X is likely more socially nice than would be expected from the value function, since "X are my goals here" is a self-reflective thought where the social dimensions are more important for the overall valence guess.[1]

- Later the thought generator may generate the thought "make careful predictions whether the plan will actually accomplish the stated goals well", whereupon the thought generator often finds some incoherences that the value function didn't notice, and produces a better plan. Then the value function learns to assign high valence to thoughts like "make careful predictions whether the plan will actually accomplish the stated goals well".

- Later the predictions of the thought generator may not always match well with the valence the value function assigns, and it turns out that the thought generator's predictions often were better. So over time the value function gets updated more and more toward "take the predictions of the thought generator as our valence guess", since that strategy better predicts later valence guesses.

- Now, some goals are mainly optimized by the thought generator predicting how some goals could be accomplished well, and there might be beliefs in the thought generator like "studying rationality may make me better at accomplishing my goals", causing the agent to study rationality.

- And also thoughts like "making sure the currently optimized goal keeps being optimized increases the expected utility according to the goal".

- And maybe later more advanced bootstrapping through thoughts like "understanding how my mind works and exploiting insights to shape it to optimize more effectively would probably help me accomplish my goals". Though of course for this to be a viable strategy it would at least be as smart as the smartest current humans (which we can assume because otherwise it's too useless IMO).
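The step where the value function drifts toward relaying the thought generator's predictions can be sketched as a toy simulation. Everything here is an assumption made for illustration: the two-feature linear value function, the noise levels, and the simple delta-rule update toward the later outcome (a stand-in for whatever TD-like rule actually applies).

```python
import random

def train_valence_guess(steps=5000, lr=0.05, seed=0):
    """Learn weights on two signals that both try to track a plan's later outcome.

    prediction: the thought generator's explicit estimate (assumed low noise).
    heuristic:  a primitive cue the value function started out with (assumed noisy).
    """
    rng = random.Random(seed)
    w_pred, w_heur = 0.0, 0.0
    for _ in range(steps):
        outcome = rng.uniform(-1.0, 1.0)            # how the plan later turns out
        prediction = outcome + rng.gauss(0.0, 0.1)  # accurate signal
        heuristic = outcome + rng.gauss(0.0, 1.0)   # unreliable signal
        guess = w_pred * prediction + w_heur * heuristic
        error = outcome - guess
        # Delta rule: nudge each weight in proportion to its input and the error.
        w_pred += lr * error * prediction
        w_heur += lr * error * heuristic
    return w_pred, w_heur

w_pred, w_heur = train_valence_guess()
print(w_pred, w_heur)  # most of the weight ends up on the generator's prediction
```

Under these assumptions the learned guess ends up close to a copy of the prediction feature, which is the "just relaying world-model judgements" situation described next.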

So now the value function is often just relaying world-model judgements and all the actually powerful optimization happens in the thought generator. So I would not classify that as the following:

In my view, the big problem with model-based actor-critic RL AGI, the one that I spend all my time working on, is that it tries to kill us via using its model-based RL capabilities in the way we normally expect—where the planner plans, and the actor acts, and the critic criticizes, and the world-model models the world …and the end-result is that the system makes and executes a plan to kill us.

So in my story, the thought generator learns to model the self-agent and has some beliefs about what goals it may have, and some coherent extrapolation of (some of) those goals is what gets optimized in the end. I guess it's probably not that likely that those goals are strongly misaligned to the value function on the distribution where the value function can evaluate plans, but there are many possible ways to generalize the values of the value function.

For humans, I think that the way this generalization happens is value-laden (aka what human values are depend on this generalization). The values might generalize a bit differently for different humans of course, but it's plausible that humans share a lot of their prior-that-determines-generalization, so AIs with a different brain architecture might generalize very differently.

Basically, whenever someone thinks "what's actually my goal here", I would say that's already a slight departure from "using one's model-based RL capabilities in the way we normally expect". Though I think I would agree that for most humans such departures are rare and small, but I think they get a lot larger for smart reflective people, and I think I wouldn't describe my own brain as "using one's model-based RL capabilities in the way we normally expect". I'm not at all sure about this, but I would expect that "using its model-based RL capabilities in the way we normally expect" won't get us to pivotal level of capability if the value function is primitive.

- ^

If I just trust my model of your model here. (Though I might misrepresent your model. I would need to reread your posts.)

↑ comment by Towards_Keeperhood (Simon Skade) · 2025-04-03T12:44:25.882Z · LW(p) · GW(p)

I'd suggest not using conflated terminology and rather making up your own.

Or rather, first actually don't use any abstract handles at all and just describe the problems/failure-modes directly, and when you're confident you have a pretty natural breakdown of the problems with which you'll stick for a while, then make up your own ontology.

In fact, while in your framework there's a crisp difference between ground-truth reward and learned value-estimator, it might not make sense to just split the alignment problem in two parts like this:

“Outer alignment” entails having a ground-truth reward function that spits out rewards that agree with what we want. “Inner alignment” is having a learned value function that estimates the value of a plan in a way that agrees with its eventual reward.

First attempt at explaining what seems wrong: If that was the first thing I read on outer-vs-inner alignment as a breakdown of the alignment problem, I would expect "rewards that agree with what we want" to mean something like "changes in expected utility according to humanity's CEV". (Which would make inner alignment unnecessary because if we had outer alignment we could easily reach CEV.)

Second attempt:

"in a way that agrees with its eventual reward" seems to imply that there's actually an objective reward for trajectories of the universe. However, the way you probably actually imagine the ground-truth reward is something like humans (who are ideally equipped with good interpretability tools) giving feedback on whether something was good or bad, so the ground-truth reward is actually an evaluation function on the human's (imperfect) world model. Problems:

- Humans don't actually give coherent rewards which are consistent with a utility function on their world model.

- For this problem we might be able to define an extrapolation procedure that's not too bad.

- The reward depends on the state of the world model of the human, and our world models probably often have false beliefs.

- Importantly, the setup needs to be designed in a way that there wouldn't be an incentive to manipulate the humans into believing false things.

- Maybe, optimistically, we could mitigate this problem by having the AI form a model of the operators, doing some ontology translation between the operator's world model and its own world model, and flagging when there seems to be a relevant belief mismatch.

- Our world models cannot evaluate yet whether e.g. filling the universe with computronium running a certain type of program would be good, because we are confused about qualia and don't know yet what would be good according to our CEV. Basically, the ground-truth reward would very often just say "i don't know yet", even for cases which are actually very important according to our CEV. It's not just that we would need a faithful translation of the state of the universe into our primitive ontology (like "there are simulations of lots of happy and conscious people living interesting lives"), it's also that (1) the way our world model treats e.g. "consciousness" may not naturally map to anything in a more precise ontology, and while our human minds, learning a deeper ontology, might go like "ah, this is what I actually care about - I've been so confused", such value-generalization is likely even much harder to specify than basic ontology translation. And (2), our CEV may include value-shards which we currently do not know of or track at all.

- So while this kind of outer-vs-inner distinction might maybe be fine for human-level AIs, it stops being a good breakdown for smarter AIs, since whenever we want to make the AI do something where humans couldn't evaluate the result within reasonable time, it needs to generalize beyond what could be evaluated through ground-truth reward.

So mainly because of point 3, instead of asking "how can i make the learned value function agree with the ground-truth reward", I think it may be better to ask "how can I make the learned value function generalize from the ground-truth reward in the way I want"?

(I guess the outer-vs-inner could make sense in a case where your outer evaluation is superhumanly good, though I cannot think of such a case where looking at the problem from the model-based RL framework would still make much sense, but maybe I'm still unimaginative right now.)

Note that I assumed here that the ground-truth signal is something like feedback from humans. Maybe you're thinking of it differently than I described here, e.g. if you want to code a steering subsystem for providing ground-truth. But if the steering subsystem is not smarter than humans at evaluating what's good or bad, the same argument applies. If you think your steering subsystem would be smarter, I'd be interested in why.

(All that is assuming you're attacking alignment from the actor-critic model-based RL framework. There are other possible frameworks, e.g. trying to directly point the utility function on an agent's world-model, where the key problems are different.)

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2025-04-03T13:14:04.199Z · LW(p) · GW(p)

Thanks!

I think “inner alignment” and “outer alignment” (as I’m using the terms) are a “natural breakdown” of alignment failures in the special case of model-based actor-critic RL AGI with a “behaviorist” reward function [LW · GW] (i.e., reward that depends on the AI’s outputs, as opposed to what the AI is thinking about). As I wrote here [LW(p) · GW(p)]:

Suppose there’s an intelligent designer (say, a human programmer), and they make a reward function R hoping that they will wind up with a trained AGI that’s trying to do X (where X is some idea in the programmer’s head), but they fail and the AGI is trying to do not-X instead. If R only depends on the AGI’s external behavior (as is often the case in RL these days), then we can imagine two ways that this failure happened:

- The AGI was doing the wrong thing but got rewarded anyway (or doing the right thing but got punished)

- The AGI was doing the right thing for the wrong reasons but got rewarded anyway (or doing the wrong thing for the right reasons but got punished).

I think it’s useful to catalog possible failures based on whether they involve (1) or (2), and I think it’s reasonable to call them “failures of outer alignment” and “failures of inner alignment” respectively, and I think when (1) is happening rarely or not at all, we can say that the reward function is doing a good job at “representing” the designer’s intention—or at any rate, it’s doing as well as we can possibly hope for from a reward function of that form. The AGI still might fail to acquire the right motivation, and there might be things we can do to help (e.g. change the training environment), but replacing R (which fires exactly to the extent that the AGI’s external behavior involves doing X) by a different external-behavior-based reward function R’ (which sometimes fires when the AGI is doing not-X, and/or sometimes doesn’t fire when the AGI is doing X) seems like it would only make things worse. So in that sense, it seems useful to talk about outer misalignment, a.k.a. situations where the reward function is failing to “represent” the AGI designer’s desired external behavior, and to treat those situations as generally bad.

(A bit more related discussion here [LW(p) · GW(p)].)

That definitely does not mean that we should be going for a solution to outer alignment and a separate unrelated solution to inner alignment, as I discussed briefly in §10.6 [LW · GW] of that post, and TurnTrout discussed at greater length in Inner and outer alignment decompose one hard problem into two extremely hard problems [LW · GW]. (I endorse his title, but I forget whether I 100% agreed with all the content he wrote.)

I find your comment confusing, I’m pretty sure you misunderstood me, and I’m trying to pin down how …

One thing is, I’m thinking that the AGI code will be an RL agent, vaguely in the same category as MuZero or AlphaZero or whatever, which has an obvious part of its source code labeled “reward”. For example, AlphaZero-chess has a reward of +1 for getting checkmate, -1 for getting checkmated, 0 for a draw. Atari-playing RL agents often use the in-game score as a reward function. Etc. These are explicitly parts of the code, so it’s very obvious and uncontroversial what the reward is (leaving aside self-hacking), see e.g. here where an AlphaZero clone checks whether a board is checkmate.
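To make "an obvious part of the source code labeled reward" concrete, here is a minimal sketch of what such a function might look like for the chess case. The function name and the string labels for game outcomes are hypothetical; this is not taken from AlphaZero or from any particular clone.

```python
def chess_reward(outcome: str) -> int:
    """Terminal reward from the agent's own perspective.

    ASSUMPTION: `outcome` is a hypothetical label, one of "win", "loss", "draw".
    """
    rewards = {"win": 1, "loss": -1, "draw": 0}
    return rewards[outcome]
```

Whatever else is going on inside the agent, there is no ambiguity about what this function returns for a given game result.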

Another thing is, I’m obviously using “alignment” in a narrower sense than CEV (see the post—“the AGI is ‘trying’ to do what the programmer had intended for it to try to do…”)

Another thing is, if the programmer wants CEV (for the sake of argument), and somehow (!!) writes an RL reward function in Python whose output perfectly matches the extent to which the AGI’s behavior advances CEV, then I disagree that this would “make inner alignment unnecessary”. I’m not quite sure why you believe that. The idea is: actor-critic model-based RL agents of the type I’m talking about evaluate possible plans using their learned value function, not their reward function, and these two don’t have to agree. Therefore, what they’re “trying” to do would not necessarily be to advance CEV, even if the reward function were perfect.
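A toy sketch of that gap, under loudly stated assumptions: the outcome labels, the tabular critic, and especially the `novel_value` number are all hypothetical stand-ins, and a real actor-critic agent would be far more complicated. The only point is that the planner consults the learned value estimates and never calls `reward` directly.

```python
from typing import Dict, List

def reward(outcome: str) -> float:
    """Hypothetical ground-truth reward: only 'help_user' is rewarded."""
    return 1.0 if outcome == "help_user" else 0.0

def train_critic(experienced: List[str], lr: float = 0.5, epochs: int = 20) -> Dict[str, float]:
    """Fit a tabular value estimate, using only outcomes that were actually experienced."""
    value: Dict[str, float] = {}
    for _ in range(epochs):
        for outcome in experienced:
            old = value.get(outcome, 0.0)
            value[outcome] = old + lr * (reward(outcome) - old)  # move estimate toward reward
    return value

def pick_plan(plans: List[str], value: Dict[str, float], novel_value: float) -> str:
    """Planner: consults the learned value estimates only, never `reward` itself."""
    return max(plans, key=lambda plan: value.get(plan, novel_value))

critic = train_critic(["help_user", "idle"])
plans = ["help_user", "idle", "seize_reward_channel"]
# ASSUMPTION: 5.0 stands in for however the critic happens to generalize to a never-tried plan.
chosen = pick_plan(plans, critic, novel_value=5.0)
```

Here the reward function is "perfect" by construction, yet the plan that gets chosen is the never-tried one, which `reward` would score at zero. Nothing in training ever corrected the critic's estimate for it.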

If I’m still missing where you’re coming from, happy to keep chatting :)

Replies from: Simon Skade↑ comment by Towards_Keeperhood (Simon Skade) · 2025-04-03T15:48:13.627Z · LW(p) · GW(p)

Thanks!

Another thing is, if the programmer wants CEV (for the sake of argument), and somehow (!!) writes an RL reward function in Python whose output perfectly matches the extent to which the AGI’s behavior advances CEV, then I disagree that this would “make inner alignment unnecessary”. I’m not quite sure why you believe that.

I was just imagining a fully omniscient oracle that could tell you for each action how good that action is according to your extrapolated preferences, in which case you could just explore a bit and always pick the best action according to that oracle. But nvm, I noticed that my first attempt at explaining what I feel is wrong sucked, so I dropped it.

- The AGI was doing the wrong thing but got rewarded anyway (or doing the right thing but got punished)

- The AGI was doing the right thing for the wrong reasons but got rewarded anyway (or doing the wrong thing for the right reasons but got punished).

This seems like a sensible breakdown to me, and I agree this seems like a useful distinction (although not a useful reduction of the alignment problem to subproblems, though I guess you agree here).

However, I think most people underestimate how many ways there are for the AI to do the right thing for the wrong reasons (namely they think it's just about deception), and I think it's not:

I think we need to make AI have a particular utility function. We have a training distribution where we have a ground-truth reward signal, but there are many different utility functions that are compatible with the reward on the training distribution, which assign different utilities off-distribution.

You could avoid talking about utility functions by saying "the learned value function just predicts reward", and that may work while you're staying within the distribution we actually gave reward on, since there all the utility functions compatible with the ground-truth reward still agree. But once you're going off distribution, what value you assign to some worldstates/plans depends on what utility function you generalized to.
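A minimal numerical sketch of this point, with entirely made-up functions: two candidate utility functions that agree exactly with the ground-truth reward on every training state, and then come apart off distribution. The one-dimensional "world state" and the specific function shapes are assumptions chosen only for illustration.

```python
from typing import List

# ASSUMPTION: world states are single floats, and training only covers [0, 1].
train_states: List[float] = [0.0, 0.25, 0.5, 0.75, 1.0]

def ground_truth_reward(x: float) -> float:
    """Hypothetical reward signal, only ever observed on `train_states`."""
    return x

def utility_a(x: float) -> float:
    """One generalization: 'more x is always better'."""
    return x

def utility_b(x: float) -> float:
    """Another generalization: identical on [0, 1], but decreasing beyond 1."""
    return x if x <= 1.0 else 2.0 - x

# Both candidates are fully compatible with the reward on the training distribution.
agree_on_train = all(
    utility_a(x) == utility_b(x) == ground_truth_reward(x) for x in train_states
)

# Off distribution they disagree about whether the same state is good or bad.
off_distribution_state = 3.0
gap = utility_a(off_distribution_state) - utility_b(off_distribution_state)
```

No amount of reward data from `train_states` distinguishes `utility_a` from `utility_b`; which one the system ends up with depends on its prior over generalizations, not on the reward.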

I think humans have particular not-easy-to-pin-down machinery inside them, that makes their utility function generalize to some narrow cluster of all ground-truth-reward-compatible utility functions, and a mind with a different mind design is unlikely to generalize to the same cluster of utility functions.

(Though we could aim for a different compatible utility function, namely the "indirect alignment" one that says "fulfill humans' CEV", which has lower complexity than the ones humans generalize to (since the value generalization prior doesn't need to be specified and can instead be inferred from observations about humans). (I think that is what's meant by "corrigibly aligned" in "Risks from learned optimization", though it has been a very long time since I read this.))

Actually, it may be useful to distinguish two kinds of this "utility vs reward mismatch":

1. Utility/reward being insufficiently defined outside of training distribution (e.g. for what programs to run on computronium).

2. What things in the causal chain producing the reward are the things you actually care about? E.g. that the reward button is pressed, that the human thinks you did something well, that you did something according to some proxy preferences.

Overall, I think the outer-vs-inner framing has some implicit connotation that for inner alignment we just need to make it internalize the ground-truth reward (as opposed to e.g. being deceptive). Whereas I think "internalizing ground-truth reward" isn't meaningful off distribution and it's actually a very hard problem to set up the system in a way that it generalizes in the way we want.

But maybe you're aware of that "finding the right prior so it generalizes to the right utility function" problem, and you see it as part of inner alignment.

Replies from: steve2152↑ comment by Steven Byrnes (steve2152) · 2025-04-03T17:34:55.850Z · LW(p) · GW(p)

I was just imagining a fully omniscient oracle that could tell you for each action how good that action is according to your extrapolated preferences, in which case you could just explore a bit and always pick the best action according to that oracle.

OK, let’s attach this oracle to an AI. The reason this thought experiment is weird is that the goodness of an AI’s action right now cannot be evaluated independent of an expectation about what the AI will do in the future. E.g., if the AI says the word “The…”, is that a good or bad way for it to start its sentence? It’s kinda unknowable without knowing what its later words will be.

So one thing you can do is say that the AI bumbles around and takes reversible actions, rolling them back whenever the oracle says no. And the oracle is so good that we get CEV that way. This is a coherent thought experiment, and it does indeed make inner alignment unnecessary—but only because we’ve removed all the intelligence from the so-called AI! The AI is no longer making plans, so the plans don’t need to be accurately evaluated for their goodness (which is where inner alignment problems happen).
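That first version can be sketched in a few lines, which is itself a hint at how little the "AI" is doing. Everything here is a hypothetical stand-in: actions are integers, the world state is just the list of actions kept so far, and the oracle is an arbitrary predicate supplied by the caller.

```python
import random
from typing import Callable, List

def bumble(
    actions: List[int],
    oracle: Callable[[List[int]], bool],
    steps: int,
    seed: int = 0,
) -> List[int]:
    """Take random reversible actions, undoing each one the oracle rejects."""
    rng = random.Random(seed)
    history: List[int] = []
    for _ in range(steps):
        history.append(rng.choice(actions))  # act with no planning at all
        if not oracle(history):
            history.pop()  # roll the action back
    return history

# ASSUMPTION: a toy oracle that approves only non-decreasing histories.
def toy_oracle(history: List[int]) -> bool:
    return all(a <= b for a, b in zip(history, history[1:]))

result = bumble(actions=[1, 2, 3], oracle=toy_oracle, steps=50)
```

All of the selection pressure comes from `oracle`; `bumble` never evaluates a plan, so there is no learned evaluation that could come apart from the oracle's judgment.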

Alternately, we could flesh out the thought experiment by saying that the AI does have a lot of intelligence and planning, and that the oracle is doing the best it can to anticipate the AI’s behavior (without reading the AI’s mind). In that case, we do have to worry about the AI having bad motivation, and tricking the oracle by doing innocuous-seeming things until it suddenly deletes the oracle subroutine out of the blue (treacherous turn). So in that version, the AI’s inner alignment is still important. (Unless we just declare that the AI’s alignment is unnecessary in the first place, because we’re going to prevent treacherous turns via option control [LW · GW].)

However, I think most people underestimate how many ways there are for the AI to do the right thing for the wrong reasons (namely they think it's just about deception), and I think it's not:

Yeah I mostly think this part of your comment is listing reasons that inner alignment might fail, a.k.a. reasons that goal misgeneralization / malgeneralization can happen. (Which is a fine thing to do!)

If someone thinks inner misalignment is synonymous with deception, then they’re confused. I’m not sure how such a person would have gotten that impression. If it’s a very common confusion, then that’s news to me.

Inner misalignment can lead to deception. But outer misalignment can lead to deception too! Any misalignment can lead to deception, regardless of whether the source of that misalignment was “outer” or “inner” or “both” or “neither”.

“Deception” is deliberate by definition—otherwise we would call it by another term, like “mistake”. That’s why it has to happen after there are misaligned motivations, right?

Overall, I think the outer-vs-inner framing has some implicit connotation that for inner alignment we just need to make it internalize the ground-truth reward

OK, so I guess I’ll put you down as a vote for the terminology “goal misgeneralization” (or “goal malgeneralization”), rather than “inner misalignment”, as you presumably find that the former makes it more immediately obvious what the concern is. Is that fair? Thanks.

I think we need to make AI have a particular utility function. We have a training distribution where we have a ground-truth reward signal, but there are many different utility functions that are compatible with the reward on the training distribution, which assign different utilities off-distribution.

You could avoid talking about utility functions by saying "the learned value function just predicts reward", and that may work while you're staying within the distribution we actually gave reward on, since there all the utility functions compatible with the ground-truth reward still agree. But once you're going off distribution, what value you assign to some worldstates/plans depends on what utility function you generalized to.

I think I fully agree with this in spirit but not in terminology!

I just don’t use the term “utility function” at all in this context. (See §9.5.2 here [LW · GW] for a partial exception.) There’s no utility function in the code. There’s a learned value function, and it outputs whatever it outputs, and those outputs determine what plans seem good or bad to the AI, including OOD plans like treacherous turns.