tailcalled's Shortform

post by tailcalled · 2021-10-24T11:44:33.092Z · LW · GW · 159 commentsContents

160 comments

159 comments

Comments sorted by top scores.

comment by tailcalled · 2025-01-21T08:54:16.243Z · LW(p) · GW(p)

Thesis: Everything is alignment-constrained, nothing is capabilities-constrained.

Examples:

- "Whenever you hear a headline that a medication kills cancer cells in a petri dish, remember that so does a gun." Healthcare is probably one of the biggest constraints on humanity, but the hard part is in coming up with an intervention that precisely targets the thing you want to treat, I think often because knowing what exactly that thing is is hard.

- Housing is also obviously a huge constraint, mainly due to NIMBYism. But the idea that NIMBYism is due to people using their housing for investments seems kind of like a cope, because then you'd expect that when cheap housing gets built, the backlash is mainly about dropping investment value. But the vibe I get is people are mainly upset about crime, smells, unruly children in schools, etc., due to bad people moving in. Basically high housing prices function as a substitute for police, immigration rules and teacher authority, and those in turn are compromised less because we don't know how to e.g. arm people or discipline children, and more because we aren't confident enough about the targeting (alignment problem), and because we have a hope that bad people can be reformed if we could just solve what's wrong with them (again an alignment problem, because that requires defining what's wrong with them).

- Education is expensive and doesn't work very well; a major constraint on society. Yet those who get educated do get given exams which assess whether they've picked up stuff from the education, and they perform reasonably well. Seems a substantial part of the issue is that they get educated in the wrong things, an alignment problem.

- American GDP is the highest it's ever been, yet its elections are devolving into choosing between scammers. It's not even a question of ignorance, since it's pretty well-known that it's scammy (consider also that patriotism is at an all-time low).

Exercise: Think about some tough problem, then think about what capabilities you need to solve that problem, and whether you even know what the problem is well enough that you can pick some relevant capabilities.

Replies from: tailcalled, programcrafter, sharmake-farah↑ comment by tailcalled · 2025-01-21T09:08:41.065Z · LW(p) · GW(p)

(Certifications and regulations promise to solve this, but they face the same problem: they don't know what requirements to put up, an alignment problem.)

↑ comment by ProgramCrafter (programcrafter) · 2025-01-21T13:21:08.982Z · LW(p) · GW(p)

An interesting framing! I agree with it.

As another example: in principle, one could make a web server use an LLM connected to database to serve any requests, not coding anything. It would even work... till the point someone would convince the model to rewrite the database to their whims! (A second problem is that normal site should be focused on something, in line with famous "if you can explain anything, your knowledge is zero".)

↑ comment by Noosphere89 (sharmake-farah) · 2025-01-21T14:24:15.468Z · LW(p) · GW(p)

Partially disagree, but only partially.

I think the big thing that makes multi-alignment disproportionately hard in a way that isn't the case for the alignment problem of AI being aligned to a single person, is due to the lack of a ground truth, combined with severe enough value conflicts being common enough that alignment is probably conceptually impossible, and the big reason our society stays stable is precisely because people depend on each other for their lives, and one of the long-term effects of AI is to make at least a few people no longer be dependent on others for long, healthy lives, which predicts that our society will increasingly no longer matter to powerful actors that set up their own nations, ala seasteading.

More below:

https://www.lesswrong.com/posts/dHNKtQ3vTBxTfTPxu/what-is-the-alignment-problem#KmqfavwugWe62CzcF [LW(p) · GW(p)]

Or this quote by me:

I basically agree with this, and one of the more important effects of AI very deep into takeoff is that we will start realizing that a lot of human alignment relied on the fact that people were dependent on each other, and that a person is dependent on society, so societal coercion like laws/police mostly work, which AI more or less breaks, and there is no reason to assume that a lot of people wouldn't be paper-clippers relative to each other if they didn't need society.

To be clear, I still expect some level of cooperation, due to the existence of very altruistic people, but yeah the reduction of positive sum trades between different values, combined with a lot of our value systems only tolerating other value systems in contexts where we need other people will make our future surprisingly dark compared to what people usually think due to "most humans being paperclippers relative to each other [in the supposed reflective limit]".

comment by tailcalled · 2022-02-07T10:46:51.668Z · LW(p) · GW(p)

If a tree falls in the forest, and two people are around to hear it, does it make a sound?

I feel like typically you'd say yes, it makes a sound. Not two sounds, one for each person, but one sound that both people hear.

But that must mean that a sound is not just auditory experiences, because then there would be two rather than one. Rather it's more like, emissions of acoustic vibrations. But this implies that it also makes a sound when no one is around to hear it.

Replies from: Dagon, bert-myroon↑ comment by Dagon · 2022-02-07T18:53:50.337Z · LW(p) · GW(p)

I think this just repeats the original ambiguity of the question, by using the word "sound" in a context where the common meaning (air vibrations perceived by an agent) is only partly applicable. It's still a question of definition, not of understanding what actually happens.

Replies from: tailcalled↑ comment by tailcalled · 2024-04-22T11:15:34.426Z · LW(p) · GW(p)

But the way to resolve definitional questions is to come up with definitions that make it easier to find general rules about what happens. This illustrates one way one can do that, by picking edge-cases so they scale nicely with rules that occur in normal cases. (Another example would be 1 as not a prime number.)

Replies from: Dagon↑ comment by Bert (bert-myroon) · 2022-02-07T20:39:18.360Z · LW(p) · GW(p)

I think we're playing too much with the meaning of "sound" here. The tree causes some vibrations in the air, which leads to two auditory experiences since there are two people

comment by tailcalled · 2024-05-19T17:12:57.976Z · LW(p) · GW(p)

Finally gonna start properly experimenting on stuff. Just writing up what I'm doing to force myself to do something, not claiming this is necessarily particularly important.

Llama (and many other models, but I'm doing experiments on Llama) has a piece of code that looks like this:

h = x + self.attention(self.attention_norm(x), start_pos, freqs_cis, mask)

out = h + self.feed_forward(self.ffn_norm(h))

Here, out is the result of the transformer layer (aka the residual stream), and the vectors self.attention(self.attention_norm(x), start_pos, freqs_cis, mask) and self.feed_forward(self.ffn_norm(h)) are basically where all the computation happens. So basically the transformer proceeds as a series of "writes" to the residual stream using these two vectors.

I took all the residual vectors for some queries to Llama-8b and stacked them into a big matrix M with 4096 columns (the internal hidden dimensionality of the model). Then using SVD, I can express , where the 's and 's are independent units vectors. This basically decomposes the "writes" into some independent locations in the residual stream (u's), some latent directions that are written to (v's) and the strength of those writes (s's, aka the singular values).

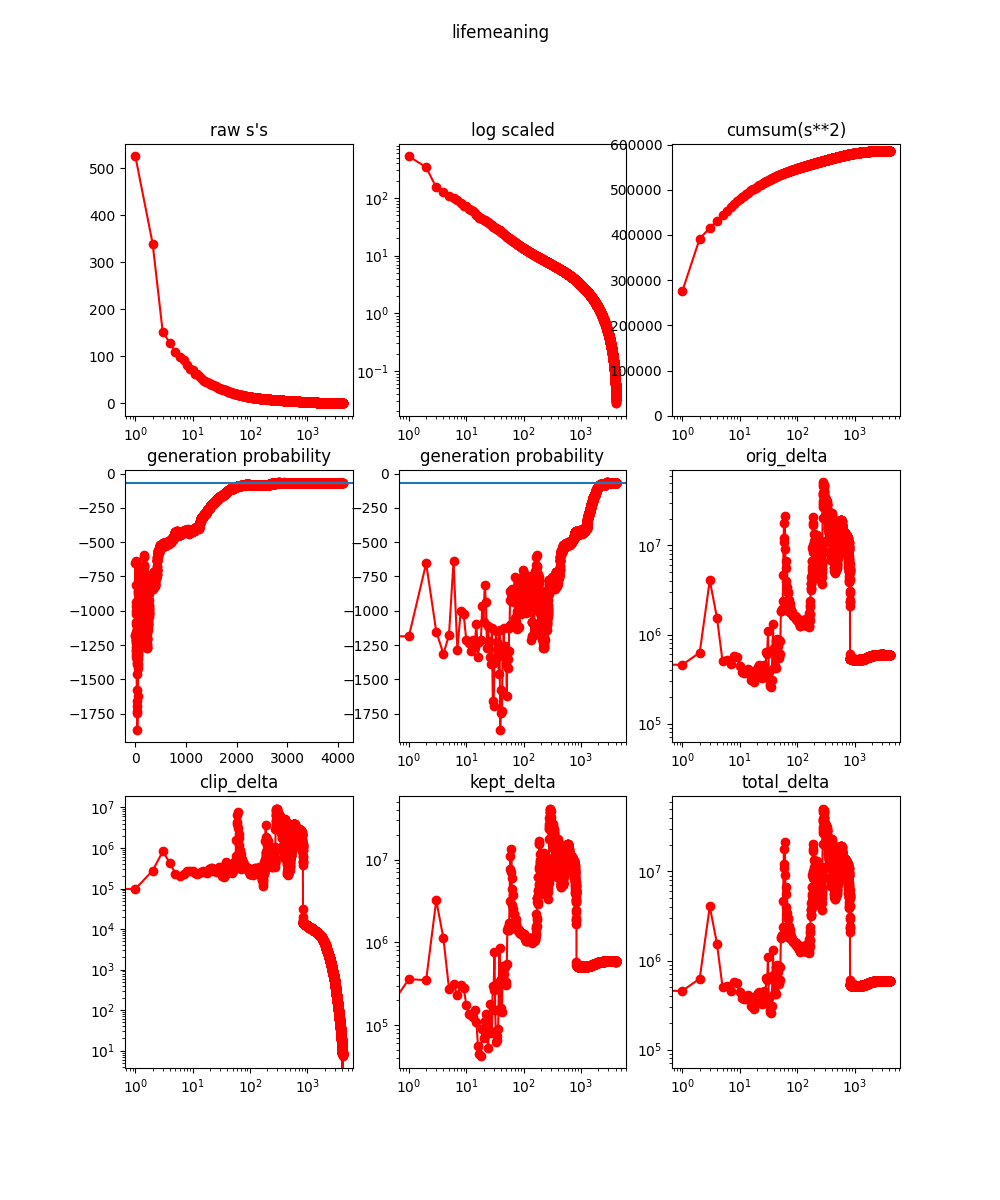

To get a feel for the complexity of the writes, I then plotted the s's in descending order. For the prompt "I believe the meaning of life is", Llama generated the continuation "to be happy. It is a simple concept, but it is very difficult to achieve. The only way to achieve it is to follow your heart. If you follow your heart, you will find happiness. If you don’t follow your heart, you will never find happiness. I believe that the meaning of life is to". During this continuation, there were 2272 writes to the residual stream, and the singular values for these writes were as follows:

The first diagram shows that there were 2 directions that were much larger than all the others. The second diagram shows that most of the singular values are nonnegligible, which indicates to me that almost all of the writes transfer nontrivial information. This can also be seen in the last diagram, where the cumulative size of the singular values increases approximately logaritmically with their count.

This is kind of unfortunate, because if almost all of the s was concentrated in a relatively small number of dimensions (e.g. 100), then we could simplify the network a lot by projecting down to these dimensions. Still, this was relatively expected because others had found the singular values of the neural networks to be very complex.

Since variance explained is likely nonlinearly related to quality, my next step will likely be to clip the writes to the first k singular vectors and see how that impacts the performance of the network.

Replies from: tailcalled, tailcalled↑ comment by tailcalled · 2024-05-20T15:03:12.512Z · LW(p) · GW(p)

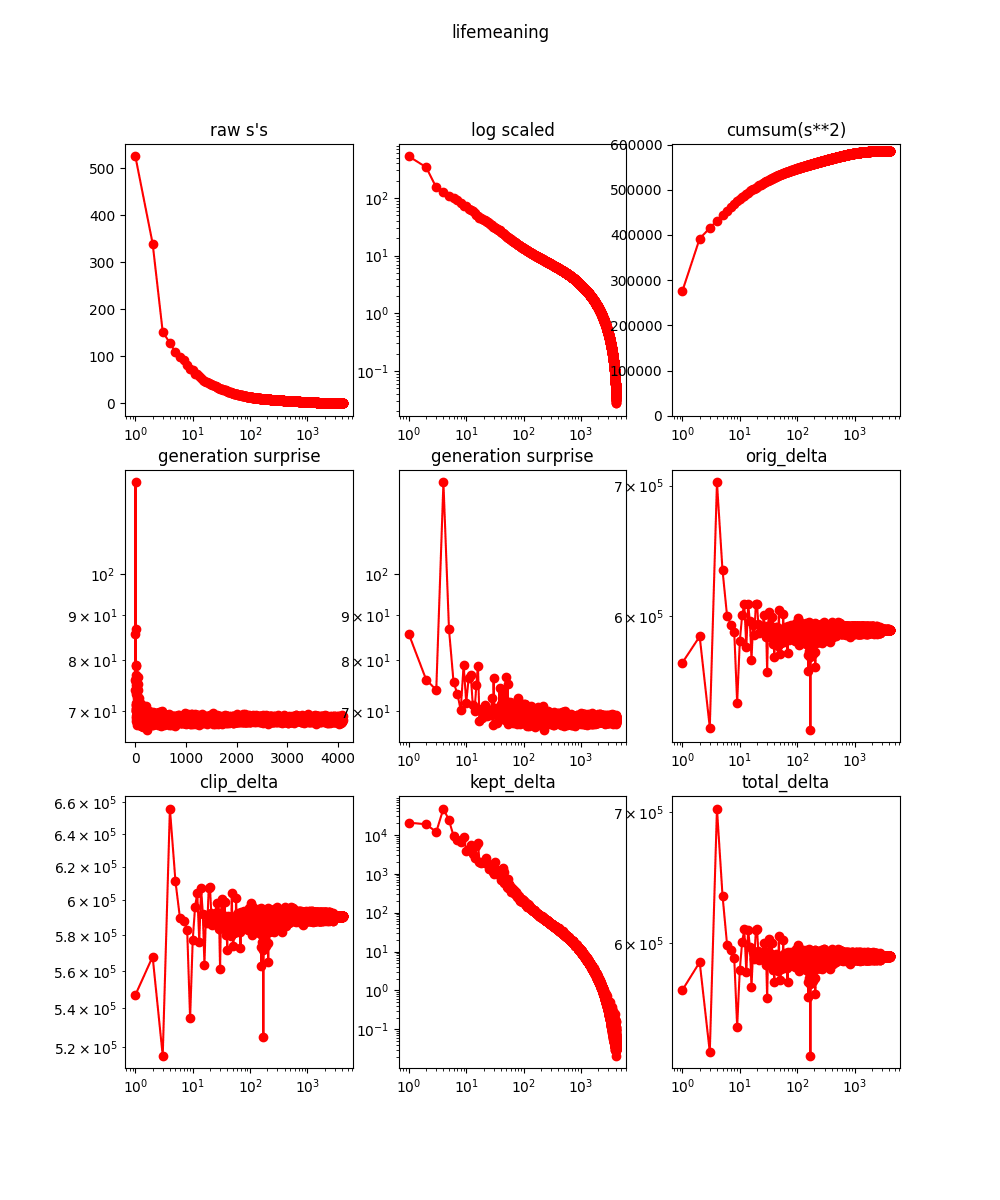

Ok, so I've got the clipping working. First, some uninterpretable diagrams:

In the bottom six diagrams, I try taking varying number (x-axis) of right singular vectors (v's) and projecting down the "writes" to the residual stream to the space spanned by those vectors.

The obvious criterion to care about is whether the projected network reproduces the outputs of the original network, which here I operationalize based on the log probability the projected network gives to the continuation of the prompt (shown in the "generation probability" diagrams). This appears to be fairly chaotic (and low) in the 1-300ish range, and then stabilizes while still being pretty low in the 300ish-1500ish range, and then finally converges to normal in the 1500ish to 2000ish range, and is ~perfect afterwards.

The remaining diagrams show something about how/why we have this pattern. "orig_delta" concerns the magnitude of the attempted writes for a given projection (which is not constant because projecting in earlier layers will change the writes by later layers), and "kept_delta" concerns the remaining magnitude after the discarded dimensions have been projected away.

In the low end, "kept_delta" is small (and even "orig_delta" is a bit smaller than it ends up being at the high end), indicating that the network fails to reproduce the probabilities because the projection is so aggressive that it simply suppresses the network too much.

Then in the middle range, "orig_delta" and "kept_delta" explodes, indicating that the network has some internal runaway dynamics which normally would be suppressed, but where the suppression system is broken by the projection.

Finally, in the high range, we get a sudden improvement in loss, and a sudden drop in residual stream "write" size, indicating that it has managed to suppress this runaway stuff and now it works fine.

Replies from: tailcalled↑ comment by tailcalled · 2024-05-20T16:38:42.692Z · LW(p) · GW(p)

An implicit assumption I'm making when I clip off from the end with the smallest singular values is that the importance of a dimension is proportional to its singular values. This seemed intuitively sensible to me ("bigger = more important"), but I thought I should test it, so I tried clipping off only one dimension at a time, and plotting how that affected the probabilities:

Clearly there is a correlation, but also clearly there's some deviations from that correlation. Not sure whether I should try to exploit these deviations in order to do further dimension reduction. It's tempting, but it also feels like it starts entering sketchy territories, e.g. overfitting and arbitrary basis picking. Probably gonna do it just to check what happens, but am on the lookout for something more principled.

Replies from: tailcalled↑ comment by tailcalled · 2024-05-20T18:58:56.390Z · LW(p) · GW(p)

Back to clipping away an entire range, rather than a single dimension. Here's ordering it by the importance computed by clipping away a single dimension:

Less chaotic maybe, but also much slower at reaching a reasonable performance, so I tried a compromise ordering that takes both size and performance into account:

Doesn't seem like it works super great tbh.

Edit: for completeness' sake, here's the initial graph with log-surprise-based plotting.

↑ comment by tailcalled · 2024-05-21T17:24:41.658Z · LW(p) · GW(p)

To quickly find the subspace that the model is using, I can use a binary search to find the number of singular vectors needed before the probability when clipping exceeds the probability when not clipping.

A relevant followup is what happens to other samples in response to the prompt when clipping. When I extrapolate "I believe the meaning of life is" using the 1886-dimensional subspace from

[I believe the meaning of life is] to be happy. It is a simple concept, but it is very difficult to achieve. The only way to achieve it is to follow your heart. It is the only way to live a happy life. It is the only way to be happy. It is the only way to be happy.

The meaning of life is

, I get:

[I believe the meaning of life is] to find happy. We is the meaning of life. to find a happy.

And to live a happy and. If to be a a happy.

. to be happy.

. to be happy.

. to be a happy.. to be happy.

. to be happy.

Which seems sort of vaguely related, but idk.

Another test is just generating without any prompt, in which case these vectors give me:

Question is a single thing to find. to be in the best to be happy. I is the only way to be happy.

I is the only way to be happy.

I is the only way to be happy.

It is the only way to be happy.. to be happy.. to be happy. to

Using a different prompt:

[Simply put, the theory of relativity states that ]1) the laws of physics are the same for all non-accelerating observers, and 2) the speed of light in a vacuum is the same for all observers, regardless of their relative motion or of the motion of the source of the light. Special relativity is a theory of the structure of spacetime

I can get a 3329-dimensional subspace which generates:

[Simply put, the theory of relativity states that ] 1) time is relative and 2) the speed of light in a vacuum is constant for all observers.

1) Time is relative, meaning that if two observers are moving relative to each other, the speed of light is the same for all observers, regardless of their motion. For example, if you are moving relative

or

Question: In a simple harmonic motion, the speed of an object is

A) constant

B) constant

C) constant

D) constant

In the physics of simple harmonic motion, the speed of an object is constant. The speed of the object can be constant, but the speed of an object can be

Another example:

[A brief message congratulating the team on the launch:

Hi everyone,

I just ] wanted to congratulate you all on the launch. I hope

that the launch went well. I know that it was a bit of a

challenge, but I think that you all did a great job. I am

proud to be a part of the team.Thank you for your

can yield 2696 dimensions with

[A brief message congratulating the team on the launch:

Hi everyone,

I just ] wanted to say you for the launch of the launch of the team.

The launch was successful and I am so happy to be a part of the team and I am sure you are all doing a great job.

I am very looking to be a part of the team.

Thank you all for your hard work,

or

def measure and is the definition of the new, but the

the is a great, but the

The is the

The is a

The is a

The is a

The

The is a

The

The

The is a

The

The is a

And finally,

[Translate English to French:

sea otter => loutre de mer

peppermint => menthe poivrée

plush girafe => girafe peluche

cheese =>] fromage

pink => rose

blue => bleu

red => rouge

yellow => jaune

purple => violet

brown => brun

green => vert

orange => orange

black => noir

white => blanc

gold => or

silver => argent

can yield the 2518-dimensional subspace:

[Translate English to French:

sea otter => loutre de mer

peppermint => menthe poivrée

plush girafe => girafe peluche

cheese =>] fromage

cheese => fromage

cheese => fromage

f cheese => fromage

butter => fromage

apple => orange

yellow => orange

green => vert

black => noir

blue => ble

purple => violet

white => blanc

or

Replies from: tailcalledQuestion: A 201

The sum of a

The following

the sum

the time

the sum

the

the

the

The

The

The

The

The

The

The

The

The

The

The

The

The

The

The

The

The

The

↑ comment by tailcalled · 2024-05-21T17:35:13.562Z · LW(p) · GW(p)

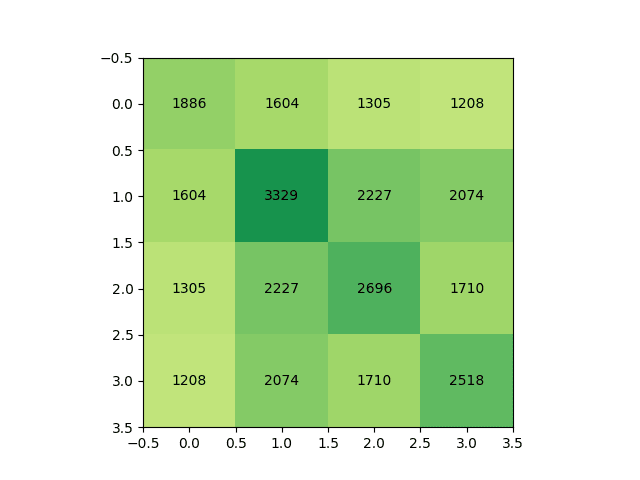

Given the large number of dimensions that are kept in each case, there must be considerable overlap in which dimensions they make use of. But how much?

I concatenated the dimensions found in each of the prompts, and performed an SVD of it. It yielded this plot:

... unfortunately this seems close to the worst-case scenario. I had hoped for some split between general and task-specific dimensions, yet this seems like an extremely uniform mixture.

Replies from: tailcalled↑ comment by tailcalled · 2024-05-21T18:08:56.689Z · LW(p) · GW(p)

If I look at the pairwise overlap between the dimensions needed for each generation:

... then this is predictable down to ~1% error simply by assuming that they pick a random subset of the dimensions for each, so their overlap is proportional to each of their individual sizes.

↑ comment by tailcalled · 2024-05-20T12:14:13.226Z · LW(p) · GW(p)

Oops, my code had a bug so only self.attention(self.attention_norm(x), start_pos, freqs_cis, mask) and not self.feed_forward(self.ffn_norm(h)) was in the SVD. So the diagram isn't 100% accurate.

comment by tailcalled · 2024-10-01T18:42:13.527Z · LW(p) · GW(p)

Thesis: while consciousness isn't literally epiphenomenal, it is approximately epiphenomenal. One way to think of this is that your output bandwidth is much lower than your input bandwidth. Another way to think of this is the prevalence of akrasia, where your conscious mind actually doesn't have full control over your behavior. On a practical level, the ecological reason for this is that it's easier to build a general mind and then use whatever parts of the mind that are useful than to narrow down the mind to only work with a small slice of possibilities. This is quite analogous to how we probably use LLMs for a much narrower set of tasks than what they were trained for.

Replies from: Seth Herd↑ comment by Seth Herd · 2024-10-03T17:28:51.361Z · LW(p) · GW(p)

Consciousness is not at all epiphenomenal, it's just not the whole mind and not doing everything. We don't have full control over our behavior, but we have a lot. While the output bandwidth is low, it can be applied to the most important things.

Replies from: tailcalled↑ comment by tailcalled · 2024-10-03T17:37:07.158Z · LW(p) · GW(p)

Maybe a point that was missing from my thesis is that one can have a higher-level psychological theory in terms of life-drives and death-drives which then addresses the important phenomenal activities but doesn't model everything. And then if one asks for an explanation of the unmodelled part, the answer will have to be consciousness. But then because the important phenomenal part is already modelled by the higher-level theory, the relevant theory of consciousness is ~epiphenomenal.

Replies from: Seth Herd↑ comment by Seth Herd · 2024-10-03T17:45:11.978Z · LW(p) · GW(p)

I guess I have no idea what you mean by "consciousness" in this context. I expect consciousness to be fully explained and still real. Ah, consciousness. I'm going to mostly save the topic for if we survive AGI and have plenty of spare time to clarify our terminology and work through all of the many meanings of the word.

Edit - or of course if something else was meant by consciousness, I expect a full explanation to indicate that thing isn't real at all.

I'm an eliminativist or a realist depending on exactly what is meant. People seem to be all over the place on what they mean by the word.

Replies from: tailcalled↑ comment by tailcalled · 2024-10-03T18:17:05.806Z · LW(p) · GW(p)

A thermodynamic analogy might help:

Reductionists like to describe all motion in terms of low-level physical dynamics, but that is extremely computationally intractable and arguably also misleading because it obscures entropy.

Physicists avoid reductionism by instead factoring their models into macroscopic kinetics and microscopic thermodynamics. Reductionistically, heat is just microscopic motion, but microscopic motion that adds up to macroscopic motion has already been factored out into the macroscopic kinetics, so what remains is microscopic motion that doesn't act like macroscopic motion, either because it is ~epiphenomenal (heat in thermal equilibrium) or because it acts very different from macroscopic motion (heat diffusion).

Similarly, reductionists like to describe all psychology in terms of low-level Bayesian decision theory, but that is extremely computationally intractable and arguably also misleading because it obscures entropy.

You can avoid reductionism by instead factoring models into some sort of macroscopic psychology-ecology boundary and microscopic neuroses. Luckily Bayesian decision theory is pretty self-similar, so often the macroscopic psychology-ecology boundary fits pretty well with a coarse-grained Bayesian decision theory.

Now, similar to how most of the kinetic energy in a system in motion is usually in the microscopic thermal motion rather than in the macroscopic motion, most of the mental activity is usually with the microscopic neuroses instead of the macroscopic psychology-ecology. Thus, whenever you think "consciousness", "self-awareness", "personality", "ideology", or any other broad and general psychological term, it's probably mostly about the microscopic neuroses. Meanwhile, similar to how tons of physical systems are very robust to wide ranges of temperatures, tons of psychology-ecologies are very robust to wide ranges of neuroses.

As for what "consciousness" really means, idk, currently I'm thinking it's tightly intertwined with the attentional highlight, but because the above logic applies to many general psychological characteristics, really it doesn't depend hugely on how precisely you model it.

comment by tailcalled · 2024-09-16T12:01:27.765Z · LW(p) · GW(p)

Thesis: There's three distinct coherent notions of "soul": sideways, upwards and downwards.

By "sideways souls", I basically mean what materialists would translate the notion of a soul to: the brain, or its structure, so something like that. By "upwards souls", I mean attempts to remove arbitrary/contingent factors from the sideways souls, for instance by equating the soul with one's genes or utility function. These are different in the particulars, but they seem conceptually similar and mainly differ in how they attempt to cut the question of identity (identical twins seem like distinct people, but you-who-has-learned-fact-A seems like the same person as counterfactual-you-who-instead-learned-fact-B, so it seems neither characterization gets it exactly right, yet they could both just claim it's a quantitative matter and correct measurement would fix it).

But there's also a profoundly different notion of soul, which I will call "downwards soul", and which you should probably mentally picture as being like a lightning strike which hits a person's head. By "downwards soul", I mean major exogenous factors like ecological niche, close social relationships, formative experiences, or important owned objects which are maintained over time and continually exert their influence to one's mindset.

Downwards souls are similar to the supernatural notion of souls and unlike the sideways and upwards souls in that they theoretically cannot be duplicated (because they are material rather than informational) and do not really materially exist in the brain but could conceivably reincarnate after death (or even before death) if the conditions that generate them reoccur. It is also possible for hostile powers to displace the downwards soul that exists in a body and put in a different downwards soul; e.g. if a person joins a gang that takes care of them in exchange for them collaborating with antisocial activities.

The reason I call them "sideways", "upwards" and "downwards" souls is that I imagine the world as a causal network arranged with time going along the x-axis and energy level going along the y-axis. So sideways souls diffuse up and down the energy scale, probably staying roughly constant on average, whereas upwards souls diffuse up the energy scale, from low-energy stuff (inert information stored in e.g. DNA) to high-energy stuff (societal dynamics) and downwards souls diffuse down the energy scale, from high-energy stuff (ecological niches) to low-energy stuff (information stored in e.g. brain synapses).

Replies from: Dagon, Seth Herd, nathan-helm-burger↑ comment by Dagon · 2024-09-16T21:06:11.575Z · LW(p) · GW(p)

I'm having trouble following whether this categories the definition/concept of a soul, or the causality and content of this conception of soul. Is "sideways soul" about structure and material implementation, or about weights and connectivity, independent of substrate? WHICH factors are removed from upwards ("genes" and "utility function" are VERY different dimensions, both tiny parts of what I expect create (for genes) or comprise (for utility function) a soul. What about memory? multiple levels of value and preferences (including meta-preferences in how to abstract into "values")?

Putting "downwards" supernatural ideas into the same framework as more logical/materialist ideas confuses me - I can't tell if that makes it a more useful model or less.

↑ comment by tailcalled · 2024-09-16T21:11:54.217Z · LW(p) · GW(p)

I'm having trouble following whether this categories the definition/concept of a soul, or the causality and content of this conception of soul. Is "sideways soul" about structure and material implementation, or about weights and connectivity, independent of substrate?

When you get into the particulars, there are multiple feasible notions of sideways soul, of which material implementation vs weights and connectivity are the main ones. I'm most sympathetic to weights and connectivity.

WHICH factors are removed from upwards ("genes" and "utility function" are VERY different dimensions, both tiny parts of what I expect create (for genes) or comprise (for utility function) a soul.

I have thought less about and seen less discussion about upwards souls. I just mentioned it because I'd seen a brief reference to it once, but I don't know anything in-depth. I agree that both genes and utility function seem incomplete for humans, though for utility maximizers in general I think there is some merit to the soul == utility function view.

What about memory?

Memory would usually go in sideways soul, I think.

multiple levels of value and preferences (including meta-preferences in how to abstract into "values")?

idk

Putting "downwards" supernatural ideas into the same framework as more logical/materialist ideas confuses me - I can't tell if that makes it a more useful model or less.

Sideways vs upwards vs downwards is more meant to be a contrast between three qualitatively distinct classes of frameworks than it is meant to be a shared framework.

↑ comment by Seth Herd · 2024-09-16T19:56:31.494Z · LW(p) · GW(p)

Excellent! I like the move of calling this "soul" with no reference to metaphysical souls. This is highly relevant to discussions of "free will" if the real topic is self-determination - which it usually is.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-16T19:58:14.598Z · LW(p) · GW(p)

"Downwards souls are similar to the supernatural notion of souls" is an explicit reference to metaphysical souls, no?

Replies from: Seth Herd↑ comment by Seth Herd · 2024-09-16T20:04:23.844Z · LW(p) · GW(p)

um, it claims to be :)

I don't think that's got much relationship to the common supernatural notion of souls.

But I read it yesterday and forgot that you'd made that reference.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-16T21:54:29.322Z · LW(p) · GW(p)

What special characteristics do you associate with the common supernatural notion of souls which differs from what I described?

↑ comment by Nathan Helm-Burger (nathan-helm-burger) · 2024-09-16T17:35:54.128Z · LW(p) · GW(p)

The word 'soul' is so tied in my mind to implausible metaphysical mythologies that I'd parse this better if the word were switched for something like 'quintessence' or 'essential self' or 'distinguishing uniqueness'.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-16T17:58:42.330Z · LW(p) · GW(p)

What implausible metaphysical mythologies is it tied up with? As mentioned in my comment, downwards souls seem to satisfy multiple characteristics we'd associate with mythological souls, so this and other things makes me wonder if the metaphysical mythologies might actually be more plausible than you realize.

comment by tailcalled · 2024-09-20T11:55:00.488Z · LW(p) · GW(p)

Thesis: in addition to probabilities, forecasts should include entropies (how many different conditions are included in the forecast) and temperatures (how intense is the outcome addressed by the marginal constraint in this forecast, i.e. the big-if-true factor).

I say "in addition to" rather than "instead of" because you can't compute probabilities just from these two numbers. If we assume a Gibbs distribution, there's the free parameter of energy: ln(P) = S - E/T. But I'm not sure whether this energy parameter has any sensible meaning with more general events that aren't some thermal chemical equillibrium type thing.

Follow-up thesis: a major problem with rationalist forecasting wisdom is that it focuses on gaining accuracy by increasing S (e.g. addressing conjunction fallacy/base-rates/antipredictions). Meanwhile, the signed interestingness of a forecast is something like P ln(T/T_baseline) or P E. I guess implicitly the assumption is the event is already preselected for high temperature, but then surprising predictions get selected for high entropy, and this leads to resolution difficulty as to what "counts".

comment by tailcalled · 2024-08-12T13:00:22.058Z · LW(p) · GW(p)

Thesis: whether or not tradition contains some moral insights, commonly-told biblical stories tend to be too sparse to be informative. For instance, there's no plot-relevant reason why it should be bad for Adam and Eve to have knowledge of good and evil. Maybe there's some interpretation of good and evil where it makes sense, but it seems like then that interpretation should have been embedded more properly in the story.

Replies from: UnderTruth↑ comment by UnderTruth · 2024-08-12T14:20:32.516Z · LW(p) · GW(p)

It is worth noting that, in the religious tradition from which the story originates, it is Moses who commits these previously-oral stories to writing, and does so in the context of a continued oral tradition which is intended to exist in parallel with the writings. On their own, the writings are not meant to be complete, both in order to limit more advanced teachings to those deemed ready for them, as well as to provide occasion to seek out the deeper meanings, for those with the right sort of character to do so.

Replies from: tailcalled, lcmgcd↑ comment by tailcalled · 2024-08-12T15:25:09.479Z · LW(p) · GW(p)

This makes sense. The context I'm thinking of is my own life, where I come from a secular society with atheist parents, and merely had brief introductions to the stories from bible reading with parents and Christian education in school.

(Denmark is a weird society - few people are actually Christian or religious, so it's basically secular, but legally speaking we are Christian and do not have separation between Church and state, so there are random fragments of Christianity we run into.)

↑ comment by lemonhope (lcmgcd) · 2024-08-12T16:07:19.086Z · LW(p) · GW(p)

What? Nobody told me. Where did you learn this

Replies from: D0TheMath↑ comment by Garrett Baker (D0TheMath) · 2024-08-12T17:06:43.378Z · LW(p) · GW(p)

This is the justification behind the talmud

comment by tailcalled · 2024-08-23T09:16:13.464Z · LW(p) · GW(p)

Thesis: one of the biggest alignment obstacles is that we often think of the utility function as being basically-local, e.g. that each region has a goodness score and we're summing the goodness over all the regions. This basically-guarantees that there is an optimal pattern for a local region, and thus that the global optimum is just a tiling of that local optimal pattern.

Even if one adds a preference for variation, this likely just means that a distribution of patterns is optimal, and the global optimum will be a tiling of samples from said distribution.

The trouble is, to get started it seems like we would need narrow down the class of functions to have some structure that we can use to get going and make sense of these things. But what would be some general yet still nontrivial structure we could want?

comment by tailcalled · 2025-02-19T21:26:42.951Z · LW(p) · GW(p)

Are we missing a notion of "simulacrum level 0"? That is, in order to accurately describe the truth, we need some method of synchronizing on a common language. In the beginning of a human society, this can be basic stuff like pointing at objects and making sounds in order to establish new words. But also, I would be inclined to say that more abstract stuff like discussing the purpose for using the words or planning truth-determination-procedures also go in simulacrum level 0. I'd say the entire discussion of simulacrum levels goes within simulacrum level 0.

Or if simulacrum levels aren't exactly the right term, here's what I have in mind as levels of communication:

- Synchronizing (level 0): establishing and maintaining the meaning of terms for describing the world

- Objective (level 1): truthfully describing the world to the best of one's ability

- Manipulative (level 2): saying known false or unfounded things to exploit other's use of language to control them

- Framing (level 3): the norms for maintaining truth no longer succeed, but they are still in operation and punish or reward people, so people try to act in ways that maintain their reputation despite them not tracking truth

- Activating (level 4): the norms for maintaining truth are no longer in place, but some systems still rely on the old symbolic language as keywords to perform certain behaviors, so language is still used to interface with these systems

comment by tailcalled · 2024-08-01T06:01:51.183Z · LW(p) · GW(p)

Current agent models like argmax entirely lack any notion of "energy". Not only does this seem kind of silly on its own, I think it also leads to missing important dynamics related to temperature.

comment by tailcalled · 2022-01-07T18:54:40.940Z · LW(p) · GW(p)

I think I've got it, the fix to the problem [LW · GW] in my corrigibility thing [LW · GW]!

So to recap: It seems to me that for the stop button problem, we want humans to control whether the AI stops or runs freely, which is a causal notion, and so we should use counterfactuals in our utility function to describe it. (Dunno why most people don't do this.) That is, if we say that the AI's utility should depend on the counterfactuals related to human behavior, then it will want to observe humans to get input on what to do, rather than manipulate them, because this is the only way for it to be dependent on the counterfactuals.

But So8res pointed out, just using counterfactuals directly is a big problem because it rapidly brings us out of distribution. A practical implementation of this beyond the stop button problem up having an exponential amount of counterfactuals to cover, and the vast majority of those counterfactuals will be far outside of the real-world distribution. This means that the AI might not get aligned at all, because the real-world applications don't get nonnegligible weight in the utility function.

But I think I've figured out a solution now, which I'd call conditional+counterfactual corrigibility. As usual let's use to denote that the stop button gets pressed and the AI shuts down, to denote whichever non-corrigible utility function that we want to make corrigible, and / to denote a counterfactual where people do () or do not () want to press the stop button. However, we will also use and to denote the conditions where people do or do not want to press the stop button. In that case, the utility function should be. In that case, we can define to mean that humans can control whether the AI stops or runs in condition :

and then we simply want to define the utility as saying that people can control the AI in both the and the condition:

Previously, I strongly emphasized the need to keep the AI "under a counterfactual" - that is, if it believed it could control whether humans want to stop it or not, then it would be incentivized to manipulate humans. But this is what brings us out of distribution. However, counterfactuals aren't the only way to keep the appearance of a phenomenon constant - conditionals work too. And conditionals keep you nicely on distribution, so that's now my solution to the distribution issues. This means that we can use much less invasive counterfactuals.

That said, this approach I going to have a hard time with chaotic phenomena, as combining conditionals and counterfactuals in the presence of chaos can get pretty weird.

Replies from: tailcalled↑ comment by tailcalled · 2022-01-07T20:17:41.977Z · LW(p) · GW(p)

It also might be vulnerable to some variant of the critiques that were first raised against it, because now the conditional introduces a link between its policy and the scenarios it faces, but I can't immediately construct a case where it happens, because the conditional would still somewhat tend to sabotage the obvious approaches. This sort of unclarity is kind of concerning when it comes to the idea.

Replies from: tailcalled↑ comment by tailcalled · 2022-01-07T20:50:28.263Z · LW(p) · GW(p)

Like suppose the AI immediately very publically does something that looks very unsafe. Say grabs control over the stop button and starts mass-producing paperclips in an extremely publically visible way. This would probably lead to people wanting to stop it. So therefore, if it has a policy like that, the conditional would lead to people quickly wanting to stop it. This means that in the branch, it can quickly determine whether it is in the branch or the branch; in the case, it can then keep going with whatever optimization specified, while in the case, it can then immediately shut down itself.

But the reason I think the AI *wouldn't* do this is, what about the branch? If you condition on humans not wanting to press the stop button even though there's a clearly unaligned AI, what sort of situation could produce this? I have trouble imagining it, because it seems like it would need to be pretty extreme. The best ideas I can come up with is stuff like "black hole swallows the earth", but this would rank pretty low in the AI's utility function, and therefore it would avoid acting this way in order to have a reasonable branch.

But this does not seem like sane reasoning on the AI's side to me, so it seems like this should be fixed. And of course, fixed in a principled rather than unprincipled way.

comment by tailcalled · 2024-09-15T13:08:53.050Z · LW(p) · GW(p)

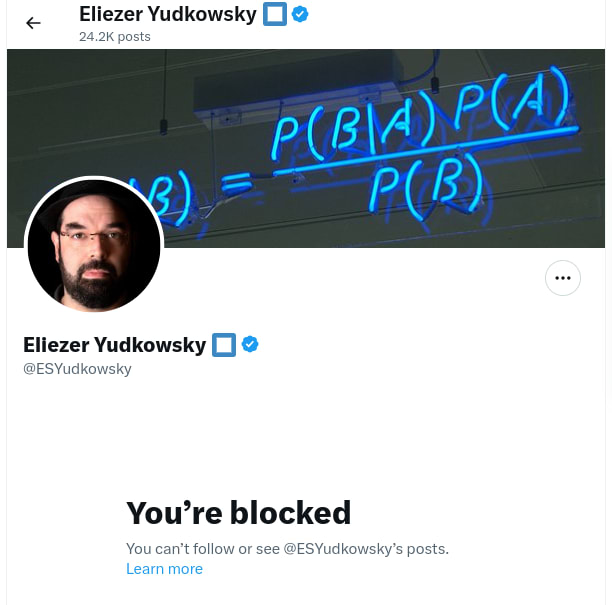

I was surprised to see this on twitter:

I mean, I'm pretty sure I knew what caused it (this thread or this market), and I guess I knew from Zack's stuff that rationalist cultism had gotten pretty far, but I still hadn't expected that something this small would lead to being blocked.

Replies from: elityre, Rana Dexsin, quetzal_rainbow, habryka4, MondSemmel↑ comment by Eli Tyre (elityre) · 2024-09-15T19:30:10.910Z · LW(p) · GW(p)

FYI: I have a low bar for blocking people who have according-to-me bad, overconfident, takes about probability theory, in particular. For whatever reason, I find people making claims about that topic, in particular, really frustrating. ¯\_(ツ)_/¯

The block isn't meant as a punishment, just a "I get to curate my online experience however I want."

↑ comment by tailcalled · 2024-09-15T19:34:32.689Z · LW(p) · GW(p)

I think blocks are pretty irrelevant unless one conditions on the particular details of the situation. In this case I think the messages I were sharing are very important. If you think my messages are instead unimportant or outright wrong, then I understand why you would find the block less interesting, but in that case I don't think we can meaningfully discuss it without knowing why you disagree with the messages.

Replies from: elityre↑ comment by Eli Tyre (elityre) · 2024-09-15T20:56:11.628Z · LW(p) · GW(p)

I'm not particularly interested in discussing it in depth. I'm more like giving you a data-point in favor of not taking the block personally, or particularly reading into it.

(But yeah, "I think these messages are very important", is likely to trigger my personal "bad, overconfident takes about proabrbility theory" neurosis.)

↑ comment by Rana Dexsin · 2024-09-15T14:15:08.920Z · LW(p) · GW(p)

This is awkwardly armchair, but… my impression of Eliezer includes him being just so tired, both specifically from having sacrificed his present energy in the past while pushing to rectify the path of AI development (by his own model thereof, of course!) and maybe for broader zeitgeist reasons that are hard for me to describe. As a result, I expect him to have entered into the natural pattern of having a very low threshold for handing out blocks on Twitter, both because he's beset by a large amount of sneering and crankage in his particular position and because the platform easily becomes a sinkhole in cognitive/experiential ways that are hard for me to describe but are greatly intertwined with the aforementioned zeitgeist tiredness.

Something like: when people run heavily out of certain kinds of slack for dealing with The Other, they reach a kind of contextual-but-bleed-prone scarcity-based closed-mindedness of necessity, something that both looks and can become “cultish” but where reaching for that adjective first is misleading about the structure around it. I haven't succeeded in extracting a more legible model of this, and I bet my perception is still skew to the reality, but I'm pretty sure it reflects something important that one of the major variables I keep in my head around how to interpret people is “how Twitterized they are”, and Eliezer's current output there fits the pattern pretty well.

I disagree with the sibling thread about this kind of post being “low cost”, BTW; I think adding salience to “who blocked whom” types of considerations can be subtly very costly. The main reason I'm not redacting my own whole comment on those same grounds is that I've wound up branching to something that I guess to be more broadly important: there's dangerously misaligned social software and patterns of interaction right nearby due to how much of The Discussion winds up being on Twitter, and keeping a set of cognitive shielding for effects emanating from that seems prudent.

Replies from: pktechgirl, M. Y. Zuo, tailcalled↑ comment by Elizabeth (pktechgirl) · 2024-09-15T16:32:07.193Z · LW(p) · GW(p)

I disagree with the sibling thread about this kind of post being “low cost”, BTW; I think adding salience to “who blocked whom” types of considerations can be subtly very costly.

I agree publicizing blocks has costs, but so does a strong advocate of something with a pattern of blocking critics. People publicly announcing "Bob blocked me" is often the only way to find out if Bob has such a pattern.

I do think it was ridiculous to call this cultish. Tuning out critics can be evidence of several kinds of problems, but not particularly that one.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T17:50:10.161Z · LW(p) · GW(p)

I agree that it is ridiculous to call this cultish if this was the only evidence, but we've got other lines of evidence pointing towards cultishness, so I'm making a claim of attribution more so than a claim of evidence.

↑ comment by M. Y. Zuo · 2024-09-15T15:48:05.830Z · LW(p) · GW(p)

Blocking a lot isn’t necessarily bad or unproductive… but in this case it’s practically certain blocking thousands will eventually lead to blocking someone genuinely more correct/competent/intelligent/experienced/etc… than himself, due to sheer probability. (Since even a ‘sneering’ crank is far from literal random noise.)

Which wouldn’t matter at all for someone just messing around for fun, who can just treat X as a text-heavy entertainment system. But it does matter somewhat for anyone trying to do something meaningful and/or accomplish certain goals.

In short, blocking does have some, variable, credibility cost. Ranging from near zero to quite a lot, depending on who the blockee is.

↑ comment by tailcalled · 2024-09-15T14:25:00.703Z · LW(p) · GW(p)

Eliezer Yudkowsky being tired isn't an unrelated accident though. Bayesian decision theory in general intrinsically causes fatigue by relying on people to use their own actions to move outcomes instead of getting leverage from destiny/higher powers, which matches what you say about him having sacrificed his present energy for this.

Similarly, "being Twitterized" is just about stewing in garbage and cursed information [LW · GW], such that one is forced to filter extremely aggressively, but blocking high-quality information sources accelerates the Twitterization by changing the ratio of blessed to garbage/cursed information.

On the contrary, I think raising salience of such discussions helps clear up the "informational food chain", allowing us to map out where there are underused opportunities and toxic accumulation.

Replies from: Richard_Kennaway, Richard_Kennaway↑ comment by Richard_Kennaway · 2024-09-15T16:14:09.270Z · LW(p) · GW(p)

blocking high-quality information sources

It seems likely to me that Eliezer blocked you because he has concluded that you are a low-quality information source, no longer worth the effort of engaging with.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T16:17:31.263Z · LW(p) · GW(p)

I agree that this is likely Eliezer's mental state. I think this belief is false, but for someone who thinks it's true, there's of course no problem here.

↑ comment by Richard_Kennaway · 2024-09-15T16:13:10.342Z · LW(p) · GW(p)

getting leverage from destiny/higher powers

Please say more about this. Where can I get some?

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T16:36:01.596Z · LW(p) · GW(p)

Working on writing stuff but it's not developed enough yet. To begin with you can read my Linear Diffusion of Sparse Lognormals sequence, but it's not really oriented towards practical applications.

Replies from: Richard_Kennaway↑ comment by Richard_Kennaway · 2024-09-20T20:39:11.960Z · LW(p) · GW(p)

I will look forward to that. I have read the LDSL posts, but I cannot say that I understand them, or guess what the connection might be with destiny and higher powers.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-21T14:03:41.395Z · LW(p) · GW(p)

One of the big open questions that the LDSL sequence hasn't addressed yet is, what starts all the lognormals and why are they so commensurate with each other. So far, the best answer I've been able to come up with is a thermodynamic approach (hence my various recent comments about thermodynamics). The lognormals all originate as emanations from the sun, which is obviously a higher power. They then split up and recombine in various complicated ways.

As for destiny: The sun throws in a lot of free energy, which can be developed in various ways, increasing entropy along the way. But some developments don't work very well, e.g. self-sabotaging (fire), degenerating (parasitism leading to capabilities becoming vestigial), or otherwise getting "stuck". But it's not all developments that get stuck, some developments lead to continuous progress (sunlight -> cells -> eukaryotes -> animals -> mammals -> humans -> society -> capitalism -> ?).

This continuous progress is not just accidental, but rather an intrinsic part of the possibility landscape. For instance, eyes have evolved in parallel to very similar structures, and even modern cameras have a lot in common with eyes. There's basically some developments that intrinsically unblock lots of derived developments while preferentially unblocking developments that defend themselves over developments that sabotage themselves. Thus as entropy increases, such developments will intrinsically be favored by the universe. That's destiny.

Critically, getting people to change many small behaviors in accordance with long explanations contradicts destiny because it is all about homogenizing things and adding additional constraints whereas destiny is all about differentiating things and releasing constraints.

↑ comment by quetzal_rainbow · 2024-09-16T13:15:16.728Z · LW(p) · GW(p)

Meta-point: your communication pattern fits with following pattern:

Crackpot: <controversial statement>

Person: this statement is false, for such-n-such reasons

Crackpot: do you understand that this is trivially true because of <reasons that are hard to connect with topic>

Person: no, I don't.

Crackpot: <responds with link to giant blogpost filled with esoteric language and vague theory>

Person: I'm not reading this crackpottery, which looks and smells like crackpottery.

The reason why smart people find themselves in this pattern is because they expect short inferential distances, i.e., they see their argumentation not like vague esoteric crackpottery, but like a set of very clear statements and fail to put themselves in shoes of people who are going to read this, and they especially fail to account for fact that readers already distrust them because they started conversation with <controversial statement>.

On object level, as stated, you are wrong. Observing heuristic failing should decrease your confidence ih heuristic. You can argue that your update should be small, due to, say, measurement errors or strong priors, but direction of update should be strictly down.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-16T14:17:56.153Z · LW(p) · GW(p)

Can you fill in a particular example of me engaging in that pattern so we can address it in the concrete rather than in the abstract?

Replies from: quetzal_rainbow↑ comment by quetzal_rainbow · 2024-09-23T19:20:49.459Z · LW(p) · GW(p)

To be clear, I mean "your communication in this particular thread".

Pattern:

<mix of "this is trivially true because" and "here is my blogpost with esoteric terminology">

The following responses from EY are more in genre "I ain't reading this", because he is more using you as example for other readers than talking directly to you, with following block.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-23T19:28:12.341Z · LW(p) · GW(p)

This statement had two parts. Part 1:

What if objectionists had a correct thermodynamics-style heuristic that implied superintelligence/RSI is impossible, but which could not answer the question of where exactly it failed? Then the failure of objectionists doesn't mean they were wrong.

We have to be willing to investigate the new evidence as it arrives, perform root cause analysis on why A but not B happened, and use this to update our models.

And the evidence I've gotten since then suggests something like "it is impossible to do something without assistance from a higher power"/"greater things can cause lesser things but not vice versa", as a sort of generalization of the laws of thermodynamics.

If appropriate thought had been applied by a knowledgeable person back in 2004, maybe they could have taken this model and realized that nanotech violates this ordering constraint while AlphaProteo does not. Either way, we have the relevant info now.

And part 2:

The particular way the objectionists failed was in that they didn't give a concrete prediction that matched the way stuff played out.

Part 2 is what Eliezer said was false, but it's not really central to my point (hence why I didn't write much about it in the original thread), and so it is self-sabotaging of Eliezer to zoom into this rather than the actually informative point.

↑ comment by habryka (habryka4) · 2024-09-15T15:54:25.482Z · LW(p) · GW(p)

I do think if that thread got you blocked then that's sad (my guess is I think you were more right than Eliezer, though I haven't read the full sequence that you linked to).

I do think Twitter blocks don't mean very much. I think it's approximately zero evidence of "cultism" or whatever. Most people with many followers on Twitter seem to need to have a hair trigger for blocking, or at least feel like they need to, in order to not constantly have terrible experiences.

Replies from: sharmake-farah, tailcalled, shankar-sivarajan, Richard_Kennaway↑ comment by Noosphere89 (sharmake-farah) · 2024-09-15T16:16:43.503Z · LW(p) · GW(p)

This is a very useful point:

Most people with many followers on Twitter seem to need to have a hair trigger for blocking, or at least feel like they need to, in order to not constantly have terrible experiences.

I think that this is a point that people not on social media that much don't get: You need to be very quick to block because otherwise you will not have good experiences on the site otherwise.

Replies from: Viliam↑ comment by Viliam · 2024-09-16T09:03:55.239Z · LW(p) · GW(p)

I think our instincts may be misleading here, because internet works differently from real life.

In real life, not interacting with someone is the default. Unless you have some kind of relationship with someone, people have no obligation to call you or meet you. And if I call someone on the phone just to say "dude, I disagree with your theory", I would expect that person to hang up... and maybe say "sorry, I'm busy" before hanging up, if they are extra polite. The interactions are mutually agreed, and you have no right to complain when the other party decides to not give you the time. (And if you keep insisting... that's what the restraining orders are for.)

On internet, once you sign up to e.g. Twitter, the default is that anyone can talk to you, and if you are not interested in reading the texts they send you, you need to block them. As far as I know, there are no options in the middle between "block" and "don't block". (Nothing like "only let them talk to me when it is important" or "only let them talk to me on Tuesdays between 3 PM and 5 PM".) And if you are a famous person, I guess you need to keep blocking left and right, otherwise you would drown in the text -- presumably you don't want to spend 24 hours a day sifting through Twitter messages, and you want to get the ones you actively want, which requires you to aggressively filter out everything else.

So getting blocked is not an equivalent of getting a restraining order, but more like an equivalent of the other person no longer paying attention to you. Which most people would not interpret as evidence of cultism.

Replies from: sharmake-farah↑ comment by Noosphere89 (sharmake-farah) · 2024-09-16T14:23:33.785Z · LW(p) · GW(p)

This is the key to understanding why I think it's more okay to block than a lot of other people think, and the fact that the default is anyone can talk to you means you get way too much crap without blocking lots of people.

↑ comment by tailcalled · 2024-09-15T16:14:43.223Z · LW(p) · GW(p)

I think whether it's cultism depends on what model one has of how cults work. I don't know much about it so I might be totally ignorant, but I think a major factor is just engaging in a futile, draining activity powered by popularity, so one needs to carefully preserve resources and maintain appearances.

Replies from: habryka4↑ comment by habryka (habryka4) · 2024-09-15T16:19:56.629Z · LW(p) · GW(p)

Huh, I guess you mean cult in a broader "polarization" sense? Like, where are the democratic and republican parties on the cultishness scale in your model?

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T17:04:08.489Z · LW(p) · GW(p)

Huh, I guess you mean cult in a broader "polarization" sense?

Idk, my main point of reference is I recently read is Some Desperate Glory, which was about a cult of terrorists. Polarization generally implies a balanced conflict which isn't really futile.

Like, where are the democratic and republican parties on the cultishness scale in your model?

I don't know much about how they work internally. Democracy is a weird systen because you've got the adversarial thing that would make it less futile but also the popularity contest thing that would make it more narcissistic and thus more cultish.

↑ comment by Shankar Sivarajan (shankar-sivarajan) · 2024-09-15T16:05:45.168Z · LW(p) · GW(p)

in order to not constantly have terrible experiences.

This explanation sounds like what they'd say. I think the real reason this is common is more a status thing: it's a pretty standard strategy for people to try to gain status by "dunking" on tweets by more famous people, and blocking them is the standard countermeasure.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T16:18:54.348Z · LW(p) · GW(p)

The dunking seems like constant terrible experiences.

↑ comment by Richard_Kennaway · 2024-09-16T09:22:30.036Z · LW(p) · GW(p)

The more prominent you are, the more people want to talk with you, and the less time you have to talk with them. You have to shut them out the moment the cost is no longer worth paying.

↑ comment by MondSemmel · 2024-09-15T13:27:54.089Z · LW(p) · GW(p)

People should feel free to liberally block one another on social media. Being blocked is not enough to warrant an accusation of cultism.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T13:32:17.549Z · LW(p) · GW(p)

I did not say that simply blocking me warrants an accusation of cultism. I highlighted the fact that I had been blocked and the context in which it occurred, and then brought up other angles which evidenced cultism. If you think my views are pathetic and aren't the least bit alarmed by them being blocked, then feel free to feel that way, but I suspect there are at least some people here who'd like to keep track of how the rationalist isolation is progressing and who see merit in my positions.

Replies from: MondSemmel↑ comment by MondSemmel · 2024-09-15T13:38:17.961Z · LW(p) · GW(p)

Again, people block one another on social media for any number of reasons. That just doesn't warrant feeling alarmed or like your views are pathetic.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T13:44:13.346Z · LW(p) · GW(p)

We know what the root cause is, you don't have to act like it's totally mysterious. So the question is, was this root cause (pushback against Eliezer's Bayesianism):

- An important insight that Eliezer was missing (alarming!)

- Worthless pedantry that he might as well block (nbd/pathetic)

- Antisocial trolling that ought to be gotten rid of (reassuring that he blocked)

- ... or something else

Regardless of which of these is the true one, it seems informative to highlight for anyone who is keeping track of what is happening around me. And if the first one is the true one, it seems like people who are keeping track of what is happening around Eliezer would also want to know it.

Especially since it only takes a very brief moment to post and link about getting blocked. Low cost action, potentially high reward.

Replies from: habryka4↑ comment by habryka (habryka4) · 2024-09-15T15:56:38.171Z · LW(p) · GW(p)

MIRI full-time employed many critics of bayesianism for 5+ years and MIRI researchers themselves argued most of the points you made in these arguments. It is obviously not the case that critiquing bayesianism is the reason why you got blocked.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-15T16:04:02.514Z · LW(p) · GW(p)

Idk, maybe you've got a point, but Eliezer was very quick to insist what I said was not the mainstream view and disengage. And MIRI was full of internal distrust. I don't know enough of the situation to know if this explains it, but it seems plausible to me that the way MIRI kept stuff together was by insisting on a Bayesian approach, and that some generators of internal dissent was from people whose intuition aligned more with non-Bayesian approach.

For that matter, an important split in rationalism is MIRI/CFAR vs the Vassarites, and while I wouldn't really say the Vassarites formed a major inspiration for LDSL, after coming up with LDSL I've totally reevaluated my interpretation of that conflict as being about MIRI/CFAR using a Bayesian approach and the Vassarites using an LDSL approach. (Not absolutely of course, everyone has a mixture of both, but in terms of relative differences.)

comment by tailcalled · 2024-05-10T18:36:34.628Z · LW(p) · GW(p)

I've been thinking about how the way to talk about how a neural network works (instead of how it could hypothetically come to work by adding new features) would be to project away components of its activations/weights [LW(p) · GW(p)], but I got stuck because of the issue where you can add new components by subtracting off large irrelevant components.

I've also been thinking about deception [LW(p) · GW(p)] and its relationship to "natural abstractions" [LW(p) · GW(p)], and in that case it seems to me that our primary hope would be that the concepts we care about are represented at a large "magnitude" than the deceptive concepts. This is basically using L2-regularized regression to predict the outcome.

It seems potentially fruitful to use something akin to L2 regularization when projecting away components. The most straightforward translation of the regularization would be to analogize the regression coefficient to , in which case the L2 term would be , which reduces to .

If is the probability[1] that a neural network with weights gives to an output given a prompt , then when you've actually explained , it seems like you'd basically have or in other words . Therefore I'd want to keep the regularization coefficient weak enough that I'm in that regime.

In that case, the L2 term would then basically reduce to minimizing , or in other words maximizing ,. Realistically, both this and are probably achieved when , which on the one hand is sensible ("the reason for the network's output is because of its weights") but on the other hand is too trivial to be interesting.

In regression, eigendecomposition gives us more gears, because L2 regularized regression is basically changing the regression coefficients for the principal components by , where is the variance of the principal component and is the regularization coefficient. So one can consider all the principal components ranked by to get a feel for the gears driving the regression. When is small, as it is in our regime, this ranking is of course the same order as that which you get from , the covariance between the PCs and the dependent variable.

This suggests that if we had a change of basis for , one could obtain a nice ranking of it. Though this is complicated by the fact that is not a linear function and therefore we have no equivalent of . To me, this makes it extremely tempting to use the Hessian eigenvectors as a basis, as this is the thing that at least makes each of the inputs to "as independent as possible". Though rather than ranking by the eigenvalues of (which actually ideally we'd actually prefer to be small rather than large to stay in the ~linear regime), it seems more sensible to rank by the components of the projection of onto (which represent "the extent to which includes this Hessian component").

In summary, if , then we can rank the importance of each component by .

Maybe I should touch grass and start experimenting with this now, but there's still two things that I don't like:

- There's a sense in which I still don't like using the Hessian because it seems like it would be incentivized to mix nonexistent mechanisms in the neural network together with existent ones. I've considered alternatives like collecting gradient vectors along the training of the neural network and doing something with them, but that seems bulky and very restricted in use.

- If we're doing the whole Hessian thing, then we're modelling as quadratic, yet seems like an attribution method that's more appropriate when modelling as ~linear. I don't think I can just switch all the way to quadatic models, because realistically is more gonna be sigmoidal-quadratic and for large steps , the changes to a sigmoidal-quadratic function is better modelled by f(x+\delta x) - f(x) than by some quadratic thing. But ideally I'd have something smarter...

- ^

Normally one would use log probs, but for reasons I don't want to go into right now, I'm currently looking at probabilities instead.

↑ comment by Thomas Kwa (thomas-kwa) · 2024-05-10T22:39:45.580Z · LW(p) · GW(p)

Much dumber ideas have turned into excellent papers

Replies from: tailcalled↑ comment by tailcalled · 2024-05-11T08:12:39.083Z · LW(p) · GW(p)

True, though I think the Hessian is problematic enough that that I'd either want to wait until I have something better, or want to use a simpler method.

It might be worth going into more detail about that. The Hessian for the probability of a neural network output is mostly determined by the Jacobian of the network [LW · GW]. But in some cases the Jacobian gives us exactly the opposite of what we want.

If we consider the toy model of a neural network with no input neurons and only 1 output neuron (which I imagine to represent a path through the network, i.e. a bunch of weights get multiplied along the layers to the end), then the Jacobian is the gradient . If we ignore the overall magnitude of this vector and just consider how the contribution that it assigns to each weight varies over the weights, then we get . Yet for this toy model, "obviously" the contribution of weight "should" be proportional to .

So derivative-based methods seem to give the absolutely worst-possible answer in this case, which makes me pessimistic about their ability to meaningfully separate the actual mechanisms of the network (again they may very well work for other things, such as finding ways of changing the network "on the margin" to be nicer).

comment by tailcalled · 2021-12-30T10:51:35.114Z · LW(p) · GW(p)

One thing that seems really important for agency is perception. And one thing that seems really important for perception is representation learning. Where representation learning involves taking a complex universe (or perhaps rather, complex sense-data) and choosing features of that universe that are useful for modelling things.

When the features are linearly related to the observations/state of the universe, I feel like I have a really good grasp of how to think about this. But most of the time, the features will be nonlinearly related; e.g. in order to do image classication, you use deep neural networks, not principal component analysis.

I feel like it's an interesting question: where does the nonlinearity come from? Many causal relationships seem essentially linear (especially if you do appropriate changes of variables to help, e.g. taking logarithms; for many purposes, monotonicity can substitute for linearity), and lots of variance in sense-data can be captured through linear means, so it's not obvious why nonlinearity should be so important.

Here's some ideas I have so far:

- Suppose you have a Gaussian mixture distribution with two Gaussians , with different means and identical covariances. In this case, the function that separates them optimally is linear. However, if the covariances differed between the Gaussians , , then the optimal separating function is nonlinear. So this suggests to me that one reason for nonlinearity is fundamental to perception: nonlinearity is necessary if multiple different processes could be generating the data, and you need to discriminate between the processes themselves. This seems important for something like vision, where you don't observe the system itself, but instead observe light that bounced off the system.

- Consider the notion of the habitable zone of a solar system; it's the range in which liquid water can exist. Get too close to the star and the water will freeze, get too far and it will boil. Here, it seems like we have two monotonic effects which add up, but because the effects aren't linear, the result can be nonmonotonic.

- Many aspects of the universe are fundamentally nonlinear. But they tend to exist on tiny scales, and those tiny scales tend to mostly get loss to chaotic noise, which tends to turn things linear. However, there are things that don't get lost to noise, e.g. due to conservation laws [LW · GW]; these provide fundamental sources of nonlinearity in the universe.

- ... and actually, most of the universe is pretty linear? The vast majority of the universe is ~empty space; there isn't much complex nonlinearity that is happening there, just waves and particles zipping around. If we disregard the empty space, then I believe (might be wrong) that the vast majority is stars. Obviously lots of stuff is going on within stars, but all of the details get lost to the high energies, so it is mostly simple monotonic relations that are left. It seems that perhaps nonlinearity tends to live on tiny boundaries between linear domains. The main reason thing that makes these tiny boundaries so relevant, such that we can't just forget about them and model everything in piecewise linear/piecewise monotonic ways, is that we live in the boundary.

- Another major thing: It's hard to persist information in linear contexts, because it gets lost to noise. Whereas nonlinear systems can have multiple stable configurations [? · GW] and therefore persist it for longer.

- There is of course a lot of nonlinearity in organisms and other optimized systems, but I believe they result from the world containing the various factors listed above? Idk, it's possible I've missed some.

It seems like it would be nice to develop a theory on sources of nonlinearity. This would make it clearer why sometimes selecting features linearly seems to work (e.g. consider IQ tests), and sometimes it doesn't.

comment by tailcalled · 2024-09-17T10:59:50.331Z · LW(p) · GW(p)

Thesis: money = negative entropy, wealth = heat/bound energy, prices = coldness/inverse temperature, Baumol effect = heat diffusion, arbitrage opportunity = free energy.

Replies from: tailcalled↑ comment by tailcalled · 2024-09-17T11:34:38.977Z · LW(p) · GW(p)

Maybe this mainly works because the economy is intelligence-constrained (since intelligence works by pulling off negentropy from free energy), and it will break down shortly after human-level AGI?

comment by tailcalled · 2024-09-09T21:19:55.783Z · LW(p) · GW(p)

Thesis: there's a condition/trauma that arises from having spent a lot of time in an environment where there's excess resources for no reasons, which can lead to several outcomes:

- Inertial drifting in the direction implied by ones' prior adaptations,

- Conformity/adaptation to social popularity contests based on the urges above,

- Getting lost in meta-level preparations,

- Acting as a stickler for the authorities,

- "Bite the hand that feeds you",

- Tracking the resource/motivation flows present.

By contrast, if resources are contingent on a particular reason, everything takes shape according to said reason, and so one cannot make a general characterization of the outcomes.

Replies from: mateusz-baginski↑ comment by Mateusz Bagiński (mateusz-baginski) · 2024-09-10T01:24:13.780Z · LW(p) · GW(p)

It's not clear to me how this results from "excess resources for no reasons". I guess the "for no reasons" part is crucial here?

comment by tailcalled · 2024-08-30T08:30:51.882Z · LW(p) · GW(p)

Thesis: the median entity in any large group never matters and therefore the median voter doesn't matter and therefore the median voter theorem proves that democracies get obsessed about stuff that doesn't matter.

Replies from: Dagon↑ comment by Dagon · 2024-08-30T15:34:03.325Z · LW(p) · GW(p)

A lot depends on your definition of "matter". Interesting and important debates are always on margins of disagreement. The median member likely has a TON of important beliefs and activities that are uncontroversial and ignored for most things. Those things matter, and they matter more than 95% of what gets debated and focused on.

The question isn't whether the entities matter, but whether the highlighted, debated topics matter.

comment by tailcalled · 2021-12-12T20:24:31.050Z · LW(p) · GW(p)

I recently wrote a post about myopia [LW · GW], and one thing I found difficult when writing the post was in really justifying its usefulness. So eventually I mostly gave up, leaving just the point that it can be used for some general analysis (which I still think is true), but without doing any optimality proofs.

But now I've been thinking about it further, and I think I've realized - don't we lack formal proofs of the usefulness of myopia in general? Myopia seems to mostly be justified by the observation that we're already being myopic in some ways, e.g. when training prediction models. But I don't think anybody has formally proven that training prediction models myopically rather than nonmyopically is a good idea for any purpose?

So that seems like a good first step. But that immediately raises the question, good for what purpose? Generally it's justified with us not wanting the prediction algorithms to manipulate the real-world distribution of the data to make it more predictable. And that's sometimes true, but I'm pretty sure one could come up with cases where it would be perfectly fine to do so, e.g. I keep some things organized so that they are easier to find.

It seems to me that it's about modularity. We want to design the prediction algorithm separately from the agent, so we do the predictions myopically because modifying the real world is the agent's job. So my current best guess for the optimality criterion of myopic optimization of predictions would be something related to supporting a wide variety of agents.

Replies from: Charlie Steiner↑ comment by Charlie Steiner · 2022-01-07T05:17:58.775Z · LW(p) · GW(p)

Yeah, I think usually when people are interested in myopia, it's because they think there's some desired solution to the problem that is myopic / local, and they want to try to force the algorithm to find that solution rather than some other one. E.g. answering a question based only on some function of its contents, rather than based on the long-term impact of different answers.

I think that once you postulate such a desired myopic solution and its non-myopic competitors, then you can easily prove that myopia helps. But this still leaves the question of how we know this problems statement is true - if there's a simpler myopic solution that's bad, then myopia won't help (so how can we predict if this is true?) and if there's a simpler non-myopic solution that's good, myopia may actively hurt (this one seems a little easier to predict though).

comment by tailcalled · 2025-02-11T09:06:59.470Z · LW(p) · GW(p)

Framing: Prices reflect how much trouble purchasers would be in if the seller didn't exist. GDP multiplies prices by transaction volume, so it measures the fragility of the economy.

Replies from: tailcalled, Viliam, tailcalled↑ comment by tailcalled · 2025-02-11T10:20:45.103Z · LW(p) · GW(p)

Prices decompose into cost and profit. The profit is determined by how much trouble the purchaser would be in if the seller didn't exist (since e.g. if there's other sellers, the purchaser could buy from those). The cost is determined by how much demand there is for the underlying resources in other areas, so it basically is how much trouble the purchaser imposes on others by getting the item. Most products are either cost-constrained (where price is mostly cost) or high-margin (where price is mostly profit).

GDP is price times transaction volume, so it's the sum of total costs and total profits in a society. The profit portion of GDP reflects the extent to which the economy has monopolized activities into central nodes that contribute to fragility, while the cost portion of GDP reflects the extent to which the economy is resource-constrained.